Jensen Huang’s Latest Interview: Forcing DeepSeek to Deeply Bind with Huawei Is “Too Scary” for the U.S.

TechFlow Selected

Regarding exports to China, he criticized the extreme export control policies as naive.

Compiled by: Xiao Xiao, NetEase Smart

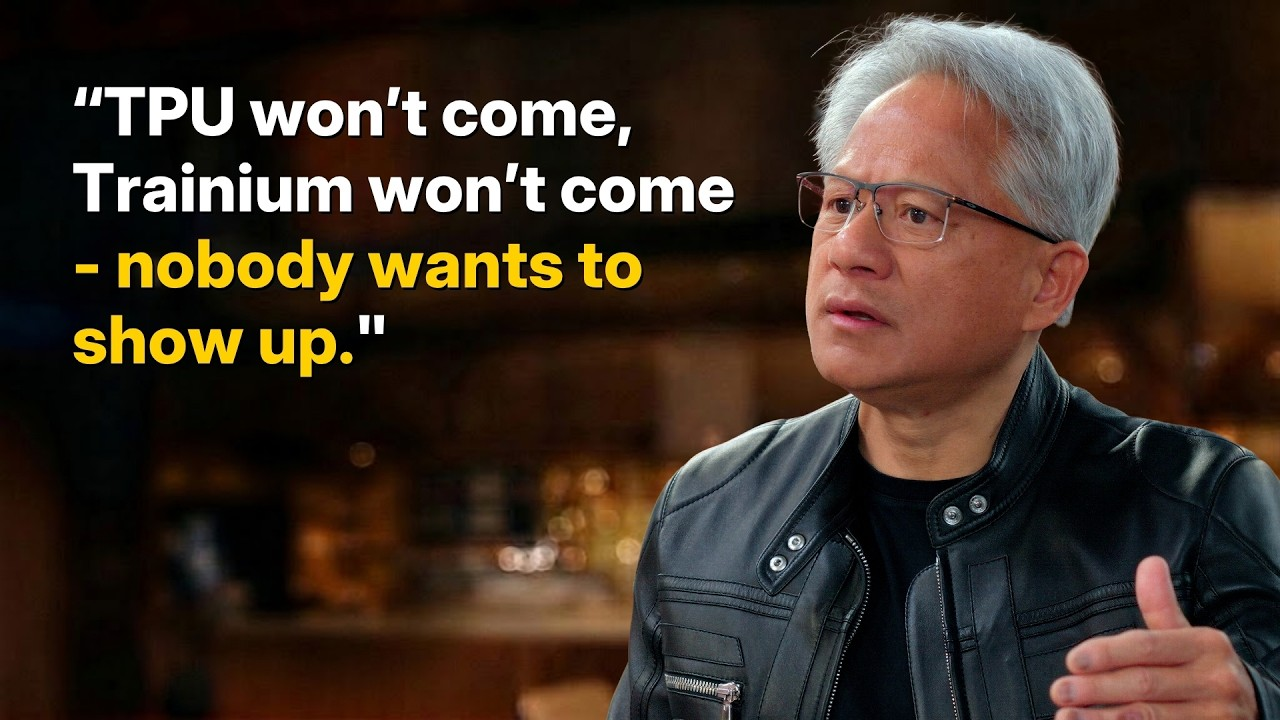

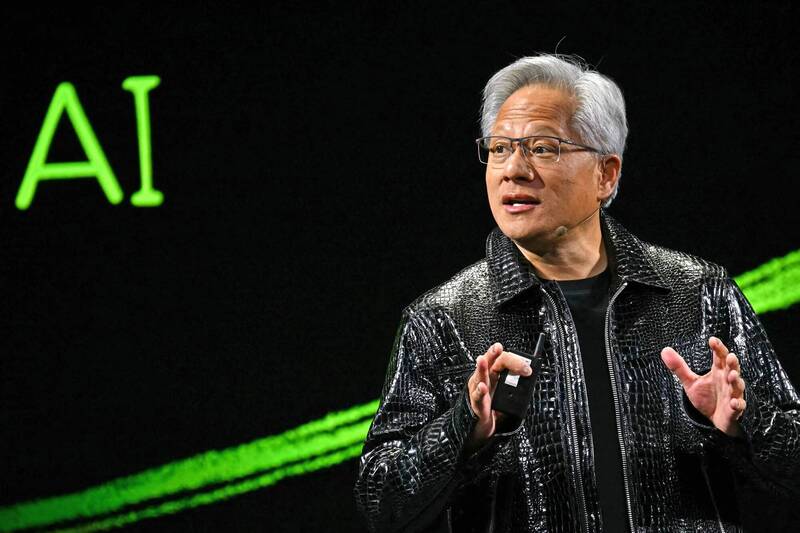

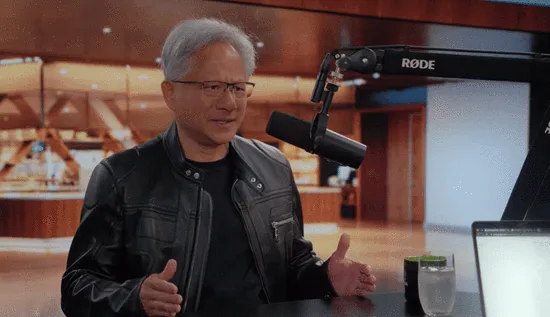

Jensen Huang, CEO of NVIDIA, recently sat down for an in-depth interview with Dwarkesh Patel, host of a prominent U.S. tech podcast, addressing key issues including the company’s moat, competition from Google’s TPUs, and AI chip exports to China.

He emphasized that NVIDIA’s moat now extends deep into the supply chain—forged through hundreds of billions of dollars in procurement commitments with TSMC and memory suppliers.

On TPU competition, Huang noted that Anthropic is a unique case—not a trend—in the rise of ASICs. NVIDIA’s accelerated computing spans far beyond AI, covering molecular dynamics, data processing, fluid dynamics, and more; CUDA’s high programmability enables annual performance leaps of 10x to 50x.

He also explained why NVIDIA does not become a hyperscale cloud provider itself. Despite strong cash flow, NVIDIA sticks to its principle of doing only what is necessary, and as little as possible, choosing instead to invest in ecosystem partners such as CoreWeave, OpenAI, and Anthropic rather than compete directly with its customers. He acknowledged that failing to invest in Anthropic at scale early on was a mistake. He also stressed that even if the AI revolution had never happened, NVIDIA would still be a massive company thanks to accelerated computing in physics, chemistry, and data processing.

On exports to China, he criticized extreme export controls as “naïve.” Huang pointed out that AI compute is the fusion of chips and energy: although constrained by EUV lithography tools, China retains enormous 7nm chip manufacturing capacity. Given that today’s leading large models are still predominantly trained on the Hopper architecture, China can fully compensate for per-chip performance gaps by leveraging abundant electricity and scaling up chip cluster sizes.
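Huang's point about compensating with scale is, at bottom, simple arithmetic: if each chip delivers less, you deploy more of them and pay for it in electricity. The minimal Python sketch below makes that trade-off concrete. The function name `cluster_to_match` and every figure in it are hypothetical illustrations, not numbers from the interview, and it assumes throughput scales linearly with chip count (real clusters lose some efficiency to interconnect and parallelization overhead).

```python
import math


def cluster_to_match(target_pflops: float, per_chip_pflops: float,
                     watts_per_chip: float) -> tuple[int, float]:
    """Return (chips needed, total power in megawatts) for a target aggregate throughput.

    Idealized model: aggregate throughput = chip count * per-chip throughput,
    with no interconnect or parallelization losses.
    """
    chips = math.ceil(target_pflops / per_chip_pflops)
    megawatts = chips * watts_per_chip / 1e6
    return chips, megawatts


# Hypothetical figures, for illustration only.
target = 100_000.0  # aggregate PFLOPS the cluster should deliver
faster_chip = cluster_to_match(target, per_chip_pflops=2.0, watts_per_chip=1_000.0)
slower_chip = cluster_to_match(target, per_chip_pflops=1.0, watts_per_chip=800.0)
print(faster_chip)  # (50000, 50.0)
print(slower_chip)  # (100000, 80.0)
```

In this toy case, halving per-chip throughput doubles the chip count and raises power from 50 MW to 80 MW; where electricity is cheap and abundant, that gap becomes a cost rather than a hard ceiling.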

Moreover, China’s vast AI research teams are enhancing model performance through more efficient computer science. Citing DeepSeek as a warning, Huang underscored that such excellent open-source models—when forced to optimize deeply for domestic hardware like Huawei chips—will objectively erode the global advantage of the U.S. technology stack. He argued that voluntarily abandoning the world’s second-largest market will compel China to build a foundational computing architecture independent of the U.S. As these open-standard–based technologies gradually spread to the Global South, the U.S. risks falling behind in the long-term battle for AI ecosystem standards.

Full transcript of Jensen Huang’s interview:

Is supply-chain control NVIDIA’s biggest moat?

Patel: Many software companies’ valuations are falling because people believe AI will commoditize software. One view is that NVIDIA simply sends design files to TSMC, which fabricates logic chips and switches; SK Hynix, Micron, and Samsung package HBM memory; and ODMs in Taiwan assemble rack systems. Fundamentally, NVIDIA does software—the hardware is built by others. If software becomes commoditized, won’t NVIDIA be commoditized too?

Huang: Ultimately, someone must convert electrons into tokens. That conversion process is extremely difficult to fully commoditize. Making one token more valuable than another is like making one molecule more valuable than another—it demands immense technical, engineering, scientific, and inventive effort. These efforts remain far from fully understood—and far from complete. I don’t believe full commoditization will happen.

But we do make the process more efficient. The way you framed your question mirrors my mental model of the company: electrons in, tokens out, and NVIDIA in between. Our principle is to do only what’s necessary—and as little as possible. “As little as possible” means outsourcing whatever I don’t need to do myself, turning it into part of our ecosystem.

Today, NVIDIA may be the company with the largest partner ecosystem—including upstream and downstream supply chains, all computer makers, application developers, and model vendors. AI is like a five-layer cake, and we have an ecosystem at every layer. We do as little as possible—but the part we *must* do is extraordinarily hard. I don’t believe that part will be commoditized.

Also, I don’t think enterprise software companies will be commoditized. Most software companies today are tool vendors—Excel, PowerPoint, Cadence, Synopsys. My view runs counter to many: the number of AI agents will grow exponentially—and so will the number of tool users. The number of tool instances could explode.

For example, Synopsys’ Design Compiler will be used by huge numbers of AI agents for layout and design rule checking. Today, we are limited by the number of engineers; tomorrow, each engineer will be backed by a swarm of agents. We’ll explore design spaces in unprecedented ways, using today’s tools. High-frequency tool usage will accelerate software companies’ growth. This hasn’t happened yet because agents aren’t yet skilled enough to use tools effectively. Either these software companies will build their own agents, or agents will mature enough to master these tools. I expect both.

Patel: I see your latest filing includes nearly $100 billion in procurement commitments to foundries, memory, and packaging. Semiconductor research firm SemiAnalysis estimates this figure could reach $250 billion. One interpretation is that NVIDIA’s moat lies in locking up scarce components for years ahead—others may have accelerators but can’t get memory or logic chips. Is this your primary moat for the next few years?

Huang: This is one thing we can do—and others find extremely difficult. We’ve made massive upstream commitments—some explicit, like those you cited. Others are implicit: for instance, I’ve told CEOs of upstream firms how big this industry will become, why it will be that big, and walked them through my reasoning—so they’d see what I saw—before they invested.

Why do they invest for me—not for others? Because they know I can buy their entire output and sell it through my downstream channels. NVIDIA’s downstream demand and downstream supply chain are so vast that they’re willing to invest upstream.

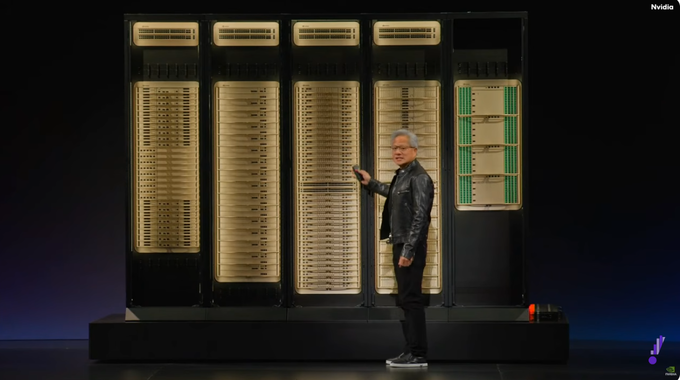

Look at GTC—the scale and energy amaze people. It’s the entire AI community gathering because they need to exchange ideas and be seen. I bring them together—downstream sees upstream, upstream sees downstream, everyone witnesses AI’s progress. They meet AI-native startups and all early-stage companies. That lets them verify firsthand what I tell them. I spend enormous time—directly and indirectly—ensuring the supply chain, partners, and ecosystem grasp the opportunity before them.

Some say my keynote feels like a lecture—and a bit torturous. That’s intentional. I must ensure the entire supply chain, upstream and downstream, and the ecosystem understand what’s coming, why, when, how big—and think about it systematically, just as I do.

Our moat is really forward-deployed. If we truly scale to a trillion-dollar business in the coming years, we’ll naturally be able to build a matching supply chain. But that assumes our current scale, influence, and rapid business velocity—just like cash flow, supply chains have their own velocity and turnover rate. If business turnover were slow, no one would build a supply chain for an empty shell. Our ability to sustain this scale stems fundamentally from overwhelming downstream demand. When they see, hear, and realize this is happening in real time—that’s what enables us to achieve what we do today at this scale.

Patel: Let’s drill into whether upstream can keep up. You’ve doubled revenue year after year—and delivered more than triple the compute to the world annually.

Huang: Doubling at this scale is truly astonishing.

Patel: But look at logic chips. You’re TSMC’s largest customer for N3—and one of the largest for N2. SemiAnalysis found AI will consume 60% of N3 capacity this year—and 86% next year. If you already dominate, how do you double again? Year after year? Have we entered a phase where AI compute growth *must* slow due to upstream constraints? Do you see solutions? Ultimately—how do we double wafer-fab capacity year after year?

Huang: At any given moment, instantaneous demand can exceed total global upstream and downstream supply. Supply can even be limited by the number of plumbers. That has actually happened before.

Patel: Plumbers should be invited to next year’s GTC.

Huang: Great idea. But it’s actually a good sign. You want an industry’s instantaneous demand to exceed total supply—the reverse is worse. If a component shortage gets severe enough, the whole industry rushes to fix it. You barely hear about CoWoS anymore—because we tackled it head-on over the past two years, and the situation is now much improved. TSMC now knows CoWoS supply must keep pace with logic and memory demand. They’re expanding CoWoS and future packaging technologies at the same speed as logic. That’s excellent—because CoWoS and HBM memory were once niche; now they’re mainstream computing technologies.

We now influence a broader supply chain. I said all this five years ago when the AI revolution began. Some believed me and invested—like Sanjay Mehrotra, CEO of Micron, and his team. I remember that meeting clearly—I precisely outlined what would happen, why, and the state we’re in today. They truly doubled down. We partnered on LPDDR and HBM memory; they invested heavily—and achieved tremendous success. Others came later—but they’re all here now.

Every bottleneck receives intense focus. We now anticipate bottlenecks years in advance—for example, our investments over the past few years in Lumentum, Coherent, and the silicon photonics ecosystem reshaped the supply chain. We built the entire supply chain around TSMC—and co-developed the silicon photonics integration platform COUPE, inventing numerous technologies and licensing patents openly to the supply chain.

We strengthen the supply chain by inventing new technologies, new processes, new test equipment (e.g., double-sided probing), and investing in companies to help them scale. We’re actively shaping the ecosystem—so the supply chain can support this scale.

Patel: Some bottlenecks seem easier to solve—like CoWoS expansion.

Huang: We take responsibility for overcoming the hardest one.

Patel: Which one?

Huang: Plumbers and electricians. This is where I worry about doomers. They say jobs will vanish, roles will disappear. If we stop people from becoming software engineers, we’ll run out of software engineers. The same prediction was made ten years ago. Some doomers said, “Whatever you do, don’t become a radiologist”—you can still find videos online claiming radiology will be the first profession to vanish, that the world won’t need radiologists anymore. Guess what we’re short of today? Radiologists.

Patel: Some things scale; others don’t. How do you produce twice as many logic chips each year? Ultimately, both memory and logic depend on EUV lithography machines. How do you get twice as many EUV machines year after year?

Huang: These capacities scale rapidly—achievable within two or three years. You just need to signal demand to the supply chain. If you can build one, you can build ten; if you can build ten, you can build a million. Replicating these isn’t hard.

Patel: How far into the supply chain will you go? Will you go straight to ASML and say, “Three years from now, NVIDIA aims for $2 trillion in annual revenue—we need vastly more EUV machines”?

Huang: Some conversations are direct; some indirect. If you convince TSMC, ASML follows. We identify critical bottlenecks. But once TSMC is convinced, you’ll have enough EUV machines within a few years.

My view is that no bottleneck persists beyond two or three years. Meanwhile, we’re boosting compute efficiency 10x, 20x—Hopper to Blackwell is 30x to 50x. Because CUDA is flexible, we constantly invent new algorithms and techniques—increasing capacity while improving efficiency. None of this worries me. What *does* worry me is downstream—energy policy blocking energy expansion. Without energy, you can’t build new industries—or new manufacturing.

We must reindustrialize America. Bring chip manufacturing, computer manufacturing, and packaging back. Build EVs, robots, AI factories. None of this happens without energy—and energy takes a long time. Chip capacity is a two- to three-year problem. CoWoS capacity is too.

Will TPUs break NVIDIA’s grip on AI compute?

Patel: Of the world’s top three models, two—Claude and Gemini—are trained on Google TPUs. What does that mean for NVIDIA?

Huang: What we do is very different. NVIDIA builds accelerated computing—not just tensor processing units. Accelerated computing applies broadly: molecular dynamics, quantum chromodynamics, data processing, structured/unstructured data, fluid dynamics, particle physics—and, yes, AI.

Accelerated computing is far broader. While AI is the hot topic—and obviously important and impactful—computing is much wider. NVIDIA has reshaped computing—from general-purpose to accelerated computing. Our market scope dwarfs any TPU or ASIC. We’re the only company that accelerates *all* applications. We have a massive ecosystem—every framework and algorithm runs on NVIDIA.

Because our computers are designed for others to operate, any operator can buy our systems. Most in-house systems require you to be the operator—they lack flexibility, so others can’t operate them. Because anyone can build and operate our systems, we exist in every cloud—including Google, Amazon, Azure, and Oracle.

If you rent infrastructure, you need a massive, diverse customer base across industries as anchor tenants. If you use it yourself, we can help you operate it—as we do for xAI with Elon Musk. And we can empower operators at any company, in any industry: you can build a supercomputer for Lilly for scientific research and drug discovery—and we’ll help operate it across drug discovery and bioscience.

There’s a huge list of applications TPUs *can’t* do. NVIDIA’s CUDA is also an excellent tensor processor—but it handles *every* stage of data processing, computation, AI, etc. Our market opportunity is vastly larger and broader. Because we support every application in the world today, you can build an NVIDIA system anywhere—and know customers will follow. That’s a completely different situation.

Patel: Your revenue is staggering, but it doesn’t come from pharma or quantum computing. It comes overwhelmingly from AI, because AI, a previously unimaginable technology, is growing at unprecedented speed. So the question arises: what’s best for AI itself? TPUs are essentially giant systolic arrays, brilliant at matrix multiplication. GPUs are more flexible, ideal for tasks with many branch decisions or irregular memory access. But what *is* AI doing? Fundamentally, AI repeatedly performs highly predictable matrix multiplications. So why reserve chip area for generic functions like thread schedulers or switching between threads and memory banks? That area could be dedicated entirely to matrix multiplication. TPUs are engineered precisely for *this* explosive computational demand. What do you think?
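Patel's description of a TPU as a "giant systolic array" refers to a grid of multiply-accumulate cells that pass operands to their neighbors in lockstep, so data is reused without repeated trips to memory. The toy Python simulation below shows the timing of one common variant, an output-stationary array, in which cell (i, j) keeps the running sum for C[i][j]. It is purely illustrative and is not how any TPU is actually built or programmed; real designs may use other dataflows, such as weight-stationary.

```python
def systolic_matmul(A, B):
    """Simulate an output-stationary systolic array computing C = A @ B.

    Cell (i, j) holds the accumulator C[i][j]. Rows of A enter from the left and
    columns of B from the top, each skewed by one cycle per row/column, so the
    operand pair (A[i][k], B[k][j]) meets at cell (i, j) on cycle t = i + j + k.
    Returns (C, number of cycles until the last operand pair is consumed).
    """
    n, inner = len(A), len(A[0])
    m = len(B[0])
    assert len(B) == inner, "inner dimensions must match"
    C = [[0] * m for _ in range(n)]
    # The last pair arrives on cycle (n - 1) + (m - 1) + (inner - 1).
    cycles = n + m + inner - 2
    for t in range(cycles):
        for i in range(n):
            for j in range(m):
                k = t - i - j
                if 0 <= k < inner:
                    # At most one multiply-accumulate per cell per cycle, no branching logic.
                    C[i][j] += A[i][k] * B[k][j]
    return C, cycles


C, cycles = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(C, cycles)  # [[19, 22], [43, 50]] 4
```

Each cell does the same fixed operation every cycle, which is the efficiency Patel is pointing to; the flip side, as Huang argues in his reply, is that the hardware is specialized to this one dataflow.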

Huang: Matrix multiplication is vital to AI—but not the whole story. If you invent a new attention mechanism, try a different decomposition, or create a novel hybrid state-space model (SSM), you need a universally programmable architecture. If you fuse diffusion models and autoregressive models, you need universal programmability. We run *anything* you can imagine. That’s the advantage. Because it’s programmable, inventing new algorithms is far easier.

The ability to invent new algorithms is *why* AI advances so fast. TPUs—and everything else—are bound by Moore’s Law—~25% annual improvement. To achieve 10x or 100x yearly leaps, you must fundamentally change algorithms *and* computing methods every year.
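The gap Huang describes is easy to quantify. Taking his figure of roughly 25% per year for process-driven gains at face value (an approximation, not a physical law), the sketch below computes how many years of pure compounding it would take to reach a 10x or 100x improvement. The function name `years_to_reach` is a hypothetical helper.

```python
import math


def years_to_reach(multiple: float, annual_gain: float) -> float:
    """Years of compounding at `annual_gain` (0.25 means 25% per year) needed to reach `multiple`."""
    return math.log(multiple) / math.log(1.0 + annual_gain)


print(round(years_to_reach(10, 0.25), 1))   # 10.3
print(round(years_to_reach(100, 0.25), 1))  # 20.6
print(f"{1.25 ** 10:.2f}")                  # 9.31 (ten years at 25% is still short of 10x)
```

At about 25% a year, a 10x gain takes roughly a decade of process improvements alone, which is why Huang attributes yearly 10x-class leaps to changes in algorithms and system design rather than to transistors.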

That’s NVIDIA’s core advantage. Blackwell delivers 50x better energy efficiency than Hopper. When I first said 35x, no one believed me. Later, articles claimed I held back—and it was actually 50x. Moore’s Law alone can’t deliver that. We rely on new models like Mixture of Experts (MoE), parallelized and distributed across the entire compute system. Without CUDA—and deep kernel-writing capability—this is extremely difficult.

It’s the synergy of programmable architecture and NVIDIA’s extreme co-design. We can even offload computation to the network itself—NVLink or Spectrum-X. We simultaneously evolve processors, systems, network architectures, libraries, and algorithms. Without CUDA, I wouldn’t know where to begin.

Patel: This raises an interesting question about NVIDIA’s customers. 60% of your revenue comes from five hyperscale cloud providers. In another era, customers were professors running experiments—they needed CUDA and couldn’t use other accelerators. They just ran PyTorch on CUDA, and everything was optimized. But these hyperscalers *can* write their own kernels. In fact, to squeeze the last 5% performance from a specific architecture, they *must*. Anthropic and Google run mostly on their own accelerators—TPUs and Trainium. Even OpenAI, which uses GPUs, built Triton—because they need custom kernels. They don’t use cuBLAS or NCCL—they have their own software stack, compilable across accelerators. If most of your customers *can* and *are* building CUDA alternatives, how central is CUDA to cutting-edge AI running on NVIDIA?

Huang: CUDA is a rich ecosystem. If you develop on *any* computer, choosing CUDA first is extremely wise—because the ecosystem is so rich, and we support every framework. If you write custom kernels, our contribution to Triton is massive—Triton’s backend is packed with NVIDIA tech.

We’re happy to help every framework improve. Frameworks abound—Triton, vLLM, SGLang. Now a wave of reinforcement learning frameworks emerges—verl, NeMo RL. Post-training and RL are exploding. So if you develop on a specific architecture, CUDA makes the most sense—because you know its ecosystem is robust.

You know if something breaks, the issue is more likely in *your* code—not the mountain of underlying systems. Don’t forget the sheer scale of code involved. When the system fails, ask: Did *I* mess up—or did the computer? You always hope it’s *you*, because only then can you trust the computer. Obviously, we have bugs too—but crucially, our systems have been tested countless times. You can build confidently on them. That’s my first point: ecosystem richness, programmability, and capability.

Second—if you’re a developer, you want installed base. You want your software to run on many computers—not just your own, but clusters you or others manage—because you’re a framework developer. NVIDIA’s CUDA ecosystem *is* ultimately its greatest asset.

We now have hundreds of millions of GPUs deployed—across every cloud. From A10, A100, H100, H200, to L-series, P-series—every size, shape. If you’re a robotics company, you want that CUDA stack running directly inside your robot. We’re virtually everywhere. This installed base means once you develop software or models, they work *anywhere*. That value is incalculable.

Finally, we exist in every cloud—making us truly unique. If you’re an AI company or developer, you’re unsure which cloud provider you’ll partner with—or where to run your workload. No problem—we’re everywhere, including your own data center. Ecosystem richness, broad installed base, diverse presence—these combine to make CUDA priceless.

Patel: Makes sense. But let’s ask: how important are these advantages to your *largest* customers? CUDA may be valuable to many. But your revenue primarily comes from customers capable of building their own software stacks—especially if AI enters domains verifiable via rigorous reinforcement learning. Then the question becomes: who writes the fastest matrix multiplication and attention kernels on large clusters? A highly verifiable optimization problem.

Those hyperscalers can absolutely write custom kernels. Sure, NVIDIA’s cost-performance may still be superior—so they might choose NVIDIA. But then the question reduces to: who has better hardware specs—and more compute/bandwidth per dollar?

Historically, NVIDIA’s CUDA moat sustained >70% margins on AI hardware and software. But if your biggest customers can bypass that moat, can you maintain such high margins?

Huang: The number of engineers we assign to these AI labs, working side by side to optimize their software stacks, is staggering. Why? Because no one understands our architecture better than we do. These architectures aren’t as general-purpose as CPUs. CPUs are like Cadillacs: easy to drive, with cruise control, simple. NVIDIA GPUs and accelerators are like F1 race cars. Anyone can drive one at 160 km/h, but pushing it to the limit requires deep expertise. We also use AI extensively to write kernels.

I’m confident we’ll remain indispensable for a long time. Our expertise often lifts AI lab partners’ performance by 2x effortlessly. Optimizing a kernel—or the entire software stack—commonly yields 50%, 2x, or even 3x model speedups. Given their massive Hopper and Blackwell cluster scales, that’s enormous. Doubling performance equals doubling revenue.

NVIDIA’s compute stack delivers the world’s best total cost of ownership (TCO); no one beats it. No platform shows a better performance-to-TCO ratio than ours. InferenceMAX, the benchmark from Dylan Patel’s SemiAnalysis, is public, and anyone can use it. But TPUs aren’t tested on it, and neither is Trainium. I encourage them to use InferenceMAX to demonstrate their claimed ultra-low inference costs. It’s hard to get them to, because no one wants to.

Same with MLPerf—I’d love Trainium to show their claimed 40% advantage. Or TPUs to show cost advantages. But from first principles, their claimed advantages make no sense. So our success is simple: our TCO is unmatched.

Second, you say 60% of our revenue comes from the top five cloud providers, but most of that business is external-facing. For example, AWS’s NVIDIA chips are mostly for external customers, not internal use. Azure’s customers are clearly external, and so are Oracle’s. They prefer us because of our influence: we bring them the world’s best customers, all built on NVIDIA. And those customers build on NVIDIA because of our influence and versatility.

So I see a flywheel: installed base, architectural programmability, ecosystem richness—plus thousands of AI companies globally. If you’re an AI startup, which architecture do you pick? You pick the richest—and we’re the richest. You pick the largest installed base—and we’re the largest. You pick the most mature ecosystem. That’s the flywheel.

Combined, our performance-per-dollar is best—and customers’ token cost lowest. Our performance-per-watt is world-leading—so if a partner builds a 1-gigawatt data center, it should maximize revenue and token output—directly equaling revenue. You want maximum token output to maximize revenue—and we’re the world’s highest token-per-watt architecture. Also, if you aim to rent infrastructure, we have the world’s largest customer base. That’s why the flywheel spins.
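Huang's argument here is that once a data center's power budget is fixed, token throughput per watt sets its revenue ceiling, which is also the logic behind his earlier remark that doubling performance equals doubling revenue. A minimal Python sketch, assuming every token generated is sold at a flat price; the function name `annual_token_revenue` and all inputs are hypothetical.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def annual_token_revenue(power_watts: float, tokens_per_joule: float,
                         usd_per_million_tokens: float, utilization: float = 1.0) -> float:
    """Yearly revenue of a power-capped data center that sells every token it generates.

    tokens_per_joule is the same quantity as (tokens per second) per watt.
    """
    tokens_per_year = power_watts * utilization * SECONDS_PER_YEAR * tokens_per_joule
    return tokens_per_year / 1e6 * usd_per_million_tokens


# Hypothetical inputs: a 1-gigawatt site at 70% average utilization, same token price.
baseline = annual_token_revenue(1e9, tokens_per_joule=1.0, usd_per_million_tokens=2.0, utilization=0.7)
improved = annual_token_revenue(1e9, tokens_per_joule=2.0, usd_per_million_tokens=2.0, utilization=0.7)
print(improved / baseline)  # 2.0
```

With power as the binding constraint, revenue is linear in tokens per joule, so a more efficient architecture raises the ceiling of the same gigawatt site rather than merely lowering its costs.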

Patel: Interesting. I think the core question is the actual market structure. In principle, you could imagine a world with thousands of AI companies, each holding a roughly equal share of compute. But in reality, even through these five cloud providers, the real compute users on Amazon are Anthropic, OpenAI, and the major foundational labs. These big players have the capability and resources to run diverse accelerators.

If your claims about cost-performance and performance-per-watt are true—why did Anthropic just announce a multi-gigawatt TPU deal with Broadcom and Google, shifting most of their compute there? For Google, TPUs dominate their compute. So if I look at major AI companies, their compute was once all NVIDIA—and now it’s not. I wonder—if these paper advantages hold, why choose alternative accelerators?

Huang: Anthropic is a singular exception—not a trend. Think: without Anthropic, where would TPU growth come from? 100% from Anthropic. Same for Trainium—100% from Anthropic. It’s basically an open secret. It’s not that ASIC opportunities are booming—it’s that there’s just *one* Anthropic.

Patel: But OpenAI has a deal with AMD—and they’re building their own Titan accelerator.

Huang: Yes—but everyone acknowledges the vast majority of their compute still runs on NVIDIA. We’ll continue collaborating closely. I don’t mind others using alternatives—trying alternatives. How else would they know how good ours is? Sometimes you need reminding. We must continually earn our position.

People make bold claims all the time. Look how many ASIC projects get canceled. Just because you build an ASIC doesn’t mean you’ll beat NVIDIA; that’s not easy. Actually, expecting to beat us is unreasonable, unless NVIDIA has a fundamental flaw. But our scale and speed are undeniable: we’re the only company launching new products every year, with massive leaps each time.

Patel: I guess their logic is that an ASIC doesn’t need to beat NVIDIA; it only needs to come close enough, given the 70% margin you charge.

Huang: No—remember ASICs also have high margins. Say NVIDIA’s margin is 70% and ASICs’ is 65%. What did you save?

Patel: You mean like Broadcom?

Huang: Yes. You pay someone else. To my knowledge, ASIC margins are extremely high—they proudly claim astonishing ASIC margins.
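Huang's rhetorical question can be worked through with the standard gross-margin identity, margin = (price − cost) / price. The sketch assumes, purely for illustration, that both chips cost the same to build and uses the hypothetical 70% and 65% margins Huang offers; it ignores software, networking, power, and the buyer's own engineering costs. The helper name `price_from_margin` is hypothetical.

```python
def price_from_margin(unit_cost: float, gross_margin: float) -> float:
    """Selling price implied by a gross margin, where margin = (price - cost) / price."""
    return unit_cost / (1.0 - gross_margin)


# Hypothetical: both chips cost the same to build (normalized to 1.0).
unit_cost = 1.0
gpu = price_from_margin(unit_cost, 0.70)   # about 3.33x build cost
asic = price_from_margin(unit_cost, 0.65)  # about 2.86x build cost

# Sticker-price saving from choosing the lower-margin part at equal performance.
print(f"saving at equal performance: {1 - asic / gpu:.1%}")  # 14.3%

# Relative performance at which the higher-margin part costs the same per unit of work.
print(f"break-even performance ratio: {gpu / asic:.2f}x")  # 1.17x
```

Under these assumptions the lower-margin part is about 14% cheaper on sticker price, and that saving disappears if the higher-margin part does roughly 1.17x the useful work, which is the kind of performance-per-TCO argument Huang makes earlier in the interview.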

So why did Anthropic go elsewhere? Back then, we simply couldn’t do what was needed. I didn’t fully grasp how hard it is to build a foundational AI lab like OpenAI or Anthropic, or how much investment they require from suppliers. We lacked the capacity to invest billions in Anthropic in exchange for a compute commitment. Google and AWS *did* have that capacity, invested massively upfront, and earned Anthropic’s compute commitment. We just couldn’t.

My mistake was failing to realize they truly had no choice—no VC would fund $5B–$10B into an AI lab hoping it becomes Anthropic. That was my oversight. Even if I’d understood, I doubt we could’ve done it then. But I won’t repeat that mistake.

I’m happy to invest in OpenAI—and help them scale. I believe it’s necessary. Later, when I *could*, Anthropic approached us—I was delighted to invest and help them scale. We simply couldn’t then. If I could rewind time—and NVIDIA were this large back then—I’d absolutely do it.

Why doesn’t NVIDIA become a hyperscale cloud provider?

Patel: For years, NVIDIA has been the company making money from AI, and big money. Now you’re investing: reportedly $30B in OpenAI and $10B in Anthropic. Their valuations have soared and will likely keep rising. You’ve supplied them compute for years and seen their trajectory, and just one or two years ago they were worth a tenth of what they are today, or even less. With all that cash, either NVIDIA should have become a foundational lab itself and invested massively, or struck these deals earlier, at lower valuations. You had the cash. So why not act earlier?

Huang: We acted as soon as feasible—and as soon as we gained capacity. If I could, I’d have acted earlier. When Anthropic needed us, we simply lacked capacity—so it wasn’t on our radar.

Patel: How so? Was it about money?

Huang: Yes—investment scale. We’d never made external investments—let alone at that magnitude. We didn’t realize we needed to. I assumed they’d raise from VCs like any company. But what they wanted—VCs couldn’t deliver. OpenAI’s ambition—VCs couldn’t deliver. I recognize that now—but didn’t then.

That’s their genius—realizing early they needed to do it themselves. I’m thrilled they succeeded—even if it meant Anthropic went elsewhere. Anthropic’s existence benefits the world—and I’m glad.

Patel: I think you’re still making huge money—and more each quarter. With all this cash flowing in, what *should* NVIDIA do? One answer is the emergence of a whole intermediary ecosystem—converting capex into opex for labs renting compute. Chips are expensive—but generate huge lifetime revenue as AI models improve. Token value rises—but deployment costs are high. NVIDIA has cash for capex. Reportedly, you’re backing CoreWeave with up to $6.3B—and have already invested $2B. Why not become a cloud provider yourself? Why not become a hyperscaler—and rent compute directly?

Huang: It’s our company philosophy—and I believe it’s wise. We should do only what’s necessary—and as little as possible. Meaning: in building our compute platform, if *we* don’t do it, I truly believe *no one* will. If we don’t take the risks we take—if we don’t build NVLink our way, or the entire software stack, or create the ecosystem our way, or invest 20 years in CUDA—even while losing money most of that time—if we don’t do it, *no one* will.

Take the CUDA-X libraries. We started building domain-specific libraries 15 years ago because we knew that if we didn’t build them, whether for ray tracing, image generation, early AI work, models, data processing, structured data, or vector data, *no one* would. I’m utterly convinced of that. We built cuLitho for computational lithography; if we hadn’t, *no one* would have. That’s why accelerated computing advanced the way it did.

So we *should* do that—and throw ourselves into it completely. Yet there are many clouds—if I don’t do it, someone else will. So our “do only what’s necessary—and as little as possible” philosophy lives in our company daily. Every action I take reflects this lens.

For clouds—if we hadn’t supported CoreWeave, these new AI clouds wouldn’t exist. Without our support, CoreWeave wouldn’t exist. Without supporting Nscale, they wouldn’t achieve what they have. Without Nebius, they wouldn’t be where they are. Now they’re thriving.

It’s a business model. We do what’s necessary—and as little as possible. So we invest in our ecosystem—because I want it to thrive. I want this architecture—and AI—to connect as many industries and countries as possible—building the entire planet on AI—and on the U.S. tech stack. That’s the vision we pursue.

One more thing: there are many outstanding foundational model companies—and we strive to invest in *all* of them. That’s another thing we do. We don’t pick winners—and we need to support *everyone*. It’s what we should do—and what we enjoy. It’s vital to our business. But we go to great lengths *not* to pick winners—so if I invest in one, I invest in all.

Patel: Why deliberately avoid picking winners?

Huang: First—it’s not our job. Second—when NVIDIA started, there were 60 3D graphics companies—and we were the *only* survivor. If you’d guessed which of those 60 would succeed, NVIDIA would’ve ranked *first* on the “least likely to succeed” list.

That was long ago. NVIDIA’s graphics architecture was *fundamentally* wrong—not slightly wrong. We built a completely wrong architecture—developers couldn’t support it—and it would never succeed. We reasoned well from first principles—but arrived at the wrong solution. Everyone wrote us off—but we survived.

So I have enough humility to recognize: don’t pick winners. Either let them fend for themselves—or support *everyone*.

Patel: One thing I don’t grasp: you say you don’t prioritize new clouds just because they’re new—you want to support them. But you also listed several new clouds saying they wouldn’t exist without NVIDIA. How do those reconcile?

Huang: First—they must *want* to exist—and ask for our help. When they want to exist—with a business plan, expertise, and passion—they clearly believe they have some capability. But if they ultimately need investment to launch, we’ll support them. The sooner they spin the flywheel, the better.

Your question: do we want to be in the financing business? Answer: no. Others do financing—we’d rather partner than become financiers ourselves. Our goal is to focus on what we do—and keep our business model as simple as possible—supporting our ecosystem.

When an organization like OpenAI needs investment on the scale of $30B before its IPO, and we’re convinced it will become an extraordinary company (the world needs it, wants it, and I want it to thrive), we support it and help it scale. We make these investments *because they need us*. But we don’t aim to do *as much as possible*; we aim to do *as little as possible*.

Patel: This may be obvious, but we’ve endured GPU shortages for years, and the shortages are intensifying as models improve.

Huang: We *do* face GPU shortages.

Patel: Yes. NVIDIA is known for allocating scarce supply not simply to the highest bidder, but in a way that ensures new clouds exist: some to CoreWeave, some to Crusoe, some to Lambda. What does NVIDIA gain from that? First, do you agree with this description of how you segment the market?

Huang: No, I disagree entirely. Your premise is fundamentally flawed. First, if you don’t place a purchase order, talking is useless; until we receive orders, what can we do? So the first thing we do is work closely with *everyone* to finalize forecasts, because these systems take a long time to build, and so do data centers. Forecasting and coordinating supply and demand is our top priority.

Second, we forecast with as many people as possible, but ultimately you *must* place an order. If, for whatever reason, you don’t, what can I do? At some point it’s first-come, first-served. Beyond that, if your data center isn’t ready, or certain components needed to bring it online aren’t ready, we may serve other customers first. That maximizes our factory throughput, and we may adjust accordingly.

Other than that, priority is first-come, first-served. You *must* place a purchase order. Of course there are stories, like the article about Larry Page and Elon Musk having dinner with me and begging for GPUs. That never happened. We did have a pleasant dinner, but they never begged for GPUs. They just needed to place orders, and once they did, we allocated capacity to them. It’s that straightforward.

Patel: Okay. So it sounds like a queue, where delivery depends on data center readiness and when the order was placed. But that still isn’t “highest bidder wins.” Is there a reason you don’t do that?

Huang: We never do that.

Patel: Why not highest-bidder-wins?

Huang: Because it’s bad business practice. You set a price, and people decide whether to buy. I know other chip companies raise prices when demand spikes, but we don’t; it’s never been our approach. You can trust us. I’d rather be reliable and be the bedrock of the industry, so you never have to second-guess us later. If I quote a price, that’s the price. Period. If demand surges, prices stay stable.

Patel: And that’s also why your relationship with TSMC is so strong, right?

Huang: Yes. NVIDIA has done business with them for nearly 30 years. NVIDIA and TSMC have no formal legal contract, but there has always been a rough fairness between us. Sometimes I’m right, sometimes I’m wrong; sometimes I get a good deal, sometimes a bad one. But overall, the relationship is excellent. I can fully trust and rely on them.

The one thing you can trust about NVIDIA is this: this year’s Vera Rubin will be incredible. Next year, Vera Rubin Ultra arrives. The year after that, Feynman arrives. And the year after that, something I haven’t named yet. You can count on us *every year*. Go to any ASIC team in the world, pick any one, and ask: “Can I bet my entire business on you and trust that you’ll deliver for me *every year*?” Can you ask them: “Will my token cost drop by an order of magnitude *every year*, and can I count on that like clockwork?”

I’ve said similar things about TSMC. Historically, you couldn’t say that to any other foundry; today, you *can* say it to NVIDIA. You can trust us *every year*. Want to buy a $1B AI factory? Fine. $100M? Fine. $10M, or just one rack? Fine. Just one GPU? Fine. Want to order $100B? Fine. We’re the *only* company in the world today you can say that to.

I can say the same to TSMC: want one, or a billion? Fine. We just go through planning and do what mature people do. That’s how NVIDIA can be the bedrock of the world’s AI industry, a status that has taken us decades to earn. It’s a massive commitment that demands massive dedication. Our company’s stability and consistency are vital.

Should AI chips be sold to China?

Patel: I’d like to ask about China. I honestly don’t know whether I support selling chips to China, but I like playing devil’s advocate with my guests. Dario Amodei supports export controls, and with him I asked why the U.S. and China can’t both have brilliant minds in their data centers. Since you’re on the other side, I’ll flip it.

One way to think about it: Anthropic recently released the Mythos preview. They haven’t launched it publicly because it has strong cyberattack capabilities and the world isn’t ready; they’re waiting for those zero-day vulnerabilities to be patched. But they say it discovered thousands of high-severity vulnerabilities across every major operating system and browser. It found one in OpenBSD, an operating system built specifically to be hardened against such vulnerabilities, and that bug had existed for 27 years.

So if Chinese companies, labs, or the government obtain AI chips, train a Mythos-level model, and run millions of instances of it, does that threaten U.S. companies and national security?

Huang: First, Mythos was trained on fairly ordinary compute, at fairly ordinary scale, just by an exceptionally talented company. That type and amount of compute is actually abundant in China. You have to recognize that the chips *exist* there.

They manufacture over 60% of the world’s mainstream chips; it’s an enormous industry for them. They have many of the world’s finest computer scientists. As you know, a large share of the researchers in AI labs worldwide are Chinese, roughly 50% of AI researchers globally. So the question is: given that they already hold so many assets (abundant energy, a massive chip supply, and nearly half the world’s AI talent), if you’re truly concerned, what’s the *best* way to create a safer world?

Crushing them and turning them into enemies is probably *not* the best answer. They’re competitors, and we want America to win. But I believe dialogue, specifically research dialogue, is the safest path. Right now, that kind of engagement with China in this field is conspicuously absent. Dialogue between U.S. and Chinese AI researchers is critical. Both sides need to agree on what AI *should not* be used for; that’s essential.

Finding vulnerabilities in software is exactly what AI *should* do. Will it find vulnerabilities in many software systems? Absolutely. There are a lot of vulnerabilities out there, and AI software itself has many. That’s its job, and I’m thrilled AI has reached a level where it boosts productivity so dramatically.

One underappreciated thing is the rich ecosystem around cybersecurity, AI cybersecurity, AI safety, and AI privacy. A whole ecosystem of AI startups is working toward a future in which thousands of AI agents watch over one powerful AI agent to keep it safe. That future *will* arrive.

The idea of a single AI agent roaming freely, unmonitored, is a bit crazy. We clearly need this ecosystem to thrive. And it turns out this ecosystem needs to be open source: it requires open models and open software stacks so that *all* AI researchers and top computer scientists can build equally powerful AI systems and keep AI safe. So one thing we must do is keep the open-source ecosystem vibrant; that cannot be ignored. Much of it comes from China, and we shouldn’t stifle it.

Of course, we want America to have as much compute as possible. We’re constrained by energy, but many people are working on that, and we can’t let energy become a national bottleneck. But we also want *all* AI developers worldwide to build on the U.S. tech stack and contribute their AI advances, especially the open-source parts, to the U.S. ecosystem. Creating two ecosystems, one open source but runnable only on foreign tech stacks and one closed but running on U.S. tech stacks, would be extremely foolish. I think it would be catastrophic for the U.S.

Patel: That’s a lot; let me summarize. China has compute, but some estimates say that because of export controls on EUV chipmaking tools, their actual output of compute, measured in FLOPs, is only about one tenth of America’s. So can they train a Mythos-level model? Yes. But since we have more FLOPs, U.S. labs reach these capabilities first, as Anthropic did.

Also, even if they train such a model, what matters is deploying it at scale. A cyberattacker with a million instances is far more dangerous than one with a thousand, so inference compute is critical. The fact that they have so many excellent AI researchers is precisely what’s frightening, because what makes those researchers more effective? Compute.

If you talk to any U.S. AI lab, they’ll tell you compute is their constraint. DeepSeek’s founder and the leadership of the Tongyi Qwen team have said the same: they’re compute-constrained. So isn’t it better for U.S. companies to reach Mythos-level capabilities first, giving our society time to prepare, while China lags because it has less compute?

Huang: Our goal should always be to get there first and always have more compute. But for the outcome you describe to hold, you have to push the scenario to an extreme: they would need to have *zero* compute. As long as they have *some*, the question becomes how much is *enough*. In fact, China’s compute is enormous. You just said they’re the world’s second-largest computing market. If they really concentrate their compute on one thing, they’re fully capable of it.

Patel: But is that true? Some estimates say SMIC lags behind on process nodes.

Huang: Their energy supply is astonishing, right? AI is a parallel computing problem, isn’t it? Why can’t they use nearly free energy to deploy 4x or 10x more chips? They have so much energy. They have data centers that are completely empty and fully powered. Their infrastructure capacity is huge. If they want to, they can cluster more chips, even 7nm ones.

Their chipmaking capacity is among the largest in the world; everyone in the semiconductor industry knows they dominate mainstream chips. They have excess capacity, even overcapacity. So the idea that China won’t get AI chips is pure nonsense. Of course, if they had *zero* compute, the U.S. would lead overwhelmingly, but that’s not a real scenario. They *already* have massive compute. The threshold you fear, they’ve already met and surpassed.

So I think you misunderstand: AI is a five-layer cake, and the bottom layer is *energy*. When energy is abundant, it compensates for chip limitations; when chips are abundant, they compensate for energy limitations. The U.S., for example, is short on energy, which is why NVIDIA has to relentlessly advance its architectures and do extreme co-design, so that our throughput per watt is astronomically high given limited energy and limited chip shipments.

But if your power supply is abundant and nearly free, do you care about performance per watt? You’ll have plenty. You can use older chips. 7nm chips are essentially Hopper-class. I have to tell you, most models today were trained on the Hopper generation, so 7nm chips are already sufficient. Abundant energy is *their* advantage.

Patel: But there’s also the question of whether they can manufacture *enough* chips.

Huang: They *can*. The evidence? Huawei just had the best year in its history.

Patel: How many chips did they ship?

Huang: Huge numbers. Millions, far more than Anthropic has.

Patel: The question is how many logic chips and how much memory SMIC can produce.

Huang: Let me tell you the facts. They have huge volumes of logic chips, and huge volumes of HBM2 memory.

Patel: But as you know, training and inference are often bottlenecked by memory bandwidth. If you use HBM2 (I don’t recall the exact numbers), bandwidth may be an order of magnitude lower than in your latest products. That’s very significant.

Huang: Huawei is a networking company.

Patel: But that doesn’t change the fact that you need EUV to manufacture the most advanced HBM.

Huang: Completely false. You can cluster them, as we do with NVL72. They’ve demonstrated silicon photonics, connecting all of the computation into one giant supercomputer. Your premise is entirely wrong.

The fact is, China’s AI development is progressing quite smoothly. The world’s best AI researchers, despite limited compute, come up with incredibly clever algorithms. Remember, I said Moore’s Law improves performance by about 25% a year, yet through excellent computer science we can still boost algorithmic performance 10x. Excellent computer science is the lever.

Undoubtedly, MoE (mixture of experts) is a great invention, and all those clever attention mechanisms reduce computation. We have to acknowledge that most AI progress comes from algorithmic advances, not just raw hardware. If most progress comes from algorithms, computer science, and programming, then tell me: isn’t their army of AI researchers their fundamental advantage? We see it. DeepSeek is *not* an insignificant advance. If DeepSeek-like breakthroughs emerge first on Huawei platforms, that would be terrible for our country.

Patel: Why? Models like DeepSeek are open source and currently run on any accelerator. Why wouldn’t that continue?

Huang: Suppose it’s optimized for Huawei and their architecture; that puts us at a disadvantage. You describe a scenario I consider *good*: a company develops software and an AI model that run best on the U.S. tech stack. I see that as *good*, while you treat it as a *bad* premise. Let me tell you what the *real* bad news would be: *all* AI models being developed on, and running best on, non-U.S. hardware.

Patel: I just don’t see evidence of differences large enough to prevent switching accelerators. U.S. labs *do* run models across all clouds and all accelerators.

Huang: I *am* the evidence. Take a model optimized for NVIDIA and try running it on something else.

Patel: But U.S. labs *do* run them that way.

Huang: And they don’t run *better*. NVIDIA’s success is the perfect evidence: AI models are *built* on our software stack and run *best* on it. How is that illogical?

Patel: Anthropic’s models run on GPUs—and on Trainium and TPUs.

Huang: Porting them takes enormous work. But go to the Global South, or the Middle East, where people use models out of the box. If every AI model runs best on someone else’s tech stack, claiming that this is *good* for the U.S. is absurd.

Patel: But I don’t understand this argument. Suppose a Chinese company launches the next Mythos first. They find all the security vulnerabilities in U.S. software first, but they run it on NVIDIA hardware and deploy it globally. How is that *good*?

Huang: That’s *not* good. So let’s prevent that.

Patel: Why do you think they’re fully substitutable, so that if you don’t supply them compute, Huawei will replace you entirely? They’re behind, right? Their chips are inferior to yours.

Huang: There’s evidence now: their chip industry is enormous.

Patel: You can directly compare the H200 and Huawei’s 910C on FLOPs, bandwidth, or memory. Huawei’s chip comes in at roughly one half to one third of the H200.

Huang: They compensate with quantity.

Patel: So your argument is that they have all this readily available energy, and they need chips to fill it.

Huang: And they’re excellent manufacturers.

Patel: I believe they may eventually surpass everyone in manufacturing, but the next few years are critical.

Huang: Which specific years are “critical”?

Patel: The next few years, when we’ll have models capable of all kinds of cyberattacks.

Huang: In that case, if the next few years are critical, we must ensure that *all* AI models are built on the U.S. tech stack.

Patel: Even if they’re built on the U.S. tech stack, how do we prevent them from launching Mythos-level cyberattacks once they gain more advanced capabilities?

Huang: There’s never any guarantee.

Patel: But if we get there earlier, we can prepare.

Huang: Listen, why would you sacrifice an entire market at one layer of the AI industry just to benefit another layer? There are five layers, and each one has to succeed. The layer that most needs to succeed is AI *applications*. Why are you so fixated on that one AI model and that one company? To what end?

Patel: Because these models enable incredible attack capabilities, and you need compute to run them.

Huang: Energy, chips, and the AI researcher ecosystem make it possible.

Patel: Okay, let’s step back. China has to build enough 7nm capacity on its own. Don’t forget, they’re stuck at 7nm while you move to 3nm, 2nm, and 1.6nm with the Feynman generation. You’ll be on 1.6nm while they’re on 7nm. The only way they can make up the per-chip performance gap is with quantity, and their energy is abundant. So the more chips you sell them, the more total compute they have.

Huang: Listen, I just think your framing is too absolute. America *should* lead. America’s compute scale is 100x larger than anywhere else. Okay, America *does* lead now. NVIDIA builds the most advanced technology, and we make sure U.S. labs hear about it first and get first access to buy it. If they lack funds, we even invest in them. America *should* lead, and we do everything we can to ensure it. Do you agree on that first point? We *are* doing it.

Patel: But if their bottleneck is compute, how does shipping chips to China help America lead?

Huang: No. We have Vera Rubin for America; Vera Rubin is *for* America. Now, am I part of America? Do you count me as American?

Patel: Yes.

Huang: And NVIDIA? You count NVIDIA as a U.S. company, right? First, why can’t we take a more balanced regulatory approach, so that NVIDIA can win globally instead of ceding the global market? Why force America to surrender the world?

The chip industry is part of the U.S. ecosystem, part of U.S. tech leadership, part of the AI ecosystem, and part of AI leadership. Why do your policies and your philosophy steer America toward abandoning such a massive share of the global market?

Patel: Amodei used an analogy along the lines of: “It’s like selling nuclear weapons to hostile nations and boasting that the missile casings are made by Boeing.” In a sense, that too “supports the U.S. tech stack.” Fundamentally, you’re giving adversaries this capability.

Huang: Equating AI with what you just described is absurd.

Patel: But AI is like enriched uranium: it has both beneficial and harmful uses. And we still don’t ship enriched uranium abroad.

Huang: That’s a poor—and illogical—analogy.

Patel: But if this compute can run a model that exploits zero-days across *all* U.S. software, how is it *not* a weapon?

Huang: First, the solution is dialogue: with researchers, with China, with every nation, to make sure people don’t use the technology that way. That dialogue *must* happen.

Second, we must ensure America leads, with Vera Rubin and Blackwell supplied in massive volumes and deployed at scale in America. Our results clearly show this is happening. We have massive compute here, and excellent AI researchers.

But we also have to recognize that AI isn’t just a model. AI is a five-layer cake. Every layer matters, and we want America to win *every* layer, including chips. Abandoning an entire market won’t let America win the chip layer, or the computing stack, in the long-term tech race. That’s the truth.

Patel: I think the key question is how selling chips to China *helps* us win in the long run. Tesla sold EVs in China for years, and the iPhone sells well there. But that didn’t lock China into the U.S. tech ecosystem. They built their own EVs, and now they dominate globally. The same goes for smartphones.

Huang: When we began this conversation, you acknowledged that NVIDIA’s position is very different. You used the word “moat.” For our company, the most important thing is the richness of our ecosystem, which centers on developers. 50% of AI developers are in China. America shouldn’t abandon that.

Patel: But America has many NVIDIA developers, and that doesn’t stop U.S. labs from adopting other accelerators later. In fact, they already do, and that’s fine. I don’t see why China would be any different if you sell them NVIDIA chips, just as Google uses both TPUs and NVIDIA.

Huang: We have to keep innovating. As you may know, our market share is growing, not shrinking. You implied a premise: that even if we compete in China, we’ll inevitably lose. I’m not someone who wakes up thinking I’m going to lose. That loser mindset, and that loser premise, mean nothing to me.

We’re not building cars. With cars, it’s easy to switch brands; computing isn’t like that. There are reasons x86 endures and reasons ARM is sticky: these ecosystems are hard to replace. Switching takes enormous time and effort, and most people won’t do it. So our job is to keep nurturing this ecosystem and advancing the technology so we can compete in the market.

You assume inevitable loss and conclude that we should abandon a market. I can’t accept that logic. It makes no sense. I don’t think America is a loser, and our industry isn’t a loser.

The key problem is that you’ve gone to extremes. Your argument starts from the extreme premise that giving *any* compute at a critical moment means losing *everything*. That’s naïve.
