Jensen Huang Is Satoshi Nakamoto

TechFlow Selected

Two tokens, same name, same underlying structure: computing power goes in, valuable output comes out.

Author: Luo Yihang

In January 2009, an anonymous person invented something called a “token”: you invest computing power and receive tokens, which circulate, get priced, and are traded within a consensus network. Thus, the entire crypto economy was born. Over a decade later, people still debate whether such tokens hold any intrinsic value.

In March 2025, a man in a leather jacket redefined another kind of “token.” You invest computing power and produce tokens, which are consumed the instant they are generated during AI inference: thinking, reasoning, coding, making decisions. Thus, the entire AI economy accelerates. No one debates whether these tokens hold value; you just burned several million of them this morning.

Two kinds of tokens, sharing the same name and the same underlying structure: computing power goes in; something valuable comes out.

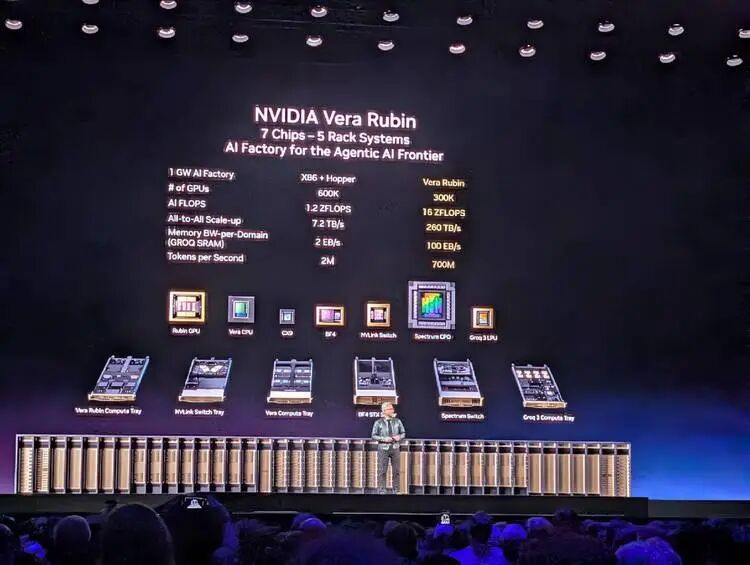

In March 2026, I sat inside the NVIDIA GTC conference hall and listened to Jensen Huang deliver a keynote with almost no product pitching. Yes, he unveiled Vera Rubin, a combined CPU-GPU platform. But this time, he didn’t talk about chip specs or manufacturing processes. Instead, he presented a complete economic framework for token production, pricing, and consumption:

Which model corresponds to which token throughput; which token throughput maps to which pricing tier; and which hardware tier is required to support each pricing tier.

He even laid out a data-center compute allocation plan for CEOs and corporate decision-makers holding enterprise checkbooks: 25% for the free tier, 25% for mid-tier, 25% for high-tier, and 25% for premium-tier workloads.

Yes, this time he didn’t explicitly pitch a specific GPU configuration, as he had done two years earlier with Blackwell. This time, he was selling something much bigger. After two hours, I realized the single sentence he most wanted to convey was: “Come and consume tokens, and only NVIDIA’s factories can produce them.”

At that moment, I understood: this man, and the anonymous person who mined the first token 17 years ago, are doing structurally identical things.

The Same Transformation Rule

The pseudonymous “Satoshi Nakamoto” authored a nine-page whitepaper in 2008 that defined a rule: invest computing power to solve a computational puzzle whose solution proves the work was done (Proof of Work), and earn crypto tokens as a reward.

The elegance of this rule lies in its trustlessness—you don’t need to trust anyone. As long as you accept the rule, you automatically become a participant in this economy. The rule works—after all, it brought together so many suspicious, self-interested actors.
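Satoshi’s rule is compact enough to sketch. The toy Python below is a simplified illustration, not Bitcoin’s implementation: the header is an arbitrary byte string, difficulty is expressed as a hypothetical count of leading zero bits, and the digest is compared as a big-endian integer, whereas Bitcoin hashes an 80-byte block header and encodes its target in a compact `bits` field. It does capture the asymmetry the rule depends on: finding a valid nonce takes many hashes, while checking one takes a single hash, so no participant has to trust another.

```python
import hashlib
from typing import Optional, Tuple


def double_sha256(data: bytes) -> bytes:
    """SHA-256 applied twice, as Bitcoin does for block hashes."""
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def target_for(difficulty_bits: int) -> int:
    """A hash 'wins' if, read as a 256-bit integer, it is below this target.
    Each extra difficulty bit halves the target and doubles the expected work."""
    return 1 << (256 - difficulty_bits)


def mine(header: bytes, difficulty_bits: int,
         max_nonce: int = 2**32) -> Optional[Tuple[int, bytes]]:
    """Brute-force search for the first nonce whose hash falls below the target."""
    target = target_for(difficulty_bits)
    for nonce in range(max_nonce):
        digest = double_sha256(header + nonce.to_bytes(4, "little"))
        if int.from_bytes(digest, "big") < target:
            return nonce, digest
    return None  # nonce space exhausted without a solution


def verify(header: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Anyone can check the work with a single hash; no trust required."""
    digest = double_sha256(header + nonce.to_bytes(4, "little"))
    return int.from_bytes(digest, "big") < target_for(difficulty_bits)


if __name__ == "__main__":
    found = mine(b"toy block header", difficulty_bits=16)
    if found is not None:
        nonce, digest = found
        print(nonce, digest.hex(), verify(b"toy block header", nonce, 16))
```

Each extra difficulty bit doubles the expected number of attempts. Bitcoin tunes its target more finely, retargeting every 2,016 blocks so that blocks keep arriving roughly every ten minutes however much hash power joins.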

And at GTC 2026, Jensen Huang did something structurally identical.

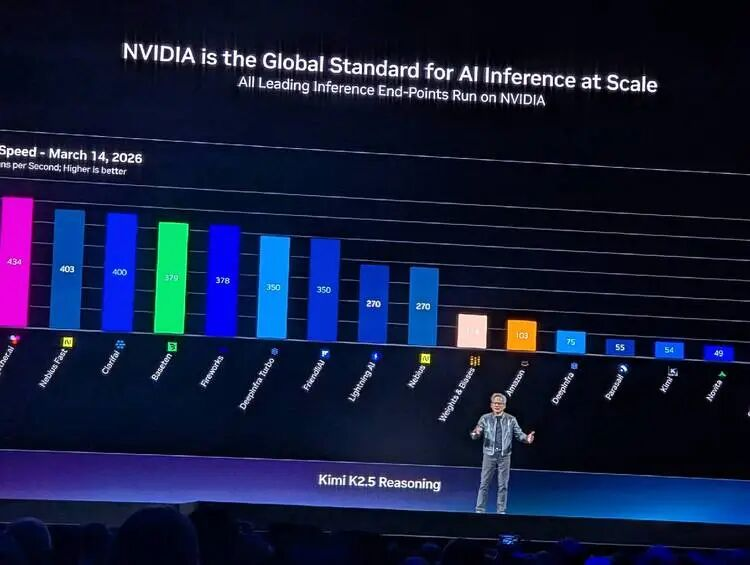

He displayed a chart illustrating the trade-off between inference efficiency and the speed at which each user receives tokens: the Y-axis shows throughput (tokens produced per megawatt of power), while the X-axis shows interactivity (the token speed each user perceives). Beneath the X-axis, he labeled five pricing tiers: Free (Qwen 3, $0 per million tokens); Medium (Kimi K2.5, $3 per million); High (GPT MoE, $6 per million); Premium (GPT MoE with 400K context, $45 per million); and Ultra ($150 per million).
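The tier prices on that slide lend themselves to quick arithmetic. The sketch below simply encodes the five per-million-token prices as quoted above and prices a workload; the 5-million-token figure is a hypothetical stand-in for the “several million tokens” mentioned earlier, not a number from the keynote.

```python
# Per-million-token prices for the five tiers, as quoted from the GTC 2026 slide.
TIER_PRICE_PER_MILLION_USD = {
    "Free": 0.0,      # Qwen 3
    "Medium": 3.0,    # Kimi K2.5
    "High": 6.0,      # GPT MoE
    "Premium": 45.0,  # GPT MoE with 400K context
    "Ultra": 150.0,   # no model named for this tier in the description
}


def workload_cost(tokens: int, tier: str) -> float:
    """Cost in USD of `tokens` tokens billed at the given tier's price."""
    return tokens / 1_000_000 * TIER_PRICE_PER_MILLION_USD[tier]


if __name__ == "__main__":
    morning_tokens = 5_000_000  # hypothetical: "several million tokens"
    for tier in TIER_PRICE_PER_MILLION_USD:
        print(f"{tier:>8}: ${workload_cost(morning_tokens, tier):,.2f}")
```

The spread is the point of the chart: the same five million tokens cost nothing at the Free tier, $15 at Medium, and $750 at Ultra, a 50-fold gap between the Medium and Ultra prices.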

This chart could practically serve as the cover of Jensen’s “token economics” whitepaper.

Satoshi defined “what constitutes valuable computation”: finding a SHA-256 hash below the network’s target value is valuable. Jensen defined “what constitutes valuable inference”: producing tokens at a specific speed, for specific use cases, under given power constraints, is valuable.

Neither Satoshi nor Jensen directly produces tokens. Both define the rules governing token production and pricing.

One sentence Jensen uttered onstage could be quoted verbatim in the abstract of a token economics whitepaper:

“Tokens are the new commodity, and like all commodities, once it reaches an inflection, once it becomes mature, it will segment into different parts.”

Tokens are the new commodity. And like all mature commodities, they naturally stratify. He isn’t describing the status quo—he’s forecasting a market structure, then precisely aligning his hardware product lineup with every layer of that structure.

Even the vocabulary runs in parallel: crypto tokens come out of “mining,” AI tokens come out of “inference,” and each word names a production process that turns computation into tokens.

At their core, both mining and inference convert electricity into money. Miners pay for electricity to mine crypto tokens, then sell them. AI providers pay for electricity to run the models and agents that generate AI tokens, then sell those tokens to developers by the million. The middle steps differ, but the endpoints are identical: electricity meters on the left, revenue on the right.

Two Ways to Write Scarcity

Satoshi’s most consequential design decision wasn’t Proof of Work; it was capping Bitcoin’s total supply at 21 million coins. He engineered artificial scarcity in code: the block reward halves every 210,000 blocks, so no matter how many mining rigs flood the network, Bitcoin’s supply will never exceed 21 million. This scarcity anchors the value of the entire crypto economy.
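The cap comes from the block-subsidy schedule. The sketch below reproduces that arithmetic under Bitcoin’s standard parameters: an initial reward of 50 BTC, halving every 210,000 blocks, with amounts tracked in integer satoshis and halved by a right shift that discards fractions. It models issuance only and ignores transaction fees and edge cases such as the unspendable genesis-block reward; the function names are illustrative, though the shift-based halving mirrors Bitcoin Core’s `GetBlockSubsidy`.

```python
COIN = 100_000_000           # satoshis per bitcoin
INITIAL_SUBSIDY = 50 * COIN  # block reward at launch, in satoshis
HALVING_INTERVAL = 210_000   # blocks between halvings


def block_subsidy(height: int) -> int:
    """Reward in satoshis for the block at `height`, halved by right shift."""
    halvings = height // HALVING_INTERVAL
    if halvings >= 64:  # same guard Bitcoin Core uses against oversized shifts
        return 0
    return INITIAL_SUBSIDY >> halvings


def total_supply_sats() -> int:
    """Sum the subsidy over every halving era until it reaches zero."""
    total, era = 0, 0
    while (subsidy := block_subsidy(era * HALVING_INTERVAL)) > 0:
        total += subsidy * HALVING_INTERVAL
        era += 1
    return total


if __name__ == "__main__":
    sats = total_supply_sats()
    print(sats, sats / COIN)  # slightly under 21 million BTC
```

Summed this way, issuance comes to 2,099,999,997,690,000 satoshis, just under 21 million BTC, because each truncating halving drops fractions of a satoshi.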

Jensen, by contrast, engineers natural scarcity using physical laws. He said:

“You still have to build a gigawatt data center. You still have to build a gigawatt factory, and that one gigawatt factory for 15 years amortized… is about $40 billion even when you put nothing on it. It's $40 billion. You better make darn sure you put the best computer system on that thing so that you can have the best token cost.”

A 1 GW data center can never become 2 GW. This isn’t a software constraint—it’s physics.

Land, electricity, cooling—each has hard physical limits. The number of tokens your $40 billion factory can produce over its 15-year lifetime depends entirely on what compute architecture you install inside it.
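Jensen’s $40 billion figure can be turned into a per-token cost floor with simple arithmetic. The sketch below assumes straight-line amortization over 15 years with no financing or electricity costs and divides by lifetime token output; the factory-wide throughputs are hypothetical placeholders, not NVIDIA figures. It illustrates the claim above: with the factory’s cost fixed, the architecture that produces more tokens per second produces cheaper tokens.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def annual_fixed_cost(capex_usd: float, years: float) -> float:
    """Straight-line amortization: the same cost is charged every year."""
    return capex_usd / years


def fixed_cost_per_million_tokens(capex_usd: float, years: float,
                                  tokens_per_second: float) -> float:
    """Amortized fixed cost per million tokens, assuming the factory runs
    at `tokens_per_second` around the clock for its whole lifetime."""
    lifetime_tokens = tokens_per_second * years * SECONDS_PER_YEAR
    return capex_usd / (lifetime_tokens / 1_000_000)


if __name__ == "__main__":
    capex, years = 40e9, 15  # figures quoted in the keynote
    print(f"per year: ${annual_fixed_cost(capex, years) / 1e9:.2f}B")
    # Hypothetical factory-wide throughputs for two architectures.
    for tps in (50e6, 100e6):
        cost = fixed_cost_per_million_tokens(capex, years, tps)
        print(f"{tps:,.0f} tokens/s -> ${cost:.2f} per million tokens")
```

Doubling throughput halves the fixed cost per million tokens, which is why Jensen insists on putting “the best computer system” inside the $40 billion shell.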

Satoshi’s scarcity can be forked. Dislike the 21-million cap? Fork the code, change it to 200 million, give the new chain a name, and write your own whitepaper. People did exactly that (Litecoin, for one, copied Bitcoin’s code and set its cap at 84 million), and they kept doing it, enthusiastically.

But Jensen’s scarcity cannot be forked. You cannot fork the Second Law of Thermodynamics. You cannot fork a city’s grid capacity. You cannot fork the physical area of a plot of land.

Yet whether scarcity is written into code, as with Satoshi, or imposed by physics, as with Jensen, it leads to the same outcome: a hardware arms race.

Mining hardware progressed CPU → GPU → FPGA → ASIC, and each generation of specialized hardware rendered the previous one obsolete. AI training and inference now repeat the pattern: Hopper → Blackwell → Vera Rubin → Groq LPU. General-purpose hardware starts the wave; specialized hardware defines its end state.

This year at GTC, Jensen showcased the Groq LPU, the deterministic dataflow processor NVIDIA brought in through its deal with Groq. It relies on static compilation and compiler-driven scheduling instead of dynamic scheduling, and carries 500MB of on-chip SRAM. Its architectural philosophy amounts to ASIC-level specialization for inference: do one thing, and do it exceptionally well.

Interestingly, GPUs played pivotal roles in both waves.

In the early 2010s, miners discovered that GPUs were far more efficient than CPUs for mining crypto tokens, and NVIDIA graphics cards sold out. About a decade later, the AI boom made GPUs the hardware of choice for training and running AI models, and NVIDIA data-center GPUs sold out again. As a processor class, GPUs have served two generations of token economies.

The difference? The first time, NVIDIA passively benefited—and then stopped there. The second time, as AI compute consumption shifted from pretraining to inference, NVIDIA seized the opportunity proactively—designing the entire game and becoming the author of AI’s rulebook.

The World’s Most Profitable Shovel

During gold rushes, the most profitable players weren’t the prospectors but the merchants who supplied them, like Sam Brannan selling shovels and Levi Strauss selling dry goods and, later, denim jeans. During mining booms, the most profitable players weren’t the miners but Bitmain and its co-founder Wu Jihan, selling mining rigs. In the AI pretraining and inference boom, the most profitable player isn’t any company building base models or agents; it’s NVIDIA, selling GPUs.

But Bitmain’s role in its industry doesn’t compare with NVIDIA’s role in its own.

Bitmain sells only mining rigs—and was once even NVIDIA’s customer. Once you buy a rig, what coin you mine, which pool you join, and at what price you sell your tokens—all lie beyond Bitmain’s control. It’s a pure hardware vendor, earning one-time equipment margins.

NVIDIA is different. It doesn’t just sell hardware. Especially since inference demand exploded in 2025, it has defined in detail what you should mine with its GPUs, how tokens should be priced, who should buy them, and how data-center compute should be allocated. All of this appears in Jensen’s slides: he segments the market into five tiers and maps each to specific models, context lengths, interaction speeds, and prices. NVIDIA has set the standard format for the coming inference-driven AI market.

Around 2018, Bitcoin’s global hash rate was concentrated in a few major mining pools (F2Pool, Antpool, BTC.com) that competed fiercely for share yet relied overwhelmingly on Bitmain for hardware.

Similarly, today NVIDIA draws 60% of its revenue from competing hyperscalers—AWS, Azure, GCP, Oracle, CoreWeave—while the remaining 40% comes from fragmented AI-native startups, sovereign AI initiatives, and enterprise customers. Large “mining pools” drive primary revenue; smaller “miners” provide resilience and diversification.

The two ecosystems share the same structure. Yet Bitmain later faced competition: MicroBT, Innosilicon, and Canaan all eroded its market share, because mining rigs are relatively simple ASIC designs that followers can catch up with. Challenging NVIDIA grows ever more difficult: 20 years of CUDA ecosystem maturity, hundreds of millions of installed GPUs, sixth-generation NVLink interconnect technology, and the post-Groq disaggregated inference architecture. NVIDIA’s technical complexity and ecosystem moats blunt most of the tools competitors could use against it.

This may persist for another 20 years.

The Fundamental Fork Between Two Tokens

What fundamentally distinguishes crypto tokens from AI training and inference tokens is users’ motivation and psychology.

Crypto token demand is speculative. No one “needs” Bitcoin to perform actual work. Every whitepaper claiming blockchain tokens solve real problems is written by fraudsters. You hold crypto because you believe someone else will pay more for it tomorrow. Bitcoin’s value stems from a self-fulfilling prophecy: if enough people believe it has value, it does. This is a faith-based economy.

AI token demand, by contrast, is productivity-driven. Nestlé needs tokens to optimize supply-chain decisions: its supply-chain data now refreshes every 3 minutes instead of every 15, cutting costs by 83%, a value that shows up directly in its P&L. Every NVIDIA engineer now relies on tokens to write code instead of writing it by hand, and research teams depend on tokens for scientific discovery. You don’t need to believe tokens hold value; you just use them, and their value proves itself in use.

This is the most fundamental distinction between the two tokens. Crypto tokens are produced to be held and traded: their value lies in not being used. AI tokens are produced to be consumed immediately: their value lies in the instant they are used.

One is digital gold—hoard it, and it appreciates. The other is digital electricity—produce it, and it’s instantly consumed.

This distinction ensures the AI token economy won’t inflate into a bubble the way the crypto token economy has. Bitcoin’s wild volatility comes from emotional speculation driving its price. AI token prices, however, are driven by usage volume and production cost. As long as AI remains useful (as long as people keep using Claude Code to write software, ChatGPT to draft reports, or agents to run business workflows), token demand won’t collapse. It doesn’t rely on faith; it relies on indispensability.

In 2008, the Bitcoin whitepaper had to repeatedly justify why a decentralized electronic cash system held value. Seventeen years later, the debate continues.

In 2026, token economics sparked no controversy; it became consensus without needing justification. When Jensen stood on the GTC stage declaring “tokens are the new commodity,” no one challenged him, because everyone seated before him had already consumed several million tokens that morning through Claude Code or ChatGPT. They needed no persuasion about token value; their credit card statements had already proven it.

In this sense, Jensen truly is Satoshi’s counterpart—the one who stayed behind to monopolize mining-rig production, define token use cases and standards, and host an annual show at San Jose’s SAP Center showcasing next-generation AI training and inference “mining rigs.”

Satoshi possessed a restrained, deliberate allure: he designed the rules, handed them to code, and vanished. That’s cypherpunk romance. Jensen, by contrast, is more businessman than scientist: he designed the rules, personally maintains them, constantly reinforces them, and keeps fortifying his moat.

The token was once something you could see only if you believed in it. Now it is visible without belief: the next unit after the watt, the ampere, and the bit.
