Jensen Huang’s Latest Interview Transcript: Abandoning the Chinese Market Is a “Loser Mentality”; Computing Power Restrictions Simply Cannot Stop China’s AI

TechFlow Selected

“The work we’re doing to build computing platforms—if we don’t do it, I truly believe no one else will.”

Compiled & Translated by TechFlow

Guest: Jensen Huang, Founder and CEO of NVIDIA

Host: Dwarkesh Patel

Podcast Source: Dwarkesh Patel

Original Title: Jensen Huang – Will Nvidia’s moat persist?

Air Date: April 16, 2026

Key Takeaways

Through a conversation with NVIDIA CEO Jensen Huang, this article explores whether TPUs (Tensor Processing Units) threaten NVIDIA’s dominance in AI computing, the company’s control over advanced-chip supply chains, whether AI chips should be sold to China, why NVIDIA does not become a hyperscaler itself, and the company’s architectural and investment strategies.

Highlights of Key Insights

On NVIDIA’s Essence and Moat

- “Ultimately, something must convert electrons into tokens—and that conversion process, and making tokens increasingly valuable over time, is not easily commoditized. Our job is to do as many necessary things as possible, and as few unnecessary things as possible, to achieve that conversion.”

- “Why are they willing to invest for me, but not for others? Because they know NVIDIA can absorb their supply volume and sell it downstream through our channels. NVIDIA’s downstream supply chain and demand scale are so massive that upstream partners are willing to place bets on us.”

- “No bottleneck lasts longer than two or three years—without exception. What concerns me is what happens downstream—for example, policies hindering energy development. Without energy, you cannot build an industry; without energy, you cannot drive reindustrialization.”

On CUDA vs. ASICs

- “NVIDIA builds accelerated computing—not tensor processing units. Accelerated computing covers a vastly broader scope… We’re the only company accelerating diverse applications, backed by a massive ecosystem.”

- “NVIDIA GPUs are accelerators—more like Formula 1 race cars. Anyone can drive one at 100 km/h, but pushing it to its limits requires deep expertise. We heavily use AI to generate our own kernels.”

- “NVIDIA’s compute stack delivers the best price-performance ratio in the world—no exceptions. No platform has yet demonstrated a better performance / total cost of ownership ratio than ours today.”

On Corporate Philosophy and Investment

- “We should do as many necessary things as possible, and as few unnecessary things as possible. That means: the work we do building the compute platform—if we don’t do it, I truly believe no one else will. But the world doesn’t lack cloud services—if we didn’t provide them, someone else would.”

- “My mistake was failing to deeply recognize that they simply had no other option—venture capital would never invest $5–10 billion into an AI lab. That was my blind spot. If I could rewind time, and if NVIDIA had today’s scale back then, I’d gladly do it.”

On the Chinese Market and Geopolitics (Most Watched Section)

- “What’s the best way to create a safe world? Making them victims and turning them into enemies is likely not the best answer. They are competitors—we want America to win—but I believe maintaining dialogue and research exchange is probably the safest path.”

- “This loser mindset, this loser premise—it means nothing to me. To abandon a market based on the premise you just described—I cannot accept that idea. It makes no sense, because I don’t believe America is a loser, nor is our industry.”

- “When you have abundant energy, it compensates for chip shortcomings. If power is fully abundant, even nearly free, why obsess over performance-per-watt? Just stack old chips. A 7nm chip is essentially equivalent to Hopper-class, which is fully sufficient.”

- “The day DeepSeek launched its first model on Huawei chips was a bad outcome for our country… If all AI models run best on someone else’s tech stack, you’ll need to explain why that’s good for America.”
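The “stack old chips” argument is, at bottom, arithmetic: if power is cheap, a fleet of less efficient chips can still reach the same throughput, and the penalty shows up mainly as more chips and more megawatts. The sketch below makes that trade explicit. The `Chip` type, the per-chip throughput and wattage figures, and the electricity prices are all hypothetical placeholders chosen for illustration, not specifications of any real product.

```python
import math
from dataclasses import dataclass


@dataclass
class Chip:
    name: str
    tokens_per_sec: float  # hypothetical per-chip throughput
    watts: float           # hypothetical per-chip power draw


def fleet_for_target(chip: Chip, target_tokens_per_sec: float) -> tuple[int, float]:
    """Chips needed to reach a throughput target, and the fleet's draw in megawatts."""
    count = math.ceil(target_tokens_per_sec / chip.tokens_per_sec)
    megawatts = count * chip.watts / 1e6
    return count, megawatts


def annual_energy_cost(megawatts: float, usd_per_mwh: float) -> float:
    """Electricity bill for running the fleet at full load for one year (8,760 hours)."""
    return megawatts * 8760 * usd_per_mwh


# Made-up numbers: the older chip is 4x slower per chip and 2.5x less
# efficient per watt (4 vs. 10 tokens per joule) than the newer one.
new_chip = Chip("newer", tokens_per_sec=10_000, watts=1_000)
old_chip = Chip("older", tokens_per_sec=2_500, watts=625)

for chip in (new_chip, old_chip):
    count, mw = fleet_for_target(chip, target_tokens_per_sec=10_000_000)
    cost = annual_energy_cost(mw, usd_per_mwh=5.0)  # "nearly free" power
    print(f"{chip.name}: {count} chips, {mw} MW, ${cost:,.0f}/yr in electricity")
```

Under these assumptions the older fleet needs 4x the chips and 2.5x the power for the same output; when electricity is nearly free, the extra power bill barely matters, and the real constraint becomes how many chips can be manufactured and housed.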

On Software, Agents, and the Future

- “Today we’re constrained by the number of engineers. Tomorrow, those engineers will be supported by a large cohort of agents, enabling us to explore design space in unprecedented ways… Tool usage will cause software companies’ scale to explode—but that hasn’t happened yet because agents aren’t yet skilled enough at using tools.”

- “One thing you can rely on from NVIDIA is this: Vera Rubin will be outstanding this year, Vera Rubin Ultra arrives next year, Feynman arrives the year after. You can trust us like clockwork, every single year.”

On Architecture and Workloads

- “We could do that [develop multiple architectures]—we just don’t have better ideas. We’ve simulated everything in our simulator, and the results were worse—so we won’t do it.”

- “In the past, higher throughput was always better. But we believe there may exist a world where tokens command very high unit prices—even with lower factory throughput, high pricing can compensate for the gap. That’s why we decided to expand the Pareto frontier.”
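The Pareto-frontier remark can be read as a two-axis trade-off: factory throughput versus the unit price the tokens command (for example, faster, lower-latency tokens selling at a premium). The sketch below, which assumes those two axes and uses invented operating points and prices, keeps only the non-dominated configurations and shows that the highest-throughput point need not be the highest-revenue one.

```python
from typing import NamedTuple


class FactoryConfig(NamedTuple):
    name: str
    tokens_per_sec: float          # throughput of the whole "AI factory"
    usd_per_million_tokens: float  # unit price those tokens can command


def dominates(a: FactoryConfig, b: FactoryConfig) -> bool:
    """a dominates b if it is at least as good on both axes and strictly better on one."""
    at_least_as_good = (a.tokens_per_sec >= b.tokens_per_sec
                        and a.usd_per_million_tokens >= b.usd_per_million_tokens)
    strictly_better = (a.tokens_per_sec > b.tokens_per_sec
                       or a.usd_per_million_tokens > b.usd_per_million_tokens)
    return at_least_as_good and strictly_better


def pareto_frontier(configs: list[FactoryConfig]) -> list[FactoryConfig]:
    """Configurations that no other configuration dominates."""
    return [c for c in configs if not any(dominates(o, c) for o in configs)]


def revenue_per_sec(c: FactoryConfig) -> float:
    """Dollars per second, assuming every token produced sells at the listed price."""
    return c.tokens_per_sec * c.usd_per_million_tokens / 1e6


# Hypothetical operating points; all numbers are invented for illustration.
configs = [
    FactoryConfig("max-throughput", 1_000_000, 0.50),
    FactoryConfig("balanced", 400_000, 3.00),
    FactoryConfig("premium-low-latency", 50_000, 40.00),
    FactoryConfig("dominated", 300_000, 2.00),
]

frontier = pareto_frontier(configs)
best = max(frontier, key=revenue_per_sec)
print([c.name for c in frontier], "->", best.name)
```

Here the premium point produces one-twentieth the tokens of the max-throughput point yet earns four times the revenue per second, which is the kind of case the quote describes: high unit prices compensating for lower factory throughput.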

Is NVIDIA’s Supply Chain Advantage Its Greatest Moat?

Host Dwarkesh: We’re seeing the valuations of numerous software companies collapse, driven by market expectations that AI will commoditize software. There’s a seemingly simple logic here: NVIDIA sends GDSII files to TSMC; TSMC manufactures the logic dies and switches; these are packaged together with HBM from SK Hynix, Micron, and Samsung; and Taiwanese ODMs finally assemble them into full systems. From this perspective, NVIDIA is essentially a software company, and others do its manufacturing. If software becomes commoditized, won’t NVIDIA be commoditized too?

Jensen Huang:

Ultimately, something must convert electrons into tokens—and that conversion process, and making tokens increasingly valuable over time, is not easily commoditized. Making one token more valuable than another is like making one molecule more valuable than another—the art, engineering, science, and invention involved are precisely what we’re witnessing unfold right now. The transformation, processing, and underlying science required to produce a token remain poorly understood—and this journey is far from over. I don’t believe it will be commoditized. The framework you describe is, in fact, my own mental model of the company: input is electrons, output is tokens, and NVIDIA sits in between. Our job is to do as many necessary things as possible, and as few unnecessary things as possible, to achieve that conversion. “As few as possible” means: anything I don’t need to do myself, I bring in a partner to make part of my ecosystem. If you look at NVIDIA today, we host arguably the world’s largest partner ecosystem—upstream suppliers, downstream computer OEMs, application developers, and model vendors—all included.

AI is a five-layer cake (applications, models, infrastructure, chips, energy), and we have ecosystems across all layers. We minimize what we do—but the part we *must* do is extraordinarily difficult. I don’t believe it will be commoditized; in fact, I also don’t believe enterprise software companies or tool vendors will be commoditized. Most software companies today are fundamentally toolmakers—but what I see is the opposite of what most people see: the number of AI agents will grow exponentially, and so will the number of tool users. Instances of Synopsys Design Compiler will likely surge dramatically; agent usage of placement tools and design rule checkers will similarly explode. Today we’re constrained by the number of engineers; tomorrow those engineers will be augmented by a large cohort of agents, enabling us to explore design space in unprecedented ways—using the same tools we have today. Tool usage will cause software companies’ scale to explode—but that hasn’t happened yet because agents aren’t yet skilled enough at using tools. Either these companies build their own agents, or agents become powerful enough to use these tools—I believe both will happen.

Host Dwarkesh: Your latest earnings report shows nearly $100 billion in procurement commitments—covering foundries, memory, and packaging. SemiAnalysis reports this figure could reach $250 billion. One interpretation is: NVIDIA’s real moat is locking up scarce capacity for years ahead—others may have accelerators, but can they get memory? Can they get logic chips? Is this NVIDIA’s biggest moat for the next few years?

Jensen Huang:

This is one of the things we can do—and others find extremely hard to replicate. We make enormous upstream commitments—some explicit, as you mentioned, some implicit. For instance, many upstream investments are proactively made by our supply chain partners because I tell their CEOs: “Let me show you how big this industry will be. Let me explain why. Let me walk you through the projections. Let me show you what I see.” Through continuous communication, inspiration, and joint scenario planning with CEOs, they become willing to invest. Why do they invest for me, not for others? Because they know NVIDIA can absorb their supply volume and sell it downstream through our channels. NVIDIA’s downstream supply chain and demand scale are so massive that upstream partners feel confident betting on us. Look at GTC—people marvel at its scale and the caliber of attendees—the entire AI universe gathers there, 360-degree coverage—because they need each other. I bring them together—downstream sees upstream, upstream sees downstream, everyone sees the latest in AI. Crucially, they witness firsthand what AI-native companies and AI startups are doing—validating what I’ve told them repeatedly. I spend enormous time—directly and indirectly—ensuring our supply chain, partners, and ecosystem understand the opportunity before us.

Our keynote always includes a section that feels slightly heavy, like a lecture. Yes, that’s exactly the point. I need to ensure the entire upstream and downstream supply chain understands what’s coming, why it’s coming, when it’s coming, and how big it will be, and how to project it systematically, just as I do. Regarding your question about the moat: we build for the future. If the industry reaches trillion-dollar scale in the coming years, our supply chain is ready to handle it. Supply chains, like cash, have flow and turnover; without our scale and business velocity, no one would build a supply chain around a low-turnover architecture. We sustain this scale only because downstream demand is so strong: they see it, they hear it, it’s all right in front of them.

Host Dwarkesh: I’d like to dig deeper into upstream capacity. You’ve doubled capacity every year for years, and compute supply is growing more than 3x annually; doubling at this scale is astonishing. You’re TSMC’s largest N3 customer and a major N2 customer. This year AI will consume 60% of N3 capacity, and SemiAnalysis forecasts 86% next year. When you already dominate, how do you double, and keep doubling year after year?

Jensen Huang:

In some sense, global instantaneous demand exceeds total upstream and downstream supply. That’s precisely the kind of industry you want: instantaneous demand exceeding total industry supply. The reverse is clearly undesirable. And when the gap grows too wide and one specific bottleneck becomes severely constrained, the entire industry rushes to fix it. For example, discussions about CoWoS have diminished significantly, because for the past two years we’ve aggressively attacked this bottleneck and multiplied capacity several times over. Now TSMC understands that CoWoS capacity must keep pace with logic and memory demand, and they’re expanding CoWoS and future packaging technologies at the same speed as logic. For a long time, CoWoS and HBM were considered specialty products, but they no longer are. People realize they’re mainstream computing technologies, and yes, we now influence a much broader swath of the supply chain. Five years ago, at the start of the AI revolution, I said exactly what I’m saying today. Some believed and invested, like Sanjay and the Micron team. I still remember that meeting vividly, where I laid out exactly what would happen, why it would happen, and my predictions. They truly bet on it, and we partnered deeply on LPDDR and HBM memory. Their massive investment paid off handsomely. Others came later, but they’re all on board now. Every bottleneck receives enormous attention, and now we anticipate bottlenecks years in advance. For instance, our collaboration over the past few years with Lumentum, Coherent, and the entire silicon photonics ecosystem has completely reshaped the supply chain. We’ve built a complete supply chain around TSMC: co-developing COUPE with them, inventing an entire suite of technologies, and licensing patents to the supply chain to keep the ecosystem open. We help partners scale capacity by inventing new technologies, new workflows, and new test equipment such as dual-sided probes, and by investing in companies.

If we discourage people from becoming software engineers, we’ll face a shortage of software engineers. A similar prophecy was made ten years ago. Some doomsayers said: “Whatever you do, don’t become a radiologist.” You can probably still find those videos online claiming radiology would be the first profession to disappear and that the world wouldn’t need radiologists anymore. Guess what we’re short of today? Radiologists.

Host Dwarkesh: How do you achieve 2x annual logic manufacturing growth? Ultimately, both memory and logic are constrained by EUV.

Jensen Huang:

None of these is impossible to scale quickly in the short term. All can be resolved within two or three years; they just require a demand signal, and none is hard to replicate. As for how deeply we engage the supply chain: some engagements are direct, some are indirect, and some work like this: if I convince TSMC, ASML will naturally follow. We do consider the critical nodes, but if TSMC is convinced, you’ll have enough EUV machines within a few years. The key point: no bottleneck lasts longer than two or three years, without exception. Meanwhile, each generation improves compute efficiency by 10–20x; Hopper to Blackwell is 30–50x. We constantly invent new algorithms, using CUDA’s flexibility to push efficiency gains while scaling capacity. None of this worries me. What worries me is what happens downstream, such as policies hindering energy development. Without energy, you cannot build an industry; without energy, you cannot drive reindustrialization. You cannot bring chip manufacturing, computer manufacturing, or packaging back to America; you cannot build EVs and robots; you cannot build AI factories. More chip capacity is a two- to three-year problem; more CoWoS capacity is also a two- to three-year problem.
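The reason capacity bottlenecks don’t worry him is that token output grows with the product of two factors: how much hardware is installed and how many tokens each unit produces. A minimal sketch, assuming capacity doubles per generation (the host’s figure) and efficiency improves 10x per generation (the low end of the 10–20x quoted above); the function name is hypothetical, and the model is deliberately crude.

```python
from math import prod


def output_multiplier(capacity_steps: list[float], efficiency_steps: list[float]) -> float:
    """Token-output multiplier after several hardware generations.

    Each generation multiplies installed capacity (more wafers, packages, chips)
    and per-unit efficiency (more tokens per chip-second); the two compound.
    Simplification: each generation's efficiency gain is applied to the whole
    fleet, which overstates real totals because older hardware stays in service.
    """
    if len(capacity_steps) != len(efficiency_steps):
        raise ValueError("need one capacity factor and one efficiency factor per generation")
    return prod(c * e for c, e in zip(capacity_steps, efficiency_steps))


print(output_multiplier([2, 2], [10, 10]))  # capacity doubles each generation
print(output_multiplier([1, 1], [10, 10]))  # capacity frozen by a bottleneck
```

Even with capacity held flat for two generations, the efficiency term alone still compounds to 100x in this toy model, which is why a two- to three-year supply bottleneck slows growth without stopping it.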

Will TPUs Break NVIDIA’s Dominance in AI Computing?

Host Dwarkesh: Looking at TPUs, one view holds that two of the world’s top three models—Claude and Gemini—are trained on TPUs. What does that mean for NVIDIA?

Jensen Huang:

We’re doing something entirely different. NVIDIA builds accelerated computing, not tensor processing units. Accelerated computing serves diverse domains: molecular dynamics, quantum chromodynamics, data processing, dataframes, structured and unstructured data, fluid dynamics, particle physics, and also AI. Accelerated computing covers a vastly broader scope. Everyone talks about AI today, and AI is indeed critically important, but computing extends far beyond AI. NVIDIA has redefined computing itself, from general-purpose to accelerated computing. Our market reach dwarfs that of any TPU or ASIC. Look at our market position: we’re the only company accelerating diverse applications, backed by a massive ecosystem in which every framework and algorithm runs on NVIDIA hardware. Because our computers are designed to be operated by others, anyone can buy our systems. Most in-house systems must be self-operated because they were never designed to be flexible enough for others to operate. Precisely because anyone can operate our systems, we appear on every cloud, including Google, Amazon, Azure, and OCI. If you want to rent out infrastructure for profit, you need a massive, cross-industry customer ecosystem. If you want to use it internally, we can help you too, as we do with Elon’s xAI. And because any company in any industry can deploy our systems, you can use them to build supercomputers for Lilly’s scientific research and drug discovery, supporting the full range of its needs across drug discovery and biosciences. There are countless applications TPUs cannot cover. NVIDIA has made CUDA an excellent tensor processor, but it also handles every stage of the data lifecycle: processing, computing, and AI. Our market opportunity is larger and our reach broader. Because we support every application worldwide, wherever you deploy NVIDIA systems, you know customers will use them. This is fundamentally different.

Host Dwarkesh: This will be a long question. You have astonishing revenue, $60 billion a quarter, but it doesn’t come from pharmaceuticals or quantum computing. It comes from AI, an unprecedented technology growing at an unprecedented pace. The question is: for AI itself, what’s optimal?

I spoke with AI researcher friends who say a TPU is a massive systolic array optimized specifically for matrix multiplication, while GPUs are flexible and suited to workloads with heavy branching or irregular memory access. But what is AI? It’s repeated, highly predictable matrix multiplication. TPUs don’t spend die area on warp schedulers or thread-to-memory-bank switching; they’re deeply optimized for the dominant workload driving current compute growth. What’s your take?
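To make “systolic array” concrete, here is a cycle-by-cycle simulation of the textbook output-stationary arrangement: each processing element (PE) holds one output, multiplies the two values passing through it, adds the product to its accumulator, and hands the values to its right and lower neighbours. This is an illustrative sketch only. Real TPU matrix units differ in dataflow, size, and number formats, and `systolic_matmul` is a hypothetical name. It does show why such hardware needs no schedulers or branch logic: every PE does the same multiply-accumulate every cycle.

```python
def systolic_matmul(A: list[list[float]], B: list[list[float]]) -> list[list[float]]:
    """Cycle-by-cycle simulation of an output-stationary systolic array computing A @ B.

    PE(i, j) owns C[i][j]. Row i of A enters from the left delayed by i cycles,
    column j of B enters from the top delayed by j cycles, so PE(i, j) sees
    A[i][k] and B[k][j] together at cycle t = i + j + k.
    """
    n, depth, m = len(A), len(B), len(B[0])
    if any(len(row) != depth for row in A):
        raise ValueError("inner dimensions of A and B must match")

    acc = [[0.0] * m for _ in range(n)]    # stationary partial sums
    a_reg = [[0.0] * m for _ in range(n)]  # A values flowing right
    b_reg = [[0.0] * m for _ in range(n)]  # B values flowing down

    for t in range(depth + n + m - 2):     # cycles until the last PE finishes
        new_a = [[0.0] * m for _ in range(n)]
        new_b = [[0.0] * m for _ in range(n)]
        for i in range(n):
            for j in range(m):
                if j == 0:                  # left edge: inject skewed row of A
                    k = t - i
                    new_a[i][j] = A[i][k] if 0 <= k < depth else 0.0
                else:                       # otherwise take the left neighbour's value
                    new_a[i][j] = a_reg[i][j - 1]
                if i == 0:                  # top edge: inject skewed column of B
                    k = t - j
                    new_b[i][j] = B[k][j] if 0 <= k < depth else 0.0
                else:                       # otherwise take the upper neighbour's value
                    new_b[i][j] = b_reg[i - 1][j]
        a_reg, b_reg = new_a, new_b
        for i in range(n):                  # every PE performs one multiply-accumulate
            for j in range(m):
                acc[i][j] += a_reg[i][j] * b_reg[i][j]
    return acc


print(systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
```

The same rigidity that makes this efficient is the crux of the counterargument that follows: anything that is not a dense matrix multiply has to run somewhere else.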

Jensen Huang:

Matrix multiplication is a vital part of AI—but not the whole story. If you want to invent new attention mechanisms, shard differently, or design a completely new architecture—say, hybrid SSM—you need a general-purpose programmable architecture. If you want to create a model fusing diffusion and autoregressive techniques, you again need a general-purpose programmable architecture. We run everything you can imagine—that’s our advantage. Programmability makes inventing new algorithms far easier—and inventing new algorithms is precisely why AI advances so rapidly. TPUs—and everything else—are subject to Moore’s Law—roughly 25% improvement annually. To achieve true 10x or 100x leaps, you must fundamentally change algorithms and computing paradigms every year. That’s NVIDIA’s core advantage. When I first announced Blackwell’s energy efficiency would be 35x better than Hopper’s, nobody believed it. Then Dylan wrote an article saying I undershot—it’s actually 50x—and pure Moore’s Law couldn’t deliver that. Our solution involves new model designs—like MoE, cross-system parallelization, sharding, distributed deployment. Without CUDA to generate new kernels, I wouldn’t even know where to begin. This is the synergy between programmable architecture and NVIDIA’s extreme co-design capability. We can offload parts of computation onto NVLink’s fabric—or onto Spectrum-X’s network layer. We can drive innovation simultaneously across processors, systems, fabric, libraries, and algorithms. Without CUDA, I wouldn’t know where to start.
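The Moore’s Law point can be checked with a line of arithmetic: at a steady 25% annual improvement (the figure quoted above), reaching a gain of G takes ln(G) / ln(1.25) years. The sketch assumes smooth, constant compounding, which real process scaling does not follow exactly.

```python
import math


def years_to_reach(gain: float, annual_rate: float = 0.25) -> float:
    """Years of steady compounding at `annual_rate` needed to reach a `gain`x improvement."""
    return math.log(gain) / math.log1p(annual_rate)


for target in (10, 35, 50, 100):
    print(f"{target:>4}x at 25%/yr takes about {years_to_reach(target):.1f} years")
```

By this arithmetic, 10x takes about 10.3 years and 35x to 50x takes roughly 16 to 17.5 years, which is why a 35x to 50x jump within a single Hopper-to-Blackwell generation cannot come from transistor scaling alone.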

Host Dwarkesh: 60% of your revenue comes from the top five hyperscalers. Customers like professors running experiments need CUDA: they can’t use other accelerators, and they rely on PyTorch and CUDA. But these hyperscalers have the resources to write their own kernels; in fact, they must, to extract that last 5% of performance on a specific architecture. Google runs mostly on its own TPUs, and Anthropic mostly on TPUs and Trainium; even OpenAI, despite using GPUs, uses Triton because it needs custom kernels, going all the way down to CUDA C++ and bypassing cuBLAS and NCCL to build its own stack, which it can even compile to other accelerators.

If most of your customers can, to some extent, replace CUDA, is CUDA still the decisive factor enabling cutting-edge AI to happen on NVIDIA hardware?

Jensen Huang:

CUDA is an incredibly rich ecosystem. If you want to build on any architecture, starting on CUDA is extremely wise, because the ecosystem is rich and we support every framework. If you want to create custom kernels, we’ve made huge contributions to Triton; Triton’s backend contains substantial NVIDIA technology. We’re happy to help every framework become as excellent as possible. There are many frameworks: Triton, vLLM, SGLang, and now an explosion of post-training and reinforcement learning frameworks like verl and NeMo RL. Across the entire post-training and reinforcement learning domain, everything is exploding. So if you want to build on a particular architecture, CUDA is the wisest choice, because you know the ecosystem is rich, and when problems arise, it’s more likely your code is at fault than the mountain of code underneath it. When something breaks, is it your fault or the machine’s? You want it to always be your fault; you want to trust the machine. Of course, we still have bugs, but our system is polished enough that you can at least build upon it. Second, as a developer building software anywhere, the most important thing is a massive installed base, so your software runs on many other machines. You’re not writing software just for yourself; you’re writing it for your entire fleet, or everyone else’s fleets, because you’re a framework builder, and the NVIDIA CUDA ecosystem is the true core treasure. We now have hundreds of millions of GPUs deployed globally, from A10, A100, H100, and H200 to the L-series and P-series, in various sizes and form factors. If you’re a robotics company, you want that CUDA stack running directly on the robot itself; we’re everywhere. A massive installed base means that once you develop software or models, they’re useful anywhere. This value is irreplaceable. Finally, our presence on every cloud makes us truly unique. If you’re an AI company or developer uncertain which cloud provider to choose, or where to run, you can run everywhere, including your own private deployments. The richness of the ecosystem, the breadth of the installed base, and the diversity of our deployments: these three factors combine to make CUDA immensely valuable.

Host Dwarkesh: But I wonder whether these advantages matter as much to your primary customers. Many large customers can build their own software stacks. And as AI grows stronger in domains with strict verification loops, won’t every hyperscaler be able to write its own kernels? NVIDIA remains excellent on price-performance, so they may still prefer NVIDIA, but the question is whether this devolves into a pure spec war: who offers the best specs, the most compute and memory bandwidth per dollar. Historically, NVIDIA’s gross margins have exceeded 70%, precisely because of the CUDA moat. If most customers can actually replace CUDA, can those margins hold?

Jensen Huang:

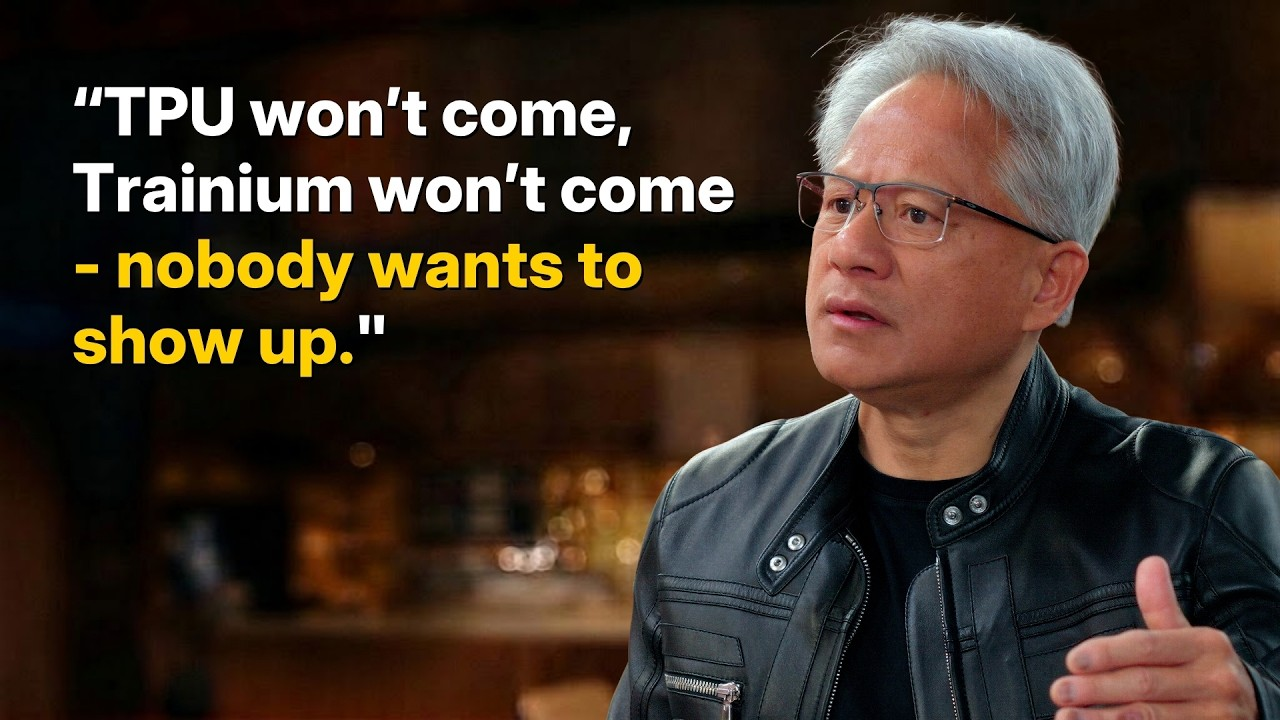

We assign staggering numbers of engineers to these AI labs, dedicated to collaborating with them and optimizing their tech stacks. The reason is simple: nobody understands our architecture better than we do. These architectures aren’t like CPUs. CPUs are like Cadillacs: smooth luxury sedans, not fast, but anyone can drive them, with cruise control and everything intuitive. NVIDIA GPUs are accelerators, more like F1 race cars. Anyone can drive one at 100 km/h, but pushing it to its limits requires deep expertise. We heavily use AI to generate our own kernels. I’m quite confident we’ll remain indispensable for a long time. Our expertise often lets AI lab partners squeeze 2x more performance out of their stacks with little effort. After we optimize a specific kernel, or their entire stack, speedups of 50%, 2x, or 3x are very common. That’s a huge number, especially given their massive installed base of Hopper and Blackwell systems: if efficiency doubles, revenue doubles directly. NVIDIA’s compute stack delivers the best price-performance in the world, no exceptions. No platform has demonstrated a better performance-to-total-cost-of-ownership ratio than ours today. Not one. The InferenceMAX benchmark is there, open to all. TPUs don’t show up; Trainium doesn’t show up. I welcome them to demonstrate their claimed 40% cost advantage on InferenceMAX; nobody wants to. Second, you say 60% of our revenue comes from the top five, but much of that is external-facing. Much of AWS’s NVIDIA infrastructure serves external customers, not AWS itself; Azure’s customers are all external, and so are OCI’s. They prefer us because our coverage is unparalleled: we bring top-tier customers from every industry. These customers build on NVIDIA because our coverage and flexibility are unmatched. The flywheel is the installed base, architectural programmability, and ecosystem richness, plus tens of thousands of AI companies globally. If you’re an AI startup, which architecture do you choose? The richest. The largest installed base. The richest ecosystem. That’s how the flywheel spins, plus the highest compute-per-dollar and highest performance-per-watt: if your partner builds a GW-scale data center, that center must produce the most tokens and the highest revenue. We’re the global leader in tokens-per-watt. If your goal is renting out infrastructure, we have the most customers worldwide. That’s why the flywheel spins.
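The tokens-per-watt argument reduces to one formula for a power-limited site: since 1 W is 1 J/s, tokens per second equals power in watts times tokens per joule, and annual revenue follows from the price per token. The sketch below is a minimal model under loudly stated assumptions: the 1 GW site, the efficiency, the price, and the 60% utilization are all made-up inputs, and it assumes demand absorbs every token at a fixed price.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def annual_token_revenue(power_watts: float, tokens_per_joule: float,
                         usd_per_million_tokens: float, utilization: float = 1.0) -> float:
    """Yearly revenue of a power-limited AI factory.

    Since 1 W = 1 J/s, throughput in tokens/s is power (W) times tokens per joule.
    Assumes every token produced is sold at the given price.
    """
    tokens_per_sec = power_watts * tokens_per_joule * utilization
    return tokens_per_sec * SECONDS_PER_YEAR * usd_per_million_tokens / 1e6


# A hypothetical 1 GW site; efficiency, price, and utilization are invented.
baseline = annual_token_revenue(1e9, tokens_per_joule=1.0, usd_per_million_tokens=1.0, utilization=0.6)
doubled = annual_token_revenue(1e9, tokens_per_joule=2.0, usd_per_million_tokens=1.0, utilization=0.6)
print(f"baseline ${baseline / 1e9:.1f}B/yr, 2x tokens per joule ${doubled / 1e9:.1f}B/yr")
```

With the power envelope fixed, revenue scales linearly with tokens per joule, which is the sense in which “if efficiency doubles, revenue doubles directly.”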

Host Dwarkesh: Anthropic just announced a multi-GW TPU deal with Broadcom and Google—and most of their compute comes from TPUs. If I look at major AI companies, a significant chunk of compute seems to be shifting away from NVIDIA. How do you explain this phenomenon?

Jensen Huang:

Anthropic is an outlier—not a trend. Without Anthropic, where does TPU growth come from? It’s 100% from Anthropic. Without Anthropic, where does Trainium growth come from? Again, 100% from Anthropic. This is widely known—most people understand it. This isn’t about a flood of ASIC opportunities emerging—there’s just one Anthropic.

I’m not upset when others try different things; how else would they know how good we are? Sometimes you need a reference point to remind yourself. We must continuously earn our market position. There’s always plenty of bravado; look at how many ASIC projects got canceled. Building something better than NVIDIA isn’t easy. Where would NVIDIA have to go wrong for that to make sense? Given our scale and our iteration speed, we’re the only company in the world taking giant strides forward every year.

ASIC gross margins are quite high—NVIDIA is 70%, ASICs are 65%. From what I can see, ASIC gross margins are solid—they think so too, and they’re quite proud of their respectable ASIC margins. So why is this? Long ago, we simply lacked the capability. I didn’t deeply understand back then how difficult it is to establish foundational AI labs like OpenAI and Anthropic—and how much investment they require from suppliers themselves. We couldn’t invest billions in Anthropic to secure their compute usage. Google and AWS had that capability—they invested heavily in Anthropic early on, so Anthropic used their compute. We weren’t in that position then. My mistake was failing to deeply recognize they had no other options—venture capital would never invest $5–10 billion into an AI lab expecting it to become today’s Anthropic. That was my blind spot. Even if I’d understood it then, we might not have had the means—but I won’t repeat that mistake. I’m thrilled to invest in OpenAI, thrilled to help them scale—I believe it’s essential. When we gained the ability—and when Anthropic approached us—I was thrilled to invest and help them scale. We just couldn’t do it back then. If I could rewind time—and if NVIDIA had today’s scale back then—I’d gladly do it.
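The margin figures in this answer translate directly into price multiples: with gross margin m = (price − cost) / price, the selling price is cost / (1 − m). The sketch below applies the two quoted margins under a purely hypothetical assumption of equal unit cost, and ignores everything else in total cost of ownership.

```python
def price_to_cost(gross_margin: float) -> float:
    """Selling price as a multiple of unit cost, from gross margin = (price - cost) / price."""
    if not 0.0 <= gross_margin < 1.0:
        raise ValueError("gross margin must be in [0, 1)")
    return 1.0 / (1.0 - gross_margin)


nvidia_multiple = price_to_cost(0.70)  # margin figure quoted in the interview
asic_multiple = price_to_cost(0.65)    # margin figure quoted in the interview
print(f"NVIDIA ~{nvidia_multiple:.2f}x cost, ASIC ~{asic_multiple:.2f}x cost, "
      f"gap ~{nvidia_multiple / asic_multiple - 1:.0%} at equal unit cost")
```

Under that equal-cost assumption, a 65% margin still means the buyer pays about 2.9x the vendor’s cost, and margins alone account for a price gap of roughly 17%; any larger difference would have to come from unit cost or delivered performance.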

Why Doesn’t NVIDIA Become a Hyperscaler Directly?

Host Dwarkesh: For years, NVIDIA has been the money-maker in AI, earning vast sums. Now you’re investing: reportedly $30 billion in OpenAI and $10 billion in Anthropic. Their valuations have surged and will surely keep rising. You’ve supplied them compute for years, so you saw where things were heading; a year ago their valuations were just a tenth of today’s, and you held enormous cash reserves.

One proposal: NVIDIA could either launch its own foundation-model lab or make investments like these at much lower valuations. You have the cash, so why not act earlier?

Jensen Huang:

We acted when we could. If I could’ve acted sooner, I would have. At the moment Anthropic needed us to act, we simply lacked the capacity—and hadn’t yet developed that mindset.

It’s about investment scale. We’d never invested externally before—and certainly never at that magnitude. We didn’t realize it was necessary. I assumed they could raise VC funding like any other company. But what they wanted to do wasn’t feasible via VC. Neither was what OpenAI wanted to do. That’s their brilliance—and their insight—they realized early they needed a different path. I’m glad they did. Even though our absence forced Anthropic to turn elsewhere, I’m still glad it happened. Anthropic’s existence benefits the world—I’m pleased about that.

Host Dwarkesh: You’re still earning huge profits, and quarterly earnings keep growing. So the question is what NVIDIA should do with this money. One answer: there’s now a whole ecosystem of intermediaries converting CapEx into OpEx by renting compute to these labs, because chips are expensive, but as AI models improve, their lifetime value keeps growing. NVIDIA has ample cash for CapEx; it is reportedly committing up to $6.3 billion to back CoreWeave and investing $2 billion in it. Why doesn’t NVIDIA become a cloud provider itself, a hyperscaler renting out this compute? You have enough cash.

Jensen Huang:

This is a philosophical question—and I believe it’s a wise one. We should do as many necessary things as possible, and as few unnecessary things as possible. That means: the work we do building the compute platform—if we don’t do it, I truly believe no one else will. If we hadn’t built NVLink our way, hadn’t built the entire tech stack, hadn’t nurtured the ecosystem our way, hadn’t focused on CUDA for 20 years—mostly at a loss—if we hadn’t created all those CUDA-X domain-specific libraries—whether ray tracing, image generation, or early AI work, data processing, structured data processing, or vector data processing—if we hadn’t done it, no one would have. For example, we created cuLitho for computational lithography—if we hadn’t, no one would have.

So accelerated computing wouldn’t exist in its current form if we weren’t doing it. Therefore, we should do it. We should go all-in and focus all our energy on it. But the world doesn’t lack cloud services; if we didn’t provide them, someone else would. Following the philosophy of doing as many necessary things as possible and as few unnecessary things as possible: if we hadn’t supported them, these emerging AI clouds wouldn’t exist. Without our support, CoreWeave wouldn’t exist; neither would Nscale or Nebius today. Now they’re all thriving. Is this a business model? We should do as many necessary things as possible, and as few unnecessary things as possible. So we invest in the ecosystem, because I want our ecosystem to thrive. I want this architecture and AI to connect with as many industries and countries as possible, enabling the world to build on AI, and to build on the U.S. tech stack. That’s the vision we’re pursuing.

When NVIDIA was founded, there were 60 3D graphics companies, and we were the least likely to survive. NVIDIA’s graphics architecture at the time was exactly wrong: not slightly wrong, but diametrically opposed to what developers could support. Starting from sound first principles, we had derived the wrong answer, and anyone would have crossed us off. Yet here we are, so I have enough humility to recognize: don’t pick winners. Either let them compete on their own, or give everyone a chance.

Host Dwarkesh: But you just listed several emerging clouds that wouldn’t exist without NVIDIA. How does that square with “not picking winners”?

Jensen Huang:

First, they have to come to us for help; they have to want to exist. They need a business plan, professional capability, and passion, and they must already have some inherent strength. But if, at the final hurdle, they need investment to get started, we’re there to support them. Once their flywheel is spinning, we want them to become independent as soon as possible. If your question is whether we want to be in the financing business, the answer is no. There are specialized financing firms, and we’d rather partner with all of them than become financiers ourselves. Our goal is to focus on what we do best, keep our business model as simple as possible, and support our ecosystem. When a company like OpenAI needs $30 billion in pre-IPO investment, and we deeply believe it will be an extraordinary company (it already is today, and it will be incredible tomorrow; the world needs it, the world wants it, I want it, and the wind is at its back), we support it and help it grow. We’ll make investments like that because they need us to. But we’re not trying to do as much as possible; we’re trying to do as little as possible.

Host Dwarkesh: On GPU allocation: there’s been a persistent GPU shortage. NVIDIA reportedly allocates scarce capacity in a way that isn’t auction-based, for example making sure emerging clouds like CoreWeave, Crusoe, and Lambda get some. Why is that good for NVIDIA? And first, do you agree with this description of dividing up market share?

Jensen Huang:

No, your premise is wrong. We think about these things carefully. First, if you don’t place a purchase order (PO), no amount of talk matters. So what can we do before the PO? First, we work extremely hard with everyone on forecasting, because these things take a long time to build, and data centers take even longer. We coordinate supply and demand through forecasting; that’s priority one. Second, we forecast with as many people as possible, but in the end, orders have to be placed.

Maybe, for whatever reason, you didn’t order in time. What can I do? It’s first-come, first-served. If you’re not ready, say your data center isn’t prepared or you’re missing components that keep you from going live, we may prioritize another customer to keep our factory output as efficient as possible. We make adjustments like that. Beyond that, priority is strictly first-come, first-served, and POs are mandatory. The stories about Larry and Elon begging me for GPUs over dinner are completely false. We did have dinner, and it was a great one, but they never begged for GPUs; they just needed to place orders. Once orders are placed, we fight for capacity. We’re not complicated.

Host Dwarkesh: So there’s a queue, based on data center readiness and PO timing, that determines delivery. But it sounds like capacity doesn’t simply go to the highest bidder. Why not?

Jensen Huang:

We never do that, and we never will. It’s bad business practice. You set a price, and people decide whether to buy. I know other chip companies raise prices when demand is high, but we don’t. It has never been our practice. You can rely on us. I’d rather be dependable infrastructure. No guessing: I quote you a price, and that’s the price. Demand surges, and the price stays the same. Our relationship with TSMC is similar. There are no legal contracts; sometimes I come out ahead, sometimes I lose out, but overall it’s excellent. I trust them completely and depend on them completely. Here’s one thing you can rely on from NVIDIA: Vera Rubin will be excellent this year, Vera Rubin Ultra arrives next year, and Feynman arrives the year after. You can count on us every year. Go ask any ASIC team in the world whether a customer can say to them: “I can stake my entire business on you. You’ll be here every year, token cost will drop by an order of magnitude every year, and I can trust you like clockwork.” You absolutely cannot say that to other vendors. But you can say it to NVIDIA today. Want to buy $1 billion worth of AI factory compute? Fine. $100 million? Fine. $10 million, a single rack, a single GPU? Fine. $100 billion? Fine. We’re the only company in the world you can say that to. It’s the same with TSMC: one unit or one billion units, fine, as long as you follow the process and do everything properly. NVIDIA’s position as infrastructure for the global AI industry took decades to achieve. It required immense commitment, dedication, corporate stability, and consistency.

Should We Sell AI Chips to China?

Host Dwarkesh: Anthropic just released a preview of Claude Mythos, a model so powerful they won’t release it publicly, citing its cyberattack capabilities. They claim it has found thousands of high-severity vulnerabilities across major operating systems and browsers, including a 27-year-old flaw in OpenBSD, an operating system built specifically to be secure.

If Chinese companies, labs, and the government have the AI chips to train models like Claude Mythos, and to run millions of instances of them, does that threaten U.S. companies and national security?

Jensen Huang:

First, Mythos was trained on fairly ordinary compute, at a fairly ordinary scale, by an extraordinary company. China has plenty of computing power and training resources, so the first thing to recognize is that chips exist in China. They manufacture 60% or more of the world’s mainstream chips; it’s a massive industry for them. They have some of the world’s best computer scientists. Most of the AI researchers in these labs are ethnic Chinese, and Chinese researchers make up about 50% of the world’s AI researchers. So the question is: given these assets, abundant energy, abundant chips, and a huge pool of AI researchers, if you’re worried about them, what’s the best way to create a safe world? Making them victims and enemies is probably not the best answer. They’re competitors, and we want America to win, but I believe maintaining dialogue and research exchange is probably the safest approach. That is sorely missing right now because of our adversarial stance toward China. Researcher exchanges are essential; we have to agree together on what AI should not be used for. As for discovering software vulnerabilities, that’s exactly what AI should be doing. Will AI find a lot of software bugs? Absolutely, because there are countless bugs; even AI software itself has plenty of them. That’s precisely what AI should do, and I’m thrilled AI has reached this level of capability.

An underappreciated part of this is the entire cybersecurity ecosystem, including AI cybersecurity, AI safety, and AI privacy. A wave of AI startups is building that future for us: a remarkably capable AI agent surrounded by thousands of AI agents that safeguard it and ensure it operates safely and securely. That future is inevitable. The idea of an AI agent running loose without monitoring is inherently crazy. We’re acutely aware that this ecosystem has to thrive. And this ecosystem requires open source, open models, and open tech stacks, so that all these AI researchers and brilliant computer scientists can build AI systems that secure AI. So one thing we must ensure is that the open-source ecosystem stays vibrant. This can’t be overlooked, and a huge share of open-source contributions come from China. We certainly want America to have as much compute as possible. We’re constrained by energy, but many people are working on that; we can’t let energy become our nation’s bottleneck. Yet we also want all the world’s AI developers building on the U.S. tech stack, so that AI progress, especially open-source progress, is accessible to the U.S. ecosystem. If we split into two ecosystems, with open source running only on foreign tech stacks and closed ecosystems running on U.S. tech stacks, that’s a terrible outcome for America. I absolutely refuse to accept that.

Host Dwarkesh: Back to the core concern, the compute gap and hacking. Estimates suggest that because export controls restrict lithography machines, China produces only one-tenth as much compute as the U.S. So can they eventually train a Mythos-class model? Yes. But because we have more compute, U.S. labs get there first. Because Anthropic got there first, they can say: “We’ll hold it for a month and let all U.S. companies patch their vulnerabilities before we release it.” And even if China trains such a model, large-scale deployment matters: a million AI hackers is far more dangerous than a thousand. So inference compute is critical. In fact, their abundance of brilliant AI researchers is exactly what’s concerning, because compute is what makes those engineers and researchers more effective.

Talk to any U.S. AI lab and they’ll all tell you compute is the bottleneck. So isn’t it better for U.S. companies, with more compute, to reach Mythos-level capability before China does, giving society time to prepare?

Jensen Huang:

We should always be first, and we should always have more. But to achieve the outcome you describe, you’d have to go to an extreme: they would need to have zero compute. If they have some compute, the question becomes how much. China’s compute is massive; you’re talking about the world’s second-largest computing market. If they want to aggregate compute, they have ample capacity to do so.

AI is a parallel computing problem, right? So why can’t they simply connect 4x or 10x more chips? They have abundant energy. They have empty data centers with power already connected; China has ghost cities, vacant data centers, and vast infrastructure capacity. If they want to, they just need to stack more chips, even 7nm ones. Their chip manufacturing capacity is among the world’s largest. The entire semiconductor industry knows they dominate mainstream chips and have excess capacity. So the idea that China lacks AI chips is utterly nonsensical.

Of course, you could ask me whether America would be further ahead if everyone else had zero compute. But that’s not a plausible scenario; it’s not real. They already have abundant compute. The threshold you’re worried about, they’ve already crossed it and gone past it. So I think you’re misunderstanding something: AI is a five-layer cake, and the bottom layer is energy. Abundant energy compensates for weaker chips, and abundant chips compensate for energy shortages. U.S. energy, for example, is scarce. That’s why NVIDIA has to relentlessly advance our architecture and do extreme co-design, so that every batch of chips we deliver achieves exceptional throughput per watt under that energy constraint. But if your watts are fully abundant, nearly free, why care about performance per watt? Just stack older chips. A 7nm chip is essentially Hopper-class, and most of today’s models were trained on Hopper-class hardware, so 7nm chips are fully sufficient. Abundant energy is their advantage. And can they manufacture enough chips? Huawei just had its biggest year ever, shipping millions of chips, far more than Anthropic has. Some people question how many logic chips SMIC can produce, and how much memory. I’ll tell you the reality: they have ample logic chips, and they have ample HBM2 memory.
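Editor’s illustration (not part of the interview): the trade-off Huang describes, where abundant energy offsets weaker chips and vice versa, can be sketched as a toy model in which cluster throughput is capped by whichever runs out first, powerable chips or available chips. The chip names, per-chip throughput, and wattages below are hypothetical values chosen only to show the shape of the argument; they are not NVIDIA, Huawei, or SMIC figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chip:
    name: str
    tokens_per_sec: float  # per-chip throughput (illustrative assumption)
    watts: float           # per-chip power draw (illustrative assumption)

    def tokens_per_joule(self) -> float:
        # Performance per watt: (tokens / second) / (joules / second) = tokens per joule.
        return self.tokens_per_sec / self.watts


def max_throughput(chip: Chip, chips_available: int, power_budget_w: float) -> float:
    """Cluster throughput is capped by whichever runs out first: chips or power."""
    chips_powerable = int(power_budget_w // chip.watts)
    usable_chips = min(chips_available, chips_powerable)
    return usable_chips * chip.tokens_per_sec


# Hypothetical chips: the newer one is 4x faster but draws only about 1.4x the power.
newer = Chip("newer-node", tokens_per_sec=4.0, watts=1000.0)
older = Chip("older-node", tokens_per_sec=1.0, watts=700.0)

if __name__ == "__main__":
    # Scenario A: energy-scarce (1 MW), chips plentiful, so performance per watt decides.
    for chip in (newer, older):
        print("energy-scarce", chip.name, max_throughput(chip, 100_000, 1_000_000))
    # Scenario B: energy nearly unlimited (1 GW), but only 1,000 newer chips
    # versus 10,000 older chips, so sheer chip count decides.
    print("energy-abundant", newer.name, max_throughput(newer, 1_000, 1_000_000_000))
    print("energy-abundant", older.name, max_throughput(older, 10_000, 1_000_000_000))
```

Under these assumed numbers, the newer chip delivers 4000 versus 1428 throughput units when power is capped at 1 MW, while with a 1 GW budget, 10,000 older chips deliver 10000 versus 4000 from 1,000 newer ones. The toy model deliberately ignores interconnect and memory-bandwidth limits, which is exactly the objection the host raises next.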

Host Dwarkesh: But training and inference are often bottlenecked by memory bandwidth. If they’re using HBM2, the memory bandwidth gap compared with your latest products is nearly an order of magnitude. That’s a huge gap.

Jensen Huang:

Huawei is a networking company. And the idea that you need EUV to make cutting-edge HBM is simply incorrect. You can interconnect the chips, as we do with NVL72. They’ve already demonstrated linking all their compute into one giant supercomputer using silicon photonics. The fact is, their AI development is progressing smoothly. World-class AI researchers, constrained by compute, have devised extremely clever algorithms. Remember what I said: Moore’s Law improves performance by about 25% a year, but excellent computer science can boost algorithmic performance by another 10x.

Excellent computer science is the real lever. MoE is a great invention, no doubt about it. Clever attention mechanisms reduce compute. We have to acknowledge that most AI progress comes from algorithmic advances, not raw hardware. Now, if most progress comes from algorithms, computer science, and programming, then tell me: isn’t their army of AI researchers their fundamental advantage? We’ve seen DeepSeek, and it’s not something to dismiss. The day DeepSeek launches its first model on Huawei chips will be a bad outcome for our country.

Host Dwarkesh: Right now, DeepSeek runs on any accelerator because it’s open source. Why wouldn’t that continue?

Jensen Huang:

If it’s optimized for Huawei’s architecture, it runs worse on ours. You’re describing a scenario I’d call good news: a company develops software and AI models, and they run best on the U.S. tech stack. That’s good news. You’re treating it as bad news, so let me give you the real bad-news version: AI models around the world get developed to run best on non-U.S. hardware. That’s bad news for us.

Take a model optimized for NVIDIA and try running it on other GPUs: it doesn’t run better. NVIDIA’s success is the proof. AI models are built on our tech stack and run best on our tech stack; what’s hard to understand about that? Go to the Global South, go to the Middle East: if, out of the box, every AI model runs best on someone else’s tech stack, you’ll have to explain why that’s good for America.

Host Dwarkesh: Suppose Chinese companies reach the next Mythos before the U.S. They discover all the security vulnerabilities in U.S. software first, but they do it on NVIDIA chips, and then release the model globally. What’s good about it running on NVIDIA chips?

Jensen Huang:

That’s not good. It’s genuinely not good, so we have to prevent it.

Host Dwarkesh: Why do you think that if we don’t sell them chips, Huawei will fill exactly that gap? Aren’t they behind in chips?

Jensen Huang:

The evidence is right in front of us. Their chip industry is massive. They can use twice as many chips, they have vast amounts of readily available energy waiting to be used, and they’re skilled manufacturers. I believe they’ll eventually outproduce everyone. If there are a few critical years, then during those years we have to make sure all the world’s AI models are built on the U.S. tech stack.

Why sacrifice an entire layer of the market for the benefit of another layer? All five layers have to succeed; each one is critical.

Host Dwarkesh: Stepping back: China is stuck at 7nm, while you advance to 3nm, 2nm, and 1.6nm with Feynman. When you reach 1.6nm, they’ll still be at 7nm, even years from now. Do you really think their abundant energy can keep offsetting your growing compute advantage, so that they keep gaining more compute anyway?

Jensen Huang:

We should always be first, and we should always have more. But to achieve the outcome you describe, they would need to have zero compute. If they have some compute, the question is how much.

America has 100x more compute than anywhere else, and America should lead. NVIDIA makes sure U.S. labs always hear about new developments first and get the first opportunity to buy. If they lack funds, we even invest in them. America should lead, and we do everything we can to ensure it. That’s priority one, wouldn’t you agree? We’re doing everything possible. But, you ask, if selling chips to China lifts their compute bottleneck, how does that help America stay in the lead?

We’ve prepared Vera Rubin for America. First of all, why not propose a more balanced regulatory framework that lets NVIDIA win globally instead of ceding the entire world? Why would you want America to abandon the global market? The chip industry is part of the U.S. ecosystem, part of U.S. tech leadership, part of the AI ecosystem, and part of AI leadership. Why does your policy approach lead America to abandon most of the global market?

Host Dwarkesh: If that compute runs models capable of zero-day attacks on U.S. software, how is it not a weapon?

Jensen Huang:

First, we address this by engaging researchers in dialogue, with China and with every nation, to make sure people don’t use the technology that way. That dialogue has to happen. Second, we have to make sure America leads: Vera Rubin and Blackwell massively available in America, stacked high. America has excellent AI researchers; our position is strong, and we should lead. But we also have to recognize that AI isn’t just a model. It’s a five-layer cake, every layer matters, and we want America to win every layer, including chips. Abandoning an entire market won’t ensure long-term U.S. victory in chips or in the compute stack. That’s a fact.

Host Dwarkesh: So how does selling them chips help us win in the long run? Tesla sold excellent EVs in China for years, and iPhones sell in China, but neither created lock-in. Chinese EVs now dominate, and so do Chinese smartphones.

Jensen Huang:

At the start of our conversation, you used the word “moat” to describe NVIDIA’s position. For our company, the single most important factor is the richness of the ecosystem, and that comes down to developers. 50% of AI developers are in China. America shouldn’t abandon them.

We have to keep innovating so that our market share grows rather than shrinks. As for the assumption that even if we compete in China, we’ll lose that market to others anyway: you’re not talking to someone who sees himself as a loser. That loser mindset, that loser premise, means nothing to me. We’re not cars. With cars, you can buy one brand today and easily switch to another tomorrow. Computing isn’t like that. There are reasons x86 still exists, and reasons ARM is hard to replace. These ecosystems are hard to displace; doing so takes enormous time and effort, and most people don’t want to do it. So our job is to nurture that ecosystem, push the technology forward, and stay competitive in the market. Abandoning a market based on your premise is an idea I can’t accept. It makes no sense, because I don’t believe America is a loser, and neither is our industry. A loser mindset means nothing to me.

You might argue that if AI models running on those chips have cyberattack capabilities, or if those chips train such models and run more instances of them, then the chips aren’t a nuclear weapon, but they enable a weapon. That logic applies equally to microprocessors and DRAM, and even to electricity. In fact, we impose extensive export controls on China covering various chip manufacturing technologies, yet we still sell massive volumes of DRAM and CPUs to China, and I believe that’s correct.

Host Dwarkesh: I suppose this comes back to what makes AI fundamentally unique. If AI can find zero-day vulnerabilities in software, don’t we want to minimize China’s capability, delaying when they reach that point and preventing large-scale deployment?

Jensen Huang:

We want America to lead, and we can achieve that. But if the chips are already there and they train this model, how do we control that? We have massive compute and a huge pool of AI researchers, and we’re sprinting as fast as we can.

Host Dwarkesh: But they have reasons to buy from us. Founders of Chinese companies tell us compute is their bottleneck.

Jensen Huang:

Because our chips are better. Overall, our chips are better, beyond doubt. But can you acknowledge that, without our chips, Huawei had a record-breaking year? Can you acknowledge that numerous chip companies there have gone public? Can you acknowledge that we once held a large market share there, but don’t now? Can you acknowledge that China represents about 40% of the global tech industry? Ceding this market for the benefit of one company harms America, harms national security, and harms tech leadership. It makes no sense to me.

Host Dwarkesh: I’m confused, because you seem to be saying two different things. First, if we’re allowed to compete, we’ll beat Huawei because our chips are far superior. Second, without us, they’ll get there anyway. How can both be true?

Jensen Huang:

Both are clearly true. When you have no better choice, you take the only option you have. What’s illogical about that? It’s perfectly logical. They want NVIDIA chips because they’re better. “Better” means many things: easier to program, a better ecosystem. Whatever “better” means, we’d sell them compute. So what? The fact is, we benefit. Don’t forget: we gain the benefits of U.S. tech leadership, we gain contributions from developers working on the U.S. tech stack, and we gain as AI models diffuse globally and make the U.S. tech stack the best. We can keep advancing and diffusing U.S. technology. That’s positive, and it’s a crucial part of U.S. tech leadership. The kind of policy you’re advocating is what got the U.S. telecom industry largely pushed out of global markets; now we don’t even control our own telecom. I don’t think that was smart. It was short-sighted, and it caused the kind of unintended consequences I’m describing, which you seem to be struggling to grasp. Okay, let’s step back.

Host Dwarkesh: The core issue is that there are potential benefits and potential costs, and we have to judge whether the cost is worth paying. Compute is the input for training powerful models, and powerful models have powerful attack capabilities. It’s good that Anthropic reached Mythos-level capability first; they’ll hold it back for a while so U.S. companies and the government can patch vulnerabilities before release. If China had more compute, or could aggregate more, and trained Mythos-level models earlier and deployed them at scale, that would be terrible. America having more compute, and companies like NVIDIA, is precisely why that hasn’t happened. That’s the cost of selling chips to China. So, setting the benefits aside, do you acknowledge this as a potential cost?

Jensen Huang:

Then I’ll tell you what the potential cost is on the other side: we lose the entire market for one of the most critical layers of the AI stack, the chip layer, in the world’s second-largest market. That lets them scale, build their own ecosystem, and optimize future AI models in a way completely different from the U.S. tech stack. As AI spreads globally, their standards and their tech stack will overtake ours, because their models are open. America should win every layer of the five-layer cake, including chips. All five layers matter, and we should win them all.

Host Dwarkesh: But China is the world’s largest contributor to open-source software and open models, and today all of that is built on the U.S. tech stack, on NVIDIA.

Jensen Huang:

Correct. It’s built on the U.S. tech stack today. But being forced out of China is a policy error. It’s clearly producing backlash and has already caused the situations I’m describing: accelerating their chip industry and forcing their entire AI ecosystem to turn inward toward domestic architectures. It’s not too late yet, but these things have already happened. In the coming years, you’ll see they won’t stay at 7nm. They’re skilled manufacturers, and they’ll keep advancing beyond 7nm. Is there a 10x gap between 5nm and 7nm? No. Architecture matters, networking matters, energy matters. All of it matters, not the oversimplified version you’re proposing. If you oversimplify it to “is compute equivalent to nuclear weapons,” you’ve sent the whole discussion in the wrong direction.

AI isn’t a nuclear weapon. Equating AI with various kinds of weapons scares everyone away from AI, and what benefit does that bring America? If we scare away all the aspiring software engineers by saying AI will eliminate every software engineer, we’ll end up with a shortage of software engineers. So I’ll say this: when you construct extreme premises like that, taking everything from zero to infinity, you end up scaring people in ways utterly disconnected from reality. Life isn’t like that. Do we want America to be first? Absolutely. Must we lead at every layer of the tech stack? Absolutely.

We’re discussing Mythos today because it’s important. But in a few years, when we hope the U.S. tech stack has spread around the world, to India, the Middle East, Africa, and Southeast Asia, and when our nation hopes to export its technology and its standards, I hope you and I have this same conversation again. I’ll remind you of today’s dialogue, of your policies and your imagined scenarios, and of how, point by point, they handed the world’s second-largest market to others. We shouldn’t hand it over. Why surrender voluntarily? Nobody is advocating all-or-nothing, and nobody is advocating unrestricted sales to China anytime, anywhere. We should always have the best technology in America, always have the most technology in America, and always be first. But we should also strive to compete globally and win. These can coexist. It takes nuance and maturity, not extremism. The world isn’t black and white.

Why Doesn’t NVIDIA Develop Multiple Chip Architectures?

Host Dwarkesh: We talked earlier about the bottleneck in TSMC’s N3 capacity. If you already dominate N3 and someday dominate N2, could you use spare capacity on older nodes like N7, applying what you know today about numeric formats and so on, to rebuild a Hopper- or Ampere-class product? Could that happen before 2030?

Jensen Huang:

No need. The reason is that each architecture generation isn’t just a transistor shrink. It involves massive engineering innovation in packaging design, chip stacking, numeric formats, and overall system architecture. Going back to an older node and repeating R&D at that level would be prohibitively expensive. Going backward is certainly not the direction to spend on; we can afford to keep pushing forward. Only if the world declared that no new capacity would ever be built would I go back to 7nm, instantly and without hesitation.

Host Dwarkesh: People have asked me why NVIDIA doesn’t pursue several radically different chip architectures at the same time, such as Cerebras’ wafer-scale architecture, Dojo’s very large packaging, or a direction that doesn’t depend on CUDA. You have the resources and engineering talent to run these in parallel, so why put all your eggs in one basket? AI could head in unknown directions, and architectures could evolve unpredictably.

Jensen Huang:

We could, but we don’t have better ideas. We could pursue all of them, but none of them is better. We’ve simulated all of them in our simulator, and the results were worse, so we won’t do it. We’re pursuing what we want to pursue. If workloads shift significantly, and I mean not a change in algorithms but a fundamental change in the shape of the workload driven by market structure, we may decide to add other accelerators.

A few years ago, tokens were either free or nearly free. Now you can price tokens differently for different customers. Some customers will pay premium prices. Take our own software engineers: if I can give them faster-responding tokens that make them more productive than they are today, I’ll pay for that. But that market emerged only recently. So now I can segment the market for the same model based on response time. That’s why we decided to extend the Pareto frontier and create a market for faster-response inference, even at lower throughput. Previously, higher throughput was always better. We believe there may be a world where tokens command very high unit prices, so that even with lower factory throughput, the higher price makes up the difference. That’s why we’re doing this. But architecturally, if I had more money, I’d put more resources into NVIDIA’s architecture. I find this market segmentation for ultra-premium tokens and inference extremely interesting.
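Editor’s illustration (not part of the interview): the pricing logic Huang describes, where a lower-throughput, faster-response operating point can still earn more if its token price is high enough, reduces to revenue = throughput × price per token. The sketch below compares two hypothetical points on a throughput/latency frontier; the names, throughputs, and prices are assumptions for illustration, not NVIDIA data or real market prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    name: str
    tokens_per_sec: float          # factory throughput at this latency target (assumed)
    usd_per_million_tokens: float  # price customers pay at this latency (assumed)


def revenue_per_hour(p: OperatingPoint) -> float:
    """Revenue = tokens produced per hour x price per token."""
    tokens_per_hour = p.tokens_per_sec * 3600
    return tokens_per_hour * p.usd_per_million_tokens / 1_000_000


def break_even_price(fast: OperatingPoint, bulk: OperatingPoint) -> float:
    """Price per million tokens at which the faster, lower-throughput point
    earns exactly as much per hour as the high-throughput point."""
    return bulk.usd_per_million_tokens * bulk.tokens_per_sec / fast.tokens_per_sec


# Hypothetical operating points for the same model on the same hardware.
bulk = OperatingPoint("high-throughput", tokens_per_sec=10_000, usd_per_million_tokens=1.0)
fast = OperatingPoint("low-latency", tokens_per_sec=2_500, usd_per_million_tokens=6.0)

if __name__ == "__main__":
    for p in (bulk, fast):
        print(f"{p.name}: ${revenue_per_hour(p):.2f} per hour")
    print(f"break-even premium: ${break_even_price(fast, bulk):.2f} per million tokens")
```

With these assumed numbers, the low-latency point produces a quarter of the tokens but earns $54 per hour versus $36, because its price exceeds the $4 break-even level. The model ignores how many customers will actually pay the premium, which is the market-segmentation question Huang says only recently became real.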

Host Dwarkesh: Final question. Suppose the deep learning revolution never happened—what would NVIDIA be doing?

Jensen Huang:

Accelerated computing, exactly what we’ve always done. Our company’s core premise is that Moore’s Law is insufficient for many computing tasks. General-purpose computing works well, but it isn’t ideal for many kinds of computation. So we combined the GPU and the CUDA architecture with CPUs to accelerate workloads, offloading code kernels or algorithms to our GPUs and speeding up applications by 100x or 200x. Where does that apply? Engineering, science and physics, data processing, computer graphics, image generation: countless domains. Even if AI didn’t exist today, NVIDIA would be extremely large, and the fundamental reason is that the scaling of general-purpose computing has essentially hit its limit, and the path forward is domain-specific acceleration.
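Editor’s illustration (not part of the interview): the 100x to 200x application speedups Huang mentions from offloading kernels to GPUs can be put in context with Amdahl’s law, a standard result that the interview itself does not cite. The overall speedup is 1 / ((1 - p) + p / s), where p is the fraction of runtime offloaded and s is the speedup of the offloaded kernel. The fractions and the 1000x kernel speedup below are assumed values chosen for illustration.

```python
def amdahl_speedup(offloaded_fraction: float, kernel_speedup: float) -> float:
    """Overall application speedup when `offloaded_fraction` of the original
    runtime is accelerated by `kernel_speedup` (Amdahl's law)."""
    if not 0.0 <= offloaded_fraction <= 1.0:
        raise ValueError("offloaded_fraction must be in [0, 1]")
    if kernel_speedup <= 0:
        raise ValueError("kernel_speedup must be positive")
    # The part left on the CPU runs at its original speed; the offloaded part shrinks.
    remaining_serial = 1.0 - offloaded_fraction
    return 1.0 / (remaining_serial + offloaded_fraction / kernel_speedup)


if __name__ == "__main__":
    # Hypothetical: a kernel that runs 1000x faster on the accelerator.
    for fraction in (0.9, 0.99, 0.999):
        overall = amdahl_speedup(fraction, 1000)
        print(f"{fraction:.1%} of runtime offloaded -> {overall:.1f}x overall")
```

Under these assumptions, offloading 90% of runtime yields only about 9.9x overall, 99% yields about 91x, and 99.9% yields about 500x. Reaching the 100x to 200x range therefore requires that more than 99% of an application’s runtime move onto the accelerated path, which is one reason accelerating a whole domain takes dedicated libraries rather than a single kernel.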

We started with computer graphics, but there are many other domains: particle physics, fluid dynamics, structured data processing, and countless algorithms that benefit from CUDA. Our mission has always been to bring accelerated computing to the world, enabling applications that general-purpose computing can’t handle and reaching levels of capability that break through bottlenecks in certain scientific fields. Early applications included molecular dynamics, seismic processing for energy exploration, and image processing, all domains where general-purpose computing is too inefficient.

Without AI, I’d be sad. But because of our advances in computing, we democratized deep learning, enabling any researcher, scientist, or student, anywhere, with a PC or a GeForce GPU, to do astonishing science. That fundamental promise never changed, not at all. If you watch GTC, a large part of the opening isn’t about AI: computational lithography, quantum chemistry, data processing. None of that is related to AI, and all of it is still critically important.
