AI x DePIN: What New Opportunities Will Emerge from the Collision of This Hot Trend?

TechFlow Selected TechFlow Selected

AI x DePIN: What New Opportunities Will Emerge from the Collision of This Hot Trend?

By harnessing the power of algorithms, computing power, and data, advances in AI technology are redefining the boundaries of data processing and intelligent decision-making.

Authors: Cynic, Shigeru

This is the second installment of the Web3 x AI research series. For the introductory piece, see "From Parallel to Convergent: Exploring the New Wave of Digital Economy Driven by Web3 and AI Integration."

As the world accelerates its digital transformation, AI and DePIN (Decentralized Physical Infrastructure Networks) have emerged as foundational technologies driving change across industries. The convergence of AI and DePIN not only enables rapid technological iteration and broader applications but also unlocks more secure, transparent, and efficient service models, bringing profound transformations to the global economy.

DePIN: Decentralization Grounded in the Real World, a Pillar of the Digital Economy

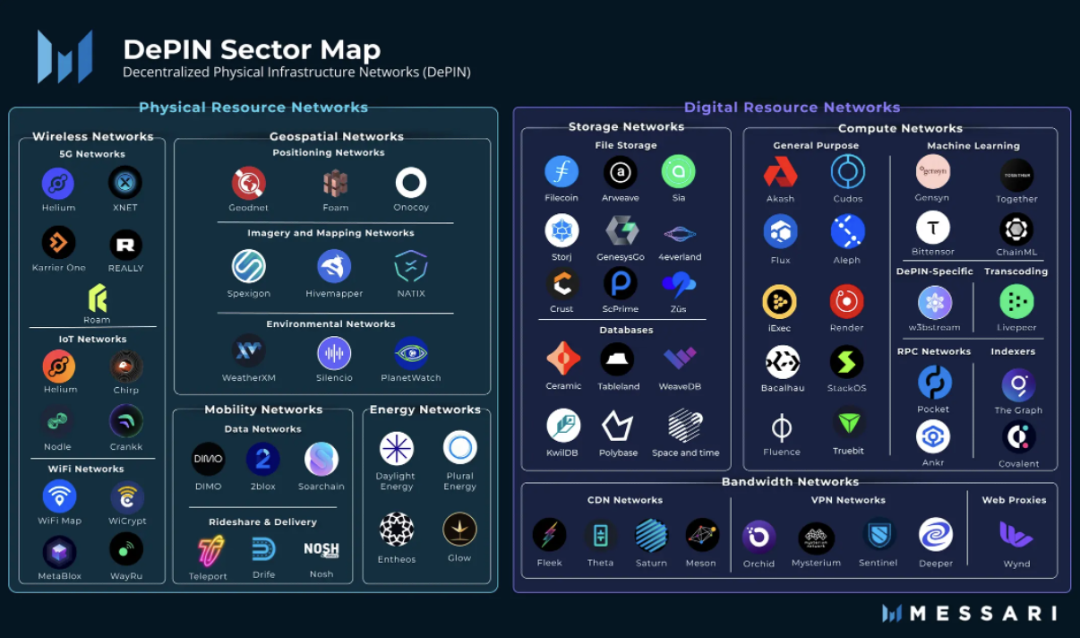

DePIN stands for Decentralized Physical Infrastructure Network. In the narrow sense, DePIN refers to distributed networks of traditional physical infrastructure—such as power grids, communication networks, and positioning systems—supported by distributed ledger technology. Broadly speaking, any distributed network underpinned by physical devices, such as storage or computing networks, can be considered DePIN.

from: Messari

If crypto brought decentralization to finance, then DePIN represents decentralization applied to the real economy. In fact, PoW mining rigs are already a form of DePIN. From day one, DePIN has been a core pillar of Web3.

AI’s Three Key Elements—Algorithms, Computing Power, and Data: DePIN Owns Two

The development of artificial intelligence is generally believed to rely on three key elements: algorithms, computing power (compute), and data. Algorithms refer to the mathematical models and program logic that drive AI systems; compute refers to the computational resources required to execute these algorithms; and data serves as the foundation for training and optimizing AI models.

Which of these three is most important? Before ChatGPT, people typically believed it was algorithms—after all, academic conferences and journals were filled with papers fine-tuning algorithms. However, after ChatGPT and the large language models (LLMs) behind it came into view, attention shifted to the latter two factors. Massive compute is a prerequisite for model creation, and data quality and diversity are crucial for building robust and efficient AI systems. In comparison, algorithmic precision has become less critical.

In the era of large models, AI has shifted from meticulous craftsmanship to brute-force scaling. Demand for compute and data continues to grow exponentially—and DePIN is uniquely positioned to meet this demand. Token incentives unlock long-tail markets, enabling vast amounts of consumer-grade compute and storage to become ideal fuel for large models.

Decentralized AI Is Not Optional—It's Essential

Some may ask: “Don’t AWS data centers already offer both compute and data, with better stability and user experience than DePIN? Why choose DePIN over centralized services?”

That argument holds some truth. After all, nearly all current large models are developed directly or indirectly by major internet companies: Microsoft powers ChatGPT, Google backs Gemini, and every major Chinese tech firm now has its own LLM. Why? Because only large tech firms possess sufficient high-quality data and the financial muscle to afford massive compute. But this status quo is problematic—people no longer want everything controlled by internet giants.

On one hand, centralized AI poses data privacy and security risks and may be subject to censorship and control. On the other hand, AI built by tech monopolies increases societal dependence and leads to market centralization, raising barriers to innovation.

from: https://www.gensyn.ai/

We shouldn't need another Martin Luther for the AI age. People should have the right to speak directly to God.

From a Business Perspective: DePIN’s Key Advantage Is Cost Reduction and Efficiency

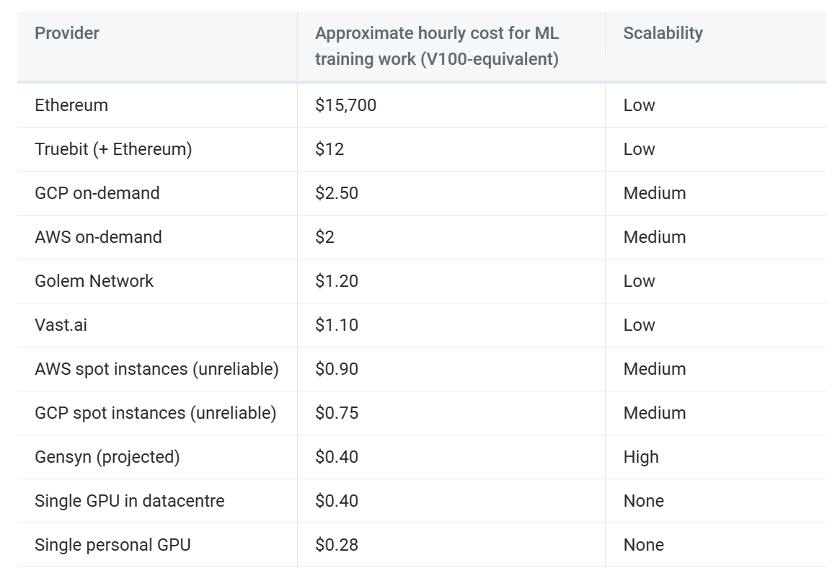

Even setting aside ideological debates between decentralization and centralization, from a business standpoint, using DePIN for AI remains compelling.

First, while big tech firms hold vast arrays of high-end GPUs, the collective power of consumer-grade GPUs scattered among individuals also forms a significant compute network—the so-called "long tail" of compute. These consumer GPUs are often highly underutilized. As long as DePIN offers incentives exceeding electricity costs, users will be motivated to contribute their idle compute. Moreover, since users manage their own hardware, DePIN networks avoid the operational overhead inherent in centralized providers, allowing focus solely on protocol design.

For data, DePIN networks enhance data availability through edge computing and reduce transmission costs. Additionally, many distributed storage networks feature automatic deduplication, reducing the burden of cleaning AI training data.

Finally, the crypto-economic mechanisms enabled by DePIN expand system fault tolerance and create potential for win-win-win outcomes among providers, consumers, and platforms.

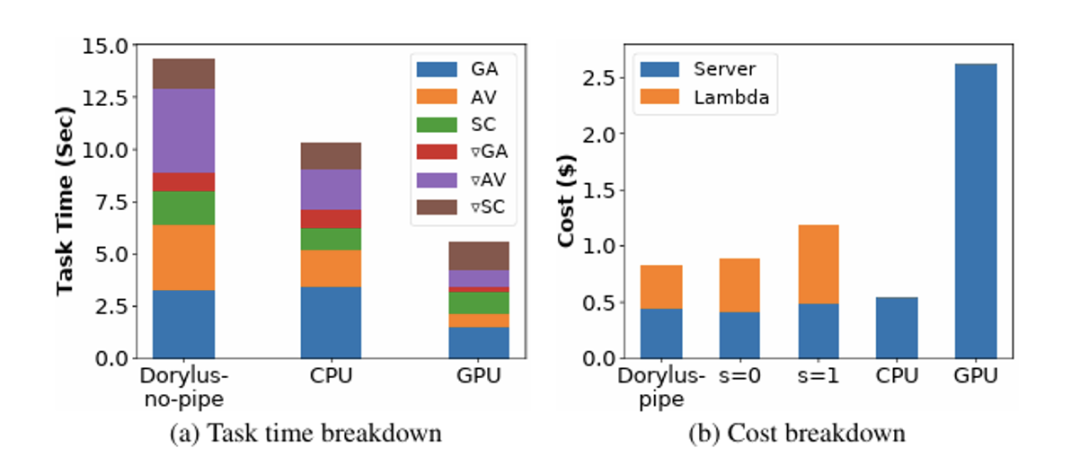

from: UCLA

In case you're skeptical, a recent study from UCLA found that decentralized computing delivers 2.75x performance at the same cost compared to traditional GPU clusters—specifically, 1.22x faster and 4.83x cheaper.

Challenges Ahead: What Obstacles Does AI x DePIN Face?

“We choose to go to the moon in this decade and do the other things, not because they are easy, but because they are hard.” — John Fitzgerald Kennedy

Building AI models using DePIN’s trustless distributed storage and computation still faces numerous challenges.

Work Verification

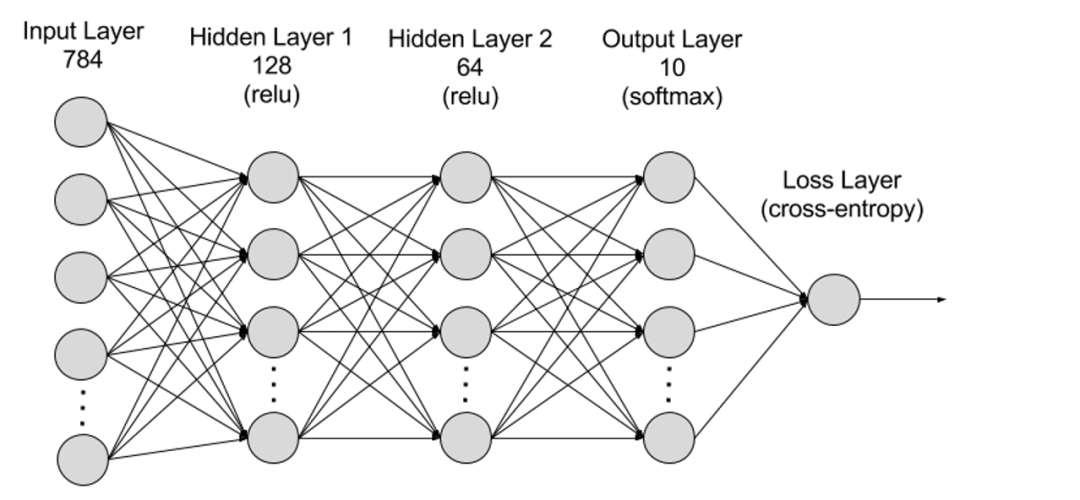

At their core, deep learning model computation and PoW mining are both general-purpose computations—ultimately based on signal changes between logic gates. Macroscopically, PoW mining involves “useless computation,” repeatedly generating random numbers and applying hash functions to find a hash with n leading zeros. Deep learning, by contrast, performs “useful computation,” calculating parameter values layer by layer via forward and backward propagation to build efficient AI models.

However, PoW uses hash functions, which are easy to compute in one direction but hard to reverse—making verification fast and simple for anyone. In contrast, due to the layered structure of deep learning models, where each layer’s output feeds into the next, verifying correctness requires re-executing all prior steps—making efficient verification difficult.

from: AWS

Work verification is essential—otherwise, compute providers could skip actual computation and submit randomly generated results.

One approach is to assign identical tasks to multiple servers and verify consistency across results. However, most model computations are non-deterministic—even under identical conditions, exact replication is impossible, with only statistical similarity achievable. Moreover, redundant computation drastically increases costs, contradicting DePIN’s goal of efficiency.

Another idea is an Optimistic mechanism: initially assume results are valid, allow anyone to challenge them, and if fraud is detected, submit a Fraud Proof. The protocol then slashes the cheater’s stake and rewards the challenger.

Parallelization

As previously noted, DePIN primarily taps into the long tail of consumer-grade compute, meaning individual devices offer limited power. Training large AI models on a single device would take prohibitively long, necessitating parallelization to reduce training time.

The main challenge in parallelizing deep learning lies in task dependencies, which hinder effective parallel execution.

Currently, deep learning parallelization falls into two categories: data parallelism and model parallelism.

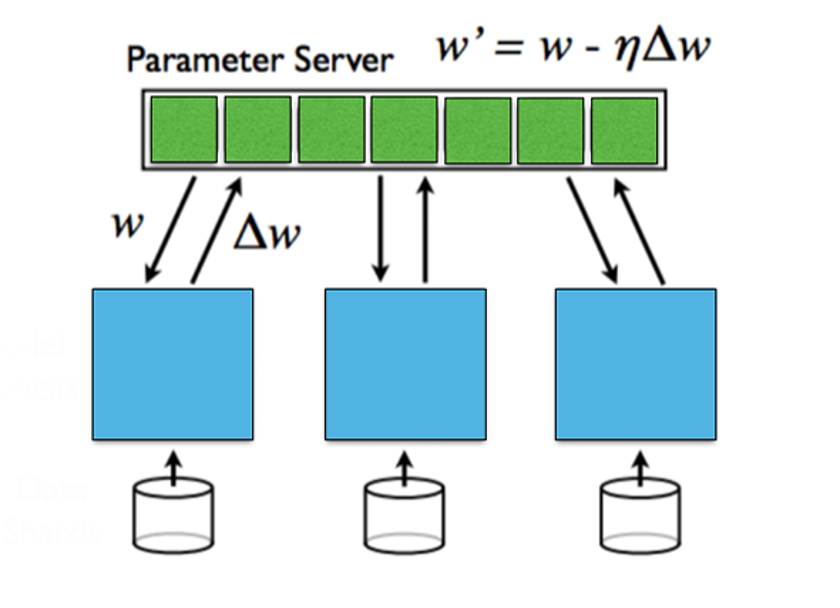

Data parallelism distributes data across multiple machines, each holding a full copy of the model parameters. Each machine trains locally, and parameters are aggregated afterward. This works well with large datasets but requires synchronization overhead.

Model parallelism splits the model itself across machines when it’s too large for a single device. Each machine stores part of the model, requiring inter-machine communication during forward and backward passes. While effective for very large models, this incurs high communication costs.

Regarding gradient updates across layers, approaches include synchronous and asynchronous updates. Synchronous updates are straightforward but introduce waiting times; asynchronous updates reduce latency but risk instability.

from: Stanford University, Parallel and Distributed Deep Learning

Privacy

Globally, there is a growing movement toward protecting personal privacy, with governments strengthening regulations around data security. Although AI heavily relies on public datasets, what truly differentiates AI models is proprietary user data held by individual companies.

How can we benefit from proprietary data during training without exposing private information? How can we ensure model parameters themselves remain confidential?

These represent two aspects of privacy: data privacy and model privacy. Data privacy protects users; model privacy protects the organizations building the models. Currently, data privacy is far more critical.

Multiple solutions are emerging. Federated learning trains models at the data source, keeping data local while transmitting only model parameters. Zero-knowledge proofs may emerge as a powerful future tool.

Case Studies: Which Projects Stand Out in the Market?

Gensyn

Gensyn is a distributed computing network designed for training AI models. It uses a Polkadot-based Layer 1 blockchain to verify whether deep learning tasks have been correctly executed and triggers payments accordingly. Founded in 2020, Gensyn raised $43 million in a Series A round in June 2023, led by a16z.

Gensyn uses metadata from gradient-based optimization processes to generate verifiable certificates of work performed. Through multi-granularity, graph-based precise protocols and cross-evaluator consensus, partial recomputation is used to validate consistency, with final confirmation recorded on-chain—ensuring computational integrity. To further strengthen verification reliability, Gensyn introduces staking to align incentives.

The system includes four types of participants: Submitters, Solvers, Validators, and Challengers.

-

Submitters are end-users who submit tasks and pay for completed work units.

-

Solvers are primary workers who perform model training and generate proofs for validation.

-

Validators bridge non-deterministic training with deterministic linear computation by replicating parts of solver proofs and comparing distances against expected thresholds.

-

Challengers act as the final safeguard, auditing validator outputs and submitting challenges. If successful, they receive rewards.

Solvers must stake tokens. Challengers audit solvers’ work; if malfeasance is detected and proven, the solver’s stake is slashed and the challenger rewarded.

According to Gensyn’s estimates, this approach could reduce training costs to just 1/5 of those charged by centralized providers.

from: Gensyn

FedML

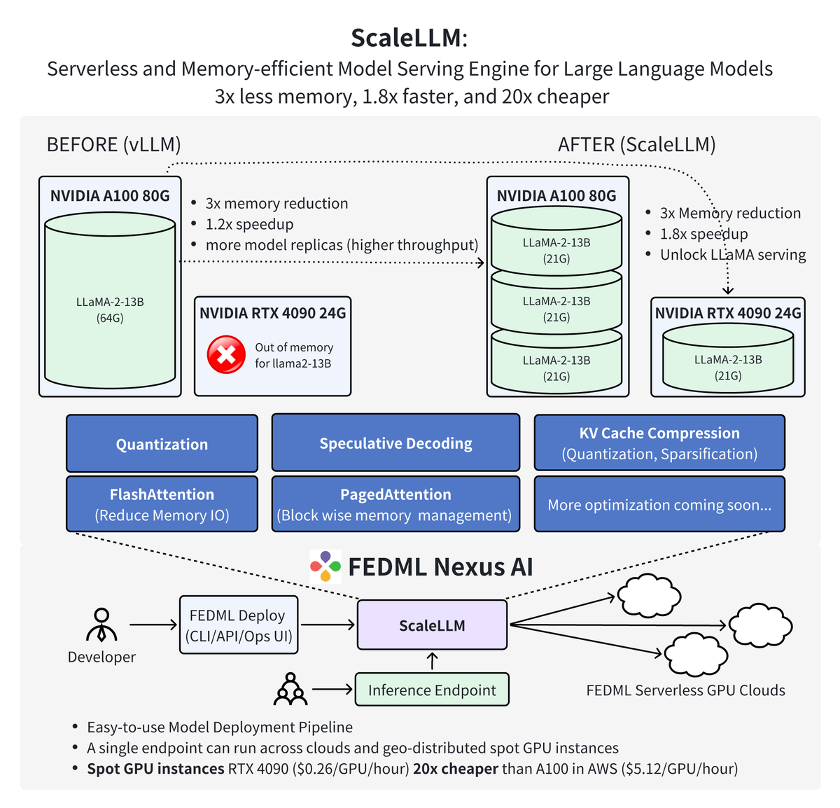

FedML is a decentralized collaborative machine learning platform enabling decentralized and cooperative AI anywhere and at any scale. More specifically, FedML provides an MLOps ecosystem for training, deploying, monitoring, and continuously improving ML models, while collaborating across combined data, models, and compute resources—all in a privacy-preserving manner. Founded in 2022, FedML announced a $6 million seed round in March 2023.

FedML consists of two core components: FedML-API and FedML-Core, representing high-level and low-level APIs respectively.

FedML-Core includes two independent modules: distributed communication and model training. The communication module handles low-level interactions between workers/clients using MPI; the training module is built on PyTorch.

FedML-API is built atop FedML-Core. Leveraging FedML-Core, new distributed algorithms can be easily implemented using client-oriented programming interfaces.

Recent work by the FedML team shows that using FedML Nexus AI for model inference on consumer-grade RTX 4090 GPUs is 20x cheaper and 1.88x faster than using A100s.

from: FedML

Future Outlook: DePIN Enables the Democratization of AI

One day, as AI evolves into AGI, compute power may become a de facto universal currency—and DePIN could accelerate this transition.

The integration of AI and DePIN opens a new frontier for technological growth, offering immense opportunities for AI advancement. DePIN provides AI with vast distributed compute and data resources, enabling the training of larger models and achieving higher levels of intelligence. At the same time, DePIN pushes AI toward greater openness, security, and reliability, reducing dependence on centralized infrastructure.

Looking ahead, AI and DePIN will continue to evolve together. Distributed networks will provide the backbone for training ultra-large models, which in turn will play vital roles within DePIN applications. While safeguarding privacy and security, AI will also help optimize DePIN network protocols and algorithms. We look forward to a more efficient, fair, and trustworthy digital world powered by AI and DePIN.

Join TechFlow official community to stay tuned

Telegram:https://t.me/TechFlowDaily

X (Twitter):https://x.com/TechFlowPost

X (Twitter) EN:https://x.com/BlockFlow_News