AI Expansion Is Overloading Power Grids: 7 Energy Investment Principles You Must Know

Energy is the true bottleneck for intelligent growth.

Author: Joseph Ayoub

Translated and edited by TechFlow

TechFlow Introduction: Everyone is talking about compute power and models—but this article raises a more fundamental question: Can energy supply keep up? Morgan Stanley forecasts a 45 GW electricity shortfall in the U.S. by 2028; lead times for large power transformers have stretched to 24–36 months; and AI data center electricity consumption is growing at 15% annually. From this, the author derives seven investment theses—covering grid fragmentation, solid-state transformers, and two-phase cooling—offering unconventional yet critical angles.

Full text below:

NVIDIA recently introduced its “AI is a five-layer cake” framework. Today, I argue that the energy layer is the binding constraint on intelligent growth—and explore its implications.

Human civilizational progress stems from our ability to harness tools—be it hammers, fire, horses, the printing press, telephones, light bulbs, steam engines, radios, or AI. These “tools” represent humanity’s means of converting energy into productivity.

Fundamentally, we enhance human productivity by capturing energy and directing it toward goals via tools.

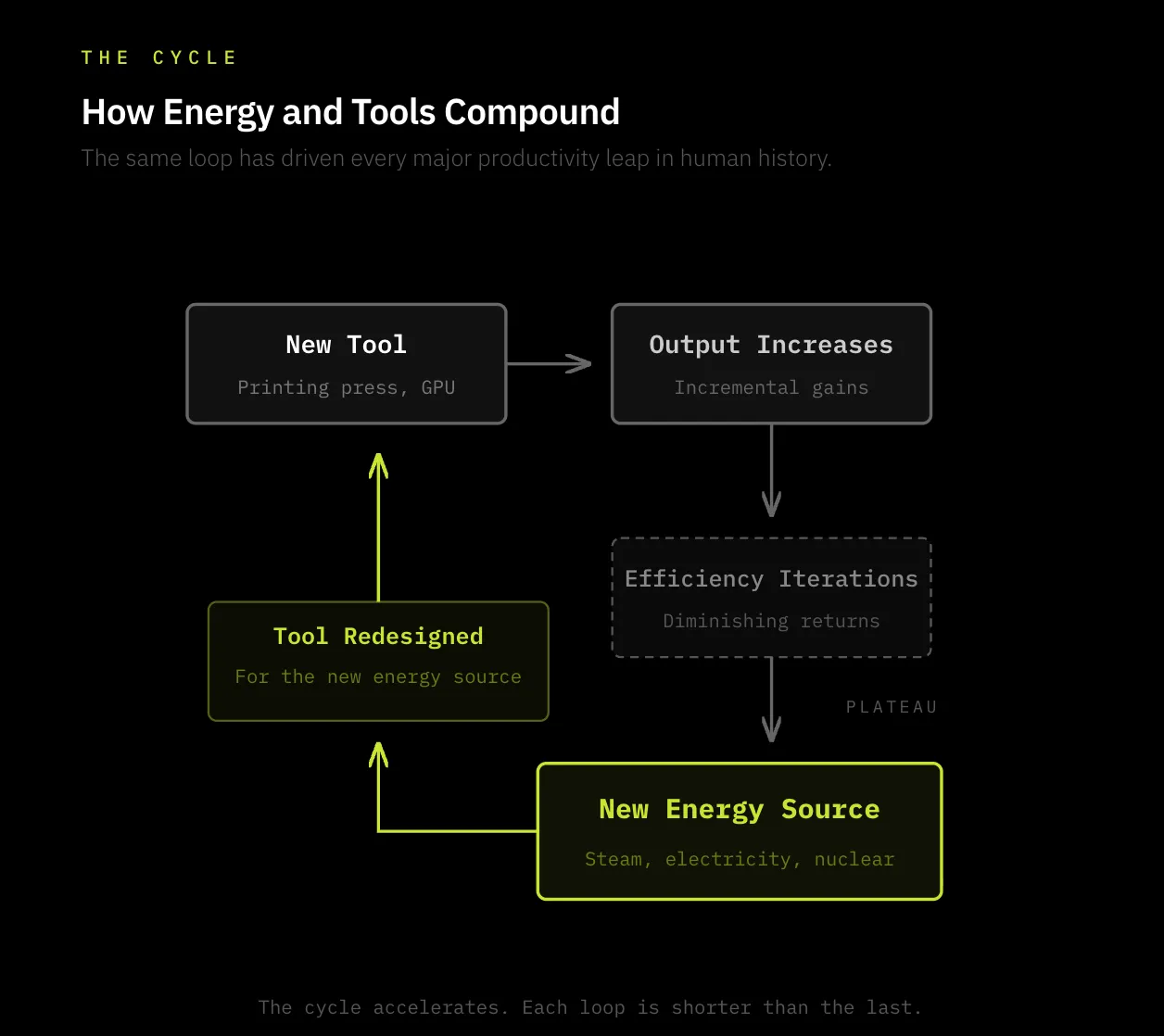

In short, the core logic of human civilizational advancement is as follows:

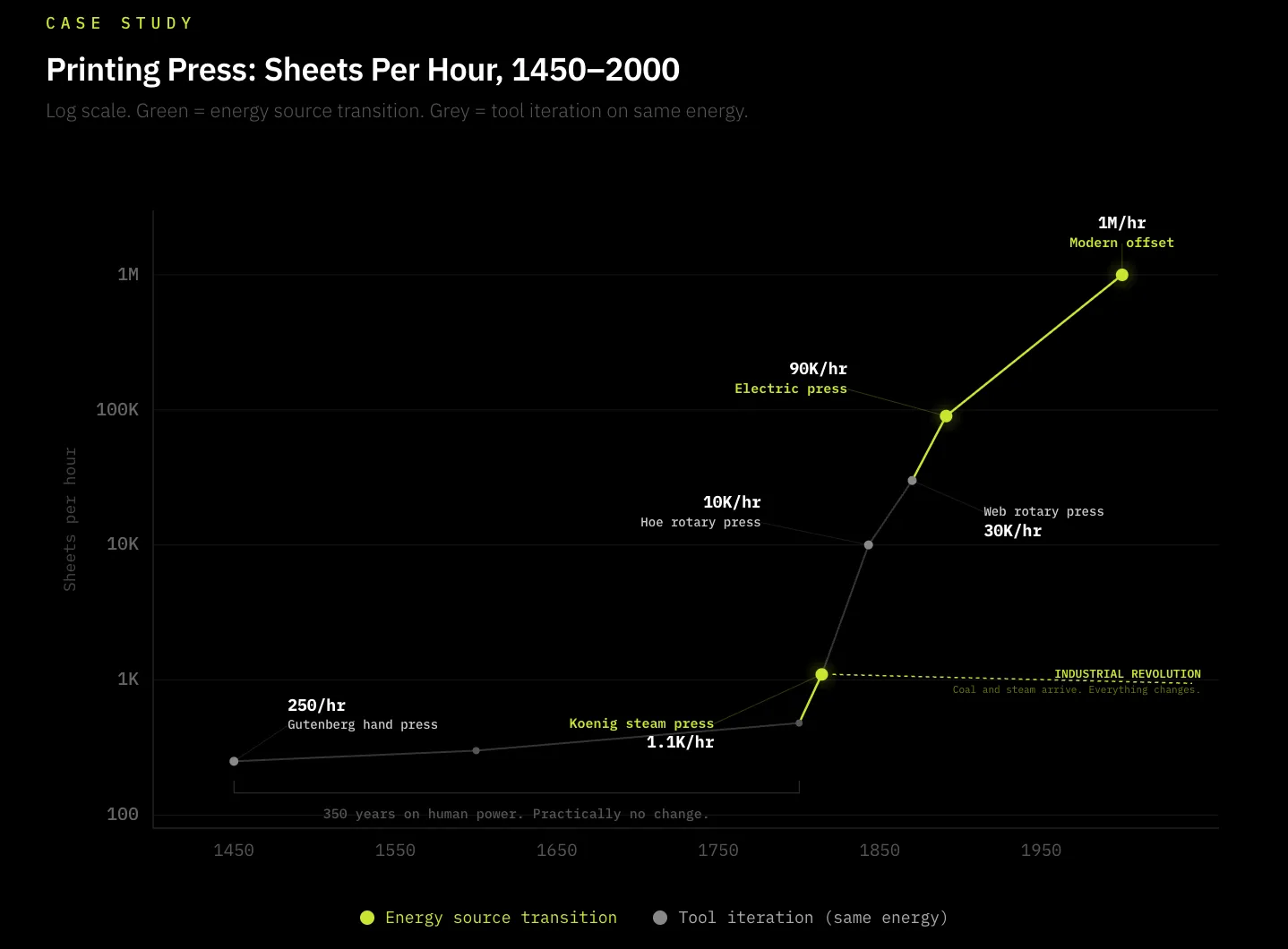

For most of human history, people relied on their own physical energy and their hands as tools to achieve goals, whether farming or writing. The printing press, popularized by Gutenberg in 1440, exemplifies how energy and tools co-evolve. Before this innovation, humans expended their own energy transcribing information by hand with pens, a highly inefficient process. The printing press introduced a mechanical tool that dramatically improved the efficiency of human energy use through mechanical impression, boosting productivity by orders of magnitude. Yet from 1450 to 1800, a span of 350 years, the printing press saw virtually no substantive innovation. Only when humanity harnessed a more powerful energy source, coal, did the energy side of the equation change. In 1814, Friedrich Koenig invented the steam-powered printing press, adapting the press to coal, the dominant energy innovation of the era, and increasing efficiency fivefold. Thereafter, the printing press continued to integrate efficiently with new energy sources: output rose from 250 impressions per hour to 30,000 per hour within 50 years, and today exceeds millions per hour.

Thus, the continuous cycle—of innovating new tools, expanding the frontier of energy harnessing, and improving tool efficiency relative to available energy—endures to this day. Today, intelligence is our new focus of productive capacity, and energy is its fuel. Crucially, whether we can sustain intelligent growth depends on how much sustainable, reliable energy we can generate to power our tools (GPUs) and direct them toward objectives (intelligence).

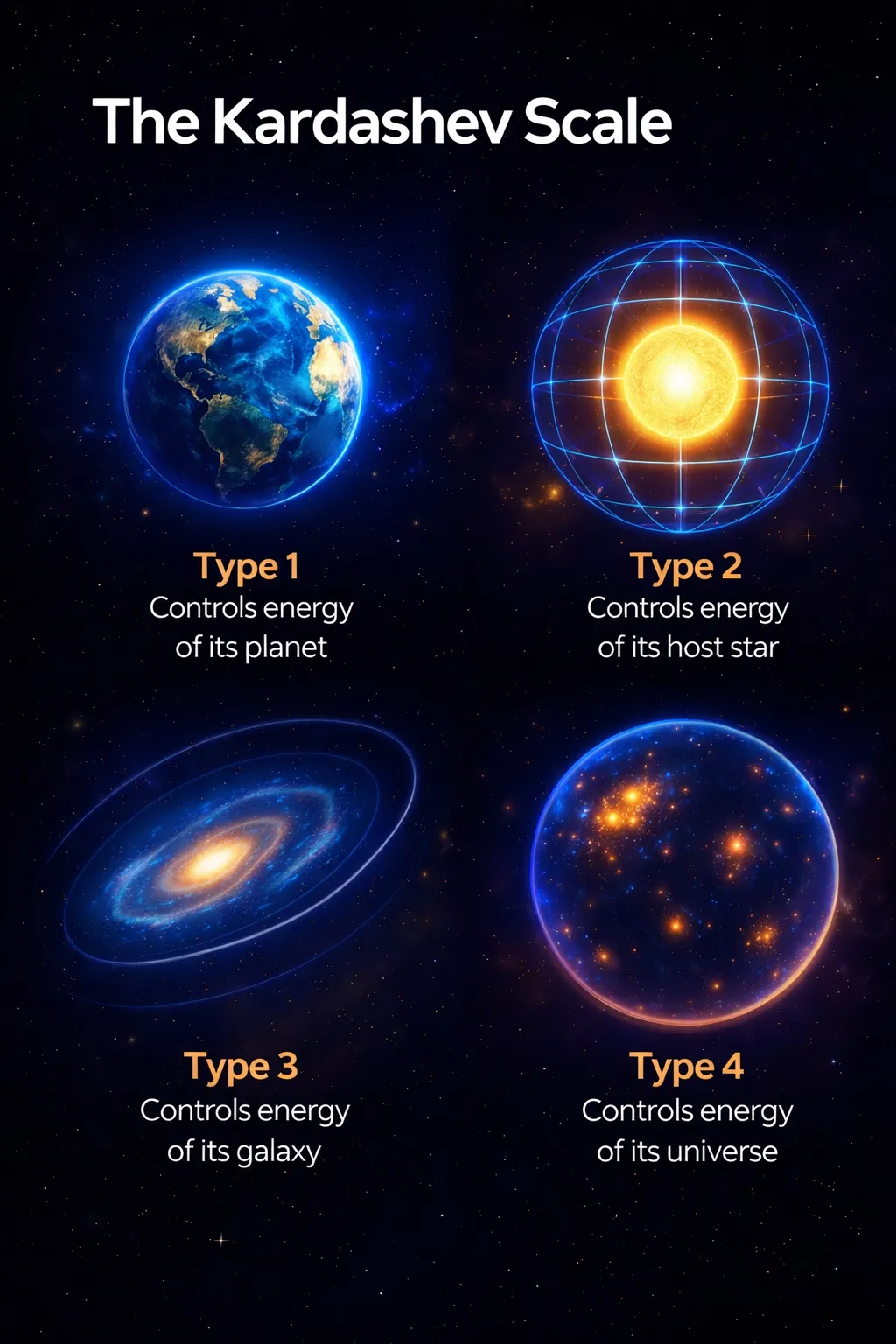

This thesis aligns with the Kardashev Scale—which measures a civilization’s technological advancement by how much energy it can harness: from planetary, to stellar, to galactic, to universal, and even multiversal. How much energy we command marks how far our civilization has progressed. Historically, this principle has held true—and will continue to do so. The ability to harness energy is foundational to civilizational advancement.

The central argument of this article is: Energy demand is rapidly outpacing supply—and this constitutes the primary bottleneck to advancing intelligence. I will examine the first- and second-order implications of this thesis.

Why is energy supply slowing?

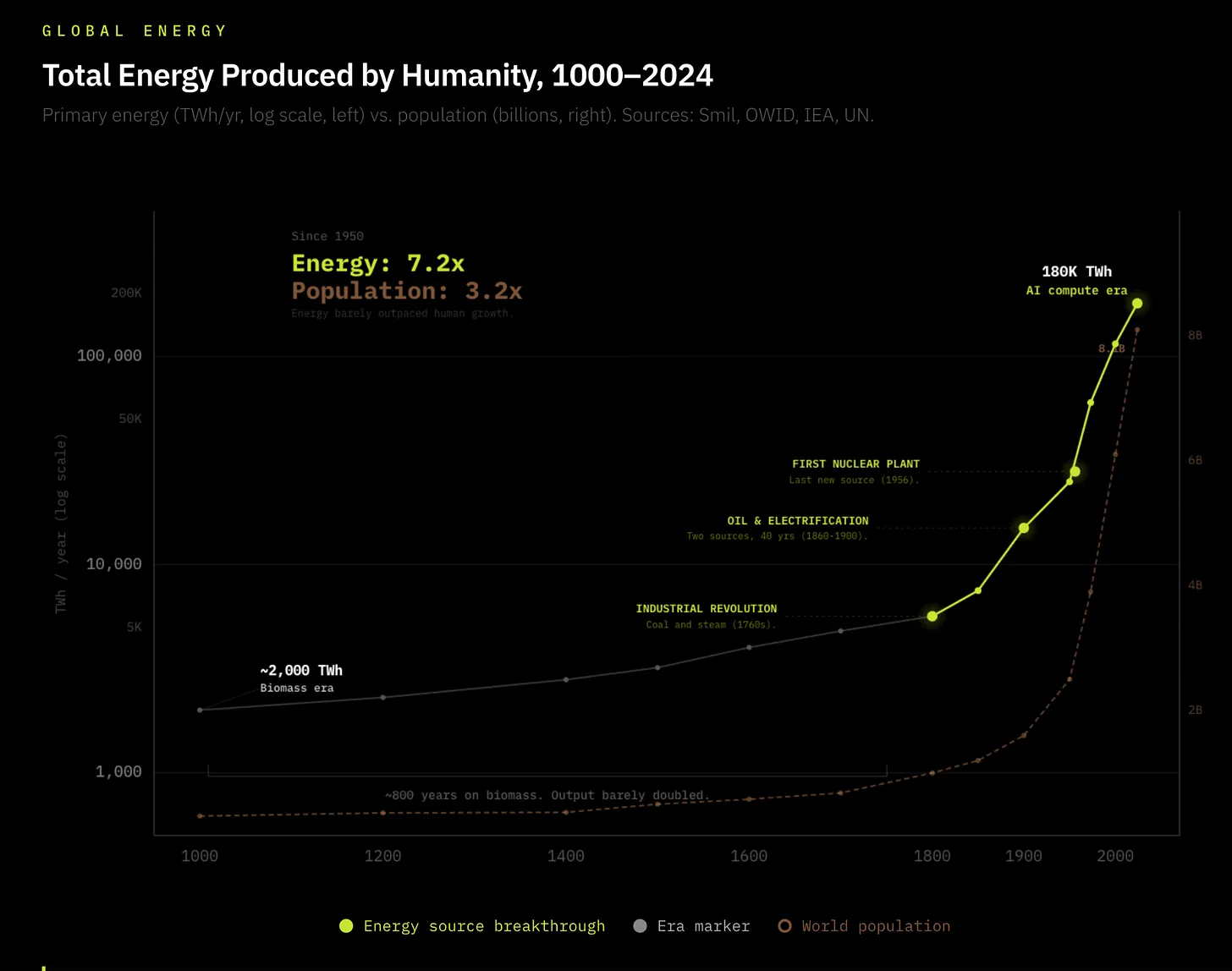

Nuclear fission was discovered in 1939, the most recent major energy paradigm shift in human history. However, because of the Chernobyl disaster and global commitments to transition from nuclear to renewable energy, a clear mismatch emerged between tool innovation and energy advancement after 1950. Global energy production stood at 2,600 GW in 1950 and stands at 19,000 GW today (a 7.3x increase). This appears impressive, but such incremental, linear growth falls drastically short of the growth in modern computing and technology, and is only about twice the 3.5x population growth over the same period.
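The growth figures above can be checked with a few lines of arithmetic. The sketch below uses only the numbers quoted in this section. The 75-year span (1950 to roughly today) is an assumption made here to annualize the growth, and the function names are illustrative.

```python
def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    return end / start


def compound_annual_growth(start: float, end: float, years: float) -> float:
    """Return the constant yearly growth rate that turns `start` into `end`."""
    return (end / start) ** (1 / years) - 1


START_GW = 2_600            # 1950 figure quoted in the article
END_GW = 19_000             # present-day figure quoted in the article
POPULATION_MULTIPLE = 3.5   # population growth quoted in the article
YEARS = 75                  # assumption: 1950 to roughly today

energy_multiple = growth_multiple(START_GW, END_GW)
per_capita_multiple = energy_multiple / POPULATION_MULTIPLE
annual_rate = compound_annual_growth(START_GW, END_GW, YEARS)

print(f"Energy multiple:      {energy_multiple:.1f}x")
print(f"Per-capita multiple:  {per_capita_multiple:.1f}x")
print(f"Annualized growth:    {annual_rate:.2%}")
```

On these inputs, energy per person roughly doubles over the period and the annualized growth rate comes out below 3%.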

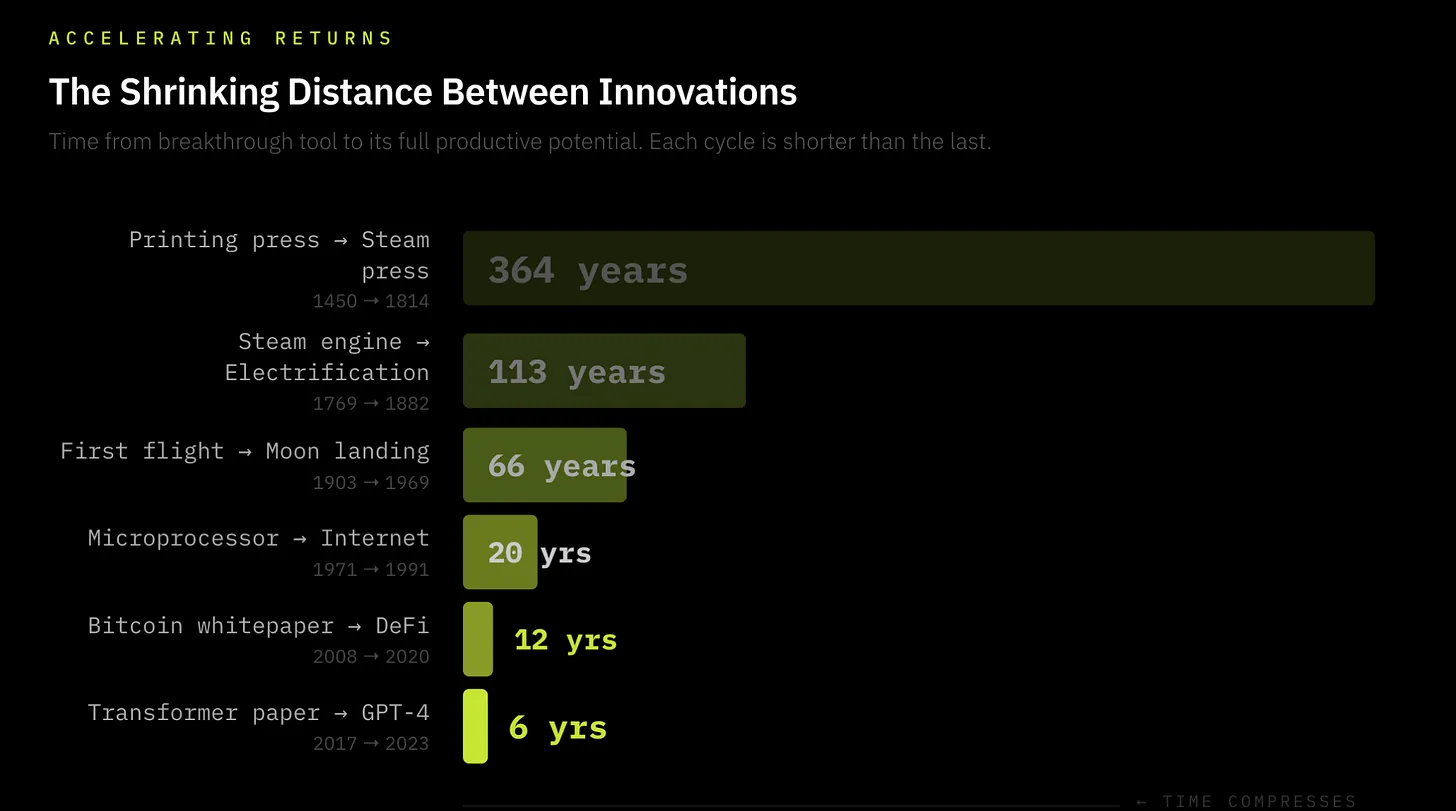

In contrast, the intervals between quantum leaps in tool innovation are shortening. It took 364 years from the first printing press to its next major improvement; 58 years from the first flight to space travel; 20 years from the first microprocessor to the internet; and today, major GPU advancements occur every two years. We live in an accelerating window of tool-efficiency gains—so rapid that multiple innovations now compound across ever-shortening cycles. From AI to cryptography to quantum computing, new innovations are discovered faster and their efficiency gains accelerate more sharply—this is the Law of Accelerating Returns.

Today, data centers consume 1.5% of global electricity—and are projected to reach 3% by 2030—a six-year leap equivalent to what steam engines achieved in fifty years. The key distinction between the Industrial Revolution and today’s intelligence explosion lies in energy infrastructure: During the Industrial Revolution, energy supply expanded in lockstep with demand—coal mines, canals, and rail networks grew alongside the machines consuming them. Every prior energy revolution built its own supply chain as it scaled; AI inherits an existing supply chain—one already beginning to fracture.
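As a rough check on the pace implied here, the sketch below computes the constant yearly growth in data centers' share of global electricity needed to go from 1.5% to 3% in six years. The six-year window is taken from the text; the function name is illustrative.

```python
def implied_annual_growth(start_share: float, end_share: float, years: float) -> float:
    """Constant yearly growth rate that moves start_share to end_share."""
    return (end_share / start_share) ** (1 / years) - 1


START_SHARE = 0.015  # 1.5% of global electricity today (from the article)
END_SHARE = 0.03     # 3% projected by 2030 (from the article)
YEARS = 6            # the "six-year leap" described in the article

share_growth = implied_annual_growth(START_SHARE, END_SHARE, YEARS)
print(f"Implied growth in share: {share_growth:.1%} per year")
```

Because this is growth in a share of total consumption, absolute data center demand would have to grow faster still if global electricity use also rises over the period.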

The grid is utterly unprepared for 15% annual growth in electricity demand from the intelligence explosion, having been built around U.S. electricity demand that nearly flatlined over the past decade. Cracks are already appearing in the U.S.: grid interconnection queues have hit record lengths; lead times for large power transformers now average 24–36 months; and a 30% supply shortfall for power transformers looms in 2025. Morgan Stanley estimates the U.S. alone will face a 45 GW electricity shortfall by 2028, equivalent to the electricity needs of 33 million American households. I believe this shortfall may be significantly larger.
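Two of the numbers above lend themselves to a quick sanity check: what the household equivalence implies per home, and how fast demand doubles at 15% a year. The sketch below assumes the 45 GW figure is average continuous power and uses 8,760 hours per year; the variable names are illustrative.

```python
import math

SHORTFALL_GW = 45            # Morgan Stanley estimate quoted in the article
HOUSEHOLDS = 33_000_000      # household equivalence quoted in the article
HOURS_PER_YEAR = 8_760       # 365 days * 24 hours
ANNUAL_GROWTH = 0.15         # AI demand growth quoted in the article

# Assumption: the shortfall is average continuous power, so dividing it
# across households gives the average draw per home.
avg_kw_per_household = SHORTFALL_GW * 1_000_000 / HOUSEHOLDS  # GW -> kW
annual_kwh_per_household = avg_kw_per_household * HOURS_PER_YEAR

# Years for demand to double at a constant compound growth rate.
doubling_years = math.log(2) / math.log(1 + ANNUAL_GROWTH)

print(f"Average draw per household: {avg_kw_per_household:.2f} kW")
print(f"Implied annual use:         {annual_kwh_per_household:,.0f} kWh")
print(f"Doubling time at 15%/yr:    {doubling_years:.1f} years")
```

The household equivalence works out to a little under 12,000 kWh per home per year, and demand growing at 15% annually doubles in about five years.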

The problem is clear: Humanity must radically scale energy infrastructure to keep pace with innovation leaps in AI, robotics, and autonomous driving.

The Looming Energy Shortfall: First- and Second-Order Impacts

The consequences of the looming energy shortfall are historic: As demand surges beyond supply, we may witness the emergence of quasi-private energy markets.

Hyperscalers have already begun building behind-the-meter (BTM) generation facilities—and plan to expand into nuclear-powered data centers. This trend is nascent but unmistakable. I believe it will only intensify.

Below, I outline seven theses—each a derivative of the intelligence explosion and its impact on persistently strained electricity supply.

Thesis One: Grid Fragmentation—Compute Will Migrate to Energy, Not Vice Versa

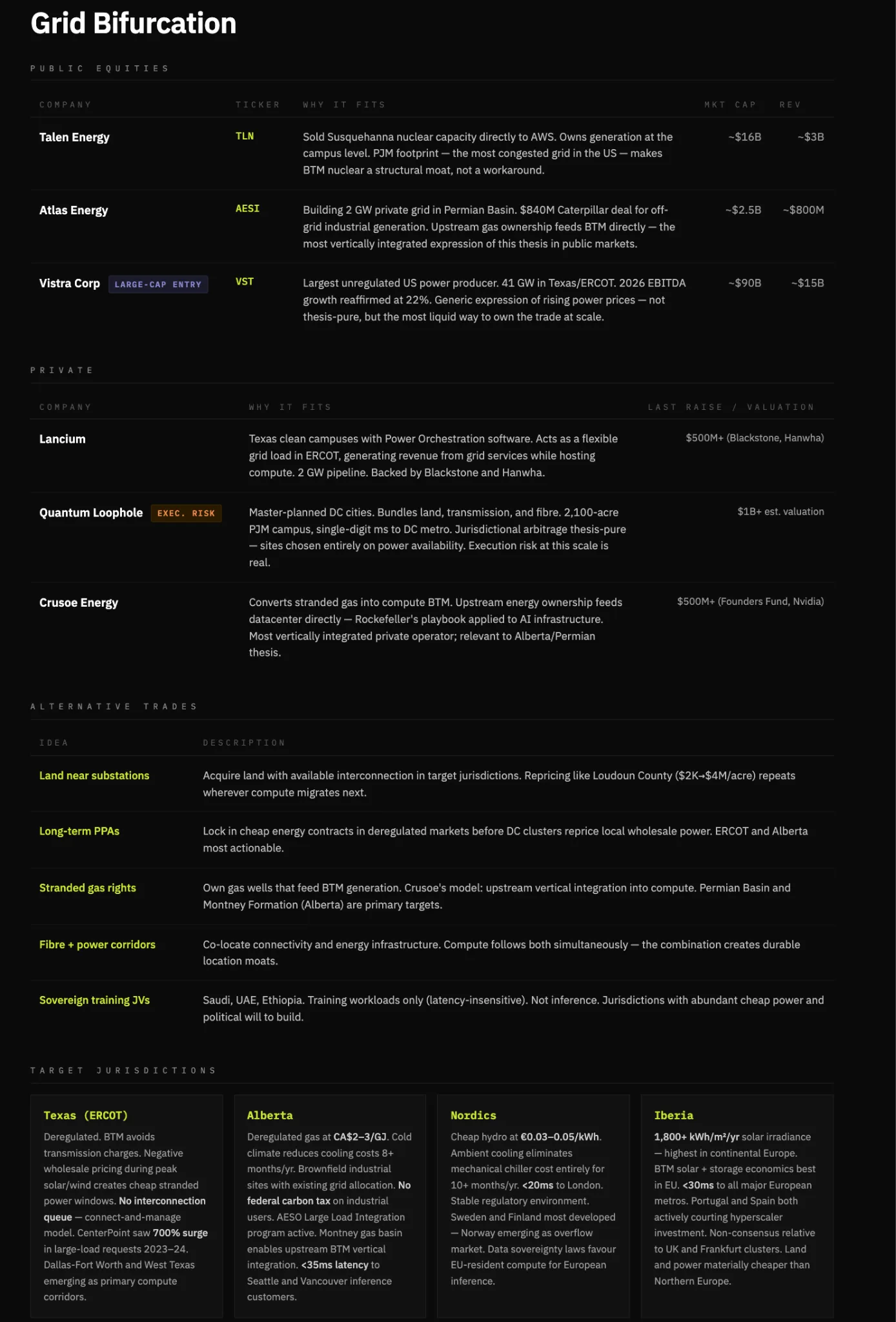

Regions with abundant energy and permissive regulation—adjacent to inference demand—will capture disproportionate value as energy systems fragment.

When energy demand begins to exceed supply, electricity becomes politically sensitive. Households have voting power; data centers do not. Under energy shortfalls, grids are unlikely to remain neutral—they will prioritize residential over industrial demand via pricing, access restrictions, or soft caps.

Given compute’s extreme sensitivity to latency, uptime, and reliability, operating in jurisdictions that prioritize residential electricity is fundamentally unviable. As grid access grows unstable—or politicized—compute workloads will migrate to BTM generation models, where electricity can be directly secured, controlled, and priced.

This will drive a structural shift: Compute relocates to energy-abundant, regulation-light economies. Winners are entities capable of integrating land, connectivity, energy generation, and fiber optics into deployable, replicable systems—and the jurisdictions hosting them will benefit accordingly.

Thesis Two: Energy as a Competitive Moat—BTM Self-Generation Becomes the Defining Capability of Compute Providers

In my view, this is the most critical first-order impact of worsening energy shortages. In a world where energy demand exceeds supply, access to cheap, reliable electricity confers a structural cost advantage that compounds over time. Moreover, data centers’ priority access to grid electricity is politically unsustainable—and that is precisely the trajectory energy markets are following. As national grids grow increasingly strained, compute providers will be forced to build their own generation. Hyperscalers have already begun this shift. Infrastructure lacking BTM generation will be rendered obsolete outright.

Essentially, companies that own electricity win; those that rent it lose. Without BTM generation, compute providers face fatal reliability issues, rising costs, and usage constraints. Pure-play colocation REITs without owned generation—such as Equinix and Digital Realty—will see relative value erode versus vertically integrated operators. Companies integrating energy generation with compute hosting are building the deepest moats (Crusoe, Iren, and select hyperscalers). This could be framed as a long/short trade—but I emphasize vertical integration’s winners instead.

Thesis Three: BTM Standardization Drives Innovation—from Conventional to Solid-State Transformers, and from Traditional to Digital Switchgear

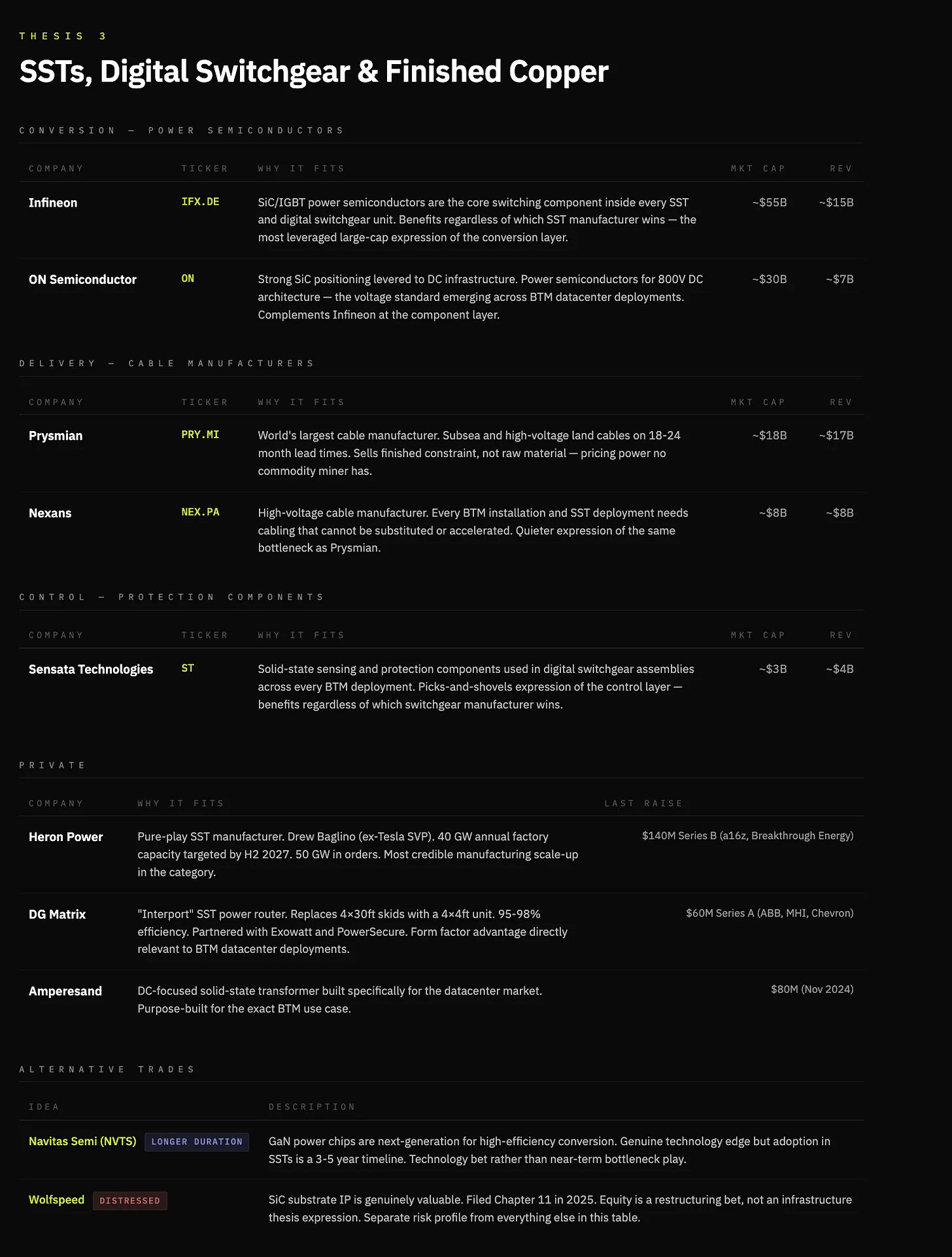

Conventional transformers step AC grid voltage up or down. Due to their size and material constraints, lead times have reached 24–36 months, with a 30% supply shortfall. They are also a late-19th-century technology, hand-assembled using constrained materials. Crucially, every megawatt of BTM generation must pass through conversion, regulation, and distribution to the compute load—transformers are unavoidable.

Solid-state transformers (SSTs) replace all this with high-frequency power electronics. They are smaller, faster, and fully controllable, and they handle AC–DC conversion, voltage regulation, and bidirectional power flow within a single unit. Manufacturing is simpler, relying on wide-bandgap power semiconductors such as silicon carbide (SiC) and gallium nitride (GaN) rather than massive copper windings and oil-filled tanks. As BTM becomes the standard architecture, the device bridging energy and compute becomes the bottleneck, and that device is the SST.

Switchgear faces similar 80-week delays. It serves as the control layer between generation and load—routing power, isolating faults, and protecting systems. Like transformers, switchgear is labor-intensive, built around constrained materials, and has changed little since the 1880s.

Digital switchgear replaces all this with solid-state power electronics—faster, programmable, fully controllable, enabling real-time fault detection, remote isolation, and dynamic load routing. Equally important, it scales like electronics—not industrial equipment.

A note on copper: I hold a constructive view. Copper is the highway for electrons and will be the foremost commodity required in an increasingly electrified world. Yet the expression of this trade is nuanced. Traditional miners offer low margins, and their returns may compress over time. The major bottlenecks, and the future value accumulation, sit at the finished-goods end, where copper is irreplaceable and delivery is time-constrained. Cable manufacturers like Prysmian and Nexans sell constrained finished products, not raw materials, and with transformer lead times ballooning, this is no longer a commodity market.

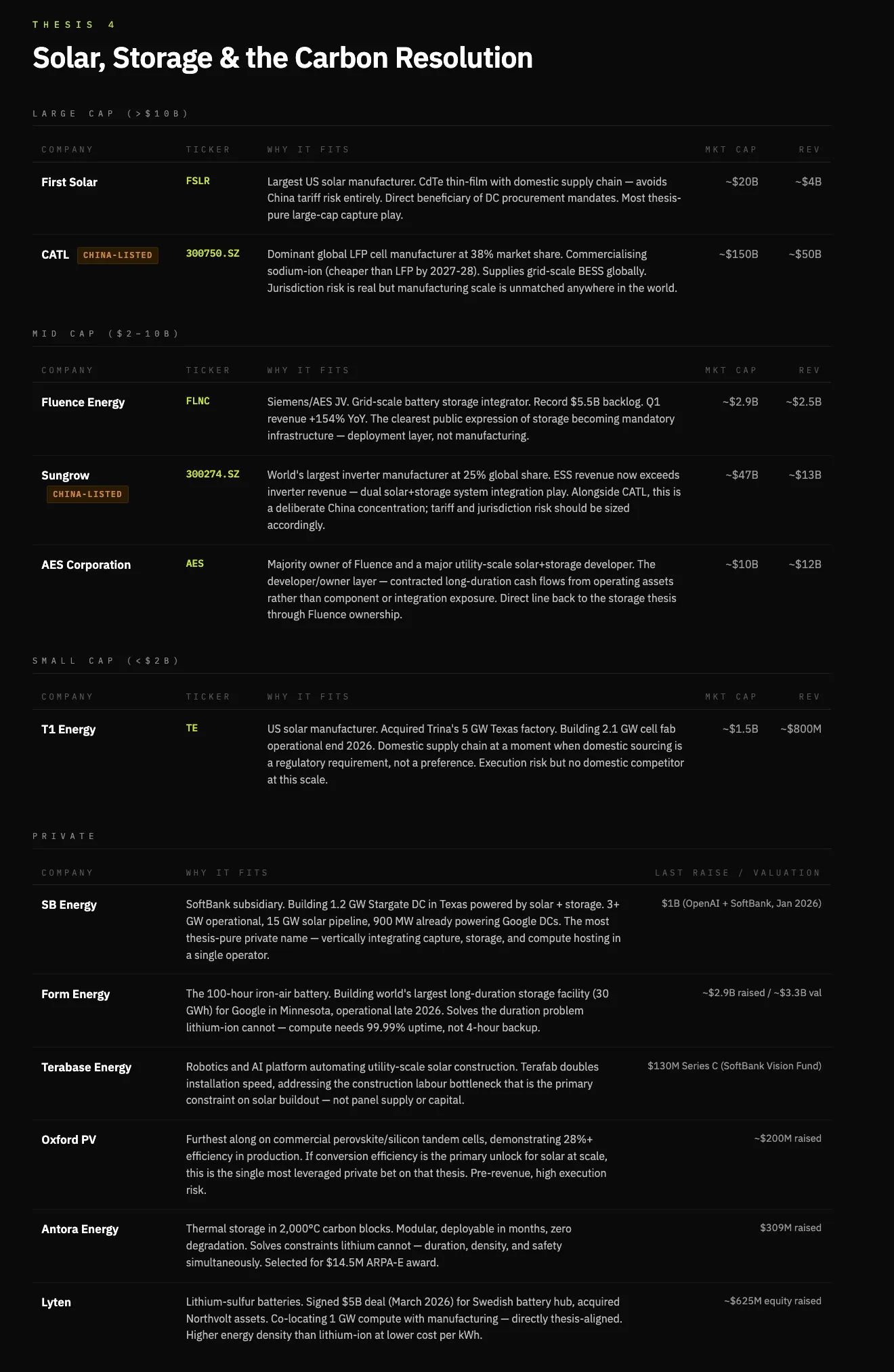

Thesis Four: AI’s Carbon Cost Is Politically Untenable—Forcing Solar-and-Battery-Centric Solutions

AI construction carries an unpriced carbon burden—a political constraint. Data centers raise electricity prices, consume vast volumes of water, and increase local emissions. Evidence is mounting: $18 billion in data center projects have been canceled outright; $46 billion more have been delayed.

Today, roughly 56% of data center electricity comes from fossil fuels. Natural gas solves deployment speed—but is politically fragile. As demand expands, resistance to fossil-fuel expansion rises—forcing near-term adoption of hybrid systems combining natural gas, nuclear, and renewables.

Although natural gas serves as a short-term bridge during the data center boom, long-term energy abundance isn’t solved by fuel extraction—it’s solved by energy capture. The sun delivers orders of magnitude more energy to Earth than humanity consumes. The constraint isn’t availability—it’s conversion, storage, and deployment.

Solar is not an immediate solution to compute energy demand—but it is the ultimate one.

Current commercial solar captures ~22% of incident energy. Each incremental gain in conversion efficiency lowers the cost per megawatt—pushing solar closer to dispatchable-generation parity within BTM systems.
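To make the efficiency point concrete, the sketch below estimates the panel area needed per megawatt of peak output at different conversion efficiencies. It assumes the standard test condition irradiance of 1,000 W/m². The 25% and 30% efficiencies are hypothetical comparison points, not figures from the article, and panel area is only one of several cost drivers.

```python
STC_IRRADIANCE_W_PER_M2 = 1_000  # standard test condition irradiance


def panel_area_for_peak_mw(efficiency: float, peak_mw: float = 1.0) -> float:
    """Panel area (m^2) needed for `peak_mw` of peak output at STC."""
    peak_watts = peak_mw * 1_000_000
    return peak_watts / (efficiency * STC_IRRADIANCE_W_PER_M2)


# 0.22 is the article's figure; 0.25 and 0.30 are hypothetical improvements.
for efficiency in (0.22, 0.25, 0.30):
    area = panel_area_for_peak_mw(efficiency)
    print(f"{efficiency:.0%} efficient: {area:,.0f} m^2 per MW peak")
```

Under these assumptions, moving from 22% to 25% efficiency cuts the panel area per peak megawatt by about 12%, which is one route by which efficiency gains feed into cost per megawatt.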

Battery storage becomes a core component of this architecture—not just for smoothing intermittency, but as a revenue layer. Energy arbitrage and load balancing transform a historical cost center into a profit contributor for BTM operators.
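A minimal sketch of the arbitrage logic follows: charge when power is cheap, discharge when it is expensive, and lose some energy to round-trip inefficiency. Every number in it (battery size, prices, efficiency) is a hypothetical input chosen for illustration, and the model ignores degradation, fees, and partial cycles.

```python
def arbitrage_margin_per_cycle(
    capacity_mwh: float,
    buy_price: float,
    sell_price: float,
    round_trip_efficiency: float,
) -> float:
    """Gross margin (USD) from one full charge/discharge cycle.

    The battery buys `capacity_mwh` at `buy_price` (USD/MWh) and delivers
    only `capacity_mwh * round_trip_efficiency` at `sell_price` (USD/MWh).
    """
    cost = capacity_mwh * buy_price
    revenue = capacity_mwh * round_trip_efficiency * sell_price
    return revenue - cost


def breakeven_sell_price(buy_price: float, round_trip_efficiency: float) -> float:
    """Lowest sell price (USD/MWh) at which a cycle does not lose money."""
    return buy_price / round_trip_efficiency


# Hypothetical inputs: 100 MWh battery, buy at $30/MWh, sell at $90/MWh,
# 85% round-trip efficiency.
margin = arbitrage_margin_per_cycle(100, 30.0, 90.0, 0.85)
floor = breakeven_sell_price(30.0, 0.85)
print(f"Gross margin per cycle: ${margin:,.0f}")
print(f"Break-even sell price:  ${floor:.2f}/MWh")
```

The break-even function shows why the price spread matters more than the price level: a cycle only pays when the sell price exceeds the buy price divided by the round-trip efficiency.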

Within this thesis, winners are vertically integrated players spanning capture, storage, and delivery: specialized solar developers with BTM contracts; battery manufacturers offering both grid-scale and site-level products; and the few operators able to combine owned generation with compute hosting.

Solar is a procurement-and-manufacturing game; batteries are the constraint-and-monetization layer; integration captures profits; cutting-edge technologies remain options—not base cases. Here, Tesla may remain a major winner—but I’ll focus on non-consensus names.

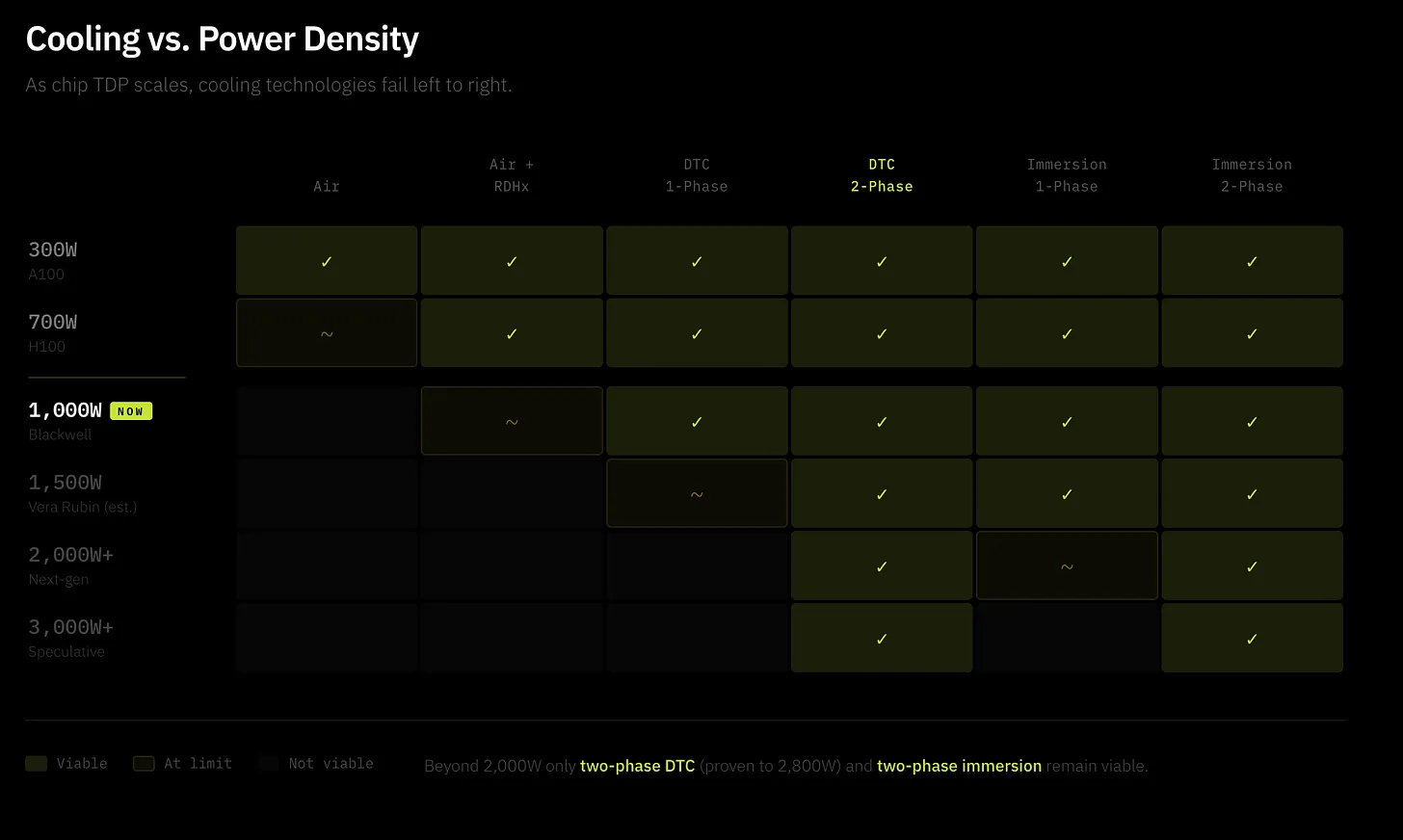

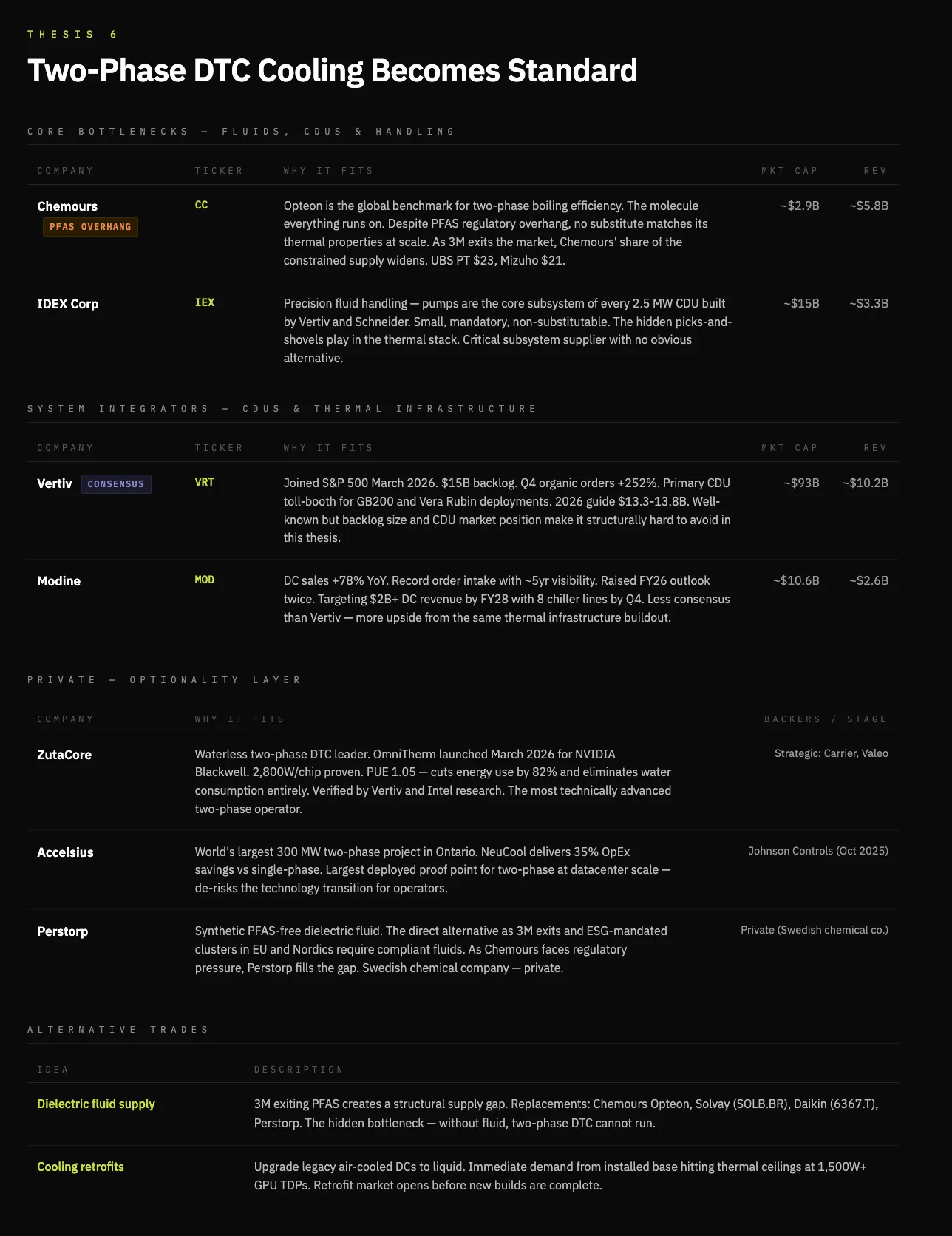

Thesis Five: Cooling Becomes a Primary Constraint—Two-Phase Direct-to-Chip (D2C) Cooling Becomes Mandatory for Frontier Applications

Another consequence is the rise of two-phase direct liquid cooling. This thesis reflects my own judgment: chip power density is rising along a parabolic trajectory, which makes heat removal an increasingly thorny thermodynamic challenge. Traditional air cooling is fundamentally unsustainable for several reasons, chief among them its inability to cool higher-density chips, compounded by environmental concerns around water and electricity consumption.

First, D2C cooling enables higher chip densities and performance by removing thermal management as the limiting factor, a critical scaling issue. Today’s market is dominated by single-phase cooling, which is simpler: chilled water circulates through cold plates to cool chips. But it has known limits. Once per-chip power exceeds 1,500 W, the shift to two-phase cooling becomes inevitable. Two-phase cooling pumps a dielectric fluid, engineered to boil at low temperatures, across the chips; the phase change from liquid to vapor dramatically boosts cooling efficiency.

Two-phase cooling can reduce energy consumption by 20% and water usage by 48%. This performance uplift permits denser chiplet packaging, enhances performance, and ultimately drives higher demand for high-performance cooling.

Zutacore, a leading two-phase D2C company, demonstrated two-phase D2C cooling using dielectric fluid (not water), reducing energy consumption by 82% and eliminating water use entirely, a result validated by Vertiv and Intel research. Zutacore is a notable private operator in this space; dielectric fluid suppliers may also be worth examining.

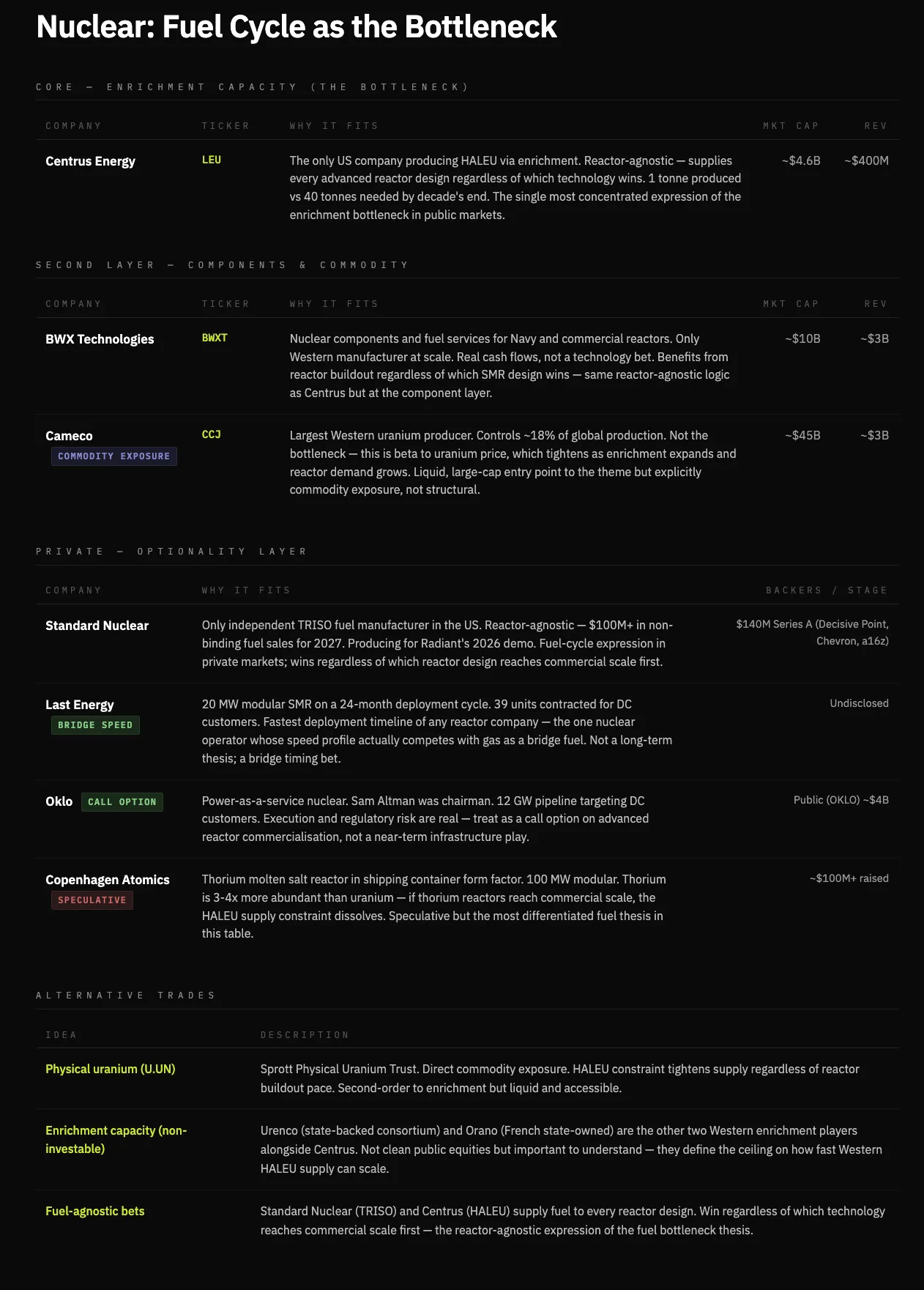

Thesis Six: Nuclear Power Can Serve as a Bridge Toward Energy Abundance and Stable Supply—but Not a Long-Term Answer for Energy Expansion

When drafting this article, I initially viewed nuclear power as a strong candidate to fill the near-term energy gap. The reality is that small modular reactors (SMRs) cost $10,000–$15,000/kW, 5–10x more per kilowatt than comparable natural gas systems, which makes large-scale deployment effectively impossible.
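The cost comparison can be unpacked with simple arithmetic. The sketch below reads the $10,000–$15,000/kW figure as the SMR cost (an interpretation of the sentence above) and backs out the natural gas cost range that the 5–10x multiple would imply. The 100 MW campus size is a hypothetical chosen for illustration.

```python
SMR_COST_PER_KW = (10_000, 15_000)  # USD/kW, read here as the SMR cost range
COST_MULTIPLE = (5, 10)             # SMR cost relative to natural gas


def capex_usd(capacity_mw: float, cost_per_kw: float) -> float:
    """Total capital cost (USD) for a plant of `capacity_mw`."""
    return capacity_mw * 1_000 * cost_per_kw


# Widest gas range consistent with the two quoted ranges:
# cheapest SMR at the largest multiple, priciest SMR at the smallest.
implied_gas_low = SMR_COST_PER_KW[0] / COST_MULTIPLE[1]
implied_gas_high = SMR_COST_PER_KW[1] / COST_MULTIPLE[0]

CAMPUS_MW = 100  # hypothetical data center campus size

smr_capex = [capex_usd(CAMPUS_MW, cost) for cost in SMR_COST_PER_KW]
gas_capex = [capex_usd(CAMPUS_MW, cost) for cost in (implied_gas_low, implied_gas_high)]

print(f"Implied gas cost: ${implied_gas_low:,.0f} to ${implied_gas_high:,.0f} per kW")
print(f"SMR capex, {CAMPUS_MW} MW: ${smr_capex[0] / 1e9:.1f}B to ${smr_capex[1] / 1e9:.1f}B")
print(f"Gas capex, {CAMPUS_MW} MW: ${gas_capex[0] / 1e9:.1f}B to ${gas_capex[1] / 1e9:.1f}B")
```

On this reading, a 100 MW campus would need $1.0B to $1.5B of SMR capacity against $0.1B to $0.3B of gas capacity, before fuel and operating costs.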

Nuclear power solves reliability—not speed or cost—especially for BTM installations. It delivers stable, dispatchable baseload power where reliability is non-negotiable. Thus, nuclear has a role in the energy shortfall—as a bridge, not a core supply source.

Nuclear is constrained by fuel cycles and construction timelines. Today’s advanced reactors require high-assay low-enriched uranium (HALEU), for which there is virtually no commercial-scale supply today. Even once reactors are built, fuel availability becomes the key bottleneck to nuclear expansion speed.

Therefore, nuclear is unlikely to serve as the marginal solution for energy expansion—it arrives too slowly, demands excessive capital, and remains constrained by infrastructure and fuel. By contrast, the fastest-scaling systems—natural gas in the near term, solar and storage over the long term—are the real gap-closing options.

The investable bottleneck isn’t reactors; it’s fuel. As SMR demand grows, HALEU enrichment capacity will become a critical bottleneck. It is a design-agnostic constraint: regardless of which reactor design ultimately prevails, value will accrue here.

Thesis Seven: A New Class of Energy Infrastructure Companies Emerges—Vertical Integrators That Convert Electrons into Compute

The bottleneck for AI infrastructure lies not just in energy—but in the large-scale conversion of energy into usable compute.

In the 1870s, oil, like electricity today, wasn’t scarce; refining and distribution were. Rockefeller built one of history’s largest companies (Standard Oil) by vertically integrating crude oil extraction, refining, and home delivery.

The intelligence revolution follows the same pattern: Electricity is the crude oil. Electricity is abundant—but reliably converting it into compute capacity faces constraints across power delivery, cooling, connectivity, and permitting. Refining electrons is where value resides. Each additional layer of ownership increases reliability, lowers cost, and captures margin—making vertical integration self-reinforcing.

Hyperscalers serve as the distribution layer—and the endpoint of compute consumption. Yet the structural opportunity lies in owning the infrastructure that distributors are forced to buy. This creates a new class of energy infrastructure firms: operators controlling generation, conversion, cooling, and hosting—all in one.

The clearest expressions are vertically integrated operators in private markets—like Crusoe and Lancium—and native compute platforms in public markets—like Iren and Core Scientific—which already possess the hardest-to-replicate foundational asset: energy.

Companies controlling electron flow to the rack are building the deepest moats in the AI economy. Software cannot eat physical infrastructure.
