AI Inference Bills Skyrocket: Shopify and Roblox Warn That Savings from Layoffs Aren’t Enough to Cover Chip Costs

For the first time, the two ends of the AI dividend, labor savings and compute consumption, appear side by side on the same earnings reports, and the latter clearly outweighs the former.

Author: Claude, TechFlow

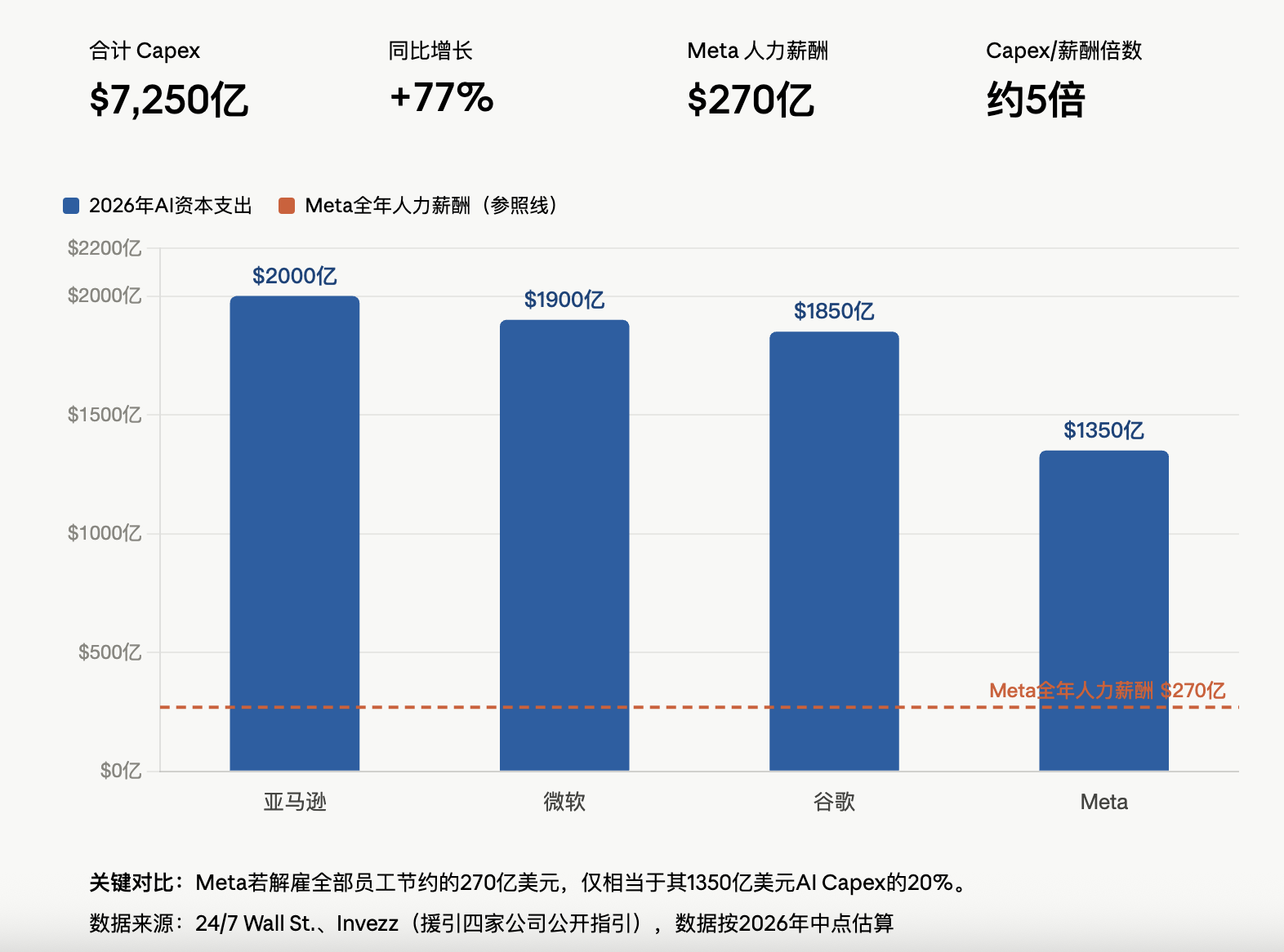

TechFlow Insight: The Q1 2026 earnings season for major tech companies has revealed a new pattern: while AI helps enterprises freeze hiring and cut jobs, its token consumption and GPU depreciation are eroding gross margins at the same time. LLM costs are compressing the gross margin of Shopify’s subscription business, and approximately one-quarter of Roblox’s downward revision to its full-year profit margin guidance is attributable to incremental AI investments and a developer payout adjustment. Amazon, Meta, Microsoft, and Google are collectively projected to spend $725 billion on AI capital expenditures in 2026, a 77% year-on-year increase. For the first time, the two ends of the AI dividend, labor savings and compute consumption, are appearing side by side on the same earnings reports, with the latter clearly outweighing the former.

The Q1 earnings season is applying a corrective patch to the simplistic narrative of “AI replacing labor.”

A cohort of tech companies, while reporting achievements such as hiring freezes and accelerated product iteration, has been forced to explain a thornier issue to investors: surging AI chip depreciation and unpredictable token consumption are eating into the savings generated by layoffs.

Harley Finkelstein, President of Shopify, stated during the May 5, 2026 earnings call that AI now handles over 50% of the company’s code-writing work and has enabled Shopify to deliver more than 300 products and features while keeping headcount flat. Yet on the same call, management acknowledged that gross margin gains in subscription solutions are being partially offset by large language model (LLM) costs, a dynamic it expects to persist.

Shopify: The LLM Cost Black Hole Behind an 80% Gross Margin

Shopify’s Q1 subscription solutions gross margin stood at 80%, unchanged year-on-year—but the cost of sustaining that figure is shifting.

According to Shopify’s 10-Q filing with the SEC, subscription solutions costs rose 20% year-on-year to $148 million in Q1 2026, up from $123 million in the prior-year quarter. Cloud and infrastructure costs—including AI-related usage—increased by $22 million, constituting the primary driver of cost expansion. CFO Jeff Hoffmeister noted on the earnings call that “economies of scale and improvements in support efficiency are being partially offset by rising LLM costs, primarily driven by merchant usage of Sidekick, and this dynamic is expected to continue.”
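The cost figures above can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers cited from the 10-Q; the variable names are illustrative, and the "cloud share" is simply the cited $22 million divided by the total increase, not a figure Shopify reports.

```python
# Figures as cited in the article from Shopify's Q1 2026 10-Q (USD millions).
cost_q1_2026 = 148
cost_q1_2025 = 123
cloud_infra_increase = 22  # cloud and infrastructure, including AI-related usage

# Total year-on-year increase in subscription solutions costs.
total_increase = cost_q1_2026 - cost_q1_2025

# Growth rate relative to the prior-year quarter.
growth_pct = total_increase / cost_q1_2025 * 100

# Share of the increase explained by cloud and infrastructure (derived, not reported).
cloud_share_pct = cloud_infra_increase / total_increase * 100

print(f"Total increase: ${total_increase}M ({growth_pct:.1f}% YoY)")
print(f"Cloud/infrastructure share of the increase: {cloud_share_pct:.0f}%")
```

On these inputs the increase is $25 million, about 20.3% year-on-year, with cloud and infrastructure accounting for roughly 88% of it, which is consistent with the article's description of it as the primary driver.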

Sidekick is Shopify’s AI assistant embedded within its platform; weekly active stores using Sidekick surged 385% quarter-on-quarter. Merchants built over 12,000 custom apps using Sidekick this quarter—an increase of over 200% sequentially—and nearly half of all Shopify Flows were AI-generated. AI-driven store traffic grew eightfold, and orders generated via AI search rose nearly thirteenfold.

But this explosion in usage translates into rapid growth in AI inference calls. Every merchant interaction with Sidekick and every proactive suggestion generated by the Pulse feature incurs a token bill paid upstream to model providers.

Shopify separates “internal AI” from “external AI” when presenting the numbers to investors. Internal AI, used for coding and for holding down personnel costs, is a straightforward cost win. External AI delivered to merchants is a strategic choice that ties infrastructure costs to the depth of merchant usage. Finkelstein summarized this logic on the earnings call as “AI is a structural advantage, not just a cost.”

Roblox: One-Quarter of Its Profit Margin Cut Comes Directly from AI

Naveen Chopra, Roblox’s CFO, explicitly disclosed on the April 30, 2026 Q1 earnings call that roughly one-quarter of the company’s downward revision to its full-year profit margin guidance stems from incremental AI investments and adjustments to DevEx (developer revenue share) for U.S. users aged 18 and older.

Roblox currently runs over 400 AI models across its own and cloud-based GPUs, processing 1.5 million inference calls per second—covering discovery recommendations, communication safety, marketplace recommendations, and 3D generation.

Management is attempting to isolate inference costs through business model adjustments. David Baszucki, Roblox co-founder and CEO, stated on the earnings call that the company’s upcoming “Roblox Reality” initiative—a technology enabling real-time, photorealistic 2K video rendering at 60Hz—will not be offered for free. “This will consume cloud computing resources. We’ll implement some form of subscription or pay-per-use mechanism, allowing us to offset the cost of real-time inference,” Baszucki explained.

Chopra added that Roblox’s 2026 capital expenditure guidance remains unchanged, with most inference demand met by GPUs deployed in its own data centers, while some training tasks continue to rely on the cloud. Roblox previously disclosed that migrating certain AI inference workloads from third-party clouds to its own data centers, completed by the end of 2025, delivered a tenfold efficiency improvement in specific workloads such as safety moderation and content discovery.

Nonetheless, several pressures combined to trigger a market repricing of Roblox’s full-year profit margin outlook: the AI investment described above, lower bookings expectations that spread fixed costs over a smaller base, and an increase in the DevEx rate for the 18+ segment to 37.8%.

Industry Ledger: $725 Billion in Capital Expenditures vs. $27 Billion in Salary Savings

The micro-level cases of Shopify and Roblox sit within a broader macro-level structural imbalance.

Per data cited by 24/7 Wall St., Amazon, Meta, Microsoft, and Google are collectively projected to spend $725 billion on AI capital expenditures in 2026, a 77% year-on-year increase. Meta’s full-year capex guidance ranges between $125 billion and $145 billion, implying roughly $370 million a day on data center construction at the midpoint of the range; Microsoft’s calendar-year 2026 capex stands at $190 billion, and Amazon has committed $200 billion.
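The capex figures above lend themselves to a quick sanity check. The following is a minimal sketch using only the cited numbers; the implied 2025 base and the residual attributed to Google are derived values, not reported ones, and the residual is rough because the cited figures may not share a common fiscal-year definition.

```python
DAYS_PER_YEAR = 365

# Figures as cited in the article (USD billions).
big4_capex_2026 = 725.0
yoy_growth = 0.77
meta_low, meta_high = 125.0, 145.0
microsoft = 190.0
amazon = 200.0


def daily_spend_musd(annual_busd: float) -> float:
    """Convert annual spending in USD billions to average daily USD millions."""
    return annual_busd * 1000 / DAYS_PER_YEAR


# Prior-year base implied by a 77% increase (derived, not reported).
implied_2025_base = big4_capex_2026 / (1 + yoy_growth)

# Meta's average daily spend across its guidance range.
meta_daily_low = daily_spend_musd(meta_low)
meta_daily_mid = daily_spend_musd((meta_low + meta_high) / 2)
meta_daily_high = daily_spend_musd(meta_high)

# Whatever is left of the total after the three cited companies (rough residual).
residual_low = big4_capex_2026 - microsoft - amazon - meta_high
residual_high = big4_capex_2026 - microsoft - amazon - meta_low

print(f"Implied 2025 base: ${implied_2025_base:.1f}B")
print(f"Meta daily spend: ${meta_daily_low:.1f}M to ${meta_daily_high:.1f}M "
      f"(midpoint ${meta_daily_mid:.1f}M)")
print(f"Residual for Google: ${residual_low:.0f}B to ${residual_high:.0f}B")
```

On these inputs, Meta's guidance works out to about $342 million to $397 million per day, with the midpoint near $370 million, and the 77% growth rate implies a 2025 base of roughly $410 billion.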

This figure dwarfs personnel expenses. Meta’s total compensation, including salaries, benefits, and equity incentives, amounts to approximately $27 billion. Even if Meta laid off its entire workforce tomorrow, the resulting savings would cover only about one-fifth of its 2026 infrastructure spending (roughly 19% to 22% of the guided capex range).

Dan Ives, analyst at Wedbush Securities, estimated in a research note dated April 25 that Meta’s upcoming 8,000-person layoff would free up roughly $2.4 billion in annual operating expenses, offsetting only about 12% of the incremental depreciation drag expected in 2026. In other words, each dollar of labor-cost savings is set against roughly eight dollars of incremental depreciation.
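The ratios in the two paragraphs above follow directly from the cited figures. The sketch below reproduces them; the implied depreciation total and the per-role savings are back-of-the-envelope derivations from the 12% offset and the 8,000 headcount, not numbers stated in the Wedbush note.

```python
# Figures as cited in the article (USD billions unless noted).
meta_total_comp = 27.0
meta_capex_low, meta_capex_high = 125.0, 145.0
layoff_savings = 2.4
layoff_headcount = 8_000
offset_share = 0.12  # share of incremental depreciation the savings offset

# Share of 2026 capex that Meta's entire compensation bill would cover.
comp_cover_min = meta_total_comp / meta_capex_high  # against the top of the range
comp_cover_max = meta_total_comp / meta_capex_low   # against the bottom of the range

# Incremental depreciation implied by "savings offset about 12%" (derived).
implied_depreciation = layoff_savings / offset_share

# Dollars of incremental depreciation per dollar of labor savings.
depreciation_per_saved_dollar = 1 / offset_share

# Average annual savings per eliminated role, in USD (derived).
savings_per_role_usd = layoff_savings * 1e9 / layoff_headcount

print(f"Compensation covers {comp_cover_min:.1%} to {comp_cover_max:.1%} of capex")
print(f"Implied incremental depreciation: ${implied_depreciation:.1f}B")
print(f"Depreciation per dollar saved: ${depreciation_per_saved_dollar:.1f}")
print(f"Savings per role: ${savings_per_role_usd:,.0f}")
```

On these inputs, total compensation covers about 18.6% to 21.6% of guided capex, the 12% offset implies roughly $20 billion of incremental depreciation, and each dollar saved faces about $8.30 of added depreciation.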

Susan Li, Meta’s CFO, characterized the company’s workforce reduction during the Q4 2025 earnings call as “building a leaner operating model to help offset our ongoing large-scale investments.” This statement explicitly frames layoffs as a financial instrument to absorb AI capex—not as a byproduct of productivity gains.

Model Providers’ Victory, Application Layer’s Dilemma

The biggest beneficiaries of this ledger battle are foundational model and compute providers. Microsoft Cloud’s gross margin remains stable at 69% despite pressure from AI infrastructure expansion; OpenAI’s gross margin is estimated externally at ~50%; Anthropic’s is ~60%. NVIDIA continues to report a ~70% gross margin for FY2026.

Meanwhile, application-layer companies—particularly SaaS players that both consume AI and package AI capabilities into subscription offerings—are confronting a new financial structure: revenue correlates strongly with AI usage intensity, yet cost curves are dictated by upstream model providers—and every model upgrade may introduce new token consumption patterns.

Tanay Jaipuria, in his analysis of AI gross margins, points out that although inference costs for individual models decline by 80–90% a year, pricing for frontier models remains stable or even rises. If application-layer firms insist on invoking the strongest available model for every request, their cost of goods sold (COGS) is effectively set by model providers’ price lists.
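To make the COGS point concrete, here is a minimal sketch of per-request inference cost under two policies: sending every request to a frontier model versus routing only a fraction of requests to it. Everything in it is an assumption for illustration: the prices, token counts, the 20% routing share, and the `ModelPrice`, `request_cost`, and `blended_cost` names are hypothetical and do not reflect any vendor's actual pricing or any company's actual traffic mix.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Hypothetical price per million tokens in USD (not real vendor pricing)."""
    input_per_m: float
    output_per_m: float


def request_cost(price: ModelPrice, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the given token counts."""
    total = input_tokens * price.input_per_m + output_tokens * price.output_per_m
    return total / 1_000_000


def blended_cost(frontier: ModelPrice, small: ModelPrice, frontier_share: float,
                 input_tokens: int, output_tokens: int) -> float:
    """Average cost per request when frontier_share of traffic uses the frontier model."""
    if not 0.0 <= frontier_share <= 1.0:
        raise ValueError("frontier_share must be between 0 and 1")
    frontier_cost = request_cost(frontier, input_tokens, output_tokens)
    small_cost = request_cost(small, input_tokens, output_tokens)
    return frontier_share * frontier_cost + (1 - frontier_share) * small_cost


# Hypothetical price points and request shape, chosen only for illustration.
FRONTIER = ModelPrice(input_per_m=10.0, output_per_m=30.0)
SMALL = ModelPrice(input_per_m=0.5, output_per_m=1.5)

always_frontier = blended_cost(FRONTIER, SMALL, 1.0, 2_000, 500)
routed = blended_cost(FRONTIER, SMALL, 0.2, 2_000, 500)

print(f"Always frontier: ${always_frontier:.4f} per request")
print(f"20% frontier:    ${routed:.4f} per request")
```

Under these made-up inputs the always-frontier policy costs $0.0350 per request against $0.0084 when routed. The absolute numbers mean nothing; the point is that the first policy ties COGS one-for-one to the frontier price, while the second makes it partly a function of the application's own routing decisions.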

Shopify’s response is to position its AI products as strategic entry points that bind merchant traffic and engagement to the platform, so that rising inference costs double as a proxy for “platform embedding depth.” Roblox’s approach is to decouple premium AI experiences from its free tier and have users pay directly for inference costs. Both paths reflect the same conclusion: the math of relying solely on layoffs to cover AI compute bills simply does not add up.
