Zhipu, Moonshot, and Xiaomi Join Same Roundtable: Large Models Truly Begin “Getting Work Done,” but Computing Power Remains the Biggest Bottleneck


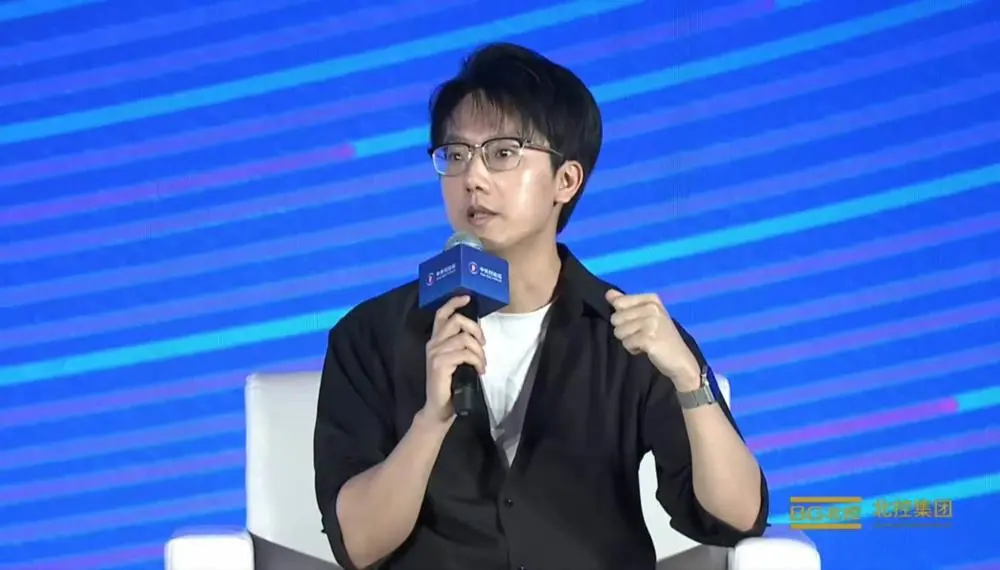

Hosted by Yang Zhilin, with Luo Fuli and Zhang Peng among the speakers, this “Lobster Dinner” explored the future of AI in depth.

Author: Chen Jun-da

Zhidongxi, March 27: Today at the Zhongguancun Forum, Zhipu CEO Zhang Peng, Moonshot AI CEO Yang Zhilin (moderator), Xiaomi MiMo large model lead Luo Fuli, InfraX CEO Xia Lixue, and Assistant Professor Huang Chao of the University of Hong Kong made a rare joint appearance on stage for an in-depth dialogue on the future of open-source large models and AI agents.

The discussion kicked off with OpenClaw—the hottest topic today. All panelists agreed that agents are what finally enable large models to “get real work done.” While OpenClaw expands the capability boundaries of large models, it also imposes higher demands on them. Zhipu is currently researching long-horizon planning and self-debugging capabilities; Luo’s team focuses instead on architectural innovation to reduce costs and accelerate inference—even enabling model self-evolution.

Infrastructure must also keep pace with agents. Xia believes current compute systems and software architectures are still designed for humans—not for agents—meaning human operational capacity effectively constrains agent performance. Thus, we need to build Agentic Infra.

Several panelists see open source as one of the core drivers behind large model and agent development. Assistant Professor Huang Chao of the University of Hong Kong stressed that a thriving open-source ecosystem is key to moving agents from “playthings” to genuine “co-workers.” Only through community-driven co-creation can software, data, and technology fully transition to agent-native paradigms, ultimately forming a sustainable global AI ecosystem.

In addition, panelists discussed topics including large model price hikes, explosive token consumption growth, and the top keywords for AI over the next 12 months. Below are the key takeaways from this roundtable:

1. Zhang Peng: As models grow larger, inference costs rise accordingly. Zhipu’s recent pricing adjustments reflect a return to normal commercial value—prolonged low-price competition harms industry development.

2. Zhang Peng: The surge in new technologies like agents has increased token usage tenfold—but actual demand may have grown 100-fold, leaving massive unmet needs. Compute remains the critical bottleneck over the next 12 months.

3. Luo Fuli: From the perspective of foundational model vendors, OpenClaw guarantees the floor—and raises the ceiling—for base models. Domestic open-source models + OpenClaw now achieve task-completion performance nearly on par with Claude.

4. Luo Fuli: DeepSeek has instilled courage and confidence across China’s large model industry. Certain architectural innovations—once perceived as “efficiency compromises”—have triggered genuine transformation, enabling the industry to extract maximum intelligence from fixed compute budgets.

5. Luo Fuli: Over the next year, the most important milestone in the AGI journey will be “self-evolution.” Self-evolution empowers large models to explore like top-tier scientists—the only path to truly “creating something new.” Xiaomi has already leveraged Claude Code + top-tier models to boost research efficiency tenfold.

6. Xia Lixue: When the AGI era arrives, infrastructure itself must become agent-native—autonomously managing the entire stack, iterating based on AI customer needs, and achieving self-evolution and self-updating.

7. Xia Lixue: OpenClaw has ignited token consumption. Today’s token burn rate feels like early 3G-era mobile data plans—where users had just 100 MB per month.

8. Huang Chao: In the future, much software won’t be human-facing at all. Software, data, and technology will all shift to agent-native forms. Humans may only interact with GUIs that bring them joy.

Below is the full transcript of this roundtable:

01. OpenClaw Is the “Scaffolding”; Token Consumption Remains in the 3G Era

Yang Zhilin: It’s a great honor to host such distinguished guests today, representing the model layer, compute layer, and agent layer. Our two main themes today are open source and agents.

Let’s begin with the hottest topic right now: OpenClaw. What do you find most imaginative or memorable about using OpenClaw—or similar tools—in daily life? And from a technical standpoint, how do you view OpenClaw’s evolution and that of related agents?

Zhang Peng: I started experimenting with OpenClaw very early, back when it was still called Clawdbot. Being a programmer myself, I enjoyed tinkering with it hands-on and gained firsthand experience.

I think OpenClaw’s biggest breakthrough—or novelty—is that it’s no longer exclusive to programmers or tech enthusiasts. Ordinary users can now easily harness state-of-the-art model capabilities—especially in programming and agent functionality.

So, in my conversations with others, I prefer calling OpenClaw “scaffolding.” It provides possibility—a robust, convenient, yet highly flexible framework built atop foundation models. Users can leverage many novel underlying model features according to their own needs.

Previously, ideas were often constrained by lack of coding skills or other technical know-how. Now, with OpenClaw, even complex tasks can be completed via simple, natural interaction.

OpenClaw delivered a powerful impact on me—reshaping how I understand this field altogether.

Xia Lixue: Honestly, I wasn’t comfortable using OpenClaw at first—I’m used to conversational interaction with large models, and initially found OpenClaw painfully slow.

But then I realized a crucial distinction: unlike a chatbot, OpenClaw is essentially a “person” capable of completing large-scale tasks. Once I began giving it more complex tasks, I discovered it performed remarkably well.

This realization deeply moved me. Models evolved from token-by-token chatting to becoming agents—like a “lobster”—capable of executing real tasks. This dramatically expands AI’s imaginative horizon.

Simultaneously, system-level requirements skyrocketed—explaining why OpenClaw felt sluggish at first. As an infrastructure provider, I see OpenClaw generating both opportunities and challenges across the broader AI system and ecosystem.

All existing resources fall short of supporting this rapidly accelerating era. For example, our company has seen token usage double every two weeks since late January—up roughly tenfold so far.

The last time I saw growth this fast was during the early days of 3G mobile data. I feel today’s token consumption resembles that era—when users got just 100 MB per month.

Under these conditions, all our resources require better optimization and integration—so everyone—not just in AI, but across society—can truly harness OpenClaw’s AI capabilities.

As an infrastructure player, I’m deeply excited and profoundly inspired by this era. I also believe there remain significant untapped optimization opportunities we must continue exploring and testing.
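As a rough check on the growth figures Xia cites, the sketch below assumes clean exponential growth with a fixed two-week doubling period (an idealization; real usage curves are noisier). The function names are illustrative only. Under that assumption, a tenfold increase takes a little over three doublings, or about 6.6 weeks, and a hundredfold increase takes about 13.3 weeks.

```python
import math


def growth_factor(weeks: float, doubling_period_weeks: float = 2.0) -> float:
    """Multiplicative growth after `weeks` if usage doubles every period."""
    return 2.0 ** (weeks / doubling_period_weeks)


def weeks_to_reach(factor: float, doubling_period_weeks: float = 2.0) -> float:
    """Weeks needed to grow by `factor` at the given doubling period."""
    return doubling_period_weeks * math.log2(factor)


if __name__ == "__main__":
    for target in (10, 100):
        print(f"{target}x takes about {weeks_to_reach(target):.1f} weeks")
```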

02. OpenClaw Elevates Domestic Model Ceiling; Interaction Paradigm Shift Is Highly Significant

Luo Fuli: Personally, I regard OpenClaw as a revolutionary and disruptive milestone in agent framework evolution.

Among those doing deep coding around me, Claude Code remains the top choice. Yet users of OpenClaw will notice its agent framework design leads Claude Code in several aspects—and recent Claude Code updates increasingly mirror OpenClaw’s approach.

My own experience with OpenClaw is that it extends my imagination anytime, anywhere. Claude Code initially extended creativity only on my desktop—but OpenClaw does so everywhere.

OpenClaw delivers two core values. First, its open-source nature enables deep community participation, driving serious engagement and continuous advancement of the framework—an essential prerequisite.

Second, a major value of agent frameworks like OpenClaw lies in raising the ceiling for domestic models, which are approaching closed-source model quality but do not yet fully match it.

In most scenarios, domestic open-source models + OpenClaw now achieve task-completion accuracy nearly on par with Claude’s latest models. At the same time, OpenClaw ensures a solid floor—via its Harness system and Skills architecture—to guarantee task integrity and accuracy.

In summary, from the perspective of foundational model developers, OpenClaw guarantees the floor and elevates the ceiling.

Additionally, another key contribution to the community is igniting awareness that beyond large models, the agent layer holds enormous imaginative potential.

I’ve recently observed growing participation in the AGI transformation, not just from researchers but from a broader audience working with more powerful agent frameworks, that is, harnesses and scaffolds. These people are effectively handing parts of their own work to such tools, freeing up time for more imaginative pursuits.

Huang Chao: First, regarding interaction paradigms—OpenClaw’s popularity likely stems partly from delivering a more “human-like” experience. We’ve been building agents for one or two years, but earlier ones like Cursor and Claude Code felt more like “tools.” OpenClaw, embedded directly into instant messaging apps, evokes the feeling of having a personal JARVIS—marking a breakthrough in interaction design.

Second, OpenClaw reaffirms the viability of simple yet efficient frameworks like Agent Loop. It also prompts us to reconsider whether we need an all-powerful “super-agent,” or a better “personal assistant”—a lightweight OS or scaffolding.

OpenClaw’s insight is that such a “small system”—or “lobster OS”—and its ecosystem foster a playful, exploratory mindset, unlocking all tools within the ecosystem.

With emerging capabilities like Skills and Harness, more people can design applications for OpenClaw-like systems—empowering diverse industries. This naturally integrates tightly with the open-source ecosystem. To me, these are our two biggest insights.
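The “simple yet efficient” agent loop Huang mentions can be illustrated with a minimal sketch. This is not OpenClaw’s implementation: the `Action` tuple format, `run_agent_loop`, and the scripted stand-in model are all hypothetical, and a real system would call a language model where `scripted_model` appears. The loop asks the model for the next action, runs the named tool, appends the observation to the history, and repeats until the model returns a final answer or the step budget runs out.

```python
from typing import Callable, Dict, List, Tuple

# An action is ("tool", tool_name, argument) or ("final", answer, "").
Action = Tuple[str, str, str]
Model = Callable[[List[str]], Action]
Tool = Callable[[str], str]


def run_agent_loop(model: Model, tools: Dict[str, Tool],
                   task: str, max_steps: int = 10) -> str:
    """Ask the model for an action, run the tool, feed back the observation."""
    history = [f"task: {task}"]
    for _ in range(max_steps):
        kind, name, arg = model(history)
        if kind == "final":
            return name  # for a final action, the second field is the answer
        if name in tools:
            observation = tools[name](arg)
        else:
            observation = f"error: unknown tool {name!r}"
        history.append(f"{name}({arg}) -> {observation}")
    return "stopped: step budget exhausted"


def scripted_model(history: List[str]) -> Action:
    """Stand-in for a real model: call `add` once, then report the result."""
    if len(history) == 1:
        return ("tool", "add", "2,3")
    return ("final", history[-1].split("-> ")[-1], "")


def add_tool(arg: str) -> str:
    """Toy tool: add two comma-separated integers."""
    a, b = (int(x) for x in arg.split(","))
    return str(a + b)


if __name__ == "__main__":
    print(run_agent_loop(scripted_model, {"add": add_tool}, "add 2 and 3"))
```

The step budget is the only safeguard here; in this sketch, Skills would correspond to richer entries in `tools`.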

03. GLM New Model Built Specifically for “Getting Work Done”; Price Hikes Reflect Return to Normal Commercial Value

Yang Zhilin: Let’s ask Zhang Peng. Zhipu recently launched the new GLM-5 Turbo model, which we understand significantly enhances agent capabilities. Could you explain how this model differs from others? We’ve also noticed your pricing adjustment. What market signal does that send?

Zhang Peng: Excellent question. We indeed rushed out an update recently—it’s part of our roadmap, just accelerated.

The primary goal is shifting from “simple conversation” to “getting real work done”—a trend many have recently experienced: large models aren’t just chatty anymore—they genuinely help users get things done.

But “getting work done” implies demanding underlying capabilities: long-horizon task planning, iterative trial-and-error, context compression, debugging, and multimodal processing. Requirements differ substantially from traditional dialogue-oriented general-purpose models. GLM-5 Turbo specifically strengthens these areas—particularly enabling sustained, uninterrupted operation over extended periods, like running continuously for 72 hours. We’ve invested heavily here.

Token consumption is another major concern. Deploying intelligent models for complex tasks incurs massive token usage. Average users may not notice—but billing statements reveal rapid cost escalation. So we optimized token efficiency for complex tasks. Architecturally, it remains a multitask-cooperative general-purpose model—just with targeted capability enhancements.

Pricing adjustments are straightforward to explain. As noted, it’s no longer simple Q&A—the reasoning chains are extremely long. Many tasks involve coding, interfacing with infrastructure, constant debugging, and error correction—driving huge consumption. Completing one complex task may require ten to one hundred times more tokens than answering a simple question.

Thus, prices must rise. Models are growing larger, and inference costs are rising accordingly. We’re returning to normal commercial value—because prolonged low-price competition harms industry health. This supports a virtuous commercial cycle, enabling continued model optimization and better service delivery.

04. Building a More Efficient Token Factory; Infrastructure Itself Must Be Agent-Native

Yang Zhilin: As more open-source models form ecosystems, they deliver greater value across diverse compute platforms. And as token usage explodes, large models are moving from the training era into the inference era. Lixue, from an infrastructure perspective, what does this inference era mean for InfraX?

Xia Lixue: We’re an infrastructure provider born in the AI era, currently supporting Zhipu, Kimi, MiMo, and others—helping them operate token factories more efficiently. We also collaborate extensively with universities and research institutes.

So we constantly ask ourselves: What infrastructure does the AGI era require—and how do we progressively realize and evolve it? We’re fully prepared for short-, mid-, and long-term challenges.

The most immediate issue is the explosive token volume driven by OpenClaw, which demands much higher system efficiency. Pricing adjustments are one response to this pressure.

We consistently pursue end-to-end hardware-software integration. For example, we support virtually all types of compute chips—unifying over a dozen domestic chip types and dozens of compute clusters. This addresses AI system compute scarcity: when resources are tight, maximize utilization of all available resources—and ensure each compute unit delivers peak conversion efficiency.

So our current focus is building a more efficient token factory. We’ve implemented numerous optimizations—including optimal alignment between models and hardware memory, and deeper synergies between cutting-edge model and hardware architectures. But solving today’s efficiency challenge merely creates a standardized token factory.

For the agent era, this isn’t enough. Agents resemble humans—they accept tasks. I firmly believe most cloud-era infrastructure is designed for programs—or human engineers—not for AI. This means our infrastructure exposes human-facing interfaces, with agents layered atop—effectively constraining agent potential with human operational limits.

For example, agents can reason and initiate tasks in milliseconds—but underlying systems like Kubernetes weren’t built for this, as humans typically initiate tasks on minute-level timescales. We need further capabilities—what we call “Agentic Infra”: a “smart token factory.” That’s what InfraX is building.

Looking farther ahead, in the true AGI era, infrastructure itself must be agent-native. Our factory must self-evolve and self-update—forming autonomous organizations. It would have a CEO—an agent, possibly OpenClaw—that manages the entire infrastructure, autonomously identifies needs, and iterates infrastructure based on AI customer demands. This enables tighter AI-to-AI coupling. We’re already exploring capabilities like improved agent-to-agent communication and cache-to-cache operations.

So we continually consider: infrastructure and AI development shouldn’t exist in isolation—where I simply fulfill requests. Instead, they must generate rich synergies: true hardware-software co-design, algorithm-infrastructure co-evolution—this is InfraX’s mission. Thank you.

05. Innovations “Compromising for Efficiency” Still Matter; DeepSeek Empowers Domestic Teams with Courage and Confidence

Yang Zhilin: Next, let’s ask Fuli. Xiaomi has recently contributed significantly to the community by releasing new models and open-sourcing underlying technologies. What unique advantages does Xiaomi have in large model development?

Luo Fuli: Let’s set aside Xiaomi’s specific advantages—I’d rather discuss broader strengths of Chinese large model teams. This topic holds wider relevance.

Roughly two years ago, Chinese foundational model teams achieved strong breakthroughs—overcoming constraints of limited compute, especially NVLink interconnect bandwidth limitations, through seemingly “efficiency-compromising” architectural innovations: DeepSeek V2/V3 series, MoE, MLA, etc.

Yet these innovations sparked transformative change: maximizing intelligence output from fixed compute budgets. DeepSeek gave all domestic foundational model teams courage and confidence. Though today’s domestic chips—especially inference and training chips—no longer face such constraints, these earlier limitations drove exploration of more efficient training and lower-cost inference architectures.

Recent examples include Hybrid Sparse and Linear Attention architectures—DeepSeek’s NSA, Kimi’s KSA, and Xiaomi’s HySparse for next-gen structures—all distinct from MoE-era designs and purpose-built for the agent era.

Why are structural innovations so vital? Try OpenClaw yourself—you’ll find it grows smarter and more capable the more you use it. A key enabler is inference context length. Long-context has been widely discussed—but which models truly deliver strong performance, high speed, and low inference cost at million- or ten-million-token contexts?

Many models can technically handle 1M or 10M contexts—but inference costs are prohibitive, and speeds too slow. Only by lowering costs and speeding up inference can we assign high-productivity-value tasks to models—and execute higher-complexity tasks, even enabling model self-iteration.

Model self-iteration means evolving within complex environments using ultra-long contexts: evolving the agent framework itself, or even the model parameters themselves, because accumulated context itself functions as a form of parameter evolution. So designing long-context architectures and achieving efficient long-context inference is a comprehensive competitive arena.

Beyond pre-training-stage long-context-efficient architecture—which we began exploring a year ago—achieving stable, high-ceiling performance on long-horizon tasks is the innovation paradigm we’re iterating on in post-training.

We’re exploring how to construct more effective learning algorithms, collect real-world text with genuine long-range dependencies (at 1M, 10M, or 100M contexts), and integrate trajectory data generated in complex environments. This is our current post-training focus.

Looking further ahead, large models are progressing rapidly and agent frameworks are accelerating. As Lixue noted, inference demand has surged nearly tenfold recently. Will token usage grow 100-fold this year?

That introduces another competitive dimension: compute, meaning inference chips, and even energy. If we think this through together, I expect to learn even more from all of you. Thank you.

06. Agents Have Three Key Modules; Multi-Agent Explosion Will Bring Impact

Yang Zhilin: Truly insightful. Next, Chao, you’ve developed influential agent projects like Nanobot that enjoy strong community support. From the perspective of agent harnesses or the application layer, which technical directions do you consider most important and worth watching?

Huang Chao: Abstracting agent technology reveals three key modules: Planning, Memory, and Tool Use.

First, Planning. Current challenges lie in long-horizon or highly complex contexts—e.g., 500+ steps—where many models struggle. Fundamentally, models may lack implicit knowledge, especially in complex vertical domains. Future directions may involve hard-coding such domain-specific knowledge into models.

Skill and Harness frameworks partially mitigate planning errors by providing high-quality skills—essentially guiding models through difficult tasks.

Second, Memory. Memory faces persistent issues: inaccurate information compression and unreliable retrieval—especially under long-horizon tasks and complex scenarios, where memory pressure spikes. Currently, projects like OpenClaw use simple file-system-based Markdown memory, shared via files. Future memory may adopt hierarchical design and greater universality.

Frankly, current memory mechanisms struggle with universality—coding, deep research, and multimodal scenarios involve vastly different data modalities. Achieving efficient, accurate retrieval and indexing across them remains an eternal tradeoff.

Also, OpenClaw has drastically lowered agent creation barriers—so soon there may be more than one “lobster.” I’ve seen Kimi launch Agent Swarm mechanisms—soon each person may have “a swarm of lobsters.”

Compared to a single lobster, a swarm exponentially increases context load—placing immense pressure on memory. No robust mechanism yet exists to manage swarm-generated context—especially for complex coding or scientific discovery, stressing both models and agent architectures.

Third, Tool Use, i.e., Skills. Skills today face the same problems MCP did at first: inconsistent quality and security risks. Many Skills exist, but few are high-quality, and low-quality Skills degrade task accuracy. Malicious injection is another concern. So for Tool Use, community collaboration is essential to improve the Skill ecosystem, even to the point of Skills self-evolving new Skills during execution.

Overall, Planning, Memory, and Tool Use represent current agent pain points—and future directions.
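The file-system-based Markdown memory described above can be sketched roughly as follows. This is an illustrative toy, not OpenClaw’s actual memory format: the `MarkdownMemory` class, the `## topic` heading convention, and the keyword-match retrieval are all assumptions. It also makes the weaknesses Huang names visible: retrieval is a plain substring match, and nothing is compressed or organized hierarchically.

```python
import tempfile
from pathlib import Path
from typing import List


class MarkdownMemory:
    """Append-only notes in one Markdown file, one `## topic` section per note."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.path.touch(exist_ok=True)

    def remember(self, topic: str, note: str) -> None:
        """Append a note under a level-2 heading."""
        with self.path.open("a", encoding="utf-8") as f:
            f.write(f"## {topic}\n{note}\n\n")

    def recall(self, keyword: str) -> List[str]:
        """Naive retrieval: return sections whose text contains the keyword."""
        text = self.path.read_text(encoding="utf-8")
        sections = [s.strip() for s in text.split("## ") if s.strip()]
        return [s for s in sections if keyword.lower() in s.lower()]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        memory = MarkdownMemory(Path(tmp) / "MEMORY.md")
        memory.remember("build", "use make -j8")
        memory.remember("deploy", "staging first")
        print(memory.recall("make"))
```

Because the store is a plain file, several agents could share it by path, which is also why a swarm of agents writing to it would grow the context quickly.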

07. Top Keywords for Next 12 Months: Ecosystem, Sustainable Tokens, Self-Evolution, and Compute

Yang Zhilin: Both speakers are addressing a shared issue from different angles: increasing task complexity drives explosive context growth. On the model side, native context length can be extended; on the agent harness side, mechanisms like Planning, Memory, and Multi-Agent can support more complex tasks within fixed model capabilities. I believe these two directions will generate stronger synergies, further boosting task completion capability.

Finally, an open-ended outlook. Please each pick one word describing the trend and your expectations for large model development over the next 12 months. Let’s begin with Chao.

Huang Chao: Twelve months seems incredibly distant in AI—nobody knows where we’ll be then.

Yang Zhilin: The question originally said five years. I changed it.

Huang Chao: Right, haha. My word is “ecosystem.” OpenClaw has energized the community, but for agents to become true “co-workers”—not just novelties—requires ecosystem-wide effort. Especially open source: once technical exploration and model tech are open-sourced, the whole community must co-create—iterating models, Skill platforms, and tools—all oriented toward empowering “lobsters.”

A clear trend: Will future software still serve humans? I believe much future software won’t be human-facing—humans need GUIs, while future software will be agent-native. Interestingly, humans may only use GUIs that bring joy. Meanwhile, the ecosystem shifts from GUI/MCP to CLI modes. So the ecosystem must transform software systems, data, and technologies into agent-native forms—enabling richer, more sustainable development.

Luo Fuli: Narrowing it to one year makes the question meaningful. If it were five years, then by my definition of AGI, I’d say it will already have been achieved. So the most critical thing in the AGI journey over the next year is “self-evolution.”

It sounds mystical—and has been mentioned repeatedly over the past year. But recently, I’ve gained deeper, more pragmatic insights into how to implement self-evolution. With powerful models, we never fully exploited pretrained model ceilings under chat paradigms—agent frameworks unlocked them. When models execute longer tasks, they begin learning and evolving autonomously.

A simple experiment shows this: add verifiable constraints and loops to an existing agent framework, let the model keep iterating toward its goal, and it produces steadily improving solutions. Such self-evolution can now run for one or two days, depending on task difficulty.

For example, in scientific research—like discovering better model architectures with clear evaluation metrics (e.g., lower PPL)—we observe autonomous optimization and execution over two or three days for deterministic tasks.

So from my view, self-evolution is the only place where we truly “create new things.” It doesn’t replace human productivity; it explores undiscovered frontiers the way top-tier scientists do. A year ago, I would have estimated this timeline at three to five years, but now I believe it’s one to two years. Soon, combining large models with powerful self-evolving agent frameworks may accelerate scientific research exponentially.

I’ve already observed that our large model research team—whose workflows are highly uncertain and creative—has boosted research efficiency nearly tenfold using Claude Code plus top-tier models. I eagerly anticipate this paradigm spreading across disciplines and fields—making “self-evolution” critically important.
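The “verifiable constraints and loops” experiment Luo describes can be sketched as a propose-and-verify loop. This is a deliberately simple stand-in, not Xiaomi’s method: it is plain hill climbing, `improve_with_verifier` is a hypothetical name, a random perturbation plays the role of the model proposing a change, and a toy quadratic plays the role of a lower-is-better metric such as perplexity. A candidate replaces the current best only when the verifiable score improves, so the best score can never get worse.

```python
import random
from typing import Callable, Tuple


def improve_with_verifier(propose: Callable[[float], float],
                          score: Callable[[float], float],
                          initial: float,
                          rounds: int = 200) -> Tuple[float, float]:
    """Keep a candidate only when the verifiable score gets strictly lower."""
    best, best_score = initial, score(initial)
    for _ in range(rounds):
        candidate = propose(best)
        candidate_score = score(candidate)
        if candidate_score < best_score:  # lower is better, like perplexity
            best, best_score = candidate, candidate_score
    return best, best_score


def toy_score(x: float) -> float:
    """Toy metric with its minimum at x = 3 (stand-in for an eval metric)."""
    return (x - 3.0) ** 2


def make_proposer(seed: int) -> Callable[[float], float]:
    """Stand-in for a model proposing a small change to the current solution."""
    rng = random.Random(seed)
    return lambda x: x + rng.uniform(-0.5, 0.5)


if __name__ == "__main__":
    best, best_score = improve_with_verifier(make_proposer(0), toy_score, 0.0)
    print(f"best={best:.3f} score={best_score:.5f}")
```

The verifier is what makes long unattended runs safe in this sketch: without a checkable metric, the loop would have no way to reject a worse candidate.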

Xia Lixue: My keyword is “sustainable tokens.” AI development remains a long-term process—and we hope it sustains vitality. From infrastructure’s perspective, resources are ultimately finite.

Like sustainability discussions decades ago, as a token factory, can we sustainably, stably, and massively supply tokens—enabling top-tier models to serve downstream applications broadly? This is a key challenge we face.

We must broaden our view across the entire ecosystem—from energy to compute, to tokens, to applications—establishing sustainable economic iteration. Not only must we utilize domestic compute resources, but also export these capabilities globally—integrating worldwide resources.

I also believe “sustainability” means building China’s distinctive token economics. Historically, “Made in China” transformed China’s low-cost manufacturing into high-quality global goods.

Now we aim for “AI Made in China”—leveraging China’s advantages in energy and other areas to sustainably convert them into premium tokens via token factories—exporting them globally as the world’s token factory. This is the value China’s AI should deliver to the world this year.

Zhang Peng: I’ll keep it brief. Everyone gazes at the stars—I’ll stay grounded. My keyword is “compute.”

As mentioned earlier, all technologies and agent frameworks boost creativity and efficiency tenfold—but only if users can actually deploy them. You can’t pose a question and wait minutes for an answer—that’s unacceptable. Such delays stall research progress and block initiatives.

Two years ago, an academician at the Zhongguancun Forum said: “Without GPUs, there are no feelings; talk about GPUs, and feelings get hurt.” Today, we’ve reached that point again, but the situation has changed. We’re now in the inference era, with demand exploding tenfold, even a hundredfold. You mentioned token usage rose tenfold, but actual demand may be a hundredfold, leaving vast needs unmet. How do we solve this? Let’s work it out together.
