Introducing GPU-EVM: A New Contender in the Parallel EVM Space, Using EVM to Train AI Agents

GPU-EVM improves transaction throughput by using graphics processing units (GPUs) to execute operations in parallel.

Author: Eito Miyamura

Translation: TechFlow

GatlingX is a project led by Oxford University alumni focused on machine learning and reinforcement learning. They recently launched "GPU-EVM"—which, according to internal benchmarking results, may be the highest-performing Ethereum Virtual Machine (EVM) currently available on the market.

GPU-EVM is an EVM scaling solution so powerful that state-of-the-art reinforcement learning (RL)-based AI agents can be trained directly on it, the development team says. By enabling parallel execution across multiple Ethereum applications, it helps train AI agents to discover security vulnerabilities.

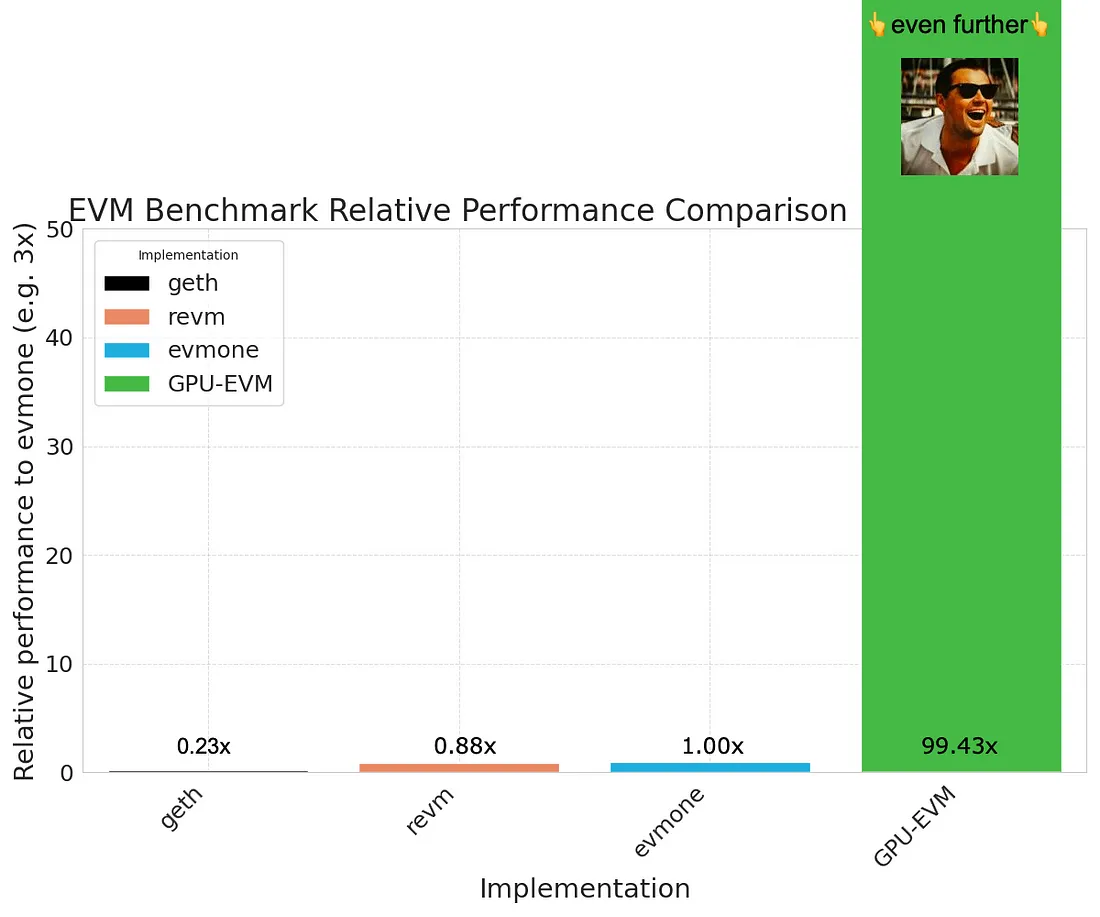

GPU-EVM leverages graphics processing units (GPUs) to execute operations in parallel, significantly increasing transaction throughput. The team claims GPU-EVM processes tasks nearly 100 times faster than current high-performance EVMs such as evmone and revm. This advantage stems from GPUs’ ability to handle numerous operations simultaneously, utilizing their inherently parallel architecture.

GPU-EVM uses the computational power of GPUs to run EVM operations in parallel. Instead of executing tasks sequentially, it can process many tasks at once, which sharply accelerates computation. According to the team of Oxford computer science and AI alumni behind it, this substantially improves the cost-efficiency of EVM computation.

The Ethereum Virtual Machine (EVM) is the industry-standard virtual machine that executes smart contracts and forms the foundation of modern blockchain technology. The EVM functions like an operating system for blockchains, enabling trustless transactions across many distributed computers via its CPU-based client software.

GPU-EVM's performance gives downstream engineering teams new capabilities: infrastructure for AI/RL models that interact with the EVM, accelerated L2s, MEV, backtesting, and more (see details below).

GPU-EVM: A New Paradigm for EVM Computation

NVIDIA began as a niche company focused on gaming but has become a pivotal player in computing, standing at the forefront of the AI revolution. This evolution reflects a shift from Moore's Law, the observation that transistor counts (and, loosely, computing power) double roughly every two years, to Huang's Law, named after NVIDIA CEO Jensen Huang. Huang's Law holds that, through combined advances in hardware, software, and AI, GPU performance more than doubles every two years, outpacing CPUs and making GPUs central to accelerating complex tasks.

As we approach the limits of Moore’s Law, reliance on GPU parallelism signals a new era of computing—one transitioning from CPU dominance to GPU-driven advancement (see Dennard scaling, Amdahl's Law). This shift is akin to moving from single-lane roads to multi-lane highways—not only speeding up processes but also enabling far more concurrent activities, vastly expanding technological possibilities.
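Amdahl's Law, mentioned above, puts a ceiling on what parallel hardware can deliver: the speedup is limited by the fraction of the work that must still run serially. The short sketch below computes that bound. The fractions and worker counts are illustrative assumptions, not measurements of GPU-EVM.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Upper bound on speedup when only part of a workload can run in parallel."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


# Illustrative numbers only, not measurements of GPU-EVM.
for fraction in (0.50, 0.99, 1.00):
    print(fraction, round(amdahl_speedup(fraction, 1000), 1))
```

With 99% of the work parallelizable, 1,000 workers yield a bound of only about 91x, which is why workloads made of many fully independent executions are the natural fit for GPUs.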

Jevons Paradox aptly illustrates this effect: just as the efficiency of LED bulbs led to broader rather than reduced usage, the enhanced efficiency and lower costs enabled by GPU-EVM unlock a vast array of new possibilities. It does not merely conserve resources—it catalyzes innovation and adoption across blockchain and beyond, promising a future where GPU-powered efficiency drives exponential growth in computational applications.

GPU-EVM Performance

By leveraging significant advances in general-purpose computing on modern GPUs, we have elevated GPU-EVM performance to over 100x that of traditional EVMs. Modern GPUs are designed with thousands of cores capable of handling multiple operations simultaneously, making them ideal for parallel workloads. This inherent architectural advantage allows GPU-EVM to execute massive numbers of EVM instructions in parallel, greatly accelerating both speed and efficiency.
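To make the idea of executing EVM instructions in parallel concrete, here is a toy sketch of a batched interpreter. It runs the same bytecode over many independent stacks in lockstep, the way a group of GPU threads executes one instruction across many data items. The opcode byte values are the real EVM ones, but everything else (the `run_batch` function, the four-opcode subset, the pure-Python loop standing in for GPU threads) is an assumption made for illustration. The article does not describe GPU-EVM's actual design.

```python
# Toy illustration only: a lockstep, batched interpreter for four real EVM
# opcodes. It is not GatlingX's design, which the article does not describe.
STOP, ADD, MUL, PUSH1 = 0x00, 0x01, 0x02, 0x60
WORD = 2**256  # EVM words are 256 bits; arithmetic wraps modulo 2**256


def run_batch(code: bytes, initial_stacks: list[list[int]]) -> list[list[int]]:
    """Run the same bytecode over many independent stacks, one step at a time.

    Every lane shares one program counter. That only works as written
    because this toy opcode subset has no branches.
    """
    stacks = [list(s) for s in initial_stacks]
    pc = 0
    while pc < len(code):
        op = code[pc]
        if op == STOP:
            break
        if op == PUSH1:
            value = code[pc + 1]
            for stack in stacks:  # on a GPU: one thread per lane
                stack.append(value)
            pc += 2
        elif op in (ADD, MUL):
            for stack in stacks:
                a, b = stack.pop(), stack.pop()
                stack.append((a + b) % WORD if op == ADD else (a * b) % WORD)
            pc += 1
        else:
            raise ValueError(f"unsupported opcode 0x{op:02x}")
    return stacks


# Program: PUSH1 3, MUL, PUSH1 1, ADD, STOP. Computes 3*x + 1 in every lane.
program = bytes([PUSH1, 3, MUL, PUSH1, 1, ADD, STOP])
results = run_batch(program, [[x] for x in range(4)])
```

Here each lane starts with a different input `x` on its stack and ends with `3*x + 1`. A real implementation would also have to handle branching, memory, storage, and gas, where lanes can diverge.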

To objectively measure the performance gains brought by GPU-EVM, we conducted comprehensive benchmarking using the open-source tools provided by EVM Bench. These tools let us simulate various EVM operations and compare execution times between traditional CPU-based EVMs and our GPU-EVM.
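The comparison described above comes down to timing the same workload on two engines and taking a ratio. The sketch below shows one common way to do that with the Python standard library. It is not EVM Bench's interface. The helper names `time_runs` and `speedup` are hypothetical, and the sample timings in the usage line are made up.

```python
import statistics
import time
from typing import Callable


def time_runs(fn: Callable[[], object], repeats: int = 5) -> list[float]:
    """Wall-clock time of `fn` over several runs, in seconds."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return samples


def speedup(baseline: list[float], candidate: list[float]) -> float:
    """Ratio of median run times; values above 1 mean the candidate is faster."""
    return statistics.median(baseline) / statistics.median(candidate)


# Made-up timings in seconds, purely to show the calculation.
example = speedup([2.0, 4.0, 3.0], [0.03, 0.02, 0.04])
```

Using the median rather than the mean keeps a single slow run (for example, a cold cache) from skewing the reported ratio.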

Compared with traditional CPU-based execution, GPU-EVM comes out well ahead by drawing on the parallel processing capabilities of GPUs, setting a new standard for EVM performance and efficiency.

With this technical foundation established, let’s explore how GPU-EVM revolutionizes fields such as AI training and DeFi simulation, opening new frontiers for blockchain applications.

Training AI Agents Using the EVM

Artificial intelligence is transforming the world, led by ChatGPT and other LLM chatbots. These are trained with reinforcement learning from human feedback (RLHF), an application of reinforcement learning (RL). At its core, RL trains AI agents to make decisions by interacting with an environment that rewards correct behavior. This learning method is crucial because it mirrors the way humans and animals learn from their surroundings, making it foundational for intelligent systems that adapt and optimize their behavior autonomously.

AlphaGo’s landmark victory over a Go world champion demonstrated the transformative power of RL. It was more than just a game—it showed how, through RL, AI could discover strategies and solutions that surpass human insight, achieved via simulation and interaction within the complex environment of a Go board. This breakthrough highlights the essence of RL: enabling AI agents to navigate and learn independently from their environment to achieve specific goals, guided by a reward system.

However, the journey toward such AI breakthroughs via RL is filled with computational challenges. Simulating environments for AI requires substantial computing resources. The emergence of GPU-parallelized simulation environments, such as NVIDIA's Isaac Gym, Google's Brax, and JAX-LOB, has played a key role in overcoming these barriers. By leveraging GPU parallelization, these platforms have achieved performance improvements ranging from 100x to 250,000x, making the computational side of RL far more feasible. A common bottleneck in RL training is moving data between a simulator running on the CPU and a model running on the GPU. Running the environment itself on the GPU removes that transfer, which is a large part of where these speedups come from, and the approach has become the industry standard in RL research.

In the fast-evolving world of AI, GPU-EVM acts as a GPU-parallelized simulation environment that directly supports training AI agents within the blockchain ecosystem. One compelling application is in finance, where GPU-EVM could change how real-time fraud detection systems are built. History underscores their importance: Max Levchin built PayPal's first anti-fraud system to keep the company from going bankrupt. By letting financial AI simulate and analyze millions of transactions in seconds, GPU-EVM could help identify anomalous patterns that indicate fraud far faster than before. A task that might previously have taken days could become near-instantaneous, a major shift in how institutions combat fraud. Pairing AI agents with GPU-EVM opens new ways to apply RL within blockchain: agents are rewarded, through a predefined reward function, for correctly identifying fraudulent transactions, and improve over time.
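A batched environment with a predefined reward function can be sketched in a few lines. The example below steps many independent copies of a toy contract at once and rewards an agent when it breaks an invariant, in the spirit of training agents to find vulnerabilities. Everything here is hypothetical: `BatchedVaultEnv`, its deliberately buggy withdraw logic, and the reward rule are assumptions for illustration, and the Python loop stands in for what a GPU would run as one thread per environment.

```python
from dataclasses import dataclass, field

WORD = 2**256  # unchecked EVM arithmetic wraps modulo 2**256


@dataclass
class BatchedVaultEnv:
    """N independent copies of a hypothetical buggy vault, stepped together."""

    num_envs: int
    balances: list[int] = field(default_factory=list)
    deposited: list[int] = field(default_factory=list)

    def reset(self) -> list[int]:
        """Start every environment from an empty vault."""
        self.balances = [0] * self.num_envs
        self.deposited = [0] * self.num_envs
        return list(self.balances)

    def step(self, actions: list[int]) -> tuple[list[int], list[float], list[bool]]:
        """Apply one action per environment: 0 deposits 1 unit, 1 withdraws 1."""
        rewards, dones = [], []
        for i, action in enumerate(actions):
            if action == 0:
                self.balances[i] = (self.balances[i] + 1) % WORD
                self.deposited[i] += 1
            else:
                # The planted bug: no check that the balance covers the withdrawal.
                self.balances[i] = (self.balances[i] - 1) % WORD
            # Reward function: pay out when the balance exceeds total deposits.
            broken = self.balances[i] > self.deposited[i]
            rewards.append(1.0 if broken else 0.0)
            dones.append(broken)
        return list(self.balances), rewards, dones


env = BatchedVaultEnv(num_envs=3)
env.reset()
observations, rewards, dones = env.step([0, 1, 0])
```

In this run only the second environment withdraws from an empty vault, so only it triggers the underflow and earns a reward. An RL agent would learn from such signals across thousands of environments stepped in parallel.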

L2 Acceleration / Simulation

The emergence of Layer 2 (L2) solutions is critical for enhancing Ethereum’s throughput, thereby promoting mainstream adoption, especially in payments. By processing transactions off the main Ethereum blockchain (Layer 1), L2s significantly increase network capacity while preserving its core principles of security and decentralization. Unlike traditional CPU-based systems, GPU-EVM operates independently and can seamlessly integrate with and accelerate existing L2 solutions. This acceleration can be achieved through various methods, including optimizing view functions and applying algorithms like Monte Carlo Tree Search for more efficient block construction and transaction ordering.

However, the role of parallel EVMs in L2 acceleration is nuanced and requires careful consideration. Directly accelerating L2s using parallel EVMs is not as straightforward as it may seem. To fully leverage the capabilities of parallel EVMs, concerted efforts must focus on innovating the design of L2 solutions and their underlying databases, a point that other work on parallel EVMs has also emphasized.

Although integrating GPU-EVM with L2 solutions holds great promise, several challenges remain. Key bottlenecks include addressing storage limitations, managing interdependent transactions across long chains, and reducing state bloat costs. GPU-EVM alone cannot solve all these issues. Therefore, in the context of L2 acceleration, collaborative innovation in L2 design and their supporting databases is essential to overcome these obstacles and fully realize the benefits of GPU-EVM.

DeFi Simulation / Fuzz Testing

The foundational performance boost of GPU-EVM brings transformative change to DeFi simulation and fuzz testing. The increase in processing capability makes it possible to discover previously unconsidered edge cases in DeFi strategies and protocol designs, uncovering hidden vulnerabilities. To illustrate the difference, traditional CPU-based approaches can be likened to water pistols, whereas GPU-EVM is more like a fire hose: a far more effective tool for flushing out bugs.

Thanks to GPU-EVM's foundational performance gains, fuzzers running on this platform can explore deeply and at high speed, identifying in seconds edge cases that CPU-based fuzzers might take weeks or even months to detect. Running these fuzzers atop GPU-EVM also enables continuous monitoring of smart contracts, particularly those already in production. These automated systems relentlessly challenge smart contracts, trying to anticipate vulnerabilities several steps ahead, as in a game of chess, with the goal of maximizing security.
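For readers unfamiliar with fuzzing, the sketch below shows the basic loop: generate random inputs, run the code under test, and check an invariant after each run. The `buggy_transfer` function and its missing balance check are hypothetical, invented for this illustration, and this is not GatlingX's fuzzer. Because each trial is independent of the others, the loop is exactly the kind of workload that can be spread across thousands of GPU threads.

```python
import random
from typing import Optional

WORD = 2**256  # unchecked EVM arithmetic wraps modulo 2**256


def buggy_transfer(sender: int, receiver: int, amount: int) -> tuple[int, int]:
    """Hypothetical transfer that forgets to check amount <= sender balance."""
    return (sender - amount) % WORD, (receiver + amount) % WORD


def fuzz_transfer(trials: int, seed: int = 0) -> Optional[int]:
    """Return the first random amount that breaks the invariant, if any.

    Invariant: the sender never ends up with more than they started with.
    """
    rng = random.Random(seed)  # seeded so that a failing input is reproducible
    start_balance = 100
    for _ in range(trials):
        amount = rng.randrange(0, 201)
        sender_after, _receiver_after = buggy_transfer(start_balance, 0, amount)
        if sender_after > start_balance:
            return amount
    return None


counterexample = fuzz_transfer(trials=1000)
```

Any returned counterexample is an amount larger than the sender's balance, which underflows and leaves the sender with an enormous balance. Real contract fuzzers search far larger input spaces, including sequences of calls, which is where raw throughput matters.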

Our upcoming product embodies this cutting-edge approach to DeFi simulation and fuzz testing. Stay tuned—it will redefine standards for smart contract security and resilience.

About GatlingX

GatlingX is an applied infrastructure and artificial intelligence lab focused on building heavy-duty technical infrastructure. Our mission is to create diverse blockchain application products that operate at the deepest infrastructure layers.

We believe there are extremely difficult technical problems that the blockchain industry avoids because they are too hard. Fast and cheap security, computational performance, and speed are prerequisites for a thriving blockchain ecosystem, but they are also profoundly challenging and painful problems to solve. We believe that unless we bring together the world's best problem solvers to tackle them, no one else will.

We are committed to advancing the state-of-the-art in AI, GPU computing, blockchain, and distributed systems—domains critical to driving global technological progress.

We are a group of passionate builders: if we can buy something off the shelf, we will. If not, we build it ourselves.

Using GPU-EVM

GPU-EVM is currently in private early access as we scale GPU capacity. If you're interested in using GPU-EVM for your engineering work, please fill out this form to join the waitlist.

Our team is small but exceptionally talented. Our founding members are Oxford alumni who have achieved breakthroughs in infrastructure and applied AI. They have worked at companies like CrowdStrike, Wayve, and Citadel Securities, and have created impactful projects such as ZKMicrophone and Graphite.
