From $500 Million to $30 Billion: How Crypto Madman SBF Bet on the Most Valuable Company of the AI Era

SBF, Anthropic, and the Money Maze of Effective Altruism

Author: TechFlow

Anthropic is now the most important AI company on the planet—perhaps without peer.

Its Claude large language models are deployed at the Pentagon, U.S. intelligence agencies, and national laboratories, and the U.S. military uses them for intelligence analysis and target selection in its operations against Iran.

Its annualized revenue has surged from zero to $14 billion in under three years. In February 2026, Anthropic closed its $30 billion Series G funding round, achieving a post-money valuation exceeding $380 billion. Tech giants Amazon, Google, NVIDIA, and Microsoft are lining up to invest.

Over the past few weeks, it has been engaged in a globally watched negotiation with the Pentagon over the weaponization of AI.

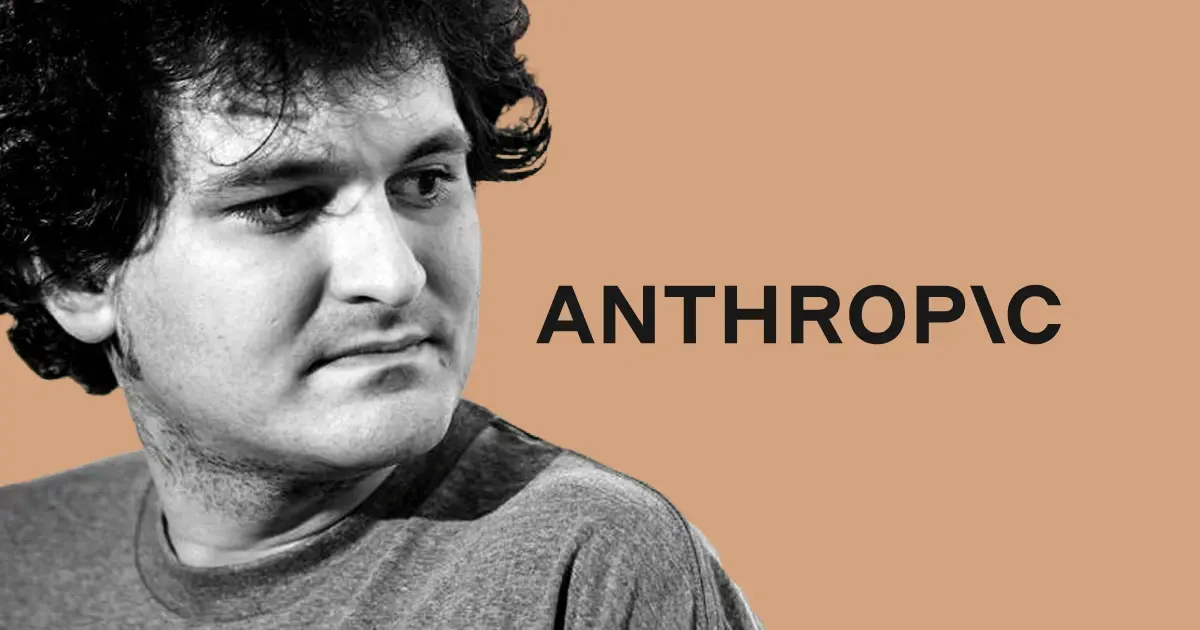

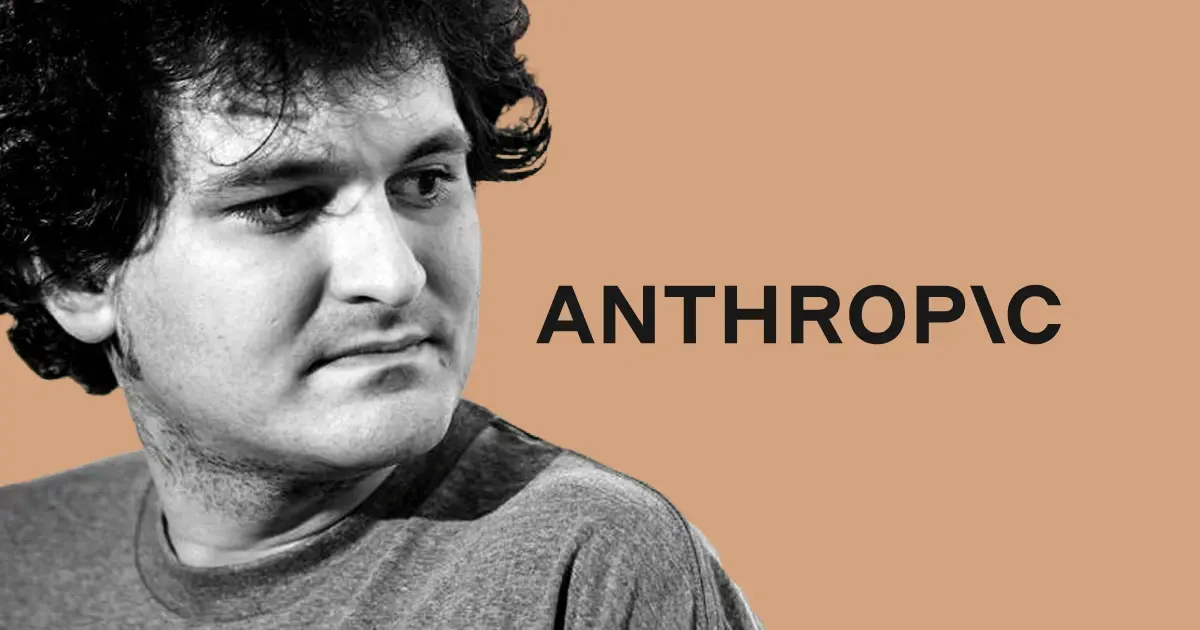

Yet buried in the company’s early funding history lies a name still widely discussed today: Sam Bankman-Fried.

In April 2022, before ChatGPT existed and when the AI sector was far less hyped, SBF poured $500 million into Anthropic’s Series B round through his hedge fund Alameda Research. The check accounted for a staggering 86% of the $580 million round and secured roughly 8% equity. Seven months later, the FTX empire collapsed. SBF became the central figure in the largest fraud case in cryptocurrency history and was sentenced to 25 years in prison; the $500 million had come from FTX customer deposits.

But had SBF not been arrested, and had that money been legitimately sourced, his 8% stake would be worth over $30 billion at today’s $380 billion valuation. A $500 million investment ballooning to $30 billion is a return of roughly 60x, one that would rank among the highest in venture capital history.
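The arithmetic behind that figure is easy to check. The short Python sketch below uses only the numbers quoted in this article (a $500 million check, a roughly 8% stake, a $580 million round, a $380 billion valuation). The variable and function names are illustrative, and the calculation assumes the stake was never diluted by later rounds.

```python
# Figures quoted in the article; names are illustrative, not from any filing.
INVESTED_USD = 500e6       # Alameda's Series B check
ROUND_SIZE_USD = 580e6     # total Series B raised
STAKE = 0.08               # approximate equity share (assumed undiluted)
VALUATION_USD = 380e9      # Series G post-money valuation


def stake_value(stake: float, valuation: float) -> float:
    """Paper value of an equity stake at a given company valuation."""
    return stake * valuation


def return_multiple(value: float, invested: float) -> float:
    """How many times over the original investment the stake is worth."""
    return value / invested


value = stake_value(STAKE, VALUATION_USD)
multiple = return_multiple(value, INVESTED_USD)
share_of_round = INVESTED_USD / ROUND_SIZE_USD

print(f"Stake value:    ${value / 1e9:.1f} billion")
print(f"Return:         {multiple:.1f}x")
print(f"Share of round: {share_of_round:.0%}")
```

Under those assumptions the stake comes to about $30.4 billion, or just under 61 times the original check, which is where the "over $30 billion" and "60x" figures come from.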

A convicted cryptocurrency fraudster currently serving time in federal prison came within inches of winning the wildest bet in AI investment history.

How did SBF identify Anthropic as early as 2022? Why did he dare commit $500 million all at once? And why did Anthropic accept the money?

The answer lies within a movement known as “effective altruism.”

A Shared Apartment, a Movement, and a Check

In mid-2010s San Francisco, a group of people lived in similar shared apartments, attended the same gatherings, read the same papers, and subscribed to the same philosophy.

That philosophy is effective altruism (EA). Its core proposition is simple: charity shouldn’t be guided by sentiment—it should be guided by calculation. Every dollar should flow toward the direction that mathematically “maximizes good,” and according to one major EA branch, humanity’s foremost existential risk isn’t nuclear war or pandemic—it’s uncontrolled artificial intelligence.

Dario Amodei was immersed in this circle.

He was the 43rd signatory of the Giving What We Can Pledge, committing to donate at least 10% of his income. He had already become a fan of GiveWell as early as 2007 or 2008.

He shared an apartment with two others: Holden Karnofsky, co-founder of GiveWell and Open Philanthropy—one of EA’s most influential fund allocators—and Paul Christiano, a leading researcher in AI alignment. At the time, both Dario and Paul served as technical advisors to Open Philanthropy.

Later, Karnofsky married Dario’s sister Daniela. After their engagement, the couple briefly lived with Dario. In January 2025, Karnofsky quietly joined Anthropic as a “technical employee” overseeing safety strategy, an appointment Anthropic had not publicly announced by the time a Fortune reporter discovered it.

This was an intimate social network.

Amanda Askell, an early Anthropic employee, was formerly married to William MacAskill, one of EA’s founding figures. She was the 67th signatory of Giving What We Can and wrote her doctoral thesis on a core philosophical question within EA: how to handle infinities in ethics.

Anthropic’s most important governance body—the Long-Term Benefit Trust—holds significant theoretical control over the company. Three of its four members come directly from the EA ecosystem: Neil Buddy Shah, former managing director of GiveWell; Zach Robinson, CEO of the Center for Effective Altruism; and Kanika Bahl, CEO of Evidence Action—a long-term grantee of GiveWell.

The three largest financial backers in EA history are all early investors in Anthropic: Facebook co-founder Dustin Moskovitz, Skype co-founder Jaan Tallinn, and Sam Bankman-Fried.

This is the real pathway through which SBF found Anthropic: not genius investment insight, nor prescient judgment about the AI sector, but internal capital circulation within a closed circle. EA money flows to EA projects to solve problems defined by EA.

SBF adhered to a more radical EA strand—“earning to give.” After leaving the Wall Street quant firm Jane Street, he entered cryptocurrency, publicly declaring his aim wasn’t personal wealth but “altruism”: earn as much as possible first, then direct funds toward causes generating the greatest positive impact. Anthropic’s mission—“to develop powerful AI safely”—was virtually the canonical EA prescription for AI’s existential risk.

In May 2021, Jaan Tallinn led Anthropic’s $124 million Series A round, with Moskovitz participating. In April 2022, SBF took over as lead investor in the Series B round, writing a single $500 million check—accounting for 86% of the $580 million total raised. Other participants in that round included Caroline Ellison, Nishad Singh, and James McClave of Jane Street.

That list of co-investors itself tells a story. Caroline Ellison was CEO of Alameda; Nishad Singh was FTX’s engineering director; Jane Street was SBF’s former employer.

Effectively, the $580 million Series B came almost entirely from SBF and the pool of capital he controlled.

Red Flags and Compromise

Dario Amodei is no fool.

In a later in-depth interview, he recalled that SBF at the time appeared to be “someone bullish on AI and concerned about safety,” a perfect fit for Anthropic’s direction. But then Dario added a crucial caveat: he had noticed “enough red flags.”

So he made a decision: take the money but insulate governance. SBF received non-voting shares and was excluded from the board. Dario later described SBF’s conduct as “much, much, much more extreme and worse than I imagined,” stacking three “muches” together.

This decision proved extraordinarily astute. Yet it raises a sharp question: if red flags were abundant enough to warrant structural governance isolation, why accept the money at all?

You could argue that the AI funding environment in early 2022 was far less heated than today, and Anthropic urgently needed large-scale capital to build compute infrastructure. An investor willing to write a $500 million check—red flags notwithstanding—was simply hard to find.

But there’s a subtler reason: within EA’s operational logic, the “cleanliness” of a funding source has never been the top priority. What matters is the funding’s “effectiveness”—whether it enables you to do more. SBF’s entire wealth narrative rested on this premise: earning money is merely a means; doing good is the end—so the means of earning can be flexible, as long as the ultimate “good” produced is sufficiently large.

This logic, in SBF’s hands, veered into criminality. But at the moment he invested in Anthropic, it still appeared merely as a radical yet lawful philosophical choice.

After the Collapse: A Black Comedy

The rest of the story is well-known across the crypto world.

In November 2022, CoinDesk exposed Alameda’s balance sheet; Binance CEO Changpeng Zhao (CZ) announced that Binance would sell its FTT tokens; a bank run engulfed FTX; and within nine days, the empire crumbled. SBF was arrested, extradited, tried, and in March 2024 sentenced to 25 years. His 8% stake in Anthropic, along with all associated assets, was frozen in the bankruptcy liquidation process.

One courtroom aside, excluded from trial proceedings, merits mention.

SBF’s defense team attempted to cite the Anthropic investment as evidence of “foresight”: “Look—he didn’t just squander money; he made an investment decision whose valuation multiplied several times over.”

Prosecutor Damian Williams responded bluntly: whether these investments turned a profit is entirely irrelevant to the fraud charges. Stealing someone else’s money to invest doesn’t make the theft any less illegal—even if it profits. The judge sided with the prosecution, and Anthropic’s name was excluded from the trial record.

The prosecution added another blow: wasn’t FTX itself the best counterexample? Valued at $18 billion in 2021 and $32 billion in 2022, it’s now worth nothing.

Then came the liquidation auction.

In March 2024, the first auction valued the stake at $884 million.

The largest buyer was Abu Dhabi’s sovereign wealth fund Mubadala, investing exactly $500 million, the same amount SBF had originally committed. The second-largest buyer was Jane Street, the former employer of both SBF and Caroline Ellison, with Craig Falls, Jane Street’s head of quantitative research, personally contributing $20 million. SBF’s first job after MIT was as a trader at Jane Street, and now his former employer was spending money to buy shares he had bought with stolen funds.

The two auctions collectively recovered $1.34 billion. This sum flowed into the FTX creditor compensation pool, becoming a vital source for victims recovering their deposits.

What if the liquidation team had held off?

In February 2026, Anthropic closed its $30 billion Series G round, reaching a $380 billion post-money valuation. Without dilution, that 8% stake would theoretically have grown from $1.34 billion to $30 billion. Of course, the liquidation team didn’t hold out—their mandate was to monetize assets quickly to repay creditors—but the gulf between $1.34 billion and a potential $30+ billion is precisely why this story remains so widely discussed.
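The "without dilution" caveat matters for the size of that gap. The Python sketch below compares the $1.34 billion recovered at auction with the paper value of the stake at a $380 billion valuation. The dilution levels in the loop are purely hypothetical illustrations, not reported figures, and the function name is invented for this example.

```python
# Figures quoted in the article.
RECOVERED_USD = 1.34e9     # total raised by the two liquidation auctions
STAKE = 0.08               # approximate original equity share
VALUATION_USD = 380e9      # Series G post-money valuation


def diluted_value(stake: float, valuation: float, dilution: float) -> float:
    """Value of a stake after it has shrunk by `dilution` (0.0 = none)."""
    if not 0.0 <= dilution < 1.0:
        raise ValueError("dilution must be in [0, 1)")
    return stake * (1.0 - dilution) * valuation


undiluted = diluted_value(STAKE, VALUATION_USD, 0.0)
gap_multiple = undiluted / RECOVERED_USD

print(f"Undiluted value: ${undiluted / 1e9:.1f} billion")
print(f"Gap vs. auction proceeds: {gap_multiple:.1f}x")

# Hypothetical dilution levels, chosen only to show the sensitivity.
for dilution in (0.0, 0.25, 0.5):
    value = diluted_value(STAKE, VALUATION_USD, dilution)
    print(f"dilution {dilution:.0%}: ${value / 1e9:.1f} billion")
```

Even if later funding rounds had cut the stake in half, the holding would still be worth several times what the auctions brought in, so the comparison survives any plausible dilution assumption.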

It’s the single biggest regret in the entire FTX bankruptcy saga.

EA’s Collective Forgetting

Anthropic’s current scale and influence require no elaboration. Yet an intriguing phenomenon persists: the company is systematically distancing itself from the EA movement.

All seven co-founders have pledged to donate 80% of their personal wealth—worth approximately $38 billion at current valuations. Nearly 30 Anthropic employees registered for an EA conference in San Francisco—more than double the combined attendance of OpenAI, Google DeepMind, xAI, and Meta’s superintelligence lab.

Yet Daniela Amodei told Wired: “I’m not an expert on effective altruism. I don’t identify with that term. My impression is that it’s somewhat outdated.” The speaker’s husband is one of EA’s most influential fund allocators—and had just joined her company.

This posture—“taking EA money, hiring EA people, living in EA shared housing, yet refusing to claim EA identity”—becomes understandable post-SBF. FTX’s collapse cratered EA’s reputation. Anthropic needed to decouple from the label—just as any savvy company would sever ties with a tarnished brand.

But the facts remain: Anthropic’s founding logic emerged directly from EA’s core arguments about AI’s existential risk; its early financing came almost exclusively from capital within the EA network; and its governance structure is dominated by personnel drawn from the EA ecosystem.

A Parallel Universe Behind Bars

Sam Bankman-Fried is now in federal prison. His earliest possible release date is 2049—when he’ll be 57.

During his incarceration, the AI company he funded with illicit money has soared past a $380 billion valuation and is engaged in a globally watched negotiation with the Pentagon over AI weaponization. Its founders appear regularly in the New York Times and on Capitol Hill. Had everything been legal, that $500 million bet would have cemented SBF as one of the highest-return venture capitalists of our era.

SBF’s “earning to give” and Anthropic’s “developing AI safely” share the same underlying operating system: to achieve outsized good, unconventional means and risks may be justified.

SBF pushed this logic across the line into criminality; Anthropic operates safely on the other side—but its core thesis—“we must ourselves build the most powerful AI to ensure AI safety”—is itself a grand wager bordering on self-fulfilling prophecy.

They grew from the same soil.

In that soil, Dario and SBF once attended the same gatherings, embraced the same philosophy, and occupied different nodes of the same social network. One built a $380 billion AI empire; the other entered federal prison.

And the $500 million check linking them remains the strangest page in Anthropic’s history.
