NVIDIA's AI extravaganza GTC kicks off, unveiling its most powerful AI chip, Blackwell

NVIDIA said Blackwell delivers up to a 25x improvement in cost and energy efficiency over the previous generation, and called it the world's most powerful chip.

By Li Dan

Source: Wall Street Horizon

The NVIDIA GTC 2024 AI Conference, touted as this year’s premier global developer event in artificial intelligence (AI), kicked off on Monday, March 18, U.S. Eastern Time.

This year marks NVIDIA's first return to an in-person annual GTC event in five years—and analysts expected it to be the AI showcase where NVIDIA would "deliver something substantial."

On Monday afternoon local time, NVIDIA founder and CEO Jensen Huang delivered a keynote titled “#1 AI Conference for Developers” at the SAP Center in San Jose, California.

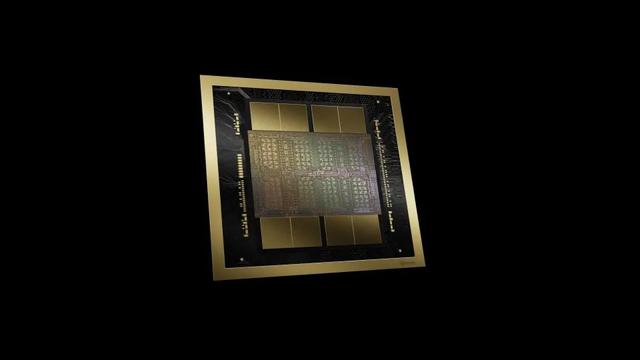

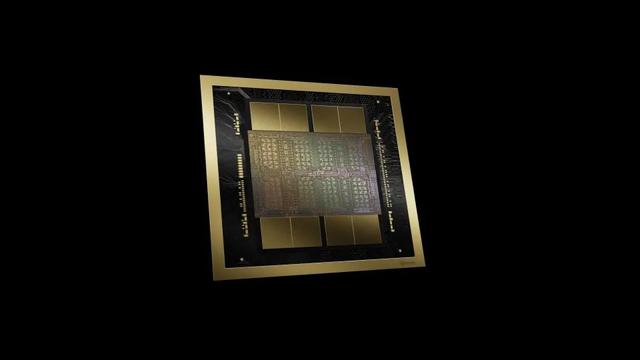

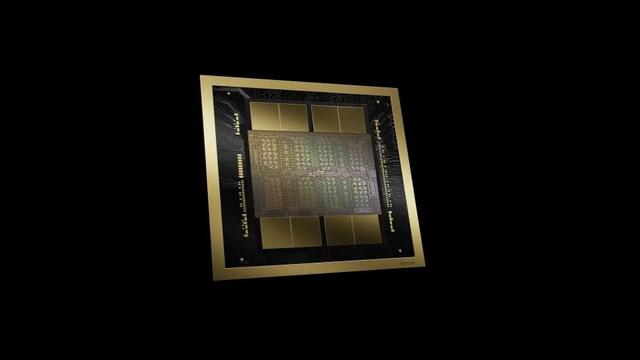

Blackwell Delivers 25x Improvement in Cost and Energy Efficiency—World’s Most Powerful Chip Built on TSMC 4nm Process

Huang introduced a new generation of chips and software designed to run AI models. NVIDIA officially unveiled Blackwell, its next-generation AI graphics processing unit (GPU), with shipments expected later this year.

The Blackwell platform lets organizations build and run real-time generative AI on trillion-parameter large language models (LLMs) at up to 25x lower cost and energy consumption than the previous generation.

NVIDIA says Blackwell incorporates six revolutionary technologies that support AI training and real-time LLM inference for models up to ten trillion parameters:

-

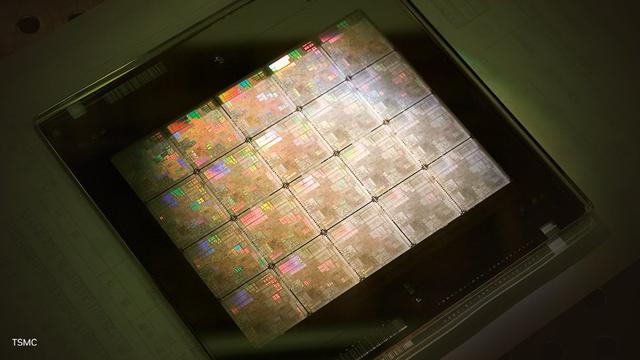

World’s most powerful chip: The Blackwell architecture GPU consists of 208 billion transistors, manufactured using a custom-built TSMC 4-nanometer (nm) process. Two reticle-maximized GPU dies are linked via a 10 TB/s chip-to-chip interconnect into a single unified GPU.

-

Second-Generation Transformer Engine: Combining Blackwell Tensor Core technology with NVIDIA’s advanced dynamic range management algorithms in TensorRT-LLM and NeMo Megatron frameworks, Blackwell supports double the computing throughput and model size inference capability through new 4-bit floating-point AI.

-

Fifth-Generation NVLink: To boost performance for trillion-parameter and Mixture-of-Experts AI models, the latest NVIDIA NVLink delivers a breakthrough 1.8TB/s bidirectional throughput per GPU, enabling seamless high-speed communication across up to 576 GPUs for the most complex LLMs.

-

RAS Engine: GPUs powered by Blackwell include a dedicated engine for reliability, availability, and serviceability. Additionally, the Blackwell architecture adds on-chip capabilities that use AI-driven predictive maintenance for diagnostics and forecasting reliability issues. This maximizes system uptime and enhances resilience for large-scale AI deployments, enabling continuous operation for weeks or even months, while reducing operational costs.

-

Secure AI: Advanced confidential computing features protect AI models and customer data without sacrificing performance and support new native interface encryption protocols critical for privacy-sensitive industries such as healthcare and financial services.

-

Decompression Engine: A dedicated decompression engine supports the latest formats, accelerates database queries, and delivers peak performance for data analytics and data science. In the coming years, GPU acceleration will play an increasingly central role in enterprise data processing, where companies spend tens of billions annually.
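The Transformer Engine item above links 4-bit floating point to a doubling of supported model size. The intuition can be sketched with simple arithmetic: halving the bits per weight halves the memory the weights occupy. The snippet below is a back-of-envelope illustration only; the function name, the one-trillion-parameter figure, and the choice to ignore activations, KV cache, and scaling metadata are all assumptions made for this example, not NVIDIA figures.

```python
# Back-of-envelope weight-memory estimate (illustrative assumptions only).
# Ignores activations, KV cache, and any per-block scale factors that
# low-precision formats typically carry alongside the weights.

def weight_memory_tb(num_params: float, bits_per_param: int) -> float:
    """Memory needed to hold the weights, in terabytes (1 TB = 1e12 bytes)."""
    total_bytes = num_params * bits_per_param / 8
    return total_bytes / 1e12


PARAMS = 1e12  # hypothetical trillion-parameter model

for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_memory_tb(PARAMS, bits):.2f} TB")
```

Under these assumptions, moving from 8-bit to 4-bit weights cuts the footprint in half, so roughly twice as many parameters fit in the same memory.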

GB200 NVL72 Delivers Up to 30x Higher Inference Performance Than H100

NVIDIA also introduced the GB200 Grace Blackwell Superchip, which connects two B200 Tensor Core GPUs to an NVIDIA Grace CPU via a 900GB/s ultra-low-power NVLink.

For maximum AI performance, systems driven by GB200 can connect to the NVIDIA Quantum-X800 InfiniBand and Spectrum-X800 Ethernet platforms announced on Monday, delivering advanced networking speeds of up to 800Gb/s.

GB200 is a key component of the GB200 NVL72, a multi-node, liquid-cooled, rack-scale system designed for the most compute-intensive workloads. It integrates 36 Grace Blackwell superchips—including 72 Blackwell GPUs and 36 Grace CPUs—interconnected via fifth-generation NVLink. The GB200 NVL72 also includes NVIDIA BlueField®-3 data processing units (DPUs) to enable cloud network acceleration, composable storage, zero-trust security, and GPU compute elasticity in hyperscale AI clouds.

Compared to H100 Tensor Core GPUs, the GB200 NVL72 delivers up to 30x higher performance for LLM inference workloads and reduces both cost and energy consumption by up to 25x.

The GB200 NVL72 platform functions as a single GPU with 1.4 exaflops of AI performance and 30TB of fast memory, serving as the building block for the latest DGX SuperPOD.
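The rack-level figures quoted above can be cross-checked with a little arithmetic. The sketch below uses only numbers stated in this article (36 superchips, two GPUs and one CPU per superchip, 1.8 TB/s NVLink per GPU, 1.4 exaflops per rack). The derived aggregate bandwidth and per-GPU share are rough estimates for illustration, not official specifications, and the variable names are made up for this example.

```python
# Rough cross-check of the GB200 NVL72 figures quoted in the article.
# Derived values are estimates, not official NVIDIA specifications.

SUPERCHIPS_PER_RACK = 36
GPUS_PER_SUPERCHIP = 2       # two B200 GPUs per GB200 superchip
CPUS_PER_SUPERCHIP = 1       # one Grace CPU per GB200 superchip
NVLINK_TBPS_PER_GPU = 1.8    # fifth-generation NVLink, bidirectional
RACK_AI_EXAFLOPS = 1.4       # AI performance quoted for the whole rack

gpus = SUPERCHIPS_PER_RACK * GPUS_PER_SUPERCHIP
cpus = SUPERCHIPS_PER_RACK * CPUS_PER_SUPERCHIP

# Sum of per-GPU NVLink throughput across the rack.
aggregate_nvlink_tbps = gpus * NVLINK_TBPS_PER_GPU

# Even split of the rack's quoted AI performance (1 exaflop = 1000 petaflops).
petaflops_per_gpu = RACK_AI_EXAFLOPS * 1000 / gpus

print(f"GPUs: {gpus}, CPUs: {cpus}")
print(f"Aggregate NVLink throughput: {aggregate_nvlink_tbps:.1f} TB/s")
print(f"AI performance per GPU: {petaflops_per_gpu:.1f} petaflops")
```

The GPU and CPU counts match the 72 and 36 stated for the rack, which is where the "NVL72" name comes from.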

NVIDIA also launched the HGX B200 server motherboard, which connects eight B200 GPUs via NVLink to support x86-based generative AI platforms. The HGX B200 supports network speeds up to 400Gb/s via NVIDIA Quantum-2 InfiniBand and Spectrum-X Ethernet networking platforms.

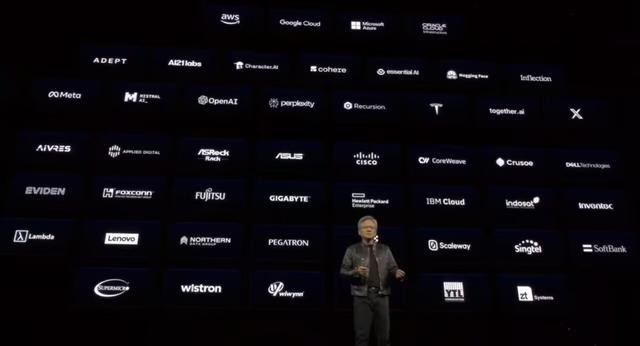

Amazon, Microsoft, Google, and Oracle Among First Cloud Providers Offering Blackwell Support

The Blackwell chip will form the foundation for new computers and other products deployed by the world’s largest data center operators, including Amazon, Microsoft, and Google. Products based on Blackwell will become available later this year.

NVIDIA said AWS, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure will be among the first cloud service providers to offer instances powered by Blackwell. Companies in the NVIDIA Cloud Partner Program—including Applied Digital, CoreWeave, Crusoe, IBM Cloud, and Lambda—will also be among the first to deliver Blackwell-powered instances.

Sovereign AI clouds will also offer Blackwell-based cloud services and infrastructure, including Indosat Ooredoo Hutchison, Nebius, Nexgen Cloud, Oracle EU Sovereign Cloud, Oracle U.S., UK, and Australian Government Clouds, Scaleway, Singtel, Northern Data Group’s Taiga Cloud, Yotta Data Services’ Shakti Cloud, and YTL Power International.

“For three decades, we’ve pursued accelerated computing with the goal of achieving transformative breakthroughs in areas like deep learning and AI,” said Huang. “Generative AI is the defining technology of our era. Blackwell is the engine powering this new industrial revolution. By collaborating with the world’s most dynamic companies, we will fulfill the promise of AI across every industry.”

In its press release, NVIDIA listed organizations expected to adopt Blackwell, including Microsoft, Amazon, Google, Meta, Dell, OpenAI, Oracle, Tesla, and xAI led by Elon Musk. Huang highlighted these and additional partners during his presentation.

AI Project GR00T Powers Humanoid Robots

During his keynote, Huang revealed that NVIDIA has launched Project GR00T, a multimodal AI initiative aimed at advancing humanoid robotics. Leveraging universal foundation models, Project GR00T enables humanoid robots to accept inputs such as text, speech, video, and even live demonstrations, process them, and execute general-purpose actions accordingly.

Project GR00T was developed with the help of NVIDIA’s Isaac robotics platform tools, including the newly introduced Isaac Lab for reinforcement learning.

Robots powered by the Project GR00T platform are designed to understand natural language and imitate human movements by observing behavior, allowing them to rapidly learn coordination, dexterity, and other skills necessary to adapt to and interact with the real world—without ever triggering a robot uprising, Huang emphasized.

Huang said:

“Building foundation models for general-purpose humanoid robots is one of the most exciting challenges in AI today. By converging these enabling technologies, leading robotics experts worldwide can make giant leaps in artificial general robotics.”

TSMC and Synopsys Adopt NVIDIA Lithography Technology

Huang also noted that TSMC and Synopsys are adopting NVIDIA’s cuLitho computational lithography platform.

Both companies have already integrated the cuLitho software, and they will use NVIDIA’s next-generation Blackwell GPUs for AI and high-performance computing (HPC) applications.

New Software NIM Simplifies AI Inference on Existing NVIDIA GPUs

NVIDIA also announced NVIDIA NIM, a set of optimized, cloud-native inference microservices designed to shorten time-to-market for generative AI models and simplify their deployment across clouds, data centers, and GPU-accelerated workstations.

NVIDIA NIM expands the developer base by abstracting the complexity of AI model development and production packaging through industry-standard APIs. As part of NVIDIA AI Enterprise, NIM provides a streamlined path for developing AI-powered enterprise applications and deploying AI models in production.

NIM makes it easier for users to perform inference or run AI software on older NVIDIA GPUs, allowing enterprise customers to continue leveraging their existing NVIDIA GPU investments. Inference requires significantly less compute power than initial AI model training. With NIM, enterprises can run their own AI models instead of purchasing AI outputs from companies like OpenAI.

Customers using NVIDIA-powered servers can access NIM by subscribing to NVIDIA AI Enterprise, at an annual licensing fee of $4,500 per GPU.
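To put the per-GPU fee in perspective, the sketch below multiplies it across a few fleet sizes. It assumes flat per-GPU pricing with no volume discounts or bundled terms, which the article does not specify; the function name and fleet sizes are hypothetical.

```python
# Illustrative licensing estimate based on the $4,500 per GPU per year
# figure quoted above. Assumes flat pricing with no discounts.

ANNUAL_FEE_PER_GPU_USD = 4_500


def annual_license_cost(num_gpus: int) -> int:
    """Yearly NVIDIA AI Enterprise cost in USD for a given GPU count."""
    if num_gpus < 0:
        raise ValueError("num_gpus must be non-negative")
    return num_gpus * ANNUAL_FEE_PER_GPU_USD


for fleet in (1, 8, 72):  # hypothetical fleet sizes
    print(f"{fleet:>3} GPUs: ${annual_license_cost(fleet):,} per year")
```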

NVIDIA will collaborate with AI companies such as Microsoft and Hugging Face to ensure their AI models run seamlessly across all compatible NVIDIA chips. Developers using NIM can efficiently run models on their own servers or cloud-based NVIDIA servers without lengthy configuration processes.

Commentators noted that software like NIM simplifies AI deployment, not only generating revenue for NVIDIA but also giving customers another compelling reason to stick with NVIDIA GPUs.
