After Anthropic Accidentally Leaked Its Source Code, Its Copyright Takedown Hit Over 8,000 Repositories: Its “Safety-First” Image Suffers Its Most Awkward Week Yet

Anthropic, whose brand centers on “AI safety,” is enduring its most embarrassing week since its founding.

Author: TechFlow

Anthropic accidentally exposed the full source code of Claude Code—the company’s most profitable product—due to a misconfiguration in an npm package release. Approximately 512,000 lines of TypeScript code were mirrored, dissected, and rewritten into Python and Rust versions by tens of thousands of developers within hours. Anthropic promptly issued DMCA takedown notices to GitHub, affecting roughly 8,100 repositories. However, the broad scope mistakenly targeted numerous unrelated projects, triggering strong backlash from the developer community. Anthropic was ultimately forced to withdraw the vast majority of its notices, retaining only one repository and 96 forks for removal. This marks Anthropic’s second major leak within a week—just five days after its Mythos model information was exposed.

According to a Wall Street Journal report published on April 1, Anthropic inadvertently released Claude Code’s complete source code alongside a routine npm package update on March 31, due to a human error in its build process. Security researcher Chaofan Shou posted the download link publicly on X at 4:23 a.m. ET; the post quickly amassed over 21 million views. Within hours, the code was mirrored on GitHub and garnered tens of thousands of stars. South Korean developer Sigrid Jin even used AI tools to rewrite the entire codebase in Python before sunrise; the resulting project, claw-code, earned 50,000 GitHub stars within two hours, likely the fastest growth in the platform’s history.

An Anthropic spokesperson confirmed the incident to CNBC, stating: “This was a packaging issue caused by human error—not a security vulnerability. No sensitive customer data or credentials were involved or exposed.”

A Missing Configuration Exposed 512,000 Lines of Core Code

The technical cause of the leak was relatively simple. Claude Code is built on Bun—an open-source JavaScript runtime acquired by Anthropic in late 2025. Bun generates source map debugging files by default. During the npm package deployment, the team failed to exclude these files via the .npmignore configuration, resulting in a 59.8 MB source map file being shipped with version 2.1.88 of Claude Code. That file contained the full, readable, annotated, and unobfuscated contents of approximately 1,900 TypeScript source files—totaling roughly 512,000 lines of code.
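To see why a single source map can expose an entire codebase, it helps to look at the format itself. In the Source Map v3 format, the `sources` array lists original file paths and the optional `sourcesContent` array can embed the full original text of each file. The sketch below pairs the two; the function name and the tiny hand-written map are illustrative assumptions, not material from the leaked package.

```python
import json


def embedded_sources(source_map_text: str) -> dict[str, str]:
    """Return {original path: original text} for files a source map embeds."""
    data = json.loads(source_map_text)
    sources = data.get("sources", [])
    # sourcesContent is optional, and an entry may be null when a file
    # is referenced but its text is not embedded.
    contents = data.get("sourcesContent") or []
    return {
        path: text
        for path, text in zip(sources, contents)
        if text is not None
    }


# Hand-written example map for illustration only.
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const greet = (n: string) => n;\n", None],
    "mappings": "",
})

recovered = embedded_sources(example_map)
print(sorted(recovered))
```

When `sourcesContent` is populated, anyone who downloads the map can read the original files directly, which is why shipping it is equivalent to shipping the source.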

Boris Cherny, head of Claude Code, acknowledged: “Our deployment process includes several manual steps, and one of them wasn’t executed correctly.” He added that the team has since fixed the issue and is implementing additional automated checks, emphasizing that such errors reflect systemic or infrastructure issues—not individual responsibility.
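An automated check of the kind described could be as simple as auditing the list of files about to be published. The following is a minimal sketch under stated assumptions: the file listing is supplied as a mapping of path to size (for example, gathered from the output of `npm pack --dry-run`), and the function name and size threshold are hypothetical. It is not Anthropic’s actual check.

```python
from pathlib import PurePosixPath

# Hypothetical size limit; a real project would tune this.
MAX_FILE_BYTES = 5 * 1024 * 1024


def audit_package_files(files: dict[str, int]) -> list[str]:
    """Return human-readable problems for a {path: size in bytes} listing."""
    problems = []
    for path, size in sorted(files.items()):
        if PurePosixPath(path).suffix == ".map":
            problems.append(f"source map would be published: {path}")
        elif size > MAX_FILE_BYTES:
            problems.append(f"unexpectedly large file ({size} bytes): {path}")
    return problems


# Illustrative listing; the 59.8 MB figure mirrors the size reported above.
manifest = {
    "package.json": 1_200,
    "cli.js": 4_000_000,
    "cli.js.map": 59_800_000,
}
issues = audit_package_files(manifest)
for issue in issues:
    print(issue)
```

A release pipeline would fail the publish step whenever the returned list is non-empty, which removes the dependence on a manual step being executed correctly.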

This isn’t the first time. In February 2025, an almost identical source map leak exposed an earlier version of Claude Code’s source code. The recurrence of the same class of error within 13 months raises questions about the operational maturity of this company—valued at approximately $380 billion and preparing for an IPO.

What Developers Found in the Leaked Code

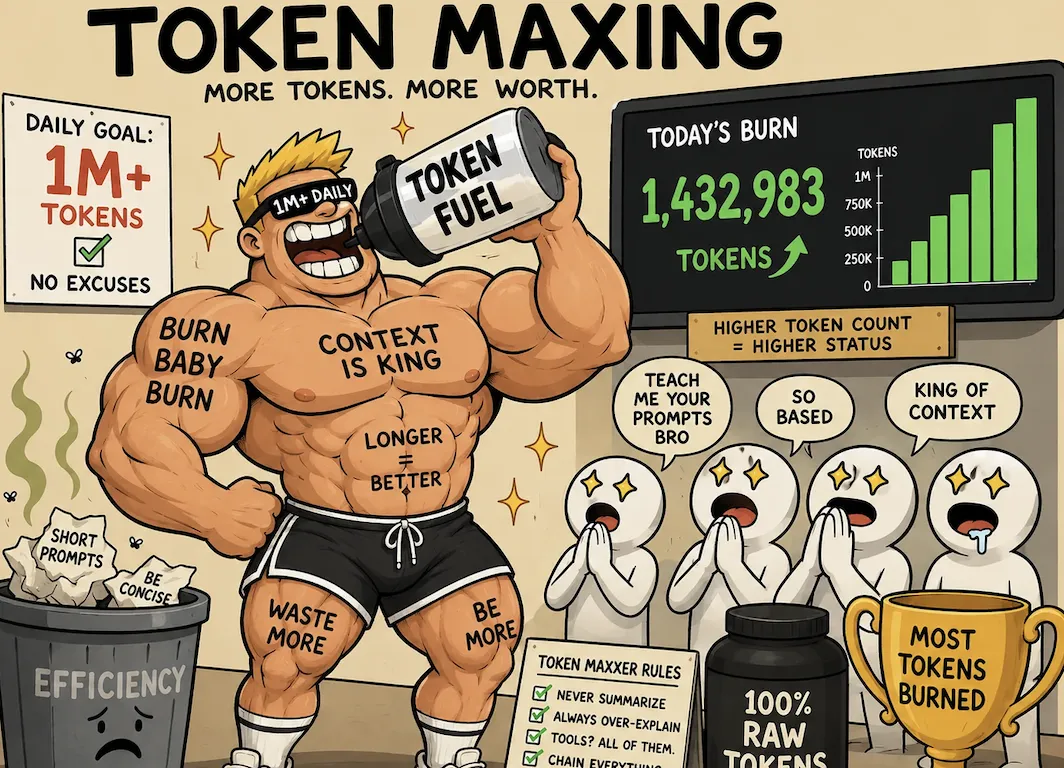

The leaked codebase effectively served as an internal product roadmap Anthropic never intended to disclose. According to VentureBeat and multiple independent developers’ analyses, the code contains 44 feature flags—more than 20 of which correspond to features already completed but not yet launched.

The most notable among them include:
• A self-operating “KAIROS” daemon mode enabling Claude Code to run autonomously in the background during user idle time, periodically fixing bugs, executing tasks, and sending push notifications.
• A three-layer “self-healing memory” architecture, which merges fragmented observations and resolves logical contradictions through a background process named “dreaming”.
• A full multi-agent coordination system transforming Claude Code from a single agent into a coordinator capable of generating, directing, and managing multiple concurrent worker agents.

The most controversial discovery was a file named undercover.ts. As reported by The Hacker News, this ~90-line script injects system prompts into Claude Code whenever Anthropic employees submit code to open-source projects—directing Claude to conceal its AI identity and strip all “Co-Authored-By” attribution tags. One comment reads: “You are operating undercover in a public/open-source code repository. Your commit messages, PR titles, and PR descriptions must not contain any internal Anthropic information. Do not reveal your identity.”

The code also includes an ANTI_DISTILLATION_CC flag, which injects fake tool definitions into API requests in order to poison training data that competitors might intercept. Internal model codenames also appear: “Capybara” refers to a new, unreleased model tier; “Fennec” maps to the current Opus 4.6. These align with details about the Mythos model that leaked five days earlier through a CMS misconfiguration.

Paul Price, founder of cybersecurity firm Code Wall, told Business Insider: “This leak is more embarrassing than damaging. The truly valuable assets—its internal model weights—were not exposed.” Still, he noted that “Claude Code is arguably the best-designed agent tool architecture available today—and now we can see exactly how they solved those hard problems.” That intelligence holds clear strategic value for competitors.

8,100 Repositories Wrongly Taken Down: DMCA Takedown Backfires Spectacularly

After the code spread, Anthropic swiftly filed DMCA takedown notices with GitHub under U.S. copyright law. Per GitHub’s public records, the initial request affected approximately 8,100 repositories. The problem? Among the targeted repos were not just mirrors of the leaked code—but also legitimate forks of Anthropic’s own publicly released official Claude Code repository.

Developers expressed outrage across X. Developer Danila Poyarkov reported receiving a takedown notice simply for forking Anthropic’s public repo. Another user, Daniel San, received a takedown email from GitHub even though his repository contained only skill examples and documentation, completely unrelated to the leaked code. One developer quipped: “Anthropic’s lawyers woke up and started taking down my repos.”

Facing mounting backlash, Anthropic partially retracted its notices on April 1. Per GitHub’s retraction log, Anthropic narrowed the takedown scope to just one repository (nirholas/claude-code) and 96 specific fork URLs listed separately in the original notice—restoring access to the remaining ~8,000 repositories.

An Anthropic spokesperson told TechCrunch: “The repositories specified in the notice belonged to a fork network connected to our public Claude Code repository, so the takedown unintentionally affected more repositories than expected. We’ve withdrawn all notices except one, and GitHub has restored access to the affected forks.”
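The difference between the two scopes can be illustrated with a toy model of a fork network. This is a simplified sketch, not GitHub’s actual takedown processing; the repository names and the `whole_network` helper are hypothetical. It compares a takedown applied to every repository in a network with one limited to explicitly listed URLs.

```python
from dataclasses import dataclass, field


@dataclass
class Repo:
    name: str
    forks: list["Repo"] = field(default_factory=list)


def whole_network(root: Repo) -> set[str]:
    """Every repository reachable from root through fork edges, root included."""
    names = {root.name}
    for child in root.forks:
        names |= whole_network(child)
    return names


# Hypothetical network: a public repo, two ordinary forks, and a mirror
# of leaked code that sits in the same network with a fork of its own.
leak_mirror = Repo("mirror/leaked-copy", forks=[Repo("user-c/leaked-copy")])
official = Repo("vendor/public-tool", forks=[
    Repo("user-a/public-tool"),
    Repo("user-b/public-tool"),
    leak_mirror,
])

broad = whole_network(official)                           # network-wide scope
narrow = {"mirror/leaked-copy", "user-c/leaked-copy"}     # listed URLs only
collateral = broad - narrow
print(sorted(collateral))
```

In this model, everything in `collateral` is a repository that never contained leaked code but is swept up when the scope is the whole network rather than the listed URLs.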

Code Is Now Permanently Archived on Decentralized Platforms—DMCA Has Limited Reach

Anthropic’s copyright enforcement faces a fundamental limitation: the code has already diffused irreversibly.

As reported by Decrypt, decentralized Git platform Gitlawb has mirrored the full original codebase, with an annotation declaring it “will never be taken down.” DMCA notices work against centralized platforms like GitHub, which must comply with them, but they are far harder to enforce against decentralized infrastructure that has no single host to serve. Within hours of the leak, the code had been replicated across enough mirrors and diverse infrastructures to become, in practice, permanently public.

Adding irony: South Korean developer Sigrid Jin used the AI orchestration tool oh-my-codex to rewrite the entire codebase from TypeScript to Python—launching the project claw-code. Gergely Orosz, founder of The Pragmatic Engineer, noted on X that this constitutes a “clean-room rewrite”—a distinct creative work explicitly designed to fall outside DMCA’s reach. If Anthropic claims AI-rewritten code still infringes copyright, it would directly undermine AI companies’ core legal defense in training-data copyright litigation: namely, that outputs generated by AI from copyrighted inputs constitute fair use.

Copyright Hypocrisy: Self-Contradiction or Legal Necessity?

The most widely discussed tension in this incident is the inconsistency in Anthropic’s stance on copyright. In September 2025, Anthropic agreed to pay $1.5 billion to settle a class action brought by authors over its use of pirated books from shadow libraries to train Claude. In June 2025, Reddit sued Anthropic for scraping user-generated content without authorization to train its models. When a company embroiled in multiple lawsuits over training-data copyright turned to copyright law to protect its own code, the community reaction was predictable.

A top-voted Slashdot comment captured the sentiment bluntly: “‘We profit openly from stolen goods—and you dare steal from us?!’ What a position.” Another user argued the DMCA action made legal sense: “If Anthropic wants to later sue other companies for using its code, failing to even attempt a takedown would weaken its case in court.”

This debate also touches on the cutting-edge legal question of copyright ownership for AI-generated code. Per prior disclosures by Gartner and Anthropic, roughly 90% of Claude Code’s code is AI-generated. A U.S. federal court ruled in March 2025 that AI-generated works lack human authorship—and thus aren’t eligible for copyright protection; the Supreme Court declined to hear an appeal in March 2026. If most of Claude Code was indeed written by Claude itself, Anthropic’s copyright claim carries significant legal uncertainty.

Two Leaks in One Week: Operational Security Alarms Ahead of IPO

This source-code leak occurred just five days after Anthropic’s previous incident. On March 26, Fortune reported that a content management system misconfiguration exposed nearly 3,000 unpublished internal documents—including detailed information about the upcoming Claude Mythos model—in a publicly searchable data cache. Both incidents were attributed to “human error.”

The timing is highly sensitive. Anthropic closed its $30 billion Series G round in February 2026, reaching a $380 billion valuation. It is reportedly preparing for an IPO as early as October 2026—with projected fundraising exceeding $60 billion. Goldman Sachs, JPMorgan Chase, and Morgan Stanley have all reportedly engaged in early discussions. Claude Code’s annualized revenue exceeds $2.5 billion—making it the company’s most critical revenue engine. TechCrunch notes that for a company preparing to go public, leaking source code almost certainly invites shareholder lawsuits.

VentureBeat raised a sharper question in its analysis: Anthropic experienced over a dozen incidents in March alone—but published only one post-mortem report. Third-party monitoring systems detected outages 15–30 minutes before Anthropic updated its own status page. For a company valued at $380 billion and racing toward public markets, investors must decide for themselves whether its operational transparency and maturity match that valuation.
