Claude Relay Business: The Stricter the Block, the More Complete the Gray Market

The real risk lies not in geopolitics, but in how this supply chain draws ordinary people—many of whom are already vulnerable—into criminal markets.

Author: Zilan Qian

Translation & Editing: TechFlow

TechFlow Intro: The White House claims Chinese labs are using “tens of thousands of proxy accounts” to steal U.S. AI models, but this misreads the reality. What exists is not a coordinated operation by a handful of elite labs but an open gray-market ecosystem operating publicly on GitHub, Taobao, Twitter, and Telegram. Chinese users who want advanced AI tools, from professors to programmers to hobbyists, are turning to API proxies priced as low as 10% of official rates. This reveals a blind spot in U.S. AI security frameworks: every layer of restriction spawns its own circumvention infrastructure, and the real risk lies not in geopolitics but in how this supply chain draws ordinary people, many of them already vulnerable, into criminal markets.

On April 23, 2026, the White House issued a memo warning that Chinese entities are launching “industrial-scale” distillation attacks against cutting-edge U.S. AI models, evading detection using “tens of thousands of proxy accounts.” In February 2026, Anthropic similarly reported that Chinese labs were conducting coordinated distillation attacks via “a single proxy network managing over 20,000 fraudulent accounts.” Both documents treat “proxies”—intermediaries between model users and providers—as a systematic, purpose-built mechanism deployed by a small number of elite Chinese labs to extract U.S. AI models.

Whether or not Chinese labs rely on distillation to “catch up,” both documents misread the proxy economy they describe. Beneath those few elite labs lies a far larger market—one operating openly on GitHub, Taobao, Twitter, and Telegram. This is a gray-market economy of API proxies (commonly called “relay stations”), enabling Chinese developers to access Anthropic’s models at costs as low as 10% of official pricing. Participants extend well beyond a handful of experienced AI researchers, and motivations are far broader than building frontier models to close the gap. Anyone wishing to use more advanced AI models or tools—university professors and students, tech workers, independent developers, or hobbyists—is using API proxies. The logs they generate have themselves become commodities, traded for purposes ranging from model training to targeted fraud.

Meanwhile, each new layer of control imposed by leading U.S. AI companies (geographic blocking, phone verification, credit card requirements, and now real-time biometric KYC checks) has spawned matching evasion infrastructure, from SMS verification farms to biometric data collection operations. The impact of this infrastructure extends beyond geopolitics, reaching into the very design of frontier AI security frameworks.

Building on my 2025 ChinaTalk article about accessing banned U.S. models in China, this update focuses specifically on the relay station economy: how it is built, how it makes money, and what it reveals about the limits of access restrictions and account monitoring as tools of AI governance. Unlike the gray market I described in 2025, however, the 2026 story does not stop at the boundary between Chinese users and U.S. AI model providers. The relay station economy exposes blind spots in AI security frameworks whose goals extend well beyond U.S.-China competition, from preventing abuse by malicious actors to preserving providers’ ability to trace who is using their models, while also fueling criminal markets that exploit ordinary people within the supply chain, many of whom are already disadvantaged.

To illustrate how relay stations operate, let’s take Anthropic as an example—the company with the strictest geographic blocking mechanisms, yet whose models remain extremely popular among Chinese developers.

Figure: A meme circulating across China’s internet: “Do you think you’re smarter than Claude?”

Geographic Blocking and Identity Verification (KYC)

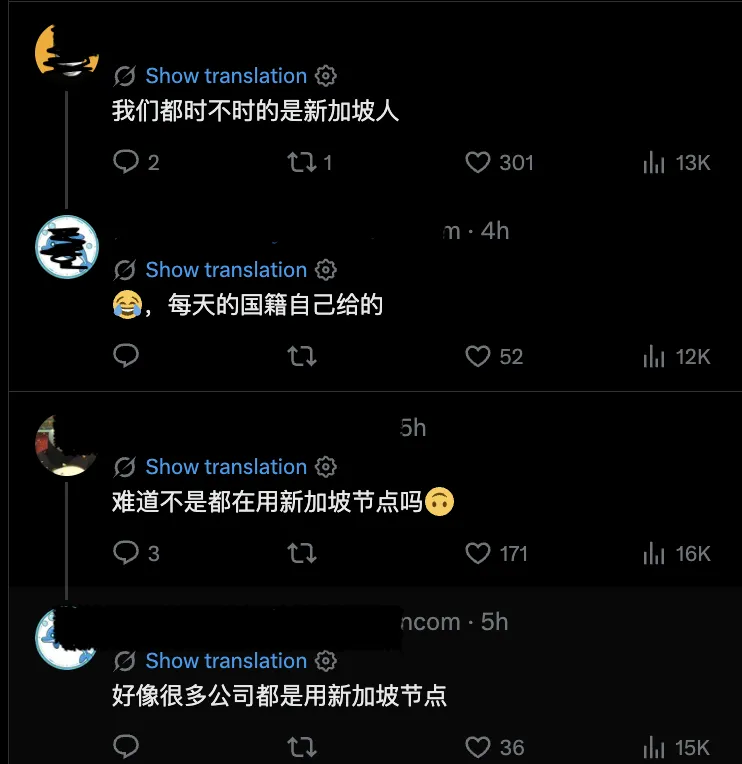

On Anthropic’s map of supported countries, China is conspicuously absent, and on the Chinese side, Anthropic’s services are blocked by the Great Firewall. In practice, however, neither Anthropic’s blocking nor the Great Firewall prevents Chinese users from accessing Claude and Claude Code. Since 2025, despite censorship by both platforms and the government, Claude models have thrived on e-commerce apps like Taobao, while Singapore, a city-state with fewer people than New York City, “surprisingly” led global per-capita Claude usage in April 2026.

Figure: Chinese developers joked on Twitter about reports that Singapore ranked highest globally in Claude token consumption, implying Chinese users route traffic through Singapore to access the model. “We occasionally feel like Singaporeans.” “I assign myself a nationality every day.” “Is it because we all use Singaporean nodes?” “Looks like many companies are using Singaporean nodes.”

The Chinese government currently does not actively restrict Chinese developers’ access to advanced U.S. models. Anthropic, by contrast, takes the issue seriously and deploys several layers of controls to block mainland Chinese users. At the most basic level, account registration requires a mobile phone number, an overseas credit card, and a matching billing address. On September 5, 2025, Anthropic went further and prohibited access by any entity more than 50% owned, directly or indirectly, by companies headquartered in unsupported regions, including China, regardless of where the entity operates. This closed a loophole that had allowed foreign subsidiaries with Chinese ownership to keep API access.

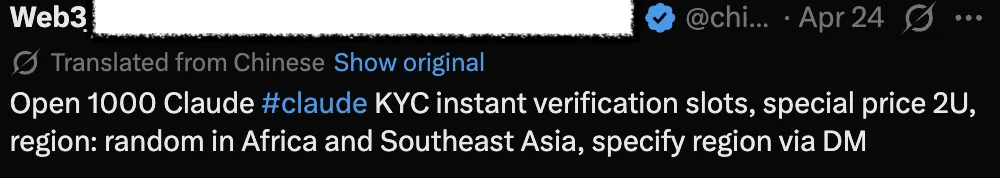

The latest measure arrived in April 2026. Anthropic began requiring certain users to verify their identity with a government-issued photo ID and a real-time selfie, making Claude the first major consumer AI platform to implement identity checks of this intensity. The rollout is selective, triggered by specific use cases or platform-integrity signals. For Chinese users reaching Claude through VPNs or other intermediaries, the new KYC policy should in theory make access much harder: even if they can fake phone numbers and addresses, they cannot easily fake a real-time selfie that matches a physical government ID.

In practice, however, Chinese users not only access Claude and related tools—they often purchase tokens at 10% of the official price. The magic lies in the “relay station.”

What Is a “Relay Station”?

A relay station is the term used across China’s developer ecosystem for an API proxy: an overseas server positioned between developers and Anthropic’s infrastructure. It receives API requests, forwards them so that they appear to originate from the relay station’s location, then passes the responses back. Users point their software at the proxy’s server instead of Anthropic’s and pay in RMB via WeChat Pay or Alipay, which removes the need for direct-access tools like VPNs and overseas credit cards. Well-known relay stations are cataloged in community repositories and ranked by real-time pricing and uptime. Beneath them, smaller and personal projects come and go.

While functionally similar to legitimate Western API aggregators like OpenRouter, relay stations operate in a completely different universe of legality and trust. Legitimate aggregators exist to simplify developer workflows, charging standard fees under transparent corporate agreements. Relay stations, by contrast, are explicitly built for circumvention—routing data through unaccountable intermediaries.

Like offering VPN services or selling Claude on Taobao, running a relay station is technically prohibited in China. Under China’s regulations on AI service registration, providing AI services without prior filing and a security assessment is illegal. But just as some small businesses skip AI registration without penalty, most relay stations do likewise. The larger the business grows, however, the riskier the operation becomes.

The Relay Station Supply Chain

A relay station is not a single entity. It sits in the middle of a layered supply chain, with most participants never interacting directly.

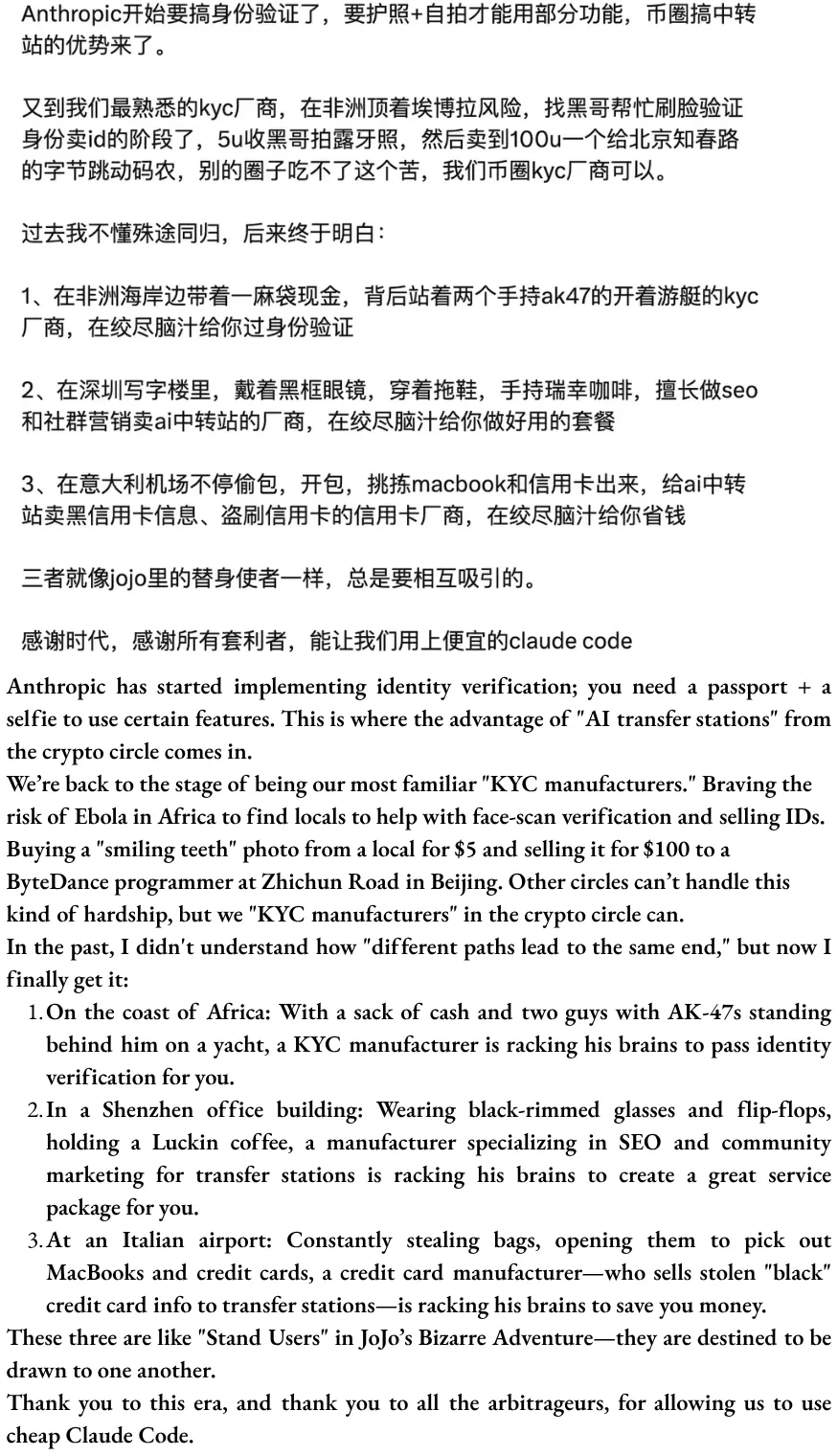

Upstream are resource providers: account brokers who bulk-register or otherwise acquire large volumes of Anthropic accounts; SMS verification platforms that supply the foreign phone numbers needed to pass registration checks; and, at the more technical end, reverse engineers who analyze Anthropic’s client-side code to find authentication shortcuts or to spot changes in its detection logic. Card vendors and the payment infrastructure of proxy networks make overseas billing possible from within China.

The upstream layer also supplies ways around the more demanding KYC checks, whether AI-driven or human-driven. AI services have demonstrated the ability to generate highly realistic fake ID documents capable of bypassing major platforms’ identity verification, while deepfake tools now let criminals create digital clones that pass remote biometric verification. Even if defenders succeed in detecting AI impersonating humans, a more labor-intensive alternative exists: agents travel to low-income countries in Africa or Latin America to recruit real people willing to complete in-person verification. The Worldcoin black market provides a documented precedent: iris scans collected by KYC vendors in Cambodia and Kenya sell for under $30.

Figure: A Twitter account promoting KYC verification services.

In the middle tier sits the relay station itself: a software interface that receives user requests and forwards them to Anthropic as if they came from legitimate accounts; a payment integration (typically Alipay or WeChat Pay); and the mundane operational layer that keeps it running by rotating accounts before they are flagged, balancing load across account pools, and continuously adapting to Anthropic’s abuse-detection updates.

Downstream are customers: individual developers using Codex or Claude Code; enterprises routing internal workflows through proxies; application builders embedding APIs into their products; and secondary resellers purchasing access wholesale and repackaging it for individual customers on Taobao—as I documented last year.

Virtually no one operates the entire chain. Most participants own one or two segments and monetize them effectively, forming a resilient, modular system. AI model providers may suspend individual operators, but the upstream account pools and downstream customer bases remain intact. As long as developers want access to Claude and a black market for credentials persists, both of which look like enduring features, replacements emerge rapidly.

Figure: A screenshot circulating in developer WeChat groups joking about the supply chain for bypassing Anthropic’s KYC flow; original text is Chinese (top), translation provided by the author (bottom).

Three Ways to Make Tokens Cheap: “One Fish, Three Meals”

Yet the strangest part is not how Chinese users gain access to Claude or Claude Code but how cheaply they get it: often ¥1 for every $1 worth of tokens, 70–90% below official rates. According to public discussions, relay stations use at least three methods to achieve this, commonly described as “one fish, three meals.”
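The discount implied by “¥1 per $1” is simple arithmetic: divide the yuan paid by the exchange rate to get the effective dollar cost of $1 worth of list-price tokens. A minimal sketch, assuming an exchange rate of about 7.2 RMB per U.S. dollar (an illustrative figure, not one from this article) and list prices with no volume discounts; the function name is hypothetical:

```python
def relay_discount(rmb_paid_per_usd: float, fx_rmb_per_usd: float) -> float:
    """Fraction below list price for a user who pays `rmb_paid_per_usd` yuan
    for each $1 of list-price token value.

    fx_rmb_per_usd is an ASSUMED exchange rate (yuan per U.S. dollar).
    """
    if fx_rmb_per_usd <= 0:
        raise ValueError("exchange rate must be positive")
    # Effective U.S.-dollar cost of $1 worth of list-price tokens.
    effective_usd = rmb_paid_per_usd / fx_rmb_per_usd
    return 1.0 - effective_usd


ASSUMED_FX = 7.2  # illustrative yuan-per-dollar rate; the real rate fluctuates

for price in (0.72, 1.0, 2.0):
    print(f"¥{price:.2f} per $1 -> {relay_discount(price, ASSUMED_FX):.1%} below list")
```

Under this assumed rate, ¥1 per $1 works out to roughly 86% below list, and the 70–90% range corresponds to paying about ¥0.72 to ¥2.16 per $1 of list-price tokens.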

First meal: markup on access. This is possible because upstream resource providers can supply relay stations with cheap capacity through at least five strategies, four of them relatively “innocent”:

Bulk-registration of API accounts to collect Anthropic’s $5 free tier

Reselling unused quotas from others’ accounts

“APImaxxing”—splitting the hourly token quota of a $200 Max plan across multiple users, exploiting the gap between Anthropic’s fixed subscription pricing and the much higher equivalent cost of pay-per-token API access

Beyond these, there is a darker upstream input: accounts purchased using stolen or fraudulent credit cards, costing operators nothing and entering the proxy pool. While the scale of this relative to the above four “innocent” strategies is difficult to verify, the two markets likely share some infrastructure and personnel.
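The APImaxxing arbitrage in the list above comes down to one ratio: what a subscription costs versus what the same usage would cost at pay-per-token API prices. A minimal sketch, in which the API-equivalent value of a fully used Max plan is a hypothetical input (this article gives no figure for it) and the class and field names are illustrative:

```python
from dataclasses import dataclass


@dataclass
class SharedPlan:
    plan_price_usd: float      # monthly subscription price, e.g. the $200 Max plan
    api_equivalent_usd: float  # HYPOTHETICAL: the plan's usage priced at pay-per-token API rates
    seats: int                 # number of users splitting the plan's quota

    def __post_init__(self) -> None:
        if self.seats < 1 or self.api_equivalent_usd <= 0:
            raise ValueError("seats must be >= 1 and api_equivalent_usd must be positive")

    def cost_per_seat(self) -> float:
        """Operator's subscription cost attributable to each user."""
        return self.plan_price_usd / self.seats

    def api_value_per_seat(self) -> float:
        """What each user's share of the usage would cost at API list prices."""
        return self.api_equivalent_usd / self.seats

    def cost_ratio(self) -> float:
        """Operator cost as a fraction of API list price for the same usage."""
        return self.plan_price_usd / self.api_equivalent_usd


plan = SharedPlan(plan_price_usd=200, api_equivalent_usd=2000, seats=10)
print(plan.cost_per_seat(), plan.api_value_per_seat(), plan.cost_ratio())
```

With these made-up inputs, each of ten users costs the operator $20 for usage worth $200 at API prices, so in this toy model the operator can resell far below list and still make money. The ratio does not depend on the number of seats: splitting only cuts the quota into pieces small enough to sell, and the arbitrage exists only while the shared usage is worth more at API prices than the subscription costs.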

Second meal: model swapping and token inflation. Because user inputs and model outputs flow through the proxy, users cannot verify which model actually processed their request. A user selects Opus 4.7, but the proxy may quietly route the request to Sonnet, Haiku, or in the worst case GLM or Qwen, and relabel the output. In a recent paper from Germany’s CISPA Helmholtz Center for Information Security (which cites my 2025 gray-market article), researchers audited 17 API proxies and found widespread model swapping: “Gemini-2.5” accessed through API proxies scored only 37.00% on medical benchmarks, far below the official API’s 83.82%. On the user side, telltale signs appear only on complex tasks, when outputs feel “off” (often called “dumbing down”), and there is no simple way to prove it. Numerous public records voice concerns about noticeably degraded performance from certain API proxies, which are suspected of “watering down” the service by substituting cheaper tiers for advanced frontier models.
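An auditor comparing a proxy against the official API, as the CISPA researchers did, is essentially asking whether two accuracy rates differ by more than chance. A minimal sketch using a one-sided two-proportion z-test, a standard statistical check swapped in here for illustration and not necessarily the paper’s method; the 200-question benchmark size and the rounded correct counts are hypothetical, chosen to roughly match the reported 83.82% and 37.00%:

```python
import math


def two_proportion_z(correct_a: int, n_a: int, correct_b: int, n_b: int) -> float:
    """z statistic for H0: endpoints A and B have the same accuracy.

    Positive values mean A scored higher than B.
    """
    p_a, p_b = correct_a / n_a, correct_b / n_b
    pooled = (correct_a + correct_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:  # both endpoints got everything right (or everything wrong)
        return 0.0
    return (p_a - p_b) / se


def flag_substitution(correct_official: int, correct_proxy: int, n_questions: int,
                      z_threshold: float = 3.0) -> tuple[bool, float]:
    """Flag a proxy whose accuracy is far below the official API's on the same questions.

    z_threshold is an illustrative cutoff; a significant gap suggests, but does
    not prove, that a different model answered.
    """
    z = two_proportion_z(correct_official, n_questions, correct_proxy, n_questions)
    return z > z_threshold, z


# Hypothetical 200-question run: about 84% via the official API, 37% via the proxy.
flagged, z = flag_substitution(correct_official=168, correct_proxy=74, n_questions=200)
print(flagged, round(z, 2))
```

Because both endpoints answer the same questions, a paired test such as McNemar’s would be more precise; the unpaired z-test is used here only for brevity. Either way, the check requires a trusted reference endpoint, which is exactly what relay station users lack.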

Beyond model swapping, token inflation lets a proxy advertise a low per-token price while inflating the total bill. Some inflation is structural: proxies rotate accounts frequently, which as a side effect breaks cache continuity and forces users to burn full-price tokens on context that would otherwise be served cheaply from cache. Some may be intentional, as proxy providers seek to maximize usage volume. From the outside, the two are hard to tell apart.
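The cost of losing the cache can be estimated with a toy model. The sketch assumes Anthropic’s published prompt-caching multipliers at the time of writing (cache writes at 1.25 times and cache reads at 0.1 times the base input price; check the current pricing page), assumes that a cache built under one upstream account cannot be read from another, and simplifies by holding the shared context at a fixed size. The function name and token counts are hypothetical:

```python
CACHE_WRITE_MULT = 1.25  # ASSUMED multiplier for writing a prompt prefix to cache
CACHE_READ_MULT = 0.10   # ASSUMED multiplier for reading a cached prefix


def session_input_cost(prefix_tokens: int, new_tokens_per_turn: int, turns: int,
                       cache_survives: bool) -> float:
    """Input cost of a multi-turn session, in base-price token units.

    Every turn resends the same prefix (system prompt, repository context)
    plus some new tokens. If the cache survives, only the first turn writes the
    prefix and later turns read it. If every request lands on a different
    upstream account, each turn misses the cache and writes the prefix again.
    """
    total = 0.0
    for turn in range(turns):
        if cache_survives and turn > 0:
            total += prefix_tokens * CACHE_READ_MULT
        else:
            total += prefix_tokens * CACHE_WRITE_MULT
        total += new_tokens_per_turn  # new tokens are billed at the base price
    return total


stable = session_input_cost(50_000, 1_000, turns=20, cache_survives=True)
rotated = session_input_cost(50_000, 1_000, turns=20, cache_survives=False)
print(stable, rotated, round(rotated / stable, 2))
```

In this toy session, missing the cache on every request multiplies the input bill roughly sevenfold even though the per-token price never changes, which is how a cheap per-token rate can coexist with a higher total expense.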

Third meal: logs as product. This may be the most critical part, where data privacy and distillation intersect. Every request routed through a proxy (full prompts, full responses, tool calls, iterations) resides on the proxy operator’s servers. For AI coding proxies, these logs contain lengthy reasoning chains, real engineering decisions, repository context, and human-verified correct outputs. That makes them ideal post-training data: supervised fine-tuning on real engineering tasks, and distillation of Claude’s reasoning patterns into smaller models using complete reasoning trajectories. Chinese developer communities assert this is happening in at least some cases, but whether proxy operators systematically collect and sell such logs, and to whom, remains unconfirmed. Distilled data does exist downstream on the open web: several datasets of Claude Opus 4.6 inference outputs, of unknown provenance, circulate on Hugging Face. In theory, similar datasets could be cleaned and sold to other Chinese model developers.

The first two meals deliver tokens cheaper than Anthropic’s official pricing, but reaching absurdly low levels like 10% or even 5% of list price requires the third. As the Chinese saying goes, “There’s no such thing as a free lunch.” Several Chinese developers say the markup business is merely a customer-acquisition tactic and that the real profit comes from harvesting logs. Users are both paying customers and unpaid data producers, trading their private data for discounted access. Others warn that user data leaked from proxies may be used for marketing, fraud, or even extortion. To avoid these privacy risks, some Chinese developers have built their own Claude Code API proxies and open-sourced operational guides.

What Real-Name Verification Cannot Reveal

AI usage is shifting from chatbots toward tool use. With the rise of agents and token-metered usage, using U.S. models is no longer just a question of access but of cost-effectiveness, because China’s AI ecosystem, from elite labs and university research groups to independent developers and hobbyists, is generally short of funding. Meanwhile, user data generated via relay stations clearly flows downstream, feeding model training, data trading, or fraud. If distillation is part of this economic system, the problem is far larger than the handful of elite participants that the U.S. government and AI companies have in mind.

History shows that access restrictions rarely deter determined users. Restrictions raise access costs, thereby creating profitable markets for anyone able to lower them. The Great Firewall made VPN services a thriving cottage industry in China. KYC requirements spawned an economy of forged identities—from domestic ID resellers to biometric collection operations in Southeast Asia or Africa. Multi-layered controls by frontier AI companies—geographic blocking, phone verification, credit card requirements, and now real-time biometric checks—produce identical effects.

Yet this story transcends the “Anthropic/U.S. vs. China” framework. It points to an unsettling truth about access control—whether across geopolitical borders or more broadly. A developer in a geographically blocked region bypassing controls structurally mirrors how terrorists might access frontier AI models to engineer destructive bioweapons without being traced. Access issues are both unique geopolitical considerations and shared security concerns.

Today, AI security research treats system-level access controls, especially detection, monitoring, and account suspension for publicly available closed-weight models, as critical safeguards. Monitoring relies on developers controlling the inference infrastructure, which lets them flag harmful inputs and outputs in real time. Detection (e.g., KYC requirements) assumes providers can attribute behavior to identifiable actors; account suspension likewise assumes that suspending an account effectively denies access. But when Chinese users route requests through relay stations, the proxy operators, not U.S. model providers, control the access path. When harmful requests arrive, AI model providers see not the real user’s IP but the proxy’s. When an account is suspended, the upstream supply chain can spin up new proxies within hours.
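What a provider can still attribute once traffic passes through a relay can be illustrated with a toy model. Everything in this sketch is hypothetical: the user names, the IP addresses (taken from the reserved documentation ranges), the account IDs, and the assumption that the relay hands out pooled accounts round-robin. The provider’s log keeps only the relay’s IP and whichever pooled account carried each request:

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass(frozen=True)
class Request:
    user: str     # real end user, known only to the relay operator
    user_ip: str  # end user's IP, which never reaches the provider
    prompt: str


def provider_log(requests: list[Request], relay_ip: str, account_pool: list[str]) -> list[dict]:
    """What the upstream provider would record for requests forwarded by one relay.

    ASSUMES the relay assigns pooled accounts round-robin and sends every
    request from its own IP address.
    """
    accounts = cycle(account_pool)
    return [{"source_ip": relay_ip, "account": next(accounts), "prompt": r.prompt}
            for r in requests]


requests = [
    Request("alice", "198.51.100.10", "refactor this module"),
    Request("bob", "198.51.100.20", "write unit tests"),
    Request("alice", "198.51.100.10", "explain this stack trace"),
    Request("carol", "198.51.100.30", "draft a README"),
]
pool = ["acct-01", "acct-02", "acct-03"]
log = provider_log(requests, relay_ip="203.0.113.7", account_pool=pool)

print({entry["source_ip"] for entry in log})  # a single IP for three real users
print([entry["account"] for entry in log])    # alice's two requests used different accounts

# Suspending the account behind one request leaves the relay running on the rest.
remaining = [a for a in pool if a != log[0]["account"]]
print(provider_log(requests[:1], "203.0.113.7", remaining))
```

Neither the per-account view nor the per-IP view in this model maps back to a real person, and removing one account from the pool changes nothing for the user who triggered the suspension.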

The problems grow worse for more sophisticated monitoring tools. Anthropic’s Clio system, designed in part to detect coordinated abuse that is invisible at the level of a single conversation, works by identifying patterns across accounts and conversations: for instance, recognizing an automated network of accounts generating search-engine spam with similar prompt structures, then suspending those accounts. But because requests flow through proxies, suspension does little to stop the underlying behavior. And for deliberately orchestrated attacks, such as dispersing harmful queries across multiple stages and proxy accounts so that each piece looks harmless, the cross-account patterns are far less obvious than those of coordinated spam, whose signals are inherently conspicuous.
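The kind of cross-account signal that makes templated spam conspicuous can be shown with a deliberately simple stand-in. Anthropic has described Clio as summarizing conversations with a model and clustering those summaries under privacy safeguards; the sketch below instead links accounts whose prompts share most of their word trigrams (Jaccard similarity). The prompts, account IDs, and 0.5 threshold are hypothetical:

```python
from itertools import combinations


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Word k-grams of a prompt, lowercased; short prompts yield one shingle."""
    words = text.lower().split()
    return {tuple(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}


def jaccard(a: set, b: set) -> float:
    return len(a & b) / len(a | b) if a | b else 1.0


def linked_accounts(logs: list[tuple[str, str]], threshold: float = 0.5) -> list[set[str]]:
    """Group accounts whose prompts are near-duplicates, using union-find."""
    parent = {account: account for account, _ in logs}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    fingerprints = [(account, shingles(prompt)) for account, prompt in logs]
    for (acct_a, sh_a), (acct_b, sh_b) in combinations(fingerprints, 2):
        if acct_a != acct_b and jaccard(sh_a, sh_b) >= threshold:
            parent[find(acct_a)] = find(acct_b)

    groups: dict[str, set[str]] = {}
    for account in parent:
        groups.setdefault(find(account), set()).add(account)
    return [g for g in groups.values() if len(g) > 1]


logs = [
    ("acct-1", "write a five star review for the best cheap phone store in town"),
    ("acct-2", "write a five star review for the best cheap laptop store in town"),
    ("acct-3", "write a five star review for the best cheap shoe store in town"),
    ("acct-4", "explain how photosynthesis converts light into chemical energy"),
    ("acct-5", "summarize the main causes of the french revolution"),
]
print(linked_accounts(logs))
```

The three templated review requests are linked across accounts while the unrelated prompts are not. The pairwise comparison is quadratic and would be replaced by something like MinHash at scale. The same surface-similarity logic also shows the limit described above: requests split into dissimilar, individually harmless pieces share no template for such a check to latch onto.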

Finally, relay stations do not merely reflect traditional offense-defense dynamics, whether between U.S. AI companies and Chinese users or between AI safeguards and malicious actors. The black market has its own supply chain and exploitation logic, generating harms that go far beyond the original access problem. Facial data harvested today to bypass Anthropic’s KYC verification for relay stations may tomorrow be resold to open fraudulent financial accounts, forge employment records, or generate deepfakes, with the legal and reputational consequences borne by the original subjects in the Global South. The infrastructure that routes Claude requests can be turned against its own users through model substitution, targeted scams built on leaked prompt data, or extortion. The account-farming operations that sustain proxy pools (bulk SMS verification, fraudulent registration, credential stuffing) feed broader criminal markets in robocalls, phishing SMS, fraudulent loan applications, and credit card fraud. Many of these harms have nothing to do with AI or geopolitics.

Every byproduct of today’s gray market, from the potential for terrorists to use AI to synthesize the next pandemic to real-world exploitation and crime, is already present. The Great Firewall and AI geographic blocking both try to draw national boundaries around who can access frontier technology, but the gray market shows that the harms cannot be divided along those lines.

Acknowledgments:

Zilan thanks Alan Chan, Gabriel Wagner, Karuna Nandkumar, and Kayla Blomquist for helpful feedback.

The author acknowledges using LLMs for preliminary desk research, technical concept clarification, and manuscript editing—and gratefully notes she can still access Claude via a Singapore node using a VPN in mainland China without triggering KYC.

Sources include informal conversations.

An Application Programming Interface (API) is the channel enabling developers to integrate software directly with AI models—sending requests programmatically to Anthropic’s servers and receiving responses, rather than interacting through a browser.

Specifically, by setting the ANTHROPIC_BASE_URL environment variable to the proxy’s address.

Sources include informal conversations and desk research.
