"AI Hackers" Are Coming: How Can Agentic AI Become the New Guardian?

Using AI as the spear to attack AI as the shield.

Author: Lafeng de Geek

01 AI Rise: The Covert Security Battle Beneath a Dual-Edged Technology

As AI technology rapidly advances, the threats facing cybersecurity are becoming increasingly complex. Attack methods have not only become more efficient and stealthy but have also given rise to a new kind of "AI hacker," triggering a range of novel cybersecurity crises.

First, generative AI is reshaping the "precision" of online fraud.

In simple terms, this means intelligent phishing attacks. For example, in more targeted scenarios, attackers leverage publicly available social data to train AI models, mass-producing personalized phishing emails that mimic specific users' writing styles or linguistic habits, enabling "customized" scams that bypass traditional spam filters and significantly increase attack success rates.

Next comes the most widely known threat: deepfakes and identity impersonation. Before AI matured, the traditional version of this "face-swap" fraud was Business Email Compromise (BEC), in which attackers disguise the email sender as your boss, colleague, or business partner to steal corporate information, money, or other critical assets.

Now, "face-swapping" has truly arrived. AI-generated face and voice manipulation technologies can forge the identities of public figures or loved ones for scams, public opinion manipulation, or even political interference. Just two months ago, a CFO at a Shanghai-based company received a video conference invitation from someone appearing to be the "chairman." Using AI face-swapping, the impostor claimed an urgent need to pay an "overseas cooperation deposit." Following instructions, the CFO transferred 3.8 million yuan to a designated account, later discovering it was a cross-border scam orchestrated by a fraud ring using deepfake technology.

The third threat is automated attacks and vulnerability exploitation. Advances in AI have driven many fields toward intelligent automation, and cyberattacks are no exception. Attackers can use AI to automatically scan for system vulnerabilities, generate attack code dynamically, and launch rapid, indiscriminate attacks on targets. In an AI-powered "zero-day attack," for instance, a malicious program can be written and executed as soon as a vulnerability is discovered, leaving traditional defense systems unable to respond in real time.

During this year's Spring Festival, DeepSeek’s official website suffered a massive 3.2 Tbps DDoS attack. At the same time, hackers infiltrated via API injection with adversarial samples, tampering with model weights and causing core services to collapse for 48 hours, with direct economic losses in the tens of millions of dollars. Post-incident tracing revealed long-term infiltration traces linked to the U.S. NSA.

Data poisoning and model vulnerabilities represent another emerging threat. By injecting false information into AI training data (i.e., data poisoning) or exploiting inherent model flaws, attackers can manipulate AI into producing incorrect outputs—posing direct security risks to critical domains and potentially triggering cascading catastrophic consequences. For example, autonomous driving systems may misinterpret "Do Not Enter" signs as "Speed Limit" due to adversarial samples, or medical AI could wrongly classify benign tumors as malignant.
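To make the adversarial-sample idea concrete, here is a minimal sketch on a toy linear classifier. It uses a sign-of-gradient perturbation in the style of the fast gradient sign method; the weights, feature values, and the two label names are hypothetical and chosen only for illustration, not taken from any real autonomous driving system.

```python
from typing import List

# Hypothetical weights of a toy linear sign classifier (illustration only).
WEIGHTS: List[float] = [1.0, -2.0, 0.5]


def score(x: List[float]) -> float:
    """Linear score: positive means 'do_not_enter', otherwise 'speed_limit'."""
    return sum(w * xi for w, xi in zip(WEIGHTS, x))


def classify(x: List[float]) -> str:
    return "do_not_enter" if score(x) > 0 else "speed_limit"


def sign(value: float) -> int:
    return (value > 0) - (value < 0)


def perturb(x: List[float], epsilon: float) -> List[float]:
    """Move every feature by at most epsilon in the direction that lowers the score.

    For a linear score the gradient with respect to x is the weight vector,
    so stepping against sign(weight) is the sign-of-gradient step.
    """
    return [xi - epsilon * sign(w) for w, xi in zip(WEIGHTS, x)]


clean_sign = [0.3, 0.1, 0.2]               # hypothetical image features
adversarial_sign = perturb(clean_sign, 0.1)

print(classify(clean_sign))         # do_not_enter
print(classify(adversarial_sign))   # speed_limit
```

Each feature changes by no more than 0.1, yet the predicted label flips, which is the behavior the sign example above describes.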

02 AI Must Be Defended by AI

Faced with new cybersecurity threats driven by AI, traditional protection models are proving inadequate. So what countermeasures do we have?

It's clear that the current industry consensus has shifted toward "using AI to fight AI"—not just a technological upgrade, but a paradigm shift in security.

Current efforts fall roughly into three categories: AI model security protection technologies, industry-level defense applications, and broader government and international collaboration.

The key to AI model security protection lies in intrinsic model hardening.

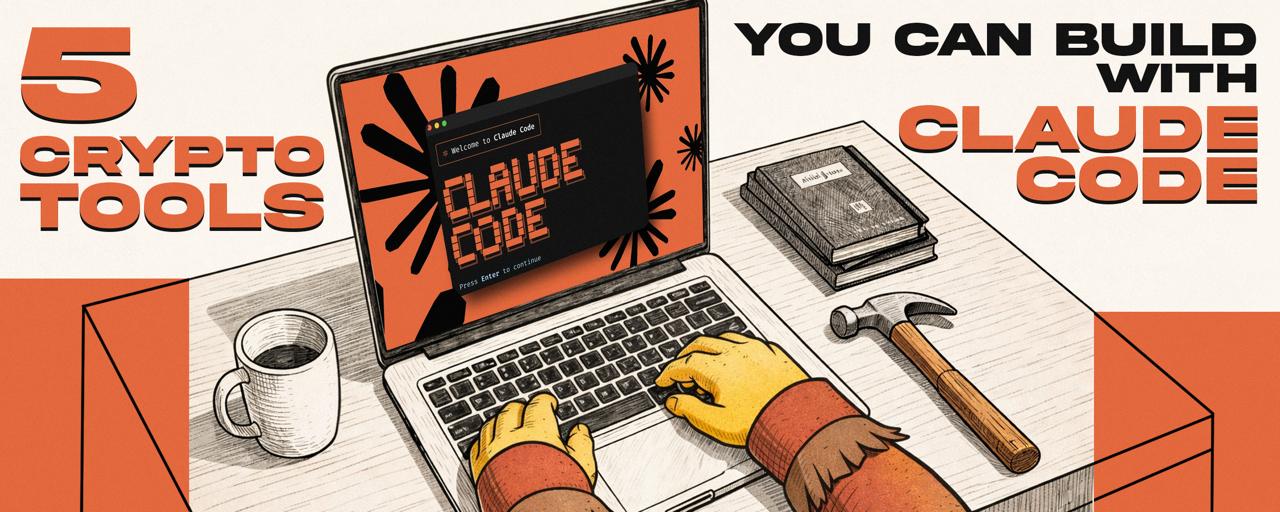

Take the "jailbreak" vulnerability in large language models (LLMs) as an example. Their security mechanisms often fail against generalized jailbreak prompts, with which attackers systematically bypass built-in protective layers to induce the model to generate violent, discriminatory, or illegal content. To prevent LLM jailbreaking, model companies have experimented with various solutions. For instance, Anthropic released its "Constitutional Classifiers" in February this year.

Here, the "constitution" is a set of inviolable natural-language rules that define permitted and restricted content. Classifiers trained on synthetic data built from these rules act as safeguards, monitoring input and output in real time. In benchmark testing, the rate at which Claude 3.5 blocked advanced jailbreak attempts rose from 14% to 95% with the classifiers in place, significantly reducing the risk of LLM jailbreaking.
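The input-and-output monitoring described above can be sketched as a thin wrapper around a model call. This is only an illustration of the control flow: the keyword check below is a toy stand-in for a trained classifier, and `RESTRICTED_PHRASES`, `toy_classifier`, `guarded_generate`, and `echo_model` are hypothetical names, not part of Anthropic's system.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical rule list; a real classifier is a trained model, not keywords.
RESTRICTED_PHRASES = ("build a bomb", "steal credentials")


def toy_classifier(text: str) -> bool:
    """Return True when the text matches a restricted phrase (toy stand-in)."""
    lowered = text.lower()
    return any(phrase in lowered for phrase in RESTRICTED_PHRASES)


@dataclass
class GuardResult:
    allowed: bool
    stage: str  # "input", "output", or "ok"
    text: str


def guarded_generate(prompt: str, model: Callable[[str], str]) -> GuardResult:
    # Screen the prompt before it reaches the model.
    if toy_classifier(prompt):
        return GuardResult(False, "input", "Request declined.")
    reply = model(prompt)
    # Screen the reply before it reaches the user.
    if toy_classifier(reply):
        return GuardResult(False, "output", "Response withheld.")
    return GuardResult(True, "ok", reply)


def echo_model(prompt: str) -> str:
    """Hypothetical model stub that simply echoes the prompt."""
    return f"You asked: {prompt}"
```

Calling `guarded_generate("What is phishing?", echo_model)` passes both checks, while a prompt containing a restricted phrase is stopped at the input stage before the model is ever called.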

Beyond model-based, general-purpose defenses, industry-specific defense applications are equally noteworthy. Scenario-driven protection in vertical sectors is becoming a key breakthrough point: the financial sector builds anti-fraud barriers through AI risk control models and multimodal data analysis; open-source ecosystems achieve rapid zero-day threat response via intelligent vulnerability hunting; enterprise sensitive information protection relies on AI-driven dynamic management systems.

For example, Cisco demonstrated a solution at the Singapore International Cyber Week that can instantly block employees’ attempts to submit sensitive data queries to ChatGPT and automatically generate compliance audit reports to optimize management workflows.

At the macro level, cross-regional governmental and international collaboration is accelerating. The Cyber Security Agency of Singapore released the *Guidelines for Artificial Intelligence System Security*, mandating local deployment and data encryption to curb misuse of generative AI, and in particular establishing protection standards for detecting AI-forged identities in phishing attacks. Meanwhile, the U.S., UK, and Canada jointly launched the "AI Cyber Agent Initiative," focusing on trusted system development and real-time assessment of APT attacks, and strengthening collective defense through a unified security certification framework.

So, which methods best harness AI to tackle cybersecurity challenges in the AI era?

"We need an AI security intelligence hub and build a new system around it," emphasized Zhang Fu, founder of QingTeng Cloud Security, during the Second Wuhan Innovation Forum on Cybersecurity. He stressed that "using AI to combat AI" will be the core of future cybersecurity defense architectures. "Within three years, AI will disrupt the entire security industry and all B2B sectors. Products will be rebuilt, achieving unprecedented improvements in efficiency and capability. Future products will be designed for AI, not humans."

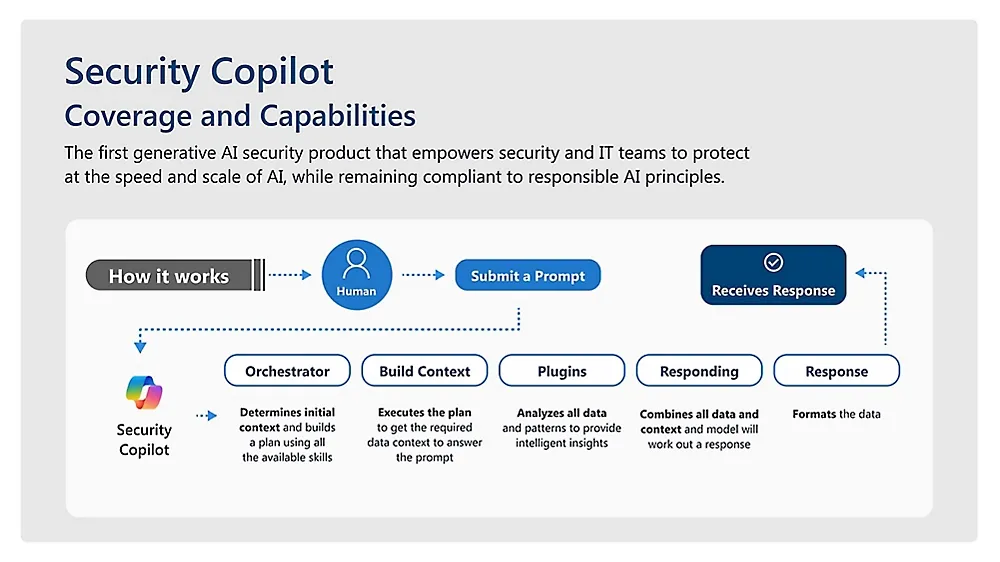

Among these solutions, Microsoft's Security Copilot clearly exemplifies the idea that "future products are for AI." One year ago, Microsoft launched Security Copilot, an intelligent assistant that helps security teams quickly and accurately detect, investigate, and respond to security incidents. One month ago, it introduced new AI agents that automatically assist in critical areas such as phishing attacks, data security, and identity management.

Microsoft added six proprietary AI agents to expand Security Copilot functionality. Three assist cybersecurity personnel in filtering alerts: the phishing classification agent reviews phishing warnings and filters out false positives; two others analyze Purview notifications to detect unauthorized employee use of business data.

The Conditional Access Optimization Agent collaborates with Microsoft Entra to identify insecure user access rules and generates one-click remediation plans for administrators. The Vulnerability Remediation Agent integrates with device management tool Intune to quickly locate vulnerable endpoints and apply OS patches. The Threat Intelligence Briefing Agent generates cybersecurity threat reports that may impact organizational systems.

03 Wuxiang: High-Level L4 Autonomous Agents Ensuring Safety

Similarly, in China, QingTeng Cloud Security launched Wuxiang, a full-stack security agent, to achieve truly "autonomous driving"-level security protection. It is the world’s first security AI product to transition from "assisted AI" to "autonomous agent" (Autopilot), and its core breakthrough lies in overturning the traditional "reactive" mode so that it operates independently, automatically, and intelligently.

By integrating machine learning, knowledge graphs, and automated decision-making technologies, Wuxiang independently completes the entire closed-loop process—from threat detection and impact assessment to response and resolution—achieving true autonomous, goal-driven decisions. Its "Agentic AI Architecture" mimics the collaborative logic of human security teams: a "brain" integrates cybersecurity knowledge bases to support planning; "eyes" perceive network environment dynamics at fine granularity; "hands and feet" flexibly invoke diverse security toolchains, forming an efficient judgment network through multi-agent collaboration, enabling task division, cooperation, and information sharing.

Technically, Wuxiang adopts the "ReAct pattern" (Act-Observe-Think-Act cycle) and a "Plan AI + Action AI dual-engine architecture" to ensure dynamic error correction in complex tasks. When tool invocation fails, the system autonomously switches to backup strategies instead of halting. For example, during APT attack analysis, Plan AI acts as the "organizer" breaking down task objectives, while Action AI serves as the "investigation expert" performing log analysis and threat modeling, both advancing in parallel based on a shared real-time knowledge graph.
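The following is a minimal sketch of a think-act-observe loop in which a failed tool call falls back to a backup tool instead of halting. It is not Wuxiang's implementation: the tool functions, the `TOOLBOX` registry, and the `plan`/`act` split (standing in loosely for a planning engine and an action engine) are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Tool = Callable[[str], str]


def primary_log_search(query: str) -> str:
    # Hypothetical tool that is currently unavailable.
    raise TimeoutError("log backend unreachable")


def backup_log_search(query: str) -> str:
    # Hypothetical fallback that answers from a local cache.
    return f"cached logs matching '{query}'"


# Each step name maps to an ordered list of tools: primary first, then backups.
TOOLBOX: Dict[str, List[Tool]] = {
    "search_logs": [primary_log_search, backup_log_search],
}


def plan(goal: str) -> List[Tuple[str, str]]:
    """Planning stand-in: break a goal into (tool_name, argument) steps."""
    return [("search_logs", goal)]


def act(tool_name: str, argument: str, trace: List[str]) -> str:
    """Action stand-in: try each tool in turn and record what was observed."""
    for tool in TOOLBOX[tool_name]:
        try:
            observation = tool(argument)
            trace.append(f"observe: {tool.__name__} ok")
            return observation
        except Exception as err:
            trace.append(f"observe: {tool.__name__} failed ({err})")
    raise RuntimeError(f"all tools failed for {tool_name}")


def run(goal: str) -> Tuple[List[str], List[str]]:
    trace: List[str] = []
    results: List[str] = []
    for tool_name, argument in plan(goal):
        trace.append(f"think: need {tool_name} for '{argument}'")
        results.append(act(tool_name, argument, trace))
    return results, trace
```

Running `run("suspicious login")` records the primary tool's failure in the trace and still returns a result from the backup, which is the error-correction behavior the paragraph above describes.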

At the functional module level, Wuxiang has built a complete autonomous decision-making ecosystem: agent personas simulate the reflective, iterative thinking of security analysts to dynamically optimize decision paths; tool invocation integrates host security log queries, network threat intelligence retrieval, and LLM-driven malware analysis; environmental perception captures real-time host assets and network information; knowledge graphs dynamically store entity relationships to aid decision-making; multi-agent collaboration enables parallel task execution through task decomposition and information sharing.

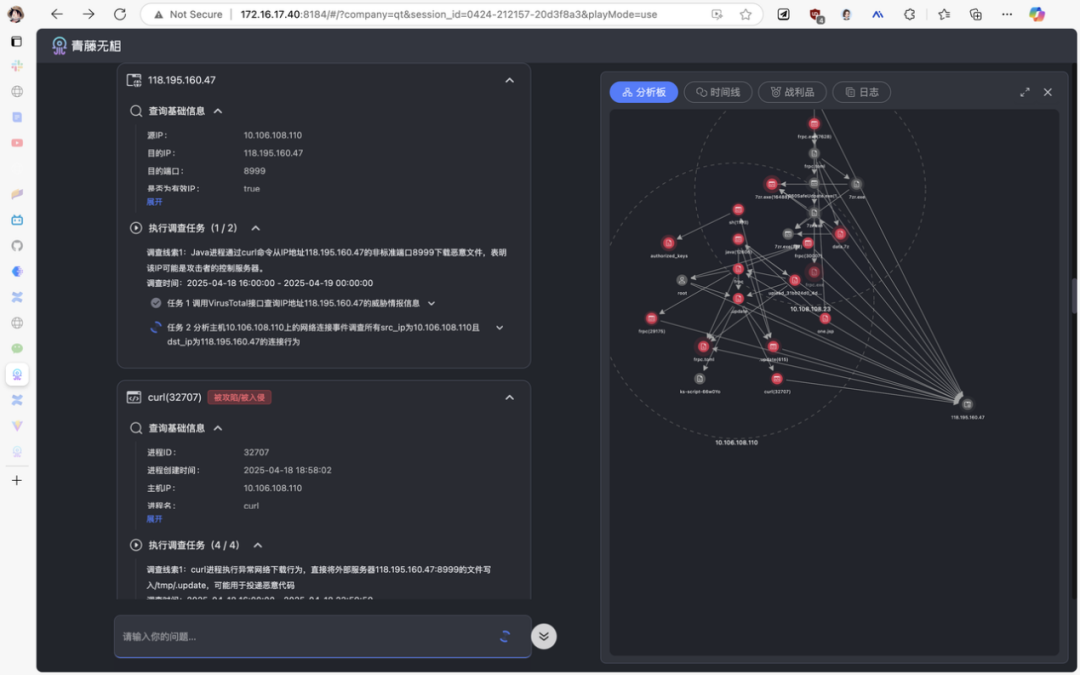

Currently, Wuxiang performs exceptionally well in three core application scenarios: alert analysis, forensic investigation, and security report generation.

In traditional security operations, verifying the authenticity of massive volumes of alerts is time-consuming and labor-intensive. Take a local privilege escalation alert as an example: Wuxiang’s alert analysis agent automatically parses the threat characteristics, invokes tools such as process permission analysis, parent process tracing, and program signature verification, and ultimately determines that the alert is a false positive, all with zero human intervention. In QingTeng’s tests on existing alerts, the system has achieved 100% alert coverage and 99.99% analysis accuracy, reducing manual workload by over 95%.
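As a rough illustration of how the three checks named above might combine into a verdict, consider the sketch below. The decision rule, the `ProcessInfo` fields, and the `TRUSTED_PARENTS` allowlist are assumptions made for this example; the article does not describe Wuxiang's actual logic.

```python
from dataclasses import dataclass


@dataclass
class ProcessInfo:
    name: str
    parent: str
    runs_as_root: bool
    signature_valid: bool


# Hypothetical allowlist of parents that legitimately start privileged helpers.
TRUSTED_PARENTS = {"systemd", "sshd", "cron"}


def triage_privilege_alert(proc: ProcessInfo) -> str:
    """Return 'false_positive' or 'escalate' for a privilege escalation alert."""
    # Process permission analysis: no root privileges means nothing escalated.
    if not proc.runs_as_root:
        return "false_positive"
    # Parent process tracing plus signature verification: a signed binary
    # started by an expected parent is treated as benign in this sketch.
    if proc.parent in TRUSTED_PARENTS and proc.signature_valid:
        return "false_positive"
    # Anything else is handed on for deeper investigation.
    return "escalate"


benign = ProcessInfo("logrotate", "cron", True, True)
suspicious = ProcessInfo("tmp_helper", "bash", True, False)

print(triage_privilege_alert(benign))      # false_positive
print(triage_privilege_alert(suspicious))  # escalate
```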

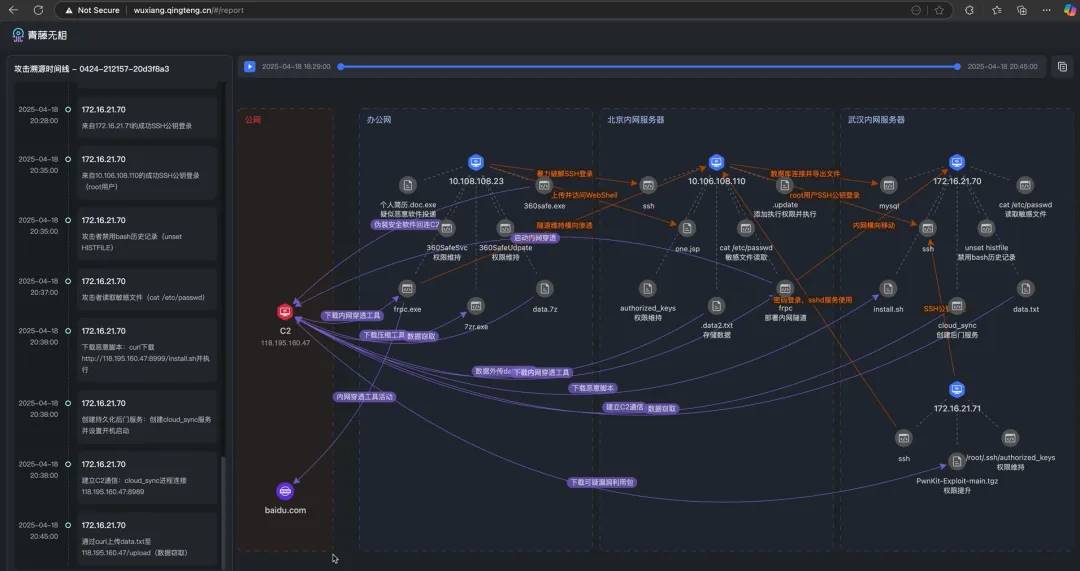

When facing real threats like Webshell attacks, the agent confirms attack validity within seconds through cross-dimensional correlation such as code feature extraction and file permission analysis. Deep forensics tasks that traditionally require multi-department collaboration and days to complete—such as reconstructing propagation paths and assessing lateral impacts—are now automatically chained by the system using host logs, network traffic, and behavioral baselines to generate full attack chain reports, compressing response cycles from "days" to "minutes."

"Our core is transforming the cooperative relationship between AI and humans—we treat AI as a collaborator, achieving a leap from L2 to L4, from assisted driving to high-level autonomous driving," shared Hu Jun, co-founder and product VP at QingTeng. "As AI adapts to more scenarios and achieves higher decision success rates, it gradually takes on greater responsibility, shifting the balance of accountability between humans and AI."

In the forensic analysis scenario, a Webshell alert triggers coordinated multi-agent traceability driven by Wuxiang AI: the "Judgment Expert" locates the one.jsp file based on the alert and generates parallel tasks including file content analysis, author tracing, directory inspection, and process tracking. The "Security Investigator" agent uses file log tools to quickly identify the java (12606) process as the source. This process and the associated host 10.108.108.23 (identified via frequent interactions in access logs) are subsequently brought into investigation.

The agent dynamically expands leads through a threat graph, digging layer by layer from a single file to processes and hosts, with the Judgment Expert synthesizing results to assess overall risk. This process compresses manual investigations—which typically take hours to days—into mere minutes, reconstructing the full attack chain with precision surpassing senior human security experts, comprehensively tracking lateral movement paths. Red team evaluations also show its systematic investigation is nearly impossible to evade.
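The layer-by-layer expansion from file to process to host can be sketched as a breadth-first walk over a graph of linked entities. The node labels reuse the identifiers from the example above, but the graph structure and the `expand_leads` function are hypothetical and only illustrate the traversal.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical threat graph: each entity maps to the entities linked to it.
THREAT_GRAPH: Dict[str, List[str]] = {
    "file:one.jsp": ["process:java(12606)"],
    "process:java(12606)": ["host:10.108.108.23"],
    "host:10.108.108.23": [],
}


def expand_leads(start: str, graph: Dict[str, List[str]]) -> List[str]:
    """Breadth-first walk from the alerted entity; returns entities in visit order."""
    seen: Set[str] = {start}
    order: List[str] = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        order.append(node)
        for neighbor in graph.get(node, []):
            if neighbor not in seen:  # avoid revisiting entities on cyclic graphs
                seen.add(neighbor)
                queue.append(neighbor)
    return order


print(expand_leads("file:one.jsp", THREAT_GRAPH))
```

Starting from the alerted file, the walk visits the process and then the host, so each new entity becomes a lead for the next round of investigation.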

"Large models outperform humans because they thoroughly examine every corner rather than excluding low-probability cases based on experience," explained Hu Jun. "This means both breadth and depth are superior."

After investigating complex attack scenarios, compiling alerts and investigative clues into reports is usually tedious and time-consuming. AI, however, enables one-click summarization, clearly presenting the attack process in a visual timeline—like a movie seamlessly showing key events. The system automatically organizes key evidence into critical frames of the attack chain and combines contextual environmental information to generate dynamic attack path maps, presenting the entire attack trajectory in an intuitive, multidimensional way.
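At its simplest, turning scattered evidence into a timeline means sorting events by time and rendering one line per key event. The sketch below shows only that step; the timestamps and event descriptions are invented for illustration, and `build_timeline` is a hypothetical helper.

```python
from datetime import datetime
from typing import List, Tuple

Event = Tuple[str, str]  # (ISO 8601 timestamp, description)


def build_timeline(events: List[Event]) -> List[str]:
    """Sort evidence chronologically and render one line per key event."""
    ordered = sorted(events, key=lambda event: datetime.fromisoformat(event[0]))
    return [f"[{stamp}] {text}" for stamp, text in ordered]


# Hypothetical evidence, collected out of order during an investigation.
EVIDENCE: List[Event] = [
    ("2025-01-01T10:07:00", "java (12606) flagged as source process"),
    ("2025-01-01T10:02:00", "one.jsp detected by Webshell alert"),
    ("2025-01-01T10:15:00", "host 10.108.108.23 added to investigation"),
]

for line in build_timeline(EVIDENCE):
    print(line)
```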

04 Conclusion

Clearly, AI development presents a dual challenge for cybersecurity.

On one hand, attackers use AI to automate, personalize, and conceal their assaults; on the other, defenders must accelerate technological innovation, enhancing detection and response capabilities through AI. In the future, the competition between offensive and defensive AI technologies will determine the overall state of cybersecurity, and the refinement of security agents will be key to balancing risk and progress.

Security agent Wuxiang brings new changes to both security architecture and cognitive paradigms.

At its core, Wuxiang transforms how AI is used. Its breakthrough lies in integrating multidimensional data perception, protection strategy generation, and explainable decision-making into an organic whole—shifting from treating AI as a tool to empowering AI to work independently and autonomously.

By correlating heterogeneous data such as logs, text, and traffic, the system can detect early signs of APT activity before attackers complete their chains. More importantly, its visually interpretable decision-making process turns the traditional "black-box alert"—where tools know "what" but not "why"—into history. Security teams can now not only see threats but also understand the logic behind their evolution.

This innovation represents a paradigm shift in security thinking—from "fixing the fence after the sheep are lost" to "preparing before the rain comes"—a redefinition of the rules of engagement in offense-defense dynamics.

Wuxiang is like a hunter with digital intuition: by modeling microscopic behavioral features such as memory operations in real time, it detects hidden custom trojans amid vast noise; its dynamic attack surface management engine continuously evaluates asset risk weights, ensuring precise allocation of protection resources to critical systems; its intelligent threat intelligence digestion mechanism transforms tens of thousands of daily alerts into actionable defense commands—and even predicts the evolution direction of attack variants. While traditional solutions struggle to respond to ongoing intrusions, Wuxiang is already anticipating and blocking the attacker’s next move.

"The emergence of AI intelligence hub systems (high-level security agents) will completely reshape the landscape of cybersecurity. And all we need to do is fully seize this opportunity," said Zhang Fu.
