Foresight Ventures: Analysis and Reflections on Decentralized Compute Networks


As large AI models develop, compute will be the major battleground of the next decade and the most important resource for human society.

By Yihan@Foresight Ventures

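Summary

- AI and crypto currently intersect in two main directions: distributed compute and ZKML. For ZKML, see my earlier article. This article analyzes and reflects on decentralized distributed compute networks.

- As large AI models develop, compute will be the major battleground of the next decade and the most important resource for human society. Beyond commercial competition, it will also become a strategic resource in great-power rivalry. Investment in high-performance computing infrastructure and compute reserves will rise exponentially.

- Demand for decentralized distributed compute networks is greatest in large-model training, but so are the challenges and technical bottlenecks, including complex data synchronization and network optimization. Data privacy and security are also important constraints. Some existing techniques offer preliminary solutions, but their computation and communication overhead is too large for them to be applied to large-scale distributed training.

- Decentralized distributed compute networks have a better chance of landing in model inference, and the future growth in this area should also be large. They still face challenges such as communication latency, data privacy, and model security. Compared with training, inference has lower computational complexity and less data interaction, which makes it a better fit for a distributed environment.

- Two startups, Together and Gensyn.ai, serve as case studies. They illustrate the overall research directions and concrete approaches for decentralized distributed compute networks from the angles of technical optimization and incentive-layer design, respectively.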

Distributed compute: large-model training

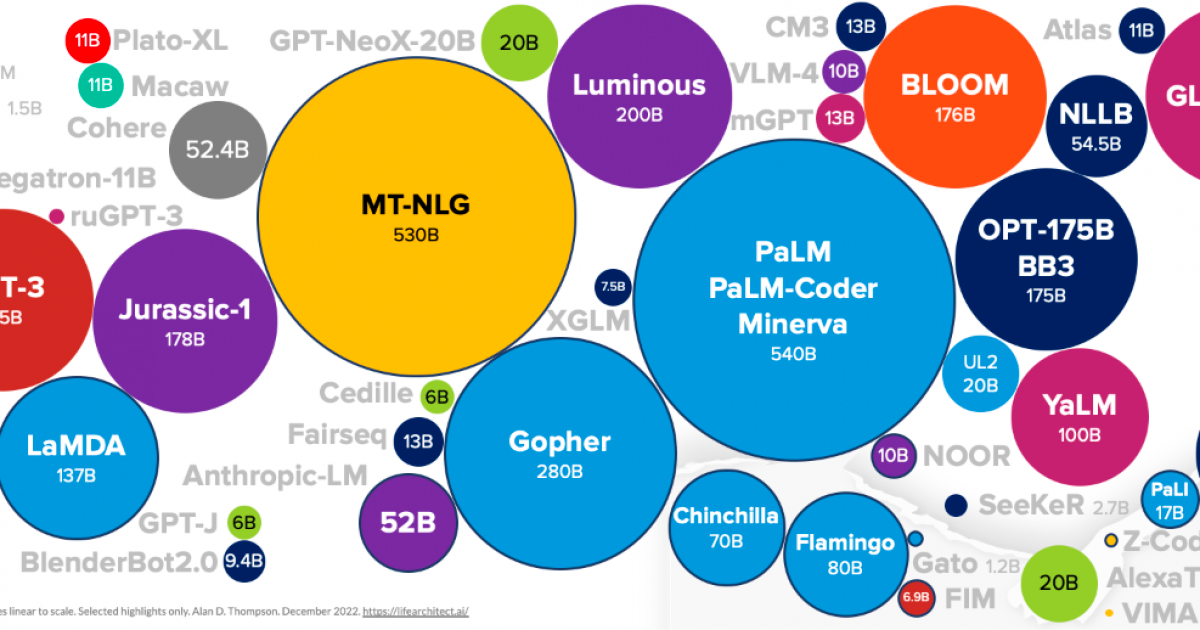

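When we discuss distributed compute for training, we usually focus on large language models. Small models need little compute, so taking on data-privacy problems and a pile of engineering issues just to distribute their training is not worth it; a centralized setup is simpler. Large language models, by contrast, need enormous compute and are at the very start of their explosive growth. From 2012 to 2018, AI compute demand doubled roughly every 4 months, and large language models are now where that demand concentrates. It is reasonable to expect huge incremental demand over the next 5 to 8 years.

Alongside the opportunity, the problems need to be seen clearly. Everyone knows the market is large, but where exactly are the challenges? Whether a project targets these problems rather than entering blindly is the core criterion for judging strong projects in this space.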

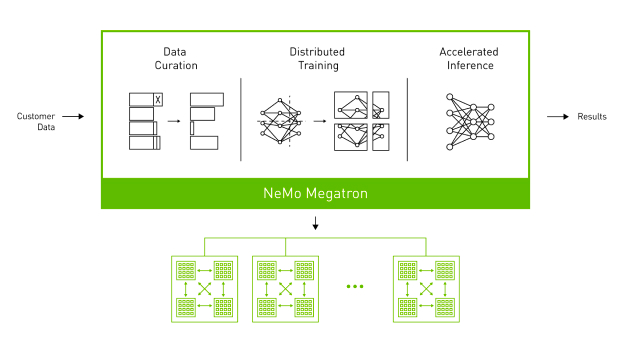

(NVIDIA NeMo Megatron Framework)

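1. Overall training process

Take training a model with 175 billion parameters as an example. Because the model is so large, it must be trained in parallel across many GPUs. Assume a centralized data center with 100 GPUs, each with 32 GB of memory.

- Data preparation: Training starts from a huge dataset containing, for example, web content, news, and books. Before training, the data must be preprocessed, including text cleaning, tokenization, and vocabulary construction.

- Data splitting: The processed data is split into batches so that it can be processed in parallel on multiple GPUs. Assume a batch size of 512, meaning each batch contains 512 text sequences. The whole dataset is then split into batches that form a batch queue.

- Data transfer between devices: At the start of each training step, the CPU takes a batch from the queue and sends it to the GPU over the PCIe bus. Assuming an average sequence length of 1024 tokens, each batch is about 512 * 1024 * 4 B = 2 MB (assuming each token is represented with 4 bytes, the size of a single-precision float). This transfer usually takes only a few milliseconds.

- Parallel training: Once a GPU receives the data, it runs the forward pass and the backward pass to compute the gradient of each parameter. Because the model is so large, a single GPU's memory cannot hold all the parameters, so model parallelism is used to distribute the parameters across multiple GPUs.

- Gradient aggregation and parameter update: After the backward pass, each GPU holds the gradients for part of the parameters. These gradients must be aggregated across all GPUs to compute the global gradient, which requires transferring data over the network. With a 25 Gbps network, transferring 700 GB of data (175 billion parameters at 4 bytes each in single precision is about 700 GB) takes about 224 seconds. Each GPU then updates the parameters it stores based on the global gradient.

- Synchronization: After the parameter update, all GPUs must synchronize so that they use consistent model parameters in the next training step. This also requires data transfer over the network.

- Repeat: The steps above are repeated until all batches have been processed or the planned number of epochs is reached.

This process involves a large amount of data transfer and synchronization, which can become the bottleneck for training efficiency. Optimizing network bandwidth and latency, and using efficient parallelism and synchronization strategies, is therefore critical for large-scale model training.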
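The two figures quoted in the walkthrough (about 2 MB per batch and about 224 seconds to move a full set of gradients) can be checked with a few lines of Python. This is only a back-of-the-envelope sketch: the helper names are made up for illustration, the network figure assumes decimal units (1 GB = 10^9 bytes), and latency and protocol overhead are ignored.

```python
# Back-of-the-envelope check of the numbers in the training walkthrough.
# Helper names are illustrative; latency and protocol overhead are ignored.

def batch_bytes(batch_size: int, seq_len: int, bytes_per_token: int = 4) -> int:
    """Size of one batch, assuming a fixed number of bytes per token."""
    return batch_size * seq_len * bytes_per_token


def transfer_seconds(num_bytes: float, link_gbps: float) -> float:
    """Time to push num_bytes through a link of link_gbps gigabits per second."""
    return num_bytes * 8 / (link_gbps * 1e9)


gradient_bytes = 175e9 * 4  # 175B parameters at 4 bytes each, about 700 GB

print(batch_bytes(512, 1024) / 2**20, "MiB per batch")
print(transfer_seconds(gradient_bytes, 25), "s to move the gradients at 25 Gbps")
```

The gap between the two results is the point: feeding a batch to a GPU is cheap, while moving the gradients once over the network takes minutes.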

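2. The communication-overhead bottleneck

Note that the communication bottleneck is also the reason distributed compute networks cannot train large language models today.

Nodes must exchange information frequently to work together, and this creates communication overhead. For large language models the problem is especially severe because of the sheer number of parameters. The overhead has several components:

- Data transfer: During training, nodes frequently exchange model parameters and gradients. This moves large volumes of data across the network and consumes a great deal of bandwidth. If network conditions are poor or the nodes are far apart, transfer latency is high, which adds further to the overhead.

- Synchronization: Nodes must coordinate to keep training correct. This requires frequent synchronization between nodes, for example to update model parameters or compute the global gradient. These operations move large amounts of data and must wait for every node to finish, which leads to heavy communication overhead and idle waiting time.

- Gradient accumulation and update: Each node computes its own gradients and sends them to other nodes for accumulation and update. This moves large amounts of gradient data and must wait for all nodes to finish computing and transmitting, which is another source of heavy communication overhead.

- Data consistency: The model parameters on every node must stay consistent. This requires frequent verification and synchronization between nodes, which again adds communication overhead.

There are methods that reduce communication overhead, such as parameter and gradient compression and efficient parallelism strategies, but they may introduce extra computation or hurt training quality. They also cannot fully solve the problem, especially when network conditions are poor or nodes are far apart.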
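To make the compression trade-off concrete, here is a minimal sketch of one common gradient-compression idea, top-k sparsification: only the k largest-magnitude gradient entries are sent, as (index, value) pairs. The function names and the toy gradient are assumptions for illustration, not taken from any specific framework.

```python
# Top-k gradient sparsification, sketched with plain Python lists.
# Function names and the toy gradient are illustrative assumptions.

def top_k_sparsify(grad, k):
    """Keep the k largest-magnitude entries; return (indices, values)."""
    ranked = sorted(range(len(grad)), key=lambda i: abs(grad[i]), reverse=True)
    indices = sorted(ranked[:k])
    return indices, [grad[i] for i in indices]


def densify(indices, values, size):
    """Rebuild a dense gradient on the receiver; dropped entries become 0."""
    dense = [0.0] * size
    for i, v in zip(indices, values):
        dense[i] = v
    return dense


grad = [0.01, -0.9, 0.05, 0.7, -0.02, 0.3]
indices, values = top_k_sparsify(grad, k=2)
restored = densify(indices, values, len(grad))
print(indices, values)
print(restored)
```

Only two of the six entries cross the network, but the receiver sees zeros where the small gradients were. That lost information is the "negative effect on training" side of the trade-off, and the sorting step is the extra computation.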

举一个例子:

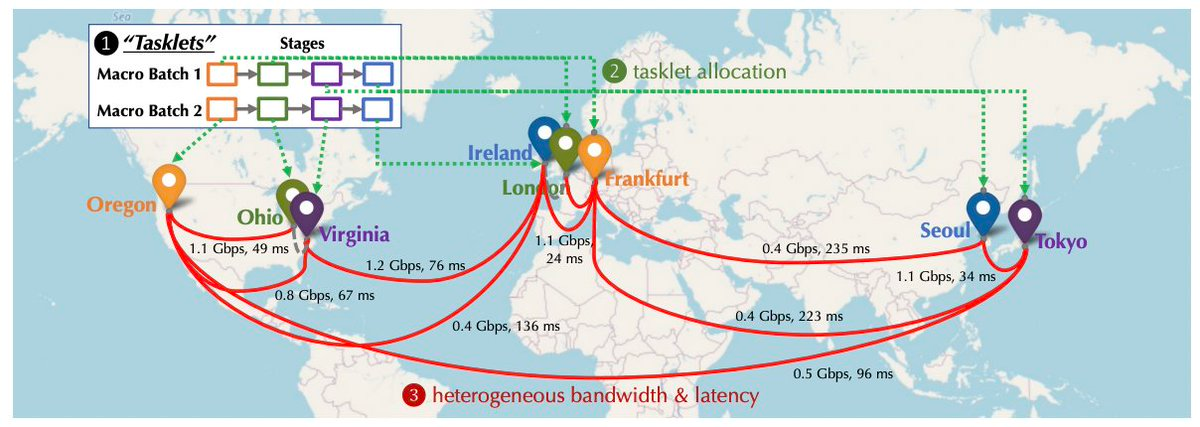

去中心化分布式算力网络

GPT-3 模型有 1750 亿个参数,如果我们使用单精度浮点数(每个参数 4 字节)来表示这些参数,那存储这些参数就需要~ 700 GB 的内存。而在分布式训练中,这些参数需要在各个计算节点之间频繁地传输和更新。

假设有 100 个计算节点,每个节点每个步骤都需要更新所有的参数,那么每个步骤都需要传输约 70 TB(700 GB* 100 )的数据。如果我们假设一个步骤需要 1 s(非常乐观的假设),那么每秒钟就需要传输 70 TB 的数据。这种对带宽的需求已经远超过了大多数网络,也是一个可行性的问题。

实际情况下,由于通信延迟和网络拥堵,数据传输的时间可能会远超 1 s。这意味着计算节点可能需要花费大量的时间等待数据的传输,而不是进行实际的计算。这会大大降低训练的效率,而这种效率上的降低不是等一等就能解决的,而是可行和不可行的差别,会让整个训练过程不可行。

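Centralized data center

Even in a centralized data center, training a large model still requires heavy communication optimization.

In a centralized data center, high-performance computing devices form a cluster and share the computing task over a high-speed network. Even on such a network, communication overhead remains a bottleneck when training a model with a very large number of parameters, because parameters and gradients must be transferred and updated frequently between devices.

As mentioned at the start, assume 100 compute nodes, each with 25 Gbps of network bandwidth. If every server has to update all parameters at each training step, each step transfers about 700 GB, which takes about 224 seconds. With the advantages of a centralized data center, developers can optimize the network topology inside the facility and use techniques such as model parallelism to cut this time significantly.

By comparison, consider the same training in a distributed environment: again 100 compute nodes, but spread around the world, each with an average bandwidth of only 1 Gbps. Transferring the same 700 GB then takes about 5600 seconds, far longer than in the centralized data center. With network latency and congestion, the actual time may be longer still.

Compared with a distributed compute network, communication overhead in a centralized data center is also relatively easy to optimize. Devices in a data center are usually attached to the same high-speed network, with good bandwidth and latency. In a distributed compute network, nodes may be spread around the world under much worse network conditions, which makes the communication-overhead problem more severe.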
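The comparison in this section can be reproduced numerically. The sketch below uses the figures from the text (175 billion parameters at 4 bytes each, 100 nodes, 25 Gbps versus 1 Gbps); the variable and function names are illustrative, and the model ignores latency, congestion, and any overlap of communication with computation.

```python
# Per-step traffic and transfer time, using the figures from the text.
# Names are illustrative; latency and congestion are not modeled.

PARAM_BYTES = 175e9 * 4   # about 700 GB of parameters in single precision
NODES = 100


def seconds_to_send(num_bytes: float, link_gbps: float) -> float:
    """Transfer time over a link with the given bandwidth in gigabits per second."""
    return num_bytes * 8 / (link_gbps * 1e9)


per_step_bytes = PARAM_BYTES * NODES                   # every node moves all parameters
required_tbps = per_step_bytes * 8 / 1e12              # bandwidth needed for a 1-second step
datacenter_seconds = seconds_to_send(PARAM_BYTES, 25)  # 25 Gbps link
wan_seconds = seconds_to_send(PARAM_BYTES, 1)          # 1 Gbps link

print(per_step_bytes / 1e12, "TB per step")
print(required_tbps, "Tbps needed to finish a step in one second")
print(datacenter_seconds, "s at 25 Gbps;", wan_seconds, "s at 1 Gbps")
```

The 25x gap between the two links comes from bandwidth alone, before any of the latency and congestion effects described above.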

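Training at GPT-3 scale relies on model-parallel frameworks such as Megatron to address communication overhead. Megatron splits the model parameters and processes them in parallel across multiple GPUs, so each device stores and updates only part of the parameters. This reduces the number of parameters each device handles and lowers communication overhead. Such training also uses high-speed interconnects and optimizes the network topology to shorten communication paths.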

(Data used to train LLM models)

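3. Why distributed compute networks cannot make these optimizations

They can, but compared with a centralized data center the gains are very limited.

1. Network topology optimization: In a centralized data center you control the network hardware and layout directly, so you can design and tune the topology as needed. In a distributed environment, compute nodes sit in different locations, perhaps one in China and one in the United States, and you cannot directly control the network links between them. Software can optimize the data transfer path, but this is less effective than optimizing the hardware network directly. Geographic differences also cause large variations in latency and bandwidth, which further limits what topology optimization can achieve.

2. Model parallelism: Model parallelism splits the model parameters across multiple compute nodes and processes them in parallel to speed up training. It usually requires frequent data transfer between nodes, so it places high demands on bandwidth and latency. In a centralized data center, with high bandwidth and low latency, model parallelism is very effective. In a distributed environment, poor network conditions limit it considerably.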
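As an illustration of why model parallelism depends on fast links, here is a toy sketch that splits one weight matrix by columns across simulated devices and then gathers the partial outputs. It uses plain Python lists instead of a GPU framework, and every name in it is hypothetical.

```python
# Toy column-wise model parallelism for a single matrix multiply.
# Devices are simulated in-process; all names are hypothetical.

def matmul(x, w):
    """Multiply x (n rows, d cols) by w (d rows, m cols) using plain lists."""
    return [[sum(row[k] * w[k][j] for k in range(len(w)))
             for j in range(len(w[0]))] for row in x]


def split_columns(w, parts):
    """Split w into `parts` column blocks, one per device (m must divide evenly)."""
    step = len(w[0]) // parts
    return [[row[p * step:(p + 1) * step] for row in w] for p in range(parts)]


def parallel_matmul(x, w, parts):
    shards = split_columns(w, parts)
    # Each device multiplies the full input by its own shard of the weights.
    partials = [matmul(x, shard) for shard in shards]
    # Gather step: partial outputs are concatenated, which in a real cluster
    # means moving activations between devices on every layer.
    return [[v for part in partials for v in part[i]] for i in range(len(x))]


x = [[1, 2], [3, 4]]
w = [[1, 0, 2, 1], [0, 1, 1, 3]]
print(parallel_matmul(x, w, parts=2))
```

The result matches the unsplit multiplication, but the gather step runs for every layer and every training step. On a low-latency interconnect that cost is small; across continents it dominates.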

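4. Data security and privacy challenges

Almost every step that processes or transfers data can affect data security and privacy:

1. Data distribution: Training data must be distributed to every participating node. At this stage the data may be misused or leaked by a distributed node.

2. Model training: During training, each node computes on its assigned data and outputs parameter updates or gradients. If a node's computation is intercepted or its results are maliciously analyzed, data can also leak.

3. Parameter and gradient aggregation: The outputs of all nodes must be aggregated to update the global model. Communication during aggregation can also leak information about the training data.

What solutions exist for data privacy?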

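- Secure multi-party computation (SMC): SMC has been applied successfully to some specific, smaller-scale computing tasks. In large-scale distributed training, its computation and communication overhead is high, so it is not yet widely used.

- Differential privacy (DP): DP is used in some data collection and analysis tasks, such as Chrome's usage statistics. In large-scale deep learning, DP affects model accuracy, and designing a suitable mechanism for generating and adding noise is itself a challenge.

- Federated learning (FL): FL is used for model training on some edge devices, such as next-word prediction in the Android keyboard. In larger-scale distributed training, FL faces high communication overhead and complex coordination.

- Homomorphic encryption: It has been applied successfully to some tasks with low computational complexity. In large-scale distributed training, its computational overhead is high, so it is not yet widely used.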
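To give a feel for the first item on this list, the sketch below shows additive secret sharing, one of the simplest building blocks of secure multi-party computation: three nodes learn the sum of their private values without any node seeing another node's input. It is a simplified illustration under assumed names and a small integer example, not a production protocol (there is no protection against nodes that deviate from the protocol).

```python
# Additive secret sharing: nodes learn a sum without revealing their inputs.
# Simplified illustration; names and the modulus choice are assumptions.
import secrets

PRIME = 2**61 - 1  # all arithmetic is done modulo this prime


def share(value: int, n: int) -> list:
    """Split value into n random-looking shares that sum to value mod PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def reconstruct(shares: list) -> int:
    """Recover the shared value by summing the shares mod PRIME."""
    return sum(shares) % PRIME


private_inputs = [12, 30, 7]                       # one private value per node
all_shares = [share(v, 3) for v in private_inputs]
# Node j receives the j-th share from every node and adds them locally.
partial_sums = [sum(s[j] for s in all_shares) % PRIME for j in range(3)]
total = reconstruct(partial_sums)
print(total)
```

Each node sends a share to every other node, so the message count grows with the square of the number of nodes. Applied to hundreds of billions of gradient values per step, that is the overhead that keeps SMC out of large-scale training today.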

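To summarize

Each of these methods has scenarios where it fits and limitations of its own. None of them fully solves the data privacy problem for large-model training on a distributed compute network.

Can ZK, on which so much hope is placed, solve data privacy in large-model training?

In theory, ZKPs can protect data privacy in distributed computing: a node proves that it performed the computation as specified without revealing the actual input and output data.

In practice, using ZKPs to train large models on a large-scale distributed compute network faces the following bottlenecks: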

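- Higher computation and communication overhead: Constructing and verifying zero-knowledge proofs takes substantial compute. The communication overhead is also significant, because the proofs themselves must be transmitted. In large-model training these costs can become especially pronounced. For example, if every mini-batch computation needs its own proof, the total training time and cost increase significantly.

- Complexity of the ZK protocol: Designing and implementing a ZKP protocol suited to large-model training is very complex. The protocol must handle large-scale data and complex computation, and it must cope with the errors and exceptions that may occur.

- Hardware and software compatibility: Using ZKPs requires specific hardware and software support, which may not be available on every device in a distributed network.

To summarize

Using ZKPs to train large models on a large-scale distributed compute network still needs years of research and development, and it needs academia to put more energy and resources into this direction.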

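Distributed compute: model inference

The other major use case for distributed compute is model inference. Based on our view of how large models will develop, demand for training will peak and then gradually slow as large models mature, while demand for inference will rise exponentially as large models and AIGC mature.

Compared with training, inference tasks usually have lower computational complexity and less data interaction, which makes them a better fit for a distributed environment.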

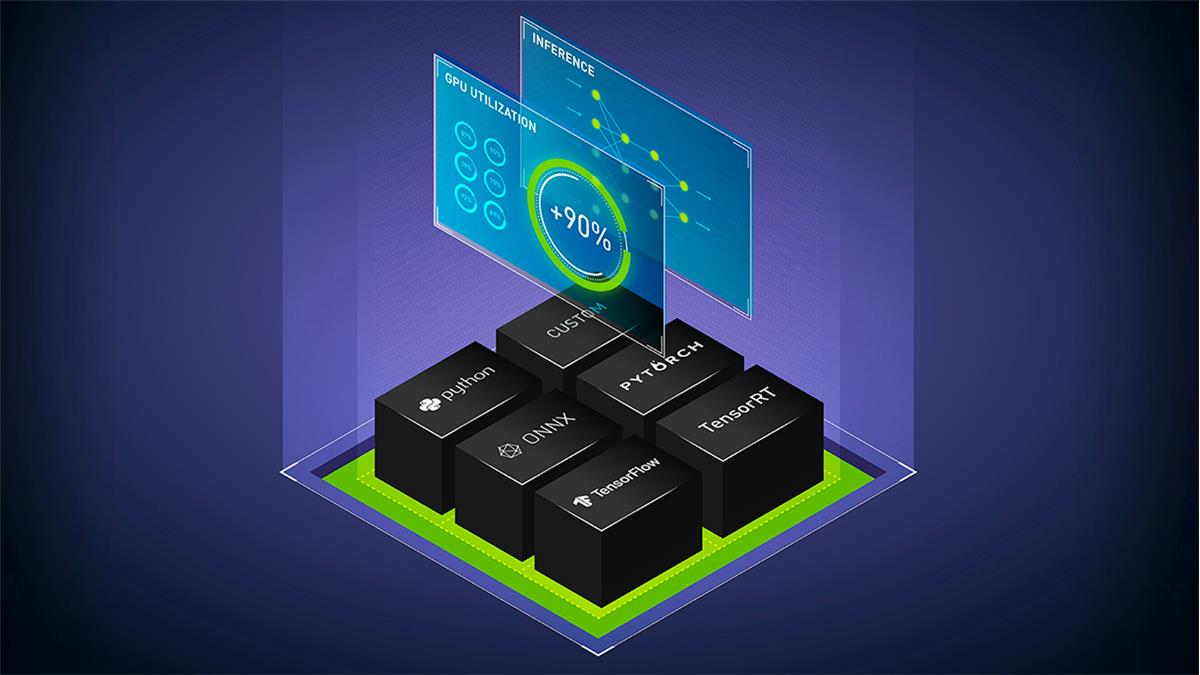

(Power LLM inference with NVIDIA Triton)

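1. Challenges

Communication latency:

In a distributed environment, communication between nodes is unavoidable. In a decentralized distributed compute network, nodes may be spread around the world, so network latency becomes a problem, especially for inference tasks that need real-time responses.

Model deployment and updates:

The model must be deployed to every node. When the model is updated, every node has to update its copy, which consumes a lot of network bandwidth and time.

Data privacy:

Inference usually needs only the input data and the model, without sending back large amounts of intermediate data and parameters. The input data may still contain sensitive information, such as users' personal information.

Model security:

In a decentralized network, the model must be deployed to untrusted nodes. The model can then leak, which raises issues of model ownership and misuse. It can also create security and privacy problems: if a model is used to process sensitive data, a node can infer sensitive information by analyzing the model's behavior.

Quality control:

Nodes in a decentralized distributed compute network may differ in computing power and resources, which makes it hard to guarantee the performance and quality of inference tasks.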

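2. Feasibility

Computational complexity:

In the training phase, the model iterates repeatedly. Each iteration runs the forward pass and the backward pass through every layer, including computing activation functions, the loss function, the gradients, and the weight updates. The computational complexity of training is therefore high.

In the inference phase, only a single forward pass is needed to compute a prediction. In GPT-3, for example, the input text is converted into vectors, passed forward through the model's layers (typically Transformer layers), and turned into an output probability distribution from which the next token is generated. In GANs, the model generates an image from an input noise vector. These operations involve only the forward pass, with no gradient computation or parameter updates, so the computational complexity is lower.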
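A rough way to quantify this difference is the commonly used rule of thumb for dense Transformers: about 6 FLOPs per parameter per token for training (forward plus backward pass) and about 2 FLOPs per parameter per token for inference (forward pass only). The sketch below applies it to the 175-billion-parameter example. The rule of thumb and the training-set size of 300 billion tokens are assumptions added here for illustration and do not come from the article.

```python
# Rule-of-thumb FLOP counts for a dense Transformer.
# The 6x and 2x factors and the token count are assumptions, not measurements.

N_PARAMS = 175e9       # parameters, as in the GPT-3 example
TRAIN_TOKENS = 300e9   # assumed number of training tokens


def training_flops(n_params: float, n_tokens: float) -> float:
    """Forward plus backward pass: about 6 FLOPs per parameter per token."""
    return 6 * n_params * n_tokens


def inference_flops_per_token(n_params: float) -> float:
    """Forward pass only: about 2 FLOPs per parameter per generated token."""
    return 2 * n_params


print(f"training run:     {training_flops(N_PARAMS, TRAIN_TOKENS):.2e} FLOPs")
print(f"one output token: {inference_flops_per_token(N_PARAMS):.2e} FLOPs")
```

Under these assumptions a single inference request is many orders of magnitude cheaper than a training run, and it needs no gradient exchange between nodes.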

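Data interaction:

In the inference phase, the model usually processes a single input rather than the large batches used in training. Each inference result depends only on the current input, not on other inputs or outputs, so there is no need for heavy data exchange and the communication pressure is lower.

Take a generative image model as an example. If we use GANs to generate images, we only need to feed the model a noise vector and it generates a corresponding image. Each input produces one output, and the outputs do not depend on each other, so no data exchange is needed.

Take GPT-3 as an example. Generating the next token requires only the current text input and the model state, with no interaction with other inputs or outputs, so the data interaction requirement is also low.

To summarize

For both large language models and generative image models, inference tasks have relatively low computational complexity and data interaction, which makes them a better fit for decentralized distributed compute networks. This is also where most projects we see are focusing their efforts.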

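Projects

Decentralized distributed compute networks have a very high technical barrier and a very broad technical scope, and they also need hardware resources behind them, so we have not seen many attempts so far. Take Together and Gensyn.ai as examples:

1. Together

(RedPajama from Together)

Together is a company focused on open-source large models and committed to decentralized AI compute, with the goal that anyone, anywhere can access and use AI. Together recently closed a USD 20 million seed round led by Lux Capital.

Together was co-founded by Chris, Percy, and Ce. The motivation was that training large models requires large clusters of high-end GPUs and heavy spending, and that these resources and training capabilities are concentrated in a few large companies.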

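From my perspective, a reasonable startup roadmap for distributed compute looks like this:

Step 1. Open-source models

To run model inference on a decentralized distributed compute network, nodes must be able to obtain the model at low cost. In other words, models used on a decentralized compute network need to be open source (if a model can only be used under a specific license, implementation becomes more complex and costly). ChatGPT, as a closed-source model, is not suitable for running on a decentralized compute network.

It follows that a hidden barrier for a company offering a decentralized compute network is strong capability in developing and maintaining large models. Building and open-sourcing a strong base model in-house reduces dependence on third parties open-sourcing their models and solves the most basic problem of a decentralized compute network. It also helps demonstrate that the network can train and run inference on large models effectively.

This is what Together has done. The recently released LLaMA-based RedPajama was launched jointly by Together, Ontocord.ai, ETH DS3Lab, Stanford CRFM, Hazy Research, and other teams, with the goal of developing a series of fully open-source large language models.

Step 2. Distributed compute lands in model inference

As the previous two sections explained, model inference has lower computational complexity and less data interaction than training, which makes it a better fit for a decentralized distributed environment.

Building on open-source models, Together's R&D team has made a series of updates to the RedPajama-INCITE-3B model, such as using LoRA for low-cost fine-tuning and making the model run more smoothly on CPUs (in particular on a MacBook Pro with the M2 Pro processor). Although the model is small, it outperforms other models of the same size, and it has seen practical use in legal, social, and other scenarios.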

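Step 3. Distributed compute lands in model training

(Compute network diagram from Overcoming Communication Bottlenecks for Decentralized Training)

In the medium to long term, despite the major challenges and technical bottlenecks, serving the compute demand of large-model training is certainly the most attractive prize. From its founding, Together has been working on how to overcome the communication bottleneck in decentralized training. The team also published related work at NeurIPS 2022: Overcoming Communication Bottlenecks for Decentralized Training. The main directions can be summarized as follows:

Scheduling optimization

When training in a decentralized environment, connections between nodes differ in latency and bandwidth, so it is important to assign communication-heavy tasks to devices with faster connections. Together builds a model that describes the cost of a given scheduling strategy and uses it to optimize scheduling, minimizing communication cost and maximizing training throughput. The Together team also found that even when the network is 100 times slower, end-to-end training throughput is only 1.7 to 2.3 times slower. Closing the gap between distributed networks and centralized clusters through scheduling optimization therefore looks promising.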
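The core scheduling idea, giving the heaviest traffic to the fastest links, can be shown with a deliberately simple cost model. The sketch below is a hypothetical illustration and not Together's scheduler: it assumes each task pair has a known communication volume, each link has a known bandwidth, and the cost is the sum of transfer times.

```python
# Toy cost model: match communication-heavy task pairs to fast links.
# Hypothetical illustration only; this is not Together's scheduling algorithm.

def total_transfer_seconds(pairs) -> float:
    """Sum of transfer times for (volume_gb, bandwidth_gbps) assignments."""
    return sum(volume * 8 / bandwidth for volume, bandwidth in pairs)


def greedy_assignment(volumes_gb, bandwidths_gbps):
    """Pair the largest volume with the fastest link, the next largest with the next, and so on."""
    return list(zip(sorted(volumes_gb, reverse=True),
                    sorted(bandwidths_gbps, reverse=True)))


volumes = [10.0, 1.0, 0.1]   # GB exchanged per step by three task pairs
links = [0.1, 1.0, 10.0]     # Gbps available on three links

naive = total_transfer_seconds(zip(volumes, links))            # arbitrary placement
tuned = total_transfer_seconds(greedy_assignment(volumes, links))
print(f"naive: {naive:.2f} s, tuned: {tuned:.2f} s")
```

In this toy case the same hardware goes from roughly 808 seconds of transfer to roughly 24 seconds simply by changing which task runs over which link. A real scheduler must also account for latency, compute speed, and pipeline dependencies.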

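Communication compression

Together proposes compressing the communication of forward activations and backward gradients and introduces the AQ-SGD algorithm, which comes with rigorous guarantees for the convergence of stochastic gradient descent. AQ-SGD can fine-tune large foundation models over slow networks (for example 500 Mbps) and is only 31% slower in end-to-end training performance than an uncompressed run on a centralized network (for example 10 Gbps). AQ-SGD can also be combined with state-of-the-art gradient compression techniques (such as QuantizedAdam) for a 10% end-to-end speedup.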
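For readers unfamiliar with communication compression, the sketch below shows the generic building block behind many such schemes: uniform quantization of a float vector to 8-bit codes before sending. This is not AQ-SGD itself, whose details are in the paper; the function names and sample values are assumptions for illustration.

```python
# Generic uniform quantization of a float vector to small integer codes.
# Not AQ-SGD; names and sample values are illustrative assumptions.

def quantize(values, bits: int = 8):
    """Map floats to integer codes in [0, 2**bits - 1], plus offset and scale."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale


def dequantize(codes, lo: float, scale: float):
    """Approximate reconstruction on the receiving node."""
    return [lo + c * scale for c in codes]


activations = [-1.0, -0.25, 0.0, 0.5, 1.0]
codes, lo, scale = quantize(activations)
restored = dequantize(codes, lo, scale)
worst_error = max(abs(a - b) for a, b in zip(activations, restored))
print(codes)
print(f"worst-case error: {worst_error:.5f} (bound: {scale / 2:.5f})")
```

Sending 8-bit codes instead of 32-bit floats cuts traffic by about 4x, at the price of a bounded rounding error. Showing that training still converges despite that error is the kind of guarantee the paragraph above attributes to AQ-SGD.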

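Project summary

The Together team is very well rounded. Its members have strong academic backgrounds, with industry experts covering large-model development, cloud computing, and hardware optimization. Together has also shown real long-term patience in its roadmap: from developing open-source large models, to testing idle compute (such as Macs) for model inference in a distributed compute network, to laying the groundwork for distributed compute in large-model training. It has the feel of a team building up quietly for a breakthrough :)

So far, however, I have not seen much research output from Together on the incentive layer. I consider this as important as the technical R&D and a key factor in the development of a decentralized compute network.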

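2. Gensyn.ai

(Gensyn.ai)

Together's technical path gives a rough picture of how decentralized compute networks land in model training and inference, and of the corresponding R&D priorities.

Another priority that cannot be ignored is the design of the network's incentive layer and consensus algorithm. A good network needs to:

1. Make the rewards attractive enough;

2. Make sure every miner receives the reward it deserves, which covers both cheating prevention and paying more for more work;

3. Make sure tasks are scheduled and allocated sensibly across nodes, without large numbers of idle nodes or some nodes being overloaded;

4. Keep the incentive algorithm simple and efficient, without adding excessive system load or latency.

Here is how Gensyn.ai approaches it: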

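- Becoming a node

Solvers in the compute network bid for the right to process tasks submitted by users. A solver must stake an amount that depends on the size of the task and the risk of being caught cheating.

- Verification

While updating parameters, the solver generates multiple checkpoints (to keep the work transparent and traceable) and periodically generates cryptographic proofs about the task (proofs of work progress).

When the solver finishes the work and produces part of the computation result, the protocol selects a verifier. The verifier also stakes an amount (to ensure it verifies honestly) and uses the proofs above to decide which part of the result to verify.

- If the solver and verifier disagree

A Merkle-tree-based data structure is used to locate the exact point where the computation results diverge. The whole verification process is recorded on-chain, and the cheater's stake is slashed.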
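The dispute step can be illustrated with a small Merkle tree over checkpoint hashes. The sketch below is a generic illustration of the technique and not Gensyn.ai's actual protocol: the checkpoint contents, the function names, and the power-of-two leaf count are all assumptions. Starting from the root, it follows the differing child at each level until it reaches the first checkpoint where the solver and verifier disagree.

```python
# Locating the first diverging checkpoint with Merkle trees.
# Generic illustration; not Gensyn.ai's protocol. Leaf count must be a power of two.
import hashlib


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_tree(leaves):
    """Return levels of hashes: levels[0] holds leaf hashes, levels[-1] holds the root."""
    level = [sha256(leaf) for leaf in leaves]
    levels = [level]
    while len(level) > 1:
        level = [sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def first_divergence(tree_a, tree_b):
    """Walk down from the root; return the leftmost differing leaf index, or None."""
    top = len(tree_a) - 1
    if tree_a[top][0] == tree_b[top][0]:
        return None  # identical roots: nothing to dispute
    index = 0
    for depth in range(top - 1, -1, -1):
        left = 2 * index
        # Go left if the left child differs; otherwise the right child must differ.
        index = left if tree_a[depth][left] != tree_b[depth][left] else left + 1
    return index


verifier_ckpts = [f"ckpt-{i}".encode() for i in range(8)]
solver_ckpts = verifier_ckpts[:5] + [f"bad-{i}".encode() for i in range(5, 8)]
print(first_divergence(build_tree(solver_ckpts), build_tree(verifier_ckpts)))
```

With n checkpoints the walk compares about log2(n) pairs of hashes instead of all n checkpoints, which is why the approach scales to long training runs.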

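Project summary

The design of the incentive and verification algorithms means Gensyn.ai does not need to replay the entire computation during verification. It only needs to reproduce and check part of the results based on the proofs provided, which greatly improves verification efficiency. Nodes only need to store part of the computation results, which reduces storage and compute consumption. And because a potential cheater cannot predict which parts will be selected for verification, the risk of cheating is reduced.

This way of resolving disputes and identifying cheaters also avoids comparing the entire computation result. By starting from the root of the Merkle tree and traversing downward step by step, it quickly finds where the computation went wrong, which is very effective for large-scale computing tasks.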
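The argument that unpredictable spot checks deter cheating can be quantified with a short calculation. The sketch below assumes the verifier samples checkpoints uniformly at random without replacement and that checking a tampered checkpoint always exposes it. Both assumptions, and the example numbers, are added here for illustration and are not taken from Gensyn.ai's design.

```python
# Probability that random spot checks hit at least one tampered checkpoint.
# Assumes uniform sampling without replacement; numbers are illustrative.
from math import comb


def detection_probability(total: int, tampered: int, sampled: int) -> float:
    """1 minus the chance that every sampled checkpoint is an honest one."""
    return 1 - comb(total - tampered, sampled) / comb(total, sampled)


# A solver fakes 5 of 100 checkpoints; the verifier re-computes 20 of them.
p = detection_probability(total=100, tampered=5, sampled=20)
print(f"chance of catching the solver: {p:.3f}")
```

Combined with staking, the solver's expected loss is the detection probability multiplied by the stake, which is the quantity the economic parameters have to balance against the reward.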

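In short, the design goal of Gensyn.ai's incentive and verification layer is to be simple and efficient. For now it exists only at the theoretical level, and an actual implementation may face the following challenges:

- Economic model: how to set parameters that effectively deter fraud without raising the barrier to entry too high for participants.

- Technical implementation: how to construct effective periodic cryptographic proofs is a complex problem that requires advanced cryptography.

- Task allocation: how the network selects tasks and assigns them to different solvers also needs a sound scheduling algorithm. Allocating tasks purely by bidding is questionable in terms of efficiency and feasibility. For example, a node with strong compute could handle larger tasks but may not have bid (which raises the question of how to incentivize node availability), while a node with weak compute may bid the highest but be unsuited to complex, large-scale computing tasks.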

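Some thoughts on the future

The question of who actually needs a decentralized compute network has never been validated. Applying idle compute to large-model training, with its enormous appetite for compute, obviously makes the most sense and has the largest upside. But bottlenecks such as communication and privacy force us to reconsider:

Is there real hope for training large models in a decentralized way?

If we step outside this consensus "most reasonable use case", could applying decentralized compute to training small AI models also be a large market? Technically, the current limiting factors largely fall away given the smaller scale and simpler architecture of these models. From a market perspective, we have always assumed that large-model training will be huge now and in the future, but does that make the market for small AI models unattractive?

I don't think so. Compared with large models, small AI models are easier to deploy and manage and more efficient in processing speed and memory use. In many applications, users or companies don't need the more general reasoning ability of a large language model; they care about one narrowly defined prediction target. In most scenarios, then, small AI models remain the more practical choice and should not be overlooked too early in the wave of FOMO around large models.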
