a16z: What Are the New Opportunities in Gaming Infrastructure for the Metaverse Era?

TechFlow Selected

How can we transcend the legacy walled gardens and unlock the potential of the metaverse?

Translated by: GameLook

Imagine downloading a super popular parkour game where your in-game character instantly gains new abilities. After just a few minutes of onboarding—climbing walls and vaulting over obstacles—you're ready for bigger challenges. You teleport yourself into one of your favorite games, GTA: Metaverse, and follow a route set by another player, flipping over car hoods and leaping from rooftop to rooftop. Wait… what's that glowing object beneath the mailbox? An ultra-evolved Charizard! You pull out a Poké Ball from your backpack, catch it, and carry on...

This gameplay scenario is impossible today, but could become reality in the future. Composability and interoperability will be realized within games, revolutionizing how games are built and experienced. Composability refers to the ability to reuse, recombine, and iterate on foundational building blocks; interoperability means components from one game can be used in another.

Game developers will build products faster because they won't need to start from scratch every time. With lower barriers to experimentation and risk-taking, they’ll create more innovative games, and more creators will join the field as development becomes more accessible. The essence of a game may include new "meta-experiences"—like the example above—that span across multiple games.

Of course, any discussion about “meta-experiences” inevitably leads to a frequently discussed concept: the metaverse. In fact, many view the metaverse as an intricate game, but its potential extends far beyond that. Ultimately, the metaverse will represent everything we do online to interact and connect with others. And because it will be built upon gaming technology and workflows, game creators hold the key to unlocking its full potential.

Why game creators? No other industry has such extensive experience in creating large-scale online worlds where thousands—or even millions—of participants interact in real time. Games have long moved beyond mere “playing”—they now include “trading,” “building,” “streaming,” and “shopping.” The metaverse adds further actions like “working” or “dating.” Just as microservices and cloud computing unleashed waves of innovation in tech, I believe the next generation of game technologies will spark a new era of creativity and invention in gaming.

Many games today already support UGC (user-generated content), allowing players to create expansions for existing titles. Some games, like Roblox and Fortnite, are so extensible that they now refer to themselves as metaverses. Yet current-generation game technology remains largely rooted in single-player design, limiting how far we can go.

A true game revolution will require full-stack innovation, from production pipelines and creative tools to game engines, multiplayer networking, data analytics, and live operations. Recently, a16z partner James Gwertzman wrote about his vision for the evolution of games, detailing the areas of innovation needed to usher in this new era.

Below is GameLook’s translation of the full article:

The Future of Gaming

For decades, games were primarily singular, fixed experiences. Developers built them, released them, then moved on to sequels. Players bought them, played through, and once the content was exhausted, moved on to the next title. Typically, a traditional game offered only 10–20 hours of gameplay.

We are now in the Game-as-a-Service (GaaS) era, where developers continuously update games post-launch. Many titles also feature metaverse-like UGC capabilities, including virtual concerts and educational content. Roblox and Minecraft even host in-game marketplaces where player-creators earn revenue from their creations.

However, crucially, these games remain (intentionally) isolated from each other. While each world may be immersive, they are closed ecosystems with no transferability between them—no shared resources, skills, content, or friends.

So how do we break down these legacy walled gardens and unlock the metaverse’s potential? As composability and interoperability become central to metaverse gaming, we must rethink our approach to several key aspects:

Identity. In the metaverse, players need a single identity usable across many games and platforms. Today, each platform requires separate user profiles, forcing players to tediously rebuild reputations and profiles from scratch with every new game.

Friends. Similarly, friend lists remain siloed per game. At best, games use social accounts like Facebook as friend sources. Ideally, your friend network should follow you across games, making it easier to find people to play with and share competitive leaderboards.

Personal Items. Currently, items earned in one game cannot be transferred or used in another—and for good reason. Bringing a modern assault rifle into a medieval game might feel satisfying at first, but quickly breaks immersion. However, with proper constraints, cross-game item exchange could open new creative and improvisational gameplay possibilities.

Gameplay. Today’s games are tightly bound to specific genres. For example, the joy of a “platformer” like Super Mario Odyssey lies in mastering movement through a virtual world. But by opening up games and allowing elements to “mash up,” players can discover novel experiences and craft their own stories.

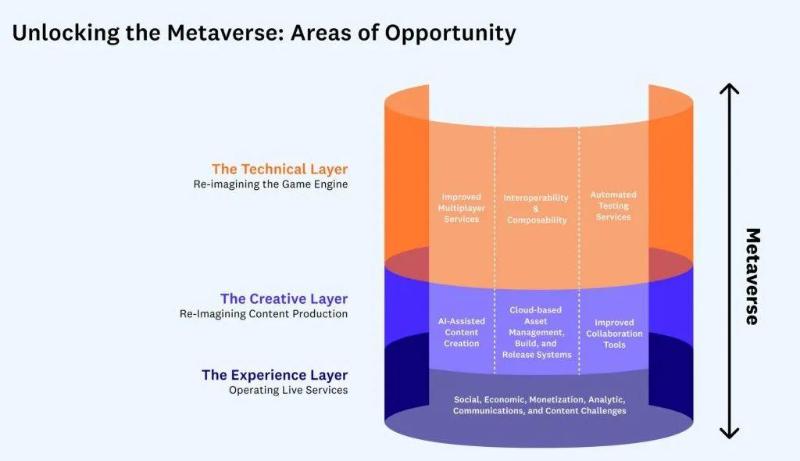

I see these changes unfolding across three distinct layers of game development: technical (game engines), creative (content creation), and experiential (live operations). Each layer presents clear opportunities for innovation, which I’ll explore below.

Note: Game development is an extremely complex process—more so than any other art form. It’s highly nonlinear, requiring constant iteration, since no matter how promising something looks on paper, you can’t know if it’s truly fun until you play it. In this sense, game development resembles choreographing a dance—the real work happens collaboratively in rehearsal studios.

Technical Layer: Reimagining Game Engines

At the heart of most modern game development is the game engine, which powers player experiences and enables teams to build new games efficiently. Popular engines like Unity or Unreal Engine offer reusable functionality across projects, freeing creators to focus on unique aspects of their games. This saves time and money while leveling the playing field, enabling small teams to compete with major studios.

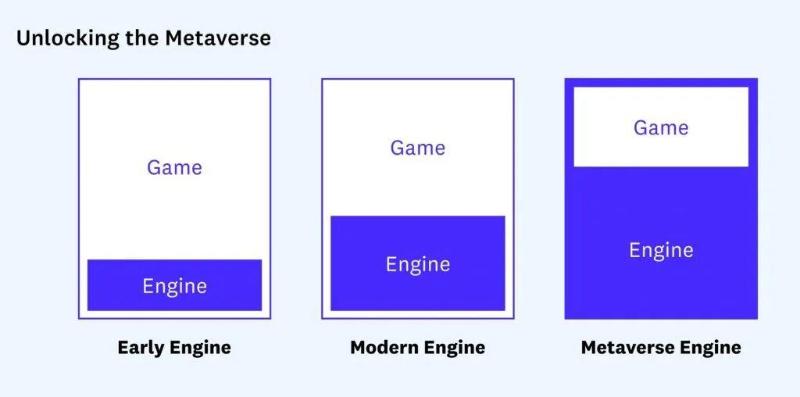

Yet over the past two decades, the core role of game engines hasn’t fundamentally changed. Despite expanding services—from graphics rendering and audio playback to multiplayer features, social systems, post-launch analytics, and in-game ads—engines are still typically distributed as codebases, with each game fully self-contained.

But when envisioning the metaverse, engines take on a greater role. To break down walls between games and experiences, it’s likely that games will run *within* engines rather than alongside them. In this expanded view, engines become platforms, and communication between them will largely define the shared metaverse I envision.

Take Roblox as an example. The Roblox platform provides core services similar to Unity or Unreal—graphics rendering, audio playback, physics simulation, and multiplayer networking. But it goes further, offering unique features such as avatars and persistent identities across its game library; enhanced social systems including shared friend lists; robust safety tools to maintain community integrity; and creation tools and asset libraries for users to build new games.

Still, Roblox falls short of being a true metaverse—it remains a walled garden. While limited sharing exists among games within the Roblox platform, there’s no interoperability between Roblox and other engines or platforms.

To fully unlock the metaverse, game engine developers must innovate in interoperability, composability, multiplayer services, and automated testing.

Interoperability and Composability

To unlock the metaverse and deliver experiences like the one described at the beginning, virtual worlds will need unprecedented levels of cooperation and interoperability. While it’s conceivable that one company could control a universal platform powering the global metaverse, this is neither desirable nor feasible. Instead, decentralized game engine platforms are more likely to emerge.

Of course, when discussing decentralization, Web3 immediately comes to mind—a suite of technologies based on blockchain and smart contracts that decentralize ownership by giving control of key networks and services back to users and developers. Notably, concepts like composability and interoperability in Web3 help address core challenges in building the metaverse—especially identity and digital property—and significant R&D efforts are underway in foundational Web3 infrastructure.

That said, while I believe Web3 will be a critical component in redefining game engines, it is not a silver bullet.

One of the most visible uses of Web3 in the metaverse is enabling users to buy and own digital items—such as virtual real estate or clothing for avatars. Because blockchain transactions are public records, purchasing items as NFTs theoretically allows owning an item and using it across multiple metaverse platforms.

However, I don’t believe this will happen until several key issues are resolved:

Single User Identity: Players need a unified identity that moves seamlessly across virtual worlds and games—essential for matchmaking, content attribution, and blocking malicious accounts. One service tackling this is Hello, a multi-stakeholder consortium aiming to transform personal identity around user-centric models, primarily leveraging Web2 centralized identity. Others use Web3 decentralized identity solutions—like Spruce, which lets users control their digital identity via wallet keys. Meanwhile, Sismo is a modular protocol using zero-knowledge proofs for decentralized identity management.

Unified Content Formats: Standardized, open formats are needed to share content across engines. Currently, each engine uses proprietary formats optimized for performance. To exchange assets, however, we need open standards such as Pixar’s Universal Scene Description (USD), on which NVIDIA’s Omniverse platform is built. Standardization must eventually extend to all content types.

Cloud-Based Content Storage: A standardized way to locate and retrieve content used across different worlds. Currently, game assets are either bundled into releases or downloaded via CDN. For cross-world sharing, a universal discovery and retrieval system is essential.

Shared Payment Mechanisms: Economic incentives must exist for metaverse owners to allow resources to flow between worlds. Digital goods sales are a primary revenue source for platforms, especially in free-to-play models. To encourage openness, resource owners could pay a “toll fee” to use their assets on another platform. Alternatively, if certain assets are highly desirable, a metaverse might pay the owner to bring them into its world.

Standardized Functionality: One metaverse must understand how a given item functions. If I want to bring my powerful sword into your game to slay monsters, your game needs to recognize it as a weapon—not just a decorative model. One solution is developing taxonomies of standard object interfaces—categories like weapons, vehicles, clothing, or furniture—that each metaverse can choose to support.

Adaptable Aesthetics: Assets should be able to modify their appearance to match the target universe. For instance, if I own a high-tech sports car and want to use it in a steampunk-themed world, it should transform into a steam-powered version. This may require the asset itself to know how to adapt, or the destination world to provide alternate skins.
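The last two points, standardized functionality and adaptable aesthetics, can be pictured with a small sketch. Everything here is hypothetical: the `Weapon` interface, the `skins` field, and the `World.import_item` rule are illustrative names, not part of any existing engine or standard. The idea is only that a destination world checks which shared interface an incoming item supports, applies its own constraints, and picks an appearance that fits its theme.

```python
from dataclasses import dataclass, field
from typing import Protocol, runtime_checkable


@runtime_checkable
class Weapon(Protocol):
    """Hypothetical shared interface: anything that can deal damage."""

    def damage(self) -> int: ...


@dataclass
class Sword:
    name: str
    base_damage: int
    # Alternate appearances keyed by world theme (illustrative field).
    skins: dict[str, str] = field(default_factory=dict)

    def damage(self) -> int:
        return self.base_damage

    def skin_for(self, theme: str) -> str:
        # Fall back to the default look when the theme is not supported.
        return self.skins.get(theme, self.skins.get("default", "placeholder"))


@dataclass
class Painting:
    name: str  # Implements no gameplay interface, so it is purely decorative.


@dataclass
class World:
    theme: str
    max_weapon_damage: int  # A constraint the destination world imposes.

    def import_item(self, item: object) -> str:
        # Recognize the item by the interface it supports, not by its origin.
        if isinstance(item, Weapon):
            capped = min(item.damage(), self.max_weapon_damage)
            return f"weapon:{capped}"
        return "decoration"


sword = Sword("Dawnblade", 90, {"default": "steel", "steampunk": "brass"})
world = World(theme="steampunk", max_weapon_damage=50)
print(world.import_item(sword), sword.skin_for(world.theme))
```

Capping damage on import is one example of the "proper constraints" that would keep a foreign item from unbalancing the host game.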

Improved Multiplayer Systems

A key area demanding attention is the growing importance of multiplayer and social features. A growing share of today's games are multiplayer, since socially connected games generate significantly higher revenues than single-player ones. Since the metaverse is inherently social by definition, it will face all the unique challenges of online experiences. Social games must combat harassment and toxic behavior, are vulnerable to DDoS attacks, and often require globally distributed servers to minimize latency and optimize player experience.

Despite the centrality of multiplayer features in modern games, we still lack a fully mature, competitive solution. Engines like Unreal or Roblox, and services like Photon or PlayFab, offer basic functionality, but gaps remain, such as advanced matchmaking, which developers must fill themselves.

Innovations in multiplayer systems must include:

Serverless multiplayer networking: Developers implement authoritative game logic hosted and auto-scaled in the cloud without managing physical servers.

Advanced matchmaking: Helps players quickly find peers of similar skill. AI tools can assess player ability and rank accordingly. This is especially critical in the metaverse, where matchmaking spans broader contexts.

Anti-harassment and moderation tools: Detect and remove malicious players. Any company hosting a metaverse must prioritize safety—users won’t spend time in unsafe spaces, especially when they can instantly jump to another world.

Guilds or tribes: Enable players to group together—for competition or richer social experiences. The metaverse offers vast opportunities for cooperative gameplay, creating demand for guild management services and integration with external tools like Discord.
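To make the matchmaking item concrete, here is a minimal sketch of skill-based pairing. It uses the classic Elo rating formula as a stand-in for the AI-driven skill assessment described above; the function names, the `k` factor of 32, and the 100-point `max_gap` are assumptions chosen for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Elo estimate of the probability that player A beats player B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_ratings(rating_a: float, rating_b: float, a_won: bool,
                   k: float = 32.0) -> tuple[float, float]:
    """Return both players' new ratings after one match."""
    delta = k * ((1.0 if a_won else 0.0) - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta


def pair_players(ratings: dict[str, float],
                 max_gap: float = 100.0) -> list[tuple[str, str]]:
    """Greedily pair neighbours in rating order when they are close enough."""
    ordered = sorted(ratings, key=ratings.get)
    pairs = []
    i = 0
    while i + 1 < len(ordered):
        a, b = ordered[i], ordered[i + 1]
        if ratings[b] - ratings[a] <= max_gap:
            pairs.append((a, b))
            i += 2  # Both players are matched; move past them.
        else:
            i += 1  # Player a stays in the queue for now.
    return pairs


queue = {"ana": 1500, "bo": 1520, "cy": 1900, "di": 1950, "eve": 1200}
print(pair_players(queue))
```

A production system would also weigh latency, wait time, and party size; this sketch only shows the skill dimension.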

Automated Testing Services

Testing is an expensive bottleneck when launching any online game, requiring human testers to repeatedly play through content to catch bugs and glitches.

Skipping this step is risky. Take the highly anticipated Cyberpunk 2077, which faced widespread backlash due to its buggy launch. But since the metaverse is an open-ended, “open-world” experience, testing costs could become prohibitively high.

One way to alleviate this bottleneck is automated testing tools—AI agents that play games like humans to detect bugs and crashes. A secondary benefit is generating believable AI players that can substitute for disconnected real players or smooth early matchmaking, reducing wait times.

Innovations in automated testing could include:

Automated training of new AI agents by observing real player interactions. Over time, these agents grow smarter and more lifelike, especially as the metaverse matures.

Automatic detection of bugs or crashes, with deep links that jump directly to the issue, enabling human testers to reproduce and fix problems quickly.

Replacing real players with AI agents when someone disconnects unexpectedly, minimizing disruption. This raises intriguing questions: Can players “delegate” to AI at will? Could they even “train” a personal proxy to play on their behalf? Will “AI assistants” become a new category in esports tournaments?

Creative Layer: Reinventing Content Creation

As 3D rendering grows more powerful, the volume of digital content required to build a game continues to rise. Consider Forza Horizon 5, the largest downloadable entry in the series, requiring over 100GB of storage—up from 60GB in the previous installment. And this is just the tip of the iceberg; original “source art files” created by artists can be many times larger. The increase stems from ever-larger, higher-fidelity virtual worlds packed with intricate detail.

Now consider the metaverse—demand for high-quality digital content will only grow as more experiences shift from physical to digital realms.

This shift is already happening in film and TV. Disney+'s recent series The Mandalorian pioneered shooting on “virtual sets” powered by Unreal Engine. This was revolutionary—reducing production time and cost while increasing scale and quality. We’ll see more films and shows adopt this method.

Unlike physical sets, which are usually dismantled after filming, digital sets can be easily archived and reused—making long-term investment worthwhile. In fact, it makes sense to invest heavily in building photorealistic digital worlds that can later serve as foundations for interactive experiences. In the future, I hope these worlds become available to other creators, enabling new content within cinematic-grade environments and further advancing the metaverse.

Content creation itself is increasingly distributed globally. One lasting impact of the pandemic has been the normalization of remote development, with teams working from home worldwide. Remote work offers clear benefits—access to global talent—but poses challenges: maintaining creative collaboration, synchronizing massive asset pipelines, and securing IP.

Given these challenges, I see three major innovation areas in digital content creation: AI-assisted tools, cloud-based asset management/build pipelines, and collaborative workflows.

AI-Assisted Content Creation

Today, nearly all digital content is created manually—increasing time and cost. Some games use “procedural generation” algorithms to create dungeons or worlds, but building those algorithms can be difficult.

A new wave of AI-assisted tools is emerging, helping artists and non-artists create content faster and at higher quality, lowering costs and democratizing game development.

This is especially important for the metaverse, where nearly everyone could become a creator—but not everyone can produce world-class art. By “art,” I mean all digital assets: virtual worlds, interactive characters, music, sound effects, etc.

Innovation in AI-assisted creation will include tools that convert images, videos, or real-world artifacts into digital assets—such as 3D models, textures, and animations. Examples include Kinetix (animation from video), Luma Labs (3D models from photos), and COLMAP (3D spaces from still images).

It will also include creative assistants that interpret artistic direction and generate new assets, for example by turning hand-drawn sketches into 3D models. Inworld.ai and Charisma.ai use AI to create interactive characters; DALL-E generates images from natural language prompts.

Reproducibility is crucial when using AI in game creation. Since creators often revisit and revise assets, storing only the output isn’t enough. Creators must preserve the entire instruction set used to generate the asset, enabling future edits or derivative works.
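One way to picture preserving the "entire instruction set" is to store a small recipe record next to each generated asset. The sketch below is an assumption about what such a record might hold (model name and version, prompt, seed, and a link to the recipe it was derived from); the field names and the `derive` helper are hypothetical, and no specific AI tool's API is implied.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class GenerationRecipe:
    """Everything needed to regenerate or revise an AI-made asset."""

    model: str
    model_version: str
    prompt: str
    seed: int
    parent: Optional[str] = None  # recipe_id of the asset this derives from

    def recipe_id(self) -> str:
        # Hash a canonical JSON form so identical recipes share an ID.
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


def derive(recipe: GenerationRecipe, **changes) -> GenerationRecipe:
    """Create a derivative recipe that records where it came from."""
    return replace(recipe, parent=recipe.recipe_id(), **changes)


base = GenerationRecipe("image-model", "1.0", "mossy forest ogre", seed=42)
winter = derive(base, prompt="mossy forest ogre, winter")
print(base.recipe_id(), "->", winter.recipe_id())
```

Because each derivative points at its parent, a chain of edits can be replayed or branched later instead of starting over from a flattened output file.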

Cloud-Based Asset Management, Build, and Deployment Systems

One of the biggest challenges game studios face today is managing the sheer volume of content needed to create compelling experiences. This remains an unsolved problem with no standardized solution—each studio builds its own patchwork system.

Consider the scale of data involved: A large game may involve millions of files—textures, models, characters, animations, levels, VFX, SFX, voice recordings, music.

Each file undergoes repeated revisions during production, necessitating version history to revert to earlier states. Today, artists often manage this by renaming files (e.g., forest-ogre-2.2.1), causing file bloat. These files are often large, hard to compress, and stored as separate full copies, so they consume vast amounts of storage. Unlike source code, where version control can store only the changes between versions, content files such as artwork typically change entirely even with minor edits.

Moreover, these files aren’t isolated—they’re part of a pipeline describing how individual assets combine into playable experiences. Artists’ “source art” goes through intermediate conversions before becoming final “game assets” used by the engine.

Current pipelines are unintelligent, unaware of dependencies between assets. For example, they don’t know that a farmer NPC in a level holds a 3D basket with a specific texture. So, changing any asset triggers a full rebuild to ensure consistency—an hours-long process that slows iteration.

The metaverse will exacerbate these issues and introduce new ones. Metaverses will dwarf today’s largest games, magnifying storage demands. Their “always-on” nature means content must stream directly into engines—there’s no stopping the metaverse to deploy updates. Dynamic self-updating capability is essential. To achieve composability, remote creators must access source assets, create derivatives, and share them.

Meeting these needs creates two major innovation opportunities. First, artists need a GitHub-like, user-friendly asset management system that offers the same level of version control and collaboration that developers already enjoy. Such a system must integrate with popular tools like Photoshop, Blender, and Sound Forge. Mudstack is an example of a company focusing on this space.

Second, content pipelines need automation to modernize and standardize art workflows. This includes exporting source assets into intermediate formats and converting them into game-ready assets. Smart pipelines would understand dependency graphs and enable incremental builds—only rebuilding downstream files when upstream assets change—drastically reducing iteration time.
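The incremental-build idea can be sketched in a few lines. This is an illustration under stated assumptions, not a description of any studio's pipeline: the asset names are made up (loosely following the farmer-and-basket example above), and `deps` is a hypothetical map from each built asset to its upstream inputs. Given a changed source file, the sketch finds only the downstream assets and orders them so that inputs are rebuilt before the assets that use them.

```python
from collections import defaultdict, deque
from graphlib import TopologicalSorter


def downstream_of(changed: set[str], deps: dict[str, list[str]]) -> set[str]:
    """Return the changed files plus everything that depends on them."""
    dependents = defaultdict(list)  # input -> assets built from it
    for asset, inputs in deps.items():
        for inp in inputs:
            dependents[inp].append(asset)
    dirty = set(changed)
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for asset in dependents[current]:
            if asset not in dirty:
                dirty.add(asset)
                queue.append(asset)
    return dirty


def rebuild_plan(changed: set[str], deps: dict[str, list[str]]) -> list[str]:
    """List the built assets to regenerate, upstream first."""
    dirty = downstream_of(changed, deps)
    order = TopologicalSorter(deps).static_order()
    # Keep only dirty assets that are build outputs (they have inputs).
    return [asset for asset in order if asset in dirty and asset in deps]


deps = {
    "basket_texture.game": ["basket_texture.psd"],
    "basket.game": ["basket.blend", "basket_texture.game"],
    "farmer.game": ["farmer.blend", "basket.game"],
    "well.game": ["well.blend"],
    "village_level": ["farmer.game", "well.game"],
}
print(rebuild_plan({"basket_texture.psd"}, deps))
```

Editing the basket texture touches four build outputs, while `well.game` is left alone; a dependency-unaware pipeline would rebuild all five.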

Enhanced Collaboration Tools

Although game development is distributed and collaborative, many of the professional tools it relies on remain centralized and single-user. For example, Unity and Unreal’s level editors default to one designer editing one level at a time, which slows progress because teams can’t work in parallel.

In contrast, Minecraft and Roblox support collaborative editing—one reason for their popularity—though they lack other professional features. Once you’ve seen kids building a city together in Minecraft, it’s hard to imagine doing it any other way. I believe collaboration will be central to the metaverse, enabling creators to build and test together online.

Overall, collaboration in game development will become real-time across nearly every aspect of creation.

Ways collaborative workflows could evolve to unlock the metaverse include:

Real-time collaborative world-building: Multiple level designers or “world builders” simultaneously edit the same environment, seeing each other’s changes live, with full version control and change tracking. Ideally, designers could seamlessly switch between playing and editing for rapid iteration. Some studios experiment with proprietary tools—like Ubisoft’s AnvilNext engine—while Unreal Engine has tested real-time collaboration, initially developed for film and TV.

Real-time content review and approval: Teams experience and discuss work together. Group reviews are vital in creative processes. Film has “dailies”; most game studios have screening rooms. But remote tools like Zoom offer low-fidelity screen sharing, inadequate for reviewing digital worlds. Game solutions might include “spectator mode,” where teams log in to watch through one player’s perspective. Alternatively, screen sharing itself could improve (higher frame rates, stereo audio, accurate color, pause and annotate) and integrate with task tracking. Companies like Frame.io and Sohonet are addressing this.

Real-time world tuning: Designers adjust any of the thousands of parameters defining a game or world and immediately see results. This process is vital for balanced, engaging experiences, but values are often buried in spreadsheets or config files, hard to edit or tweak live. Some studios use Google Sheets to push config changes instantly to servers, updating game behavior. The metaverse needs stronger tools. A side benefit: these parameters could drive live events or content updates, enabling non-programmers to create new content—e.g., a designer creating a special-event dungeon with tougher monsters and better loot.

A fully cloud-based virtual game studio, where team members (artists, programmers, designers, etc.) can log in from anywhere, on any device (including low-end PCs or tablets), accessing high-end development platforms and full asset libraries. Tools like Parsec offer remote desktop access, but this goes further—integrating creative software licensing and asset management.
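The real-time world tuning item above can be illustrated with a small in-memory sketch. It assumes a hypothetical `TuningStore` that holds default parameter values, accepts validated overrides while the game is running, and notifies subscribed systems so they react immediately. A real service would push changes over the network, which is omitted here.

```python
from typing import Callable

Listener = Callable[[str, float], None]


class TuningStore:
    """Holds tunable game parameters and applies live overrides."""

    def __init__(self, defaults: dict[str, float]) -> None:
        self._defaults = dict(defaults)
        self._overrides: dict[str, float] = {}
        self._listeners: list[Listener] = []

    def get(self, key: str) -> float:
        return self._overrides.get(key, self._defaults[key])

    def subscribe(self, listener: Listener) -> None:
        self._listeners.append(listener)

    def apply(self, changes: dict[str, float]) -> None:
        # Reject typos before anything changes, so a bad edit is a no-op.
        unknown = set(changes) - set(self._defaults)
        if unknown:
            raise KeyError(f"unknown parameters: {sorted(unknown)}")
        self._overrides.update(changes)
        for key, value in changes.items():
            for listener in self._listeners:
                listener(key, value)

    def reset(self) -> None:
        """Roll back to defaults, e.g. when a special event ends."""
        changed = list(self._overrides)
        self._overrides.clear()
        for key in changed:
            for listener in self._listeners:
                listener(key, self._defaults[key])


store = TuningStore({"monster_hp": 100.0, "loot_multiplier": 1.0})
store.subscribe(lambda key, value: print(f"{key} is now {value}"))
# A designer starts a special-event dungeon: tougher monsters, better loot.
store.apply({"monster_hp": 150.0, "loot_multiplier": 2.0})
store.reset()
```

Keeping overrides separate from defaults is what makes the rollback trivial, and it is also why a non-programmer could define an event as nothing more than a named set of overrides.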

Experience Layer: Rebuilding Live Operations

The final layer of transformation involves creating the tools and services needed to actually operate the metaverse—arguably the hardest part. Building an immersive world is one thing; running a 24/7 world with millions of global players is another.

Developers must tackle:

Social challenges of governing a large autonomous region, filled with residents who don’t always get along and disputes needing resolution.

Economic challenges of running a central bank, including issuing currency and monitoring flows to prevent inflation or deflation.

Monetization challenges of operating a modern e-commerce site, selling thousands or millions of items, with promotions, discounts, and in-world marketing tools.

Analytical challenges of understanding real-time events across a vast world, receiving alerts before issues escalate.

Communication challenges of reaching users individually or in groups, given the metaverse’s global reach.

Content challenges of producing regular updates to keep the metaverse growing and evolving.

To address these challenges, game companies need well-equipped teams with access to robust backend infrastructure, dashboards, and tools for large-scale operations. Two particularly ripe areas for innovation are live operations and in-game commerce.

Live operations is still in its infancy. Tools like PlayFab, Dive, Beamable, and LootLocker are partial solutions, and most games still build custom backend stacks. Ideal solutions would include: real-time event calendars for planning, forecasting, templating, or cloning events; personalization via player segmentation and targeted promotions; messaging via push notifications, email, and in-game mailboxes, with multilingual translation; notification builders for non-coders; and simulation tools to test upcoming events or updates, with rollback mechanisms.

More mature but still needing innovation is in-game commerce. With nearly 80% of digital game revenue coming from in-game purchases in free-to-play models, it’s striking that no off-the-shelf solution yet serves the in-game economy well.

Existing solutions only partially address the problem. Ideal systems would include: item catalogs with arbitrary metadata; storefront UIs for real-money sales; promotions and discounts, including time-limited and targeted offers; reporting and analytics with customizable dashboards; UGC monetization with revenue sharing; advanced economies featuring crafting (combining items), auction houses, trading, gifting; and full integration with Web3 and blockchain worlds.
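A few of these pieces (catalog items with arbitrary metadata, time-limited promotions, and UGC revenue sharing) fit together roughly as follows. This is a minimal sketch with made-up names and numbers: `CatalogItem`, `Promotion`, and the 30% creator share are assumptions for illustration, and prices are kept in integer cents to avoid floating-point rounding.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Promotion:
    percent_off: int
    starts: datetime
    ends: datetime

    def active(self, now: datetime) -> bool:
        return self.starts <= now < self.ends


@dataclass
class CatalogItem:
    sku: str
    price_cents: int
    creator_share_percent: int = 0  # Share paid to a UGC creator, if any.
    metadata: dict = field(default_factory=dict)  # Arbitrary item data.


def price_at(item: CatalogItem, promos: list[Promotion], now: datetime) -> int:
    """Apply the best currently active discount; promotions do not stack."""
    best = max((p.percent_off for p in promos if p.active(now)), default=0)
    return item.price_cents * (100 - best) // 100


def split_revenue(item: CatalogItem, paid_cents: int) -> tuple[int, int]:
    """Return (creator_cents, platform_cents) for one sale."""
    creator = paid_cents * item.creator_share_percent // 100
    return creator, paid_cents - creator


hat = CatalogItem("wizard-hat", 1000, creator_share_percent=30,
                  metadata={"slot": "head", "rarity": "rare"})
launch_week = Promotion(25,
                        datetime(2025, 1, 1, tzinfo=timezone.utc),
                        datetime(2025, 1, 8, tzinfo=timezone.utc))
now = datetime(2025, 1, 3, tzinfo=timezone.utc)
paid = price_at(hat, [launch_week], now)
print(paid, split_revenue(hat, paid))
```

Crafting, auctions, and trading would layer on top of the same catalog, which is part of why a shared, well-defined item model matters.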

Next Steps: Transforming Game Development Teams

In this article, I’ve shared my vision for how new technologies enabling cross-game composability and interoperability could transform gaming. I hope others in the game community share my excitement about this future and are inspired to help build the next generation of companies that will power this experiential revolution.

This coming wave of change won’t just create opportunities for new software tools and protocols—it will reshape the nature of game studios themselves, shifting the industry from monolithic teams toward specialization across new dimensions.

Indeed, I foresee greater specialization in game production. Specifically, I expect to see:

World Builders focused on creating playable worlds—realistic or fantastical—populated with creatures and characters native to that universe. Consider the incredibly detailed Wild West of Red Dead Redemption. Rather than investing so much in a world for just one game, why not reuse it to host many games? Why not continue investing in it, letting it grow and evolve over time, shaped by the needs of various games?

Narrative Designers who craft compelling interactive stories within these worlds—filled with plotlines, puzzles, and quests for players to discover.

Experience Creators who design cross-world playable experiences, focusing on gameplay mechanics, reward systems, and control schemes. In coming years, as more companies bring parts of their businesses into the metaverse, creators who bridge physical and digital worlds will be especially valuable.

Platform Builders who develop the underlying technologies used by the experts above.

Games are already the largest sector in entertainment. As more economic activity shifts online and into the metaverse, their scale will grow even further. We haven’t even mentioned exciting near-future developments like Apple’s new AR headset or Meta’s latest VR prototype…

There has never been a better time to be a creator.
