AI Is Causing Layoffs, but OpenAI Is Hiring Salespeople

AI companies are hiring large numbers of people for on-the-ground sales: the shovels have been built, but someone still has to teach others how to dig.

Kuli, TechFlow

Recently, AI-driven job-loss anxiety has swept across the internet in both East and West.

Block laid off 4,000 employees, with its CEO stating AI can do your job; Pinterest cut 15% of its workforce, redirecting funds toward AI development; Dow Chemical laid off 4,500 people, citing increased automation...

In China, things aren’t calm either: NetEase was rumored to be replacing outsourced staff with AI; iFLYTEK denied large-scale layoffs; ByteDance reportedly “optimizes” (a euphemism for cutting) 20% of its non-AI departments every six months...

According to statistics, in the first quarter of 2026 alone, over 45,000 tech-industry jobs were cut globally—nearly 10,000 of them explicitly attributed to AI.

Against this backdrop, last Friday the UK’s Financial Times reported that OpenAI plans to expand its headcount from 4,500 to 8,000 by year-end.

3,500 new positions. A company building AI claims it doesn’t have enough people?

On OpenAI’s careers page, engineers and researchers are indeed being hired, but other roles are equally prominent: Partner Managers, Enterprise Sales, and GTM (Go-to-Market) teams. There is also a newly reported function called “technical ambassadorship”: technical ambassadors dedicated to helping enterprise customers adopt and deploy AI.

In short, OpenAI isn’t hiring people to make AI stronger—it’s hiring people who’ll convince others to pay for it.

Winning Customers Beats Optimizing Models

ChatGPT boasts 900 million weekly active users—but most don’t pay.

Even paying consumers are costly to serve: each heavy user consumes more compute than their $20 monthly subscription covers. OpenAI expects $25 billion in revenue this year—but also a $14 billion loss.

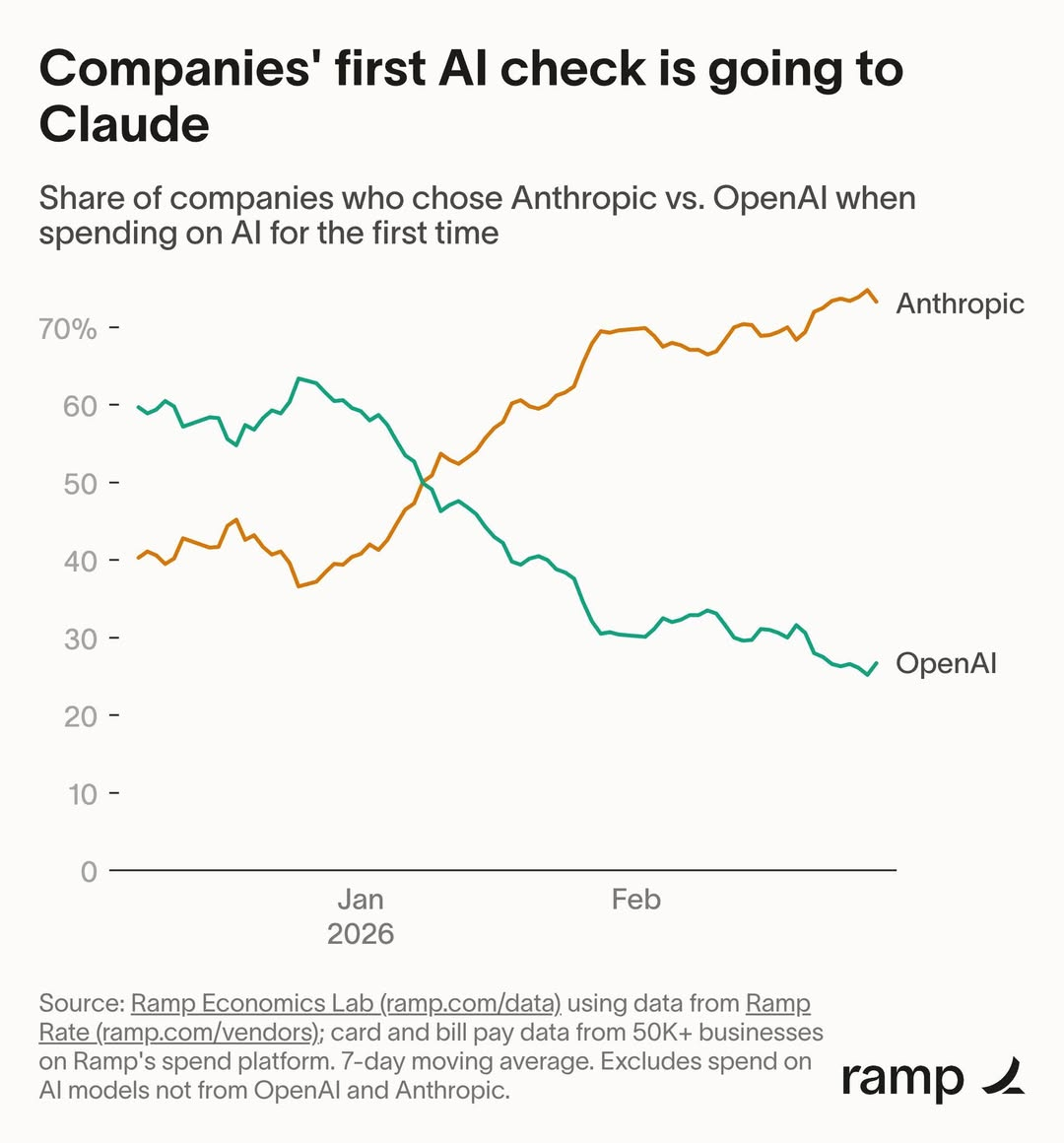

Consumers drive traffic; enterprise customers drive profits. And enterprise customers are shifting toward Anthropic’s Claude.

Ramp’s data shows that among enterprises purchasing AI tools for the first time, Anthropic captured 73% of market share. Ten weeks ago, the split was roughly 50–50.

Last December, Sam Altman sent a company-wide “code red” memo, pausing all non-core initiatives—including advertising and shopping assistants—and concentrating all resources on improving the ChatGPT experience.

The immediate trigger was Google Gemini 3 outperforming ChatGPT across multiple benchmarks—but the deeper concern lay on the enterprise side: Anthropic is embedding Claude directly into clients’ codebases and workflows. Once integrated, migration costs snowball.

Models can iterate—but lost customers won’t return on their own. Winning them back requires real people knocking on doors—not AI-generated suggestions.

A Shovel Can’t Sell Itself

AI can write code, handle customer service, and perform data analysis—but there’s one thing it cannot do:

Persuade an enterprise CTO to sign an annual contract with *us*.

For individuals, using AI means downloading an app—and uninstalling it if dissatisfied. For enterprises, adopting AI is entirely different. Data security reviews, internal process overhauls, integration with legacy systems, employee training—any single bottleneck can stall a project.

This isn’t solved by model benchmark scores. It demands people sitting face-to-face in client conference rooms.

OpenAI clearly gets it. It’s not just hiring salespeople—the FT reports it’s negotiating joint ventures with private equity firms like TPG and Brookfield, specifically to help enterprises implement AI. At its core, this business still hinges on deploying human talent onsite.

Block’s story echoes the same reality.

Within three weeks of laying off 4,000 people, the company began rehiring. One design engineer was told they had been “laid off in error.” A tech lead found that after his entire team was cut, no one was left who could handle critical business functions; he threatened to resign, and the company then rehired some staff.

Jack Dorsey himself had foreshadowed this in his layoff letter: “We may have laid off some people we shouldn’t have…”

AI certainly fuels layoff anxiety—but cutting away the very lifeblood of an organization in the name of AI clearly overshoots the mark. Even at a company whose CEO publicly declares AI can replace most employees, there remain vital functions AI simply cannot absorb.

AI excels at replacing tasks with clearly defined parameters—but “convincing an organization it needs AI, then helping it adopt AI successfully” is inherently unquantifiable.

Every technological revolution spawns the saying: “The shovel-sellers profit most.” This AI wave is no exception—the consensus holds that infrastructure providers enjoy stable, winner-agnostic revenue.

Yet OpenAI’s current predicament reveals a crucial truth: once shovels are built, someone must still teach others how to use them. And that “teaching” process itself cannot be performed by the shovel.

Ground Operations: The Iron Rice Bowl Amid AI Anxiety

Compare those recently laid off with those newly hired—and a dividing line emerges.

Among Block’s 4,000 layoffs, many were engineering and operations staff hired during the pandemic—performing standardized, easily describable tasks. By contrast, OpenAI’s 3,500 new hires are predominantly in sales, customer success, and partner management—roles impossible to codify into procedural documentation.

What OpenAI is doing has an old name: ground operations (“ground ops”).

Send people to client offices to sit down, listen to needs, integrate systems, and monitor go-live. Whether they are called “Technical Ambassadors” or “Partner Managers,” stripped of the jargon these roles are functionally identical to what Meituan did a decade ago during the O2O (online-to-offline) wars: dispatching staff door-to-door to persuade restaurant owners to install POS terminals.

This divide isn’t unique to just these two companies.

This year, Shopify’s CEO told employees: “To request new headcount, first prove AI *cannot* do that job.” Klarna laid off 700 customer-service reps two years ago, claiming AI sufficed—then quietly rehired staff last year, with its CEO admitting it had “moved too fast on AI.”

So what distinguishes those laid off from those rehired?

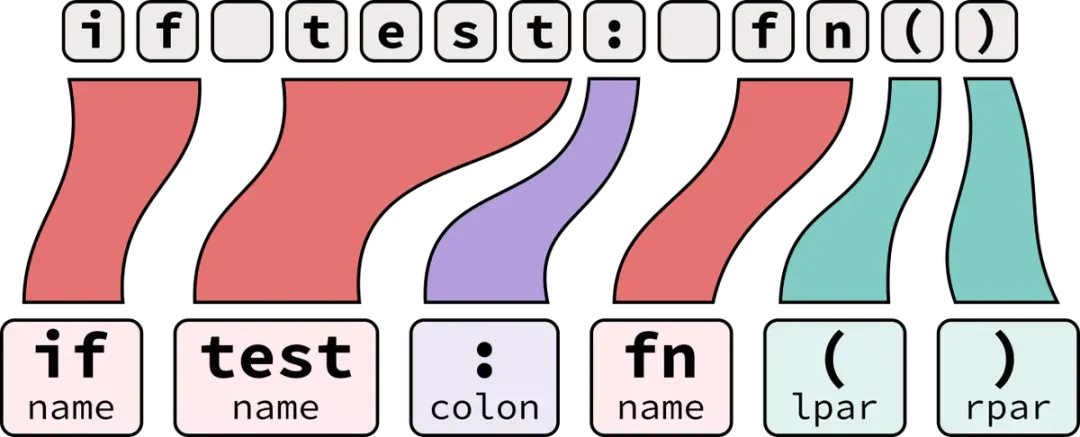

Layoff-vulnerable roles share one trait: work content decomposes cleanly into explicit inputs and outputs—writing code, responding to tickets, generating reports—clearly bounded tasks where AI excels.

Ground ops is the exact opposite. Integrating AI into a financial client’s compliance system differs fundamentally from helping a game studio leverage AI for content generation—no two projects are alike. The person across the table changes; the solution changes. You can’t capture this in a prompt.

AI isn’t eliminating all jobs—it’s re-pricing them. Tasks describable in one sentence are becoming cheaper; those requiring nuanced, contextual judgment are growing more valuable.

Three years ago, a single research paper could shift the world. Today, thousands of people must knock on doors—one by one.

If you’re anxious about AI replacing you, the answer may hinge less on your industry—and more on whether your job can be described in one sentence.

The part that *can* be described that way? It’s already precarious.
