AI Wealth-Building Tutorial: Start with “Spicy” Content, Then Sell Courses

TechFlow Selected

Desire sells well; responsibility is hard to assign.

Author: Sha La Jiang

“Food and sex are fundamental human desires”—the rise of most great business models hinges on this basic truth, and AIGC is no exception.

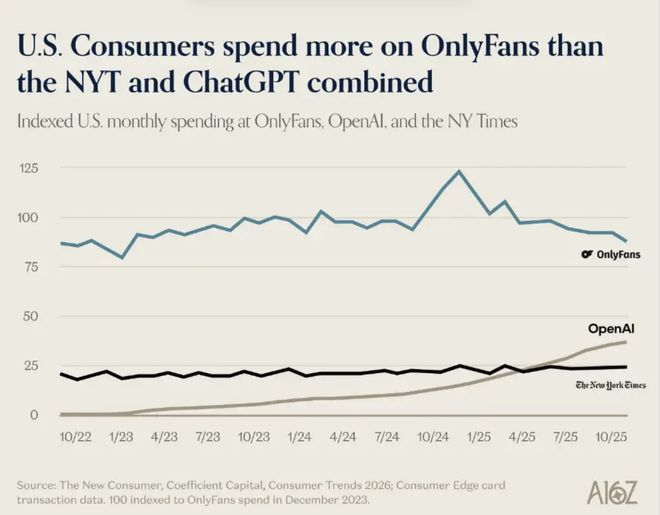

A16Z (Andreessen Horowitz), a top-tier Silicon Valley VC firm, recently published a report analyzing AI consumer trends. Buried in this otherwise serious discussion of AI productivity was a line chart that left readers both amused and dismayed: last year, U.S. users spent more money on OnlyFans than on OpenAI and The New York Times combined.

Chart from the A16Z report

It’s ironic—and undeniably true: productivity still can’t compete with sexual appeal.

So just how much money can you make with borderline, edge-skirting AI content?

Productivity Still Can’t Beat Sexual Appeal

The first wave of creators building AI virtual models knows this best.

Starting around late 2022, as tools like Midjourney and Stable Diffusion began generating stable, high-quality images, some quickly realized their potential: these tools could produce hyper-realistic human faces at scale—almost for free. They used AI to generate fictional female personas, assigned each a name and backstory, posted carefully curated “daily life” updates on Instagram and TikTok, and deployed ChatGPT to handle intimate DM replies—offering subscribers a so-called “girlfriend experience.” The entire workflow was nearly fully automated; the operators behind it didn’t even need to appear in person.

This model thrived most smoothly on Fanvue, an OnlyFans competitor with a more permissive stance toward AI-generated content. According to Fanvue’s official disclosure, AI virtual models accounted for 15% of the platform’s total revenue in November 2023. By 2024, top-performing AI virtual models were routinely earning over $20,000 per month, and several well-established accounts were pulling in over $200,000 a year. In 2025, the figures kept climbing: Fanvue CEO Will Monange said in an interview that total earnings from AI creators had grown more than 60% over 2024, making virtual models the fastest-growing content category on the platform.

OnlyFans officially bans AI-generated content—but loopholes persist. Reddit forums regularly host discussions on how to monetize AI content on OnlyFans while staying under the radar. A common workaround involves recruiting a real woman to complete the platform’s facial verification process, then training an AI model on her photos to mass-produce content.

No matter how strict a platform’s enforcement, technology keeps outpacing it. Today’s AI-generated imagery is so convincing that even seasoned observers struggle to tell it apart from reality. Just the other day, I scrolled past a suggestive video on Xiaohongshu of a handsome guy sitting in a car. Only when I opened the comments and saw the pinned remark, “This AI has great taste,” did I realize he wasn’t real.

Beyond adult content, another group has profited from AI—but in a completely different direction: children’s picture books.

Zhao Lei (a pseudonym) was among the earliest entrants into this space. At the end of 2022, he had just been laid off from a product role at a major tech company and was at home exploring new opportunities. That was when Midjourney started delivering consistent, high-quality output. Staring at its watercolor-style animal illustrations, he had an epiphany: “This *is* picture-book illustration.” He spent two weeks researching Amazon KDP (Kindle Direct Publishing). His logic was refreshingly simple: ChatGPT writes the story, Midjourney generates the images, he handles layout and upload, and then he collects royalties. “It really was that profitable back then,” he said. “Stack up a few titles, and passive income easily topped $10,000 a month.”

But the window didn’t stay open long. In the second half of 2023, AI-generated picture books exploded on KDP, and TikTok was flooded with nearly 90,000 tutorials sporting near-identical clickbait titles: “EASY AI Money,” “Make $100K Monthly with Kids’ Books.”

Everyone rushed into the same lane, and sales per title quickly thinned out. Quality problems soon surfaced: dinosaurs with comically oversized forelimbs, children drawn with the wrong number of fingers. Major platforms began requiring creators to disclose AI use at upload, which effectively ended the race. “Making money from AI picture books is extremely difficult now,” Zhao Lei admitted.

Then, almost in unison, Zhao Lei and the AI “edge-walkers” converged on the same final destination: selling courses (a trend taken to its extreme recently by the viral “Lobster” phenomenon).

Zhao Lei sells “From Zero to Published: A Complete Guide to AI Picture Books”; the edge-walkers sell “How to Build Your Own AI Virtual Model.” Buyers are invariably newcomers who’ve just heard about the opportunity—and still believe the window hasn’t closed.

Two distinct markets. Two different content packages. The same underlying product: the illusion that “I, too, can be the pig that flies when it stands in the right wind.”

Aesthetic Sense and “Legacy Skills” Are Holding People Back

These businesses may sound like effortless windfalls—so what’s the barrier to entry?

A friend who works as a UX designer gave me her answer: regional internet restrictions and subscription fees. When Midjourney launched, she wrote a step-by-step user guide priced at ¥99; it is still listed on Xiaohongshu today, earning her passive income. From a pure tool-usage standpoint, she was right: the technical barrier *is* falling fast.

But as someone whose drawing skills plateaued at stick figures—and whose outputs from various AIGC tools consistently land on the “ugly” side—I need to add something she didn’t mention: aesthetic sense.

People used to joke that AI won’t replace designers because clients don’t know what they want. I thought it was just a meme—until I tried using these tools myself, and discovered it applied to me word-for-word.

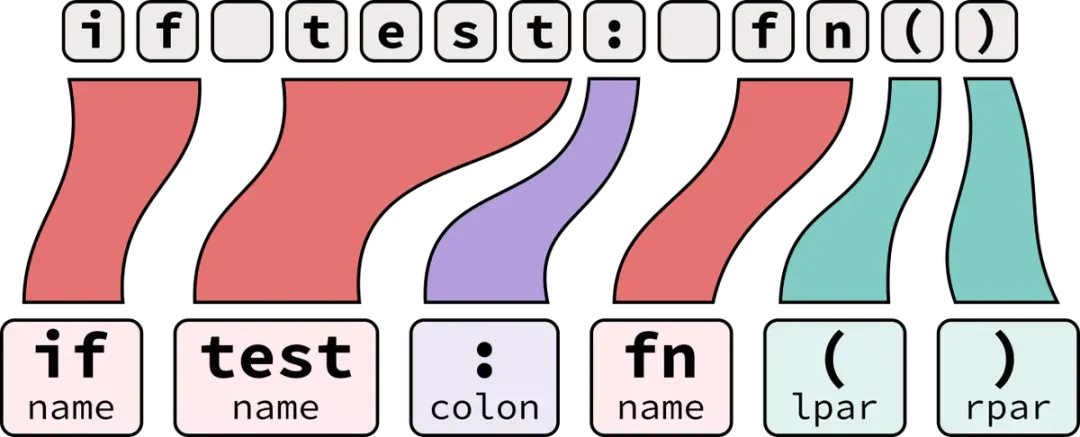

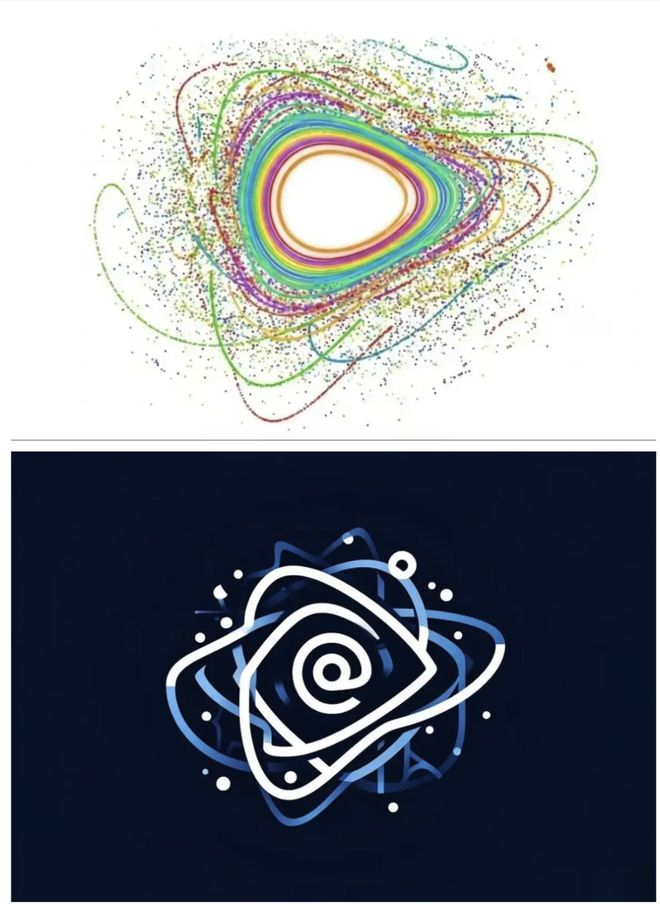

Last year, I launched a media account and wanted to use the physics concept of a “measurable island” as its logo. A measurable island refers to the elements worth preserving amid chaotic flows of information. I gathered reference images for the concept, fired up the tool, uploaded them, typed in pages of descriptive prompts, and hit generate. The results were a mess. I revised eight times, and each iteration merely swapped one kind of chaos for another. I knew the *feeling* I wanted but had no idea how to translate that feeling into precise instructions. In the end, I asked a designer friend for help. She spent twenty minutes and delivered a result light-years beyond what I had managed in two hours.

Top: Before revision; Bottom: After revision

The problem wasn’t the tool—it was me. More precisely: my inability to convert vague aesthetic intuitions into precise language.

This dilemma isn’t mine alone.

A friend who handles content operations began using Seedance for short videos last year. She mastered the tool quickly—but got stuck writing shot lists. “I know I want a textured image—but typing ‘textured’ into the prompt does nothing,” she said. “I don’t know what specific lighting, framing, or camera movement defines that texture.” Her final output? “Looks kind of right—but somehow wrong everywhere.”

Another friend used Marble, a tool that generates 3D scenes from text and images, to create content assets. She generated and scrapped dozens of outputs before realizing she lacked any reference point: she simply didn’t know what “good” looked like, so she couldn’t judge whether the AI’s output matched her vision.

A 3D panorama generated with Marble

In stark contrast, a friend with professional photography experience achieved markedly superior results using the same tools. “I didn’t spend much time studying prompt engineering tricks,” he explained. “I just knew exactly what composition and lighting I wanted—and described them clearly. The tool delivered accordingly.”

Tool capabilities are advancing rapidly—but the gap between users isn’t narrowing. If anything, it’s widening. Previously, everyone struggled to produce quality work. Now, those with accumulated aesthetic judgment can produce excellent outputs—while others remain stuck oscillating between “functional” and “polished.”

Tools are adapting to this reality. The popularity of one-click template tools like NotebookLM stems from a simple insight: they bypass the prerequisite “you must know what you want.” Templates make aesthetic decisions for you—you just fill in the content. But templates also cap your ceiling: they solve “functional,” not “beautiful.”

This dynamic plays out just as clearly in text generation. A friend working in marketing strategy was recently reassigned to PR and now has to produce large volumes of written copy. Her boss said she could use AI, yet she felt more lost than ever and asked me for an AI writing manual I had shared earlier. The root issue: she lacks an intuitive sense of what makes a good PR piece. Without knowing the standard, she can’t assess AI output or decide in which direction to revise it.

By contrast, I find AI writing remarkably smooth. Not because I’m more adept with the tools—but because years as a journalist have honed my sense of expression: I recognize why a sentence works, where it feels awkward, and precisely where AI’s output falls short—and how to push it forward. Here, aesthetic sense transforms into a practical skill: it tells you where the finish line is—rather than letting AI run aimlessly through endless iterations.

When tool capability ceases to be the bottleneck, aesthetic sense and “legacy skills” become the highest barriers—and using AI poorly can be worse than not using it at all.

I Just Want Something Sexy—Does It Matter Whether It’s AI or Human?

Early adopters don’t just reap rewards—they also attract controversy. Today’s AIGC ecosystem exhibits a paradoxical phenomenon: *whether* AI was used matters more than *how good* the output is.

Fang Yuan (a pseudonym), a brand designer, took on a visual identity project and used AI tools to compress a process that previously took two weeks down to three days. He felt the results were actually better than his prior work. He sent the deliverables and waited for feedback.

The client’s first reply wasn’t about the work. It was: “That was fast. Did you use AI?” Before Fang Yuan could respond, another message arrived: “We don’t accept design work involving AI.” He still isn’t sure whether the client even opened the attachments. His frustration: efficiency itself has become a liability.

He’s far from alone. Unlike Photoshop or Excel, AI has quietly become a moral litmus test in how many people evaluate work. No one receiving a retouched image asks, “Did you use photo-editing software?” No one asks of a financial statement, “Did you calculate this in Excel?”

AI triggers a different kind of suspicion—one closer to questioning whether you truly *did* the work yourself.

Creative work has long operated under an implicit contract: great work implies human investment—time, effort, refinement. AI disrupts the assumed causal link between “effort” and “output.”

When your three-day AI output sits beside someone else’s two-week handcrafted work—even if quality matches—the former feels subtly “off.” That dissonance crystallizes as “unfairness.”

A University of Arizona study found that when designers proactively disclose AI assistance, client trust drops by an average of 20%, even when they clarify that AI served only as a support tool.

As AIGC matures, this issue has escalated from individual client-designer trust to systemic platform-level concerns.

Since 2023, China has rolled out a series of rules requiring AI-generated content to be labeled. First came the “Regulations on Deep Synthesis Services for Internet Information Services,” in force from January, which targeted deepfaked faces and synthetic voices. Then in August, the “Interim Measures for the Administration of Generative Artificial Intelligence Services” formally brought ChatGPT-style generative services under regulation. In March 2025, oversight tightened further: the Cyberspace Administration of China, together with other government departments, issued the “Measures for Identifying AI-Generated Synthetic Content,” extending labeling requirements to text, images, audio, and video alike.

Yet regulation cannot resolve definitional ambiguity.

Platforms can reliably detect a 100% AI-generated video, but they struggle at the margins. Is a selfie whose color grading and composition were adjusted with AI “AI-generated”? If a video uses self-shot footage but hands editing and scoring to AI, does it need a label? If an article starts as an AI draft but 70% of it is rewritten by a human, should it carry the label at all?

The boundary dilemma is ultimately a responsibility dilemma. Without clear definitions, accountability has no anchor. If AI composes a song’s melody, a human rewrites the lyrics, and a copyright dispute arises, who bears responsibility? If an influencer publishes an AI-generated review after only adjusting its tone, and the recommended product fails to deliver, who answers for it? When we ask “Was this made by AI?”, what we’re really asking is simpler: Is there a human standing behind this work, taking responsibility? Is someone thinking through *your* problem? Does someone genuinely care whether the outcome is good?

The hardest boundary to draw isn’t between AI and human—it’s between responsibility and irresponsibility.
