Doubao's pressure has only just begun

TechFlow Selected

According to reporters, the Doubao team is still debating internally whether the Doubao app should integrate DeepSeek.

Article source: Zhang Yangyang, Cailian Press AI Daily

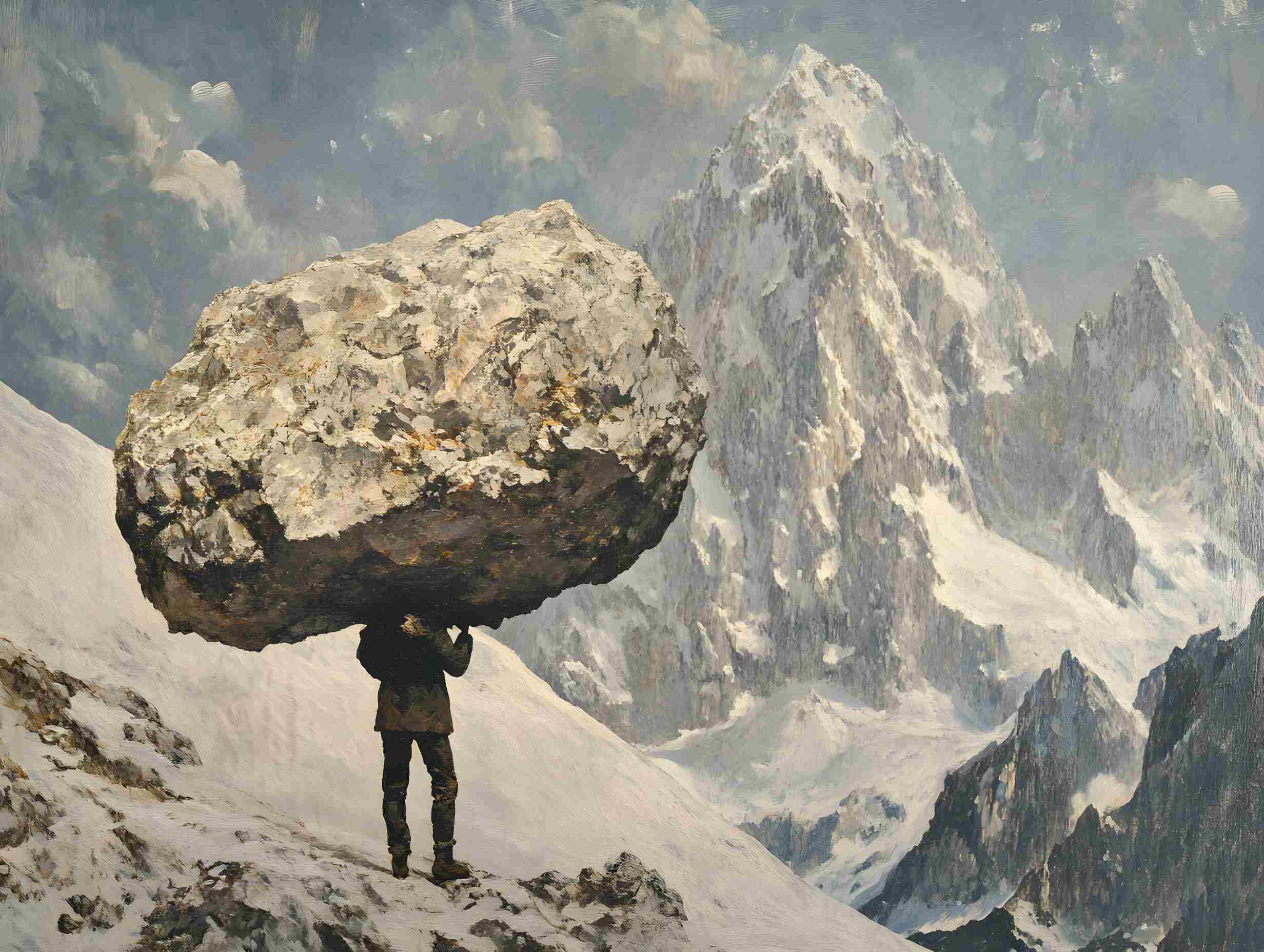

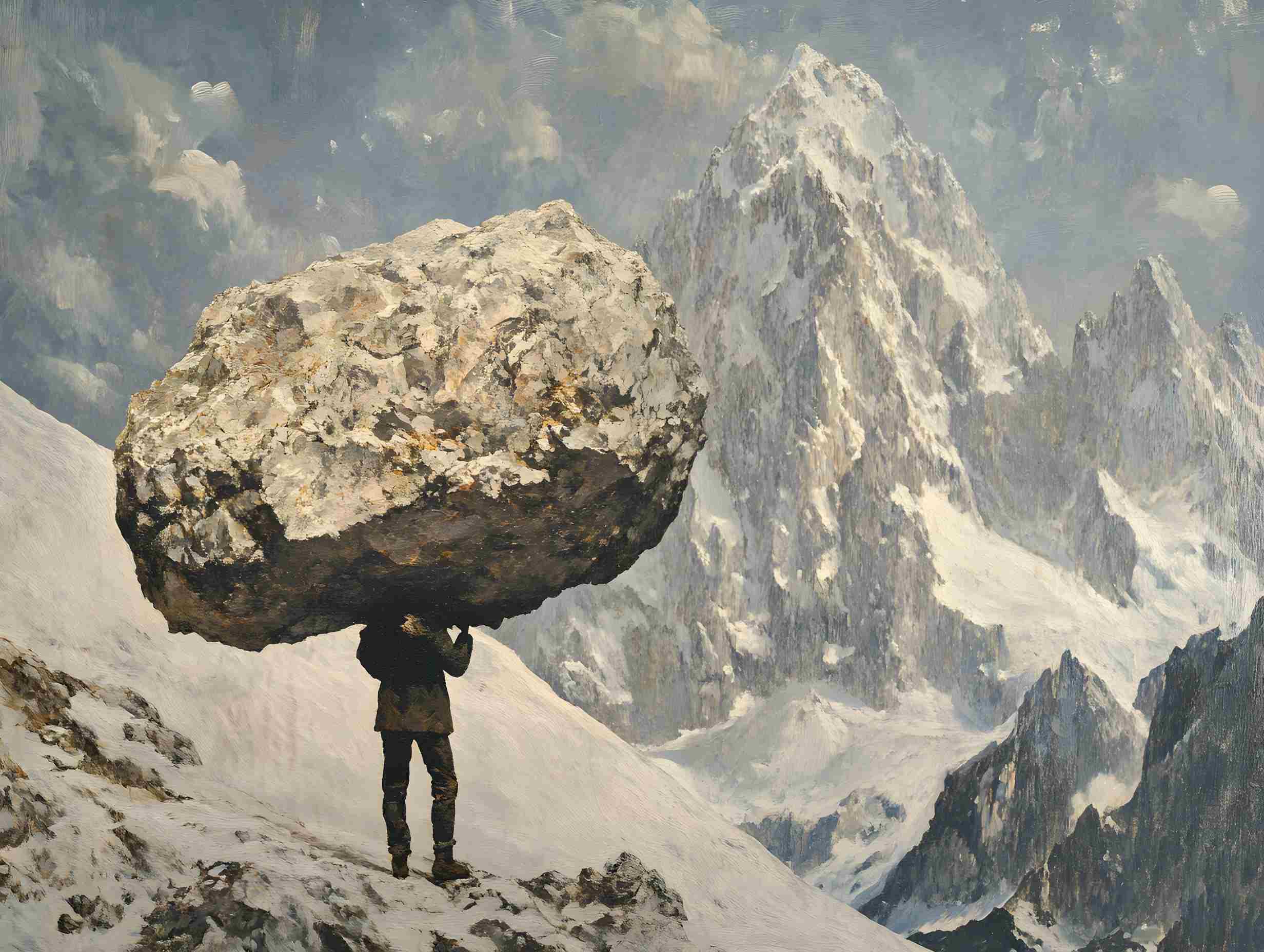

Image source: Generated by Wujie AI

Today, the Doubao large model team at ByteDance has introduced a new sparse model architecture called UltraMem. This architecture effectively addresses the high memory access costs during MoE inference, improving inference speed by 2–6 times compared to MoE and reducing inference costs by up to 83%.

Competition among large models, both in China and abroad, has reached a white-hot phase. Doubao has moved on both the foundational and application layers of AI and continues to iterate and upgrade.

Ongoing cost reduction and efficiency improvement for large models

According to research by the Doubao large model team, under the Transformer architecture, model performance exhibits a logarithmic relationship with parameter count and computational complexity. As LLMs continue to scale up, inference costs rise sharply and speeds slow down.

Although the MoE (Mixture of Experts) architecture has successfully decoupled computation from parameters, during inference even small batch sizes activate all experts, causing memory access to spike sharply and significantly increasing inference latency.
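The claim that even small batches end up touching nearly every expert can be illustrated with a back-of-the-envelope estimate. The sketch below is not from the Doubao team's work; it assumes, purely for illustration, that each token is routed independently and uniformly at random to `top_k` of `num_experts` experts. Under that simplifying assumption, a given expert is missed by one token with probability `1 - top_k / num_experts`, so the expected fraction of experts whose weights must be read for a batch of `batch_tokens` tokens is `1 - (1 - top_k / num_experts) ** batch_tokens`. The function name and the example configuration (64 experts, top-2 routing) are hypothetical.

```python
def expected_fraction_of_experts_touched(
    num_experts: int, top_k: int, batch_tokens: int
) -> float:
    """Expected share of experts read from memory for one batch.

    Assumes (hypothetically) uniform, independent top-k routing per token.
    Real routers are learned and not uniform, so treat this as a rough guide.
    """
    if not 0 < top_k <= num_experts:
        raise ValueError("top_k must be between 1 and num_experts")
    if batch_tokens < 0:
        raise ValueError("batch_tokens must be non-negative")
    # Probability that a single token does not select a given expert.
    p_miss_one_token = 1.0 - top_k / num_experts
    # Tokens are assumed independent, so the misses multiply.
    return 1.0 - p_miss_one_token ** batch_tokens


if __name__ == "__main__":
    # Hypothetical configuration: 64 experts, 2 active per token.
    for batch_tokens in (1, 8, 32, 128):
        share = expected_fraction_of_experts_touched(64, 2, batch_tokens)
        print(f"batch of {batch_tokens:>3} tokens: {share:.1%} of experts touched")
```

With these illustrative numbers, a single token touches 2 of 64 experts, but a batch of 128 tokens is expected to touch roughly 98% of them. Compute per token stays sparse while memory traffic approaches that of loading every expert, which is the bottleneck the article describes.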

The Foundation team of ByteDance's Doubao large model has proposed UltraMem—a sparse model architecture that similarly decouples computation from parameters—effectively solving the memory access problem in inference while maintaining model performance.

Experimental results show that under identical parameter and activation conditions, UltraMem outperforms MoE in model effectiveness and boosts inference speed by 2–6 times. Moreover, under common batch size scales, UltraMem’s memory access cost is nearly equivalent to that of a Dense model with the same computational load.

It is evident that large model vendors are striving for cost reduction and efficiency gains on both training and inference fronts. The core reason is that as model scale expands, inference cost and memory access efficiency have become key bottlenecks limiting large-scale deployment of large models, and DeepSeek has already demonstrated a viable path toward "low-cost, high-performance" breakthroughs.

Liu Fanping, CEO of YANXIN AI, told the *Sci-Tech Innovation News* that to reduce large model costs, the industry tends to seek breakthroughs at the technical and engineering levels, using architectural optimization as a way to “leapfrog.” Foundational architectures like Transformer still carry high costs, so research into new architectures is necessary; core algorithms, particularly backpropagation, may be a bottleneck for deep learning.

In Liu Fanping’s view, in the short term, the high-end chip market will continue to be dominated by NVIDIA. However, growing demand for inference applications presents opportunities for domestic GPU companies. In the long run, once algorithmic innovation yields results, the impact could be remarkable, and future computing power market demand remains to be seen.

Doubao’s pressure has only just begun

During the recent Spring Festival, DeepSeek rapidly gained global attention for its low training cost and high computational efficiency, emerging as a dark horse in the AI field and pushing competition among large models, in China and abroad, into a white-hot phase.

DeepSeek is currently Doubao's strongest competitor among domestic large models, having surpassed Doubao in daily active users for the first time on January 28. DeepSeek's daily active user count has now exceeded 40 million, making it the first application in the history of China's mobile internet to break into the top 50 apps by daily active users across the entire network in under a month after launch.

In recent days, the Doubao large model team has been rolling out continuous advancements. Two days ago, they launched their experimental video generation model "VideoWorld." Unlike mainstream multimodal models such as Sora, DALL-E, and Midjourney, VideoWorld achieves world understanding without relying on language models—a first in the industry.

Currently, Doubao has established a comprehensive presence across both the AI foundational and application layers, continuously iterating and upgrading. Its AI product matrix spans multiple domains, including the AI chat assistant Doubao, Maoxiang, Jimeng AI, Xinghui, and Doubao MarsCode.

On February 12, stocks linked to Doubao surged rapidly in afternoon trading. According to Wind data, the Douyin Doubao index has risen over 15% since the beginning of February. On the individual stock front, Boyuan Technology surged to the daily limit, HanDe Information quickly rose and briefly hit the limit, while Guanghe Connectivity and Advanced Data Tech also saw intraday highs.

CITIC Securities previously released a report stating that the expansion of Doubao AI's ecosystem will trigger a new round of technology investment cycles among tech giants. The AI industry exhibits strong network effects and economies of scale—once leading AI applications gain a user advantage, their competitive strengths in model accuracy, marginal cost, and user stickiness will gradually strengthen.

As Doubao’s user base continues to grow, the application ecosystem built around Doubao AI is expected to develop faster. This will drive corporate investment in AI training and inference computing infrastructure, and Doubao AI’s rapid growth is also likely to push other tech giants to increase their own investments in AI infrastructure.

However, for Doubao itself, the competition with top performer DeepSeek may have only just begun.

As an open-source model, DeepSeek’s low cost and high performance are changing many companies’ model selection strategies. Currently, numerous AI applications under companies such as Huawei and Baidu have announced integration with DeepSeek. Even ByteDance itself has integrated the DeepSeek-R1 model into Feishu’s multidimensional table feature, and Volcano Engine has also completed adaptation.

According to the *Sci-Tech Innovation News*, the Doubao team is still debating internally whether the Doubao app should integrate DeepSeek. From a user experience perspective, choosing the better-performing model is understandable, but replacing its own model with a competitor’s would be difficult to justify to shareholders, not to mention additional challenges such as the adaptation burden of integrating a new model.
