The Person Building Robots for OpenAI Sees a Terrifying Future

When a person responsible for building robots resigns out of concern that robots might kill people, this act itself speaks volumes.

Author: Geek Friend

On March 7, 2026, when I saw the news of Caitlin Kalinowski’s resignation, my first reaction wasn’t shock. It was, “Finally, someone has spoken through action.”

Kalinowski served as OpenAI’s Head of Hardware and Robotics Engineering. She had only joined the company in November 2024—less than a year and a half before resigning.

Her reason was direct and weighty: she could not accept the potential domestic surveillance and autonomous weapons applications arising from OpenAI’s contract with the U.S. Department of Defense (DoD).

This is no ordinary departure. It is a person who helped build AI’s physical form walking away to tell the world that she refuses to bear responsibility for what her creations might do.

To understand Kalinowski’s departure, we must first rewind to an event that occurred roughly one week earlier.

On February 28, Sam Altman announced OpenAI’s agreement with the U.S. Department of Defense, permitting the Pentagon to deploy OpenAI’s AI models on its classified networks. The announcement triggered an immediate public firestorm.

Interestingly, Anthropic, the competitor against which OpenAI is most often measured, had recently rejected a similar DoD proposal. Anthropic insisted on stronger ethical safeguards in any contract, prompting U.S. Defense Secretary Pete Hegseth to publicly denounce the company on X as delivering “a masterclass in arrogance and betrayal,” echoing the Trump administration’s directive to halt all cooperation with Anthropic.

OpenAI then stepped in to take the deal.

User backlash was swift and intense. On February 28 alone, ChatGPT uninstallations surged 295% over the previous day. The #QuitGPT movement spread rapidly across social media, drawing more than 2.5 million supporters to a digital boycott within three days. Claude capitalized on the moment, overtaking ChatGPT to become the most-downloaded app in the U.S. and topping the Apple App Store’s free apps chart.

Under mounting pressure, Altman acknowledged on March 3 that he “should not have rushed this contract,” calling it “opportunistic and careless,” and announced revisions to its language—explicitly stating that “AI systems should not be intentionally used for domestic surveillance of U.S. personnel and citizens.”

Yet the word “intentionally” itself is a loophole. Lawyers at the Electronic Frontier Foundation pointed out bluntly that intelligence and law enforcement agencies routinely circumvent stricter privacy protections by relying on data collected “incidentally” or purchased commercially, so adding “intentionally” does not amount to a real constraint.

Kalinowski’s resignation unfolded precisely against this backdrop.

01 What She Saw Was Far More Concrete Than We Imagined

While most people were still debating whether “OpenAI is compromising with the government,” Kalinowski faced a far more specific and brutal reality—her team was building robots.

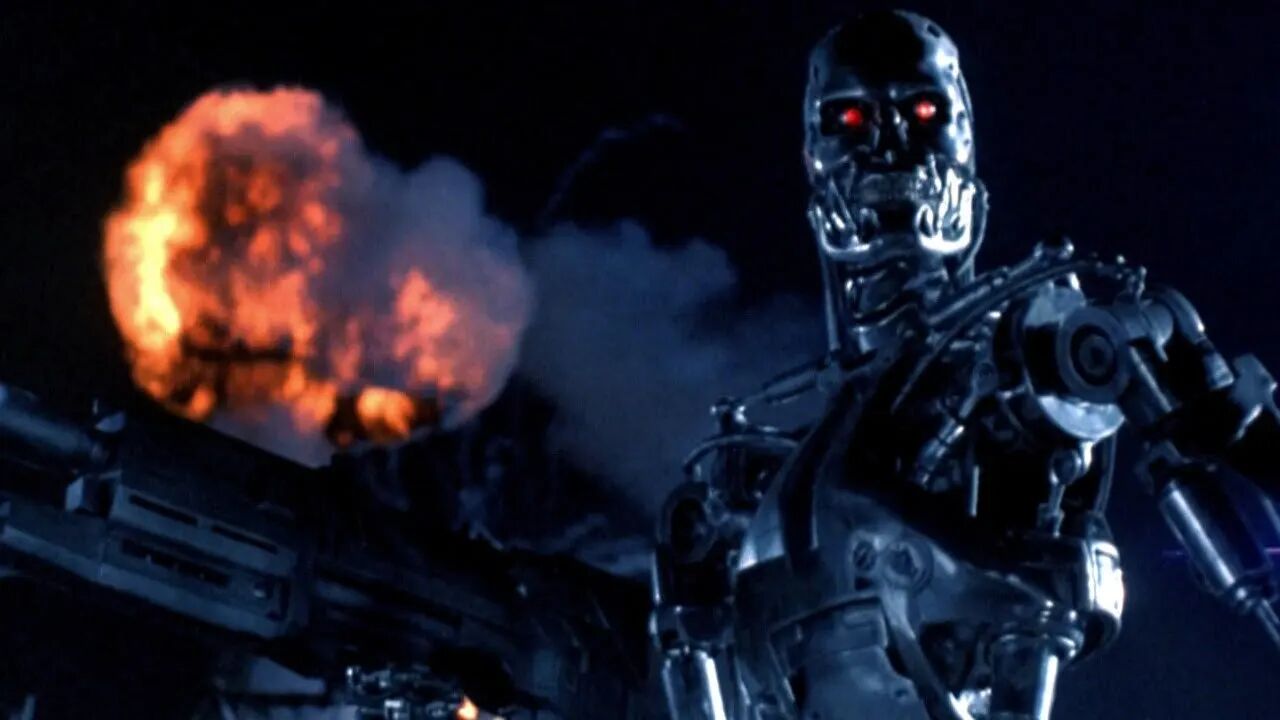

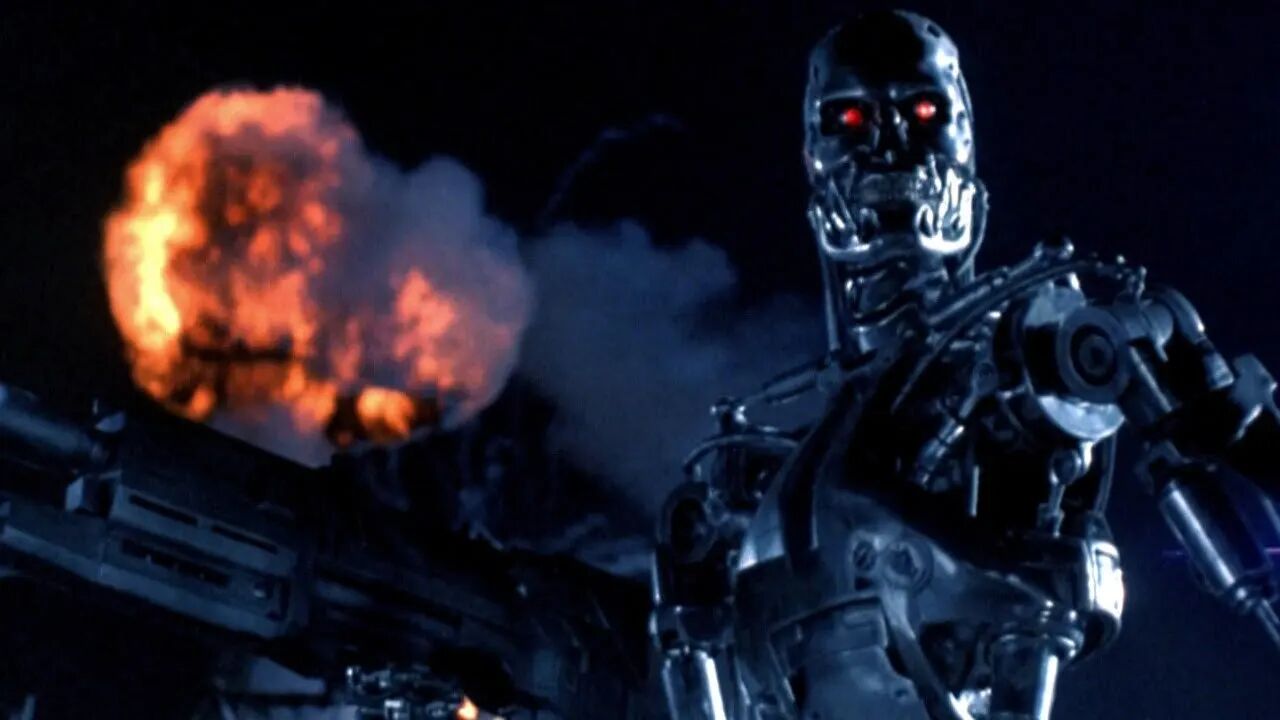

Hardware and robotics engineering isn’t abstract coding or parameter tuning. It’s about giving AI hands, feet, and eyes. When OpenAI’s collaboration with the DoD expands from “model usage” toward future “embodied AI military applications,” the nature of Kalinowski’s work fundamentally shifts.

Researchers in autonomous weapons have long warned of this day.

Current U.S. DoD policy does not require human approval before autonomous weapons employ lethal force. In other words, OpenAI’s signed contract technically does not prevent its models from becoming part of a system where “GPT decides to kill someone.”

This is not alarmist rhetoric. Jessica Tillipman, a lecturer in government procurement law at George Washington University, analyzed OpenAI’s revised contract and explicitly noted its language “does not grant OpenAI the same freedom as Anthropic to prohibit lawful government use.” Instead, it merely states that the Pentagon may not use OpenAI technology in violation of “existing laws and policies”; existing law, however, contains massive gaps in regulating autonomous weapons.

Governance experts at Oxford University echoed this assessment, concluding OpenAI’s agreement “is unlikely to close” the structural governance gaps left by AI-driven domestic surveillance and autonomous weapons systems.

Kalinowski’s departure is her personal response to that judgment.

02 What’s Happening Inside OpenAI

Kalinowski is neither the first nor likely the last to leave.

Data indicates that the attrition rate among OpenAI’s Ethics and AI Safety teams has reached 37%, with most citing “misalignment with company values” or “unwillingness to accept AI’s military use” as their reason for departing. Research scientist Aidan McLaughlin wrote internally: “I personally don’t believe this deal is worth it.”

Notably, this wave of departures coincides precisely with OpenAI’s rapid commercial expansion. Around the time of the DoD contract controversy, the company announced an expansion of its existing $38 billion agreement with AWS by an additional $100 billion over eight years—and revised its publicly disclosed spending targets, forecasting total revenue exceeding $280 billion by 2030.

Commercial acceleration versus sustained safety-team attrition: this widening gap is the most critical axis for understanding OpenAI’s current predicament.

A company’s values are ultimately revealed by whom it retains—and whom it fails to retain. When those most concerned with “how this technology will be used” begin leaving in succession, the direction in which the remaining organizational structure will drift is not hard to infer.

Anthropic chose a different path in this contest—rejecting the contract, enduring the DoD’s wrath, yet winning widespread user trust. During that period, Claude’s downloads rose against the tide, proving that “principled refusal” need not be a losing commercial strategy.

But Anthropic paid a price too—it was effectively excluded from government contracts, at least for now.

That is the true dilemma: no option is perfect.

Refusal risks losing influence—or even being shut out of rulemaking altogether. Acceptance means endorsing, with your own technology, actions you cannot fully control.

Kalinowski chose a third path—leaving.

It was the most honest thing she could do.

03 Silicon Valley’s Soul Battle Has Just Begun

Zooming out, the significance of this incident extends far beyond one person’s resignation.

The integration of AI and military applications is a choice the entire industry must eventually confront. The Pentagon possesses budget, demand, and technical integration capability—it will not stop extending olive branches to AI companies. And AI firms—whether AGI-focused OpenAI, safety-oriented Anthropic, or others—will inevitably have to answer this question.

Altman’s strategy attempts to reconcile commercial reality with contractual guardrails. Yet, as numerous legal and governance experts have stressed, such wording functions more as PR-level protection than as a hard technical constraint.

A deeper issue arises when AI models are deployed on classified networks and begin participating in military decision-making: the outside world simply lacks the ability to verify whether those “guarantees” are actually enforced.

The absence of transparency is itself the greatest risk.

Kalinowski spent less than a year and a half at OpenAI—but chose to leave at this precise moment. She issued no lengthy public statement, named no individuals in criticism, and simply drew her boundary through action.

In some sense, that speaks louder than any policy paper.

AI hardware and robotics engineering was once among Silicon Valley’s most exciting frontiers. When Kalinowski departed, she took more than just her résumé—she left behind a question for everyone still working in this field:

To what extent are you willing to take responsibility for what you’ve built?
