DeepSeek's computing power bottleneck: could university AI research hit a wall?


Huawei collaborates with 15 universities to deliver the optimal solution.

Article source: Xinzhiyuan

Image source: Generated by Wujie AI

Can a PhD student in machine learning at a top-five U.S. university really be working in a lab that does not have a single GPU capable of delivering substantial computing power?

In mid-2024, a Reddit post to this effect sparked widespread discussion in the community:

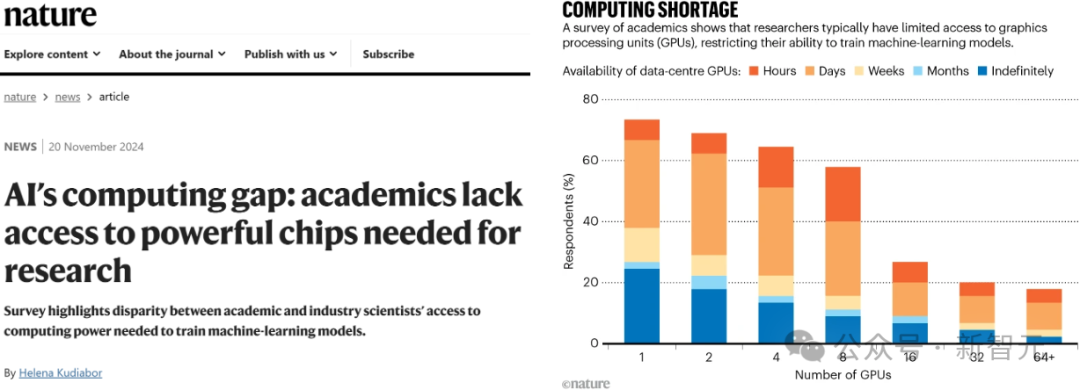

By year-end, a report from Nature further exposed the severe challenges academia faces in accessing GPUs—researchers actually need to queue and apply for time slots on university GPU clusters.

Similarly, within Chinese universities, severe GPU shortages are also common. There have even been reports of universities requiring students to bring their own computing resources to attend classes—an absurd situation.

Clearly, the bottleneck of "computing power" has made AI itself an extremely high-barrier subject.

AI talent shortage, coupled with insufficient computing power

Meanwhile, the rapid development of frontier technologies such as large models and embodied intelligence is triggering a global talent shortage.

According to calculations by a professor at Oxford University, in the U.S., the proportion of job postings requiring AI skills has increased fivefold.

Globally, Tech-AI job openings have grown ninefold, while Broad-AI job openings have surged 11.3 times.

During this period, growth in Asia has been particularly significant.

Although universities worldwide are attempting to help students master critical AI capabilities, as previously mentioned, computing power has now become a "luxury."

To bridge this gap, collaboration between enterprises and universities has become a key approach.

Kunpeng Ascend Scientific Research and Innovation Incubation Centers launch academic research initiatives

Fortunately, Huawei has already begun building such a collaborative innovation system within China's universities.

Currently, Huawei has signed cooperation agreements with five top-tier universities—Peking University, Tsinghua University, Shanghai Jiao Tong University, Zhejiang University, and the University of Science and Technology of China—for the "Kunpeng Ascend Scientific Research and Innovation Excellence Center."

In addition, Huawei is simultaneously advancing collaborations with ten other universities—Fudan University, Harbin Institute of Technology, Huazhong University of Science and Technology, Xi’an Jiaotong University, Nanjing University, Beihang University, Beijing Institute of Technology, University of Electronic Science and Technology of China, Southeast University, and Beijing University of Posts and Telecommunications—on the "Kunpeng Ascend Scientific Research and Innovation Incubation Center."

The establishment of Excellence and Incubation Centers exemplifies industry-education integration:

- Introducing the Ascend ecosystem to address universities' computing power shortages and promote more research outcomes;
- Reforming curriculum systems and driving education through research projects, industrial topics, and competition-based learning, to cultivate top-tier talent for the computing industry;
- Tackling challenges in system architecture, computing acceleration, algorithm capabilities, and system-level innovation, aiming for world-class breakthroughs;
- Building numerous "AI+X" interdisciplinary programs to lead the development of the intelligent ecosystem.

Building fully independent domestic computing power for AI research

Today, the significance of AI for Science is self-evident.

According to a recent survey by Google DeepMind, one out of every three postdoctoral researchers uses large language models to assist with literature reviews, programming, and paper writing.

Both the 2024 Nobel Prize in Physics and the 2024 Nobel Prize in Chemistry went to researchers working on AI-related methods.

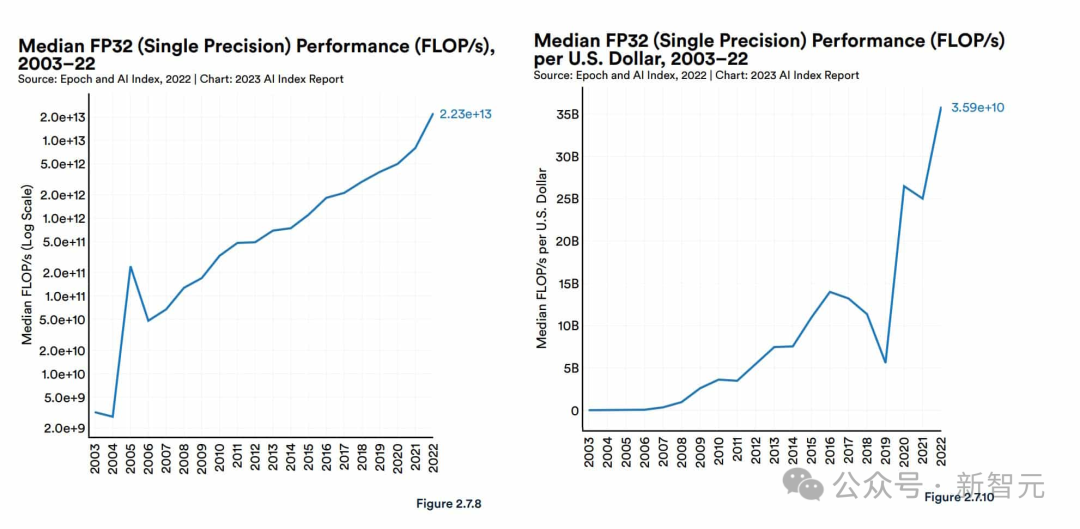

In AI-empowered scientific research, GPUs have become precious "gold" thanks to their performance in high-performance computing and their capabilities in LLM training and inference, and they are fiercely sought after by companies like Microsoft, xAI, and OpenAI.

However, U.S. restrictions on GPU exports have made progress in AI and scientific research extremely difficult for China.

To overcome this gap, we must build and grow an independent and complete technological ecosystem.

At the computing level, Huawei’s Ascend series AI processors have taken on the crucial role of reshaping China’s competitiveness.

Beyond raw computing power, we also need a self-developed computing framework to fully leverage the advantages of NPUs/AI processors.

As is well known, the CUDA architecture, designed specifically for NVIDIA GPUs, is commonly used in AI and data science fields.

The only true domestic alternative capable of competing with and replacing CUDA is CANN.

As Huawei’s heterogeneous computing architecture designed for AI scenarios, CANN supports mainstream AI frameworks such as PyTorch, TensorFlow, and MindSpore at the upper layer, while enabling Ascend AI processors at the lower layer—it is the key platform for enhancing Ascend AI processor efficiency.

Therefore, CANN inherently possesses many technical advantages, most notably deeper software-hardware co-optimization for AI computing and a more open software stack:

- First, it supports multiple AI frameworks, including Huawei's own MindSpore and third-party frameworks like PyTorch and TensorFlow;
- Second, it provides multi-level programming interfaces for diverse application scenarios, allowing users to quickly build AI applications and services based on the Ascend platform;
- Moreover, it offers model migration tools that make it easy for developers to rapidly port projects to the Ascend platform.

Currently, CANN has preliminarily established its own ecosystem. Technically, CANN encompasses a wide range of applications, tools, and libraries, forming a comprehensive technical ecosystem that delivers a one-stop development experience. Meanwhile, the developer community built upon the Ascend technology foundation continues to expand, laying fertile ground for future technological applications and innovation.

Above the heterogeneous computing architecture CANN, we also need deep learning frameworks for building AI models.

Almost all AI developers rely on deep learning frameworks, and nearly all DL algorithms and applications are implemented through them.

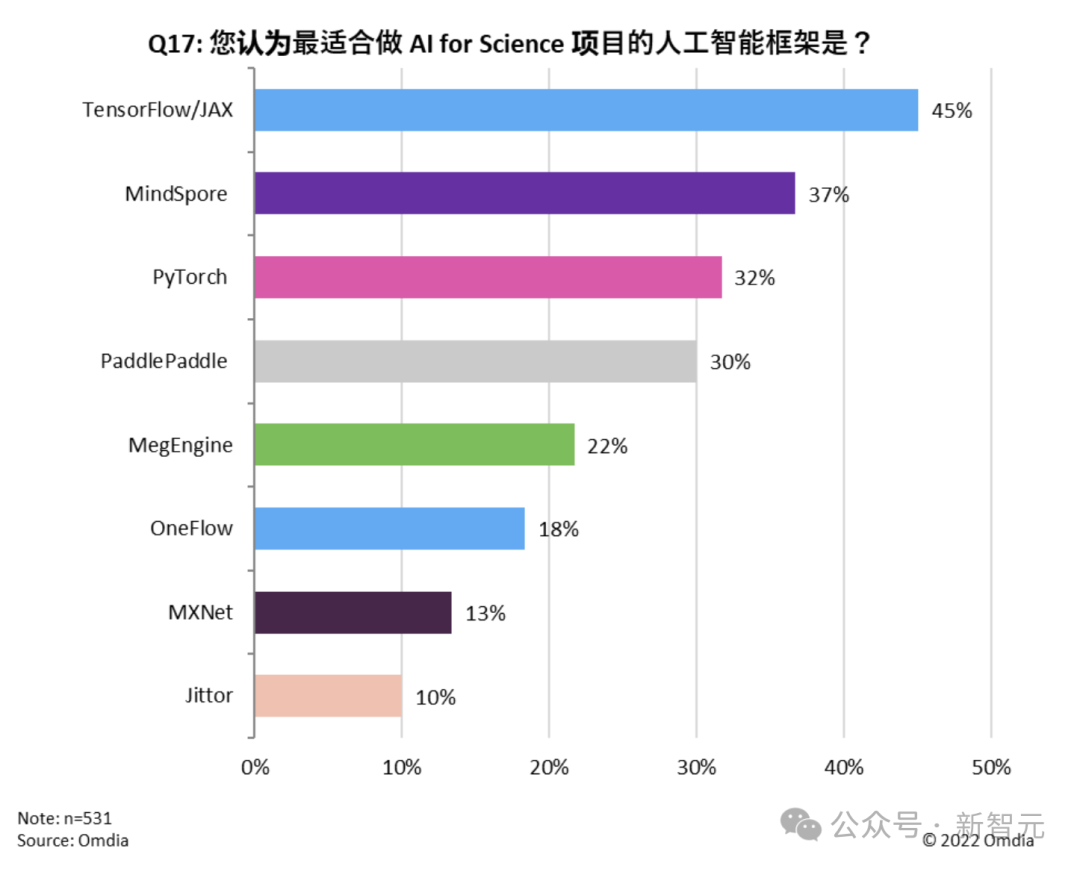

Nowadays, popular frameworks like Google’s TensorFlow and Meta’s PyTorch dominate the market and have formed massive ecosystems.

In the era of large-model training, deep learning frameworks must enable effective training across thousands of computers.

The full-scenario deep learning framework—Huawei Ascend MindSpore, officially open-sourced in March 2020—has filled the domestic gap in this field, achieving true independence and control.

MindSpore features full-scenario deployment (cloud, edge, device), native support for large-model training, and capabilities in AI+scientific computing. It establishes a simplified, end-to-end native development environment that accelerates domestic research innovation and industrial applications.

Notably, as the "perfect partner" for Ascend AI processors, MindSpore supports full-scenario deployment across "device, edge, cloud," with a unified architecture in which a model is trained once and deployed in many places.

From large-scale Earth system simulations and autonomous driving to small-scale protein structure prediction, all can be realized using MindSpore.

For open-source deep learning frameworks, only broad developer ecosystems can drive continuous improvement and unlock greater value.

A 2023 report by research firm Omdia titled "China Artificial Intelligence Framework Market Research Report" shows that MindSpore has entered the top tier of AI framework adoption, ranking just behind TensorFlow.

Furthermore, inference applications across countless industries are key to unlocking AI’s value. During GenAI’s accelerated development, both universities and enterprises urgently require faster inference speeds.

For example, TensorRT, a high-performance optimization compiler, is a powerful tool for improving large model inference performance. By leveraging quantization and sparsity, it reduces model complexity and efficiently optimizes inference speed. However, the problem is that it only supports NVIDIA GPUs.
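To make the idea of quantization concrete, here is a minimal Python sketch of symmetric int8 quantization. It is an illustration under simplifying assumptions (one scale for the whole list, plain Python lists instead of tensors) and is not how TensorRT or MindIE implement it; the function names `quantize_int8` and `dequantize` are made up for this example.

```python
def quantize_int8(values):
    """Symmetric quantization: map floats to integers in [-127, 127].

    Assumption for this sketch: a single scale is shared by all values.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range used here.
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.02, -1.27, 0.64, 0.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
```

Each weight now fits in one byte instead of the four bytes of a 32-bit float, at the cost of a rounding error of at most half the scale per value.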

With a computing architecture and a deep learning framework in place, there is now also a matching inference engine: Huawei Ascend MindIE.

MindIE is a full-scenario AI inference acceleration engine that integrates state-of-the-art inference acceleration technologies and inherits characteristics from the open-source PyTorch.

Designed for flexibility and practicality, MindIE seamlessly connects with multiple mainstream AI frameworks and supports various types of Ascend AI processors, providing users with multi-level programming interfaces.

Through full-stack joint optimization and layered release of AI capabilities, MindIE unleashes the ultimate computing power of Ascend hardware, offering users efficient and fast deep learning inference solutions. It solves challenges related to high technical difficulty and complex development steps in model inference and application development, improves model throughput, shortens time-to-market, enables diverse AI model applications, and meets diversified AI business needs.

It is clear that self-innovated technologies such as CANN, MindSpore, and MindIE not only fill the gaps in domestic computing power but also achieve leapfrog breakthroughs in model training, framework usability, and inference performance—directly rivaling advanced foreign technology stacks.

Building world-class incubation centers

Besides technological advantages, using Ascend computing power aligns better with national needs over the coming decades.

Only domestically developed computing power can free us from unpredictable external environments and ensure stability in scientific research foundations.

Now that the platform is ready, how do we help university faculty and students learn to use it?

Starting on September 6 last year, Huawei held the first Ascend AI Special Training Camp in turn at four major universities: Peking University, Shanghai Jiao Tong University, Zhejiang University, and the University of Science and Technology of China. Among the hundreds of participating students, 90% were master's or doctoral students. The curriculum covered multiple areas of the Kunpeng and Ascend stack, including CANN, MindSpore, MindIE, MindSpeed, HPC, and Kunpeng development tools.

At the training camp, students not only gain in-depth understanding of core technologies but also get hands-on practice. This setup perfectly matches students’ learning patterns, progressing from basic to advanced concepts step by step.

For example, at the SJTU session, Day One focused on migration, teaching students about Ascend AI hardware-software solutions, practical cases of native PyTorch model development on Ascend, and features and migration cases of the MindIE inference solution.

Day Two focused on optimization, covering the Ascend heterogeneous computing architecture CANN, Ascend C operator development, and hands-on optimization of long-sequence inference in large models.

The design of migration and optimization courses demonstrates long-term strategic thinking.

Many current university practical courses are based on CUDA/x86 setups. Under sanctions, however, computing power shortages are becoming increasingly acute. With migration skills, students can move projects onto the Ascend platform and keep their academic work running without interruption.

After mastering foundational knowledge, students proceed to hands-on practice. Huawei experts guide them step-by-step through processes like large model quantization, inference, and Codelabs coding implementation, helping them learn the Ascend tech stack and experience the full workflow of large model inference.

Through hands-on experience, students gain deeper familiarity with the Ascend ecosystem, laying a solid foundation for future careers in technology.

Students from SJTU engaging in hands-on exercises during the first special training camp

Besides courses, Huawei will also host operator challenge competitions for university developers to identify elite talent in operator development.

The competition encourages developers to conduct deep innovation and practice based on Ascend computing resources and CANN’s core capabilities, accelerating AI-industry integration and boosting developer skill levels.

In addition, the incubation centers place strong emphasis on academic achievements.

Students conducting academic research based on key Kunpeng or Ascend computing technologies and tools can apply for postgraduate scholarships. If they publish papers in top international conferences or leading domestic journals, they will receive additional rewards.

Meanwhile, Huawei collaborates with Kunpeng & Ascend ecosystem partners to launch the Elite Talent Program.

This program helps students transition from theory to practice by immersing them in real enterprise work environments, while connecting outstanding students with companies early on.

Currently, the Elite Talent Program has partnered with over 200 companies across 15 cities, offering more than 2,000 technical positions, helping over 10,000 university students secure jobs.

In summary, through these educational practices and incentive programs, student engagement increases significantly. These initiatives enhance their academic experience, help produce research outcomes, enrich their profiles, and give them an edge in the job market—making them more attractive to top-tier companies both domestically and internationally.

So, after mastering the latest technologies and their applications, how can truly groundbreaking research outcomes be cultivated in today’s fast-evolving AI landscape?

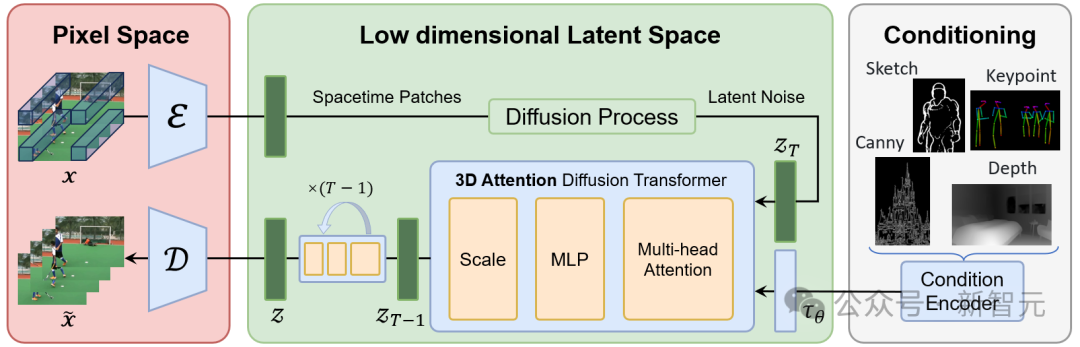

Since Sora ignited the AI-generated video wave in 2024, text-to-video large models have continued to emerge. Open-Sora Plan, the open-source text-to-video project from Peking University and Rabbitpre, caused a stir in the industry.

In fact, even before Sora was released, the team had already started planning an open-source version. However, due to unmet requirements for computing power and data, the project was temporarily shelved. Fortunately, the partnership between Peking University and Huawei to establish the Kunpeng Ascend Scientific Research and Innovation Excellence Center quickly provided the team with much-needed computing power.

The team originally used NVIDIA A100s. After migrating to the Ascend ecosystem, they discovered many pleasant surprises:

CANN enabled highly efficient parallel computing, significantly accelerating large dataset processing; the Ascend C interface library simplified AI application development; and the operator acceleration library further optimized algorithm performance.

More importantly, the open Ascend ecosystem allowed quick adaptation of large models and applications.

Thus, despite starting from zero with the Ascend ecosystem, team members were able to get up to speed very quickly.

During subsequent training, the team kept discovering new benefits—for instance, when developing with torch_npu, the entire codebase could seamlessly train and infer on Ascend NPUs.
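As a rough illustration of what this looks like in code, the sketch below picks a device name at runtime. It assumes that the `torch_npu` package is installed on Ascend machines and that importing it registers an `npu` device with PyTorch; the helper name `pick_device_name` is hypothetical and is not taken from the Open-Sora Plan codebase. On a machine without PyTorch or without an accelerator, it falls back to `cpu`.

```python
import importlib.util


def pick_device_name() -> str:
    """Return "npu", "cuda", or "cpu" depending on what this machine offers."""
    if importlib.util.find_spec("torch") is None:
        return "cpu"  # PyTorch is not installed, so there is nothing to accelerate
    import torch

    if importlib.util.find_spec("torch_npu") is not None:
        try:
            # Assumption: importing torch_npu adds the torch.npu namespace.
            import torch_npu  # noqa: F401

            if torch.npu.is_available():
                return "npu"
        except (ImportError, AttributeError):
            pass  # Ascend plugin present but unusable; try the other backends
    if torch.cuda.is_available():
        return "cuda"
    return "cpu"


device_name = pick_device_name()
```

A training script can then build its models and tensors on `torch.device(device_name)` and leave the rest of the code unchanged, which is the kind of portability the team describes.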

When model partitioning was needed, the Ascend MindSpeed distributed acceleration suite offered rich distributed algorithms and parallel strategies for large models.

Additionally, during large-scale training, the stability of MindSpeed combined with Ascend hardware far exceeded other computing platforms, capable of running uninterrupted for a full week.

As a result, just one month later, Open-Sora Plan was officially launched, earning widespread recognition in the industry.

The clip of "Black Myth: Wukong" generated by Open-Sora Plan rivals cinematic quality, stunning countless netizens

Additionally, targeting Ascend computing power, Southeast University developed a multimodal transportation large model called MT-GPT.

Previously, deploying transportation large models was extremely difficult due to data silos caused by different government departments collecting data, inconsistent data formats and standards, and heterogeneous, multi-source traffic data.

To solve these issues, the team conceived a conceptual framework named MT-GPT (Multimodal Transportation Generative Pre-trained Transformer), providing data-driven solutions for multidimensional and multigranular decision-making tasks in multimodal transportation systems.

However, developing and training large models inevitably demands extremely robust computing infrastructure.

To address this, the team leveraged Ascend AI capabilities to accelerate the development, training, fine-tuning, and deployment of the transportation large model.

During development, the Transformer large model development kit enhanced multimodal generative task comprehension accuracy through multi-source heterogeneous knowledge corpora and multimodal feature encoding.

During training, the Ascend MindSpeed distributed training acceleration suite provided multidimensional, multimodal acceleration algorithms for the transportation large model.

During fine-tuning, the Ascend MindStudio full-process toolchain integrated fine-tuning of transportation-specific domain knowledge.

During deployment, the Ascend MindIE inference engine supported one-stop inference for the transportation large model, along with cross-city migration analysis, development, debugging, and optimization.

In summary, Peking University's Open-Sora Plan is a project that reproduces Sora and was migrated to Ascend; as an open-source initiative, it better empowers global developers to create applications in diverse scenarios.

Southeast University's multimodal transportation large model MT-GPT demonstrates Ascend's practical ability to turn research into real-world impact, directly benefiting the urban transportation industry.

Thus, a complete industry-academia-research closed loop is effectively formed.

These fruitful outcomes further prove this point: Excellence Centers and Incubation Centers not only provide fertile ground for academic research and scientific innovation in universities but also cultivate a large number of top AI talents, ultimately spawning world-leading research achievements.

For example, during Peking University’s Open-Sora Plan development, Professor Yuan Li organized daily brainstorming sessions with students and the Huawei Ascend team on code and algorithm development.

Through this trial-and-error process, many students from Peking University personally participated in high-quality scientific practice, demonstrating exceptional research creativity.

This team, with an average age of 23, has become a core force driving domestic AI video applications.

Throughout this process, the group of young learners mastering the Kunpeng Ascend ecosystem continues to grow.

Therefore, universities conducting research based on domestic computing power and platforms not only benefit from top-tier intellectual input but also expand Huawei’s technology ecosystem and applications in the process.

What kind of innovation system should China build?

It is clear that this new paradigm of university-enterprise collaboration has officially launched with Huawei taking the lead.

After establishing its computing product line in 2019, Huawei quickly signed an Intelligent Base project with the Ministry of Education in 2020, initiating educational cooperation with 72 leading universities nationwide.

At that time, some technical knowledge about Kunpeng/Ascend had already been integrated into required undergraduate courses at certain universities.

However, investment in universities is a medium- to long-term cultivation process. Only by prioritizing exposure to relevant technologies among teachers and students can greater value be realized years later.

Therefore, Huawei plans to invest 1 billion yuan annually to develop the native Kunpeng and Ascend ecosystems and nurture talent. This strategy provides university talent and developers with richer resources and broader development opportunities. Huawei has already launched a program donating 100,000 Kunpeng development boards and Ascend inference development boards, encouraging their use in teaching experiments, competition practice, and scientific innovation.

Under this program, teachers and students can closely interact with and test the development boards. Whether for teaching or research experiments, university members can experiment with innovative ideas on these boards, sparking new inspiration.

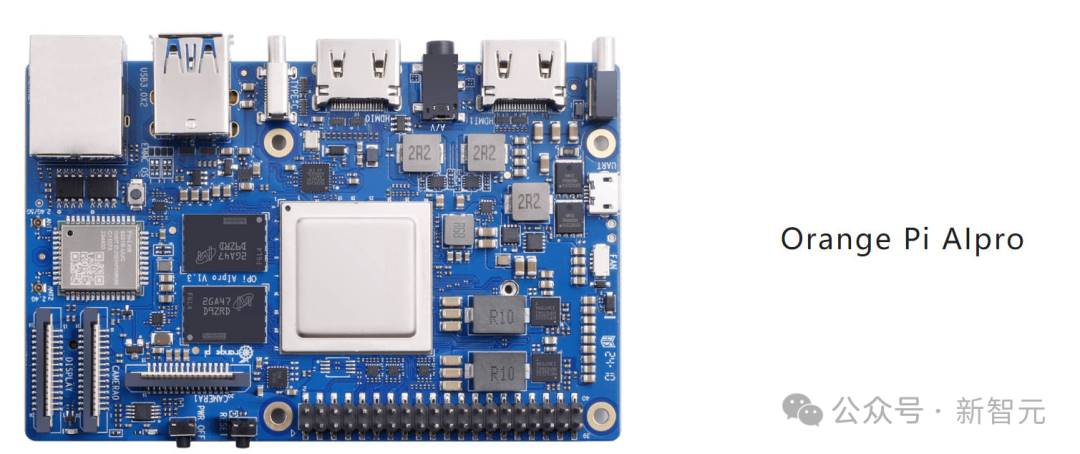

The OrangePi AIpro development board, jointly launched by Orange Pi and Huawei Ascend, meets most needs for AI algorithm prototype verification and inference application development. It is widely applicable in AI edge computing, deep visual learning, drones, cloud computing, and other fields, demonstrating strong capabilities and broad adaptability

On the other hand, China’s current unique situation—external technological blockades—means we have little time left. We must establish an independent and controllable technology stack.

Native development has become inevitable. Only Made-in-China solutions best align with China’s long-term national development trends.

As localization becomes an unstoppable trend, domestic technology stacks like Kunpeng/Ascend will permeate every aspect of IT infrastructure.

The launch of Excellence Centers and Incubation Centers is also increasing industry confidence.

It is foreseeable that after several years of incubation, research talents proficient in domestic technology foundations will continuously promote the Kunpeng/Ascend technology path, generating sufficient world-leading research outcomes.
