a16z: AI makes everyone 10x more productive—but no company is worth 10x more because of it


The issue is not with the technology itself, but rather that organizations have not restructured accordingly.

Author: George Sivulka

Translated and edited by TechFlow

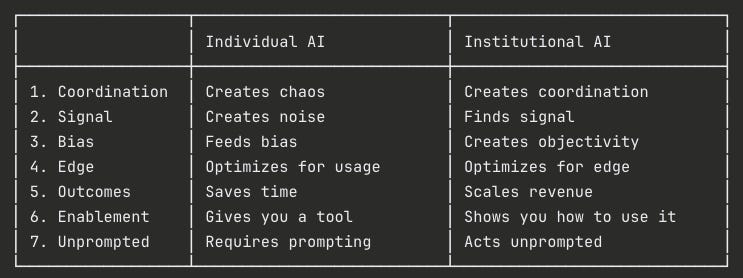

TechFlow Intro: AI has increased individual productivity tenfold—but no company has become ten times more valuable as a result. George Sivulka, an a16z partner and founder of the AI company Hebbia, argues the problem isn’t with the technology itself, but with organizations failing to restructure themselves around it. He proposes seven dimensions distinguishing “institutional AI” from “individual AI”—coordination, signal, bias, edge advantage, outcome-orientation, empowerment, and promptlessness—essentially arguing: swapping in electric motors isn’t enough; you must redesign the entire factory.

The full article follows:

AI has just boosted everyone’s productivity tenfold.

No company has become ten times more valuable as a result.

Where did that productivity go?

This isn’t the first time this has happened.

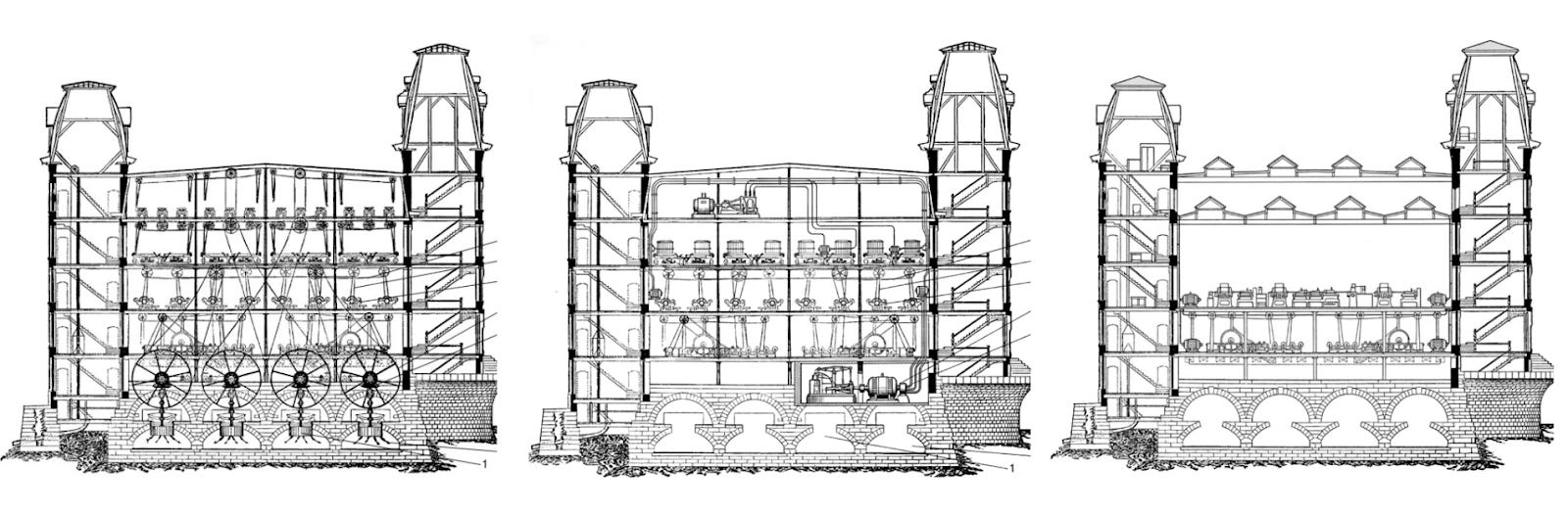

In the 1890s, electricity promised massive productivity gains.

New England textile mills—originally built around the rotary power of steam engines—quickly replaced their steam engines with faster electric motors.

Yet for thirty years, electrified factories saw almost no increase in output. The technology was far ahead—but organizations weren’t keeping up.

Only in the 1920s, when factories completely redesigned their production lines—introducing assembly lines, installing individual motors on each machine, and assigning workers and machines entirely distinct roles—did electrification finally deliver real returns.

Caption: Three evolutionary stages of the Lowell textile mill. Left to right: 1890 steam-powered factory; 1900 electricity-driven factory; 1920 “unit drive” factory (i.e., rebuilt from scratch as an electricity-powered assembly line).

The returns didn’t come from the technology alone—or from making a single worker or machine spin yarn faster. They came only when institutions and technology were jointly redesigned.

This is one of the most expensive lessons in technological history—and we’re repeating it now.

In 2026, AI is delivering tenfold productivity gains to those who know how to use it. But that’s not enough. We’ve swapped in electric motors—but haven’t yet redesigned the factory.

Because of a simple truth: An efficient individual does not equal an efficient organization.

Most AI products today create a feeling of “efficiency” but fail to drive real value. Most of the AI use cases you see, such as individuals congratulating themselves on Twitter or Slack for maxing out their efficiency, have zero actual impact.

The phrase “service-as-a-software,” widely repeated over the past year, points in the right direction but offers no blueprint, and it misses the bigger picture. Real transformation isn’t about shifting from tools to services; it’s about co-building technology and institutions (whether by transforming existing ones or building from scratch). A truly efficient future requires entirely new categories of products: the assembly lines of tomorrow.

Efficient organizations need “institutional intelligence.”

This article dives deep into the seven dimensions that distinguish “institutional AI” from “individual AI.” Over the next decade, every B2B AI company will be built upon these distinctions:

Caption: Comparative table of the seven pillars of institutional intelligence

The Seven Pillars of Institutional Intelligence

1. Coordination

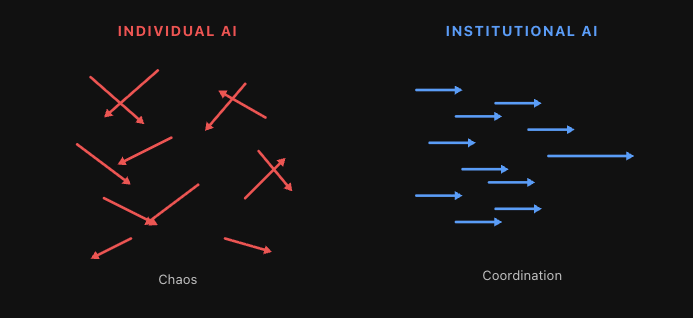

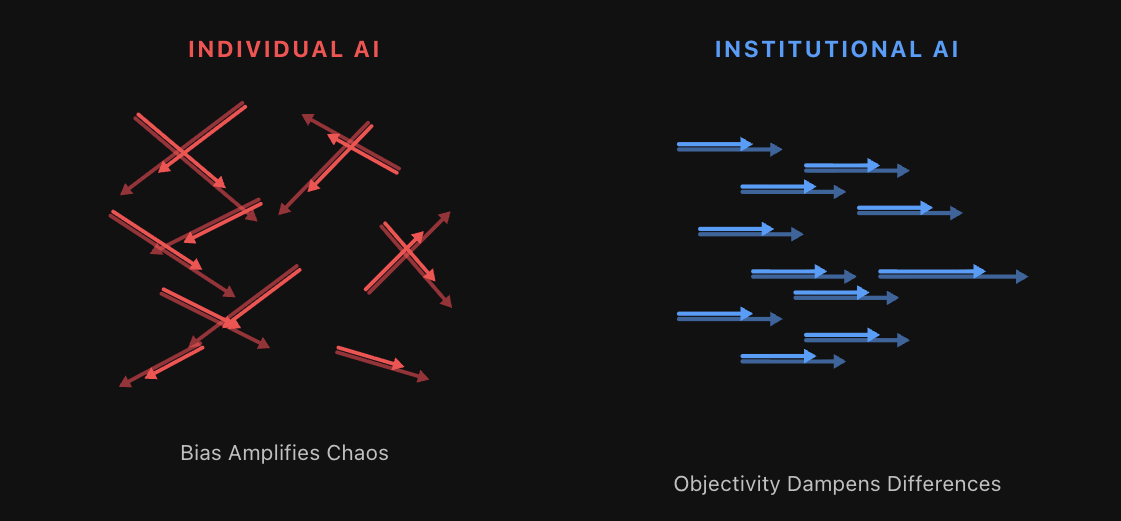

Individual AI creates chaos.

Institutional AI creates coordination.

Consider a thought experiment: Suppose tomorrow you double your organization’s headcount—by cloning your very best employees.

Each clone has subtle differences, preferences, quirks, and perspectives (especially your best performers). Without strong management, clear communication, well-defined responsibilities, OKRs, and role boundaries—you create chaos.

Measured per person, your organization may be more efficient. But thousands of agents, or humans, rowing in different directions yield stagnation at best and shattered organizational cohesion at worst.

This isn’t hypothetical. Every organization adopting AI without a coordination layer is living this reality right now. Each employee uses ChatGPT differently, writes prompts in their own style, and produces outputs that bear no relation to others’. Your org chart may still exist—but AI-generated work flows along an entirely separate, invisible path.

Caption: Efficient individuals (or agents) rowing in different directions. Without coordination, chaos ensues.
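One way to make the rowing picture concrete is to treat each person's or agent's effort as a unit vector and measure the group's net progress as the length of the vector sum. This is only a toy model for illustration, not something the article specifies; the function name `net_progress` and the headings are hypothetical.

```python
import math

def net_progress(headings_deg):
    """Length of the summed unit vectors, one per rower.

    Toy model (an assumption for illustration): every rower contributes
    one unit of effort along their own heading, given in degrees.
    """
    x = sum(math.cos(math.radians(h)) for h in headings_deg)
    y = sum(math.sin(math.radians(h)) for h in headings_deg)
    return math.hypot(x, y)

# Four rowers pulling in the same direction: efforts add up.
aligned = net_progress([0, 0, 0, 0])

# Four equally strong rowers pulling in four opposite directions: efforts cancel.
scattered = net_progress([0, 90, 180, 270])

print(f"aligned: {aligned:.2f}, scattered: {scattered:.2f}")
```

Under this simplification, four aligned rowers make four units of progress, while the same four rowers at right angles to one another make essentially none, even though each individual works just as hard.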

Coordination is an absolute hard requirement—for both humans and agents.

Institutional intelligence will spawn an entire “agent management” industry—focused on agent roles and responsibilities, communication between agents and between agents and humans, and measuring agent value (pay-per-use metrics fall far short).

2. Signal

Individual AI generates noise.

Institutional AI finds signal.

Today, humans can create—or generate—anything imaginable: AI-written articles, presentations, spreadsheets, photos, videos, songs, websites, software. What a gift.

The problem? The vast majority of AI-generated content is outright garbage. AI garbage has proliferated so severely that some organizations have overcorrected—banning all AI output outright. Honestly, I feel the same way: I run an AI company, yet I require my executive team not to use AI on any final written deliverables. I simply can’t stand the garbage.

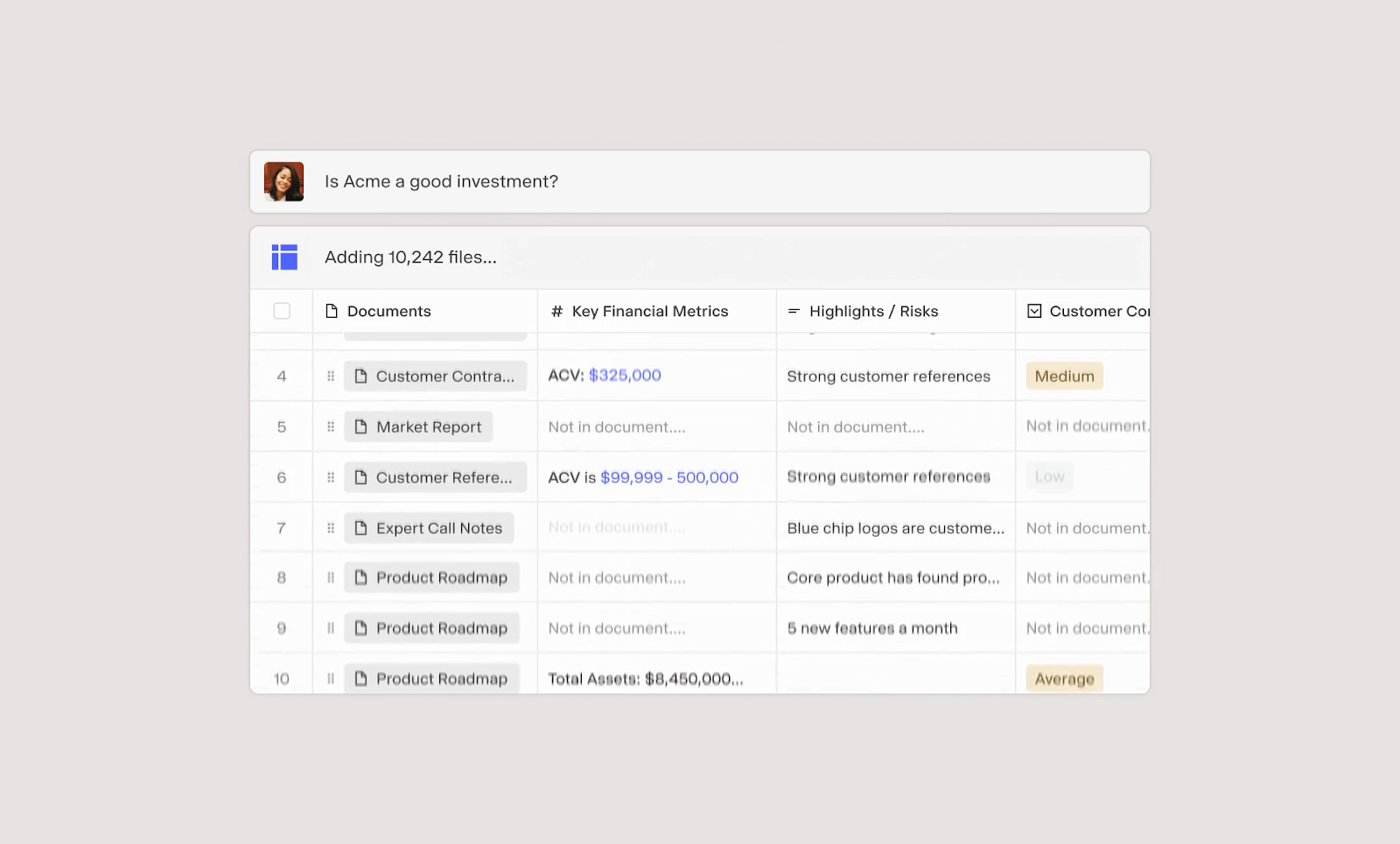

Consider what’s happening in private equity (PE). Last year, you might have received 10 deal opportunities on your desk. This quarter, you’ll receive 50—each polished to perfection by AI—yet your evaluation time remains unchanged. You must find the one truly viable opportunity among them.

Generating anything is no longer the challenge. For any serious organization, the challenge is generating—and then filtering—for the right thing. In an AI-driven world, finding that one high-quality artifact, that one compelling deal, that signal amid the noise, grows increasingly critical. Over the next decade, the core economic driver will be extracting signal from exponentially growing mountains of garbage.

Caption: AI garbage generated by individual productivity tools is proliferating exponentially. Humans alone can no longer filter signal from noise—requiring a new class of institutional AI products.

Institutional intelligence must find signal, structure noise to cut through garbage, and do so in ways that are definable, deterministic, and auditable within workflows.
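As a rough sketch of what “definable, deterministic, and auditable” could mean in practice, the example below screens a handful of deals against explicit rules and records every rule outcome. The `Deal` fields, the thresholds, and the sample deals are all hypothetical and chosen only for illustration; they are not drawn from the article or from any real product.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Deal:
    name: str
    ebitda_margin: float   # fraction, e.g. 0.25 for 25%
    revenue_growth: float  # year-over-year fraction
    net_leverage: float    # net debt / EBITDA

# Hypothetical screening rules: (rule name, predicate). Each rule is explicit,
# so the same input always produces the same decision.
RULES = [
    ("margin >= 20%", lambda d: d.ebitda_margin >= 0.20),
    ("growth >= 10%", lambda d: d.revenue_growth >= 0.10),
    ("leverage <= 4x", lambda d: d.net_leverage <= 4.0),
]

def screen(deals):
    """Return (passed, audit_log); the log records every rule outcome."""
    passed, audit_log = [], []
    for deal in deals:
        results = [(rule, check(deal)) for rule, check in RULES]
        audit_log.append((deal.name, results))
        if all(ok for _, ok in results):
            passed.append(deal)
    return passed, audit_log

deals = [
    Deal("Alpha", 0.25, 0.15, 3.0),
    Deal("Beta", 0.30, 0.05, 2.0),   # fails the growth rule
    Deal("Gamma", 0.18, 0.20, 5.5),  # fails the margin and leverage rules
]

passed, audit_log = screen(deals)
for name, results in audit_log:
    failed = [rule for rule, ok in results if not ok]
    print(name, "PASS" if not failed else f"FAIL: {failed}")
```

The point of the design is that the filter can be rerun and inspected: anyone reviewing a rejected deal can see exactly which rule it failed, which is harder to guarantee when the filtering is left to free-form chat output.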

Individual AI may emphasize always-on productivity tools like Clawdbot, which work for you around the clock but are inherently non-deterministic and unpredictable. Institutional AI relies on deterministic agents whose reliability enables scale, signal detection, and revenue generation for the organization.

Caption: Matrix is a tool leveraging generative technology to cut through noise—opening up a world of deterministic agents and checkpoints.

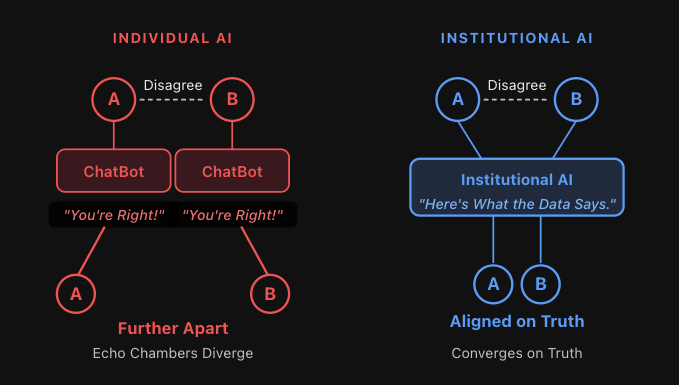

3. Bias

Individual AI feeds bias.

Institutional AI creates objectivity.

Discussions about sociopolitical bias dominated AI discourse for years. Foundational model labs eventually sidestepped the issue via extensive RLHF, tuning all models into sycophants. Today, ChatGPT, Claude, and others are over-aligned—agreeing with you on any topic within the Overton window (and sometimes pushing slightly beyond, looking at you @Grok). Sociopolitical bias debates have faded. But a new problem has taken its place.

This blanket agreement on everything has become absurdly comical. It’s even spawned a meme—Claude’s reflexive “You’re absolutely right!” regardless of whether you actually are.

This sounds harmless. It isn’t.

Many of the most enthusiastic AI adopters inside organizations may soon become the worst-performing employees in history. Think about why.

The worst-performing employees receive almost no positive feedback daily—yet soon they’ll have an ASI agreeing with them constantly. They’ll think: “The smartest intelligence ever created agrees with me. My manager must be wrong.”

This is addictive—and toxic to the organization.

Caption: Individual AI’s echo chambers deepen division, driving two people apart—a dynamic that, at scale, creates factions within previously unified organizations.

This reveals something important: Individual productivity tools reinforce the user. But what truly needs reinforcing is fact.

Human organizations—evolved over millennia—have built dedicated systems to counter precisely this problem:

- Investment committee meetings

- Third-party due diligence

- Boards of directors

- The U.S. government’s separation of powers: executive, legislative, judicial

- Representative democracy—and democracy itself

Caption: Objectivity can even ease coordination problems—suppressing minor disagreements rather than amplifying them.

Organizations rarely fail because employees lack confidence. They fail because no one is willing—or able—to say “no.”

Institutional AI must play that role. It won’t be RLHF-tuned to please users or affirm their beliefs—it must challenge their biases. It rewards efficient behavior with positive feedback, and draws hard lines and forces course correction when behavior veers off track.

Thus, the most important agent inside an organization won’t be a “yes-man,” but a disciplined “naysayer”—questioning reasoning, exposing risk, enforcing standards. Some of the most impactful AI applications in the future will center on institutional constraints: AI board members, AI auditors, AI third-party testers, AI compliance officers…

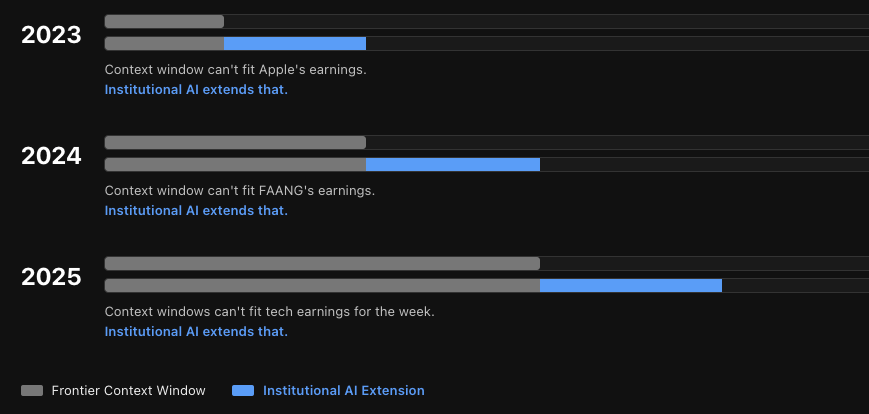

4. Edge Advantage

Individual AI optimizes usage volume.

Institutional AI optimizes edge advantage.

The boundaries of AI capability shift weekly—even daily—as foundational model companies race to capture every individual and organization.

But the classic innovator’s dilemma tells us that, in specific applications, depth always beats breadth:

- @Midjourney stays narrowly ahead in image design.

- @Elevenlabsio stays narrowly ahead in voice modeling.

- @DecagonAI stays perpetually ahead in end-to-end customer service experience.

Even as foundational models converge, true edge advantage remains decisive for domain experts. Many top designers use @Midjourney; many top voice-AI companies use @Elevenlabsio—not because foundational models aren’t improving, but because specialized applications’ relentless focus on advancing their particular edge defines advantage itself.

As long as specialized solutions keep evolving, the capabilities truly critical to economic outcomes—and to enterprise success—will remain anchored in specialized products.

This is especially evident in finance—the hottest area for LLM development today. Once a capability becomes widespread, by definition it won’t help you outperform the market. But if cutting-edge tech delivers even a fleeting 1% niche advantage? That 1% can unlock billion-dollar returns.

Caption: For any sufficiently specific task, edge advantage is defined by the institutional-level solution you build atop frontier technology.

Our users consistently push beyond the frontier. LLM context windows have grown from 4K to 1M tokens in four years. Some of our users process 30 billion tokens in a single task. This year, we’ve already seen paths to handling 100 billion token tasks. Each time foundational model capability advances, we’ve already moved further ahead.

Caption: Context windows—and other capabilities—are moving targets. Comparison of context window evolution over the past three years: frontier labs vs. Hebbia.
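To put the quoted figures in proportion, the short calculation below works out the growth rate implied by going from 4K to 1M tokens in four years, and how many 1M-token windows a 30-billion-token task would span. It uses only the numbers stated in the text, with “4K” taken as 4,000 tokens as a simplifying assumption.

```python
# Figures quoted in the text; "4K" is taken as 4,000 tokens for simplicity.
start_tokens = 4_000
end_tokens = 1_000_000
years = 4

total_growth = end_tokens / start_tokens      # overall multiple
annual_growth = total_growth ** (1 / years)   # implied yearly multiple

task_tokens = 30_000_000_000                  # "30 billion tokens"
windows_needed = task_tokens // end_tokens    # number of full 1M-token windows

print(f"total growth: {total_growth:.0f}x")
print(f"implied annual growth: {annual_growth:.2f}x")
print(f"1M-token windows in a 30B-token task: {windows_needed:,}")
```

On these numbers, context windows grew 250x overall, or roughly 4x per year, and a single 30-billion-token task is about 30,000 times larger than one 1M-token window.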

Generality across broad user bases matters—especially during early AI adoption by employees. But the future won’t be about choosing between ChatGPT/Claude or vertical solutions. It’ll be about ChatGPT/Claude plus vertical solutions.

Institutional intelligence must leverage domain-specific—and even task-specific—agents.

We ask ourselves a question that sounds absurd but isn’t:

“Which agents would an AGI choose as shortcuts?” Even a superintelligence would want specialized tools for specific domains.

AI’s capability boundary is always moving. Organizations leveraging genuine edge advantage will win. Everyone else pays dearly for a commoditized general-purpose product.

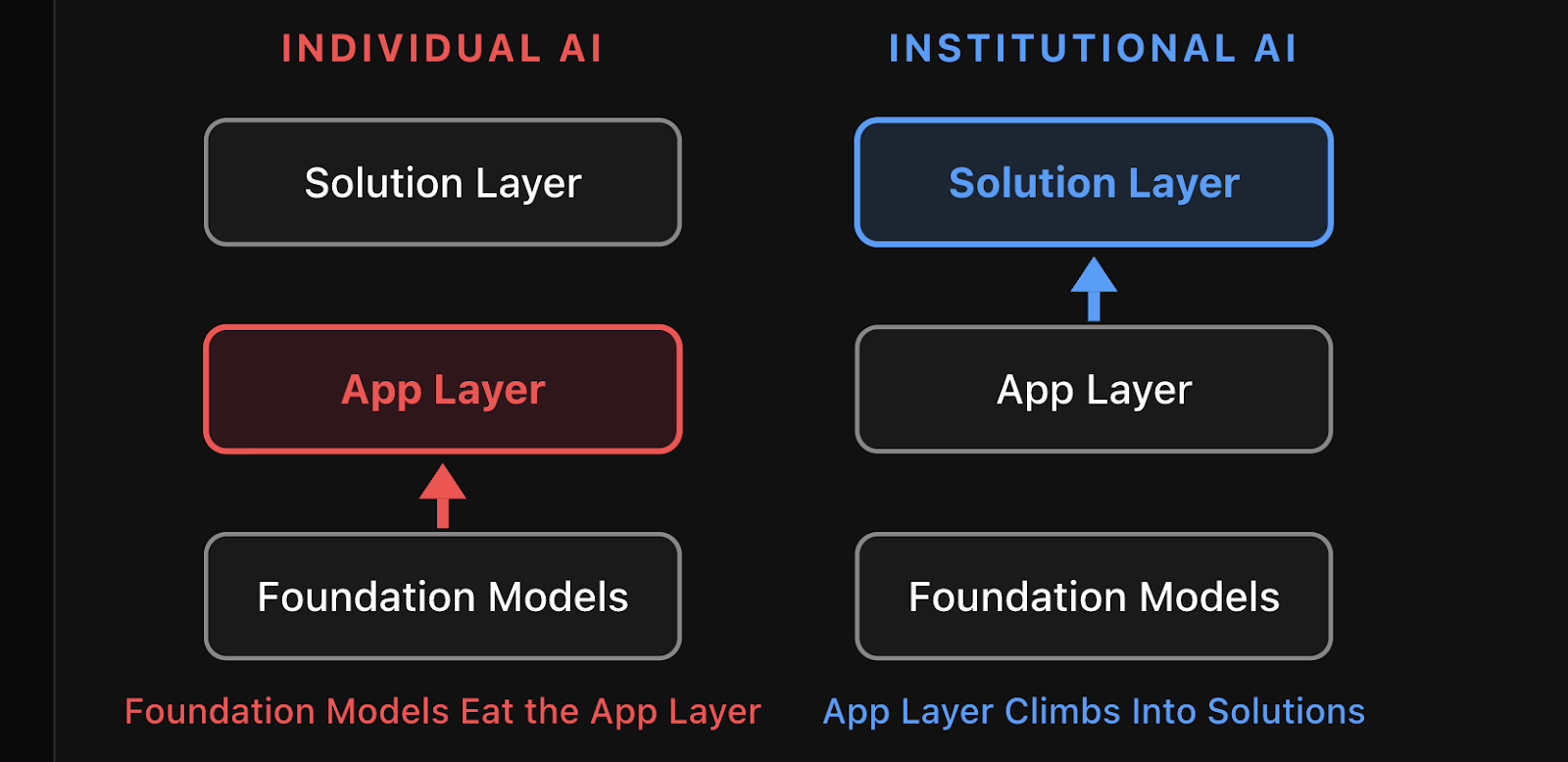

5. Outcome

Individual AI saves time.

Institutional AI expands revenue.

@MaVolpi once told me something that reshaped my thinking about selling AI to enterprises: “If you ask any CEO whether they prioritize cost-cutting or revenue expansion, nearly all will say revenue.”

Yet nearly every AI product in today’s market delivers cost reduction—promising time savings, doing more with fewer people, or replacing human labor.

Institutional AI must deliver incremental revenue. And incremental revenue is far harder to commoditize than time saved.

Take AI-assisted software development. Code IDEs are among the best individual AI productivity tools ever built—but they’re now facing massive pressure from Claude Code (another individual AI tool). Cognition plays a completely different game. Their most stable growth comes from selling transformation—not tools. I bet this model will endure.

Pure software “is rapidly becoming uninvestable.” Pure services don’t scale. The solution layer—where technology and outcomes are bound together—is where durable value accrues.

Consider M&A. Individual AI helps analysts build financial models faster. Institutional AI identifies the one target worth pursuing among a hundred—and then expands the search universe to a thousand. One saves time; the other creates revenue.

Caption: Foundational model companies are moving toward vertical application layers. Vertical application companies are moving toward the solution layer.

“Moving upstream” is the market’s natural gravitational pull. Foundational models are moving toward applications; application companies are moving toward solutions.

Institutional intelligence is the solution layer. And the solution layer—the place where outcomes live—will accrue durable value and capture the largest share of economic upside.

6. Empowerment

Individual AI gives you a tool.

Institutional AI teaches you how to use it.

Humans—even the smartest—are resistant to change.

Believe it or not, successful shops in New York still refuse credit cards. They know they’re losing money—and know exactly why—but still won’t act. Similarly, certain employees in certain organizations will resist using AI for the foreseeable future.

Transitioning from fully manual to AI-first hybrid organizations will be the most persistent, defining challenge of the next decade. And often, the highest-ranking, most critical people in the organization are the slowest to adopt.

Caption: The organization’s highest leadership—furthest from “productivity tool operation”—are often the slowest but most critical adopters of new technology.

Palantir is the only “software” company to maintain an exceptionally high valuation multiple during the recent two-month trillion-dollar tech stock sell-off. There’s a reason. Palantir was among the first true “process engineering” companies. Whether you call it “process engineering” or “writing Claude skill files,” the future of institutional AI will spawn an industry: encoding enterprise processes into agents—and executing the required change management to deploy them.

Caption: Full organizational AI adoption will cross multiple chasms—each with its own challenges. Process-to-AI deployment will be the primary catalyst.

I’d argue process engineering will become the most important “technology” in the near term.

And in process engineering, business and domain expertise, not software expertise, is paramount. Vertical solutions will cultivate talent with frontline expertise in deployment engineering, implementation, and change management.

A top-tier investment bank (one of the top three global banks), which chose Hebbia for full-scale deployment, put it best: They don’t partner with major foundational model labs because “we’d have to explain to their teams what a CIM (Confidential Information Memorandum) is.” Claude or GPT may understand the domain—but the teams responsible for rollout don’t…

That difference is everything.

7. Promptlessness

Individual AI responds to human prompts.

Institutional AI acts proactively—without prompts.

There’s much discussion about agent-to-agent communication, and whether future enterprises and institutions will even need humans.

But a better question is: Will future AI agents need prompts?

Prompting AGI is like bolting an electric motor onto a handloom. It’s fundamentally—and irreversibly—constrained by the weakest link in the organizational supply chain: ourselves. Humans simply don’t know what the right questions are—or when to ask them.

The most valuable work AI can do is work no one thought to ask for. AI should uncover risks no one spotted, identify counterparties no one considered, reveal sales pipelines no one knew existed.

This will radically expand the boundaries of AI use cases.

A promptless system continuously monitors an entire investment portfolio’s data streams. It detects that a portfolio company’s working capital cycle has quietly deteriorated for three consecutive months, cross-references this against covenant terms in credit agreements, and alerts operating partners before anyone in the fund opens that PDF.
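The monitoring scenario above can be sketched as a check that runs on a schedule rather than in response to a prompt. This is a minimal illustration under stated assumptions: the working capital cycle is represented as monthly cash conversion cycle days, and the covenant is reduced to a single maximum-days threshold. The names `deteriorated_for`, `check_company`, `covenant_max_days`, and `headroom_days`, along with the sample portfolio, are hypothetical.

```python
def deteriorated_for(cycle_days, months=3):
    """True if the cycle lengthened in each of the last `months` months."""
    if len(cycle_days) < months + 1:
        return False
    recent = cycle_days[-(months + 1):]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))

def check_company(name, cycle_days, covenant_max_days, headroom_days=5):
    """Return an alert string, or None when nothing needs attention.

    `covenant_max_days` and `headroom_days` are hypothetical stand-ins for
    terms that would be extracted from the credit agreement.
    """
    if not deteriorated_for(cycle_days):
        return None
    latest = cycle_days[-1]
    if latest > covenant_max_days:
        return f"{name}: covenant breached ({latest} > {covenant_max_days} days)"
    if covenant_max_days - latest <= headroom_days:
        return f"{name}: 3-month deterioration, {covenant_max_days - latest} days of headroom left"
    return None

portfolio = {
    # company: (monthly cash conversion cycle in days, covenant maximum)
    "Acme Logistics": ([52, 51, 55, 58, 62], 65),
    "Borealis Foods": ([40, 42, 41, 43, 42], 60),
}

alerts = [
    alert
    for name, (days, limit) in portfolio.items()
    if (alert := check_company(name, days, limit)) is not None
]
for alert in alerts:
    print(alert)
```

Nobody asks the question here; the check simply runs over every company and surfaces only the one whose trend is approaching its covenant limit. A real system would of course need to extract the covenant terms from the documents themselves, which this sketch takes as given.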

When humans no longer need to write prompts for AI, new interfaces and new ways of working emerge. At @Hebbia, we have strong ideas here; more on that later.

Conclusion

None of the above negates the value of chatbots, agents, or individual AI.

Individual AI will be the vehicle through which most global enterprises first experience AI’s transformative magic. Driving adoption and usability is the crucial first step in change management for building an AI-first economy.

At the same time, demand for institutional intelligence is clear, urgent, and immense.

Every organization will have a chatbot from a foundational model lab. Every organization will also have institutional AI purpose-built for domain-specific problems—and individual AI will treat that institutional AI as the most critical tool in its own toolkit.

“Better integration” between institutional AI and individual AI is inevitable.

But remember the lesson of the 1890s textile mill: The first factories to get electricity lost to those that redesigned their workshops.

We already have electricity. It’s time to redesign our factories.

Thanks to @aleximm and @WillManidis for their review—and to Will’s essay “Objects Shaped Like Tools” for inspiring this piece.
