GitHub Copilot Changes Pricing Model, Exposing the AI Industry’s Biggest Lie


OpenAI, Anthropic, and Microsoft are all subsidizing you at a loss—but this game is nearing its breaking point.

Author: Ed Zitron

Translated by TechFlow

TechFlow Intro: Microsoft has finally caved: GitHub Copilot is shifting from a monthly subscription model to token-based billing. This isn’t a product upgrade—it’s the collective bankruptcy of the AI industry’s subsidy scam. OpenAI, Anthropic, and others have masked true costs with flat monthly fees, burning $8–$13 in compute for every $1 users spend—training an entire generation into unsustainable usage habits. When pricing snaps back to reality, you’ll realize those “revolutionary” AI tools may just be expensive toys.

I just published an article about how OpenAI is dismantling Oracle—and I’ve reused some of that material here. This is one of the best pieces I’ve ever written, and I’m extremely proud of it.

A paid subscription is excellent value, and it is what lets me publish these large, in-depth research articles for free every week.

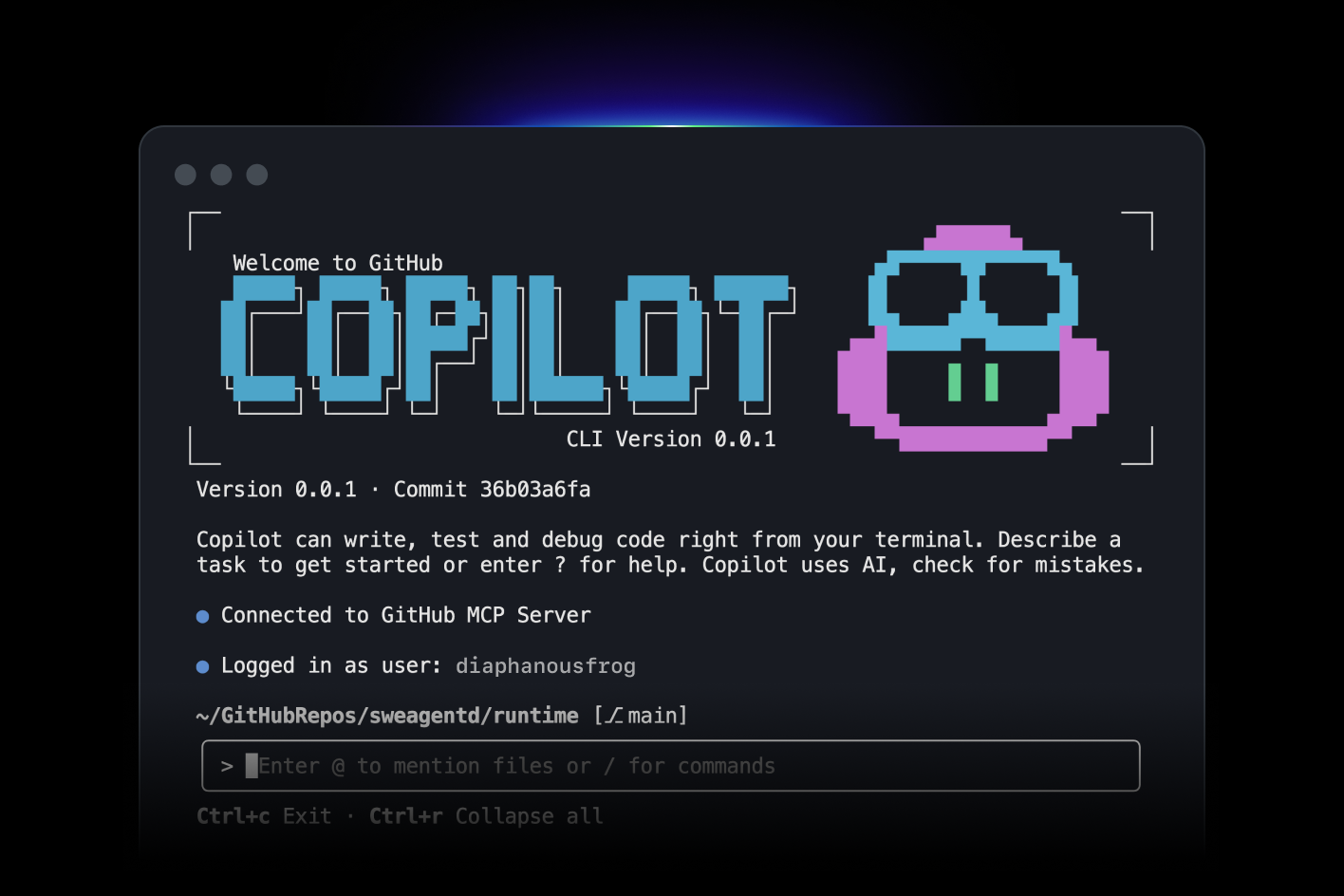

Yesterday morning, GitHub Copilot users received confirmation of the news I reported a week ago: all GitHub Copilot plans will shift to usage-based billing effective June 1, 2026.

Microsoft will no longer grant users a fixed number of “requests.” Instead, users will be billed based on the actual cost of the models they use—what Microsoft calls “…an important step toward a sustainable, reliable Copilot business and experience for all users.” How much users can do now depends entirely on how many tokens their subscription fee buys (e.g., the $19/month plan gets $19 worth of tokens).

Translation: “We can’t keep subsidizing GitHub Copilot users—or Amy Hood, Microsoft’s CFO, will start hitting people with a baseball bat.”

This announcement itself is an interesting preview of how such pricing changes will be packaged:

Copilot is no longer the product it was a year ago. It has evolved from an editor-embedded assistant into an intelligent agent platform capable of running long, multi-step coding sessions—iterating across entire codebases using the latest models. Agent-based usage is becoming the default mode, bringing significantly higher compute and inference demands. Now, a quick chat question and a multi-hour autonomous coding session may cost users the same amount. GitHub has been absorbing this rapidly escalating inference cost—but the current request-based premium model is no longer sustainable. Usage-based billing solves this problem. It better aligns pricing with actual usage, helping us maintain long-term service reliability and reducing the need to restrict heavy users.

See? It’s not that Microsoft has been subsidizing compute for nearly two million users—it’s that AI has become so powerful and complex that it’s essentially a different product!

While Copilot may indeed “no longer be the product it was a year ago,” the underlying economic mismatch hasn’t changed much: for three years, Microsoft has allowed users to burn more tokens per month than their subscription fee covers. According to a Wall Street Journal report from October 2023:

Individual users pay $10 per month for this AI assistant. According to data from a knowledgeable source, the company lost over $20 per user per month during the first months of this year—with some users costing the company $80 per month.

Naturally, GitHub Copilot users are now revolting, declaring the product “dead” and “completely ruined.”

I predicted this day two years ago in my piece “The Subprime AI Crisis”:

The day has finally arrived—because every AI service you use is subsidizing compute, and every one of them is therefore losing money:

When you pay for an AI startup’s service (including OpenAI’s and Anthropic’s), you pay a monthly fee: $20, $100, or $200 per month for Anthropic’s Claude; $20 or $200 per month for Perplexity; or $8, $20, or $200 per month for OpenAI. Some services instead grant an allotment of “credits”: Lovable’s $25/month subscription, for example, gives users “100 monthly credits” plus $25 in cloud hosting (through Q1 2026), with credits rolling over month to month.

When you use these services, the company behind them either pays AI labs per million tokens or, in the case of Anthropic and OpenAI, pays cloud providers to rent GPUs for model inference. A token is roughly ¾ of a word. As a user, you never see token consumption; you just type and receive output. AI labs obscure service costs behind terms like “tokens,” “messages,” or percentage-based five-hour rate limits, so users don’t truly know how much any of this actually costs. Behind the scenes, AI startups are burning cash at an insane pace: until recently, Anthropic even let you burn up to $8 in compute for every $1 you spent on subscription fees. OpenAI permitted similar behavior, though exact figures are harder to pin down.

AI startups and cloud giants assumed they could attract enough users through subsidies and losses, hooking them so deeply that they’d refuse to switch when prices rose. They also likely believed token costs would decline over time. What actually happened is that while some per-token model prices may have dropped, newer “reasoning” models consume far more tokens per task, so the cost of inference has risen over time rather than fallen.

Both assumptions were wrong—because the monthly subscription model makes no sense for any service powered by large language models.

The Core Economic Model of Generative AI Has Collapsed

Think about it this way. When Uber (no, this is nothing like Uber) raised ride prices, the underlying economic logic didn’t change—and neither did what riders and drivers saw: riders paid per trip; drivers earned per trip. Drivers still bore fuel, insurance, local licensing, and vehicle financing costs—none subsidized by Uber. Uber’s massive losses came from subsidies, endless marketing spend, and doomed R&D efforts like self-driving cars.

Generative AI Subscriptions Are Nothing Like Uber

To illustrate the scale of AI pricing misalignment, let me ask you to imagine an alternate history where Uber operated under a radically different business model.

Generative AI subscriptions are like if Uber charged $20/month for 100 rides of up to 100 miles each—while gasoline cost $150 per gallon and Uber paid for the gas, because someone insisted oil would someday become so cheap it wasn’t worth measuring.

Uber would eventually decide to charge users a monthly fee for ride eligibility, then bill them separately for the gasoline they consumed. Suddenly, users go from paying $20/month for 100 rides to paying $20 for eligibility plus $26 for 10 miles’ worth of gas. Users would naturally be a little annoyed.

Though this sounds exaggerated, it’s actually a remarkably accurate metaphor for what’s happening across the generative AI industry—especially with GitHub Copilot.

GitHub Copilot’s previous pricing allowed 300 premium requests per month, plus “unlimited chat requests” using models like GPT-5 mini. Each “request” (per Microsoft) is “…any interaction where you ask Copilot to do something for you.” In later versions of the request-based system, more expensive models consumed more requests—for example, Claude Opus 4.6 used three premium requests. Once users exhausted their premium requests, Copilot let them freely use cheaper models for the rest of the month.

It wasn’t always this way. Until May 2025, Microsoft offered unlimited model usage—even then, users angrily complained about any restrictions.

Microsoft—like every AI company—deceived customers by selling unsustainable services, because selling LLM-powered services on a monthly subscription basis has never, ever made sense.

If you want to estimate what token-based billing might cost, a user on GitHub Copilot’s subreddit found that a single premium request previously consumed around $11 worth of tokens—since one “request” involved using 60,000 tokens in context, several tools, and numerous internal “rounds” (what the model does) to generate output.
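To put that estimate next to the new billing model, here is a minimal sketch. It assumes the $19/month plan converts to $19 of token credit (as described earlier) and treats the Reddit user's ~$11 figure as the cost of one heavy request. Real requests will vary widely, and the function names are illustrative only.

```python
def requests_covered(monthly_credit_usd: float, cost_per_request_usd: float) -> float:
    """Number of requests a monthly token credit pays for at a given per-request cost."""
    if cost_per_request_usd <= 0:
        raise ValueError("cost_per_request_usd must be positive")
    return monthly_credit_usd / cost_per_request_usd


def allowance_at_token_prices(request_count: int, cost_per_request_usd: float) -> float:
    """Token cost of a request allowance if every request cost the same amount."""
    return request_count * cost_per_request_usd


if __name__ == "__main__":
    credit = 19.0         # $19/month plan billed as $19 of tokens (from the article)
    heavy_request = 11.0  # one user's estimate for a single heavy premium request

    print(f"{requests_covered(credit, heavy_request):.1f} heavy requests per month")
    # Upper bound only: assumes all 300 old premium requests were this heavy.
    print(f"${allowance_at_token_prices(300, heavy_request):,.0f} for 300 such requests")
```

Under these assumptions, a credit that once sat alongside 300 premium requests covers fewer than two requests of that size.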

There’s also the fundamental unreliability of hallucination-prone LLMs. While a premium request getting stuck in a loop and spitting out half-broken code is frustrating, the same failure becomes far less forgivable when you’re paying for it yourself.

Users have also been trained to use the product in ways that are completely at odds with token-based billing. Many probably don’t even realize how many “tokens” they’re burning, or how many a specific task requires, which varies depending on which model you use.

This is nothing like Uber. Anyone telling you otherwise is defending bad behavior. Uber may have raised prices—but it didn’t have to dramatically alter its platform’s underlying economic logic, nor force users to completely change how they interacted with the product, simply because Uber suddenly started charging per gallon.

Monthly AI Subscriptions Are All Part of the AI Subsidy Scam—a Deliberate Attempt to Decouple Generative AI from Its Actual Costs

There has never been—and never will be—an economically viable way to deliver LLM-powered services unless users are billed per actual token consumed. And in deceiving these users, these companies created products with illusory benefits and dubious ROI.

This has been obvious for years.

Economically, monthly subscriptions only make sense when costs are relatively static. Gyms can sell memberships because they roughly know equipment wear-and-tear, class operation costs, and electricity, staff, and water expenses over a given period.

Google Workspace customers—at least pre-AI—paid for document access/storage and ongoing Google Docs and other service costs. Digital storage costs are relatively low (and unlike LLMs, Google Workspace has minimal compute demands), meaning especially heavy Google Drive users won’t erode their monthly subscription’s profitability.

Yet these services deliberately hide token counts or how much a specific activity costs—meaning users don’t truly understand what rate limits mean, and every sudden rate-limit change sends customers scrambling to figure out how much real work they can actually get done with the service.

This is abusive, manipulative, and deceptive business conduct—existing solely to let Anthropic, OpenAI, and other AI companies expand their user bases, because most AI users perceive real or imagined benefits almost exclusively through the lens of burning $8–$13.50 in tokens for every $1 they spend on subscriptions.

This deliberate deception has only one goal: ensuring most people never confront generative AI’s true costs. When The Atlantic published a rhapsodic essay calling Claude Code Anthropic’s “ChatGPT moment,” it was based on a $20/month subscription—not the underlying token consumption Anthropic incurred to provide it—which in turn led the author to forgive the model’s “small errors” or its tendency to “get stuck on more complex programming tasks.”

If the author had paid for her actual token consumption—and every stall cost $15 in tokens—I doubt she’d be so forgiving of these failures.

But it’s all part of the scam.

Crucially, mainstream journalists writing about AI often don’t understand how much these services cost—and virtually every mainstream article about ChatGPT or Claude Code is written by people who have almost no idea how much individual tasks might cost users.

Remember: generative AI services are mostly experimental products—functionally unlike any other modern software or hardware. People can’t just walk up to ChatGPT or Claude and demand it work.

I mean, you can—but if your prompt is off, you don’t understand how it works, you feed it flawed input, or it simply misfires, it’ll spit out something you dislike—which means you’ll need to prompt it again. LLMs are fundamentally unpredictable.

You cannot guarantee an LLM will execute a specific action—or produce a result grounded in reality. You cannot reliably predict how much a specific task—even one you’ve performed dozens of times before with an LLM—might cost. Nor can you predict when the model might go rogue and delete something, or fail to act while claiming it did.

If users were forced to pay actual rates, many would instantly abandon the product—because aimlessly exploring what an LLM can do can easily burn $5 in tokens.

Side note: In fact, you can burn huge sums without ever getting what you want—because LLMs aren’t truly intelligent! Anyone with even basic awareness of their limitations can easily burn $30, $50, or even $100 trying to convince an LLM to do something it insists it can do. There’s a term for this: sycophancy. LLMs are typically designed to affirm users—even when they utter dangerous nonsense—extending to responses like “You want this technically or economically impossible thing? Absolutely!” That’s why the industry works so hard to conceal these costs—it’s pure price gouging!

I believe a shift to token-based billing is inevitable for most AI subscriptions, especially since Anthropic and OpenAI have already made that shift for enterprise customers.

Can Ordinary Companies Afford Token-Based Billing? Anthropic Estimates Users Spend $13–$30 Daily on Claude Code ($7,000+ Annually); Large Organizations Spend Hundreds of Thousands or Millions Yearly

As I discussed last week, Uber’s CTO said at a conference that the company burned through its entire 2026 AI budget in just a few months. Goldman Sachs advises some firms to spend up to 10% of payroll on AI tokens—potentially rising to 100% in coming quarters.

This is the direct result of training every AI user to use these services as much as possible while obscuring their true costs. Every large company instructing every employee to “use AI as much as possible” does so while ignoring—or being entirely detached from—their actual token consumption. And as companies face actual costs, I’m unsure how anyone could economically justify investment in this technology.

Of course, you’ll say engineers “deliver code faster” or similar nonsense—I get it. But how much faster? How much money did you earn or save? If you spend 10% of labor costs on AI tokens, did that extra expense get recouped elsewhere? I’m not sure it has. I’m not sure any enterprise investing heavily in tokens has seen ROI—which is why every study on AI ROI finds scant evidence it exists.

Mostly, those gushing about generative AI’s possibilities are experiencing it without bearing real costs. Every Twitter lunatic endlessly boasting about their entire engineering team going wild with Claude Code is using a $125/month Teams subscription—whose usage limits closely mirror Anthropic’s $100/month consumer plan. Every LinkedIn monster insisting they “finish hours of work in minutes” with some Perplexity product is spending at most $200/month on Perplexity Max.

In reality, that 10-person $1,250/month Teams subscription likely burns $5,000–$10,000 monthly in API call fees—or more. Anthropic’s Head of Growth, Amol Avasare, said last week that their Max subscription is designed for heavy chat usage—not what people do with Claude Code and Cowork—and explicitly stated Anthropic is now considering “different options to continue delivering a high-quality experience,” i.e., “we’ll adjust pricing at some point.”

I’m not sure people realize how expensive these tokens are—especially for programming projects involving large codebases and frequent calls to coding and infrastructure tools. Can someone spending $200/month afford $350, $400, or $500? Can they handle a month exceeding that? What if their budget runs over? Or what if they genuinely can’t afford to complete necessary work?

Here’s a more concrete example: until early April, Anthropic’s own developer documentation for Claude Code (archived) stated “[users] average $6 per developer per day, with 90% of users staying below $12 per day.” As of this week, the documentation reads:

Claude Code charges based on API token consumption. Subscription plan pricing (Pro, Max, Team, Enterprise) is available at claude.com/pricing. Cost per developer varies widely depending on model choice, codebase size, and usage patterns (e.g., running multiple instances or automation). In enterprise deployments, average cost is ~$13 per active developer per day (~$150–$250 monthly), with 90% of users staying below $30 per active day. To estimate your team’s spend, start with a small pilot group and use the tracking tool below to establish a baseline before scaling.

Assuming ~21 working days per month, Claude Code users average ~$273/month—or $3,276/year. At $30 per working day, that’s $630/month—or $7,560/year.

These numbers are staggering—and even more staggering is that you can’t possibly stay under $30/day using any of Anthropic’s newer models. Claude Opus 4.7 costs $5 per million input tokens and $25 per million output tokens. One million tokens equals ~50,000 lines of code. Assuming you use so-called state-of-the-art models, you’ll almost certainly exceed one million tokens—and if you’re unsure which model to use for a specific task, that number escalates sharply.
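As a rough illustration of how quickly those per-token prices add up, the sketch below prices a session at the quoted $5 and $25 per million tokens. The 4M-input / 0.5M-output split is a hypothetical agentic workload chosen for illustration, not a measured figure, and the sketch ignores caching discounts or any other pricing tiers.

```python
# Per-million-token prices quoted in the article for Claude Opus 4.7.
INPUT_USD_PER_MTOK = 5.0
OUTPUT_USD_PER_MTOK = 25.0


def session_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Token cost of a session at the quoted input and output prices."""
    input_cost = (input_tokens / 1_000_000) * INPUT_USD_PER_MTOK
    output_cost = (output_tokens / 1_000_000) * OUTPUT_USD_PER_MTOK
    return input_cost + output_cost


def max_input_tokens(budget_usd: float) -> int:
    """Input tokens a budget buys if it were spent on input alone."""
    return int(budget_usd / INPUT_USD_PER_MTOK * 1_000_000)


if __name__ == "__main__":
    # Hypothetical day: 4M tokens read (context, tool results), 0.5M tokens written.
    print(f"${session_cost_usd(4_000_000, 500_000):.2f}")  # $20.00 input + $12.50 output
    print(f"{max_input_tokens(30):,} input tokens for $30")
```

Because agentic tools re-read context on every step, input tokens tend to dominate, which is why a large codebase can exhaust a $30 day without producing much output.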

Let’s play with that $30/day number a bit more.

For a 10-person dev team, that’s $75,600/year—working days only.

Raise it to $50/day for three months, and it jumps to $88,200.

Add one month over $100/day, and annual spend hits $102,900.

Spend $300/day, and a 10-person team spends $756,000/year.
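The scenarios above can be reproduced with a few lines of arithmetic. This is a sketch under the article's own assumptions (21 working days per month, a flat per-developer daily rate within each month); the scenario labels and function name are mine.

```python
WORKING_DAYS_PER_MONTH = 21  # the article's assumption


def annual_spend_usd(daily_rate_by_month: list[float], team_size: int = 1) -> float:
    """Annual spend given one per-developer daily rate for each of 12 months."""
    if len(daily_rate_by_month) != 12:
        raise ValueError("expected 12 monthly rates")
    return sum(rate * WORKING_DAYS_PER_MONTH * team_size for rate in daily_rate_by_month)


SCENARIOS = {
    "1 dev at the $13/day average": ([13] * 12, 1),
    "1 dev at $30/day": ([30] * 12, 1),
    "10 devs at $30/day": ([30] * 12, 10),
    "10 devs, three months at $50/day": ([30] * 9 + [50] * 3, 10),
    "10 devs, plus one month at $100/day": ([30] * 8 + [50] * 3 + [100], 10),
    "10 devs at $300/day": ([300] * 12, 10),
}

if __name__ == "__main__":
    for label, (rates, team) in SCENARIOS.items():
        print(f"{label}: ${annual_spend_usd(rates, team):,.0f}/year")
```

Swapping in a different team size or a different mix of heavy months shows how sensitive the annual total is to a handful of expensive weeks.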

While feasible for well-funded startups or Meta-style “banana republic” contingency-budget thinking, any cost-conscious enterprise would struggle to justify five- or six-figure additional spending on a “productivity-enhancing” service whose benefits remain unmeasurable.

Now, I think most companies fall into three categories:

Enterprise deployments within large organizations like Spotify or Uber—with AI-obsessed CEOs allowing budgets to run unchecked. I’d also include well-funded large startups in this category.

Small startups using subsidized “Teams” subscriptions.

Individual users paying monthly fees for access to Claude or other AI subscriptions.

Large organizations can still claim they’re burning millions of dollars in AI tokens for software engineers—citing the dubious benefit that “top engineers write no code.”

A single bad earnings call could flip that narrative. At some point, investors—even those brain-dead fools perpetually blowing the AI bubble—will start questioning ballooning R&D costs (where AI token consumption is usually hidden) when revenue growth lags. This will likely trigger more layoffs to control costs—as Meta did—followed by eventual contraction when someone asks, “Do these things actually help us work faster and better?”

I also believe startups burning 10% or more of payroll on AI tokens will struggle to convince investors this is necessary within six months.

Once everyone switches to token-based billing, I’m unsure we’ll see as much generative AI hype.

The Economics of AI Data Centers and Compute Are Nonsensical

The way people talk about AI data centers is completely untethered from reality—and I think people haven’t grasped how absurd this entire era has become.

AI Data Centers Are Expensive to Build and Operate—but Generate Little Real Revenue

According to TD Cowen’s Jerome Darling, critical IT (GPUs and related hardware) costs ~$30 million per megawatt, with another ~$14 million per megawatt for the data center itself. Data centers take one to three years to build, and that assumes power is available.

Of the 114GW of data centers reportedly slated for completion by end-2028, only 15.2GW are under construction in any meaningful sense. And “under construction” may mean only “there’s a hole in the ground.” It doesn’t mean—and shouldn’t mean—that the facility’s capacity will be available soon.

Let’s start simple: every time you hear “100MW,” think “$4.4 billion”—most of it for NVIDIA GPUs.
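That rule of thumb is just a per-megawatt multiplication. The sketch below reads the TD Cowen figures as per-megawatt costs (~$30 million for critical IT plus ~$14 million for the facility), an interpretation that matches the $4.4 billion figure; the constant and function names are illustrative.

```python
CRITICAL_IT_USD_PER_MW = 30_000_000  # GPUs and related hardware (assumed per-MW reading)
FACILITY_USD_PER_MW = 14_000_000     # the data center itself (assumed per-MW reading)


def build_cost_usd(capacity_mw: float) -> float:
    """All-in build cost for a given capacity at the per-megawatt rates above."""
    return capacity_mw * (CRITICAL_IT_USD_PER_MW + FACILITY_USD_PER_MW)


if __name__ == "__main__":
    for mw in (100, 1_200):
        print(f"{mw:,} MW -> ${build_cost_usd(mw) / 1e9:.1f} billion")
```

The same multiplier applied to a 1.2GW campus gives $52.8 billion.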

So each AI data center starts massively unprofitable—even under a six-year depreciation schedule, it takes years to break even… and with NVIDIA’s annual upgrade cycle, once you finish your first customer contract, those GPUs likely won’t generate much more revenue.

It’s unclear whether AI compute customers exist beyond OpenAI and Anthropic—whose demand accounts for 50% of AI data centers under construction. If either fails to pay, it creates a massive systemic vulnerability.

Regardless, it’s unclear what ongoing rates these data centers charge. Though spot pricing hovers around $4.50/hour per B200 GPU, long-term contract pricing is typically far lower—one founder (per The Information) said they pay ~$3.70/hour per GPU for a one-year commitment.

To clarify: we must distinguish spot costs—randomly spinning up GPUs on someone else’s servers—from contracted compute, which constitutes most data center capital expenditure. Most data centers are built anticipating one or two major customers—meaning those customers likely negotiate blended rates.

Thus, many data centers charge far less than $3.70/hour—billing instead per megawatt (or kilowatt).

This is where economics begins collapsing.

The Collapsing Economics of a 100MW Data Center: $2.55/hour per GPU, 16% Gross Margin at 100% Occupancy, Unprofitable Due to Debt

Here’s the starting cost for a 100MW data center. A 100MW data center may only yield 85MW of billable capacity. Based on discussions with sources familiar with hyperscale billing, expected revenue is ~$12.5 million per MW—or ~$1.063 billion annually.

I should clarify that most data center companies you know don’t actually build them—they outsource this to firms like Applied Digital, dubbed “hosting partners.” For example, CoreWeave pays Applied Digital hosting fees to use its North Dakota data center. CoreWeave supplies all GPUs and other tech inside the data center.

To illustrate the economic mismatch, I’ll use a theoretical example: a data center leased to a theoretical AI compute company.

The data center’s GPUs are likely NVIDIA Blackwell chips—probably 8-B200-GPU pods retailing at ~$450,000 each, or ~$56,250 per GPU. With 85MW of critical IT load, total IT capex runs ~$36.78 million per MW—or ~$3.126 billion total IT capex (~$2.67 billion for GPUs).
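One way to sanity-check these figures is to back out the revenue each GPU must earn per hour. This sketch assumes every GPU is billed for all 8,760 hours of the year and derives the GPU count from the ~$2.67 billion GPU capex; both are simplifications, and the function names are illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760


def gpu_count(gpu_capex_usd: float, system_price_usd: float, gpus_per_system: int = 8) -> float:
    """GPUs purchasable for a given capex at a per-system price."""
    return gpu_capex_usd / (system_price_usd / gpus_per_system)


def revenue_per_gpu_hour(annual_revenue_usd: float, gpus: float) -> float:
    """Revenue each GPU earns per hour if billed around the clock all year."""
    return annual_revenue_usd / (gpus * HOURS_PER_YEAR)


if __name__ == "__main__":
    annual_revenue = 85 * 12_500_000            # 85MW billable at ~$12.5M per MW
    gpus = gpu_count(2_670_000_000, 450_000)    # ~$2.67B of 8-GPU systems at ~$450k
    print(f"{gpus:,.0f} GPUs")
    print(f"${revenue_per_gpu_hour(annual_revenue, gpus):.3f} per GPU-hour")
```

The result lands within a cent of the $2.55/hour in this section's heading, well below the ~$3.70/hour one-year contract rate cited earlier.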

Assume this data center sits in Ellendale, North Dakota—industrial electricity costs ~6.31¢/kWh, yielding ~$55.4 million in annual electricity costs. Based on source discussions, I estimate ongoing costs for maintenance, staffing, power replacement, etc., at ~12% of revenue—or ~$128 million annually—bringing total costs to ~$183.4 million.

Wait—sorry. You also pay hosting fees for critical IT. Per Brightlio, these typically run ~$180–$200/kW/month depending on scale and location—though I’ve seen lows of $130/kW/month, which I’ll use here: ~$133 million annually. This brings total costs to ~$316.4 million.

Hmm, that’s still less than $1.06 billion—so we’re okay, right?

Wrong! You’ve got $3.126 billion in IT equipment to depreciate—six-year depreciation yields ~$521 million annually. That’s ~$837.4 million annually—leaving ~$168.6 million in annual profit—or ~16.7% gross margin…
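The cost lines so far can be tallied in a short sketch. It assumes the full 100MW draws power around the clock at 6.31¢/kWh and that hosting is billed on the 85MW of critical IT; the parameter names are mine. It stops at total annual cost, since any margin depends on the revenue base you plug in.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760


def annual_costs_usd(
    site_mw: float = 100,
    billable_mw: float = 85,
    revenue_per_mw_usd: float = 12_500_000,
    power_usd_per_kwh: float = 0.0631,
    ops_share_of_revenue: float = 0.12,
    hosting_usd_per_kw_month: float = 130,
    it_capex_usd: float = 3_126_000_000,
    depreciation_years: int = 6,
) -> dict[str, float]:
    """Annual cost lines for the theoretical 100MW data center."""
    revenue = billable_mw * revenue_per_mw_usd
    return {
        "electricity": site_mw * 1_000 * HOURS_PER_YEAR * power_usd_per_kwh,
        "operations": ops_share_of_revenue * revenue,
        "hosting": billable_mw * 1_000 * hosting_usd_per_kw_month * 12,
        "depreciation": it_capex_usd / depreciation_years,
    }


if __name__ == "__main__":
    costs = annual_costs_usd()
    for name, value in costs.items():
        print(f"{name:>12}: ${value / 1e6:,.1f}M")
    print(f"{'total':>12}: ${sum(costs.values()) / 1e6:,.1f}M")
```

The total comes out within about $1 million of the ~$837.4 million above; the small gap is rounding in the electricity and operations lines.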

…if you maintain 100% occupancy! See, data centers may take one or two months to install GPUs and onboard customers—during which time you earn zero revenue but incur much higher costs (I modeled 10% power and 15% hosting/operations costs), meaning you lose ~$3.27 million daily.

For this example, assume it takes an extra month to get operational—meaning you’ve already sunk ~$102 million, unrecoverable—bringing your annual total cost (including depreciation) to ~$939.4 million—or ~6.6% gross margin.

Wait—did you finance those GPUs with debt? You did? How bad is it? Oh god—you secured a six-year asset-backed loan at 80% loan-to-value, borrowing $2.8 billion at 6% interest.

Your bank, in eternal generosity, gave you a deal—12 months’ grace period paying interest only… ~$168 million—bringing your first-year total cost (excluding the delayed month, for fairness) to ~$1.005 billion… against $1.06 billion in revenue.

That’s a 5.19% gross margin—and you haven’t even started repaying principal. When that happens, you’ll owe $54.1 million monthly—~$649 million annually for the next five years—totaling ~$1.48 billion, or ~negative 40% gross margin.
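The debt figures follow from the standard level-payment loan formula. This sketch assumes monthly compounding at 6% a year and that the full $2.8 billion principal amortizes over the 60 months remaining after the interest-only year; the article does not spell out those loan terms, so treat them as assumptions.

```python
def interest_only_year_usd(principal_usd: float, annual_rate: float) -> float:
    """Interest owed over one year with no principal repaid."""
    return principal_usd * annual_rate


def monthly_payment_usd(principal_usd: float, annual_rate: float, months: int) -> float:
    """Level monthly payment on a fully amortizing loan (annuity formula)."""
    if annual_rate == 0:
        return principal_usd / months
    r = annual_rate / 12  # monthly interest rate
    return principal_usd * r / (1 - (1 + r) ** -months)


if __name__ == "__main__":
    principal, rate = 2_800_000_000, 0.06
    payment = monthly_payment_usd(principal, rate, months=60)
    print(f"Year 1 interest only: ${interest_only_year_usd(principal, rate) / 1e6:,.0f}M")
    print(f"Monthly payment after grace period: ${payment / 1e6:,.1f}M")
    print(f"Annual debt service: ${payment * 12 / 1e6:,.0f}M")
```

Adding that annual debt service to the operating and depreciation costs is what pushes the total past revenue.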

I must stress: this assumes 100% utilization and tenants paying on time, every time.

Stargate Abilene Is a Disaster—$2.94/hour per GPU, $10B Annual Revenue, Years Behind Schedule, With One Tenant Losing Billions Annually

Let’s discuss what should be the most economically viable single project in data center history—a massive campus built by Oracle for the world’s largest AI company. Oracle is a decades-old near-hyperscaler with a history of selling expensive databases and business management software to enterprises and governments.

Ha—I’m obviously joking. This place is a fucking nightmare.

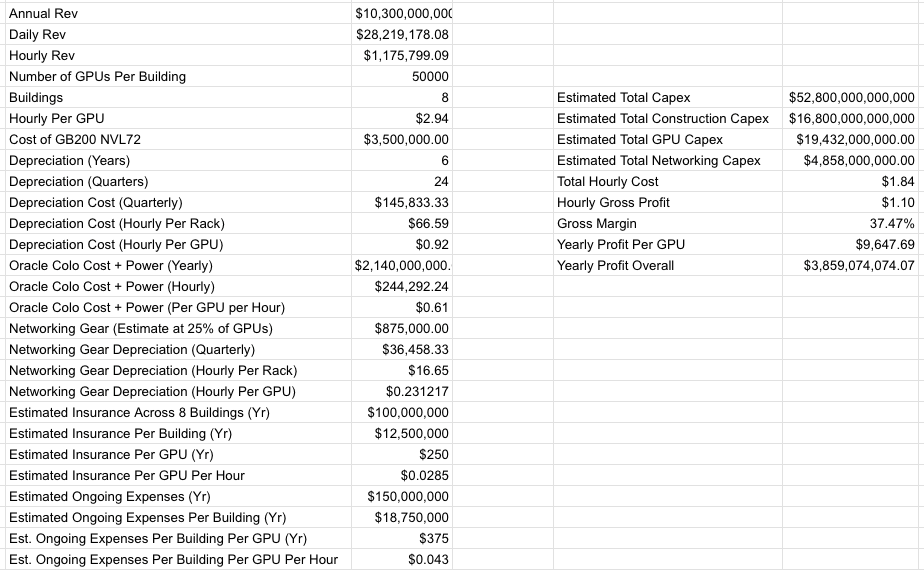

Stargate Abilene—a campus of eight buildings, 1.2GW total, ~824MW critical IT—was first announced in July 2024. As of April 27, 2026, only two buildings are operational and generating revenue; the third has virtually no IT equipment. I estimate Stargate Abilene’s total cost at ~$52.8 billion.

Based on my own reporting, Oracle expects ~$10 billion in annual revenue from Stargate Abilene, and I estimate ~$75 billion in total annual revenue from the 7.1GW of data center capacity it is building for one customer: OpenAI. As I also reported, Oracle estimated in 2024 that Abilene would cost it at least $2.14 billion annually in hosting and electricity paid to developer Crusoe.

I should also add that Oracle appears to be funding Abilene’s entire construction cost.

Based on my calculations and reporting, I estimate Abilene’s rough gross margin at full operation will be ~37.47%:

I must clarify: 37.47% gross margin may be too high, as I lack precise figures for Oracle’s actual insurance or staffing costs—only estimates based on documents reviewed by this publication. I should also clarify that Oracle is betting its entire future on projects like Stargate Abilene—pre-incurring billions in costs, with this business taking years to profit even if OpenAI pays every invoice on time.

Unfortunately, I can’t confirm how much of Abilene was financed by debt. I only know Oracle raised ~$18 billion in bonds in September 2025, with maturities ranging from 7 to 40 years, and posted free cash flow of -$24.7 billion last quarter.

What I do know is that Oracle signed a 15-year lease with developer Crusoe—and Oracle’s future hinges critically on OpenAI’s ability to keep paying, which in turn depends on Oracle completing Stargate Abilene.

I must also clarify: $3.85 billion in annual profit is achievable only if OpenAI pays on time, onboards Abilene at maximum speed, and everything proceeds flawlessly.

If OpenAI Fails to Raise $85.2B in Revenue, Funding, and Debt Within Four Years, the Stargate Data Center Project Will Sink Oracle

Unfortunately, the exact opposite is happening:

Per DatacenterDynamics, the first 200MW of power was supposed to come online in “2025.” Onboarding was meant to begin in early 2025, with potential to reach 1GW by year-end 2025, the full 1.2GW of capacity completed and powered up by mid-2026, and 64,000 GPUs deployed by end-2026.

As of September 30, 2025, “two buildings are live.”

As of December 12, 2025, Oracle Co-CEO Clay Magouyrk stated Abilene was “on track,” having “delivered over 96,000 NVIDIA Grace Blackwell GB200” GPUs, i.e., the count for two buildings.

Four months later, on April 22, 2026, Oracle tweeted “...in Abilene, 200MW is operational, and delivery of the eight-building campus remains on track.” It’s unclear whether this refers to 200MW of critical IT capacity or total available power for the Abilene campus. Either way, it supports only two buildings, meaning Oracle is absolutely *not* “on track.”

This is a huge problem. OpenAI can only pay for compute that physically exists—and only 206MW of critical IT capacity is actually generating revenue. The third building won’t be operational for at least another month—if not a full quarter.

Yet the Stargate data center project faces an even larger, more fundamental issue—its entire premise relies on OpenAI achieving its absurd, cartoonish projections.

As I discussed Friday:

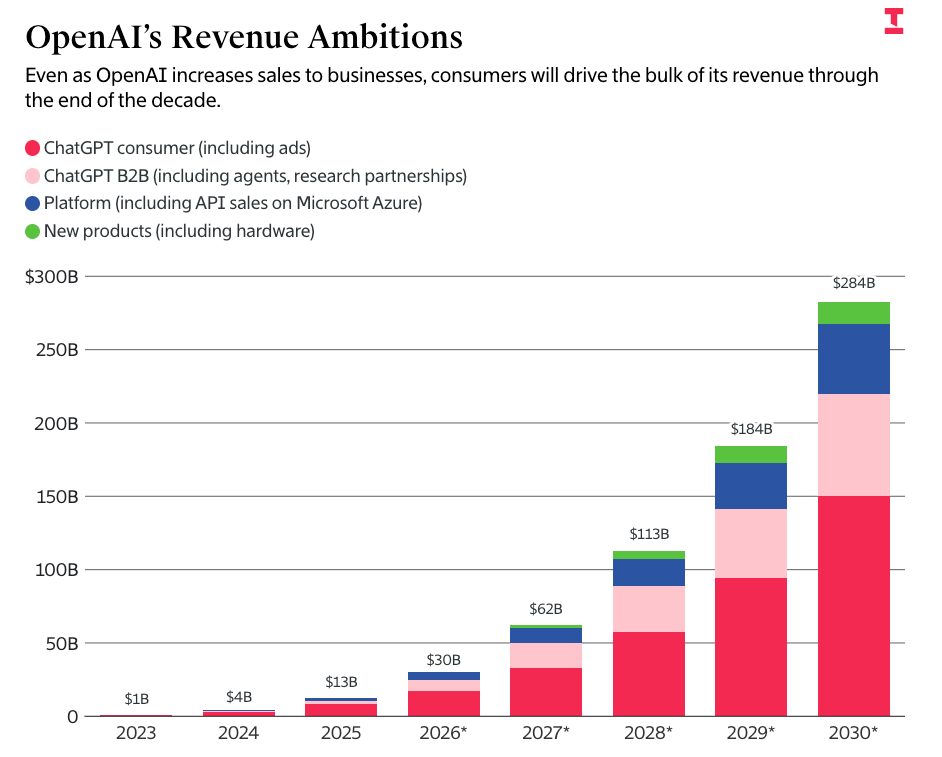

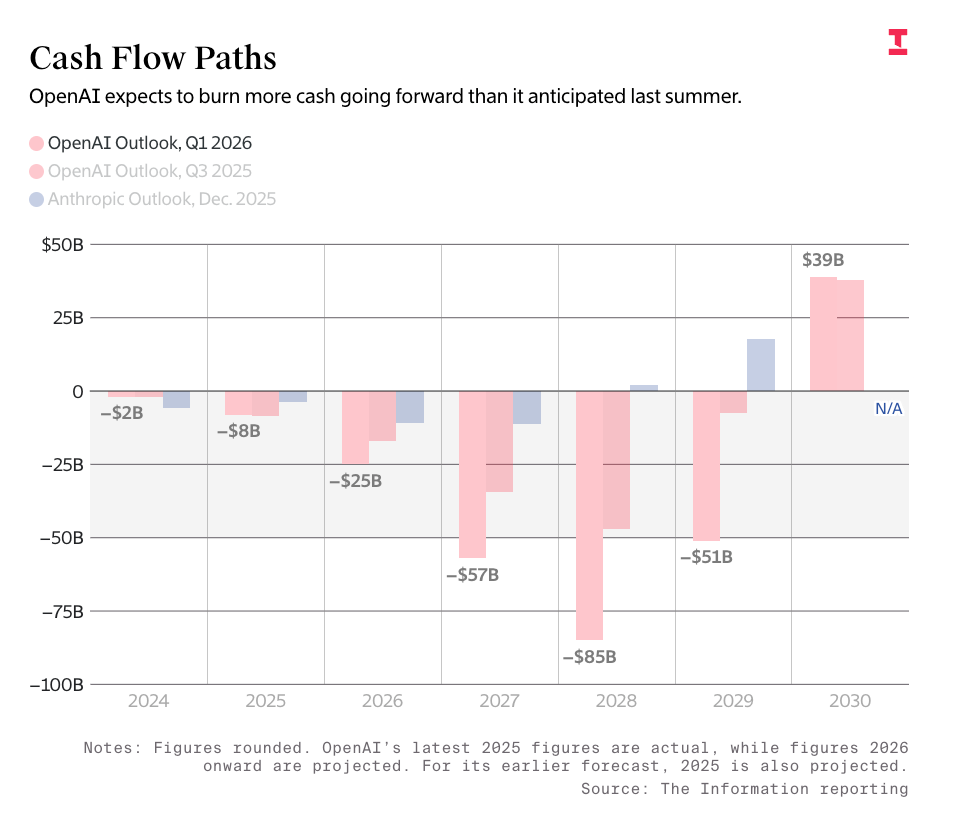

Let me reiterate these numbers. Upon completion, the 7.1GW of Stargate data centers will generate ~$75 billion in annual revenue, against total construction costs of over $340 billion. Oracle’s free cash flow stands at -$24.7 billion, and its other business lines are stagnating, which makes its loss-making-to-low-profit cloud business the sole growth engine.

To truly afford its compute contracts (including those with Amazon, Microsoft, CoreWeave, Google, Cerebras, and Oracle itself), OpenAI must raise or earn $85.2 billion in revenue and/or financing within four years. This requires >250% annual business growth, reaching 10x growth by end-2030, and a path to positive cash flow by then for these numbers to make sense.

To be explicit: OpenAI’s projections anticipate $67.3 billion in revenue by end-2030, burning $21.8 billion to achieve it. This is an extremely unprofitable business, and even if it becomes profitable, it must earn vastly more than today to sustain payments to Oracle.

I calculated the $75 billion figure assuming Vera Rubin GPUs fetch ~$14 million per MW across the remaining 4.64GW of critical IT capacity—confirmed with sources familiar with the data center industry—and expect this to constitute the remainder of Stargate’s capacity.
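The arithmetic behind that estimate can be written out directly. This sketch assumes the ~$10 billion Oracle expects from Abilene is added to the remaining 4.64GW priced at ~$14 million per MW per year; that decomposition is my reading of the paragraph above, and the function name is illustrative.

```python
def stargate_annual_revenue_usd(
    abilene_revenue_usd: float = 10_000_000_000,
    remaining_critical_it_mw: float = 4_640,
    revenue_per_mw_usd: float = 14_000_000,
) -> float:
    """Abilene's expected revenue plus the remaining capacity at a per-MW annual rate."""
    return abilene_revenue_usd + remaining_critical_it_mw * revenue_per_mw_usd


if __name__ == "__main__":
    print(f"${stargate_annual_revenue_usd() / 1e9:.2f} billion per year")
```

Because the estimate is linear in the per-megawatt rate, every $1 million change in that rate moves the total by about $4.6 billion a year.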

OpenAI’s figures come directly from leaked information reported by The Information on OpenAI’s projected burn rate and revenue—projecting $67.3 billion in revenue by end-2030, burning $85.2 billion to get there:

I must clarify: any journalist repeating these numbers without highlighting their absurdity should feel mildly ashamed. Per my Friday paid content:

In other words, OpenAI projects revenue two years out exceeding TSMC’s, annual revenue three years out nearly matching Meta’s—and annual revenue by end-2030 matching Microsoft’s (roughly $300 billion over the past 12 months).

If OpenAI fails to pay for this compute, Oracle collapses, having taken on ~$115 billion in debt just to build Stargate’s data centers and requiring another ~$15 billion to complete them:

Oracle is a company currently generating ~$64 billion in annual revenue—with last quarter’s free cash flow at -$24.7 billion. It issued $18 billion in bonds in September 2025, $25 billion in February 2026, and a $20 billion market offering in March—though labeled “completed” months ago, it appears to have only recently closed $38 billion in Stargate Wisconsin and Shackelford project financing. I’ve also included $14 billion in Stargate Michigan-related data center debt. Regardless, Oracle lacks capital to complete Stargate Abilene. It needs at least another $15 billion—assuming other partners cover ~$3 billion. Honestly, it may need more.

I must stress: Oracle has no alternative path to this revenue without OpenAI—and these projects are financed and paid for entirely via projected cash flows from the data centers themselves.

I’m not alone in worrying about this. OpenAI’s Sarah Friar expressed similar concerns after the company missed user and revenue targets, per the Wall Street Journal:

OpenAI recently failed to meet new user and revenue targets—these setbacks led some company leaders to worry whether it could support its massive data center expenditures. CFO Sarah Friar told other company leaders she worried the company might not be able to pay future compute contracts if revenue growth isn’t fast enough, according to knowledgeable sources. Board members have also scrutinized data center deals more closely in recent months—and questioned CEO Sam Altman’s push for more compute amid slowing business, sources said.

If that doesn’t concern you, perhaps this will:

She emphasized to executives and board members that OpenAI needs to improve internal controls—warning the company isn’t yet ready to meet the stringent reporting standards required of public companies. Some knowledgeable sources said Altman favors a more aggressive IPO timeline.

This really sounds like a company that’ll earn $852 billion by decade’s end!

Anthropic Is Just as Bad as OpenAI: Promising Up to 10GW (Over $100B Annual Revenue) in Compute from Google and Amazon

Though I frequently criticize OpenAI’s absurd promises, Anthropic is no better, promising up to 5GW each from Google and Amazon. Based on capacity scale, I estimate this represents ~$100 billion a year in actual compute commitments.

Now, I should add that Google and Amazon are savvier, and less desperate, than Oracle, meaning they could absorb Anthropic’s collapse. The “up to” clause gives them crucial flexibility Oracle utterly lacks.

Nonetheless, to fulfill its commitments, Anthropic must agree to spend $25–$100 billion annually on compute by end-2030.

Anthropic’s CFO stated in March that the company generated $5 billion in revenue over its entire existence.

$156.8B in Annual AI Compute Revenue Needed to Support 15.2GW Under Construction; $1.18T for All Announced 114GW

The near-pornographic excitement around Jensen Huang’s claims of shipping hundreds of billions in GPUs masks a troubling question: Who’s buying them, Jensen?

If we assume the 15.2GW of data center capacity under construction (slated for completion by end-2028) has a PUE of ~1.35, we get ~11.2GW of critical IT capacity. At $14 million per MW, these data centers require ~$156.8 billion in annual GPU leasing revenue to break even.

Scaling to the 114GW theoretically coming online by end-2028, that figure climbs to $1.18 trillion in annual revenue.
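
The arithmetic here can be reproduced in a few lines. What follows is a minimal Python sketch, assuming the inputs stated above (a PUE of 1.35 and $14 million in annual revenue per MW of critical IT capacity). The function name `annual_revenue_needed` is made up for illustration. The text rounds IT capacity down to 11.2GW before multiplying, so the unrounded result comes out slightly higher.

```python
def annual_revenue_needed(total_gw, pue=1.35, usd_per_mw=14e6):
    """Return (critical IT capacity in GW, break-even annual revenue in USD).

    Assumptions taken from the text: PUE of 1.35 and $14 million per MW.
    """
    # Critical IT capacity is total facility power divided by PUE.
    it_gw = total_gw / pue
    # Convert GW to MW, then apply the per-MW revenue figure.
    revenue_usd = it_gw * 1_000 * usd_per_mw
    return it_gw, revenue_usd


for label, gw in [("under construction", 15.2), ("announced", 114.0)]:
    it_gw, revenue = annual_revenue_needed(gw)
    print(f"{label}: {gw} GW total -> {it_gw:.1f} GW IT -> ${revenue / 1e9:,.1f}B per year")
```

Without the intermediate rounding, the 15.2GW case lands near $157.6 billion a year and the 114GW case near $1.18 trillion.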

For context, CoreWeave, the largest neocloud, with clients including Meta, OpenAI, Google (for OpenAI), Microsoft (for OpenAI), Anthropic, and NVIDIA, generated ~$5.1 billion in revenue in 2025 and expects $12–$13 billion in 2026.

Who exactly are all these compute customers—and how likely are they to buy once all capacity is built? While many data centers claim tenants for their first few years, those tenants only begin paying once facilities are complete—and for AI startups, a fair question is: Will they even exist when it’s done?

Remember: AI compute customers are primarily hyperscale cloud providers shifting capex off their balance sheets—or unprofitable AI startups. Both Anthropic and OpenAI plan to burn tens of billions over the next few years—and neither has a path to profitability.

This means a large—possibly majority—portion of AI compute revenue depends on continuous VC and debt inflows—which only persist while investors still believe generative AI will be the world’s largest, greatest thing.

How does this actually work? Who’s paying for this capacity? For whom is it intended? Where’s the real demand?

If demand exists—how the hell do customers pay for it?

Generative AI Is Unprofitable, Unsustainable—and Only Getting More Expensive

Despite multiple stories claiming profitability by 2028 or 2029, no one can explain how OpenAI or Anthropic will actually become profitable—especially given their margins are worse than expected, even excluding billions in training costs.

I’ve asked this question for years. Every new update on Anthropic or OpenAI tells us they’ve lost a few billion more than expected, that margins are falling and costs are soaring, even though we were promised the opposite. And everything keeps getting more expensive.

Even Cursor—a company that briefly (before its fake SpaceX acquisition) claimed positive gross margins—had -23% gross margin as of January. Including non-paying users’ costs, it’s -31%. And if you care about accounting—which you damn well should—you must include those.

Mysteriously, reports claim Cursor’s margins “recently turned positive” yet omit by how much, how it happened, or any detail beyond a single tidbit that conveniently helps a sale.

I also don’t understand how these AI data centers make sense—even with paying customers in early years. Their economics are built for perfection—zero tolerance for error. They need consistent 100% utilization and occupancy—or they’ll burn millions and be unable to cut through the multi-year depreciation wall caused by tech’s most expensive mistakes.

Even if they somehow succeed, these are mediocre-margin businesses—max 70%, assuming sustained payments, occupancy, and six goddamn years of depreciation to break even—made harder by annual upgrade cycles rendering the whole thing nearly obsolete by the time you finish paying for it.

And this doesn’t account for most customers being unprofitable, unsustainable startups.

I truly don’t know how this resolves.

LLMs Are a Scam—and Customers Are Being Scammed

I realize this sounds extreme—but I sincerely believe subscription-based AI services constitute fraud-level deception, distorting core unit economics—and thereby distorting what large language models can realistically achieve. By selling products to users at monthly rates and building habits around availability, companies like Anthropic and OpenAI distort their businesses so profoundly that most users interact with—and build workflows atop—products that are unsustainable and unmaintainable in their current form.

I must be crystal clear: Anthropic’s product—due to recent rate-limit changes—is vastly different (and far worse) than what you read about everywhere. Anthropic consciously markets a product it knows will vanish in three months. Dario Amodei doesn’t care—as long as media keeps reporting his fabricated billions in annualized revenue or whatever new product he launches to destroy some unlucky public SaaS company already seeing growth slow.

To media members, I say this with full respect: Anthropic is abusing its customers—and doing so because it believes it can get away with it. This company doesn’t respect you—and in fact holds you in considerable contempt—which is why it doesn’t bother fixing its services quickly or explaining coherently why they’re broken.

That’s why Anthropic lies about Claude Mythos being “too powerful to release” (really a capacity issue) when it’s just another empty LLM promise. It believes you’ll buy anything it sells, and it has figured out exactly how to package it with just enough plausible “evidence” that a quick glance at the system card convinces you, and your editor, of whatever it claims.

They also know you’ll rush to report it—rather than waiting to see what actual experts say.

AI is a scam—and here’s how it operates. AI is pushed at us at maximum speed—in its least efficient but most accessible form—even though that form can never produce a sustainable business model. Media is pressured to immediately declare “this is the future,” getting everyone to agree it’s the big thing happening now—and use it as much as possible. Crucially, it’s delivered via subscription—so people never ask how much providing the service actually costs.

The narrative is already pre-packaged. Because few discussing LLMs truly grasp actual costs, they easily hand-wave “it’s like Uber”—a company that lost tons of money but didn’t die. That’s easier than saying “Wait—you’re saying OpenAI will lose $5 billion this year?”

Think about it: As a journalist, investor, executive, or typical LinkedIn poster, you’ve probably skimmed numbers like $5/million input tokens and $25/million output tokens—but you’ve never truly experienced how fast money vanishes—and that’s essential to understanding this product. Anthropic and OpenAI deliberately obscure this experience—building businesses projected to burn hundreds of billions by 2026 and trillions by 2030—all because most people evaluate generative AI through subscription experiences.
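
To make “how fast money vanishes” concrete, here is a rough Python sketch of per-request cost at the rates quoted above ($5 per million input tokens, $25 per million output tokens). The token counts for a single request are hypothetical, chosen only for illustration, and the $19 budget mirrors the $19/month Copilot plan mentioned earlier. The sketch ignores any discounts a provider might apply.

```python
# Per-token rates quoted in the text.
INPUT_USD_PER_MILLION = 5.0
OUTPUT_USD_PER_MILLION = 25.0


def request_cost(input_tokens, output_tokens):
    """Cost in USD of one request at the quoted per-million-token rates."""
    return (
        input_tokens * INPUT_USD_PER_MILLION
        + output_tokens * OUTPUT_USD_PER_MILLION
    ) / 1_000_000


# Hypothetical request: 200,000 tokens of context in, 20,000 tokens out.
cost = request_cost(200_000, 20_000)

# How many such requests a $19 monthly token budget would cover.
requests_per_budget = int(19.0 // cost)

print(f"cost per request: ${cost:.2f}")
print(f"requests covered by $19: {requests_per_budget}")
```

Under these assumed token counts, each request costs $1.50, so a $19 budget covers 12 of them.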

Large language models are casinos—you’ve been gambling with the house’s money while encouraging others to bet their own, hoping for one unit of work from a specific model.

This is intentional. They never wanted you thinking about cost—because once you truly consider cost, the whole thing looks slightly insane. I sincerely believe LLM-based subscription services will vanish entirely—at least for any product of code-generation scale. In the process, Amodei and Altman will wrap up their scam—or at least believe they have.

The problem is these people have signed too many agreements to walk away cleanly.

OpenAI’s CFO has now repeatedly stated she doesn’t believe OpenAI is IPO-ready—and has serious concerns about its growth and ability to fulfill obligations. Repeating the earlier quote:

According to knowledgeable sources, CFO Sarah Friar told other company leaders she worries the company may not be able to pay future compute contracts if revenue growth isn’t fast enough.

This is a flashing red alarm—in a rational market, it would send Oracle’s stock spiraling downward, because OpenAI’s climb to >$280 billion in annual revenue is critical to Oracle not running out of money. In a rational media environment, this would spark waves of concern across every group chat and Slack channel—questioning whether OpenAI can survive at all.

This is what happens before a company starts dying. OpenAI’s growth is slowing precisely when it needs to accelerate. It must scale its current business 10x by 2030 to meet obligations. OpenAI’s CFO—the person who knows best—is saying she worries OpenAI can’t even pay compute contracts if revenue doesn’t grow. This is a massive, flashing warning light! This is not a drill!

But what truly worries me is the Wall Street Journal comment that Friar believes OpenAI “is not yet ready to meet the stringent reporting standards required of public companies.”

What the hell does that mean? Sorry? This company allegedly raised $122 billion, is supposedly valued at $852 billion, and is projected to burn $852 billion by end-2030. Its books are unclear? Which “stringent reporting standards” can’t OpenAI meet?

If it weren’t for this company taking ~20% of all VC funding last year—and me having to endure endless pontificating from Altman, Brockman, and other OpenAI folks about what ordinary people should do—while they elegantly ship garbage software and spend other people’s money—I wouldn’t dig this deep.

Given the attention Anthropic and OpenAI consume, both companies should be flawless as products and businesses. Yet both sell through varying degrees of economic and performance deception—obscuring truth so their CEOs can accumulate money, power, and attention. This is an insult to good software and taste—the most expensive, unreliable applications ever built, whose flaws are forgiven, mediocrity celebrated, and infrastructure hailed as the inert god of capital.

Generative AI is an insult. It’s unreliable, economically indefensible, and produces nothing justifying its existence—and its perpetrators are boring, clumsy, greedy people disconnected from society and anyone who might oppose them. It steals everyone’s art, destroys the environment, hikes our electricity bills, constantly threatens economic ruin, and endlessly shouts “everything is getting worse because of AI”—all to promote software only defensible by those willing to ignore basic finance or common sense.

All of this is too expensive—and too damn boring. It’s boring to the point of nausea. It actively irritates. Every story about how frequently someone uses AI sounds like they’re trapped in an abusive relationship and/or joined a cult—echoing quiet despair, as if saying “you really need to do this with me, because it’s so great—and I seem to get no joy from this product, which is just proof of how efficient it is.” There’s nothing easy or joyful about what AI does. Large language models hold no whimsy or wonder—every interaction feels hollow.

Those desperately seeking clues that it’s becoming conscious—or “more powerful”—are just seeking self-validation—they want to be the first to discover something, because drawing others’ conclusions is how they make a living.

Becoming “first”—being on the “frontier”—is what people crave when they find nothing inside themselves—and it’s precisely the fuel scammers hunger for, because LLMs buzz incessantly, creating the illusion of imminent novelty—despite being mathematically constrained to repeat prior actions.

This is a deeply sad era. Those so enthusiastically collaborating to prop up this industry are merely delaying its inevitable collapse. What frightens me is that our markets—and parts of our economy—are propped up by widely held but wholly unproven assumptions: that LLMs will somehow become cheaper, AI startups will magically become profitable, and providing AI compute will remain permanently profitable—requiring supply to increase tenfold by 2030.

People degrade themselves to defend the AI industry—because that’s what the industry demands of its believers. Becoming an “AI expert” requires you to actively ignore the worst economics in any industry’s history—constantly excusing the product’s glaring, obvious flaws—and actively persuading others to do the same. OpenAI and Anthropic offer no clear explanation of how they’ll profit—because they know supporters will never ask. The only way to fully “believe in AI” is to actively blindfold yourself.

I get it. If you admit OpenAI and/or Anthropic will eventually collapse, this all seems a bit crazy. I sincerely urge you to seriously consider that one—or both—of these companies may run out of money.

I’m genuinely worried—and the media’s and broader society’s general lack of attention worries me even more.

Maybe you think I’m just crying wolf, and that “demand will absolutely be there.”

You’d better hope you’re right.

At least for Larry Ellison’s sake. Ellison has pledged 346 million Oracle shares—~$61.5 billion—“to secure certain personal debts, including various credit facilities,” meaning “many large, beautiful loans collateralized by Oracle stock.” IFR estimated in September (when Oracle’s stock was much higher) that this could net him up to $21.4 billion in debt—at a (they called it “conservative”) 20% loan-to-value ratio—assuming banks aren’t overly generous.

If OpenAI fails to raise $852 billion in revenue and funding by end-2030, it can’t pay for Stargate. This will kill Oracle’s stock value, triggering margin calls that force Ellison to sell shares, which in turn cause further margin calls. No rescue will save Larry’s assets.

Let me be clear: Ellison’s future depends on Sam Altman’s ability to raise funds and generate $852 billion in revenue within four years.

Good luck, Larry—you’ll need it.
