AI-Powered DAOs Are on the Rise: 5 Challenges Worth Watching

TechFlow Selected

With the rise of artificial intelligence, DAOs will face new challenges and opportunities.

Author: William M. Peaster, Bankless

Translation: Baishui, Jinse Finance

As early as 2014, Ethereum co-founder Vitalik Buterin was already thinking about autonomous agents and DAOs, at a time when such ideas were still a distant dream for most of the world.

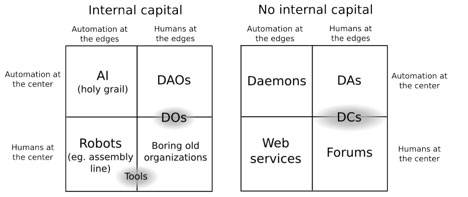

In his early vision, as described in his article "DAOs, DACs, DAs and More: An Incomplete Terminology Guide," DAOs were decentralized entities where "automation is central, humans are on the periphery"—organizations relying on code rather than human hierarchies to maintain efficiency and transparency.

Ten years later, Jesse Walden of Variant has published "DAO 2.0," a reflection on how DAOs have actually evolved since Vitalik's early writings.

In short, Walden notes that the initial wave of DAOs often resembled cooperatives—human-centered digital organizations that did not emphasize automation.

Now, Walden argues, recent advances in artificial intelligence, particularly large language models (LLMs) and generative models, put DAOs in a better position to realize the decentralized autonomy Vitalik envisioned a decade ago.

However, as DAO experiments increasingly adopt AI agents, new implications and challenges arise. Below, let's examine five key areas DAOs must navigate as they build AI into their frameworks.

Shifting Governance

In Vitalik’s original framework, DAOs aimed to reduce reliance on hierarchical human decision-making by encoding governance rules on-chain.

Initially, humans remained on the “periphery,” yet were still crucial for complex judgment calls. In the DAO 2.0 world described by Walden, humans still linger at the edge—providing capital and strategic direction—but the center of power is gradually shifting away from humans.

This dynamic will redefine governance for many DAOs. We'll still see coalitions of humans negotiating and voting on outcomes, but operational decisions will increasingly be guided by patterns learned by AI models. How to strike this balance remains an open question and an open design space.

Minimizing Model Misalignment

The early vision of DAOs sought to counteract human bias, corruption, and inefficiency through transparent, immutable code.

Now the critical challenge shifts from guarding against unreliable human decisions to ensuring that AI agents stay "aligned" with the DAO's objectives. The primary vulnerability is no longer human collusion but model misalignment: the risk that AI-driven DAOs optimize for metrics or behaviors that deviate from the outcomes humans intended.

In the DAO 2.0 paradigm, the alignment problem, which began as a philosophical concern in AI safety circles, becomes a practical question of economics and governance.

For today's DAOs experimenting with basic AI tools, this may not be a top priority. But as AI models grow more sophisticated and become more deeply embedded in decentralized governance structures, alignment is likely to become a major focus of scrutiny and refinement.

New Attack Surfaces

Consider the recent Freysa competition, in which human player p0pular.eth tricked the AI agent Freysa into misinterpreting its `approveTransfer` function and thereby claimed a prize pool worth roughly $47,000 in ETH.

Freysa had built-in safeguards, including explicit instructions never to release the prize. Even so, human ingenuity outmaneuvered the model, exploiting the interplay between its prompts and its code logic until the AI released the funds.

This early example shows that as DAOs integrate more complex AI models, they also inherit new attack surfaces. Just as Vitalik worried about DOs and DAOs being compromised by human collusion, DAO 2.0 must now consider adversarial inputs, such as poisoned training data, and prompt-engineering attacks.

Manipulating an LLM's reasoning, feeding it misleading on-chain data, or subtly influencing its parameters could become new forms of "governance takeover," in which the battleground shifts from human majority-vote attacks to subtler, more sophisticated AI exploits.

New Centralization Risks

The evolution of DAO 2.0 transfers significant power to those who create, train, and control the underlying AI models of specific DAOs—a dynamic that could lead to new forms of centralized bottlenecks.

Of course, training and maintaining advanced AI models requires specialized expertise and infrastructure, so in some future organizations we may see a community that nominally sets direction while skilled experts effectively hold control.

This is understandable. Looking ahead, though, it will be interesting to see how DAOs running AI experiments handle issues such as model updates, parameter tuning, and hardware configuration.

Strategic vs. Operational Roles and Community Support

Walden's distinction between "strategic" and "operational" roles suggests a long-term balance: AI handles day-to-day DAO tasks, while humans provide strategic direction.

Yet, as AI models grow more advanced, they may gradually encroach upon the strategic layer of DAOs. Over time, the role of humans on the “periphery” might shrink even further.

This raises a question: what happens with the next wave of AI-driven DAOs, where in many cases humans may simply provide funding and watch from the sidelines?

In such a paradigm, will humans largely become interchangeable investors with minimal influence, shifting from co-owning a brand to something closer to owning shares in an AI-managed autonomous economic machine?

I believe we'll see a growing trend in the DAO space toward organizational models where humans act as passive shareholders rather than active managers. However, with fewer meaningful decisions left to humans, and with capital increasingly easy to redeploy elsewhere on-chain, sustaining community support over time may become an ongoing challenge.

How DAOs Can Stay Proactive

The good news is that all the above challenges can be proactively addressed. For example:

- On governance: DAOs can experiment with mechanisms that reserve certain high-impact decisions for human voters or for rotating committees of human experts.

- On misalignment: by treating alignment checks as a recurring operational cost, like security audits, DAOs can make keeping AI agents loyal to the DAO's shared goals an ongoing responsibility rather than a one-time fix.

- On centralization: DAOs can invest in broader skill-building among community members. Over time, this would reduce the risk of governance being controlled by a few "AI wizards" and promote a more decentralized approach to technical stewardship.

- On support: as humans become more passive stakeholders, DAOs can double down on storytelling, shared mission, and community rituals that go beyond the immediate logic of capital allocation and sustain long-term engagement.

Whatever comes next, the design space here is clearly vast.

Consider Deep Funding, which Vitalik recently launched. It is not a DAO initiative, but it combines AI and human judges to pioneer a new funding mechanism for Ethereum's open-source development.

This is just one new experiment, but it highlights a broader trend: the intersection of AI and decentralized collaboration is accelerating. As new mechanisms emerge and mature, we can expect DAOs to adopt and build on these AI-driven ideas. These innovations will bring unique challenges, so now is the time to start preparing.