2024 Crypto X AI Industry Landscape: How Cryptocurrency Penetrates Every Aspect of Generative AI


AI and cryptocurrency are natural partners.

Author: MagnetAI

Compiled by: TechFlow

Key Takeaways

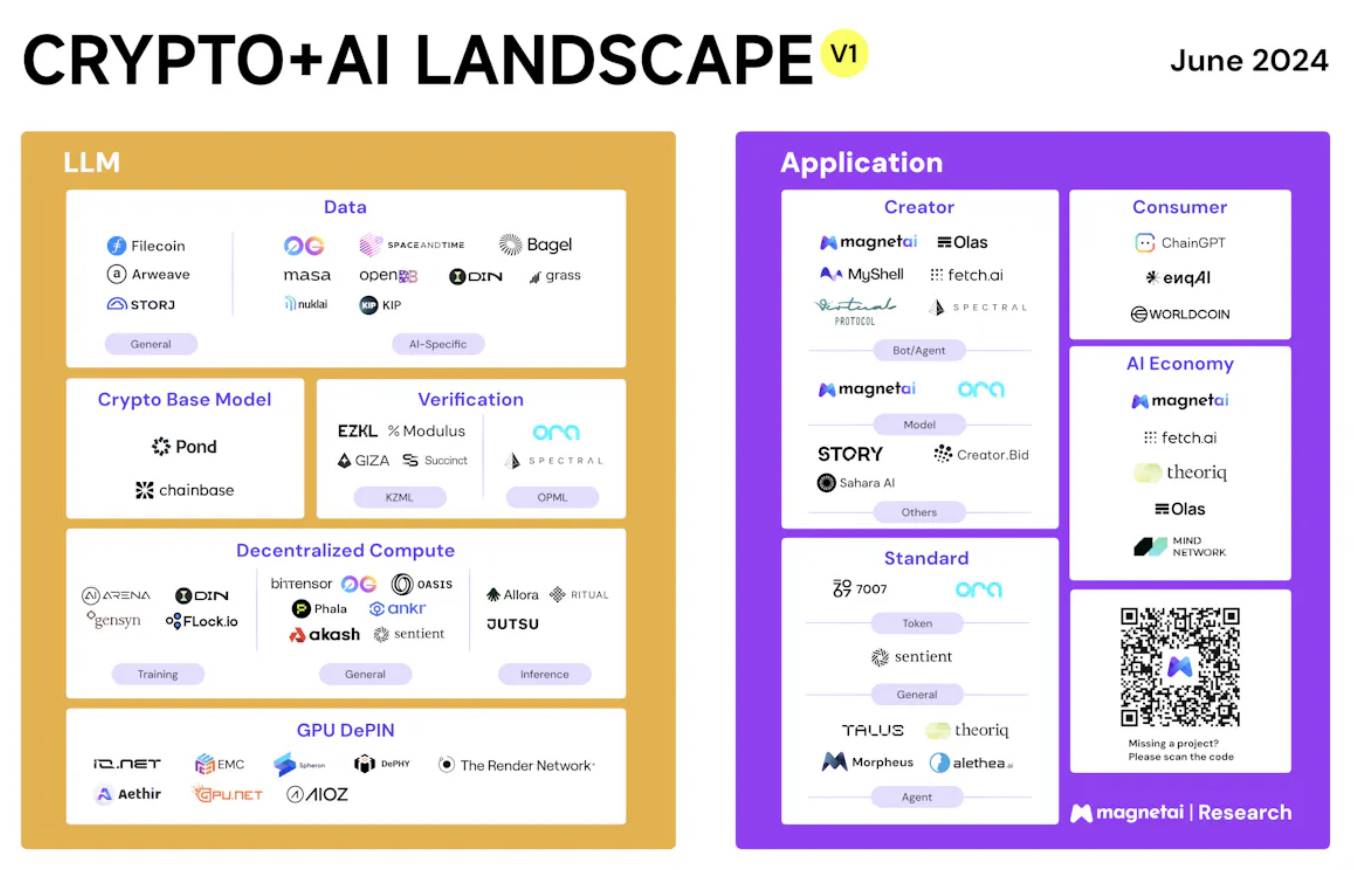

We conducted an in-depth analysis of 67 Crypto+AI projects and classified them from the perspective of generative AI (GenAI). Our classification covers:

- GPU DePIN
- Decentralized Computing (Training + Inference)
- Verification (ZKML + OPML)
- Crypto Large Language Models (LLMs)
- Data (General + AI-Specific)
- AI Creator Applications
- AI Consumer Applications
- AI Standards (Tokens + Agents)
- AI Economy

Why This Article?

The narrative around Crypto+AI has attracted significant attention. Numerous reports on Crypto+AI are emerging, but they either cover only part of the AI story or explain AI solely from a cryptocurrency perspective. This article explores the topic from the AI angle, examining how crypto can support AI and how AI can benefit crypto, to better understand the current landscape of the Crypto+AI industry.

Part 1: Decoding the Generative AI Landscape

Let’s begin exploring the generative AI (GenAI) landscape through the AI products we use daily. These products typically consist of two main components: a large language model (LLM) and a user interface (UI). A large model involves two key processes: model creation and model utilization, commonly known as training and inference. User interfaces come in various forms, including conversational (e.g., ChatGPT), visual (e.g., LumaAI), and many others that integrate inference APIs into existing product interfaces.
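The split between training and inference can be illustrated with a deliberately tiny sketch. The one-variable linear model, the data, and the function names `train` and `infer` below are hypothetical stand-ins chosen for illustration; a real LLM differs in scale, not in this basic division of labor.

```python
# Toy illustration: "training" fits parameters, "inference" only applies them.
# The model (y = w * x + b) and the data are hypothetical, not from the article.

def train(samples, epochs=2000, lr=0.05):
    """Model creation: fit w and b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(samples)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in samples) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in samples) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def infer(params, x):
    """Model utilization: one cheap forward pass with frozen parameters."""
    w, b = params
    return w * x + b


samples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]  # generated from y = 2x + 1
params = train(samples)          # expensive, done once
prediction = infer(params, 4.0)  # cheap, done per request
```

Training loops over the whole dataset many times, while inference is a single evaluation per request, which is one reason the two workloads tend to be served by different infrastructure.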

Computing

Delving deeper, computing is fundamental to both training and inference, heavily relying on underlying GPU computation. While physical GPU connections may differ between training and inference, GPUs serve as a common infrastructure component for AI products. Above this layer lies the orchestration of GPU clusters, known as the cloud. These clouds can be divided into traditional multi-purpose clouds and vertical clouds, which are more focused on and optimized for AI computing scenarios.

Storage

Regarding storage, AI data storage can be divided into traditional solutions like AWS S3 and Azure Blob Storage, and specialized storage solutions optimized specifically for AI datasets. These specialized solutions, such as Google Cloud's Filestore, aim to improve data access speed in specific scenarios.

Training

Continuing with AI infrastructure, it is crucial to distinguish between training and inference, as they differ significantly. Beyond general-purpose computing, each involves a good deal of AI-specific logic.

For training, infrastructure can roughly be categorized into:

- Platforms: Designed specifically for training, helping AI developers efficiently train large language models and offering software acceleration solutions, such as MosaicML.
- Foundation Model Providers: This category includes platforms like Hugging Face, which offer base models that users can further train or fine-tune.
- Frameworks: Finally, there are various foundational training frameworks built from scratch, such as PyTorch and TensorFlow.

Inference

For inference, the landscape can broadly be divided into:

- Optimizers: These specialize in optimizations for specific use cases, such as algorithmic enhancements that support parallel processing or media generation. An example is fal.ai, which optimizes the text-to-image inference process, achieving diffusion speeds 50% faster than general methods.
- Deployment Platforms: These provide general-purpose cloud services for model inference, such as Amazon SageMaker, and facilitate the deployment and scaling of AI models across different environments.

Applications

Although AI applications are countless, they can be broadly categorized into two groups based on user demographics: creators and consumers.

- AI Consumers: This group primarily uses AI products and is willing to pay for the value these products deliver. A typical example is ChatGPT.
- AI Creators: Conversely, AI creator applications actively invite AI creators onto their platforms to build agents, share knowledge, and then share profits with them. The GPT Store is one of the most prominent examples.

These two categories cover nearly all AI applications. While more detailed classifications exist, this article will focus on these broader categories.

Part 2: How Cryptocurrency Can Help AI

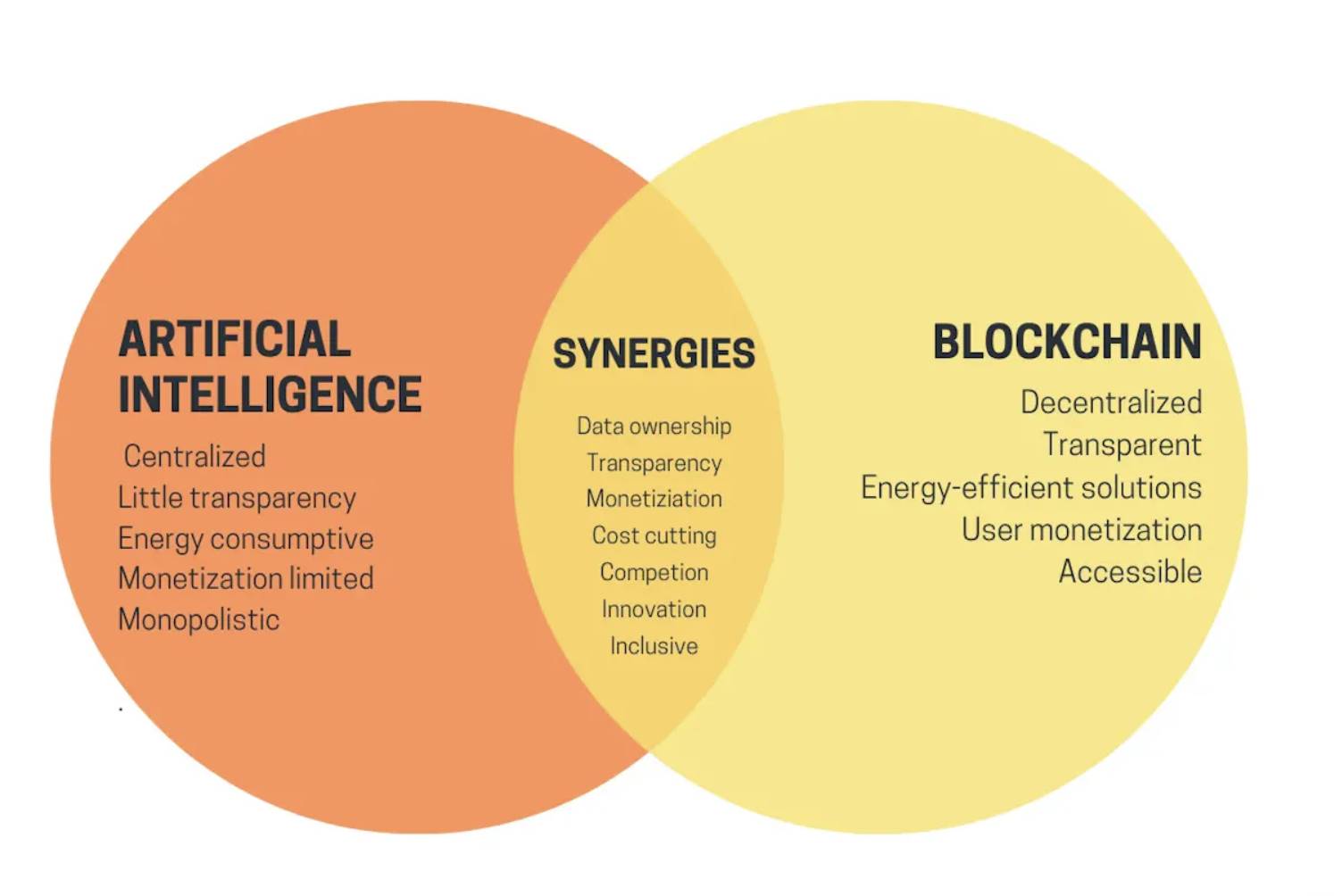

Before answering this question, let’s summarize the main advantages cryptocurrency can bring to AI: monetization, inclusivity, transparency, data ownership, cost reduction, etc.

From vitalik.eth blog: High-level summary of the crypto+AI intersection

These key synergies benefit the current landscape primarily in the following ways:

- Monetization: Crypto-native mechanisms such as tokenization and token incentives can enable disruptive innovation within AI creator applications and support an open and fair AI economy.
- Inclusivity: Cryptocurrency allows permissionless participation, breaking down various restrictions imposed today by closed, centralized AI companies. This enables AI to become truly open and free.
- Transparency: Cryptocurrency can leverage ZKML/OPML technologies to make LLM training and inference verifiable on-chain, supporting openness and permissionless access for AI.
- Data Ownership: Recording transactions on-chain establishes data ownership at the account (user) level, so users truly own their AI data. This is particularly beneficial at the application layer, helping users effectively safeguard their AI data rights.
- Cost Reduction: Token incentives can unlock the value of future compute capacity and lower current GPU costs, which reduces AI costs at the compute layer.

Part 3: Exploring the Crypto+AI Landscape

Applying the advantages of cryptocurrency across different categories within the AI landscape creates a new AI perspective from a crypto standpoint.

Large Language Model Layer

- GPU DePIN

We outline the AI+Crypto blueprint by following the AI landscape described above, starting with large language models and their foundational GPU layer. Here, a long-standing narrative in crypto is cost reduction.

Through blockchain incentives, we can significantly reduce costs by rewarding GPU providers. This narrative is currently known as GPU DePIN. Because GPUs are used not only in AI but also in gaming, AR, and other scenarios, the GPU DePIN track typically encompasses these areas as well.

Projects focusing on the AI track include Aethir and Aioz Network, while those dedicated to visual rendering include io.net and Render Network, among others.

- Decentralized Computing

Decentralized computing is a narrative that has existed since the birth of blockchain and has evolved significantly over time. However, because computing tasks are more complex than decentralized storage, decentralized computing usually has to be restricted to specific scenarios.

AI, as the latest computing scenario, has naturally given rise to a series of decentralized computing projects. Compared to GPU DePIN, these decentralized computing platforms not only offer cost reduction but also cater to more specific computing scenarios: training and inference. They orchestrate compute across wide-area networks, significantly enhancing scalability.

Achieving scale and cost efficiency as per gensyn.ai

For example, platforms focusing on training include AI Arena, Gensyn, DIN, and Flock.io; platforms focusing on inference include Allora, Ritual, and Jutsu.ai; platforms handling both aspects include Bittensor, 0G, Sentient, Akash, Phala, Ankr, and Oasis.

- Verification

Verification is a unique category in Crypto+AI, primarily because it ensures the entire AI computation process—whether training or inference—can be verified on-chain.

This is crucial for maintaining complete decentralization and transparency. Additionally, technologies like ZKML protect data privacy and security, allowing users to own 100% of their personal data.

Depending on the algorithm and verification process, this category can be divided into ZKML and OPML. ZKML uses zero-knowledge (ZK) techniques to convert AI training or inference into ZK circuits, making the process verifiable on-chain, as demonstrated by platforms like EZKL, Modulus Labs, Succinct, and Giza. OPML (optimistic machine learning), by contrast, runs the computation off-chain and has oracles submit the results on-chain, where they are accepted unless successfully challenged, as shown by Ora and Spectral.
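To make the optimistic approach more concrete, here is a minimal sketch under stated assumptions. It reads OPML as optimistic machine learning: a result is posted, and it becomes final unless someone re-runs the computation and disputes it within a challenge window. The `Claim` class, the `CHALLENGE_WINDOW` value, and the stand-in `model` function are all hypothetical and do not describe the actual Ora or Spectral protocols.

```python
# Toy sketch of optimistic verification. All names are hypothetical;
# this is not the Ora or Spectral protocol.
from dataclasses import dataclass


def model(x: int) -> int:
    """Stand-in for a deterministic ML inference that anyone can re-run."""
    return 3 * x + 1


@dataclass
class Claim:
    input_value: int
    claimed_output: int
    submitted_at: int          # block height at submission
    challenged: bool = False   # set when a dispute succeeds


CHALLENGE_WINDOW = 10  # assumed number of blocks during which disputes are allowed


def challenge(claim: Claim, current_block: int) -> bool:
    """Re-execute the model; a mismatch inside the window invalidates the claim."""
    in_window = current_block - claim.submitted_at < CHALLENGE_WINDOW
    if in_window and model(claim.input_value) != claim.claimed_output:
        claim.challenged = True
    return claim.challenged


def is_final(claim: Claim, current_block: int) -> bool:
    """A claim is accepted once the window has passed with no successful challenge."""
    window_over = current_block - claim.submitted_at >= CHALLENGE_WINDOW
    return window_over and not claim.challenged


honest = Claim(input_value=2, claimed_output=7, submitted_at=100)
dishonest = Claim(input_value=2, claimed_output=8, submitted_at=100)

challenge(honest, current_block=105)     # re-execution matches, nothing happens
challenge(dishonest, current_block=105)  # re-execution differs, claim is rejected
```

The trade-off against ZKML is visible even in this toy: nothing has to be proven up front, so posting a result is cheap, but finality is delayed by the length of the challenge window.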

- Crypto Foundation Models

Unlike general-purpose large language models such as ChatGPT or Claude, crypto foundation models are further trained on vast amounts of crypto data, giving these base models a specialized knowledge base in cryptocurrency.

These foundation models can provide powerful AI capabilities for crypto-native applications (such as DeFi, NFTs, and GameFi). Currently, examples of such foundation models include Pond and Chainbase.

- Data

Data is a critical component in the AI domain. In AI training, datasets play a vital role, while during inference, massive user prompts and knowledge bases require substantial storage.

Decentralized data storage not only significantly reduces storage costs but, more importantly, ensures data traceability and ownership.

Traditional decentralized storage solutions such as Filecoin, Arweave, and Storj can store large volumes of AI data at very low costs.

Meanwhile, newer AI-specific data storage solutions are optimized for the unique characteristics of AI data. For instance, Space and Time and OpenDB optimize data preprocessing and querying, while Masa, Grass, Nuklai, and KIP Protocol focus on AI data monetization. Bagel Network centers on user data privacy.

These solutions leverage the unique advantages of cryptocurrency to innovate in AI data management—an area previously receiving less attention.

Application Layer

1. Creators

At the Crypto+AI application layer, creator applications are particularly noteworthy. Given cryptocurrency's inherent monetization capabilities, incentivizing AI creators is a natural fit.

For AI creators, the focus splits between low/no-code users and developers. Low/no-code users, such as bot creators, use these platforms to create bots and monetize them via tokens/NFTs. They can quickly raise funds through ICOs or NFT mints, then reward long-term token holders through shared ownership (e.g., revenue sharing). This fully opens up their AI products, enabling community co-ownership and completing the AI economic lifecycle.
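The revenue-sharing idea mentioned above comes down to simple pro rata arithmetic. The sketch below is an assumption-laden illustration, not any platform's actual contract logic: the function name `share_revenue`, the holder names, and the rule of giving rounding leftovers to the largest holder are all hypothetical choices.

```python
# Hypothetical pro rata revenue split among token holders.
# Amounts are integers in the smallest currency unit to avoid float rounding.

def share_revenue(balances: dict, revenue: int) -> dict:
    """Split `revenue` across holders in proportion to their token balances."""
    total = sum(balances.values())
    if total <= 0:
        raise ValueError("no tokens outstanding")
    # Integer division rounds each payout down.
    payouts = {holder: revenue * bal // total for holder, bal in balances.items()}
    # Assumed tie-break: leftover units go to the largest holder so that
    # the payouts always sum exactly to the revenue.
    remainder = revenue - sum(payouts.values())
    if remainder:
        largest = max(balances, key=balances.get)
        payouts[largest] += remainder
    return payouts


balances = {"creator": 600, "alice": 300, "bob": 100}
payouts = share_revenue(balances, 1_000)
```

With these example balances, a holder of 30% of the tokens receives 30% of each revenue distribution, which is the sense in which token holders share ownership of the bot.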

Crypto AI creator platforms also use crypto’s native tokenization to address two problems for AI creators: early-to-mid-stage funding and long-term profitability. They can offer their services at a fraction of the typical Web2 take rate, reflecting the near-zero operating cost that crypto decentralization makes possible.

In this space, MagnetAI, Olas, Myshell, Fetch.ai, Virtual Protocol, and Spectral provide agent creation platforms for low/no-code users. For AI model developers, MagnetAI and Ora offer model developer platforms. Other creator categories are served as well: Story Protocol and CreatorBid are tailored to AI+social creators, while SaharaAI focuses on knowledge base monetization.

2. Consumers

Consumer applications are AI products that directly serve crypto users. Currently, there are few projects in this lane, but the existing ones, such as Worldcoin and ChainGPT, are distinctive and hard to replace.

3. Standards

Standards are a track unique to crypto. Projects here develop independent blockchains, protocols, or extensions to existing standards, either to create blockchains dedicated to AI dApps or to enable existing infrastructure (such as Ethereum) to support AI applications.

These standards allow AI dApps to embody crypto advantages such as transparency and decentralization, providing fundamental support for creator and consumer products.

For example, Ora extends ERC-20 to provide revenue sharing, and 7007.ai extends ERC-721 to tokenize model inference assets. Additionally, platforms like Talus, Theoriq, Alethea, and Morpheus are creating on-chain virtual machines (VMs) to provide execution environments for AI agents, while Sentient offers comprehensive standards for AI dApps.

4. AI Economy

The AI economy represents a major innovation in the Crypto+AI space, emphasizing the use of crypto’s tokenization, monetization, and incentive mechanisms to achieve democratization of AI.

AI economic lifecycle developed by MagnetAI

The AI economy highlights shared AI economies and community co-ownership, innovations that can drive the further growth and development of AI.

Among these, Theoriq and Fetch.ai focus on agent monetization; Olas emphasizes tokenization; Mind Network offers restaking benefits; and MagnetAI integrates tokenization, monetization, and incentives into a unified platform.

Conclusion

AI and cryptocurrency are natural partners. Cryptocurrency helps make AI more open and transparent, providing indispensable support for its continued growth.

Conversely, AI expands the boundaries of cryptocurrency, attracting more users and attention. As a universal narrative for humanity, AI also introduces an unprecedented mass adoption story to the crypto world.

Join TechFlow official community to stay tuned

Telegram:https://t.me/TechFlowDaily

X (Twitter):https://x.com/TechFlowPost

X (Twitter) EN:https://x.com/BlockFlow_News