Born at the Edge: How Decentralized Computing Networks Empower Crypto and AI?

TechFlow Selected

From the most practical perspective, a decentralized computing network needs to balance current demand exploration with future market potential.

Authors: Jane Doe, Chen Li

1 The Intersection of AI and Crypto

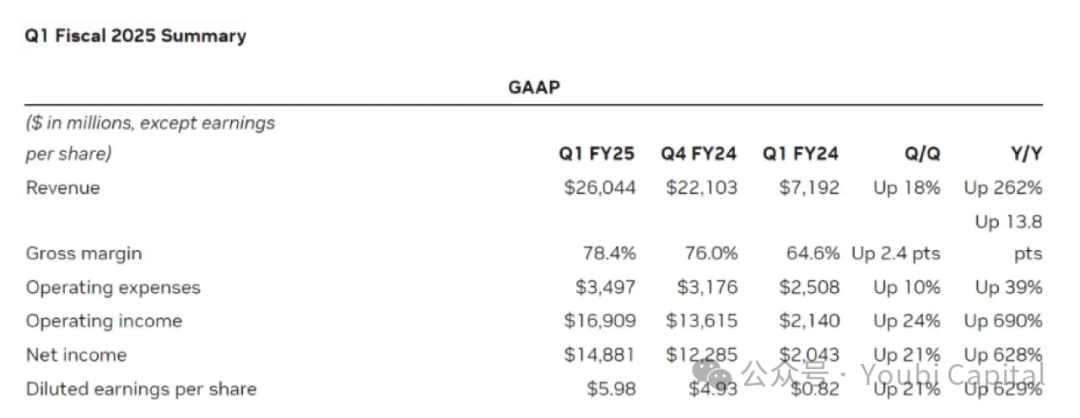

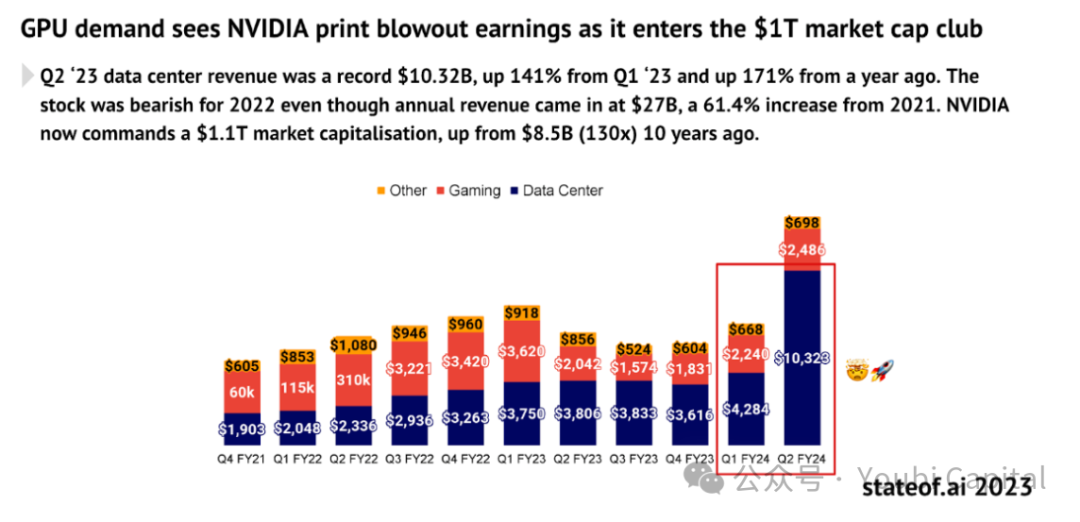

On May 23, chip giant NVIDIA released its financial report for the first quarter of fiscal year 2025. Revenue for the quarter reached $26 billion, and data center revenue alone surged 427% year-over-year to $22.6 billion. NVIDIA's results, strong enough to lift the broader U.S. stock market on their own, reflect the explosive demand for computing power as global tech companies race to dominate the AI landscape. The more ambitious a top-tier tech firm's AI plans, the faster its demand for compute grows. According to TrendForce, the four major U.S. cloud service providers (Microsoft, Google, AWS, and Meta) are expected to account for 20.2%, 16.6%, 16%, and 10.8% of global demand for high-end AI servers in 2024, respectively, for a combined share of over 60%.

“Chip shortages” have become a recurring buzzword in recent years. On one hand, large language models (LLMs) require massive computational power for both training and inference, and as models evolve, both cost and demand grow exponentially. On the other hand, large corporations such as Meta purchase vast quantities of chips, concentrating global compute resources among tech giants and making it increasingly difficult for smaller enterprises to obtain what they need. Small businesses therefore face not only shortages caused by surging demand but also a structural imbalance in supply: a significant amount of GPU capacity sits idle. For instance, many data centers operate at utilization rates as low as 12–18%, and declining profitability in crypto mining has left large amounts of mining hardware underutilized. Not all of this idle compute is suitable for specialized workloads such as AI training, but consumer-grade hardware can still play a major role in areas like AI inference, cloud gaming rendering, and cloud phone services. The opportunity to integrate and use these fragmented compute resources is substantial.

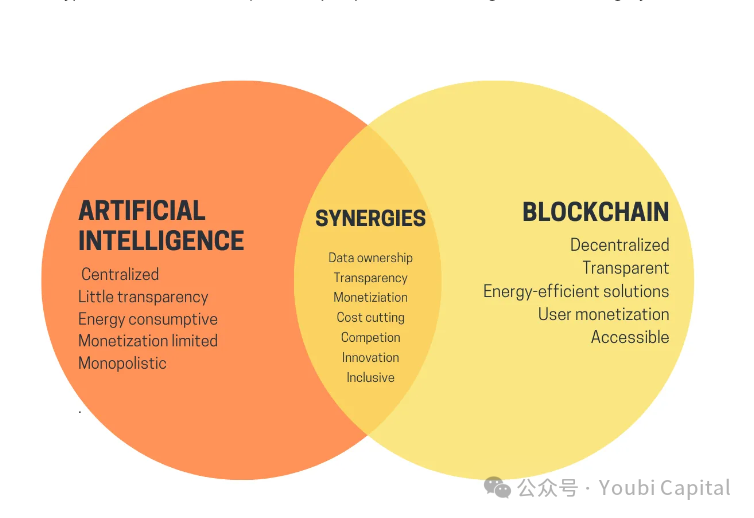

Turning from AI to crypto: after three years of stagnation, the crypto market has finally entered a new bull run, with Bitcoin hitting record highs and memecoins proliferating. Although AI and crypto have both been buzzwords for years, artificial intelligence and blockchain, two transformative technologies, have remained like parallel lines, never finding a true "intersection." Earlier this year, Vitalik Buterin published an article titled “The promise and challenges of crypto + AI applications,” discussing potential future synergies between AI and crypto. In it, he envisioned scenarios such as using blockchain and MPC-based cryptographic techniques to decentralize AI training and inference, thereby opening up the black box of machine learning and making AI models more trustless. These visions remain distant goals. However, one use case Vitalik mentioned, using crypto's economic incentives to empower AI, is both critical and achievable in the near term. Decentralized compute networks are one of the most viable current applications of AI + crypto.

2 Decentralized Compute Networks

Numerous projects are currently building decentralized compute networks. Their underlying logic is similar and can be summarized as follows: tokens incentivize holders of idle compute resources to join the network and provide computing services, and these fragmented resources are then aggregated into a large-scale, decentralized compute network. This raises the utilization of idle compute while meeting customer demand at lower cost, a win-win for both buyers and sellers.

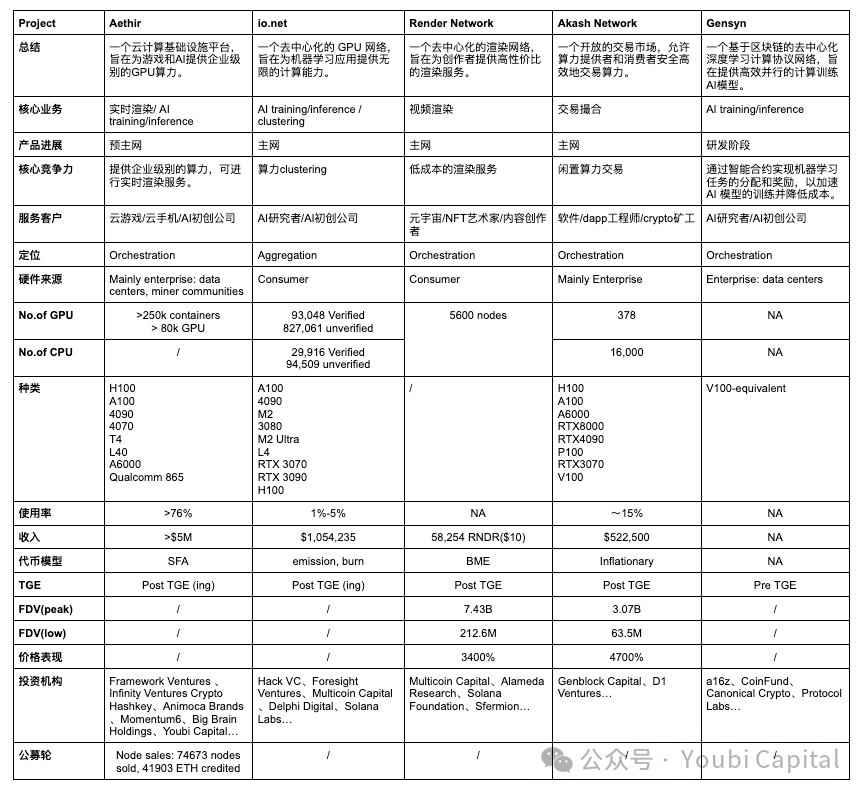

To help readers quickly grasp the overall landscape of this sector, this article will deconstruct specific projects and the broader industry from micro to macro perspectives, aiming to equip readers with analytical frameworks to understand each project's core competitive advantages and the overall development of the decentralized compute sector. We will introduce and analyze five projects: Aethir, io.net, Render Network, Akash Network, and Gensyn, followed by a summary and evaluation of their status and sector trends.

From an analytical framework perspective, when focusing on a specific decentralized compute network, we can break it down into four core components:

- Hardware Network: Aggregates distributed compute resources through nodes located around the world, enabling resource sharing and load balancing. This is the foundational layer of a decentralized compute network.

- Bilateral Market: Matches compute providers with users via fair pricing and discovery mechanisms, providing a secure trading platform that ensures transparency, fairness, and trustworthiness in transactions.

- Consensus Mechanism: Ensures nodes in the network correctly perform assigned tasks. It monitors two levels: 1) whether nodes are online and ready to accept tasks; 2) proof of work, i.e., whether the node successfully completed the task without diverting compute to other purposes.

- Token Incentives: Token models encourage participation from both providers and users, capturing network effects and enabling community-wide value sharing.
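The two monitoring levels described for the consensus mechanism can be illustrated with a minimal sketch. It is not the method of any project discussed here: the heartbeat timeout, the `Node` type, and verification by re-running the task and comparing hashes are all assumptions chosen for illustration, and real networks may use very different proofs.

```python
from dataclasses import dataclass
import hashlib
from typing import Callable


@dataclass
class Node:
    node_id: str
    last_heartbeat: float  # Unix timestamp of the most recent heartbeat (hypothetical field)


def is_online(node: Node, now: float, timeout_s: float = 30.0) -> bool:
    """Level 1: treat a node as online if it sent a heartbeat within timeout_s."""
    return now - node.last_heartbeat <= timeout_s


def result_digest(task_input: bytes, output: bytes) -> str:
    """Hash that binds an output to the task input it claims to answer."""
    return hashlib.sha256(task_input + b"|" + output).hexdigest()


def task_verified(task_input: bytes, claimed_digest: str,
                  recompute: Callable[[bytes], bytes]) -> bool:
    """Level 2: a verifier re-runs the task and compares digests (assumed scheme)."""
    return result_digest(task_input, recompute(task_input)) == claimed_digest


def toy_task(data: bytes) -> bytes:
    """Stand-in for a real compute job: reverse the input bytes."""
    return data[::-1]


node = Node("node-1", last_heartbeat=990.0)
honest_claim = result_digest(b"frame-42", toy_task(b"frame-42"))
forged_claim = result_digest(b"frame-42", b"garbage")

print(is_online(node, now=1000.0))                        # heartbeat 10 s ago
print(task_verified(b"frame-42", honest_claim, toy_task))
print(task_verified(b"frame-42", forged_claim, toy_task))
```

Full re-execution is the simplest check to reason about, but it doubles the compute spent on every verified task, which is why sampling or cryptographic proofs are attractive alternatives in practice.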

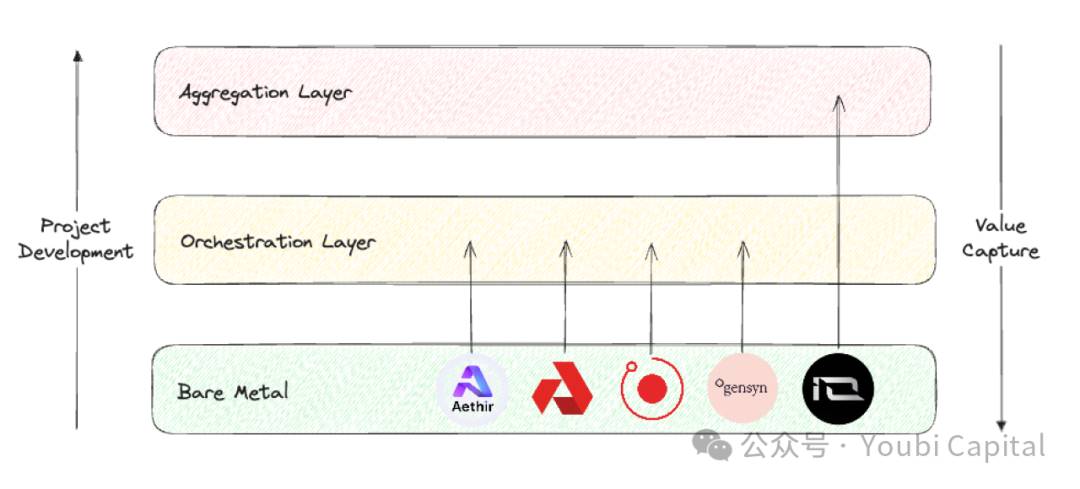

When taking a bird’s-eye view of the entire decentralized compute sector, Blockworks Research offers a useful analytical framework, categorizing projects into three distinct layers:

- Bare metal layer: Forms the base of the decentralized compute stack, primarily responsible for aggregating raw compute resources and making them accessible via APIs.

- Orchestration layer: The middle layer of the decentralized compute stack, responsible for coordination and abstraction: scheduling, scaling, operations, load balancing, and fault tolerance. Its main function is to abstract away the complexity of managing underlying hardware, offering end users a higher-level interface tailored to specific customer segments.

- Aggregation layer: The topmost layer, focused on integration. It provides a unified interface where users can execute multiple types of compute tasks (e.g., AI training, rendering, zkML), acting as an orchestrator and distributor across multiple decentralized compute services.

Image Source: Youbi Capital

Using these two analytical frameworks, we will conduct a horizontal comparison of the five selected projects, evaluating them across four dimensions: core business, market positioning, hardware infrastructure, and financial performance.

2.1 Core Business

At a fundamental level, decentralized compute networks are highly homogeneous—using token incentives to mobilize owners of idle compute to provide services. Around this shared logic, we can distinguish differences in core business across three aspects:

- Sources of Idle Compute:

  - There are two primary sources of idle compute in the market: 1) enterprise-held compute (e.g., data centers, miners); 2) individual-held compute. Enterprise compute typically involves professional-grade hardware, whereas individuals usually own consumer-grade GPUs.

  - Aethir, Akash Network, and Gensyn primarily source compute from enterprises. Benefits include: 1) enterprises and data centers generally possess higher-quality hardware and professional maintenance teams, resulting in better performance and reliability; 2) enterprise resources are often more standardized, enabling efficient centralized management and monitoring. However, this approach requires strong commercial relationships with enterprises and may limit scalability and decentralization.

  - Render Network and io.net mainly incentivize individuals to contribute their idle compute. Advantages: 1) individual-owned compute has lower explicit costs, enabling more economical offerings; 2) greater scalability and decentralization enhance system resilience. Disadvantages: fragmented and heterogeneous resources complicate management and orchestration; achieving initial network effects is harder; and security risks such as data leakage or misuse of compute are higher.

- Compute Consumers:

  - In terms of consumers, Aethir, io.net, and Gensyn primarily target enterprises. B2B clients need high-performance computing for AI and real-time game rendering, workloads that demand high-end GPUs or professional hardware. Enterprises also require high reliability and stability, which calls for robust SLAs and technical support. Moreover, migration costs are high: if a decentralized network lacks a mature SDK for easy deployment (e.g., Akash requires custom remote port development), customers are unlikely to adopt it unless there is a significant price advantage.

  - Render Network and Akash Network primarily serve individual users. Serving retail customers requires intuitive interfaces and tools to ensure a good user experience. Price sensitivity is high, so competitive pricing is essential.

- Hardware Type:

  - Common compute hardware includes CPU, FPGA, GPU, ASIC, and SoC, each differing significantly in design goals, performance, and application domains. In short, CPUs excel at general-purpose computing, FPGAs offer high parallelism and programmability, GPUs dominate parallel computing, ASICs achieve peak efficiency for specific tasks, and SoCs integrate multiple functions for compact applications. Most decentralized compute projects focus on aggregating GPUs because of their strengths in AI training, parallel computing, and media rendering.

  - Although most projects aggregate GPUs, different applications demand different hardware specs, so each network optimizes for different hardware. Key parameters include parallelism vs. serial dependencies, memory bandwidth, and latency. For example, rendering workloads favor consumer GPUs (e.g., RTX 3090/4090), which, unlike data center GPUs, feature enhanced RT cores optimized for ray tracing. Conversely, AI training and inference require professional GPUs such as H100s or A100s. Thus, Render Network aggregates consumer GPUs, while io.net relies more on H100s and A100s to meet AI startups' needs.
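The workload-to-hardware pairing described above can be expressed as a small lookup. This is only a sketch: the preference table is taken from the examples in the text, while the workload labels, the `pick_gpu` function, and the inventory are hypothetical and do not reflect any project's actual scheduler.

```python
from typing import Dict, List, Optional

# Preference order per workload, based on the examples in the text (labels are hypothetical).
PREFERRED_GPUS: Dict[str, List[str]] = {
    "rendering": ["RTX 4090", "RTX 3090"],
    "ai_training": ["H100", "A100"],
    "ai_inference": ["H100", "A100"],
}


def pick_gpu(workload: str, available: Dict[str, int]) -> Optional[str]:
    """Return the first preferred GPU model that has free units, or None."""
    for model in PREFERRED_GPUS.get(workload, []):
        if available.get(model, 0) > 0:
            return model
    return None


# Hypothetical inventory: free units per GPU model.
inventory = {"RTX 4090": 12, "A100": 3, "H100": 0}

print(pick_gpu("rendering", inventory))    # consumer GPU is chosen
print(pick_gpu("ai_training", inventory))  # falls back to A100 because no H100 is free
print(pick_gpu("zkml", inventory))         # unknown workload, no match
```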

2.2 Market Positioning

Regarding project positioning, the bare metal, orchestration, and aggregation layers differ in core problems addressed, optimization priorities, and value capture potential.

- The bare metal layer focuses on collecting physical resources, the orchestration layer on scheduling and optimizing compute according to client needs, and the aggregation layer on integrating diverse resources into a unified, general-purpose interface. From a value chain perspective, projects should start at the bare metal layer and strive to move upward.

- Value capture increases progressively from bare metal → orchestration → aggregation layer. The aggregation layer captures the most value because platforms here achieve the strongest network effects, reach the largest user base, act as traffic gateways for decentralized networks, and thus occupy the highest-value position in the compute stack.

- However, building an aggregation platform is extremely challenging, requiring solutions to complex technical issues, heterogeneous resource management, system reliability, scalability, network effects, security, privacy, and operational complexity. These hurdles make cold starts difficult and success dependent on timing and ecosystem maturity. Attempting to build an aggregation layer before the orchestration layer matures and captures market share is unrealistic.

- Currently, Aethir, Render Network, Akash Network, and Gensyn belong to the orchestration layer, serving specific target markets. Aethir focuses on real-time rendering for cloud gaming and provides development and deployment tools for enterprises; Render Network specializes in video rendering; Akash aims to be a marketplace akin to Taobao; Gensyn focuses on AI training. io.net positions itself as an aggregation layer, but its current functionality falls short: it has integrated hardware from Render Network and Filecoin, yet full abstraction and integration remain incomplete.

2.3 Hardware Infrastructure

-

Not all projects disclose detailed network metrics. Among them, io.net explorer offers the best UI, displaying GPU/CPU counts, types, prices, geographic distribution, network usage, node earnings, etc. However, in late April, io.net’s frontend was hacked due to missing authentication on PUT/POST endpoints, allowing attackers to alter displayed data. This incident highlights growing concerns about privacy and data integrity across the sector.

-

In terms of GPU quantity and model diversity, io.net—as an aggregator—naturally collects the most hardware, followed by Aethir. Other projects are less transparent. io.net’s inventory includes both professional GPUs (A100) and consumer models (RTX 4090), aligning with its aggregation strategy. This flexibility allows task-specific GPU selection, though managing diverse drivers and configurations increases software complexity. Currently, task allocation on io.net is largely user-driven.

-

Aethir launched its own mining device—Aethir Edge, developed with Qualcomm support, was officially released in May. It breaks from traditional centralized GPU clusters by deploying compute closer to users at the edge. Combining H100 cluster power, Aethir Edge enables efficient inference of pre-trained AI models, delivering faster, lower-cost, and higher-performance services.

-

Looking at supply and demand, Akash Network reports ~16k CPUs and 378 GPUs, with utilization rates of 11.1% and 19.3% respectively. Only high-demand GPUs like H100 see relatively high rental rates; most other models remain idle. Other networks face similar conditions—overall demand is low, and except for hot chips like A100/H100, most compute sits unused.

-

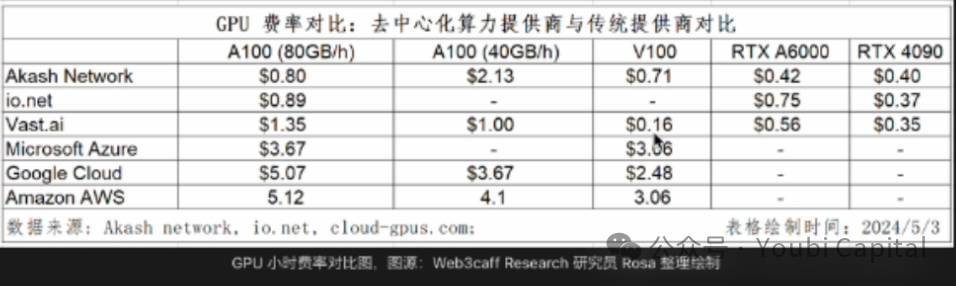

Price competitiveness remains unconvincing compared to traditional cloud providers.
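To make the Akash Network utilization figures above concrete, the short calculation below converts the reported percentages into approximate unit counts. It assumes the "~16k" CPU figure is exactly 16,000, which the source does not state, so the CPU result is only indicative.

```python
def units_in_use(total_units: int, utilization: float) -> int:
    """Approximate number of units in use at a given utilization rate."""
    return round(total_units * utilization)


# Figures from the text; 16_000 is an assumed stand-in for "~16k".
cpus_in_use = units_in_use(16_000, 0.111)
gpus_in_use = units_in_use(378, 0.193)
gpus_idle = 378 - gpus_in_use

print(cpus_in_use, gpus_in_use, gpus_idle)  # 1776 73 305
```

Under these assumptions, roughly 73 of the 378 GPUs are rented at any time and about 305 sit idle.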

2.4 Financial Performance

- Regardless of token model design, healthy tokenomics must satisfy several conditions: 1) network demand should be reflected in token price (i.e., value capture); 2) long-term, fair incentives for all participants (developers, nodes, users); 3) decentralized governance to prevent insider concentration; 4) balanced inflation/deflation and release schedules to avoid volatility that threatens network stability.

- Broadly speaking, token models fall into two categories: BME (Burn and Mint Equilibrium) and SFA (Stake for Access). Their deflationary pressures differ: BME burns tokens upon service usage, so deflation correlates with demand. SFA requires providers to stake tokens to participate, meaning deflation stems from supply constraints. BME suits non-standardized goods better, but if demand is weak, persistent inflation may occur. While details vary, Aethir leans toward SFA, while io.net, Render Network, and Akash Network follow BME; Gensyn's model remains unclear.

- In terms of revenue, network demand directly impacts total income (excluding miner subsidies). Public data shows io.net leads in revenue. Aethir has not disclosed figures but has announced signed contracts with multiple enterprise clients.

- Regarding token price, only Render Network and Akash Network have conducted ICOs. Aethir and io.net recently launched tokens, and their performance remains to be seen. Gensyn's plans are unclear. Nevertheless, existing decentralized compute projects show strong price performance post-launch, reflecting significant market potential and high community expectations.
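The difference between the two deflation mechanisms described above (BME and SFA) can be sketched with a toy supply model. All numbers are hypothetical, and the functions deliberately ignore price, vesting, and fee details that the actual token designs of these projects include.

```python
from typing import List


def bme_supply(supply: int, minted_per_epoch: int, burned_per_epoch: List[int]) -> List[int]:
    """BME: each epoch mints a fixed reward and burns tokens spent on services."""
    history = []
    for burned in burned_per_epoch:
        supply = supply + minted_per_epoch - burned
        history.append(supply)
    return history


def sfa_circulating(total_supply: int, providers: int, stake_per_provider: int) -> int:
    """SFA: tokens staked by providers are locked out of circulation."""
    return total_supply - providers * stake_per_provider


# Hypothetical scenario: 1,000,000 tokens, 10,000 minted per epoch.
weak_demand = bme_supply(1_000_000, 10_000, [2_000, 2_000, 2_000])
strong_demand = bme_supply(1_000_000, 10_000, [15_000, 15_000, 15_000])

print(weak_demand)    # [1008000, 1016000, 1024000] -> supply inflates
print(strong_demand)  # [995000, 990000, 985000]    -> supply deflates
print(sfa_circulating(1_000_000, providers=200, stake_per_provider=500))  # 900000
```

In the BME case, supply shrinks only when usage burns more than the protocol mints, which is why weak demand leads to persistent inflation. In the SFA case, circulating supply depends on how many providers stake, not on how much the network is used.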

2.5 Summary

- The decentralized compute sector is advancing rapidly, with many projects already delivering services and generating revenue. The sector has moved beyond pure narrative into early product-market fit.

- Weak demand is a common challenge. Long-term customer demand remains unproven. Yet token prices remain strong regardless: launched projects have performed impressively.

- AI is the dominant narrative, but not the only use case. Beyond AI training/inference, compute can power cloud gaming rendering, cloud phones, and more.

- Hardware heterogeneity remains high; quality and scale of compute networks need improvement.

- For retail users, cost advantages are marginal. For enterprises, factors beyond cost, such as reliability, support, compliance, and legal safeguards, are crucial, and Web3 projects generally underperform in these areas.

3 Closing Thoughts

The massive compute demand driven by AI's explosive growth is undeniable. Since 2012, the compute used in AI training has grown exponentially, doubling roughly every 3.5 months, compared with the 18-month doubling period of Moore's Law. Demand has increased more than 300,000-fold since 2012, far outpacing the roughly 12-fold rise that Moore's Law would imply over the same period. The GPU market is projected to grow at a 32% CAGR over the next five years, exceeding $200 billion. AMD's forecast is even higher: $400 billion by 2027.

Image Source: https://www.stateof.ai/
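The growth figures quoted above are consistent with each other, as a quick check shows. The calculation below uses only the numbers from the text (300,000-fold growth, 3.5-month and 18-month doubling periods); the elapsed time is derived from them rather than taken from any source.

```python
import math

growth_factor = 300_000        # AI training compute growth quoted in the text
ai_doubling_months = 3.5       # AI compute doubling period from the text
moore_doubling_months = 18     # Moore's Law doubling period from the text

# Number of doublings needed to reach a 300,000-fold increase.
doublings = math.log2(growth_factor)

# Time those doublings take at one doubling every 3.5 months.
elapsed_months = doublings * ai_doubling_months

# Growth over the same time span at one doubling every 18 months.
moore_growth = 2 ** (elapsed_months / moore_doubling_months)

print(f"doublings: {doublings:.1f}")                   # about 18.2
print(f"elapsed: {elapsed_months / 12:.1f} years")     # about 5.3
print(f"Moore's Law growth: {moore_growth:.1f}x")      # about 11.6
```

About 18 doublings at 3.5 months each span a little over five years, and five years of 18-month doublings give close to the 12-fold figure.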

The explosive growth of AI and other compute-intensive workloads (e.g., AR/VR rendering) exposes structural inefficiencies in traditional cloud computing and in the leading compute markets. In theory, decentralized compute networks can address these gaps by using distributed idle resources to deliver more flexible, lower-cost, and more efficient solutions. The convergence of crypto and AI therefore holds immense market potential, but it also faces fierce competition from traditional players, high barriers to entry, and complex market dynamics. Overall, among all crypto verticals, decentralized compute networks are one of the sectors most likely to meet real-world demand.

Image Source: https://vitalik.eth.limo/general/2024/01/30/cryptoai.html

The future is bright, but the path is winding. Realizing this vision requires overcoming numerous challenges. Simply offering traditional cloud services yields thin margins. On the demand side, large enterprises tend to build in-house compute, while independent developers prefer established cloud providers; whether SMEs will generate stable demand for decentralized compute remains unverified. Meanwhile, AI is a vast, high-potential market. To capture broader opportunities, decentralized compute providers must evolve toward offering AI and model services, explore more crypto + AI use cases, and expand their value proposition. But significant hurdles remain:

- Limited Price Advantage: Comparative data shows decentralized networks haven't achieved meaningful cost savings. High-demand professional chips like H100/A100 are inherently expensive due to market dynamics. Additionally, the lack of economies of scale, high network/bandwidth costs, and complex operations in decentralized systems add hidden costs that offset potential savings.

- Challenges in AI Training: Decentralized AI training faces major technical bottlenecks. During LLM training, GPUs receive data batches, perform forward/backward passes, aggregate gradients, and synchronize model updates, all of which require massive data transfer and synchronization. Questions around optimal parallelization strategies, bandwidth optimization, latency reduction, and communication cost minimization remain unresolved. At present, decentralized AI training is impractical.

- Data Security and Privacy: Throughout LLM training (data distribution, model training, parameter/gradient aggregation), privacy risks arise at every stage. Data privacy is even more critical than model privacy. Without solving this, large-scale adoption on the demand side cannot happen.
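The communication bottleneck in decentralized training can be made tangible with a back-of-the-envelope estimate. The sketch below assumes data-parallel training with a ring all-reduce, in which each worker transfers about 2(N-1)/N times the gradient size per step. The model size (7 billion parameters), 2-byte gradients, worker count, and both link speeds are illustrative assumptions, not figures from the text, and the estimate ignores latency, compression, and overlap with computation.

```python
def ring_allreduce_bytes_per_worker(payload_bytes: float, workers: int) -> float:
    """Bytes each worker sends in one ring all-reduce of payload_bytes."""
    return 2 * (workers - 1) / workers * payload_bytes


def sync_seconds(params: float, bytes_per_param: int, workers: int,
                 link_bits_per_s: float) -> float:
    """Time to synchronize gradients once, limited only by link bandwidth."""
    payload = params * bytes_per_param
    traffic = ring_allreduce_bytes_per_worker(payload, workers)
    return traffic * 8 / link_bits_per_s


# Assumed setup: 7e9 parameters, fp16 gradients, 8 workers.
home_link = sync_seconds(7e9, 2, 8, link_bits_per_s=100e6)    # 100 Mbit/s consumer uplink
datacenter = sync_seconds(7e9, 2, 8, link_bits_per_s=400e9)   # 400 Gbit/s cluster fabric

print(f"consumer link:   {home_link:.0f} s per step")
print(f"datacenter link: {datacenter:.2f} s per step")
```

Under these assumptions a single gradient synchronization takes about half a second inside a data center but over half an hour across consumer links, which illustrates why the text calls decentralized training impractical today.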

From a pragmatic standpoint, a decentralized compute network must balance immediate demand generation with long-term market potential. Projects should define clear product-market fits and target audiences—perhaps starting with non-AI or Web3-native use cases on the fringes—to build early user bases. Simultaneously, they must continue exploring frontier applications of AI + crypto, pushing technological boundaries and evolving their service models.
