Sequoia Capital: Generative AI, a Creative New World

Humans are good at analyzing things, and machines can do even better in this regard.

By: Sonya Huang and Pat Grady

Translation: TechFlow

AIGC (AI-Generated Content) has recently become a hot topic. With the rapid deployment of numerous applications, AI-generated images, text, audio, and even video content are gradually entering our daily lives.

Just hours ago, Sequoia US published a new article titled "Generative AI: A Creative New World". Could this mark the beginning of a new paradigm shift?

Let’s take a look at this article. The original authors are two partners at Sequoia: Sonya Huang and Pat Grady. Interestingly, GPT-3 is also credited as a co-author, and the illustrations were generated using Midjourney—this article itself is a real-world example of AIGC in action. Below is our translation, which we hope will spark new insights and reflections.

Introduction

Humans excel at analyzing things—and machines do even better. Machines can analyze datasets and identify patterns across many use cases, whether detecting fraud or spam, predicting delivery times, or deciding which TikTok video to show you. They are becoming increasingly intelligent in these tasks. This is known as "analytical AI" or traditional AI.

But humans don’t just analyze; we also create. We write poetry, design products, build games, and write code. Until recently, machines had no chance of competing with humans at creative work: they were relegated to analysis and rote cognitive labor. Now, however, machines are beginning to create things that are meaningful and beautiful. This new category is called "generative AI", meaning that the machine generates something new rather than analyzing something that already exists.

Generative AI is not only getting faster and cheaper; in some cases, its output is already better than what humans create. From social media to gaming, advertising to architecture, programming to graphic design, product development to law, and marketing to sales, every industry that requires humans to create original work is up for reinvention. Some functions may be fully replaced by generative AI, while others are more likely to thrive through a tight, iterative creative cycle between human and machine. Either way, generative AI should unlock better, faster, and cheaper creation across a wide range of end markets. The dream is that generative AI brings the marginal cost of creation and knowledge work down toward zero, generating vast labor productivity, economic value, and corresponding market capitalization.

Generative AI applies to both knowledge work and creative work—areas involving billions of hours of human labor. It has the potential to boost the efficiency and creativity of this workforce by at least 10%, making people not only faster and more efficient but also more capable than before. As such, generative AI could generate trillions of dollars in economic value.

01. Why Now?

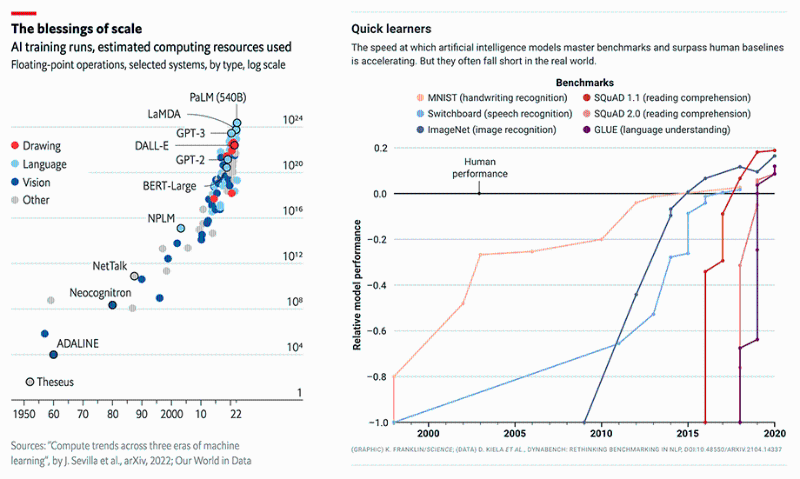

Generative AI shares the same “why now” drivers as AI more broadly: better models, more data, and more compute. The field is changing faster than we can capture, but it is worth recounting recent history in broad strokes to put the current moment in context.

Wave 1: Small Models Dominate (Pre-2015) – Small models were considered state-of-the-art for language understanding. These excelled at analytical tasks like delivery time prediction or fraud classification. However, they lacked expressive capacity for general-purpose generation. Human-level writing or code generation remained a pipe dream.

Wave 2: The Scale Race (2015–Present) – A landmark paper from Google Research, “Attention Is All You Need” (https://arxiv.org/abs/1706.03762), introduced a new neural network architecture called the transformer, which made it possible to build high-quality language models that are more parallelizable and take significantly less time to train. These models are few-shot learners and can be customized to specific domains relatively easily.
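For readers curious about what the transformer actually computes, the core operation described in that paper is scaled dot-product attention, softmax(QKᵀ/√d_k)·V. The sketch below is a minimal plain-Python illustration under simplifying assumptions: a single attention head, with no learned projections, masking, or batching. The function names are our own and do not come from any library's API. Each query's output depends only on its own row of scores, so every position can be computed at the same time, and that independence is where the transformer's parallelism comes from.

```python
import math


def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(Q, K, V):
    """Compute softmax(Q K^T / sqrt(d_k)) V for lists of row vectors.

    Q: queries, K: keys (same width as Q), V: values (one row per key).
    """
    d_k = len(K[0])
    output = []
    # Each query row is processed independently of the others; real
    # implementations exploit this by batching everything as matrix products.
    for q in Q:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Output row = attention-weighted sum of the value vectors.
        output.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return output


if __name__ == "__main__":
    Q = [[1.0, 0.0], [0.0, 1.0]]
    K = [[1.0, 0.0], [0.0, 1.0]]
    V = [[10.0, 0.0], [0.0, 10.0]]
    for row in scaled_dot_product_attention(Q, K, V):
        print([round(x, 3) for x in row])
```

Each query attends most strongly to the key it matches, but still mixes in some of the other value. Production systems run the same computation as batched matrix multiplications on GPUs rather than Python loops.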

Sure enough, as models grew larger, they began delivering human-level and then superhuman results. Between 2015 and 2020, the compute used to train these models increased by six orders of magnitude, and their results surpassed human performance benchmarks in handwriting, speech and image recognition, reading comprehension, and language understanding. OpenAI’s GPT-3 stood out in particular: its performance was a giant leap over GPT-2, and it powered compelling Twitter demos on tasks ranging from code generation to joke writing.
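To put "six orders of magnitude between 2015 and 2020" in perspective, here is a back-of-the-envelope calculation. It assumes steady exponential growth over those five years, which is a simplification and not a claim the article makes:

$$
g_{\text{year}} = \left(10^{6}\right)^{1/5} = 10^{1.2} \approx 15.8\times \text{ per year}
$$

$$
T_{\text{double}} = \frac{5 \times 12\ \text{months}}{\log_2\!\left(10^{6}\right)} = \frac{60}{6\log_2 10} \approx \frac{60}{19.93} \approx 3.0\ \text{months}
$$

Under that assumption, training compute doubled roughly every three months, far faster than the roughly two-year doubling usually associated with Moore's Law.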

Despite all this research progress, these models were not yet widespread. They were large and difficult to run (requiring GPU orchestration), not broadly accessible (unavailable or limited to closed beta), and expensive to use as cloud services. Even so, the earliest generative AI applications began to enter the market.

Wave 3: Better, Faster, Cheaper (2022+) – Compute became cheaper. New techniques like diffusion models reduced training and inference costs. Researchers continued developing better algorithms and larger models. Developer access expanded from closed beta to open testing, and in some cases, to open source.

For developers eager to experiment with LLMs (Large Language Models), the floodgates have opened. Application development is now booming.

Wave 4: Killer Apps Emerge (Now) – With the platform layer solidifying, models continuing to get better, faster, and cheaper, and model access trending toward free and open source, the application layer is ripe for an explosion of creativity.

Just as mobile devices unlocked new categories of apps through capabilities like GPS, cameras, and on-the-go connectivity, we expect these large models to spark a new wave of generative AI applications. And just as the mobile inflection point created an opening for a handful of killer apps a decade ago, we expect killer apps to emerge for generative AI. The race is on.

02. Market Landscape

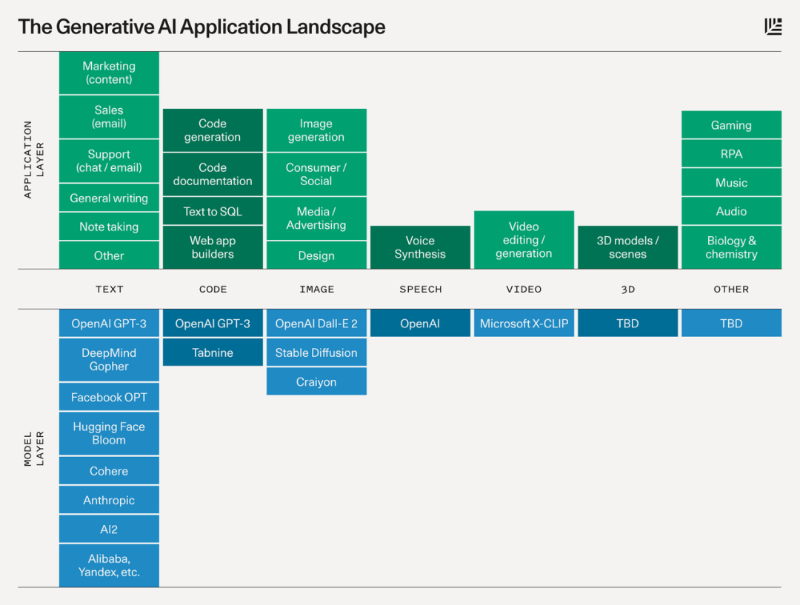

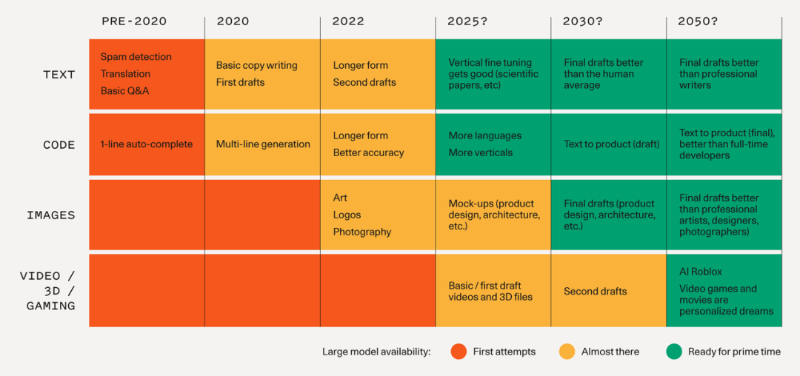

The diagram illustrates the platform layer that will power each category and the types of potential applications that will be built on top of it.

Models

Text is the most advanced domain, yet natural language is hard to get right, and quality matters. Today, these models are decently good at generic short- and medium-form writing (even so, they are typically used for iteration or first drafts). Over time, we should expect higher-quality outputs, longer-form content, and better vertical-specific tuning.

Code Generation is likely to have a big impact on developer productivity in the near term, as GitHub Copilot already shows. It will also make the creative use of code more accessible to non-developers.

Images are a recent phenomenon but have gone viral quickly. Sharing generated images on Twitter is far more engaging than text! We’re seeing image models with diverse aesthetic styles, along with various tools for editing and modifying outputs.

Speech Synthesis has existed for a while, but consumer and enterprise applications are just emerging. For high-end uses like film and podcasts, achieving natural-sounding, human-quality voice is a high bar. But like images, today’s models provide a starting point for further refinement or final output.

Video and 3D Models lag significantly behind. Yet there's great excitement about their potential to unlock large creative markets in film, gaming, VR, architecture, and physical product design. We expect basic 3D and video models to emerge within the next 1–2 years.

Many other fields, from audio and music to biology and chemistry, are also seeing foundation model research. The accompanying chart shows a timeline of how foundation models might progress and when related applications may become feasible; projections for 2025 and beyond are speculative.

Applications

Here are some applications that excite us—only a fraction of what’s possible. We're inspired by the creative visions founders and developers are pursuing.

Copywriting: Increasing demand for personalized web and email content for sales, marketing, and customer support makes this an ideal use case for language models. These tasks often follow simple templates under tight time and budget constraints, driving strong demand for automation and augmentation tools.

Vertical-Specific Writing Assistants: Most current writing assistants are general-purpose. We believe huge opportunities exist in building better generative apps tailored to specific end markets—such as legal contract drafting or screenwriting. Product differentiation lies in fine-tuning models and UX interactions for specific workflows.

Code Generation: Current tools have already boosted developer productivity significantly—Copilot generates nearly 40% of code in projects where it’s installed. But an even bigger opportunity may lie in empowering consumers to program. Learning to prompt could become the ultimate high-level programming language.

Art Generation: The entire history of art and pop culture is now encoded into these large models, allowing anyone to explore themes and styles that might otherwise take a lifetime to master.

Gaming: The dream is to create complex scenes or riggable models from natural language, an end state that is probably a long way off. Nearer-term opportunities, such as generating textures and skybox art, are more actionable.

Media/Advertising: Imagine autonomous agents optimizing ad copy and creatives in real time for individual consumers. Multimodal generation offers a prime opportunity to combine sales messaging with complementary visuals.

Design: Prototyping digital and physical products is a labor-intensive, iterative process. AI that generates high-fidelity mockups from rough sketches and prompts is already a reality. As 3D models become available, generative design will extend into manufacturing and production, going from text to physical object. Your next iPhone app or pair of sneakers may well be designed by a machine.

Social Media and Digital Communities: Are there new ways to express ourselves using generative tools? New apps like Midjourney have already created novel social experiences as consumers learn to create in public.

03. Anatomy of Generative AI Applications

What will generative AI applications look like? Here are some predictions:

Intelligence and Model Fine-Tuning

Generative AI apps are built atop large models like GPT-3 or Stable Diffusion. As these apps gather more user data, they can fine-tune models—improving quality and performance for specific problem spaces, while reducing model size and cost.

We can think of generative AI apps as a UI layer and a "little brain" sitting atop the large general-purpose "big brain".

Form Factors

Today, most generative AI apps exist as plugins within existing software ecosystems: code generation inside your IDE, image generation in Figma or Photoshop, or Discord bots bringing generative AI into digital communities.

There are also a few standalone generative AI web apps—Jasper and Copy.ai for copywriting, Runway for video editing, Mem for note-taking.

Plugins may be the best early entry point for generative AI apps, helping overcome the "chicken-and-egg" challenge between user data and model quality (i.e., needing distribution to collect usage data to improve models, while also needing good models to attract users). We’ve seen this strategy succeed in other markets, especially consumer and social.

Interaction Paradigms

Most current generative AI demos are “one-shot”: you input a prompt, the machine produces an output, you keep it or discard it and try again. In the future, models will support iteration—you’ll be able to modify, refine, upgrade, and generate variations from prior outputs.

Currently, generative AI outputs serve as prototypes or drafts. Apps excel at generating multiple distinct ideas to advance the creative process (e.g., different logo or architectural design options), and at producing first drafts that still require human polishing (e.g., blog posts or code completions). As models grow smarter—partly fueled by user data—we should expect drafts to improve continuously until they’re good enough to serve as final products.

Sustained Industry Leadership

The best generative AI companies will build sustainable competitive advantages through a flywheel connecting user engagement, data, and model performance. To win, teams must drive this cycle by:

Delivering exceptional user engagement → Converting engagement into better model performance (prompt improvements, model fine-tuning, using user choices as labeled training data) → Leveraging superior model performance to drive further user growth and engagement.
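The middle step of this flywheel, using user choices as labeled training data, can be made concrete with a small sketch. This is a hypothetical illustration rather than any company's actual pipeline: the `Feedback` record and the `to_preference_pairs` helper are invented names. It assumes the app logs, for each prompt, which generated outputs the user kept and which they discarded. Every (kept, discarded) combination for the same prompt then becomes a labeled comparison that could later feed fine-tuning.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Feedback:
    # Hypothetical log record: one generated output and whether the user kept it.
    prompt: str
    output: str
    kept: bool


def to_preference_pairs(events):
    """Pair every kept output with every discarded output for the same prompt.

    Returns a list of (prompt, chosen, rejected) tuples, in first-seen prompt order.
    """
    by_prompt = defaultdict(lambda: {"kept": [], "discarded": []})
    for e in events:
        by_prompt[e.prompt]["kept" if e.kept else "discarded"].append(e.output)

    pairs = []
    for prompt, groups in by_prompt.items():
        # Prompts where the user kept everything, or nothing, yield no comparisons.
        for chosen in groups["kept"]:
            for rejected in groups["discarded"]:
                pairs.append((prompt, chosen, rejected))
    return pairs


if __name__ == "__main__":
    log = [
        Feedback("logo for a bakery", "draft A", kept=False),
        Feedback("logo for a bakery", "draft B", kept=True),
        Feedback("logo for a bakery", "draft C", kept=False),
        Feedback("tagline for a gym", "draft D", kept=True),
    ]
    for pair in to_preference_pairs(log):
        print(pair)
```

Pairwise comparisons like these are one common input format for preference-based fine-tuning. Raw keep/discard logs could instead be used directly as positive and negative examples. Which form fits best depends on the model and the training setup.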

They may focus on specific domains (e.g., code, design, gaming) rather than trying to solve everything for everyone. They might first deeply integrate into existing applications to leverage distribution, then gradually replace them with AI-native workflows. Building these apps the right way to accumulate users and data takes time—but we believe the best ones will endure and have the potential to become massive.

04. Challenges and Risks

Despite its vast potential, generative AI faces unresolved issues in business models and technology, including critical concerns around copyright, trust, safety, and cost.

05. Looking Ahead

Generative AI is still very early. The platform layer is just gaining momentum, and the application layer is only beginning.

To be clear, large language models do not need to write a Tolstoy novel for generative AI to be useful. These models are already good enough to draft blog posts and generate prototypes for logos and product interfaces, which will create immense value in the medium term.

The first wave of generative AI apps resembles early mobile apps after the iPhone launch—novel but shallow, with unclear differentiation and business models. Yet some offer glimpses into what’s possible. Once you've seen machines generate complex functional code or stunning images, it becomes hard to imagine a future where machines don’t play a central role in our work and creativity.

If we allow ourselves to dream decades ahead, it’s easy to envision a future where generative AI is deeply embedded in how we work, create, and entertain ourselves: memos that write themselves, 3D printing of anything you can imagine, Pixar-style films generated from text, Roblox-like game experiences that generate rich worlds on the fly. While these may seem like science fiction today, technology progresses astonishingly fast. It took only a few years to go from narrow language models to automated code generation. If progress continues at this pace and follows a "Large Model Moore’s Law", these far-fetched scenarios may soon come within reach.
