Tokenizing Power Generation Assets for Global Export: Selling China’s Electricity to the World

A smokeless power war.

Author: Black Lobster, TechFlow

In the summer of 1858, a copper-core cable crossed the Atlantic seabed, connecting London and New York.

Its significance has never lain in transmission speed, but rather in power structures: whoever lays the undersea cable gains the ability to siphon value from information flows. The British Empire wielded its global telegraph network to tightly control intelligence from its colonies, cotton prices, and wartime news.

Imperial strength rested not only on naval fleets, but also on that cable.

Over 160 years later, this logic is replaying itself—in an unexpected way.

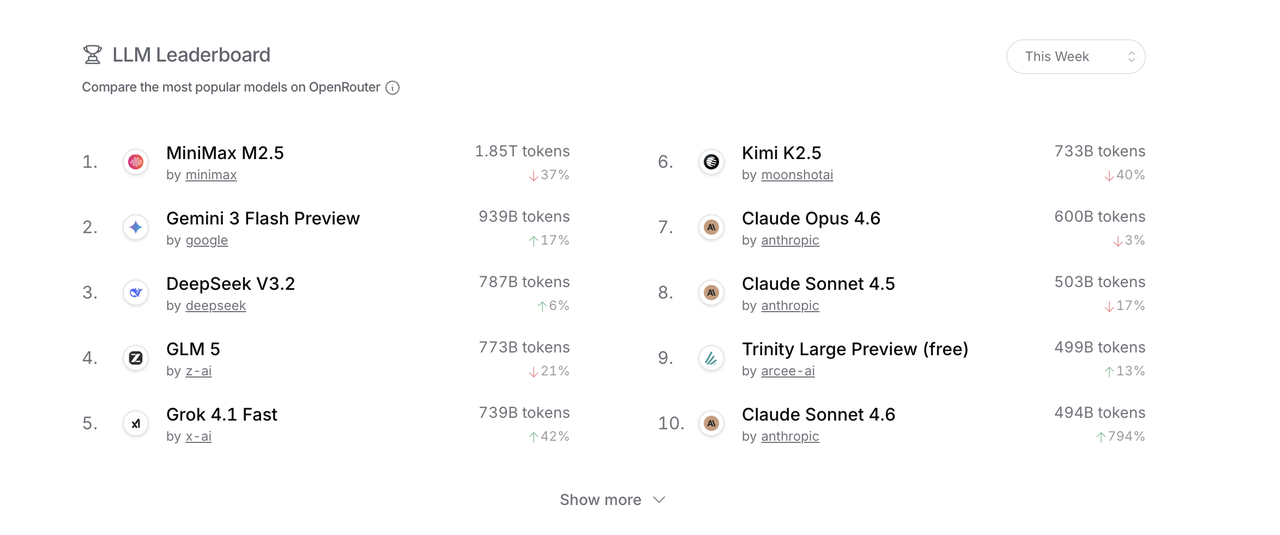

In 2026, Chinese large language models (LLMs) are quietly capturing the global developer market. According to the latest data from OpenRouter, Chinese models account for 61% of token consumption among the platform’s top ten models and hold all of the top three spots. Developers in San Francisco, Berlin, and Singapore send API requests every day; those requests traverse Pacific undersea fiber-optic cables to reach Chinese data centers, where compute is consumed, electricity flows, and results are returned.

Electricity never leaves China’s grid—but its value crosses borders via tokens.

The Great AI Model Migration

On February 24, 2026, OpenRouter released weekly data showing that total token consumption across its top ten models amounted to approximately 8.7 trillion tokens—with Chinese models accounting for 5.3 trillion, or 61%. MiniMax’s M2.5 model surged to first place with 2.45 trillion tokens, followed closely by Kimi’s K2.5 and Zhipu’s GLM-5—making all three top performers Chinese.

Latest data as of February 26

This is no coincidence—a single catalyst ignited everything.

Earlier this year, OpenClaw burst onto the scene—an open-source tool enabling AI to truly “get work done.” It can directly control computers, execute commands, and parallelize complex workflows. Its GitHub star count surpassed 210,000 within weeks.

John, a finance professional, installed OpenClaw immediately and integrated it with Anthropic’s API to automatically monitor stock markets and generate real-time trading signals. Hours later, he stared blankly at his account balance: dozens of dollars—gone.

That is the new reality OpenClaw brings. Previously, chatting with an AI cost just a few thousand tokens per conversation, a negligible expense. With OpenClaw, however, the AI runs dozens of subtasks simultaneously in the background, repeatedly re-sending context and iterating through loops, so token consumption grows far faster than linearly. The bill behaves like a car with the throttle stuck open: the engine roars, the fuel gauge drops relentlessly, and nothing stops it.
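A rough way to see why agent bills climb so quickly is to count input tokens when every step re-sends the whole conversation so far. The sketch below is only an illustration under that assumption; the function names and the sample figures (2,000-token starting context, 500 tokens added per step, 20 subtasks of 50 steps) are hypothetical and are not measurements of OpenClaw.

```python
def cumulative_input_tokens(steps: int, base_context: int, tokens_per_step: int) -> int:
    """Input tokens billed for one subtask when each step re-sends the full history."""
    total = 0
    context = base_context
    for _ in range(steps):
        total += context            # the entire context is sent as input again
        context += tokens_per_step  # new output and tool results are appended
    return total


def agent_run_tokens(subtasks: int, steps: int, base_context: int, tokens_per_step: int) -> int:
    """Input tokens for several independent subtasks of equal length."""
    return subtasks * cumulative_input_tokens(steps, base_context, tokens_per_step)


# Hypothetical comparison: a single chat turn versus a background agent run.
single_chat = cumulative_input_tokens(steps=1, base_context=2_000, tokens_per_step=500)
agent_run = agent_run_tokens(subtasks=20, steps=50, base_context=2_000, tokens_per_step=500)
print(f"single chat turn: {single_chat:,} input tokens")
print(f"agent run:        {agent_run:,} input tokens")
```

Under these assumptions the total grows with the square of the number of steps, because each step pays again for everything that came before it. Real agents may cache or trim context, which would lower the figure.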

A “clever workaround” quickly spread across developer communities: using OAuth tokens to directly connect Anthropic or Google subscription accounts to OpenClaw—converting monthly “unlimited” plans into free fuel for AI agents. Many developers adopted this method.

Official pushback followed swiftly.

On February 19, Anthropic updated its terms of service to explicitly prohibit the use of Claude subscription credentials with third-party tools like OpenClaw. To access Claude functionality, users must route traffic exclusively through its billed API channel. Google went further, suspending en masse subscription accounts linked to Antigravity and Gemini Ultra via OpenClaw.

“The people have long suffered under Qin’s tyranny,” as the old Chinese saying goes. John promptly switched to Chinese LLMs.

On OpenRouter, MiniMax’s M2.5 scores 80.2% on software engineering tasks, versus 80.8% for Claude Opus 4.6. The performance gap is negligible. Yet pricing differs dramatically: $0.30 per million input tokens for M2.5 versus $5.00 for Claude Opus, which makes Claude Opus nearly 17 times more expensive.
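The price gap can be checked with a few lines of arithmetic. The sketch below uses only the two input-token prices quoted above; the 10-million-token workload is a hypothetical figure chosen for illustration, and output-token prices are ignored because the article does not give them.

```python
# Input-token prices quoted in the article, in USD per million tokens.
PRICE_PER_MILLION_INPUT_USD = {
    "MiniMax M2.5": 0.30,
    "Claude Opus 4.6": 5.00,
}


def input_cost_usd(tokens: int, price_per_million: float) -> float:
    """Cost of sending `tokens` input tokens at the given per-million price."""
    return tokens / 1_000_000 * price_per_million


def price_ratio(expensive: float, cheap: float) -> float:
    """How many times more the expensive price is than the cheap one."""
    return expensive / cheap


workload_tokens = 10_000_000  # hypothetical daily agent workload
for model, price in PRICE_PER_MILLION_INPUT_USD.items():
    print(f"{model}: ${input_cost_usd(workload_tokens, price):.2f}")

ratio = price_ratio(
    PRICE_PER_MILLION_INPUT_USD["Claude Opus 4.6"],
    PRICE_PER_MILLION_INPUT_USD["MiniMax M2.5"],
)
print(f"price ratio: {ratio:.1f}x")
```

The ratio works out to about 16.7, which is where the "nearly 17 times" figure comes from.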

John switched over. His workflow continued uninterrupted, while his bill shrank by an order of magnitude. The same migration is playing out simultaneously around the world.

OpenRouter’s COO Chris Clark put it bluntly: Chinese open-source models captured substantial market share because they dominate agent-based workflows run by U.S. developers.

Exporting Electricity

To understand the essence of token export, one must first unpack the cost structure of a single token.

Tokens appear weightless: one token equals roughly 0.75 English words, and a typical AI conversation consumes only a few thousand. But when stacked in trillions, their underlying physical reality becomes profoundly tangible.

Token costs break down into two core components: compute and electricity.

Compute refers to GPU depreciation—the amortized cost per inference of purchasing an NVIDIA H100 for ~$30,000. Electricity powers data centers continuously: each GPU draws ~700 watts at full load, plus cooling overhead—so annual electricity bills for major AI data centers easily exceed hundreds of millions of dollars.
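The two components can be put on the same hourly footing with a back-of-the-envelope calculation. The sketch below takes the ~$30,000 GPU price and ~700 W draw from the text; the four-year amortization period, the 1.3 cooling overhead factor (PUE), and the $0.08/kWh electricity price are illustrative assumptions, not figures from the article.

```python
HOURS_PER_YEAR = 24 * 365


def hourly_depreciation_usd(gpu_price_usd: float, lifetime_years: float) -> float:
    """Straight-line amortization of the GPU purchase price per hour of operation."""
    return gpu_price_usd / (lifetime_years * HOURS_PER_YEAR)


def hourly_electricity_usd(gpu_watts: float, pue: float, usd_per_kwh: float) -> float:
    """Electricity cost per hour at full load, scaled by a cooling overhead factor."""
    return gpu_watts / 1000 * pue * usd_per_kwh


def annual_energy_kwh(gpu_watts: float, pue: float) -> float:
    """Energy drawn in one year of continuous full-load operation."""
    return gpu_watts / 1000 * pue * HOURS_PER_YEAR


# From the article: ~$30,000 per H100 and ~700 W at full load.
# Assumed for illustration: 4-year lifetime, PUE of 1.3, $0.08 per kWh.
depreciation = hourly_depreciation_usd(30_000, lifetime_years=4)
electricity = hourly_electricity_usd(700, pue=1.3, usd_per_kwh=0.08)
print(f"depreciation per GPU-hour: ${depreciation:.3f}")
print(f"electricity per GPU-hour:  ${electricity:.3f}")
print(f"energy per GPU-year:       {annual_energy_kwh(700, pue=1.3):,.0f} kWh")
```

Changing the assumed lifetime, overhead factor, or electricity price shifts the balance between the two components, so the output is best read as a template rather than a result.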

Now map this physical process geographically.

A developer in San Francisco sends an API request. Data travels from California via Pacific undersea fiber-optic cables to a Chinese data center. GPU clusters activate; electricity flows from China’s grid to those chips; inference completes; results return—all within one or two seconds.

Electricity never leaves China’s grid—but its value crosses borders via tokens.

Here lies something magical beyond conventional trade: tokens lack physical form, bypass customs, evade tariffs, and don’t appear in any existing trade statistics. China exports massive amounts of compute and electricity services—yet these remain nearly invisible in official merchandise trade data.

Tokens become derivatives of electricity—token export is, fundamentally, electricity export.

This is aided by China’s relatively low electricity prices—about 40% cheaper than U.S. rates—a physical cost advantage competitors cannot easily replicate.

Chinese LLMs also have two further advantages: algorithmic efficiency and cutthroat domestic competition.

DeepSeek V3’s Mixture-of-Experts (MoE) architecture activates only subsets of parameters during inference—third-party benchmarks show its inference cost is ~36x lower than GPT-4o. Similarly, MiniMax’s M2.5—despite having 229B total parameters—activates only 10B at runtime.
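To make the idea of "activating only a subset of parameters" concrete, here is a minimal top-k routing sketch in plain Python. It is a generic illustration of Mixture-of-Experts gating, not the actual routing used by DeepSeek V3 or MiniMax M2.5; the function names, toy experts, and gate scores are all hypothetical. Only the 229B and 10B parameter counts come from the text.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a short list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    weights = softmax([gate_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x: float, experts: Sequence[Callable[[float], float]],
                gate_logits: Sequence[float], k: int) -> float:
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_logits, k))


def active_fraction(active_params_b: float, total_params_b: float) -> float:
    """Share of parameters used per token."""
    return active_params_b / total_params_b


# Toy example: four "experts", of which only two are evaluated for this input.
experts = [lambda x: x, lambda x: 2 * x, lambda x: 3 * x, lambda x: 4 * x]
print(moe_forward(1.0, experts, gate_logits=[0.1, 2.0, -1.0, 2.0], k=2))

# Parameter counts quoted in the article for MiniMax M2.5.
print(f"active share: {active_fraction(10, 229):.1%}")
```

With roughly 10B of 229B parameters active, each token touches only a few percent of the model, which is the source of the inference savings described above.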

On top of that comes cutthroat competition: Alibaba, ByteDance, Baidu, Tencent, Moonshot, Zhipu, MiniMax and others, more than a dozen companies in all, compete fiercely on the same track, driving prices below rational profit margins. Selling at a loss for brand visibility has become the industry standard.

Look closer—this mirrors China’s manufacturing export strategy: leveraging supply-chain advantages and internal competition to slash token prices aggressively.

From Bitcoin to Tokens

Before tokens, there was another electricity export wave.

Around 2015, power plant managers in Sichuan, Yunnan, and Xinjiang began receiving unusual visitors.

These individuals leased abandoned factories, filled them with dense arrays of machines, and kept them running 24/7. The machines produced nothing tangible—only endlessly solving mathematical problems. Occasionally, from this infinite computation, a bitcoin would emerge.

This was electricity export’s first generation: converting cheap hydropower and wind power—via miners’ hash computations—into globally tradable digital assets, then cashing out into U.S. dollars on exchanges.

Electricity never crossed any border—but its value flowed globally, carried by bitcoin.

For several years, China accounted for over 70% of global bitcoin mining hash rate. Its hydropower and coal power participated—albeit indirectly—in a global reallocation of capital.

In 2021, this abruptly ended. Regulatory crackdowns scattered miners, pushing hash rate migrations to Kazakhstan, Texas, and Canada.

Yet the underlying logic never vanished—it merely awaited a new shell. Then ChatGPT emerged, triggering an LLM arms race. Former bitcoin mines transformed into AI data centers; mining rigs became GPU clusters; bitcoins became tokens—electricity remained unchanged.

Bitcoin export and token export share identical underlying logic—but tokens hold greater commercial value today.

Mining is pure mathematical computation producing financial assets whose value stems solely from scarcity and market consensus—not from what is computed. Compute itself lacks productivity; it functions primarily as a side effect of trust mechanisms.

LLM inference differs. GPUs consume electricity to produce real cognitive services: code, analysis, translation, creativity. Token value derives directly from user utility, and it brings deeper integration: once a developer’s workflow depends on a specific model, switching costs accumulate over time.

Crucially, another distinction exists: bitcoin mining was expelled from China, whereas token export is actively chosen by global developers.

The Token War

The undersea cable laid in 1858 represented the British Empire’s sovereignty over the information highway of its day: whoever controls the infrastructure defines the rules of the game.

Token export likewise constitutes an undeclared war—fraught with resistance.

Data sovereignty forms the first barrier: when an American developer’s API request is processed in a Chinese data center, the data physically passes through China. For individual developers and small applications this poses no issue, but for sensitive enterprise data, financial information, or government compliance scenarios it is a hard constraint. Hence, Chinese models achieve their highest penetration in developer tools and personal applications, yet remain virtually absent from enterprise core systems.

Chip restrictions constitute the second barrier: China’s AI development faces export controls on NVIDIA’s high-end GPUs. MoE architectures and algorithmic optimizations partially offset this disadvantage—but a ceiling remains.

Yet current obstacles are merely prologue—the larger battlefield is forming.

Tokens and AI models have become a new strategic dimension in U.S.-China rivalry, comparable in significance to semiconductors and the internet in the 20th century. An even more apt analogy is the Space Race.

In 1957, the Soviet Union launched Sputnik 1, shocking the United States and ultimately spurring the Apollo Program, on which the country spent the equivalent of hundreds of billions of today’s dollars to avoid losing the space race.

The AI race follows a strikingly similar logic, but its intensity will far exceed the Space Race. Outer space is physical and distant; ordinary people rarely feel it. AI permeates the capillaries of the economy: every line of code, every contract, every government decision-making system may run on a national LLM. Whoever’s model becomes the default infrastructure for global developers wields invisible structural influence over the global digital economy.

This is precisely what unsettles Washington about China’s token export.

When a developer’s codebase, agent workflow, and product logic are all built around a Chinese model’s API, migration costs rise exponentially over time. At that point, even U.S. legislation restricting such usage would be resisted by developers voting with their feet—just as programmers today cannot abandon GitHub.

Today’s token export may only mark the opening chapter of this prolonged contest. Chinese LLMs make no claim to disrupt—they simply deliver services globally at lower prices to every developer holding an API key.

This time, the engineers laying the cable are those coding in Hangzhou, Beijing, and Shanghai—and the GPU clusters operating day and night in southern provinces.

This contest has no countdown—it proceeds 24/7, measured in tokens, fought on every developer’s terminal.
