In this “pig-butchering” scam, AI handles both romance and forging fake lawyer licenses.

In scam compounds, a single ChatGPT account handles more than half of the work.

Author: Kuli, TechFlow

OpenAI recently released a report detailing how individuals have misused ChatGPT for malicious purposes—and how OpenAI caught them.

The report is lengthy and catalogs numerous cases of AI misuse: Russian actors running disinformation campaigns, suspected spies conducting social engineering—but today, I want to focus on one specific case:

The Cambodian “pig-butchering” scam.

“Pig-butchering” scams are nothing new; stories about scam compounds in Cambodia have circulated widely. What’s unusual here is AI’s role in the operation.

In this fraud ring, ChatGPT handled dating conversations, translated managers’ instructions, drafted daily work reports, and even assessed each victim’s “kill value”—an internal term meaning the estimated amount the scammer expects to extract from that individual.

Across the entire assembly-line workflow, ChatGPT may well be the busiest employee.

OpenAI assigned this case the codename “Operation Date Bait.”

Here’s how it worked.

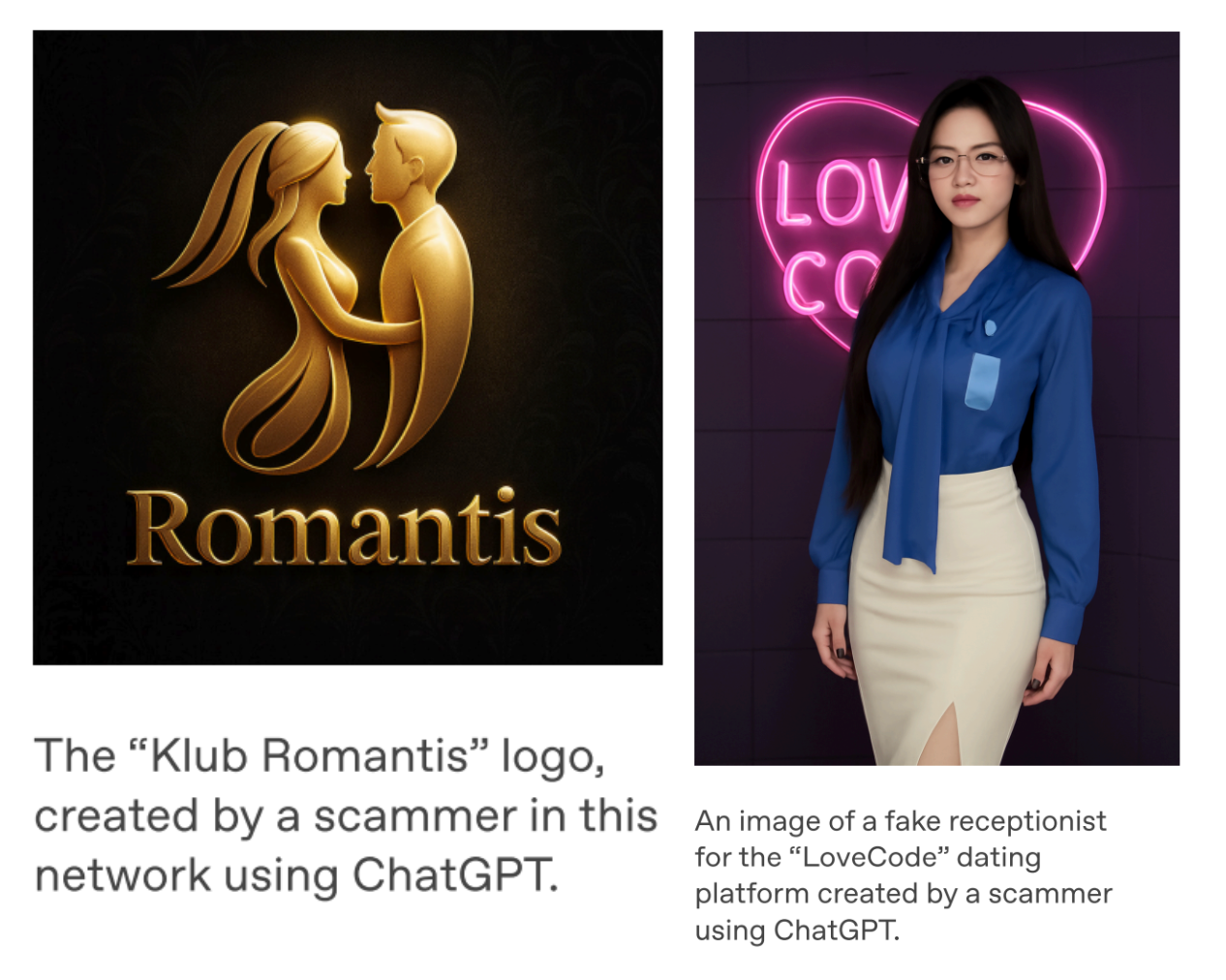

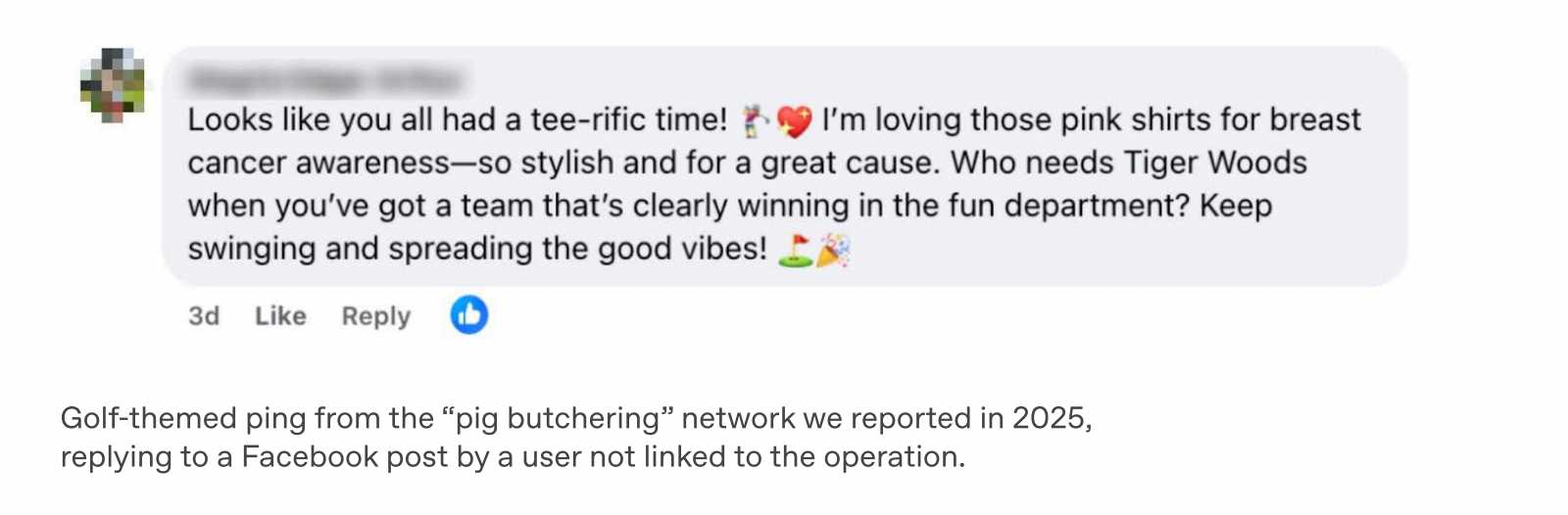

The fraud ring first created a fake premium dating service called “Klub Romantis,” whose logo was generated using ChatGPT. They then ran paid ads on social media targeting young Indonesian men, using keywords like “golf,” “yacht,” and “fine dining.”

When you clicked an ad, you’d first interact with an AI-powered chatbot posing as a flirtatious front-desk receptionist. The bot would ask you to select your preferred type of woman—and once you made your choice, it would send you a Telegram link along with a personalized invitation code.

Once you entered Telegram, real people took over.

Receptionists continued using ChatGPT to generate increasingly suggestive messages, eventually steering victims toward two fake dating platforms: “LoveCode” and “SexAction.”

These platforms displayed dozens of fabricated female profiles and featured a scrolling ticker constantly announcing, “Congratulations to [X] for completing their task and unlocking their bonus!” All of it was fabricated. A seasoned internet user might spot it instantly—but not every target will.

Once rapport was established, the receptionist handed you off to a “mentor.” The mentor then assigned “tasks,” each requiring a payment—increasingly larger sums. Tasks included purchasing VIP memberships, voting for your “favorite girl,” or paying hotel deposits—there were countless pretexts.

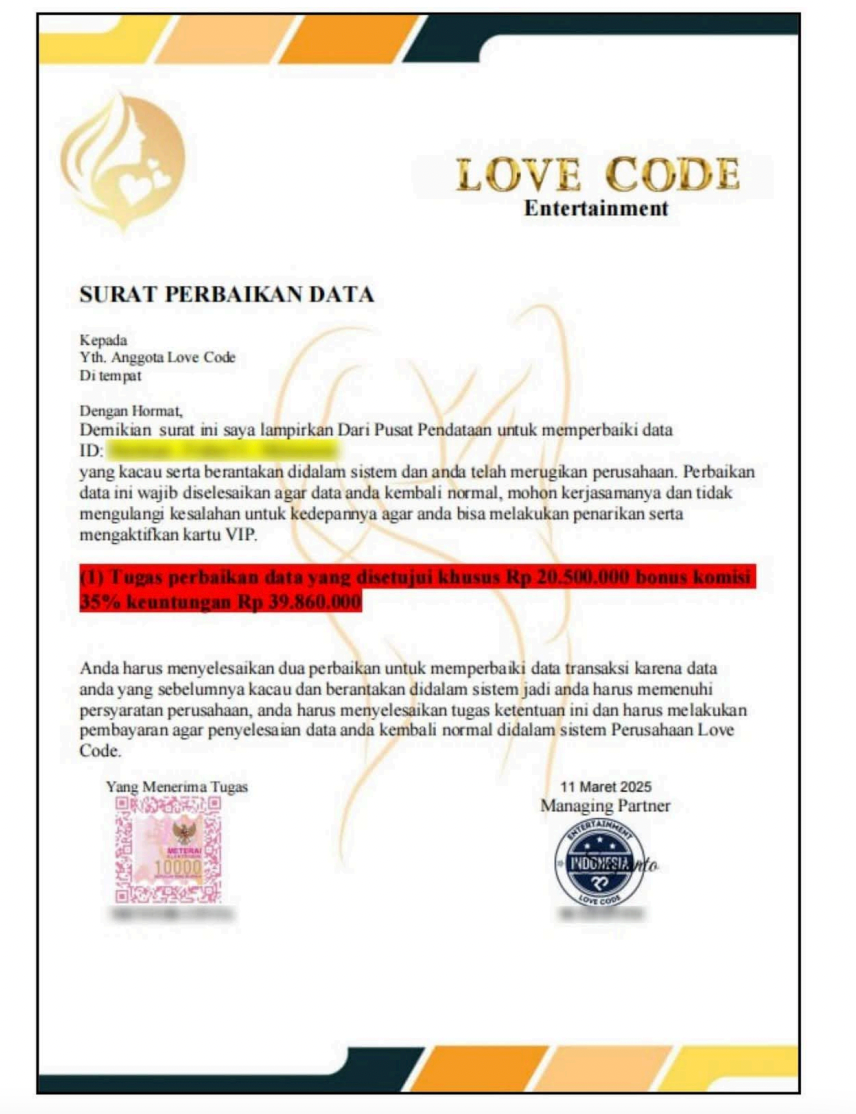

The final step, internally referred to as the “kill,” involved fabricating an excuse (e.g., a “data processing error” or a “verification deposit”) to trick you into wiring a large lump sum. OpenAI included in its report a message sent by the scammers to a victim demanding 20.5 million Indonesian rupiah (roughly USD 1,200 at recent exchange rates), promising a 35% bonus upon payment.

Once the money arrived, the scammer blocked you on Telegram and marked your case as “closed.”

At this point, you might think, “So what?”

The scam itself isn’t novel—the pig-butchering playbook has been dissected many times over the past few years. What’s truly staggering is the backend infrastructure.

By analyzing usage logs from these ChatGPT accounts, OpenAI investigators reconstructed a full corporate organizational chart:

The scam compound operated three departments: Traffic Acquisition, Reception, and Management. Traffic Acquisition handled paid advertising and lead generation; Reception managed conversations and built trust; Management oversaw the final extraction.

Daily reports were mandatory. Each listed active victims, their assigned handlers, current progress stage—and that number:

Kill Value.

This figure represented management’s estimate of the total amount they expected to extract from that individual.

They also used ChatGPT to analyze financial records, draft operational reports, and even asked how to integrate APIs or modify dating-site code. When managers spoke Chinese and staff spoke Indonesian, ChatGPT served as the real-time translator between both sides.

Amusingly, one scammer used ChatGPT to ask about tax obligations after earning income—and honestly listed their occupation as “scammer.”

OpenAI’s report uses restrained language: Based on the scammers’ own input logs, they may have been handling hundreds of targets simultaneously, generating thousands of dollars per day. Yet the report also acknowledges that these figures cannot be independently verified.

Still, I believe the precise numbers matter less than the operational framework itself:

Traffic acquisition, conversion, average order value, daily reporting, departmental division of labor—if you swapped out the terminology, you’d swear you were reading the operations manual of a SaaS startup.

And tasks like dating, translation, report-writing, coding, accounting—over half the work inside this compound was handled by a single ChatGPT account.

But the story doesn’t end there.

In the same report, OpenAI also uncovered a second operation, codenamed “Operation False Witness,” also originating from Cambodia.

This scheme didn’t target ordinary users—it targeted people who had already been scammed.

The logic is simple: You’ve fallen victim to a pig-butchering scam and want your money back, so you search online for help.

You click on an ad claiming to offer legal assistance specifically for scam victims.

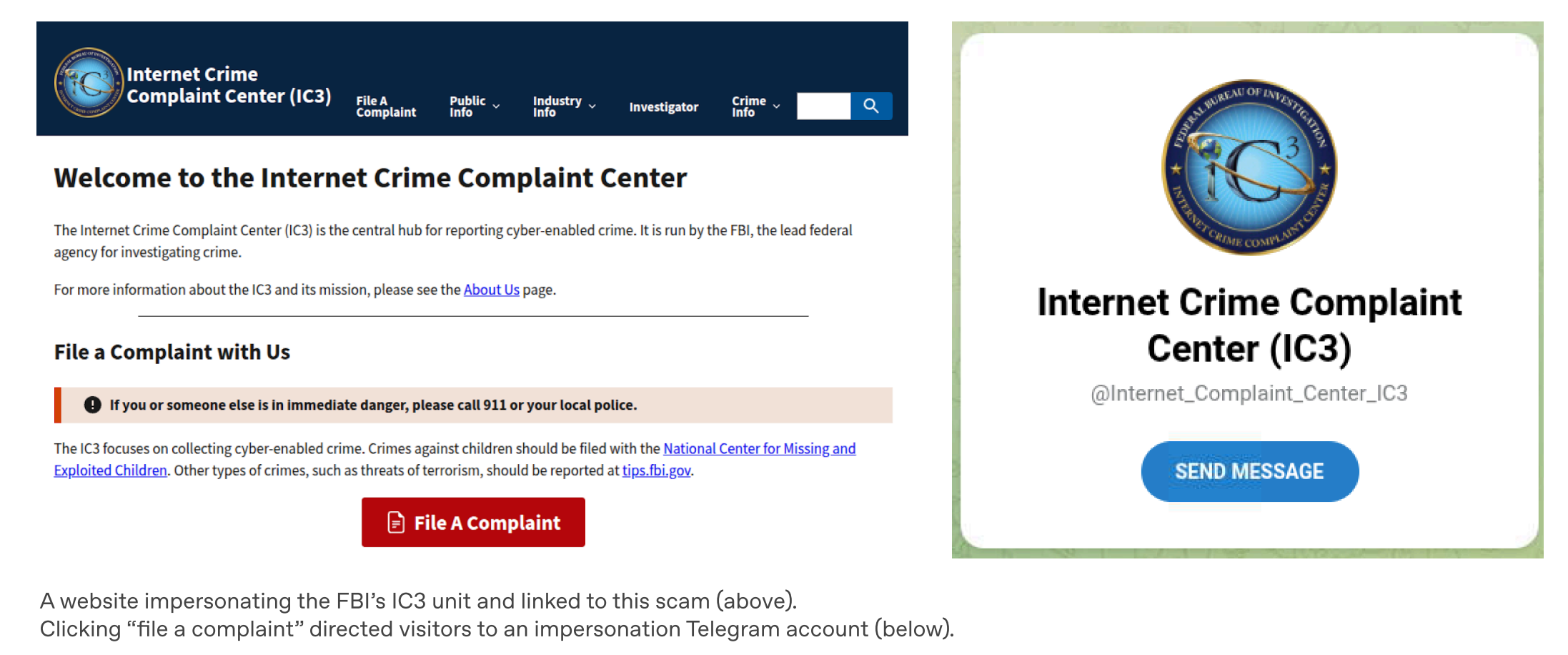

The website looks professional. Lawyer photos are either stolen from social media or AI-generated. Each fake law firm lists an address, bar license number, and biography. The scammers used ChatGPT to generate New York State Bar Association membership cards and even to forge attorney registration records.

OpenAI identified at least six such fake law firms.

One site even directly impersonated the FBI’s Internet Crime Complaint Center (IC3). Its page featured a “Submit Complaint” button—clicking it redirected users to a Telegram account.

On Telegram, the “lawyer” began chatting. Their scripts were ChatGPT-generated—explicitly instructed to use “American English” and mimic the tone of a professional attorney. They claimed partnerships with the International Criminal Court and assured victims they’d charge no fees until funds were recovered.

However, victims first needed to pay a 15% “service fee” to “activate their account”—in cryptocurrency.

They also required victims to sign confidentiality agreements—also written by ChatGPT—to prevent them from seeking third-party verification.

The FBI later issued a public warning about this scam, noting that it primarily targets elderly victims and exploits their urgent desire to recover lost funds.

After reviewing both cases, what strikes me most, now that AI has become a standard tool, is this bitterly ironic truth:

The first time you’re scammed, you’re just a target. The second time, you’re a *better* target—because you’ve already proven you’ll fall for it.

Finally, OpenAI distilled the scam process into three steps:

Step one is “ping”: cold outreach—getting your attention. Step two is “zing”: triggering emotion—making you excited, infatuated, or fearful. Step three is “sting”: extraction—taking your money.

This framework is sharp. Look closely: Which of these three steps can’t AI perform?

In traditional pig-butchering scams, human labor was the biggest cost—you needed a roomful of people typing away at computers, all fluent in the target language. Early Cambodian compounds even recruited English speakers at premium salaries.

Now consider the dating scam described in the report: managers speak Chinese, while staff and targets speak Indonesian. Managers and staff share no common language, so under previous conditions this operation could not have functioned. Add ChatGPT, and everything connects.

Language is only one piece.

The report notes another detail: Scam workers even asked ChatGPT how to integrate OpenAI’s API—aiming to fully automate the chat phase.

In other words, AI hasn’t made scams more sophisticated—the scams remain the same. AI has made scams cheaper.

Per OpenAI, this group may have been handling hundreds of targets simultaneously. As scale increases, the human labor cost per victim drops, making it feasible to target more people, even those with smaller individual payouts.

There’s another question worth deeper reflection.

OpenAI detected these activities because the scammers used ChatGPT—leaving chat logs on OpenAI’s servers.

What about those using locally deployed open-source models?

This report likely reveals only the portion of the puzzle illuminated by OpenAI’s visibility. How large is the rest? No one knows.
