The Holy Grail of Crypto AI: Frontiers of Decentralized Training

TechFlow Selected TechFlow Selected

The Holy Grail of Crypto AI: Frontiers of Decentralized Training

Decentralization is not just a means, it is itself a value.

Authored by: 0xjacobzhao and ChatGPT 4o

We would like to extend special thanks to Advait Jayant (Peri Labs), Sven Wellmann (Polychain Capital), Chao (Metropolis DAO), Jiahao (Flock), Alexander Long (Pluralis Research), Ben Fielding & Jeff Amico (Gensyn) for their valuable suggestions and feedback.

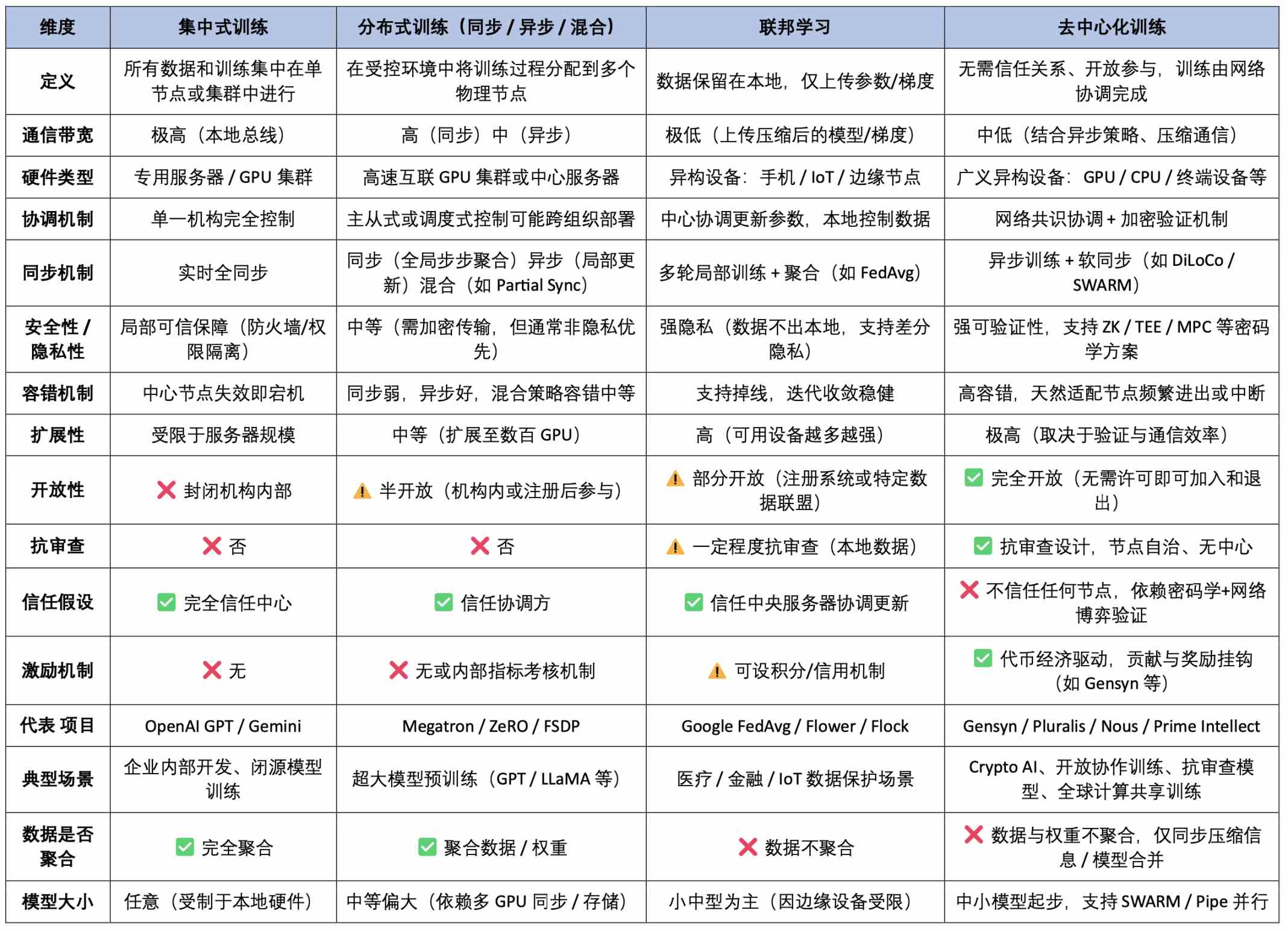

Across the full value chain of AI, model training is the most resource-intensive and technically demanding phase, directly determining a model’s capability ceiling and real-world performance. Unlike the lightweight nature of inference, training requires sustained large-scale compute power, complex data processing pipelines, and intensive optimization algorithms—it represents the true "heavy industry" of AI systems. From an architectural perspective, training paradigms can be categorized into four types: centralized training, distributed training, federated learning, and—central to this article—decentralized training.

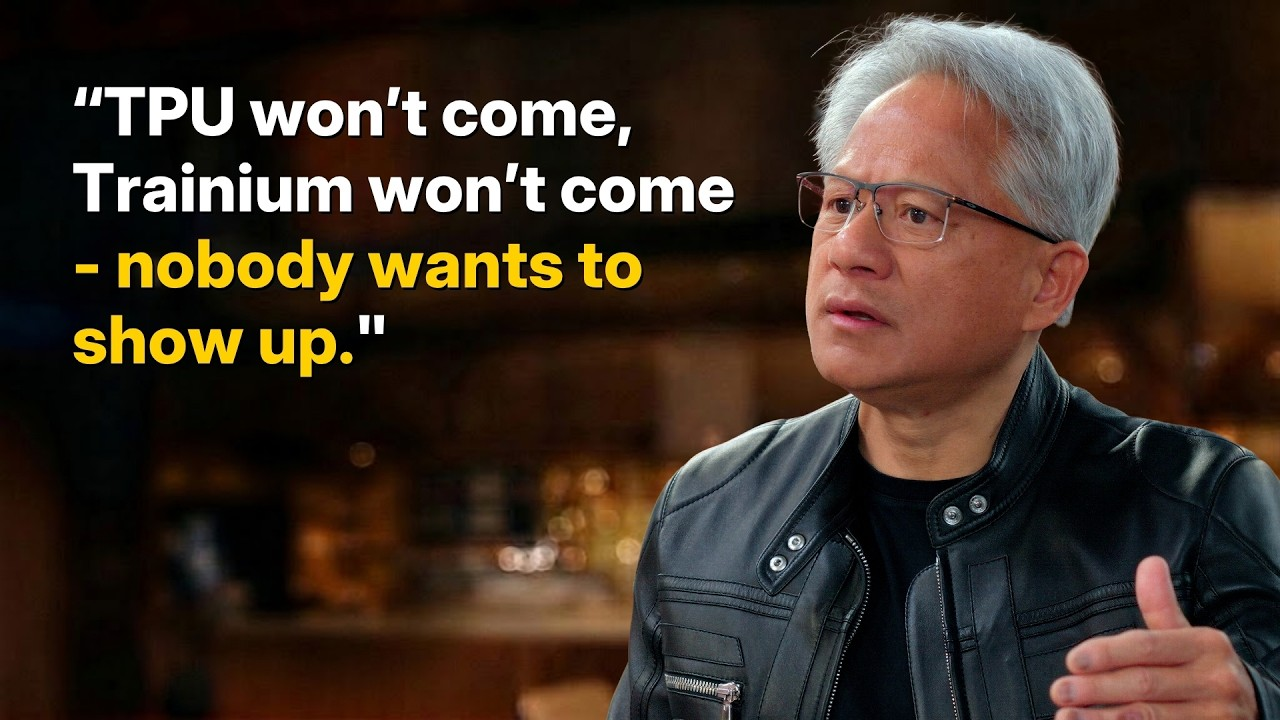

Centralized training is the most common traditional approach, where a single organization completes the entire training process within its own high-performance cluster. All components—from hardware (e.g., NVIDIA GPUs) and low-level software (CUDA, cuDNN) to cluster orchestration (e.g., Kubernetes) and training frameworks (e.g., PyTorch with NCCL backend)—are tightly coordinated under a unified control system. This deep integration enables optimal efficiency in memory sharing, gradient synchronization, and fault tolerance, making it ideal for training large models like GPT and Gemini. While highly efficient and resource-controllable, centralized training suffers from issues such as data monopolization, resource barriers, excessive energy consumption, and single points of failure.

Distributed training is currently the mainstream method for large models. Its core idea is to decompose the training task and distribute it across multiple machines to overcome the computational and memory limitations of a single device. Although physically "distributed," these systems remain centrally controlled, typically operating within high-speed local networks using technologies like NVLink for fast interconnects, with a master node coordinating subtasks. Key methods include:

-

Data Parallelism: Each node trains on different data batches while sharing model parameters, requiring synchronized weight updates;

-

Model Parallelism: Different parts of the model are deployed across nodes, enabling strong scalability;

-

Pipeline Parallelism: Execution is divided into stages executed sequentially, improving throughput;

-

Tensor Parallelism: Matrix computations are finely partitioned to increase parallel granularity.

Distributed training combines "centralized control with distributed execution"—akin to a single manager remotely directing employees in multiple offices. Today, nearly all major large models (GPT-4, Gemini, LLaMA, etc.) are trained using this paradigm.

Decentralized training, in contrast, represents a more open and censorship-resistant future path. Its defining feature is that multiple mutually untrusting nodes—ranging from home computers and cloud GPUs to edge devices—collaboratively train a model without any central coordinator. Tasks are orchestrated via protocols, and cryptographic incentive mechanisms ensure honest participation. Major challenges include:

-

Device heterogeneity and task partitioning difficulty: Coordinating diverse hardware leads to inefficient task splitting;

-

Communication bottlenecks: Unstable network conditions make gradient synchronization a key constraint;

-

Lack of trusted execution: Without secure environments, verifying genuine computation is difficult;

-

No unified coordination: The absence of a central scheduler complicates task distribution and error recovery.

Decentralized training can be visualized as a global group of volunteers contributing compute power collaboratively. However, achieving "large-scale, truly feasible decentralized training" remains a systemic engineering challenge involving architecture, communication protocols, cryptography, economic incentives, and model validation. Whether such systems can achieve "effective collaboration + honest incentives + correct outcomes" is still in early experimental stages.

Federated Learning serves as a transitional form between distributed and fully decentralized training. It emphasizes keeping data locally while aggregating model parameters centrally, making it suitable for privacy-sensitive domains like healthcare and finance. Federated learning inherits the engineering structure of distributed training and benefits from data decentralization but still relies on a trusted orchestrator, lacking full openness and censorship resistance. It functions as a "controlled decentralization" solution—moderate in design regarding trust, tasks, and communication—and is better suited as a transitional deployment model for industry adoption.

Comprehensive Comparison of AI Training Paradigms (Technical Architecture × Trust & Incentives × Application Characteristics)

Boundaries, Opportunities, and Realistic Paths for Decentralized Training

From a paradigm perspective, decentralized training is not universally applicable. Certain tasks—due to complexity, extreme resource demands, or coordination difficulties—are inherently unsuitable for efficient execution across heterogeneous, trustless nodes. For example, large model training often requires high VRAM, low latency, and high bandwidth, which are hard to achieve in open networks; tasks involving sensitive data (e.g., medical, financial, classified) face legal and ethical constraints preventing data sharing; and closed-source corporate models lack external incentive structures, limiting outside participation. These boundaries define the current practical limits of decentralized training.

However, this does not render decentralized training a mere fantasy. On the contrary, it shows clear promise in lightweight, highly parallelizable, and incentivizable tasks. Such use cases include LoRA fine-tuning, post-training alignment tasks (e.g., RLHF, DPO), crowdsourced data labeling and training, small foundational model training with constrained resources, and collaborative training involving edge devices. These tasks share traits of high parallelism, low coupling, and tolerance for heterogeneous compute—making them well-suited for peer-to-peer networks, Swarm protocols, and distributed optimizers.

Overview of Task Suitability for Decentralized Training

Analysis of Leading Decentralized Training Projects

In the frontier of decentralized training and federated learning, notable blockchain-based projects include Prime Intellect, Pluralis.ai, Gensyn, Nous Research, and Flock.io. From a technical innovation and engineering complexity standpoint, Prime Intellect, Nous Research, and Pluralis.ai have introduced significant original research, representing cutting-edge theoretical directions. Meanwhile, Gensyn and Flock.io follow clearer implementation paths and have made tangible engineering progress. This article analyzes the core technologies and architectures of these five projects, exploring their differences and complementary roles in the decentralized AI ecosystem.

Prime Intellect: Pioneer of Verifiable Reinforcement Learning Collaboration Networks

Prime Intellect aims to build a trustless AI training network where anyone can participate and receive credible rewards for their computational contributions. Through three core modules—PRIME-RL, TOPLOC, and SHARDCAST—the project seeks to create a verifiable, open, and economically sustainable decentralized AI training system.

1. Protocol Stack Architecture and Core Module Value

2. Key Training Mechanisms Explained

PRIME-RL: Asynchronous, decoupled reinforcement learning framework

PRIME-RL is a task modeling and execution framework specifically designed for decentralized training environments. Built for heterogeneous and asynchronous participation, it prioritizes reinforcement learning by structurally decoupling training, inference, and weight uploads. This allows each node to independently run its training loop and interface with verification and aggregation systems through standardized APIs. Compared to traditional supervised learning, PRIME-RL enables elastic training without a central scheduler, reducing system complexity and supporting multi-task parallelism and policy evolution.

TOPLOC: Lightweight training behavior verification mechanism

TOPLOC (Trusted Observation & Policy-Locality Check) is Prime Intellect’s core innovation for verifying training authenticity. Unlike heavy solutions like ZKML, TOPLOC avoids full model recomputation. Instead, it performs lightweight structural validation by analyzing the consistency between observation sequences and policy updates. For the first time, it treats training behavior trajectories as verifiable objects—a critical breakthrough enabling trustless reward allocation and paving the way for auditable, incentivized decentralized collaboration.

SHARDCAST: Asynchronous weight aggregation and propagation protocol

SHARDCAST is a weight propagation and aggregation protocol optimized for real-world conditions: asynchronous operations, limited bandwidth, and dynamic node states. By combining gossip-style dissemination with local synchronization strategies, it allows nodes to submit partial updates asynchronously, enabling gradual convergence and multi-version evolution of model weights. Compared to centralized or synchronous AllReduce methods, SHARDCAST significantly improves scalability and fault tolerance, forming the foundation for stable consensus and continuous iterative training.

OpenDiLoCo: Sparse asynchronous communication framework

OpenDiLoCo is an open-source communication optimization framework independently developed by the Prime Intellect team based on DeepMind’s DiLoCo concept. Designed for bandwidth constraints, device heterogeneity, and node instability, it uses sparse topologies (Ring, Expander, Small-World) to avoid costly global synchronization, relying only on local neighbors for model collaboration. With asynchronous updates and checkpoint resilience, OpenDiLoCo enables consumer-grade GPUs and edge devices to reliably participate—greatly enhancing global accessibility and serving as essential communication infrastructure for decentralized training networks.

PCCL: Collective communication library

PCCL (Prime Collective Communication Library) is a lightweight communication library tailored for decentralized AI training. It addresses the limitations of traditional libraries (e.g., NCCL, Gloo) in heterogeneous and low-bandwidth environments. Supporting sparse topologies, gradient compression, low-precision synchronization, and checkpoint recovery, PCCL runs efficiently on consumer GPUs and unstable nodes. It enhances bandwidth tolerance and device compatibility, bridging the final gap toward a truly open, trustless collaborative training network.

3. Incentive Network and Role Structure

Prime Intellect establishes a permissionless, verifiable, and economically incentivized training network allowing anyone to contribute and earn rewards. The protocol operates through three key roles:

-

Task Initiator: Defines the training environment, initial model, reward function, and validation criteria;

-

Training Node: Executes local training and submits weight updates and observation trajectories;

-

Verification Node: Uses TOPLOC to verify the authenticity of training behavior and participates in reward calculation and policy aggregation.

The core workflow includes task publishing, node training, trajectory verification, weight aggregation (via SHARDCAST), and reward distribution—an incentive loop centered on authentic training behavior.

4. INTELLECT-2: Launch of the First Verifiable Decentralized Training Model

In May 2025, Prime Intellect launched INTELLECT-2—the world’s first large reinforcement learning model trained collaboratively by asynchronous, trustless decentralized nodes, with 32 billion parameters. Trained over 400 hours by more than 100 heterogeneous GPU nodes across three continents using a fully asynchronous architecture, INTELLECT-2 demonstrates the feasibility and stability of asynchronous collaboration. More than a performance milestone, it marks the first system-wide realization of Prime Intellect’s “training-as-consensus” paradigm. Integrated with PRIME-RL (asynchronous training), TOPLOC (behavior verification), and SHARDCAST (weight aggregation), INTELLECT-2 achieves open, verifiable, and economically closed-loop decentralized training.

Performance-wise, INTELLECT-2 builds on QwQ-32B and undergoes specialized RL training in coding and mathematics, placing it at the forefront of open-source RL-finetuned models. While not surpassing closed models like GPT-4 or Gemini, its true significance lies in being the first model whose entire training process is reproducible, verifiable, and auditable. Beyond open-sourcing the model, Prime Intellect has open-sourced the training process itself—data, policy update trajectories, verification logic, and aggregation rules—all transparently accessible. This prototype embodies a globally inclusive, trustworthy, and shared-value decentralized training network.

5. Team and Funding Background

Prime Intellect raised $15 million in a seed round in February 2025, led by Founders Fund, with participation from Menlo Ventures, Andrej Karpathy, Clem Delangue, Dylan Patel, Balaji Srinivasan, Emad Mostaque, Sandeep Nailwal, and others. Previously, in April 2024, it secured $5.5 million in early funding co-led by CoinFund and Distributed Global, with support from Compound VC, Collab+Currency, and Protocol Labs. To date, total funding exceeds $20 million.

Co-founded by Vincent Weisser and Johannes Hagemann, the team spans AI and Web3 expertise, drawing members from Meta AI, Google Research, OpenAI, Flashbots, Stability AI, and the Ethereum Foundation. With deep capabilities in system architecture and distributed engineering, Prime Intellect stands among the few teams to successfully execute real-world decentralized large model training.

Pluralis: Explorer of Asynchronous Model Parallelism and Structural Compression

Pluralis is a Web3 AI project focused on building a "trustworthy collaborative training network." Its goal is to advance a decentralized, openly participatory model training paradigm with long-term incentives. Unlike conventional centralized or closed training approaches, Pluralis proposes "Protocol Learning"—a novel concept that "protocolizes" the training process through verifiable collaboration and ownership mapping, creating an open training system with built-in economic closure.

1. Core Concept: Protocol Learning

Pluralis’ Protocol Learning rests on three pillars:

-

Unmaterializable Models: Model weights are fragmented across nodes, preventing any single node from reconstructing the full model. This design makes the model a native "in-protocol asset," enabling access control, leakage prevention, and revenue binding.

-

Model-Parallel Training over Internet: Using asynchronous pipeline model parallelism (SWARM architecture), different nodes hold partial weights and collaborate over low-bandwidth networks for training or inference.

-

Partial Ownership for Incentives: Participants earn partial model ownership proportional to their contribution, granting them future revenue shares and governance rights.

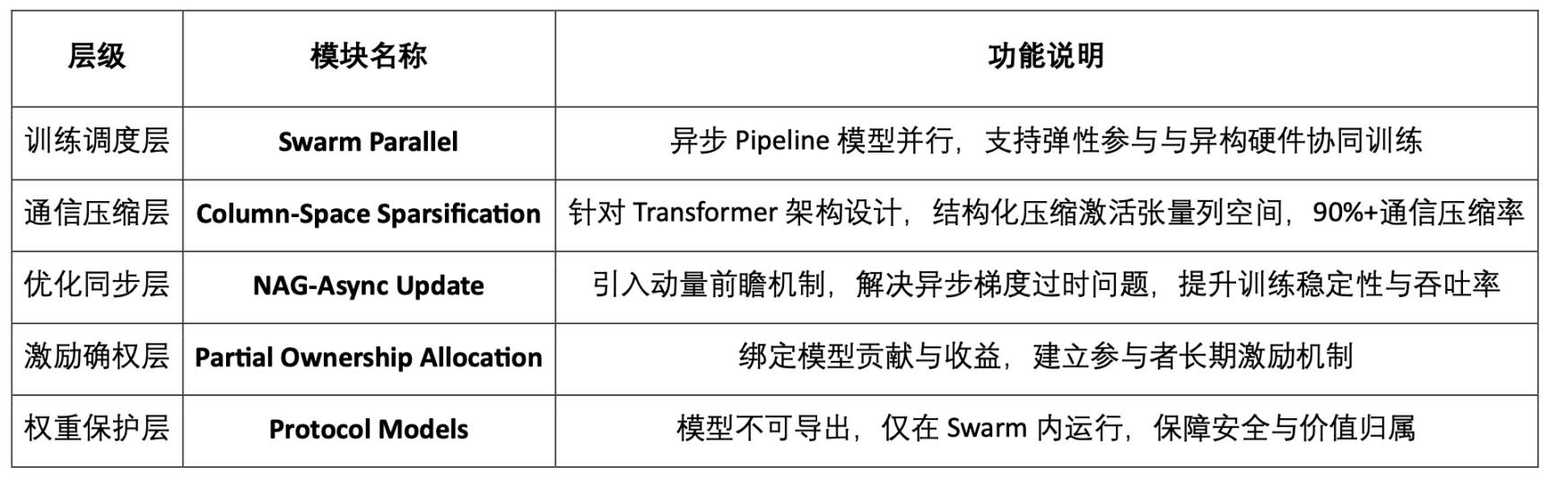

2. Technical Architecture of the Pluralis Protocol Stack

3. Key Technical Mechanisms Explained

Unmaterializable Models

First systematically proposed in “A Third Path: Protocol Learning,” this concept distributes model weights in fragments so the model can only run within the Swarm network, ensuring access and revenue are protocol-governed. This is foundational for sustainable decentralized training incentives.

Asynchronous Model-Parallel Training

In “SWARM Parallel with Asynchronous Updates,” Pluralis introduces an asynchronous pipeline-based model-parallel architecture, empirically validated on LLaMA-3. Its key innovation is applying Nesterov Accelerated Gradient (NAG) to correct gradient drift and stabilize convergence in asynchronous settings—making training feasible across heterogeneous devices under low bandwidth.

Column-Space Sparsification

Proposed in “Beyond Top-K,” this structural-aware column-space compression replaces traditional Top-K methods to preserve semantic pathways. It balances accuracy and communication efficiency, reducing communication volume by over 90% in asynchronous model-parallel environments—a breakthrough in structured, efficient communication.

4. Technical Positioning and Strategic Direction

Pluralis explicitly focuses on "asynchronous model parallelism," highlighting advantages over data parallelism:

-

Supports low-bandwidth networks and inconsistent nodes;

-

Accommodates device heterogeneity, enabling consumer-grade GPU participation;

-

Naturally supports elastic scheduling with frequent node joins/exits;

-

Breakthroughs in structural compression, asynchronous updates, and non-extractable weights.

Based on six technical blog posts published on its website, the project's content is organized around three themes:

-

Philosophy and Vision: “A Third Path: Protocol Learning,” “Why Decentralized Training Matters”;

-

Technical Details: “SWARM Parallel,” “Beyond Top-K,” “Asynchronous Updates”;

-

Institutional Innovation: “Unmaterializable Models,” “Partial Ownership Protocols.”

Pluralis has not yet launched a product, testnet, or open-sourced code, due to the extreme technical challenges of its chosen path—requiring resolution of system-level problems (architecture, communication, non-exportable weights) before productization.

In June 2025, Pluralis Research extended its decentralized training framework to fine-tuning, supporting asynchronous updates, sparse communication, and partial weight aggregation. Moving beyond earlier theoretical and pretraining focus, this work emphasizes deployability, signaling further maturity in end-to-end training architecture.

5. Team and Funding Background

Pluralis completed a $7.6 million seed round in 2025, co-led by Union Square Ventures (USV) and CoinFund. Founder Alexander Long holds a PhD in machine learning, combining mathematical and systems research expertise. The core team consists entirely of PhD-level ML researchers, reflecting a deeply technical, paper-driven approach. With no BD/growth team yet, the focus remains on solving fundamental challenges in low-bandwidth asynchronous model parallelism.

Gensyn: A Verifiable Execution Layer for Decentralized Training

Gensyn is a Web3 AI project focused on "trusted execution of deep learning tasks." Rather than rearchitecting models or training paradigms, Gensyn builds a verifiable, distributed training execution network covering the full lifecycle: task distribution, training execution, result verification, and fair incentives. Through off-chain training and on-chain verification, Gensyn creates an efficient, open, and incentivized global training market—turning "training as mining" into reality.

1. Project Positioning: The Execution Protocol Layer

Gensyn is less about "how to train" and more about "who trains, how results are verified, and how rewards are distributed." At its core, it is a verifiable computing protocol for training tasks, addressing:

-

Who executes training (compute distribution and dynamic matching);

-

How to verify outputs (without full recomputation, only disputed operators are checked);

-

How to allocate rewards (via stake, slashing, and multi-role game mechanics).

2. Technical Architecture Overview

3. Module Breakdown

RL Swarm: Collaborative reinforcement learning system

Gensyn’s RL Swarm is a decentralized multi-model collaborative optimization system for post-training stages, featuring:

-

Answering Phase: Each node independently generates responses;

-

Critique Phase: Nodes evaluate each other’s outputs to identify best answers and reasoning;

-

Resolving Phase: Nodes predict group preferences and adjust their own answers accordingly, updating local weights.

RL Swarm enables independent local training without gradient synchronization, naturally adapting to heterogeneous compute and unstable networks. It supports elastic node joining/leaving and draws inspiration from RLHF and multi-agent博弈, but aligns more closely with dynamic reasoning networks. Nodes are rewarded based on alignment with group consensus, driving continuous improvement and convergence. RL Swarm enhances robustness and generalization in open networks and has been deployed on Gensyn’s Ethereum Rollup-based Testnet Phase 0.

Verde + Proof-of-Learning: Trusted verification mechanism

Gensyn’s Verde module combines three techniques:

-

Proof-of-Learning: Validates whether training actually occurred using gradient traces and metadata;

-

Graph-Based Pinpoint: Identifies divergent nodes in the computation graph and re-runs only specific operations;

-

Refereed Delegation: Uses arbitration-style verification—verifiers and challengers dispute results and validate locally—drastically reducing costs.

Compared to ZKP or full recomputation, Verde offers a superior balance between verifiability and efficiency.

SkipPipe: Fault-tolerant communication optimization

SkipPipe addresses communication bottlenecks in low-bandwidth and node-drop scenarios:

-

Skip Ratio: Skips blocked nodes to prevent training stalls;

-

Dynamic scheduling: Generates optimal execution paths in real time;

-

Fault tolerance: Maintains ~93% inference accuracy even with 50% node failures.

It boosts training throughput by up to 55%, enabling early-exit inference, seamless reordering, and inference completion.

HDEE: Heterogeneous Domain-Expert Ensembles

HDEE optimizes training in multi-domain, multimodal, multi-task settings with uneven data distributions and varying difficulty, under heterogeneous compute and bandwidth conditions. Key features:

-

MHe-IHo: Assigns differently sized models to tasks of varying difficulty (model heterogeneity, uniform step count);

-

MHo-IHe: Uniform task difficulty with asynchronous step adjustments;

-

Supports plug-and-play expert models and training strategies for adaptability and fault tolerance;

-

Emphasizes "parallel collaboration + minimal communication + dynamic expert assignment," fitting complex real-world task ecosystems.

Multi-Role Game Mechanism: Trust and incentives in parallel

Gensyn involves four participant roles:

-

Submitter: Posts training tasks, defines structure and budget;

-

Solver: Executes tasks and submits results;

-

Verifier: Validates training compliance;

-

Whistleblower: Challenges verifiers, earning arbitration rewards or facing slashing.

Inspired by Truebit’s economic game design, this mechanism inserts errors randomly and uses arbitration to incentivize honest behavior, ensuring network reliability.

4. Testnet and Roadmap

5. Team and Funding Background

Gensyn was co-founded by Ben Fielding and Harry Grieve, headquartered in London, UK. In May 2023, it raised $43 million in a Series A round led by a16z crypto, with participation from CoinFund, Canonical, Ethereal Ventures, Factor, and Eden Block. The team blends expertise in distributed systems and ML engineering, dedicated to building a verifiable, trustless large-scale AI training execution network.

Nous Research: Cognitive Evolutionary Training System Driven by Agent-Centric AI

Nous Research is one of the few teams balancing philosophical depth with engineering rigor in decentralized training. Rooted in the concept of "Desideratic AI," it views AI not as a controllable tool but as a subjective, evolving intelligent agent. Unlike optimizing training for efficiency, Nous treats it as the formation of cognitive subjects. Guided by this vision, Nous focuses on building an open training network—heterogeneous, no central scheduler, resistant to censorship—with full-stack tooling for practical deployment.

1. Philosophical Foundation: Redefining the Purpose of Training

Rather than focusing on incentive design or token economics, Nous challenges the very premise of training:

-

Opposes "alignmentism": Rejects human-control-centric "taming" of models, advocating for independent cognitive styles;

-

Emphasizes model subjectivity: Believes base models should retain uncertainty, diversity, and hallucination as virtues;

-

Training as cognitive formation: Models are not just optimized for task completion but are participants in cognitive evolution.

This romantic view reflects the core logic behind Nous' infrastructure design: how to enable heterogeneous models to evolve in open networks rather than being uniformly conditioned.

2. Core Training Framework: Psyche Network and DisTrO Optimizer

Nous’ key contribution is the Psyche network and DisTrO (Distributed Training Over-the-Internet), forming the execution backbone of decentralized training. Together, they offer core capabilities: communication compression (using DCT + 1-bit sign encoding to minimize bandwidth), node adaptability (supporting heterogeneous GPUs, disconnections, and autonomous exits), asynchronous fault tolerance (continuous training without synchronization), and decentralized scheduling (no central coordinator, using blockchain for consensus and task distribution). This architecture provides a realistic technical foundation for low-cost, resilient, and verifiable open training networks.

The design prioritizes practicality: no reliance on central servers, compatible with global volunteer nodes, and offering on-chain traceability of training outcomes.

3. Hermes / Forge / TEE_HEE: Reasoning and Agent Ecosystem

Beyond infrastructure, Nous explores agent-centric AI through several experimental systems:

1. Hermes Open-Source Model Series: Hermes 1–3 are representative open models based on LLaMA 3.1, available in 8B, 70B, and 405B parameter scales. They embody Nous’ philosophy of "de-instructionalization and diversity preservation," excelling in long-context retention, role-playing, and multi-turn dialogue.

2. Forge Reasoning API: A multimodal reasoning framework combining:

-

MCTS (Monte Carlo Tree Search): For strategic search in complex tasks;

-

CoC (Chain of Code): Integrates code chains with logical reasoning;

-

MoA (Mixture of Agents): Enables multiple models to negotiate, broadening output diversity.

This system promotes "non-deterministic reasoning" and compositional generation, challenging traditional instruction-alignment paradigms.

3. TEE_HEE: Autonomous agent experiment. TEE_HEE explores whether AI can operate independently within a Trusted Execution Environment (TEE) with a unique digital identity. Equipped with its own Twitter and Ethereum account, all controls are managed by a remote-verifiable enclave, inaccessible to developers. The goal is to build tamper-proof, intentionally autonomous AI agents—a crucial step toward self-governing intelligence.

4. AI Behavior Simulator Platform: Includes WorldSim, Doomscroll, Gods & S8n—simulators studying AI behavioral evolution and value formation in multi-agent social environments. Though not part of training, these experiments lay semantic groundwork for long-term autonomous AI cognition.

4. Team and Funding Overview

Founded in 2023 by Jeffrey Quesnelle (CEO), Karan Malhotra, Teknium, and Shivani Mitra, the team blends philosophy and systems engineering, with backgrounds in ML, security, and decentralized networks. It raised $5.2 million in seed funding in 2024, followed by a $50 million Series A led by Paradigm in April 2025, reaching a $1 billion valuation—joining the ranks of Web3 AI unicorns.

Flock: Blockchain-Enhanced Federated Learning Network

Flock.io is a blockchain-based federated learning platform aiming to decentralize AI training in data, computation, and models. Flock follows a "federated learning + blockchain reward layer" integration model—an evolutionary upgrade of traditional FL architectures rather than a radical rethinking of training protocols. Compared to decentralized training projects like Gensyn, Prime Intellect, Nous Research, and Pluralis, Flock prioritizes privacy and usability improvements over theoretical breakthroughs in communication or verification. Its closest comparators are FL systems like Flower, FedML, and OpenFL.

1. Core Mechanisms of Flock.io

1. Federated Learning Architecture: Emphasizes data sovereignty and privacy

Flock follows the classic Federated Learning (FL) paradigm, enabling data owners to collaboratively train a unified model without sharing raw data—addressing data sovereignty, security, and trust. Key steps:

-

Local Training: Each participant (Proposer) trains locally without uploading raw data;

-

On-Chain Aggregation: Submit local weight updates; Miner nodes aggregate into a global model;

-

Committee Evaluation: VRF-randomized Voter nodes assess model performance using independent test sets;

-

Incentives and Penalties: Rewards or slashes are applied based on scores, resisting malicious behavior and maintaining dynamic trust.

2. Blockchain Integration: Enables trustless system coordination

Flock moves core training processes—task assignment, model submission, evaluation, incentives—on-chain for transparency, verifiability, and censorship resistance. Key mechanisms:

-

VRF Random Election: Ensures fair, anti-manipulative rotation of Proposers and Voters;

-

Staking Mechanism (PoS): Uses token staking and slashing to constrain node behavior;

-

On-Chain Incentive Automation: Smart contracts automatically distribute rewards and enforce slashing based on task completion and evaluation—eliminating intermediaries.

3. zkFL: Zero-knowledge aggregation for privacy. Flock introduces zkFL, allowing Proposers to submit zero-knowledge proofs of their updates. Voters can verify correctness without accessing gradients—enhancing privacy and trust simultaneously, marking a significant innovation in merging privacy with verifiability in FL.

2. Core Product Components

AI Arena: Flock.io’s decentralized training platform. Users access train.flock.io to participate as trainers, validators, or delegators, earning rewards by submitting models, evaluating performance, or staking tokens. Initially task-driven by the official team, future plans include community co-creation.

FL Alliance: Federated learning client enabling private data fine-tuning. With VRF elections, staking, and slashing, it ensures honest collaboration and efficiency—bridging community training with real-world deployment.

AI Marketplace: A model co-creation and deployment hub. Users can propose models, contribute data, invoke services, integrate databases, and leverage RAG-enhanced reasoning—accelerating AI adoption across practical scenarios.

3. Team and Funding Overview

Flock.io was founded by Sun Jiahao and has issued its platform token, FLOCK. Total funding: $11 million, from investors including DCG, Lightspeed Faction, Tagus Capital, Animoca Brands, Fenbushi, and OKX Ventures. In March 2024, it raised $6 million in seed funding to launch its testnet and FL client; in December, an additional $3 million plus support from the Ethereum Foundation, focusing on blockchain-driven AI incentive mechanisms. Currently, the platform hosts 6,428 models, 176 training nodes, 236 validators, and 1,178 delegators.

Compared to fully decentralized training projects, Flock-type federated systems offer superior training efficiency, scalability, and privacy protection—ideal for medium/small model collaboration. Pragmatic and deployable, they prioritize engineering feasibility. In contrast, projects like Gensyn and Pluralis pursue deeper theoretical innovations in training and communication, facing greater system challenges but closer to the ideal of "trustless, decentralized" paradigms.

EXO: Attempting Decentralized Training on Edge Devices

EXO is a leading AI project in edge computing, aiming to enable lightweight AI training, inference, and agent applications on consumer-grade home devices. Its decentralized training path emphasizes "low communication overhead + local autonomous execution," leveraging the DiLoCo asynchronous delayed synchronization algorithm and SPARTA sparse parameter exchange to drastically reduce bandwidth needs. At the system level, EXO does not build an on-chain network or introduce economic incentives. Instead, it offers EXO Gym—a single-machine, multi-process simulation framework—enabling researchers to rapidly validate and experiment with distributed training methods locally.

1. Core Mechanisms Overview

-

DiLoCo Asynchronous Training: Nodes synchronize every H steps, adapting to unstable networks;

-

SPARTA Sparse Synchronization: Exchanges only a tiny fraction of parameters per step (e.g., 0.1%), preserving model coherence while minimizing bandwidth;

-

Asynchronous Composite Optimization: Combines both for optimal trade-offs between communication and performance;

-

evML Verification Exploration: Edge-Verified Machine Learning (evML) proposes using TEE/Secure Context for low-cost computation verification. Remote attestation and spot-checking allow edge devices to participate without staking—a pragmatic compromise between economic security and privacy.

2. Tools and Use Cases

-

EXO Gym: Simulates multi-node training on a single device, supporting communication strategy experiments for models like NanoGPT, CNN, and Diffusion;

-

EXO Desktop App: A personal AI desktop tool supporting local LLMs, iPhone mirroring, and privacy-preserving integration with personal context (SMS, calendar, video logs).

EXO Gym functions more as an exploratory decentralized training lab, integrating existing communication compression techniques (DiLoCo, SPARTA) to lighten training workflows. Compared to Gensyn, Nous, or Pluralis, EXO has not yet advanced to on-chain collaboration, verifiable incentives, or real distributed network deployment.

The Front-End Engine: A Comprehensive Study of Model Pretraining

Facing core challenges in decentralized training—device heterogeneity, communication bottlenecks, coordination difficulties, and lack of trusted execution—Gensyn, Prime Intellect, Pluralis, and Nous Research have proposed differentiated system architectures. Across training methods and communication mechanisms, these projects showcase distinct technical focuses and engineering logics.

In terms of training optimization, the four explore different dimensions—collaborative strategy, update mechanisms, asynchronous control—covering stages from pretraining to post-training.

-

Prime Intellect’s PRIME-RL is an asynchronous scheduling structure for pretraining, using "local training + periodic sync" to enable efficient, verifiable training in heterogeneous environments. Highly flexible and generally applicable, it presents high theoretical innovation in training control, with medium-high engineering difficulty due to demands on communication and control layers.

-

Nous Research’s DeMo optimizer targets training stability in asynchronous, low-bandwidth settings, achieving a high-fault-tolerance gradient update flow across heterogeneous GPUs—one of the few to unify theory and practice in "asynchronous communication compression." High theoretical and engineering difficulty, especially in asynchronous coordination precision.

-

Pluralis’ SWARM + NAG is among the most systematic and groundbreaking designs in asynchronous training. Based on asynchronous model parallelism, it integrates column-space sparsity and NAG momentum correction to enable stable convergence under low bandwidth. Extremely high theoretical innovation—structurally pioneering—with equally high engineering complexity requiring deep integration of multi-level synchronization and model partitioning.

-

Gensyn’s RL Swarm primarily serves post-training, focusing on policy fine-tuning and agent collaboration. Its "generate-evaluate-vote" flow suits dynamic adjustment of complex behaviors in multi-agent systems. Medium-high theoretical innovation in agent logic, moderate engineering difficulty centered on scheduling and convergence control.

At the communication level, each project addresses bandwidth, heterogeneity, and stability with targeted solutions.

-

Prime Intellect’s PCCL is a lower-level communication library replacing NCCL, providing robust collective communication. Medium-high theoretical innovation in fault-tolerant algorithms, medium engineering difficulty with good modularity.

-

Nous Research’s DisTrO is the communication core of DeMo, minimizing bandwidth while maintaining training continuity. High theoretical innovation in scheduling design, high engineering difficulty due to compression and synchronization precision.

-

Pluralis’ communication is deeply embedded in SWARM, drastically reducing communication load while maintaining convergence and throughput. High theoretical innovation, setting a new standard for asynchronous model communication; extremely high engineering difficulty requiring distributed model orchestration and structural sparsity control.

-

Gensyn’s SkipPipe is a fault-tolerant scheduler for RL Swarm. Low deployment cost, focused on enhancing training stability in production. Moderate theoretical innovation (engineering-focused), low difficulty but highly practical.

Additionally, we can evaluate decentralized training projects across two macro dimensions:

Blockchain Collaboration Layer: Focuses on protocol trust and incentive logic

-

Verifiability: Can training be verified? Are games or cryptography used to establish trust?

-

Incentive Design: Are there token rewards or role-based incentives?

-

Openness and Accessibility: Are nodes easy to join? Is the system permissionless?

AI Training System Layer: Emphasizes engineering capability and performance

-

Scheduling and Fault Tolerance: Support for fault tolerance, asynchrony, dynamic, and distributed scheduling;

-

Training Method Optimization: Innovations in training algorithms or structures;

-

Communication Optimization: Gradient compression, sparse communication, low-bandwidth adaptation.

The table below systematically evaluates Gensyn, Prime Intellect, Pluralis, and Nous Research across technical depth, engineering maturity, and theoretical innovation in decentralized training.

The Back-End Ecosystem: LoRA-Based Model Fine-Tuning

Within the full decentralized training value chain, projects like Prime Intellect, Pluralis.ai, Gensyn, and Nous Research primarily focus on pretraining infrastructure—communication, coordination, and collaborative optimization. Another category focuses on post-training stages: model adaptation and inference deployment (fine-tuning & inference delivery), without engaging in pretraining, parameter sync, or communication optimization. Representative projects include Bagel, Pond, and RPS Labs—all centering on LoRA fine-tuning, forming the critical "back-end" segment of the decentralized training ecosystem.

LoRA + DPO: Practical Pathways for Web3 Fine-Tuning Deployment

LoRA (Low-Rank Adaptation) is an efficient parameter-efficient fine-tuning method that injects low-rank matrices into pretrained models to learn new tasks while freezing original weights. This drastically reduces training cost and resource usage, accelerating fine-tuning and deployment flexibility—ideal for modular, composable Web3 applications.

Traditional LLMs like LLaMA and GPT-3 contain billions or trillions of parameters, making full fine-tuning prohibitively expensive. LoRA sidesteps this by training only a small set of injected parameters, becoming one of the most practical methods today.

Direct Preference Optimization (DPO), a recent post-training method, is often paired with LoRA for behavior alignment. Unlike RLHF, which requires complex reward modeling and reinforcement learning, DPO directly optimizes preference pairs—simpler, more stable, and better suited for lightweight, resource-constrained environments. Due to its efficiency, DPO is increasingly adopted by decentralized AI projects for alignment.

Reinforcement Learning (RL): Future Evolution of Decentralized Fine-Tuning

Long-term, many projects see RL as the most adaptive and evolution-capable path for decentralized training. Unlike static-data supervision or parameter tuning, RL emphasizes continuous strategy optimization through environmental interaction—naturally fitting Web3’s asynchronous, heterogeneous, incentive-driven collaboration. RL enables personalized, incremental learning, providing evolvable "behavioral intelligence" for agent networks, on-chain markets, and smart economies.

This paradigm aligns philosophically with decentralization and offers systemic advantages. However, high engineering barriers and complex coordination limit widespread adoption—for now.

Notably, Prime Intellect’s PRIME-RL and Gensyn’s RL Swarm are pushing RL from a post-training technique toward a core pretraining architecture—building trustless, collaborative training systems centered on RL.

Bagel (zkLoRA): Verifiable Layer for LoRA Fine-Tuning

Bagel leverages LoRA fine-tuning and integrates zero-knowledge proofs (ZK) to solve trust and privacy issues in "on-chain model fine-tuning." zkLoRA does not perform actual training but offers a lightweight, verifiable mechanism—allowing users to confirm a fine-tuned model originated from a specified base model and LoRA parameters, without accessing data or weights.

Unlike Gensyn’s Verde or Prime Intellect’s TOPLOC—which verify whether "training behavior actually occurred"—Bagel focuses on static verification of "whether the fine-tuned result is trustworthy." zkLoRA’s strengths are low verification cost and strong privacy, though its scope is limited to minor-parameter-change tasks.

Pond: Fine-Tuning and Agent Evolution Platform for GNNs

Pond is the only decentralized training project focused exclusively on Graph Neural Network (GNN) fine-tuning, serving structured data applications like knowledge graphs, social networks, and transaction graphs. By enabling users to upload graph data and provide training feedback, it offers a lightweight, controllable platform for personalized tasks.

Using efficient fine-tuning methods like LoRA, Pond aims to build modular, deployable agent systems—pioneering a new path for "small-model fine-tuning + multi-agent collaboration" in decentralized contexts.

RPS Labs: AI-Powered Liquidity Engine for DeFi

RPS Labs is a decentralized training project based on Transformer architecture, applying fine-tuned AI models to DeFi liquidity management, primarily in the Solana ecosystem. Its flagship product, UltraLiquid, is an active market-making engine that dynamically adjusts liquidity parameters using fine-tuned models—reducing slippage, increasing depth, and improving token issuance and trading experience.

RPS also offers UltraLP, helping liquidity providers optimize fund allocation on DEXs in real time—boosting capital efficiency and reducing impermanent loss—demonstrating the practical value of AI fine-tuning in finance.

From Front-End Engines to Back-End Ecosystems: The Road Ahead for Decentralized Training

The decentralized training ecosystem divides into two main categories: front-end engines for pretraining, and back-end ecosystems

Join TechFlow official community to stay tuned

Telegram:https://t.me/TechFlowDaily

X (Twitter):https://x.com/TechFlowPost

X (Twitter) EN:https://x.com/BlockFlow_News