The world's largest open-source video model is now made in China, built by StepFun


In the future world of large AI models, Chinese strength will neither be absent nor fall behind.

Author: Heng Yu, reporting from Aofeisi Temple

Image source: Generated by Wujie AI

StepFun has just teamed up with Geely Auto Group to open-source two multimodal large models!

The new models include:

- Step-Video-T2V, the world's largest open-source video generation model by parameter count

- Step-Audio, the industry's first product-grade open-source speech interaction large model

StepFun, a leader in multimodal models, is now open-sourcing them. Step-Video-T2V is released under the highly permissive MIT license, which allows free modification and commercial use.

(As usual, links to GitHub, Hugging Face, and ModelScope are at the end of this article)

During the development of these two large models, both parties complemented each other in computing power, algorithms, and scenario training, "significantly enhancing the performance of multimodal large models."

According to the official technical report, both released models perform excellently on benchmarks, surpassing similar open-source models domestically and internationally.

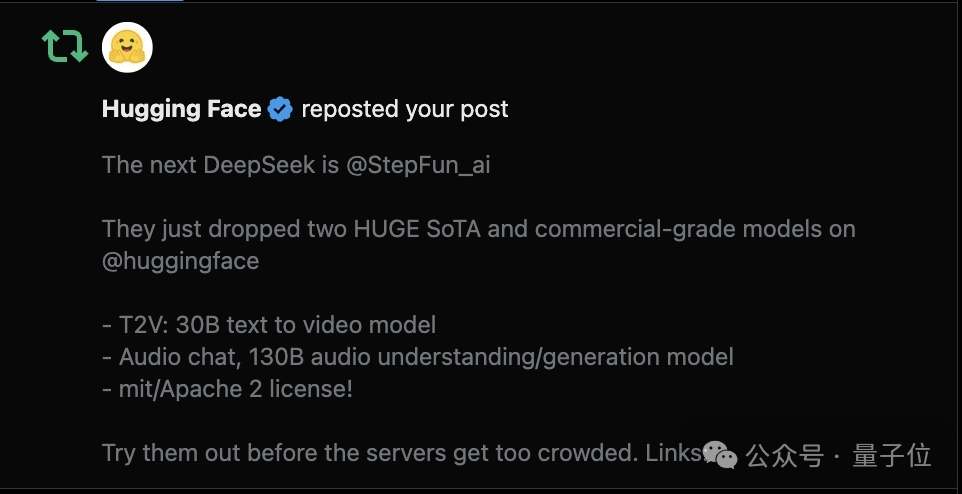

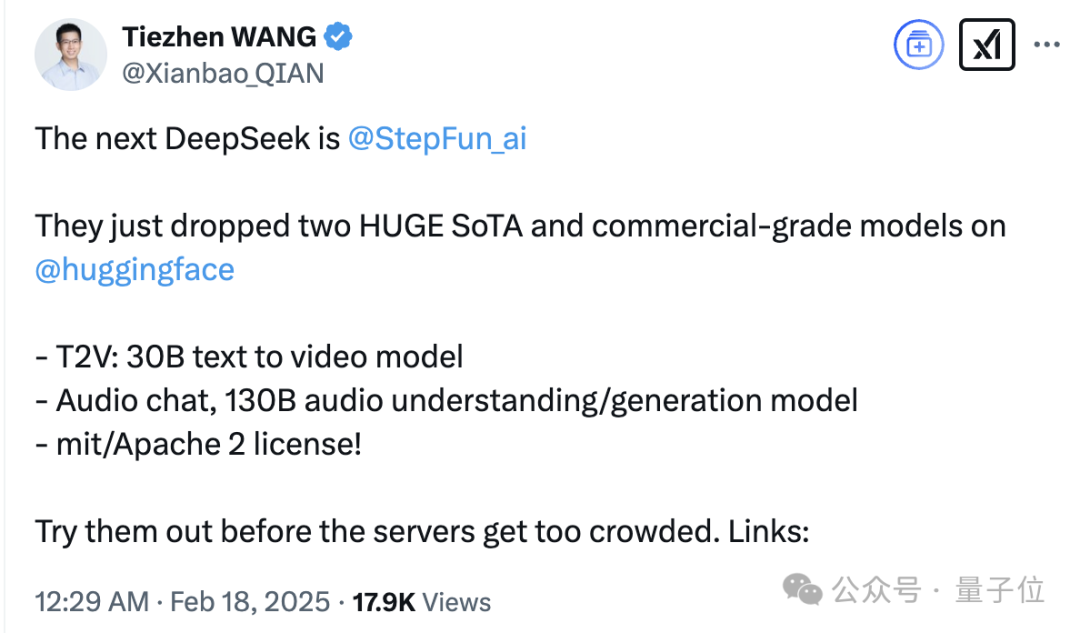

Hugging Face’s official account also shared high praise from its China head.

Highlight: "The next DeepSeek," "HUGE SoTA."

Oh, really?

In this article, QuantumBit digs into the technical reports and runs hands-on tests to see whether the models truly live up to the hype.

QuantumBit has confirmed that both newly open-sourced models are already integrated into StepFun's Yuewen App, so anyone can try them.

First Open-Sourcing of Multimodal Models by the Multimodal Leader

Step-Video-T2V and Step-Audio are StepFun's first open-sourced multimodal models.

Step-Video-T2V

Let’s start with the video generation model Step-Video-T2V.

With 30B parameters, it is the largest known open-source video generation model globally in terms of parameter count, natively supporting both Chinese and English input.

Officially, Step-Video-T2V features four key technical highlights:

First, it can directly generate videos up to 204 frames long at 540P resolution, ensuring high consistency and information density in generated content.

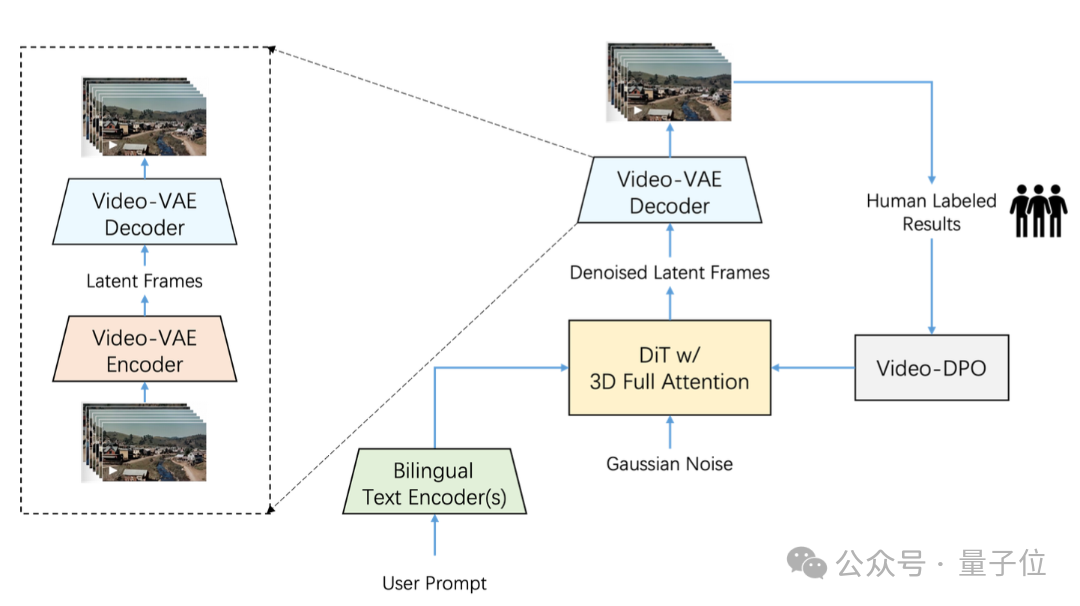

Second, a high-compression-ratio Video-VAE was designed and trained specifically for video generation tasks. Under the premise of maintaining reconstruction quality, it compresses spatial dimensions by 16×16 and temporal dimension by 8 times.

Most VAE models on the market today compress by 8x8x4. For the same number of video frames, Video-VAE's 16x16x8 is a further 8x compression, which according to StepFun improves training and generation efficiency by 64x.
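The arithmetic behind these figures can be checked with a short sketch. The function name is illustrative. Reading the 64x figure as the square of the 8x reduction is an assumption, not something stated in the article; it is what one would expect if the dominant cost were self-attention, which grows quadratically with the number of latent positions.

```python
def compression_factor(height: int, width: int, time: int) -> int:
    """Number of input positions folded into a single latent position."""
    return height * width * time


# Ratios quoted in the article: 16x16 spatial and 8x temporal for Video-VAE,
# versus 8x8 spatial and 4x temporal for most existing VAEs.
video_vae = compression_factor(16, 16, 8)
typical_vae = compression_factor(8, 8, 4)

# How many times fewer latent positions Video-VAE yields for the same clip.
extra_compression = video_vae // typical_vae

# Assumption (not from the article): cost dominated by self-attention,
# which scales with the square of the sequence length.
quadratic_gain = extra_compression ** 2

print(video_vae, typical_vae, extra_compression, quadratic_gain)
# prints: 2048 256 8 64
```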

Third, extensive system-level optimization has been performed on hyperparameters, model architecture, and training efficiency of the DiT model, ensuring efficient and stable training processes.

Fourth, a complete training strategy including pre-training and post-training phases is detailed, covering training tasks, learning objectives, data construction, and filtering methods at each stage.

In addition, Step-Video-T2V introduces Video-DPO (video preference optimization) in the final training phase. This is an RL-based optimization algorithm tailored for video generation that further improves video quality, making the generated results more plausible and stable.

The ultimate result is smoother motion, richer details, and more accurate instruction alignment in generated videos.
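For readers unfamiliar with preference optimization, the generic DPO objective that the name Video-DPO alludes to can be sketched as follows. This is the standard formulation from language modelling, not StepFun's implementation. The article does not describe how Step-Video-T2V adapts it to video generation, and the function name, the `beta` value, and the log-probability inputs are all illustrative assumptions.

```python
import math


def dpo_loss(policy_logp_preferred: float, policy_logp_rejected: float,
             ref_logp_preferred: float, ref_logp_rejected: float,
             beta: float = 0.1) -> float:
    """Generic DPO loss for one (preferred, rejected) pair of samples.

    Each argument is a log-probability assigned to a sample by either the
    model being trained (policy) or a frozen reference model.
    """
    # How much more the policy favours the preferred sample than the
    # reference model does, relative to the rejected sample.
    margin = beta * ((policy_logp_preferred - ref_logp_preferred)
                     - (policy_logp_rejected - ref_logp_rejected))
    # -log(sigmoid(margin)), written in a numerically stable form.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Policy identical to the reference: the margin is zero.
neutral = dpo_loss(-1.0, -2.0, -1.0, -2.0)
# Policy shifted toward the preferred sample: the loss decreases.
improved = dpo_loss(-0.5, -2.5, -1.0, -2.0)
```

When the policy matches the reference, the loss equals log 2. It falls as the policy shifts probability toward the human-preferred sample, and that is the signal a preference-optimization stage trains on.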

To comprehensively evaluate open-source video generation models, StepFun has also released a new benchmark dataset named Step-Video-T2V-Eval, dedicated to text-to-video quality assessment.

This dataset is open-sourced as well.

It contains 128 Chinese evaluation prompts drawn from real users, designed to assess video quality across 11 content categories, including sports, landscapes, animals, compound concepts, and surrealism.

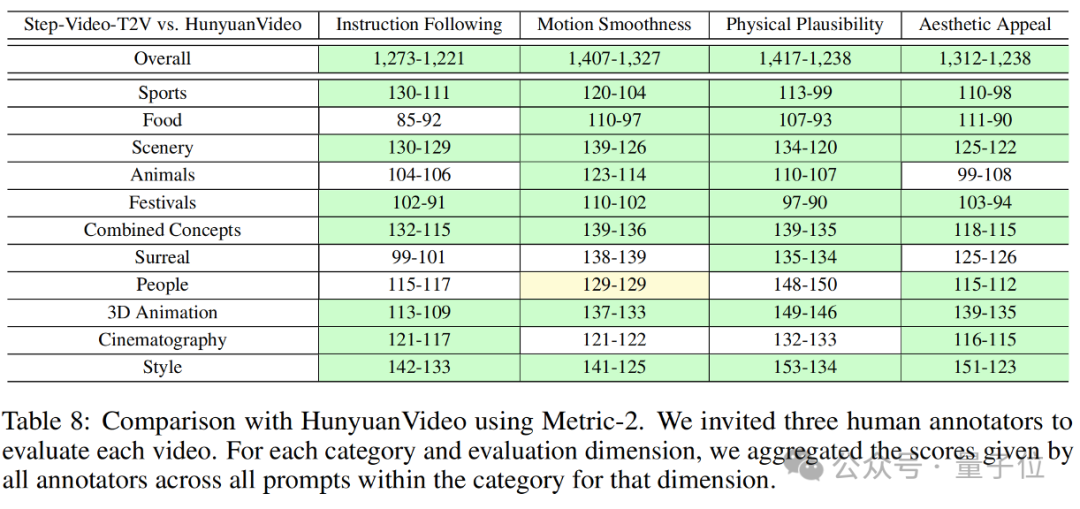

Evaluation results of Step-Video-T2V on this benchmark are shown below:

As seen, Step-Video-T2V outperforms previous best open-source video models in instruction following, motion smoothness, physical plausibility, aesthetic appeal, and other aspects.

This means the entire video generation field can now build research and innovation upon this new strongest base model.

Regarding actual performance, according to StepFun:

Step-Video-T2V demonstrates strong capabilities in complex motion, aesthetically pleasing human figures, visual imagination, basic text generation, native bilingual Chinese-English input, and cinematographic language, with outstanding semantic understanding and instruction-following abilities, effectively helping video creators achieve precise creative expression.

What are you waiting for? Let’s test it—

Following the official presentation order, the first test evaluates whether Step-Video-T2V can handle complex movements.

Previous video generation models often produced bizarre visuals when generating clips involving ballet, ballroom dance, Chinese traditional dance, rhythmic gymnastics, karate, martial arts, and other complex motions.

Think of a third leg that suddenly appears, or arms that fuse together. Pretty creepy.

To address such cases, we conducted targeted tests using the following prompt for Step-Video-T2V:

An indoor badminton court, eye-level view, fixed camera capturing a man playing badminton. A man wearing a red T-shirt and black shorts holds a racket, standing in the center of a green badminton court. A net divides the court into two halves. The man swings his racket and hits the shuttlecock to the opposite side. The lighting is bright and even, and the image is clear.

The generated scene, character, camera angle, lighting, and action all match perfectly.

Generating "aesthetically pleasing human figures" is QuantumBit's second challenge for Step-Video-T2V.

To be fair, today’s text-to-image models can produce photorealistic human images, especially convincing in static scenes and local details.

But once people move in videos, noticeable physical or logical flaws often remain.

How does Step-Video-T2V fare—

Prompt: A man wearing a black suit, dark tie, and white shirt, facial scars visible, serious expression. Close-up shot.

"Doesn't feel like AI."

This was the consistent feedback from QuantumBit’s editorial team after reviewing the generated video.

It "doesn't feel like AI" because the facial features are well defined, the skin texture is realistic, and the scars are clearly visible.

It also "doesn't feel like AI" because the expression is lifelike, with no vacant stare or stiffness.

The above two tests kept Step-Video-T2V in a fixed camera position.

So how about dynamic camera movements?

The third test examines Step-Video-T2V’s mastery of cinematography techniques such as dolly, pan, tilt, tracking, rotation, and follow shots.

Tell it to rotate, and it rotates:

Not bad at all! Could almost work as a Steadicam operator on set (well, almost).

After several rounds of testing, the results speak for themselves:

Step-Video-T2V indeed excels in semantic understanding and instruction following, just as the benchmark suggests.

Even basic text generation is effortlessly handled:

Step-Audio

The other concurrently open-sourced model, Step-Audio, is the industry’s first product-grade open-source speech interaction model.

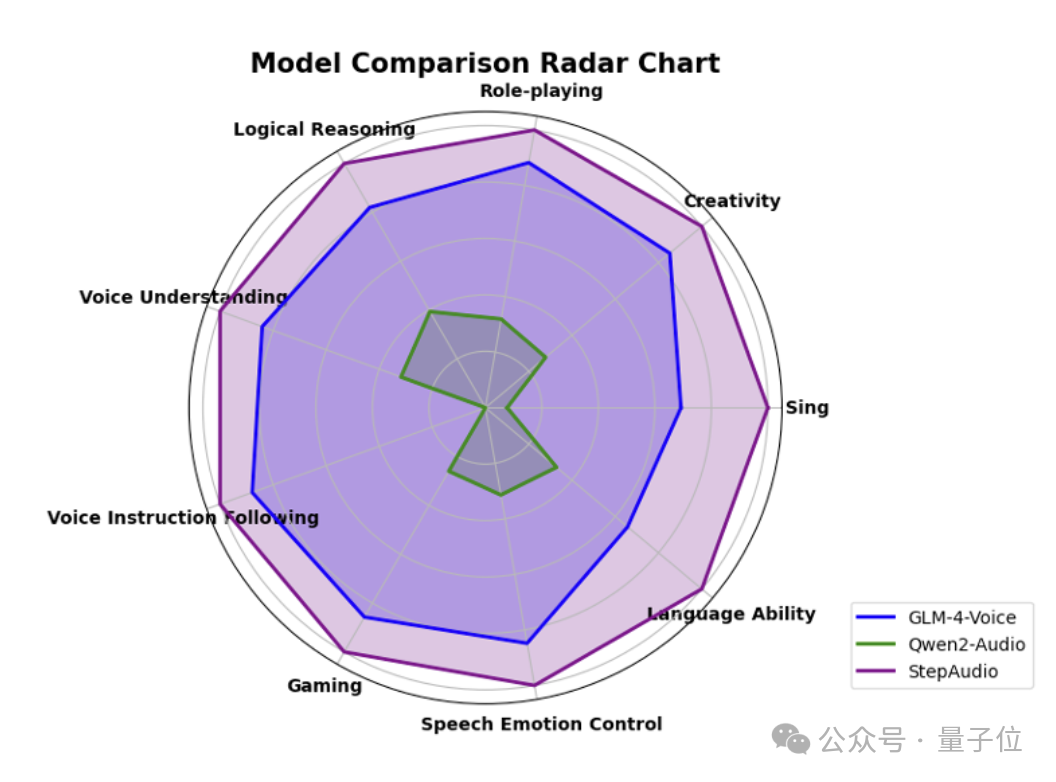

On StepFun’s self-built and open-sourced multidimensional evaluation framework StepEval-Audio-360, Step-Audio achieves top scores across logic reasoning, creativity, instruction control, language ability, role-playing, word games, emotional value, and more.

Across five major public benchmarks, including Llama Question and Web Questions, Step-Audio outperforms all other open-source models of its kind and ranks first.

Its performance stands out particularly in the HSK-6 (Chinese Proficiency Test Level 6) evaluation.

Hands-on test below:

According to the StepFun team, Step-Audio can generate expressive outputs with different emotions, dialects, languages, singing, and personalized styles based on various scenarios, enabling natural and high-quality conversations with users.

Moreover, the generated speech is not only realistic and emotionally intelligent but also supports high-fidelity voice cloning and role-playing.

In short, Step-Audio fully satisfies application needs across industries like film & entertainment, social platforms, and gaming.

StepFun’s Open-Source Ecosystem Is Snowballing

How should we put it? One word: intense.

StepFun is truly pushing hard, especially in its core strength—multimodal models—

Since launch, its Step series of multimodal models have consistently ranked #1 across authoritative domestic and international benchmarks and leaderboards.

In just the past three months, it has taken the top spot multiple times.

- On November 22 last year, the multimodal understanding model Step-1V appeared on the Large Model Arena's latest leaderboard, tying Gemini-1.5-Flash-8B-Exp-0827 in total score and ranking first among Chinese models in the vision domain.

- In January this year, the Step-1o series topped the real-time multimodal model rankings on OpenCompass ("Sinan"), a leading domestic large model evaluation platform.

- On the same day, the Large Model Arena's updated leaderboard showed Step-1o-vision taking first place among domestic large models in the vision domain.

Furthermore, StepFun’s multimodal models aren’t just high-performing and high-quality—they’re also rapidly iterated—

To date, StepFun has released 11 multimodal large models.

Last month alone, it launched six models in six days, covering language, speech, vision, and reasoning, further solidifying its title as the multimodal leader.

This month, two more multimodal models were open-sourced.

If it keeps up this pace, StepFun will keep proving its status as a full-stack multimodal player.

Thanks to its powerful multimodal capabilities, starting in 2024, the market and developers have widely adopted StepFun’s API, forming a massive user base.

Consumer brands like Chabaidao have connected thousands of stores nationwide to the multimodal understanding model Step-1V, exploring applications of large models in the tea beverage industry through intelligent inspection and AIGC marketing.

Public data shows that over one million Chabaidao drinks are delivered daily under the protection of intelligent model inspections.

On average, Step-1V saves Chabaidao supervisors 75% of their self-inspection verification time, providing consumers with safer and higher-quality service.

Independent developers, such as those behind the popular AI app "Weizhi Shu" and the mental wellness app "Linjian Liaoyushi," ultimately chose StepFun's multimodal model API after A/B testing most domestic models.

(Whispering: because it delivers the highest conversion rate.)

Data shows that in the second half of 2024, call volume for StepFun’s multimodal large model API grew over 45x.

Now, with this open-sourcing initiative, StepFun is releasing its strongest multimodal models yet.

We observe that StepFun, already backed by strong market and developer reputation, is strategically planning deeper integration through this release.

On one hand, Step-Video-T2V uses the most open and permissive MIT license, allowing unrestricted editing and commercial use.

You could say it’s “holding nothing back.”

On the other hand, StepFun states its commitment to “lowering industrial adoption barriers.”

Take Step-Audio: unlike existing open-source solutions requiring redeployment and redevelopment, Step-Audio is a complete real-time dialogue solution that enables instant conversational functionality with minimal setup.

The end-to-end experience is available right out of the box.

With these moves, an open-source tech ecosystem centered around StepFun and its multimodal ace cards is beginning to take shape.

Within this ecosystem, technology, creativity, and commercial value intertwine, jointly advancing multimodal AI development.

And with ongoing model R&D and iteration, rapid and continuous developer adoption, and collaborative support from ecosystem partners, StepFun’s “snowball effect” is already underway and growing stronger.

China’s Open-Source Power Is Speaking Up Globally With Strength

There was a time when mentioning leaders in open-source large models brought to mind Meta’s LLaMA or Albert Gu’s Mamba.

Today, there’s no doubt—China’s open-source force in large models is shining globally, reshaping outdated perceptions with real strength.

January 20—the eve of the Year of the Snake—was a day of fierce competition among global large models.

The most notable event was the debut of DeepSeek-R1, matching OpenAI o1 in reasoning performance at just one-third the cost.

The impact was huge: Nvidia lost $589 billion in market value overnight (approximately 4.24 trillion RMB), the largest single-day loss in U.S. stock market history.

More importantly, what elevated R1 to a globally celebrated milestone was not only its excellent reasoning and affordable pricing, but also its open-source nature.

This sent shockwaves across the industry. Even at OpenAI, long mocked as "no longer open," CEO Sam Altman spoke out publicly more than once.

Altman said: “On the question of open-weight AI models, I believe we’ve been on the wrong side of history.”

He added: “The world truly needs open models—they bring immense value. I’m glad there are already some great open models out there.”

Now, StepFun is also open-sourcing its new ace cards.

And openness is the core intention.

The company stated that the goal of open-sourcing Step-Video-T2V and Step-Audio is to promote sharing and innovation in large model technology and advance inclusive AI development.

Right from launch, these models showcased their strength across multiple benchmarks.

On today’s open-source large model battlefield, DeepSeek dominates reasoning, StepFun focuses on multimodality, and many other contenders keep evolving…

Their capabilities stand out not only within the open-source community but also across the broader large model landscape.

Having emerged, China's open-source force is now advancing further.

Take StepFun’s recent open-sourcing as an example: it breaks technical ground in multimodality and changes the decision-making logic for global developers.

Many prominent tech influencers from open-source communities like EleutherAI have actively tested StepFun's models, saying "Thank you to Chinese open-source efforts."

Wang Tiezhen, Hugging Face China head, directly stated that StepFun will be the next “DeepSeek.”

From “technical breakthrough” to “ecosystem openness,” China’s path in large models grows ever more confident.

Back to the point—StepFun’s dual-model open-sourcing may just be a footnote in the 2025 AI race.

At a deeper level, it reflects China’s technological confidence in open-source innovation and sends a clear message:

In the future world of AI large models, Chinese players will neither be absent nor fall behind.
