The Bitter Religion: The Holy War of Artificial Intelligence Centered on the Law of Expansion

TechFlow Selected

The AI community is embroiled in a doctrinal dispute over its future and over whether scale alone is enough to create a god.

By Mario Gabriele

Translated by Block unicorn

The Holy War of Artificial Intelligence

I would rather live my life as if there is a God and die to find out there isn't, than live my life as if there isn't and die to find out there is. — Blaise Pascal

Religion is a fascinating thing, perhaps because it is utterly unprovable in either direction, or perhaps because of my favorite saying: "You can’t reason someone out of a position they didn’t reason themselves into."

The hallmark of religious belief is that during its ascent, it accelerates at such an incredible pace that doubting the existence of God becomes nearly impossible. When everyone around you increasingly believes, how can you question a divine presence? When the world rearranges itself around a doctrine, where is there room for heresy? When temples and cathedrals, laws and norms are all structured according to a new, unshakable gospel, where is there space for opposition?

When Abrahamic religions first emerged and spread across continents, or when Buddhism moved from India throughout Asia, the immense momentum of faith created a self-reinforcing cycle. As more people converted and complex theological systems and rituals were built around these beliefs, questioning their basic premises became increasingly difficult. In an ocean of credulity, being a heretic was never easy. Grand churches, intricate religious texts, and thriving monasteries stood as physical evidence of divine presence.

But religious history also tells us how easily such structures can collapse. As Christianity spread into Scandinavia, ancient Norse beliefs crumbled within just a few generations. Egypt’s religious system lasted thousands of years before vanishing as newer, more enduring beliefs rose alongside larger power structures. Even within the same religion, we’ve seen dramatic splits—the Reformation tore through Western Christianity, while the Great Schism split Eastern and Western churches. These divisions often began with seemingly minor doctrinal disagreements that gradually evolved into entirely different belief systems.

The Canon

God is a metaphor for that which transcends all levels of intellectual thought. It's as simple as that. — Joseph Campbell

In short, believing in God is religion. And perhaps creating God isn't so different either.

Since the field’s inception, optimistic AI researchers have imagined their work as an act of creation: literally, the creation of God. In recent years, the explosive development of large language models (LLMs) has further convinced believers that we are on a sacred path.

That progress also vindicated a blog post written back in 2019. Though largely unknown outside the AI community until recently, Canadian computer scientist Richard Sutton’s “The Bitter Lesson” has become an increasingly important text within the field, evolving from obscure knowledge into the foundation of a new, all-encompassing religion.

In 1,113 words (every religion needs its sacred number), Sutton summarized a technical observation: “The biggest lesson that can be read from 70 years of AI research is that general methods that leverage computation are ultimately the most effective, and by a large margin.” Progress in AI has ridden the massive wave of exponentially increasing computational resources driven by Moore’s Law. Meanwhile, Sutton pointed out, much of AI research had focused on improving performance through specialized techniques: building in human knowledge or narrow tools. These optimizations might help in the short term, but in Sutton’s view they ultimately waste time and resources, like adjusting your surfboard fin or trying new wax as a giant wave approaches.

This is the foundation of what we now call the “Bitter Religion.” It has only one commandment, commonly known in the community as the “Scaling Law”: exponential growth in computation drives performance; everything else is folly.
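Scaling laws are commonly expressed as power laws: loss falls roughly as a power of training compute, so each tenfold increase in compute buys a steady but shrinking improvement. The Python sketch below illustrates only that shape. The functional form L(C) = L_inf + a * C^(-alpha) is a common simplification, and every constant is hypothetical, not fitted to any real model.

```python
# Illustrative power-law scaling curve: loss falls as a power of compute.
# The form L(C) = L_inf + a * C**(-alpha) and all default constants are
# hypothetical, chosen only to show the shape of the curve.


def predicted_loss(compute_flops: float,
                   irreducible: float = 1.7,
                   scale: float = 20.0,
                   alpha: float = 0.05) -> float:
    """Return a hypothetical loss for a given training compute budget (FLOPs)."""
    return irreducible + scale * compute_flops ** (-alpha)


budgets = [10.0 ** k for k in range(20, 26)]  # 1e20 .. 1e25 FLOPs
losses = [predicted_loss(c) for c in budgets]
for c, loss in zip(budgets, losses):
    print(f"{c:.0e} FLOPs -> loss {loss:.3f}")

# Each 10x jump in compute buys a smaller absolute improvement than the last.
gains = [a - b for a, b in zip(losses, losses[1:])]
print("gain per 10x:", [round(g, 4) for g in gains])
```

With these made-up constants, each additional 10x of compute yields a gain about 11% smaller than the previous one (a factor of 10^-0.05, roughly 0.89). Loss keeps falling but never reaches the irreducible floor, which is why the doctrine demands ever more compute.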

The Bitter Religion has expanded from large language models (LLMs) to world models, and is now rapidly spreading through untouched holy sites like biology, chemistry, and embodied intelligence (robotics and autonomous vehicles).

Yet as Sutton’s doctrine spreads, definitions begin to shift. This is the mark of any active, living religion—debate, extension, annotation. The “Scaling Law” no longer merely means scaling computation (the Ark was never just a boat); it now refers to various methods aimed at boosting transformer and computational performance, including clever tricks.

The modern canon now includes attempts to optimize every part of the AI stack—from techniques applied directly to core models (model merging, Mixture of Experts (MoE), knowledge distillation) to generating synthetic data to feed these ever-hungry gods—with extensive experimentation along the way.
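Of the techniques listed above, knowledge distillation is perhaps the easiest to show in a few lines. The sketch below follows the classic soft-target formulation (Hinton et al., 2015): a small "student" model is trained to match a larger "teacher" model's temperature-softened output distribution. The logits and temperature are hypothetical, and real training code would compute this loss over batches inside an autodiff framework rather than in plain Python.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits, softened by a temperature."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T**2.

    The T**2 factor keeps gradient magnitudes comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)  # soft targets from the teacher
    q = softmax(student_logits, temperature)  # student's softened predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


# Hypothetical logits over four classes.
teacher = [4.0, 1.5, 0.5, -1.0]
close_student = [3.8, 1.4, 0.6, -0.9]
far_student = [-1.0, 0.5, 1.5, 4.0]
print(distillation_loss(close_student, teacher))  # small
print(distillation_loss(far_student, teacher))    # much larger
```

A student that already mimics the teacher incurs almost no loss. Minimizing this quantity is how a cheaper model inherits much of a larger one's behavior.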

Warring Sects

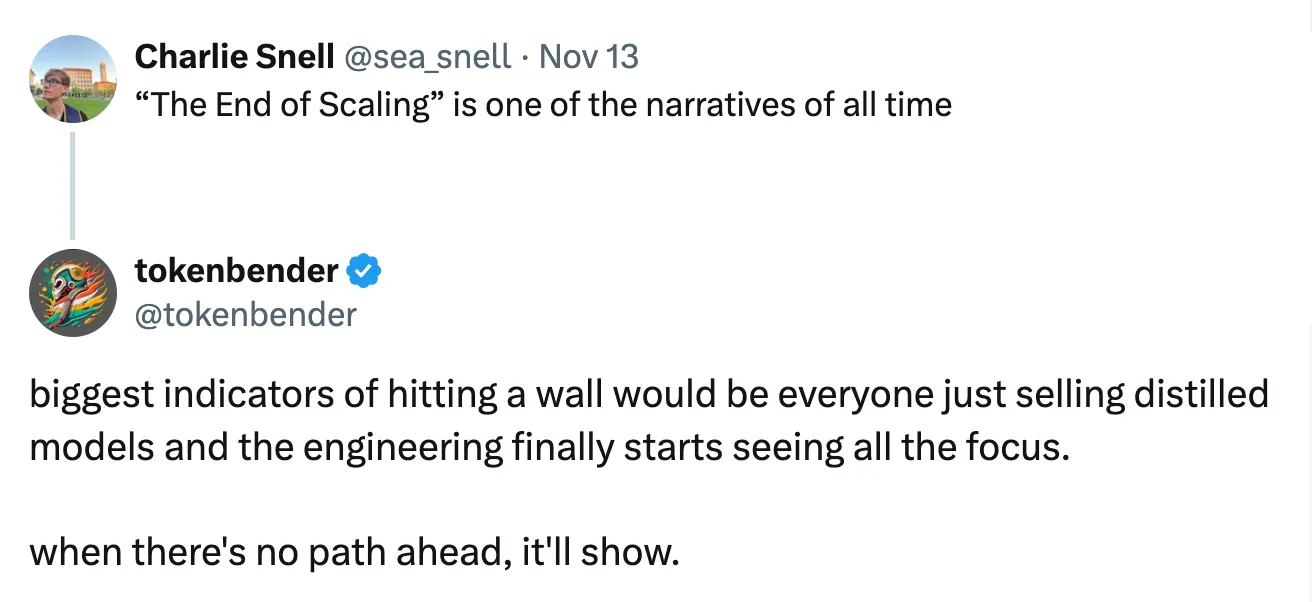

Recently, a question stirring debate within the AI community carries the scent of holy war: Is the “Bitter Religion” still correct?

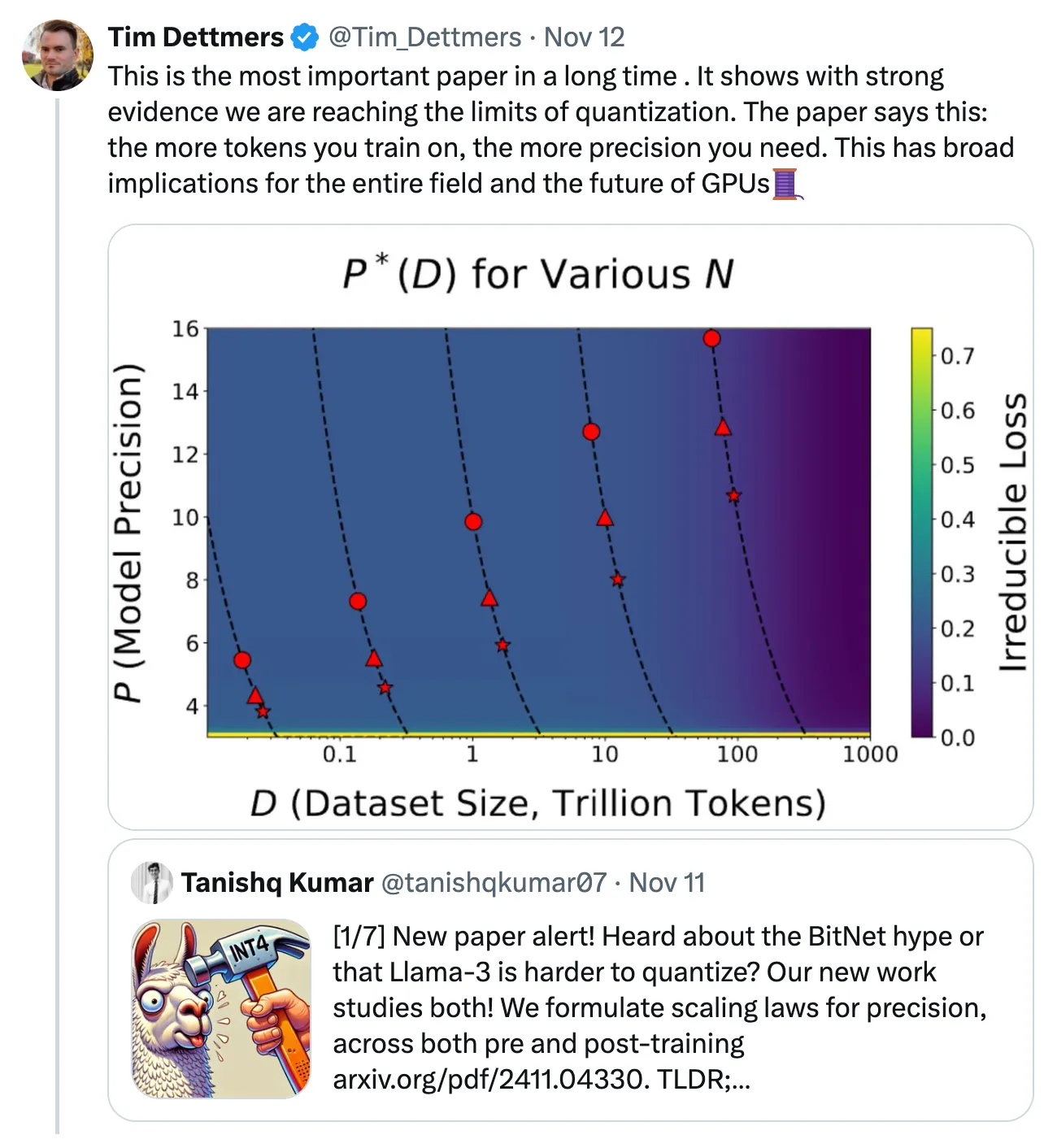

This week, researchers from Harvard, Stanford, and MIT published a new paper titled “Scaling Laws for Precision,” igniting this conflict. The paper suggests that the efficiency gains from quantization may be coming to an end. Quantization is a suite of techniques that makes AI models cheaper to run by representing their numbers with fewer bits, and it has greatly benefited the open-source ecosystem. Tim Dettmers, a research scientist at the Allen Institute for AI, summarized its importance in the post below, calling it “the most important paper in a long time.” It continues a conversation that has intensified over the past few weeks and reveals a notable trend: two opposing religions are increasingly consolidating.

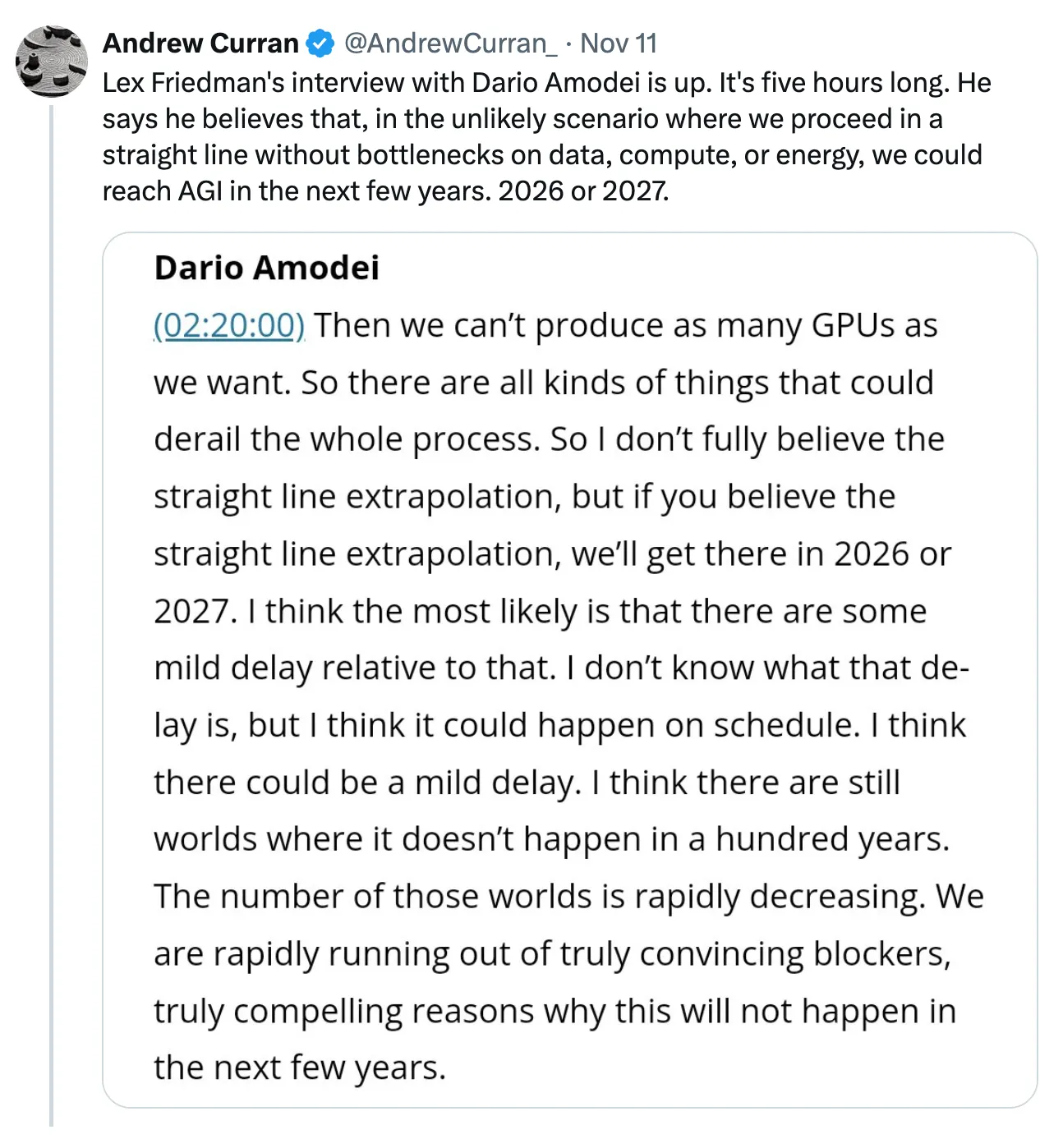
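Quantization, the family of techniques discussed in that paper, stores a model's weights in fewer bits. A minimal sketch of one simple variant, symmetric "absmax" rounding to signed integers, shows the basic trade-off: fewer bits give a coarser grid and larger rounding error. The weight values are hypothetical. Real schemes (per-channel scales, outlier handling, quantization-aware training) are considerably more involved.

```python
def quantize_absmax(weights, bits=8):
    """Symmetric absmax quantization: map floats onto signed integers in [-qmax, qmax]."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    q = [round(w / scale) for w in weights]  # the integers that would be stored
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]


def max_error(weights, bits):
    """Largest absolute difference between original and round-tripped weights."""
    q, scale = quantize_absmax(weights, bits)
    restored = dequantize(q, scale)
    return max(abs(w - r) for w, r in zip(weights, restored))


# Hypothetical weights from one layer.
weights = [0.9, -0.35, 0.12, -1.27, 0.5, 0.003]
for bits in (8, 4):
    print(f"{bits}-bit max error: {max_error(weights, bits):.4f}")
```

Each weight's rounding error is at most half the scale, so halving the bit width roughly multiplies the worst-case error by the ratio of the two scales. The precision paper is about how far this kind of compression can go before it costs more in quality than it saves.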

Sam Altman, CEO of OpenAI, and Dario Amodei, CEO of Anthropic, belong to the same sect. Both confidently assert that we will achieve Artificial General Intelligence (AGI) within roughly the next 2–3 years. Altman and Amodei are arguably the two figures most reliant on the sanctity of the Bitter Religion. All their incentives push toward over-promising and generating maximum hype to accumulate capital in a game almost entirely dominated by economies of scale. If the Scaling Law isn’t the Alpha and Omega, the beginning and the end, what do you need $22 billion for?

Ilya Sutskever, former chief scientist at OpenAI, adheres to a different set of principles. Alongside other researchers—including many reportedly from within OpenAI—he believes scaling is approaching its limits. This group argues that sustaining progress and bringing AGI into reality will inevitably require new science and research.

The Sutskever faction reasonably points out that Altman’s continued scaling ideology is economically unfeasible. As AI researcher Noam Brown asked: “After all, are we really going to train models that cost hundreds of billions or even trillions of dollars?” Not to mention the additional tens of billions in inference compute costs required if we shift scaling from training to inference.

But true believers are well-versed in countering their opponents’ arguments. The missionary at your doorstep can effortlessly resolve your Epicurean trilemma. To counter Brown and Sutskever, proponents point to the potential of scaling “test-time compute.” Unlike previous approaches that relied on larger-scale training, test-time compute allocates more resources at inference, when the model is actually being used. When an AI model needs to answer your question or generate code or text, it simply spends more time and computation. It’s like shifting focus from studying harder for a math exam to persuading your teacher to give you an extra hour and a calculator. For many in the ecosystem, this represents the new frontier of the Bitter Religion, as teams move from orthodox pre-training toward post-training and inference methods.
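One simple way to spend more compute at inference time is to sample several answers and take a majority vote, an approach sometimes called self-consistency. In the sketch below, `noisy_solver` is a hypothetical stand-in for a model that is right 60% of the time. It is not any lab's actual method, only an illustration that extra sampling can raise accuracy without any additional training.

```python
import random
from collections import Counter


def noisy_solver(rng, correct=42, p_correct=0.6, wrong_choices=(7, 13, 99, 0)):
    """Hypothetical model: returns the right answer with probability p_correct."""
    if rng.random() < p_correct:
        return correct
    return rng.choice(wrong_choices)


def answer_with_votes(rng, n_samples):
    """Spend more inference compute: sample n answers and return the most common one."""
    votes = Counter(noisy_solver(rng) for _ in range(n_samples))
    return votes.most_common(1)[0][0]


def accuracy(n_samples, trials=2000, seed=0):
    """Fraction of trials where the majority vote equals the correct answer (42)."""
    rng = random.Random(seed)
    hits = sum(answer_with_votes(rng, n_samples) == 42 for _ in range(trials))
    return hits / trials


for n in (1, 5, 15):
    print(f"{n:>2} samples per question -> accuracy {accuracy(n):.3f}")
```

The catch, as the skeptics note, is cost: every extra sample is paid for on every query, so the compute bill moves from a one-time training run to ongoing inference.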

It’s easy to point out flaws in other belief systems and to critique rival doctrines without exposing your own. So what do I believe? First, I believe the current generation of models will deliver very high returns on investment over time. As people learn to work around their limitations and build on existing APIs, we’ll see genuinely innovative product experiences emerge and succeed. We’ll move beyond the skeuomorphic and incremental phases of AI products. I wouldn’t call this “Artificial General Intelligence” (AGI), a term whose definition is structurally flawed. A better label is “minimal viable intelligence,” customizable by product and use case.

As for achieving Artificial Superintelligence (ASI), more structure is needed. Clearer definitions and distinctions will help us better discuss the trade-offs between economic value and cost. For example, AGI may offer economic value to a subset of users (a local belief system), while ASI could unleash unstoppable compounding effects, transforming the world, our belief systems, and social structures. I don’t believe scaling transformers alone will get us to ASI—but sadly, as some might say, this is merely my atheistic belief.

Lost Faith

The AI community won’t resolve this holy war in the short term; in emotional battles, facts carry little weight. Instead, we should turn our attention to what it would mean for the field to lose faith in the Scaling Law. A loss of faith could trigger ripple effects beyond LLMs, affecting entire industries and markets.

To be fair, in most areas of AI/machine learning, we haven’t fully explored the limits of scaling laws—more miracles likely lie ahead. But if doubt truly creeps in, it will become harder for investors and builders to maintain high confidence in the ultimate performance ceilings of “early-curve” categories like biotech and robotics. In other words, if we see LLMs slowing down and deviating from the chosen path, the belief systems of many founders and investors in adjacent fields could collapse.

Whether that’s fair is another question.

One argument holds that “AGI naturally requires greater scale,” implying that specialized models should demonstrate “quality” at smaller scales and are therefore less likely to hit bottlenecks before delivering real value. If a domain-specific model needs only a fraction of the data, and therefore only a fraction of the compute, to become viable, shouldn’t it have ample room for improvement? Intuitively, this makes sense. Yet we repeatedly find that the answer lies elsewhere: adding data, whether relevant or seemingly irrelevant, often improves performance on apparently unrelated tasks. For instance, training on programming data appears to enhance broader reasoning capabilities.

In the long run, the debate over specialized models may prove irrelevant. Anyone building ASI will likely aim for an entity capable of self-replication and self-improvement, exhibiting infinite creativity across domains. Holden Karnofsky, former OpenAI board member and co-founder of Open Philanthropy, calls this creation “PASTA” (Process for Automating Scientific and Technological Advancement). Sam Altman’s original profit plan seems to rely on a similar principle: “Build AGI, then ask it how to make money.” This is eschatological AI—the final destiny.

The success of large AI labs like OpenAI and Anthropic has fueled capital markets’ enthusiasm for funding similar “OpenAI for X” labs, whose long-term goal is to build “AGI” within a specific vertical or domain. This unbundling of scale suggests a paradigm shift away from imitating OpenAI and toward product-centric companies, a possibility I raised at Compound’s 2023 annual meeting.

Unlike eschatological models, these companies must demonstrate a series of tangible advances. They will be engineering companies that solve problems at scale with the ultimate goal of building products, not scientific organizations conducting applied research.

In science, if you know what you’re doing, you shouldn’t be doing that. In engineering, if you don’t know what you’re doing, you shouldn’t be doing that. — Richard Hamming

Believers are unlikely to lose their sacred faith anytime soon. As previously noted, as religions grow, they codify scripts and heuristics for living and worship. They build physical monuments and infrastructure that reinforce their strength and wisdom, signaling that they “know what they’re doing.”

In a recent interview, Sam Altman said the following about AGI (emphasis ours):

This is the first time I feel we actually know what to do. There’s still a lot of work between now and building an AGI. We know there are known unknowns, but I think we basically know what to do, it’s just going to take a while; it will be hard, but it’s also incredibly exciting.

Judgment

In questioning the Bitter Religion, scaling skeptics are reckoning with one of the deepest discussions of the past few years. Each of us has pondered it in some form: What happens if we invent God? How quickly would that God appear? And if AGI truly rises irreversibly, what then?

As with all complex and uncertain topics, we quickly settle on personal reactions. Some despair at becoming obsolete, most expect a mix of destruction and prosperity, and others believe humanity will do what it does best, continuing to find problems to solve and to solve the ones we create, leading to pure abundance.

Anyone with significant stakes wants to predict what the world will look like if the Scaling Law holds and AGI arrives within years. How will you serve this new God, and how will this God serve you?

But what if the gospel of stagnation displaces optimism? What if we begin to suspect that even God might decline? In a previous article, “Robot FOMO, Scaling Laws, and Technological Forecasting,” I wrote:

Sometimes I wonder what would happen if the Scaling Law fails—would it resemble the impact of revenue losses, slowing growth, and rising interest rates across many tech sectors? Sometimes I wonder if the Scaling Law fully succeeds—would it mirror the experience of pioneers in other fields, seeing value capture commoditized along predictable curves?

“The benefit of capitalism is that, regardless, we’ll spend vast amounts of money to find out.”

For founders and investors, the question becomes: What comes next? Candidates who could become great product builders in each vertical are gradually emerging. More will appear across industries, but the story has already begun. Where do new opportunities arise?

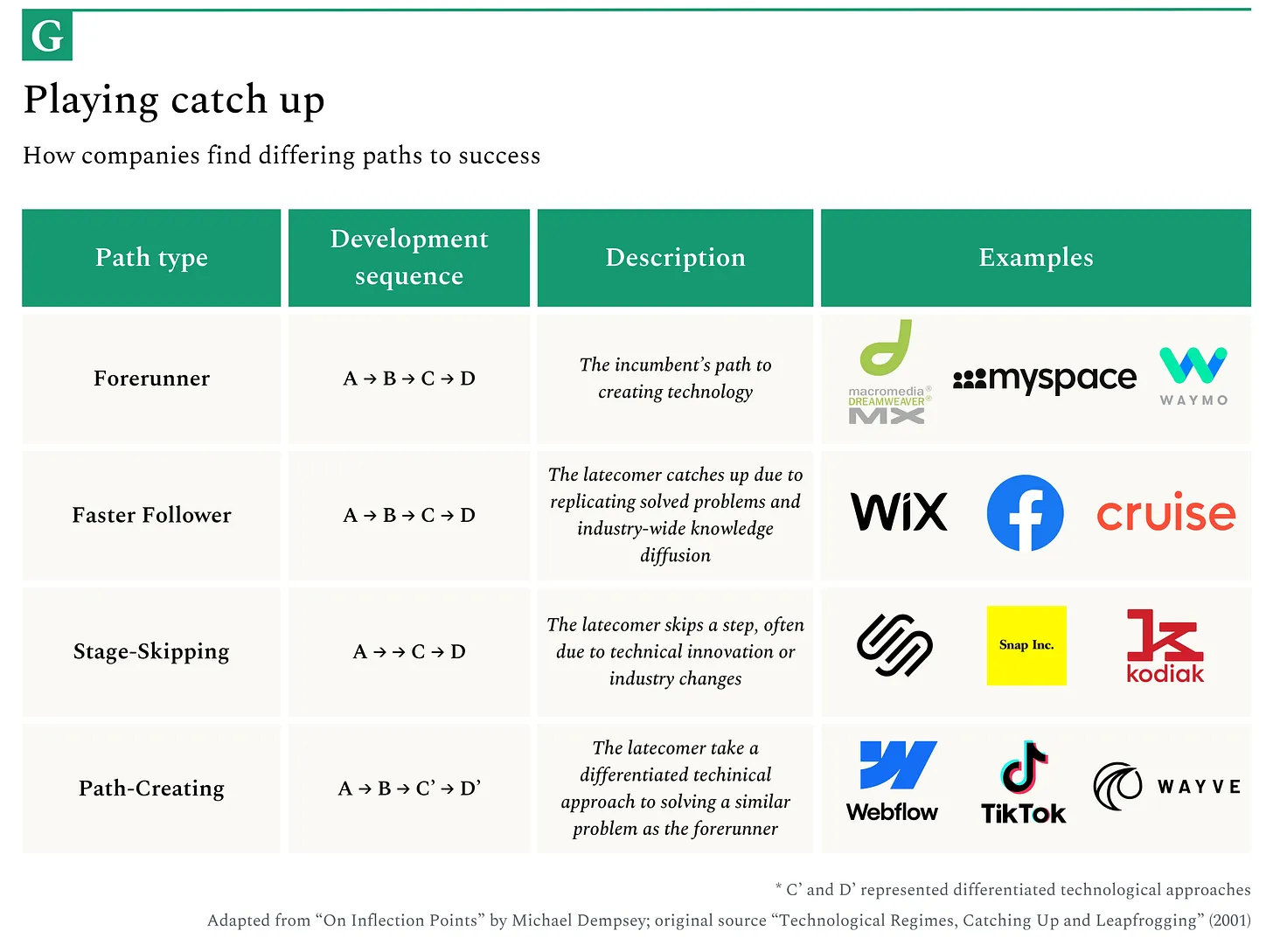

If scaling stalls, I expect a wave of shutdowns and consolidations. Surviving companies will increasingly shift their focus toward engineering, an evolution we should be able to anticipate by tracking talent flows. We’ve already seen signs: OpenAI is moving in this direction as it increasingly productizes itself. This shift will open space for the next generation of startups to leapfrog incumbents by relying on innovative applied research and science rather than engineering, forging new paths.

Lessons from Religion

My view of technology is that anything that appears to compound obviously rarely does so for long. Indeed, a common observation is that businesses that seem to enjoy clear compounding advantages strangely tend to grow far more slowly, and to a far smaller scale, than expected.

Early signs of religious schism typically follow predictable patterns—frameworks we can use to continue tracking the evolution of the Bitter Religion.

It usually begins with competing interpretations, driven by capitalist or ideological motives. In early Christianity, differing views on Christ’s divinity and the nature of the Trinity led to splits and divergent biblical interpretations. Beyond the AI splits we’ve mentioned, other cracks are emerging. For example, some AI researchers are rejecting the core orthodoxy of transformers in favor of alternative architectures like State Space Models, Mamba, RWKV, and Liquid Models. While these remain soft signals today, they reveal budding heretical thoughts and a willingness to rethink the field from first principles.

Over time, impatient prophets breed distrust. When religious leaders’ predictions fail or divine intervention doesn’t arrive as promised, seeds of doubt are planted.

The Millerite movement predicted Christ’s return in 1844, but when Jesus didn’t show up, the movement collapsed. In tech, we quietly bury failed prophecies and allow our prophets to keep painting optimistic, long-cycle visions, despite repeated missed deadlines (hi, Elon). However, without sustained improvements in raw model performance, faith in the Scaling Law could face a similar collapse.

A corrupt, bloated, or unstable religion is vulnerable to apostasy. The Protestant Reformation succeeded not only because of Luther’s theology but because it emerged during a period of Catholic Church decay and turmoil. When mainstream institutions crack, long-standing “heretical” ideas suddenly find fertile ground.

In AI, we might watch smaller models or alternative approaches achieving similar results with less computation or data—such as work from various Chinese enterprise labs and open-source teams like Nous Research. Those who break through biological intelligence barriers and overcome obstacles once deemed insurmountable may forge a new narrative.

The most direct and timely way to spot transformation is to track practitioner movements. Before any formal schism, theologians and clergy often privately hold heretical views while publicly conforming. Today, this might manifest as AI researchers who outwardly follow the Scaling Law but secretly pursue radically different approaches, waiting for the right moment to challenge consensus—or leave their labs in search of theoretically freer grounds.

The tricky thing about religious and technological orthodoxy is that they often contain partial truths, just not as universally valid as their most devoted followers believe. Just as religions embed fundamental human truths within metaphysical frameworks, the Scaling Law clearly describes real aspects of how neural networks learn. The question is whether this reality is as complete and immutable as current fervor suggests, and whether these religious institutions (AI labs) are flexible and strategic enough to lead zealots forward—while building the printing presses (chat interfaces and APIs) that spread their knowledge.

Endgame

"Religion is regarded by the common people as true, by the wise as false, and by rulers as useful." — Lucius Annaeus Seneca

A possibly outdated view of religious institutions is that once they reach a certain size, like many human-run organizations, they fall prey to survival instincts and strive merely to stay competitive. In doing so, they neglect truth and higher purpose, even though survival and higher purpose need not be mutually exclusive.

I’ve written before about how capital markets become narrative-driven information cocoons, where incentives perpetuate these narratives. The consensus around the Scaling Law carries an ominous familiarity—a deeply entrenched belief system that is mathematically elegant and extremely useful for coordinating large-scale capital deployment. Like many religious frameworks, it may prove more valuable as a coordination mechanism than as a fundamental truth.
