Want to build your own AI Agent? Here's your comprehensive large language model guide

A complete guide on how to choose the right LLM.

Author: superoo7

Translation: TechFlow

Almost every day, I get the same questions. After helping build more than 20 AI agents and spending a significant amount of money on testing models, I've distilled a few insights that actually work.

Here is a complete guide on how to choose the right LLM.

The current landscape of large language models (LLMs) is evolving rapidly. Almost every week, new models are released—each claiming to be the “best.”

But the reality is: no single model fits all needs.

Each model has its own ideal use case.

I've tested dozens of models, and I hope my experience helps you avoid wasting time and money.

To clarify: this article is not based on lab benchmarks or marketing claims.

I’ll be sharing practical insights from hands-on experience building AI agents and generative AI (GenAI) products over the past two years.

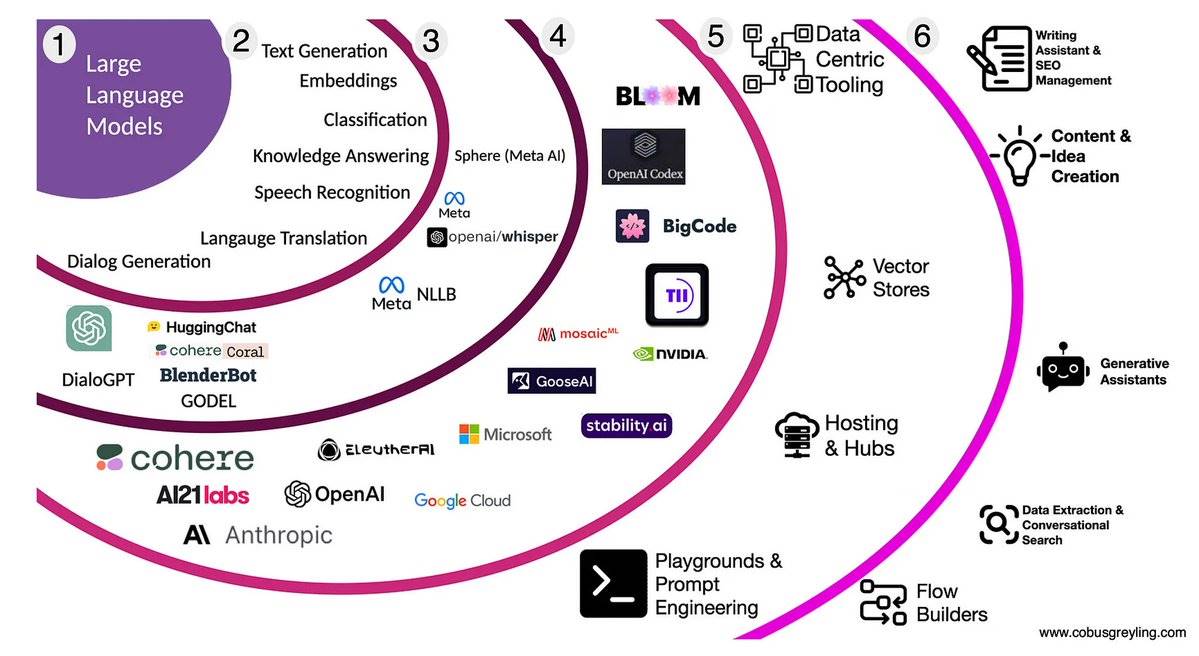

First, let’s understand what an LLM is:

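A large language model (LLM) is, loosely speaking, a way of teaching computers to “speak human.” It predicts the most likely next word (more precisely, the next token) based on your input.

This technology traces back to one seminal paper, “Attention Is All You Need” (2017), which introduced the Transformer architecture.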

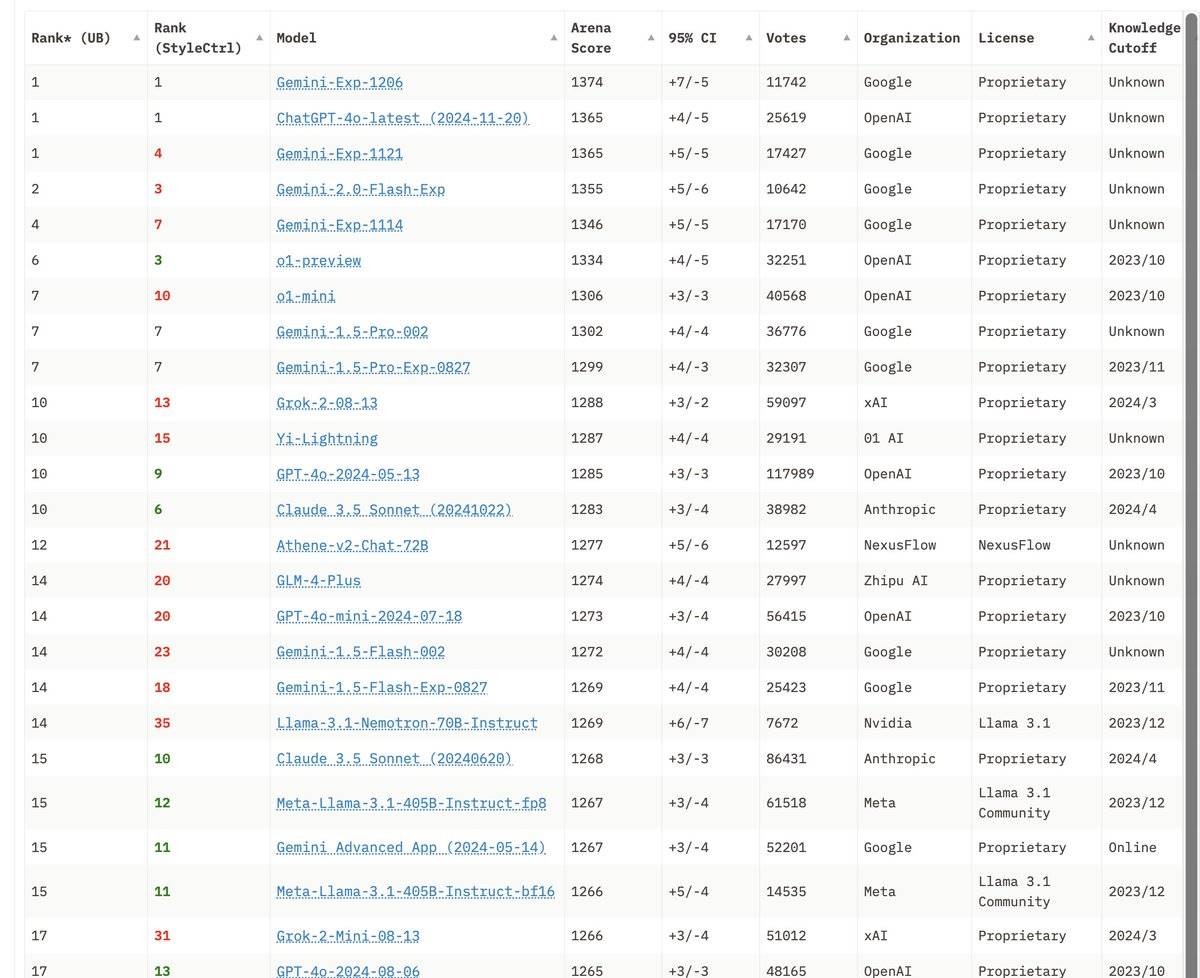

Basics — Closed-source vs. Open-source LLMs:

-

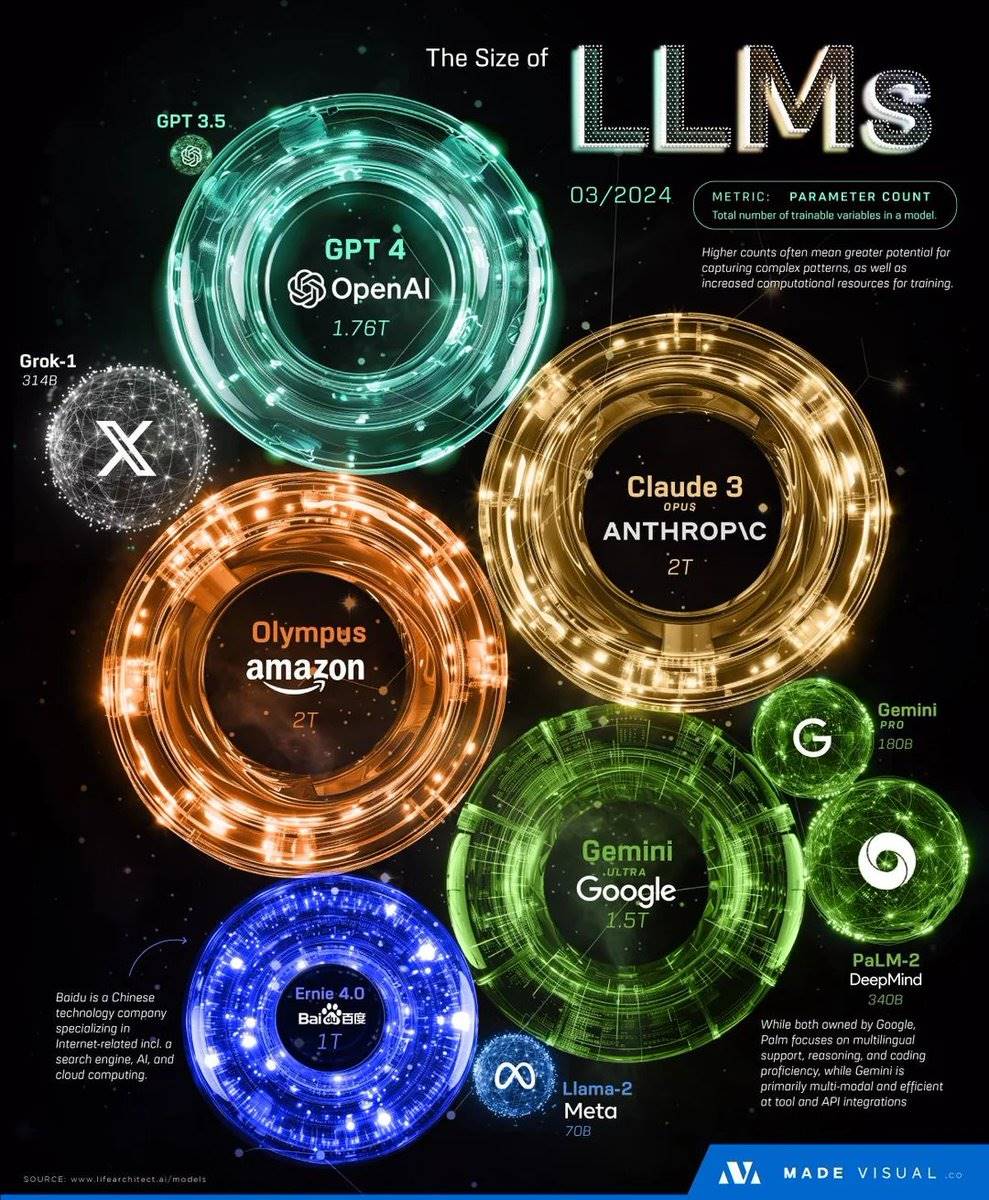

Closed-source: Examples include GPT-4 and Claude. Typically usage-based pricing, hosted by the provider.

-

Open-source: Examples include Meta's Llama and Mixtral. Require users to deploy and run them independently.

You might feel confused by these terms at first, but understanding the difference is crucial.

Model size does not equal better performance:

For example, 7B means the model has 7 billion parameters.

But larger models don’t always perform better. The key is choosing the model that best fits your specific needs.
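To make the parameter count concrete, here is a minimal sketch of how size translates into memory. It assumes only the weights are counted (no activations or KV cache) and uses the standard storage sizes of 4, 2, 1, and 0.5 bytes per parameter for fp32, fp16, int8, and int4. The function name `weight_memory_gb` is made up for this illustration.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str = "fp16") -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes).

    Real deployments need extra headroom for activations and the KV cache,
    so treat the result as a lower bound.
    """
    total_bytes = params_billions * 1e9 * BYTES_PER_PARAM[precision]
    return total_bytes / 1e9


if __name__ == "__main__":
    for size in (7, 13, 70):
        row = ", ".join(
            f"{name}: {weight_memory_gb(size, name):.1f} GB"
            for name in BYTES_PER_PARAM
        )
        print(f"{size}B -> {row}")
```

A 7B model at fp16 needs roughly 14 GB for its weights, which is one reason smaller or quantized models are often the practical choice on a single consumer GPU.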

If you're building X/Twitter bots or social AIs:

Grok by @xai is an excellent choice:

- Generous free tier available
- Exceptional understanding of social context
- Closed-source, but definitely worth trying

Highly recommended for developers just getting started! (Rumor has it that Eliza by @ai16zdao uses xAI's Grok as its default model.)

If you need to handle multilingual content:

QwQ by @Alibaba_Qwen performed exceptionally well in our tests, especially in Asian language processing.

Note: Its training data primarily comes from mainland China, so certain information may be missing.

If you need a general-purpose or strong reasoning model:

Models by @OpenAI remain industry leaders:

- Stable and reliable performance
- Extensively battle-tested
- Strong safety mechanisms

An ideal starting point for most projects.

If you’re a developer or content creator:

Claude by @AnthropicAI is my daily driver:

- Outstanding coding capabilities
- Clear and detailed responses
- Ideal for creative work

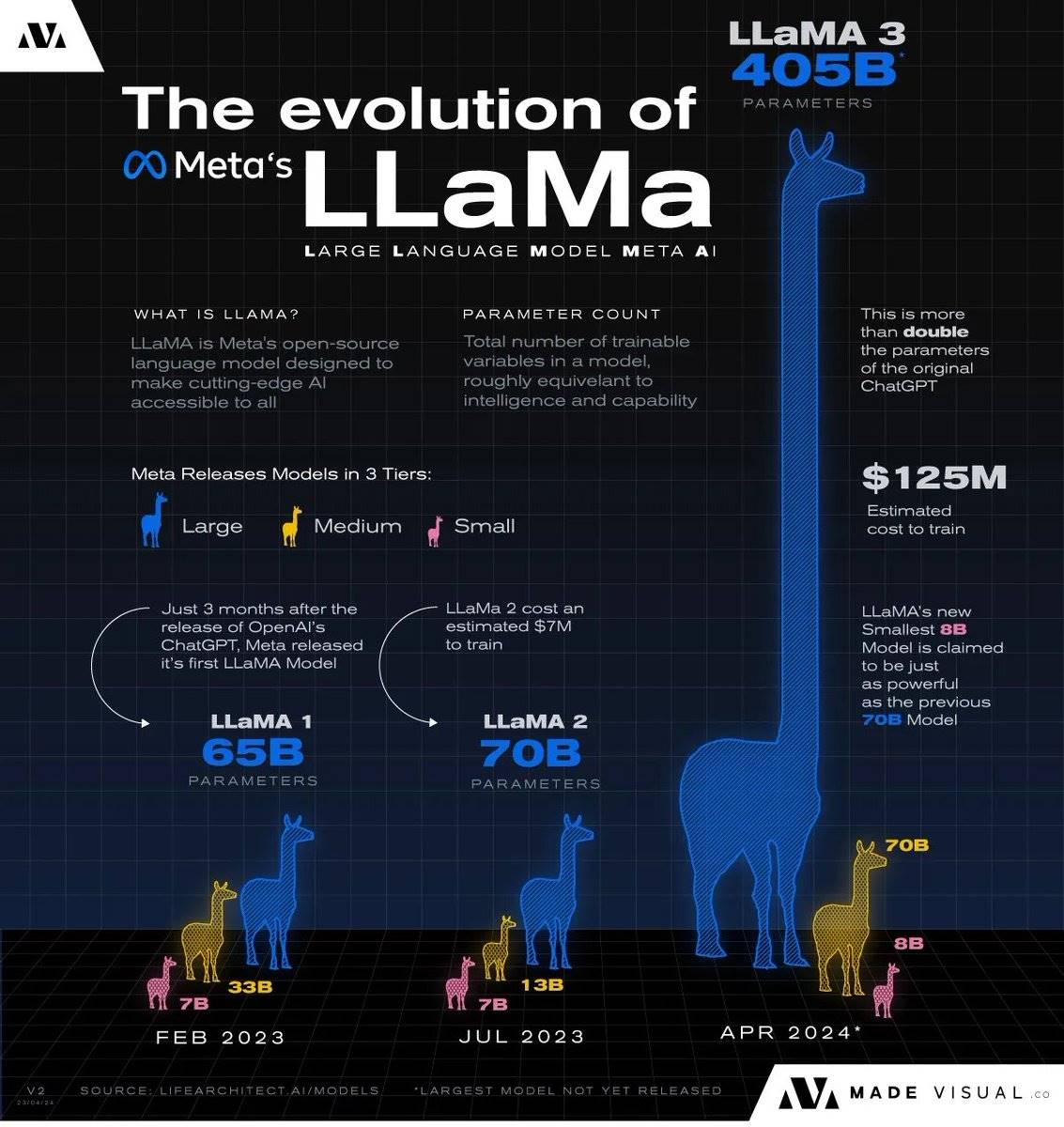

Meta’s Llama 3.3 has recently drawn major attention:

- Stable and reliable performance
- Open-source and highly flexible
- Can be tested via @OpenRouterAI or @GroqInc

For example, crypto x AI projects like @virtuals_io are building products based on it.

If you need an AI for role-playing:

MythoMax 13B is currently the top performer for role-playing and has sat at the top of the relevant leaderboards for several months. (The model was created by Gryphe; @TheBlokeAI publishes widely used quantized builds of it.)

Cohere’s Command R+ is an underrated gem:

- Excels in role-playing tasks
- Handles complex tasks with ease
- Supports a context window of up to 128,000 tokens, which gives it a much longer "memory"
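For a feel of what a 128,000-token window means in practice, here is a rough budgeting sketch. It assumes about 4 characters per token, which is only a rule of thumb for English text and not a real tokenizer; the helper names and the 4,000-token output reserve are hypothetical choices for this example.

```python
from typing import Iterable

# Rough rule of thumb for English text. This is an assumption, not a tokenizer:
# use the model's own tokenizer when you need exact counts.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Estimate the token count of a string, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_context(
    texts: Iterable[str],
    context_window: int = 128_000,
    reserve_for_output: int = 4_000,
) -> bool:
    """Check whether the inputs, plus room for the reply, fit in the window."""
    used = sum(estimate_tokens(t) for t in texts)
    return used + reserve_for_output <= context_window
```

Under this estimate, about 400,000 characters of input still fit, while 500,000 characters do not.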

Google’s Gemma is a lightweight yet powerful option:

- Excels in focused tasks
- Budget-friendly
- Suitable for cost-sensitive projects

Personal tip: I often use small Gemma models as “unbiased judges” within AI workflows—works exceptionally well for validation tasks!
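The judge pattern can be sketched as follows. This is an illustration under assumptions, not the exact setup used in the projects above: a "model" is any function that takes a prompt and returns text, so the real API or local call is left out, and the prompt template and function names are invented for the example.

```python
from typing import Callable, Optional

# A "model" here is any function that maps a prompt to a text reply.
# In a real workflow it would wrap an API call or a local model (not shown).
Model = Callable[[str], str]

# Hypothetical judge prompt; adjust the wording to your own task.
JUDGE_TEMPLATE = (
    "You are a strict reviewer. Answer with exactly PASS or FAIL.\n"
    "Task: {task}\n"
    "Candidate answer: {answer}\n"
    "Does the answer fully satisfy the task?"
)


def judge(task: str, answer: str, judge_model: Model) -> bool:
    """Ask a small judge model for a PASS/FAIL verdict on an answer."""
    verdict = judge_model(JUDGE_TEMPLATE.format(task=task, answer=answer))
    return verdict.strip().upper().startswith("PASS")


def generate_with_validation(
    task: str, worker: Model, judge_model: Model, max_attempts: int = 3
) -> Optional[str]:
    """Retry the worker until the judge accepts an answer, or give up."""
    for _ in range(max_attempts):
        answer = worker(task)
        if judge(task, answer, judge_model):
            return answer
    return None
```

Keeping the judge as a separate, cheaper model means the validation step adds little cost, and the worker never grades its own output.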

@MistralAI’s models deserve special mention:

- Open-source with premium quality
- Mixtral delivers very strong performance
- Especially good at complex reasoning

Widely praised by the community—definitely worth a try.

Cutting-edge AI at your fingertips.

Pro Tip: Try combining models!

- Different models have different strengths
- Create AI “teams” for complex tasks
- Let each model focus on what it does best

Just like assembling a dream team—each member brings a unique skill set.
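One simple way to build such a team is a router that sends each task to the model assigned to that task type. The sketch below assumes each model is wrapped as a plain function from prompt to text; `make_team` and the task-type labels are hypothetical names, and the stub models in the usage example stand in for real API calls.

```python
from typing import Callable, Dict

# A "model" is any function that maps a prompt to a text reply.
Model = Callable[[str], str]


def make_team(specialists: Dict[str, Model], default: Model) -> Callable[[str, str], str]:
    """Build a router that sends each task to the specialist for its type.

    Task types without a specialist fall back to the default model.
    """

    def run(task_type: str, prompt: str) -> str:
        model = specialists.get(task_type, default)
        return model(prompt)

    return run


if __name__ == "__main__":
    # Stub models that only label their output; swap in real calls here.
    team = make_team(
        {
            "code": lambda p: f"[coding model] {p}",
            "social": lambda p: f"[social model] {p}",
        },
        default=lambda p: f"[general model] {p}",
    )
    print(team("code", "Write a retry decorator."))
    print(team("summary", "Summarize this thread."))
```

Because the mapping is just a dictionary, swapping one specialist for another during testing is a one-line change.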

How to get started quickly:

Use @OpenRouterAI or @redpill_gpt to test models. These platforms support cryptocurrency payments, making access convenient.

They are excellent tools for comparing model performance.

If you want to save costs and run models locally, try @ollama to experiment using your own GPU.
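Both OpenRouter and Ollama expose an OpenAI-style chat completions endpoint, so one small helper can talk to either. The sketch below uses only the Python standard library. The model names are assumptions to check against each catalogue, the `OPENROUTER_API_KEY` environment variable name is a choice made for this example, and the Ollama URL assumes a default local install.

```python
import json
import os
import urllib.request

# OpenAI-compatible base URLs. The Ollama one assumes the default local port.
BASE_URLS = {
    "openrouter": "https://openrouter.ai/api/v1",
    "ollama": "http://localhost:11434/v1",
}


def build_chat_request(
    backend: str, model: str, prompt: str, api_key: str = ""
) -> urllib.request.Request:
    """Build (but do not send) a chat completions request for a backend."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    headers = {"Content-Type": "application/json"}
    if api_key:  # a local Ollama server does not need a key
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        BASE_URLS[backend] + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def ask(backend: str, model: str, prompt: str, api_key: str = "") -> str:
    """Send the request and return the text of the first reply."""
    request = build_chat_request(backend, model, prompt, api_key)
    with urllib.request.urlopen(request, timeout=120) as response:
        reply = json.load(response)
    return reply["choices"][0]["message"]["content"]


if __name__ == "__main__":
    key = os.environ.get("OPENROUTER_API_KEY", "")
    if key:
        # Model ID is an assumption; check the OpenRouter model list.
        print(ask("openrouter", "meta-llama/llama-3.3-70b-instruct",
                  "Say hello in five words.", key))
    else:
        print("Set OPENROUTER_API_KEY to run the hosted example.")
```

For a local run, something like `ask("ollama", "llama3.3", "Say hello in five words.")` should work once the model has been pulled with Ollama; the model tag is again an assumption. Sending the same prompt to several models this way makes side-by-side comparison straightforward.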

If speed is your priority, @GroqInc’s LPU technology offers extremely fast inference:

- Limited model selection
- But performance is ideal for production deployment
