The Airdrop Trilemma: How to Acquire Users and Retain Them—What’s the Right Airdrop Strategy for Projects?


Stop doing one-time airdrops!

Author: KERMAN KOHLI

Translation: TechFlow

Recently, Starkware launched its long-anticipated token airdrop. As with most airdrops, this sparked significant controversy.

So why does this keep happening over and over again? You might hear some of the following arguments:

- Insiders just want to dump and cash out billions of dollars
- Teams don’t know better and haven’t received proper advice
- Whales should be prioritized because they bring in total value locked (TVL)
- Airdrops are meant to democratize participation in crypto
- Without farmers, there would be no protocol usage or stress testing
- Misaligned airdrop incentives continue to produce strange side effects

None of these points are entirely wrong, but none are fully correct either. Let’s dive deeper into some of them to ensure we have a comprehensive understanding of the current issues.

When conducting an airdrop, you must make trade-offs among three factors:

- Capital efficiency
- Decentralization
- Retention rate

You’ll often find that an airdrop performs well along one dimension, but rarely achieves good balance across two or all three dimensions.

Capital efficiency refers to the criteria used to determine how many tokens participants receive. The more efficiently you allocate your airdrop, the more it becomes like liquidity mining (one token per dollar deposited), which benefits whales.

Decentralization refers to who receives your tokens and based on what criteria. Recent airdrops have adopted arbitrary standards aimed at maximizing the breadth of recipient coverage. This is generally positive, as it helps avoid legal trouble and creates more millionaires, boosting public goodwill.

Retention rate is defined as the percentage of users who remain after the airdrop. In a sense, it measures how aligned users are with your intentions. The lower the retention rate, the less aligned users are with your goals. As an industry benchmark, a 10% retention rate means only 1 out of 10 addresses represents a genuine user!

Setting retention aside for now, let’s examine the first two factors—capital efficiency and decentralization—in greater detail.

Capital Efficiency

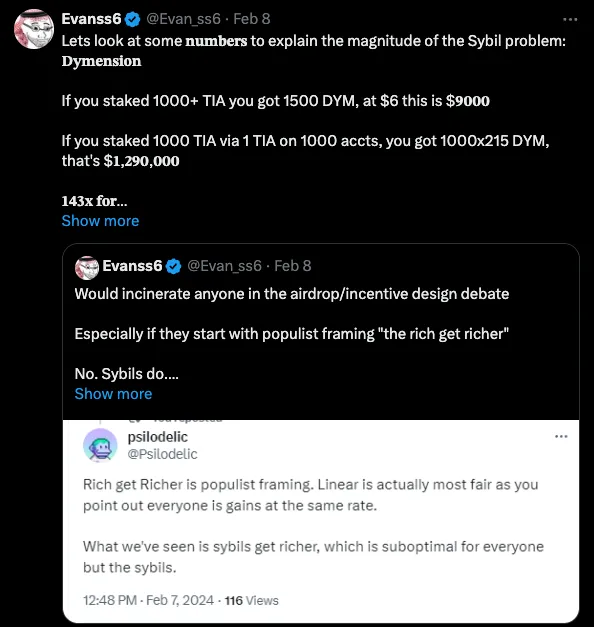

To understand the first point about capital efficiency, let’s introduce a new term: “sybil coefficient.” It essentially measures how much benefit you get from allocating one dollar of capital across a number of accounts.

Where you fall on this spectrum ultimately determines how wasteful your airdrop will be. If your sybil coefficient is 1, technically speaking, you’re running a liquidity mining program—which tends to anger many users.

However, when projects like Celestia see their sybil coefficient spike to 143, you observe extremely wasteful behavior and rampant farming activity.
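One way to make the idea concrete, under assumptions of my own rather than the author's exact definition: treat the sybil coefficient as the ratio between the tokens earned by splitting a fixed amount of capital across N wallets and the tokens earned by using that capital from a single wallet. Both allocation rules below are hypothetical and exist only to show the two ends of the spectrum.

```python
def sybil_coefficient(allocate, capital, n_wallets):
    """Tokens earned by splitting `capital` evenly across `n_wallets`,
    divided by tokens earned with the same capital in one wallet."""
    single = allocate(capital)
    split = n_wallets * allocate(capital / n_wallets)
    return split / single


# Hypothetical rule 1: pro-rata, one token per dollar (liquidity-mining style).
def pro_rata(deposit):
    return deposit


# Hypothetical rule 2: a flat 100 tokens for any wallet with at least $10.
def flat_per_wallet(deposit):
    return 100 if deposit >= 10 else 0


print(sybil_coefficient(pro_rata, 1_000, 50))         # 1.0
print(sybil_coefficient(flat_per_wallet, 1_000, 50))  # 50.0
```

Under the pro-rata rule, splitting wallets gains nothing, so the coefficient stays at 1. Under the flat rule, every extra wallet multiplies the payout, which is the behavior farmers exploit.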

Decentralization

This brings us to the second point about decentralization: ideally, you want to help the “little guy”—a genuine early user of your product, even if not wealthy. If your sybil coefficient approaches 1, you'll give very few tokens to the “little guy” and mostly reward “whales.”

Now, the airdrop debate gets heated. There are three types of users involved:

- “Small player A,” who just wants to quickly make money and leave (possibly using multiple wallets in the process)
- “Small player B,” who stays after receiving the airdrop and genuinely likes your product
- “Professional farmers who act like many small players,” who are solely focused on capturing most of your incentives before moving on to the next project

The third type is the worst; the first is somewhat acceptable; the second is ideal. Distinguishing between these three is the core challenge of airdrop design.

So, how do you solve this? While I don’t have a specific solution, I’ve developed a philosophical approach through years of personal observation: project-relative segmentation.

Let me explain. Zooming out, consider the meta-problem: you have all these users, and you need to group them based on some value judgment. That value is context-specific to the observer and thus varies by project. Trying to apply a single “magical airdrop filter” is never sufficient. By exploring data, you can begin to understand your users’ true behaviors and use data science to inform your airdrop strategy.

Why doesn’t anyone do this? That’s another article I’ll write later, but briefly: it’s a hard problem requiring data expertise, time, and money. Few teams are willing or able to invest in this.

Retention Rate

The final dimension I want to discuss is retention rate. Before we proceed, it’s best to define what we mean by retention. I summarize it as follows: Retention Rate = Number of users who retained tokens / Number of recipients
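Taking retention as the share of recipients who still hold tokens, the calculation can be sketched as follows. The function name and the sample balances are made up for illustration, and "retained" is assumed here to mean any non-zero balance.

```python
def retention_rate(balances):
    """Share of recipient addresses that still hold a non-zero balance.

    `balances` maps address -> current token balance (any numeric unit).
    """
    if not balances:
        raise ValueError("no recipients")
    holders = sum(1 for balance in balances.values() if balance > 0)
    return holders / len(balances)


# Made-up balances for illustration only.
sample = {"0xaaa": 0, "0xbbb": 125, "0xccc": 0, "0xddd": 40, "0xeee": 0}
print(retention_rate(sample))  # 0.4
```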

Most airdrops make a classic mistake: treating it as a one-time event.

To illustrate this, I needed some data. Fortunately, OP has conducted multiple rounds of airdrops. I hoped to find a simple Dune dashboard with the retention data I wanted, but I could not find one, so I decided to collect the data myself.

I didn’t want to overcomplicate things—I just wanted to understand one simple thing: how the percentage of users with non-zero OP balances changed across successive airdrops.

I visited this site to obtain lists of all addresses that participated in OP airdrops. Then I built a small crawler to fetch the OP balance for each address (using some internal RPC credits), followed by basic data processing.
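The author's crawler is not shown, so the following is only a sketch of how such a balance lookup could work over plain JSON-RPC. It assumes a standard `eth_call` to the ERC-20 `balanceOf(address)` function on the OP token contract. `rpc_url` is a placeholder for whatever OP Mainnet endpoint you have access to, and the helper names are my own.

```python
import json
import urllib.request

# OP token contract on OP Mainnet.
OP_TOKEN = "0x4200000000000000000000000000000000000042"
# Function selector for the ERC-20 balanceOf(address) call.
BALANCE_OF_SELECTOR = "0x70a08231"


def balance_of_payload(holder, token=OP_TOKEN, request_id=1):
    """Build an eth_call request body for balanceOf(holder)."""
    hex_part = holder[2:] if holder.startswith("0x") else holder
    padded = hex_part.lower().rjust(64, "0")  # ABI-encode the address argument
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "eth_call",
        "params": [{"to": token, "data": BALANCE_OF_SELECTOR + padded}, "latest"],
    }


def parse_balance(response, decimals=18):
    """Convert the hex result of eth_call into a token amount."""
    result = response["result"]
    if result in ("", "0x"):
        return 0.0
    return int(result, 16) / 10**decimals


def fetch_balance(rpc_url, holder):
    """POST the request to an RPC endpoint (rpc_url is supplied by the caller)."""
    body = json.dumps(balance_of_payload(holder)).encode()
    request = urllib.request.Request(
        rpc_url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=30) as resp:
        return parse_balance(json.load(resp))
```

Looping `fetch_balance` over the recipient list gives the address-to-balance mapping used in the rest of the analysis. Note that this reads the balance at the latest block, not at a historical snapshot.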

One important note before diving in: each OP airdrop was independent of the previous one. There were no rewards or mechanisms linking retention of tokens from prior drops.

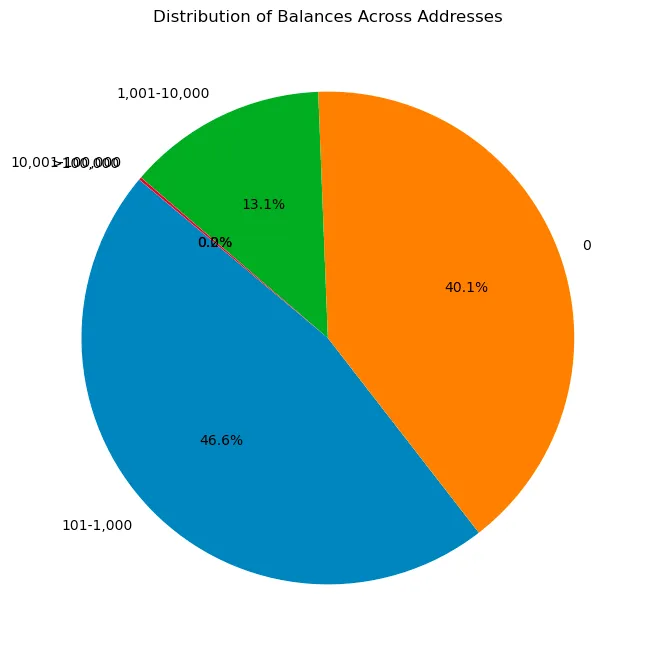

Airdrop 1

Tokens were distributed to 248,699 recipients based on the criteria listed here. In short, users received tokens based on the following actions:

- OP mainnet users (92k addresses)
- Repeated OP mainnet users (19k addresses)
- DAO voters (84k addresses)
- Multisig signers (19.5k addresses)
- Gitcoin donors on L1 (24k addresses)
- Users excluded due to ETH price (74k addresses)

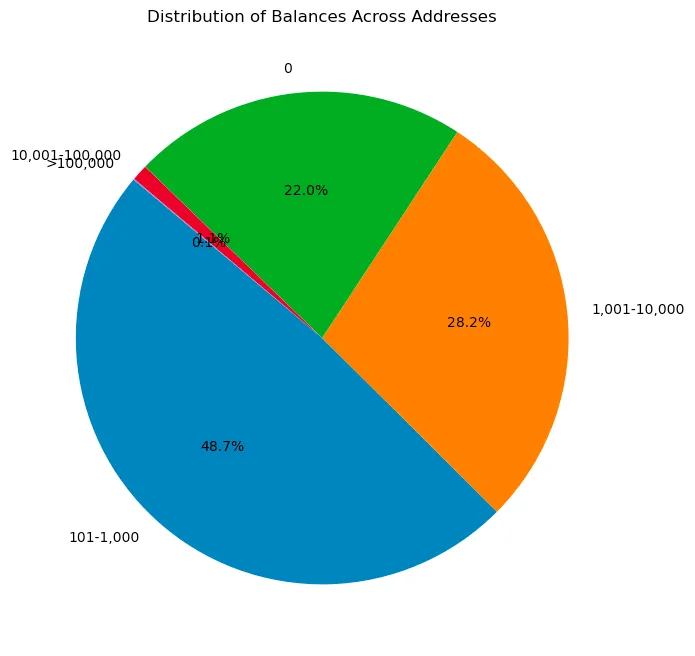

After analyzing all these users and their OP balances, I obtained the following distribution. A zero balance indicates the user sold off, as unclaimed OP tokens were directly sent to eligible addresses—details available here.

Regardless, compared to previous airdrops I’ve observed, this first drop was surprisingly good! Most airdrops see more than 90% of recipients sell. Here, only 40% of addresses had a zero balance, which is an excellent outcome.

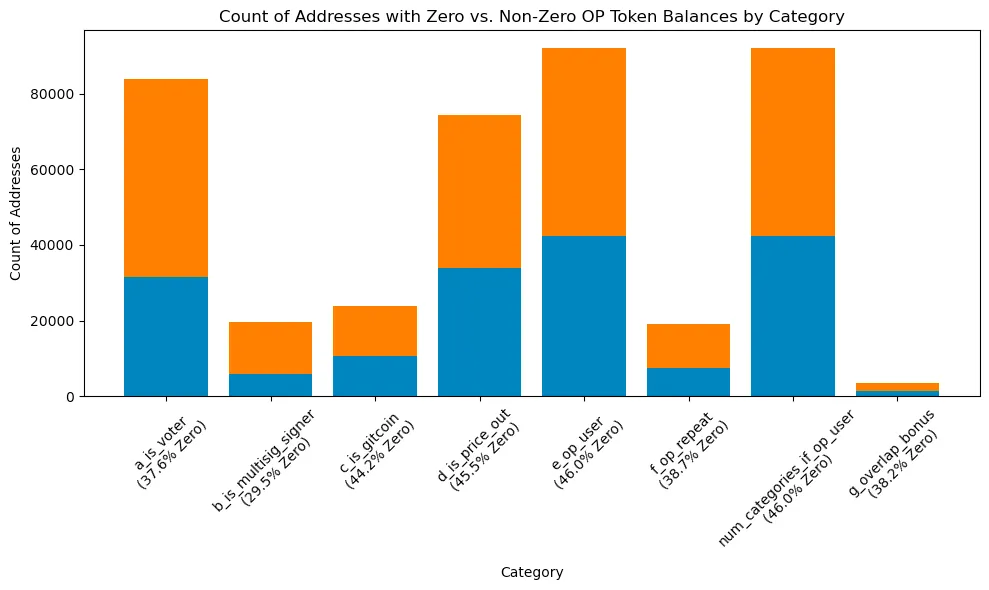

Then I wanted to understand how each criterion influenced whether users were likely to retain tokens. The only issue with this method is that an address may belong to multiple categories, which distorts the data. I won’t take the numbers at face value, but rather treat them as a rough indicator:

Among one-time OP users, the proportion with zero balance was highest, followed by those excluded due to ETH price. Clearly, these were not the best user groups. Multisig users had the lowest proportion, which I believe is a strong signal—after all, setting up a multisig just for an airdrop isn’t obvious to typical farmers!
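The per-criterion breakdown described above can be sketched as follows. The category names and balances are invented for illustration. An address that qualifies under several criteria is counted once in each, which is exactly the overlap caveat that makes these figures a rough indicator only.

```python
from collections import defaultdict


def zero_share_by_criterion(memberships, balances):
    """For each criterion, the fraction of its addresses with a zero balance.

    `memberships` maps address -> set of criteria the address qualified under.
    `balances` maps address -> current token balance.
    """
    totals = defaultdict(int)
    zeros = defaultdict(int)
    for address, criteria in memberships.items():
        for criterion in criteria:
            totals[criterion] += 1
            if balances.get(address, 0) == 0:
                zeros[criterion] += 1
    return {criterion: zeros[criterion] / totals[criterion] for criterion in totals}


# Invented sample data: "0xb" belongs to two categories at once.
memberships = {
    "0xa": {"op_user"},
    "0xb": {"op_user", "multisig"},
    "0xc": {"multisig"},
    "0xd": {"op_user"},
}
balances = {"0xa": 0, "0xb": 50, "0xc": 10, "0xd": 0}
shares = zero_share_by_criterion(memberships, balances)
print(shares["multisig"])  # 0.0
```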

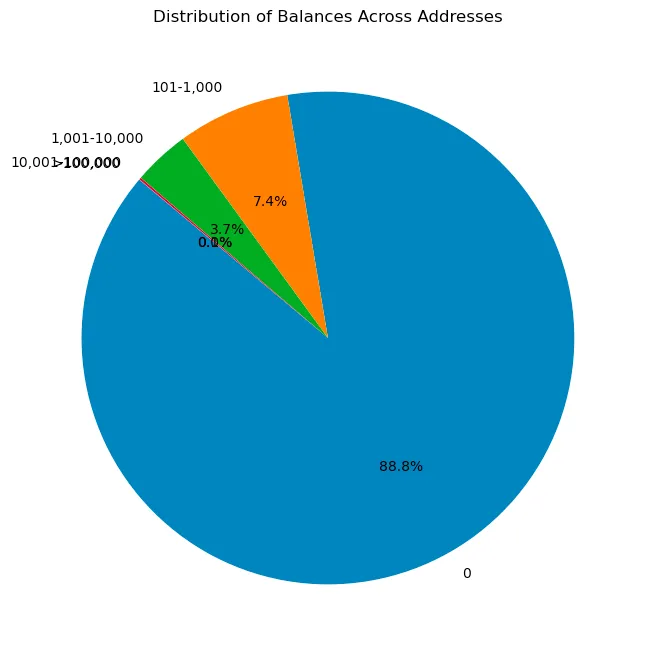

Airdrop 2

This airdrop reached 307,000 addresses, but in my view, it was poorly thought out. Criteria included:

- Governance delegation rewards based on amount and duration of delegated OP.
- Partial gas refunds for active OP users who spent a certain amount on gas fees.
- Multiplier rewards determined by additional attributes related to governance and usage.

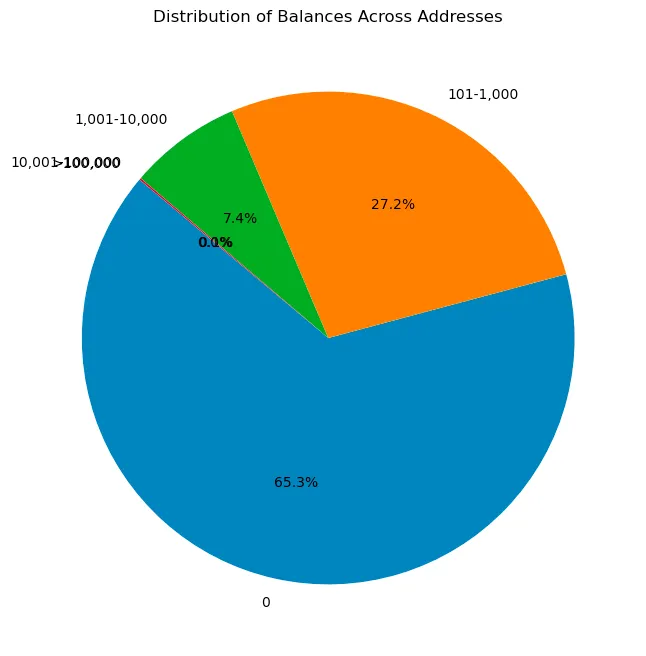

Intuitively, this didn’t feel like a good standard, because governance voting is easily manipulable by bots and highly predictable. As we’ll see below, my intuition wasn’t far off. I was shocked by how low the actual retention rate was!

Nearly 90% of addresses had zero OP balance! This is the typical retention statistic people are used to seeing. I’d love to dig deeper here, but I’d rather move on to the remaining airdrops.

Airdrop 3

This was undoubtedly the best-executed airdrop by the OP team. Its criteria were more complex than previous ones. Distributed to around 31,000 addresses, it was smaller in scale but far more effective. Details available here:

- Cumulative amount of OP delegated per day (e.g., delegating 20 OP for 100 days: 20 * 100 = 2,000 OP-delegation-days).
- Delegates who voted on-chain in OP governance during the snapshot period (UTC Jan 20, 2023 – UTC Jul 20, 2023).
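The delegation metric in the first criterion is a simple sum of amount times duration. A minimal sketch, assuming the delegation history is available as a list of (amount, days) periods (that input format is my own choice, not something the criteria specify):

```python
def delegation_days(periods):
    """Sum of (OP delegated * days held) over a list of (amount, days) periods."""
    return sum(amount * days for amount, days in periods)


print(delegation_days([(20, 100)]))            # 2000
print(delegation_days([(20, 100), (50, 30)]))  # 3500
```

The first call reproduces the example above (20 OP for 100 days). The second shows how a later, larger delegation adds to the running total.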

A key detail: the on-chain voting requirement occurred after the previous airdrop cycle. Thus, users from the first round might think, “Okay, I’ve done what the airdrop required—time to move on.” This is great for analysis, allowing us to assess these retention stats!

Only 22% of recipients had zero token balance! To me, this shows significantly less waste than any prior airdrop. It supports my argument that retention is crucial, and that additional data from multi-round airdrops is more valuable than people realize.

Airdrop 4

This airdrop reached 23,000 addresses and used more interesting criteria. I personally expected high retention, but in hindsight I have a hypothesis for why it fell short. The criteria were:

- You created engaging NFTs on the Superchain. Total gas on OP chains (OP Mainnet, Base, Zora) from NFT transfer transactions associated with your address, measured over the 365 days before the airdrop deadline (Jan 10, 2023 – Jan 10, 2024).
- You created engaging NFTs on Ethereum mainnet. Total L1 Ethereum gas from NFT transfer transactions involving your address, measured over the same 365-day window.

You’d surely think that creators of NFT contracts would be a solid signal, right? Unfortunately, no. The data shows the opposite.

While not as bad as Airdrop 2, we took a major step back in retention compared to Airdrop 3.

My hypothesis is that numbers would improve significantly if they added extra filters—for example, flagging spammy NFTs or identifying contracts with some level of "legitimacy." The current criteria are too broad. Additionally, since tokens were directly airdropped to these addresses (without requiring claim), scammers creating spam NFTs likely thought, “Wow, free money—time to sell.”

Final Thoughts

As I wrote this article and collected data myself, I managed to prove or disprove several assumptions—insights that proved highly valuable. In particular, the quality of your airdrop is directly tied to your screening criteria. Those trying to build universal “airdrop scores” or apply advanced machine learning models often fail due to inaccurate data or high false positives. Machine learning is powerful—until you try to understand how it arrived at its conclusions.

While writing the scripts and code for this piece, I also obtained Starkware’s airdrop data—an interesting exercise in itself. I’ll cover that in my next article. Key takeaways for teams:

- Stop doing one-off airdrops! It’s self-defeating. Deploy incentive structures akin to A/B testing. Iterate heavily and use past experiences to guide future decisions.
- Use criteria that build upon prior airdrops to increase efficiency. In practice, reward users who hold tokens in the same wallet. Make it clear to your users that they should stick with one wallet unless absolutely necessary to switch.
- Obtain better data to enable smarter, higher-quality airdrop segmentation. Bad data leads to bad outcomes. As shown in this article, the lower the “predictability” of your criteria, the better your retention results tend to be.
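As a rough illustration of the second takeaway, a later round could scale allocations for wallets that kept tokens from an earlier one. This is a sketch of one possible rule, not something OP or any other project is known to use. `hold_threshold` and `bonus` are hypothetical tuning knobs.

```python
def next_round_allocation(base, prior_received, current_balance,
                          hold_threshold=0.5, bonus=1.5):
    """Scale a base allocation up for wallets that kept their earlier drop.

    A wallet earns the bonus if it received a prior drop and still holds
    at least `hold_threshold` of it. Both parameters are hypothetical.
    """
    kept_prior = prior_received > 0 and current_balance >= hold_threshold * prior_received
    return base * bonus if kept_prior else base


print(next_round_allocation(100, prior_received=200, current_balance=200))  # 150.0
print(next_round_allocation(100, prior_received=200, current_balance=0))    # 100
```

Because the rule keys on the same wallet's balance, it only works if users know in advance that moving tokens to another address forfeits the bonus.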
