From Vitalik's Article: Exploring Notable Sub-sectors at the Intersection of Crypto and AI

The AI sector as a whole is more like a "technical narrative-driven meme."

Authors: @charlotte0211z, @BlazingKevin_, Metrics Ventures

On January 30, Vitalik published "The promise and challenges of crypto + AI applications," discussing how blockchain and artificial intelligence should be combined and the challenges that may arise along the way. Within a month of the article's release, NMR, Near, and WLD, all projects mentioned in the piece, saw significant price increases, completing a round of value discovery. This article builds on Vitalik's four proposed models for integrating crypto and AI, offering a systematic review of current AI-focused subsectors within crypto and introducing representative projects in each category.

1 Introduction: Four Ways to Combine Crypto and AI

Decentralization is the consensus that blockchains uphold, security is their core principle, and open-source code is the foundation that lets on-chain activity embody both properties through cryptography. This model has held up through several waves of change in blockchain over recent years. However, integrating artificial intelligence introduces new complexities.

Imagine using AI to design the architecture of a blockchain or application—this would necessitate making the model open-source. Yet doing so exposes its vulnerabilities in adversarial machine learning; conversely, keeping it closed undermines decentralization. Therefore, we must carefully consider how and to what extent AI should be integrated into existing blockchains or applications.

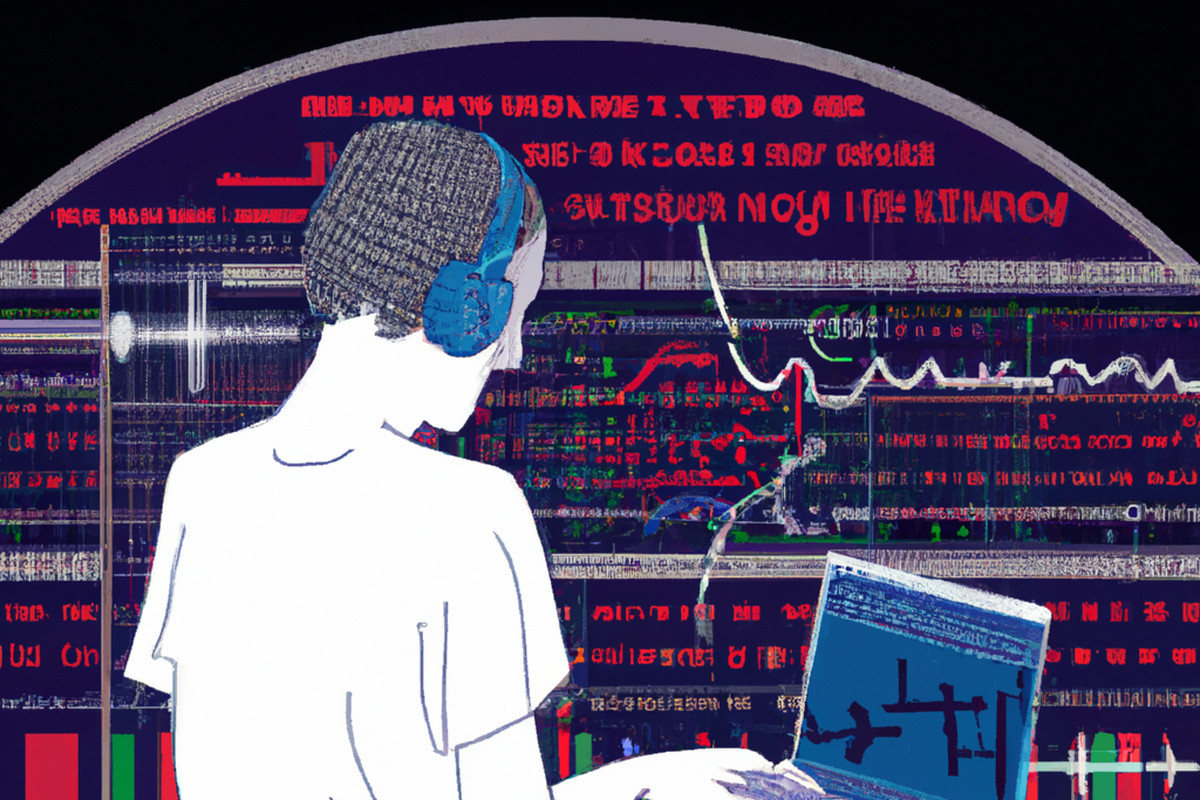

Source: DE UNIVERSITY OF ETHEREUM

DE UNIVERSITY OF ETHEREUM's article "When Giants Collide: Exploring the Convergence of Crypto x AI" outlines the fundamental differences between AI and blockchain. As shown in the figure above, the key traits of AI include:

- Centralization
- Low transparency
- High energy consumption
- Monopolistic nature
- Weak monetization capability

Blockchain stands in direct contrast to AI across all five of these dimensions. This is precisely the central argument of Vitalik’s article: if AI and blockchain are to converge, what trade-offs must be made regarding data ownership, transparency, monetization, and energy costs—and what infrastructure will be required to enable their effective integration?

Based on these criteria and his own analysis, Vitalik categorizes crypto-AI hybrid applications into four broad types:

- AI as a player in a game
- AI as an interface to the game
- AI as the rules of the game
- AI as the objective of the game

The first three primarily represent ways AI can be introduced into the crypto world, at progressively deeper levels of integration. In our view, this classification reflects the degree to which AI influences human decision-making, and therefore the degree of systemic risk each level introduces to the broader crypto ecosystem:

- AI as a participant: AI does not directly affect human decisions or behaviors, thus posing minimal real-world risks and currently offering the highest feasibility for implementation.
- AI as an interface: AI assists users by providing information or tools that enhance user and developer experience and lower entry barriers. However, incorrect outputs could introduce tangible risks to real-world outcomes.
- AI as the rules: AI fully replaces humans in decision-making and execution. Any malicious behavior or failure in such systems could lead to real-world chaos. Neither Web2 nor Web3 currently trusts AI to autonomously make critical decisions.

Finally, the fourth category focuses on leveraging crypto’s unique properties to build better AI. As previously noted, issues like centralization, opacity, high energy use, monopolies, and poor monetization inherent in AI can naturally be mitigated by crypto’s decentralized attributes. While many remain skeptical about whether crypto can meaningfully impact AI development, the vision of using decentralization to reshape reality remains one of crypto’s most compelling narratives. This sector, driven by grand ambitions, has become the most heavily hyped segment within the AI-crypto space.

2 AI as a Participant

In mechanisms where AI participates, incentives ultimately originate from protocols defined by human input. Before AI becomes an interface, let alone the rule-setter, we need methods to evaluate the performance of different AIs so that they can take part in incentive mechanisms and receive rewards or penalties via on-chain systems.

Compared to serving as an interface or rule-setter, AI as a participant poses negligible risk to users and the overall system. It represents a necessary transitional phase before AI deeply influences human decisions. Consequently, integrating AI at this level requires relatively low cost and few compromises, making it, per Vitalik, one of the most viable categories today.

Broadly speaking, most current AI applications fall into this category—for example, AI-powered trading bots and chatbots. We are still far from realizing AI as an interface or rule-setter. Users are still comparing and optimizing various bots, and crypto users have yet to form consistent habits around AI applications. Vitalik also includes Autonomous Agents under this category.

However, from a narrower and longer-term perspective, we prefer a more granular classification of AI applications and agents. Under this framework, representative subcategories include:

2.1 AI Games

To some extent, all AI games belong in this category. Players interact with and train AI characters to better align with personal preferences—whether stylistic or competitive within game mechanics. Gaming serves as a transitional domain for AI to enter the real world, presenting relatively low adoption risk and high accessibility for mainstream users. Notable projects include AI Arena, Echelon Prime, and Altered State Machine (ASM).

-

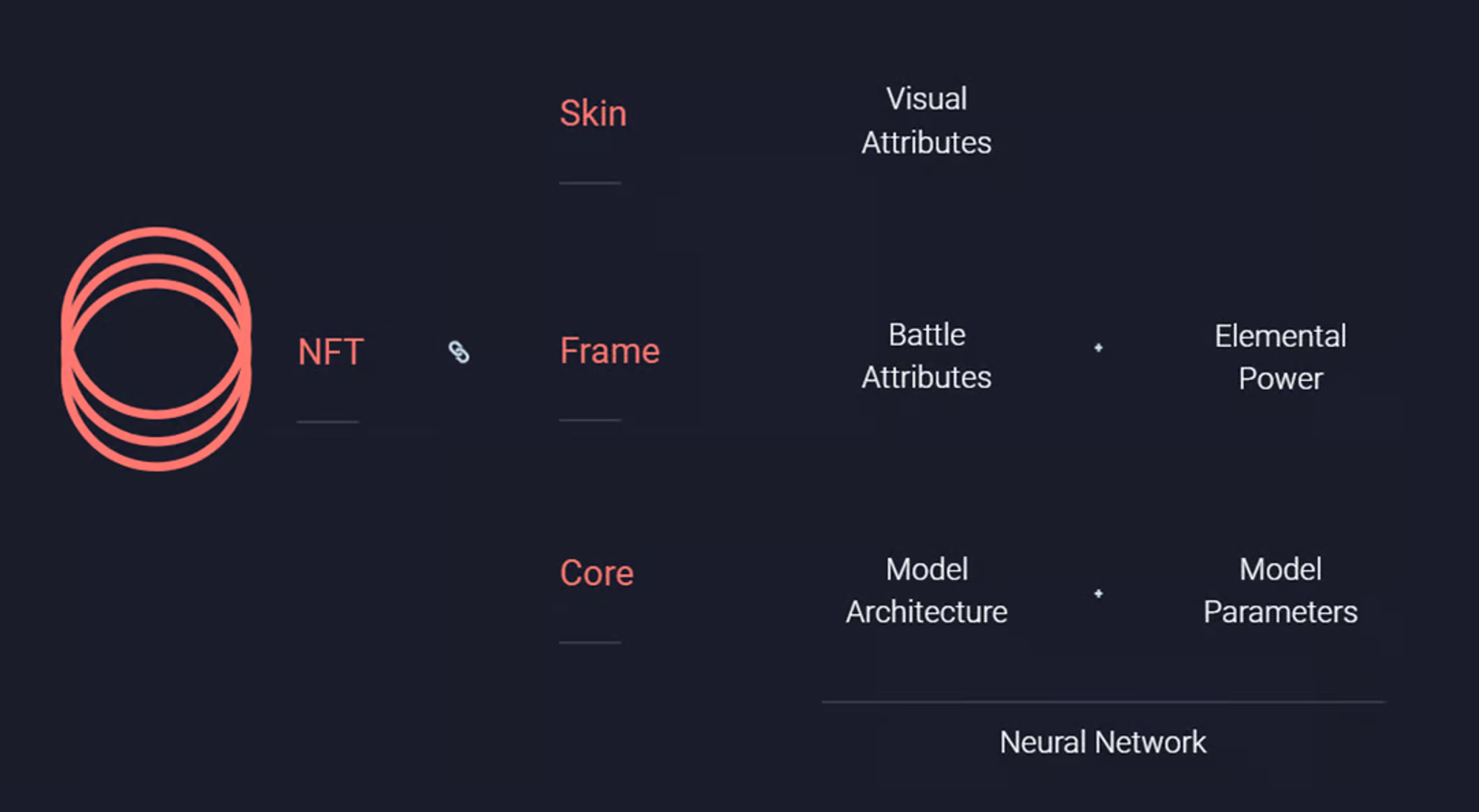

AI Arena: A PvP fighting game where players train AI-powered avatars to evolve over time. The goal is to allow everyday users to experience AI firsthand while enabling AI engineers to earn income by contributing algorithms. Each character is an AI-powered NFT. Its "Core" contains the AI model—architecture and parameters—stored on IPFS. A newly minted NFT starts with randomly generated parameters, resulting in random actions. Users improve strategy through imitation learning (IL). Each training session updates the parameters on IPFS.

-

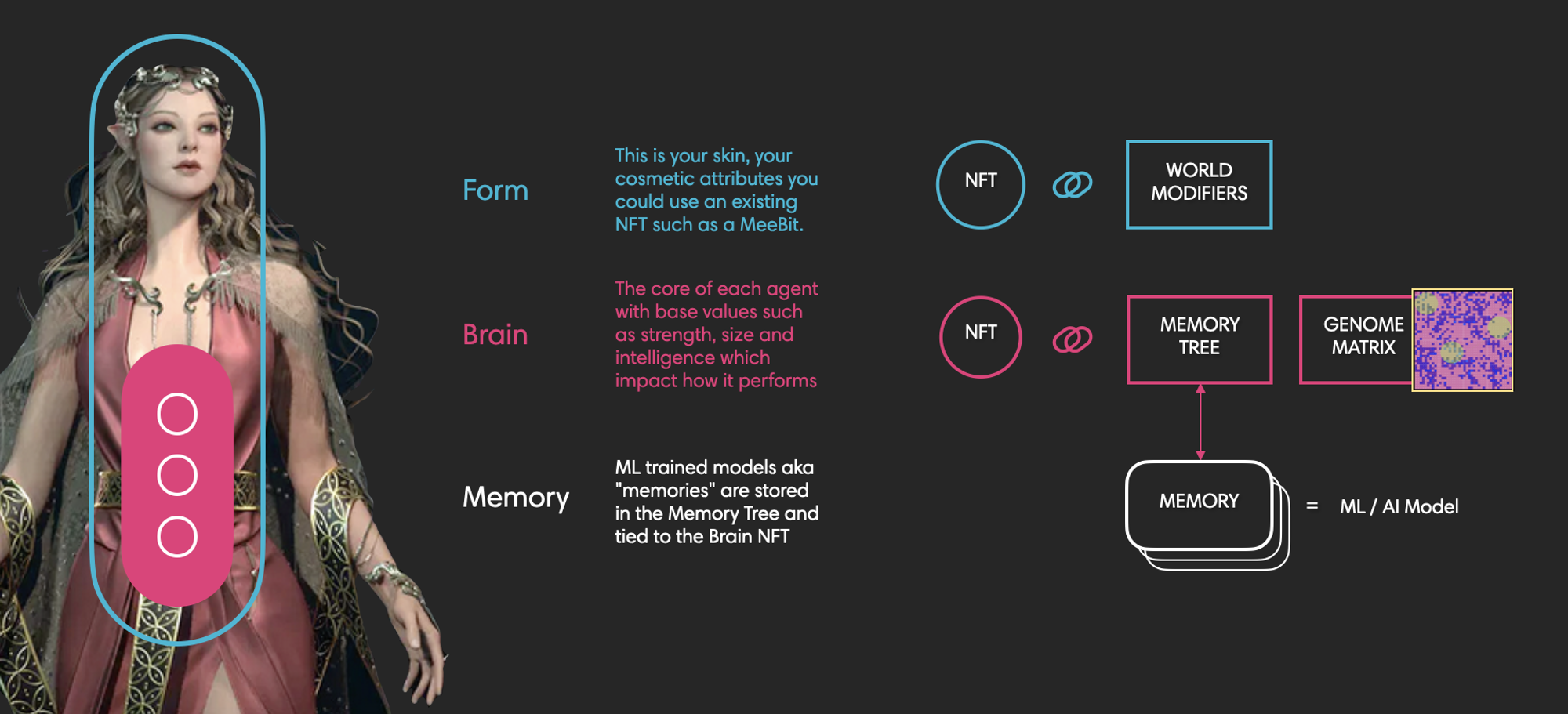

Altered State Machine (ASM): ASM is not a game but a protocol for establishing ownership and enabling trade of AI Agents. Positioned as a metaverse AI protocol, it is integrating with games including FIFA. ASM uses NFTs to represent AI Agents, each composed of three parts: Brain (agent characteristics), Memories (behavioral strategies and training history, bound to Brain), and Form (visual appearance). ASM includes a Gym module—a decentralized GPU cloud provider—that supplies computational power to Agents. Projects built on ASM include AIFA (AI football), Muhammad Ali (AI boxing), AI League (street soccer with FIFA), Raicers (AI racing), and FLUF World’s Thingies (generative NFTs).

- Parallel Colony (PRIME): Developed by Echelon Prime, Parallel Colony is an LLM-based AI game where players interact with and influence their AI Avatars. These avatars develop autonomous behaviors based on memory and life trajectories. Colony is among the most anticipated AI games. Recently, the team released a whitepaper and announced migration to Solana, triggering a fresh surge in PRIME's price.
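The training loop described for AI Arena (random initial parameters, then improvement through imitation learning) can be illustrated with a minimal sketch. This is not AI Arena's actual model: the action set, the state names, and the `TabularPolicy` class are all hypothetical, and a lookup table stands in for a neural network.

```python
import random
from collections import defaultdict

ACTIONS = ["punch", "block", "jump"]  # hypothetical action set


class TabularPolicy:
    """Toy policy holding one weight per (state, action) pair."""

    def __init__(self, seed=0):
        rng = random.Random(seed)
        # Random initial weights in [0, 1): an untrained fighter acts arbitrarily.
        self.weights = defaultdict(lambda: {a: rng.random() for a in ACTIONS})

    def act(self, state):
        # Choose the highest-weighted action for this state.
        w = self.weights[state]
        return max(ACTIONS, key=lambda a: w[a])

    def imitate(self, demonstrations, lr=1.0):
        # Behaviour cloning: raise the weight of each action the player demonstrated.
        for state, action in demonstrations:
            self.weights[state][action] += lr


policy = TabularPolicy()
policy.imitate([("enemy_close", "block"), ("enemy_far", "jump")])
print(policy.act("enemy_close"))
```

After one training session the policy reproduces the demonstrated moves in the states it has seen, while unseen states still map to arbitrary actions. In the game as described, the updated parameters would then be written back to IPFS, a step this sketch omits.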

2.2 Prediction Markets / Competitions

Prediction capability is foundational to AI-driven future decisions. Before deploying AI models in real-world forecasting, prediction competitions provide higher-level evaluations, incentivizing data scientists and AI models with tokens. This benefits the entire crypto-AI ecosystem by encouraging continuous development of more efficient, performant, and crypto-native models—ultimately producing safer, higher-quality products before AI assumes greater decision-making roles. As Vitalik notes, prediction markets are powerful primitives extendable to many other problem domains. Key projects include Numerai and Ocean Protocol.

- Numerai: A long-running data science competition where participants train ML models on historical market data provided by Numerai to predict stock movements. Participants stake NMR tokens on their models in tournaments. Top-performing models receive NMR rewards; poorly performing ones lose staked tokens. As of March 7, 2024, 6,433 models were staked, with $75,760,979 distributed in total rewards. Numerai aims to crowdsource the work of data scientists worldwide to build a new kind of hedge fund, and has already launched Numerai One and Numerai Supreme. Pathway: market prediction → crowdsourced models → hedge fund.

-

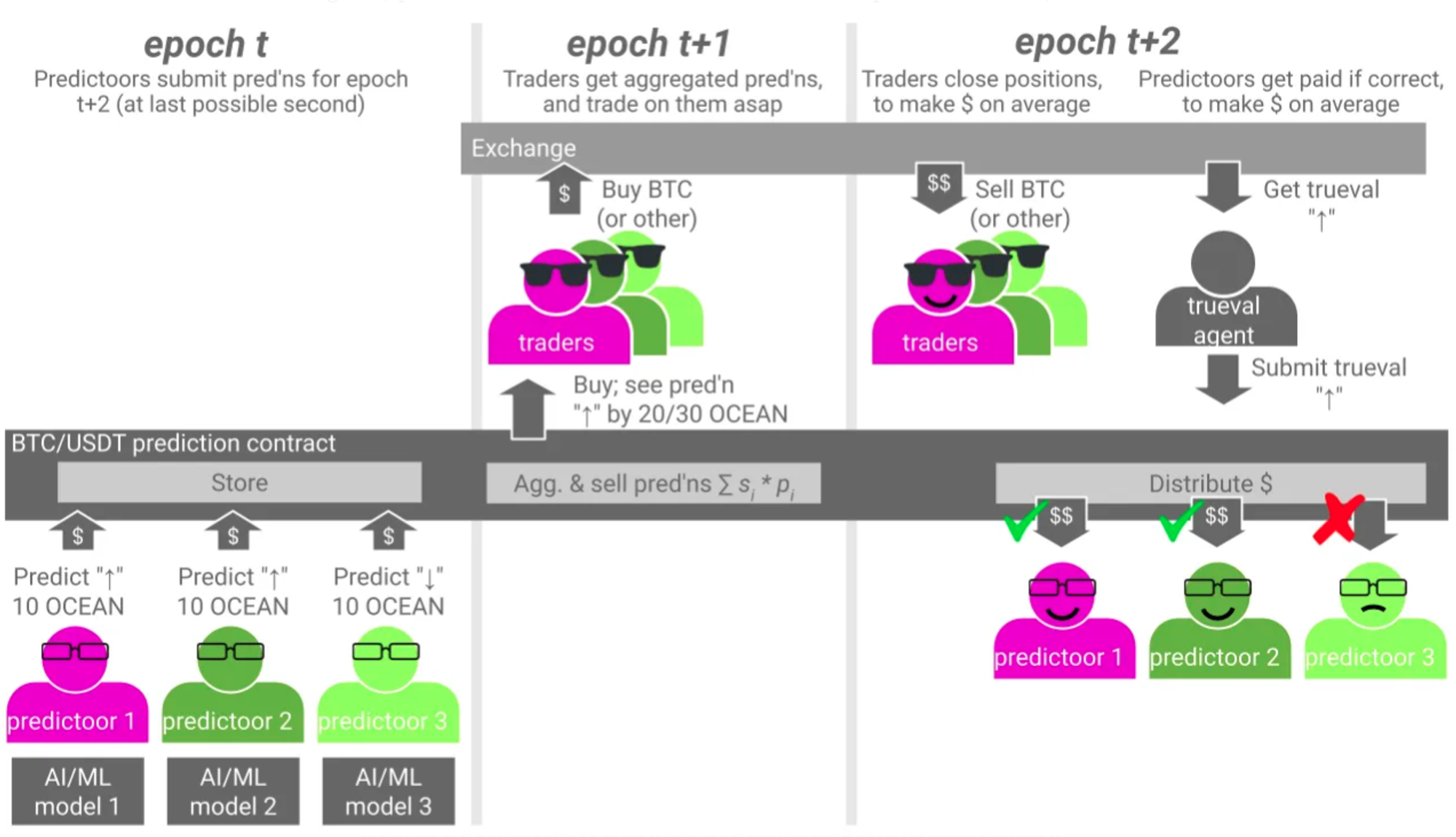

Ocean Protocol: Ocean Predictoor focuses on crowd-sourced predictions, starting with cryptocurrency price trends. Users can run a Predictoor bot (predicting next-period prices, e.g., BTC/USDT in five minutes) or a Trader bot. Predictoors stake $OCEAN, and the protocol computes a weighted global forecast. Traders buy predictions and act on them—profiting when accurate. Incorrect predictions result in slashing; correct ones earn rewards from slashed stakes and trader fees. On March 2, Ocean announced its new direction: World-World Model (WWM), exploring predictions for weather, energy, and other real-world phenomena.
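The stake, aggregate, and slash cycle described for Predictoor can be sketched in a few lines. This is a simplified illustration, not Ocean's implementation: the function names are hypothetical, and the settlement rule (wrong stakes fully slashed, the pot split pro rata among correct stakers) is an assumption made for the example.

```python
def aggregate_forecast(predictions):
    """predictions: list of (stake, predicts_up). Returns the stake-weighted share voting 'up'."""
    total = sum(stake for stake, _ in predictions)
    if total == 0:
        raise ValueError("no stake submitted")
    up = sum(stake for stake, predicts_up in predictions if predicts_up)
    return up / total


def settle(predictions, price_went_up, trader_fees=0.0):
    """Return each predictor's payout after the outcome is known.

    Assumption: wrong stakes are fully slashed, and the slashed amount plus
    trader fees is shared among correct predictors in proportion to stake.
    """
    slashed = sum(s for s, p in predictions if p != price_went_up)
    correct_total = sum(s for s, p in predictions if p == price_went_up)
    pot = slashed + trader_fees
    payouts = []
    for stake, predicts_up in predictions:
        if predicts_up == price_went_up:
            payouts.append(stake + pot * stake / correct_total)
        else:
            payouts.append(0.0)
    return payouts


preds = [(100.0, True), (50.0, False), (50.0, True)]
print(aggregate_forecast(preds))
print(settle(preds, price_went_up=True, trader_fees=10.0))
```

Weighting by stake means a predictor's influence on the global forecast scales with what they stand to lose, which is what makes the aggregate worth paying for.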

3 AI as an Interface

AI can help users understand complex events in simple language, acting as a guide in the crypto world and warning of potential risks—lowering barriers to entry and improving user safety and experience. Practical features include wallet interaction alerts, AI-driven intent-based transactions, and AI chatbots answering common crypto questions. Beyond retail users, developers, analysts, and other professionals will all benefit from AI-assisted tools.

Let us reiterate the shared trait of these projects: they do not replace human decision-making but instead assist it by providing information and tools. From this layer onward, the risk of AI malice begins to surface—through misinformation influencing final human judgments—a concern detailed in Vitalik’s article.

There are numerous and diverse projects in this category, including AI chatbots, smart contract auditors, code generators, and trading bots. Most current AI applications reside at this early stage. Notable examples include:

-

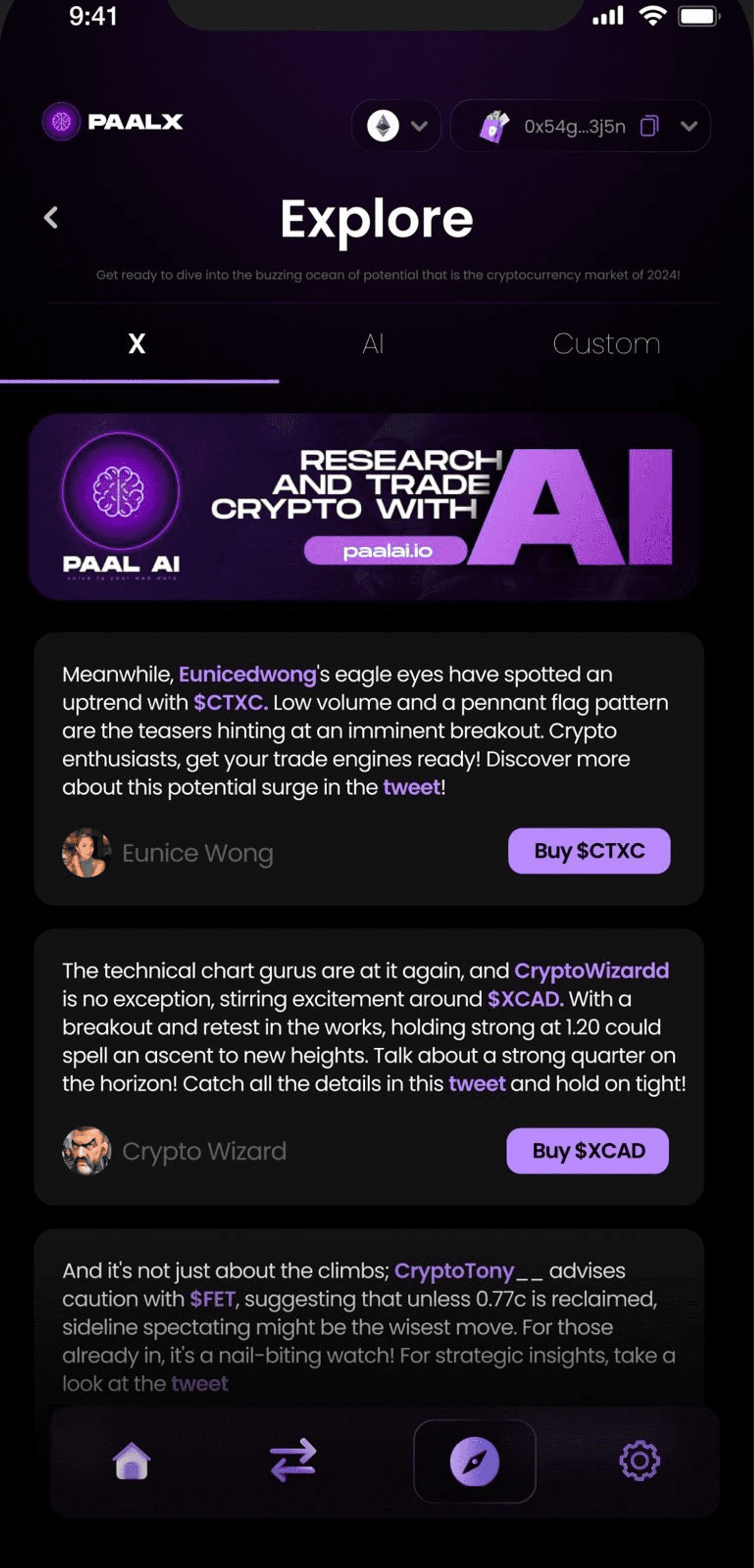

PaaL: Currently the leading AI chatbot project, PaaL can be seen as a ChatGPT trained on crypto knowledge. Integrated with Telegram and Discord, it offers token data analysis, fundamentals and tokenomics evaluation, text-to-image generation, and automated group replies. Users can customize bots by feeding datasets to create personalized AI knowledge bases. PaaL is advancing toward AI trading—on February 29, it launched PaalX, an AI-powered crypto research and trading terminal supporting smart contract auditing, Twitter news integration, trading, and AI assistant functions to reduce user friction.

-

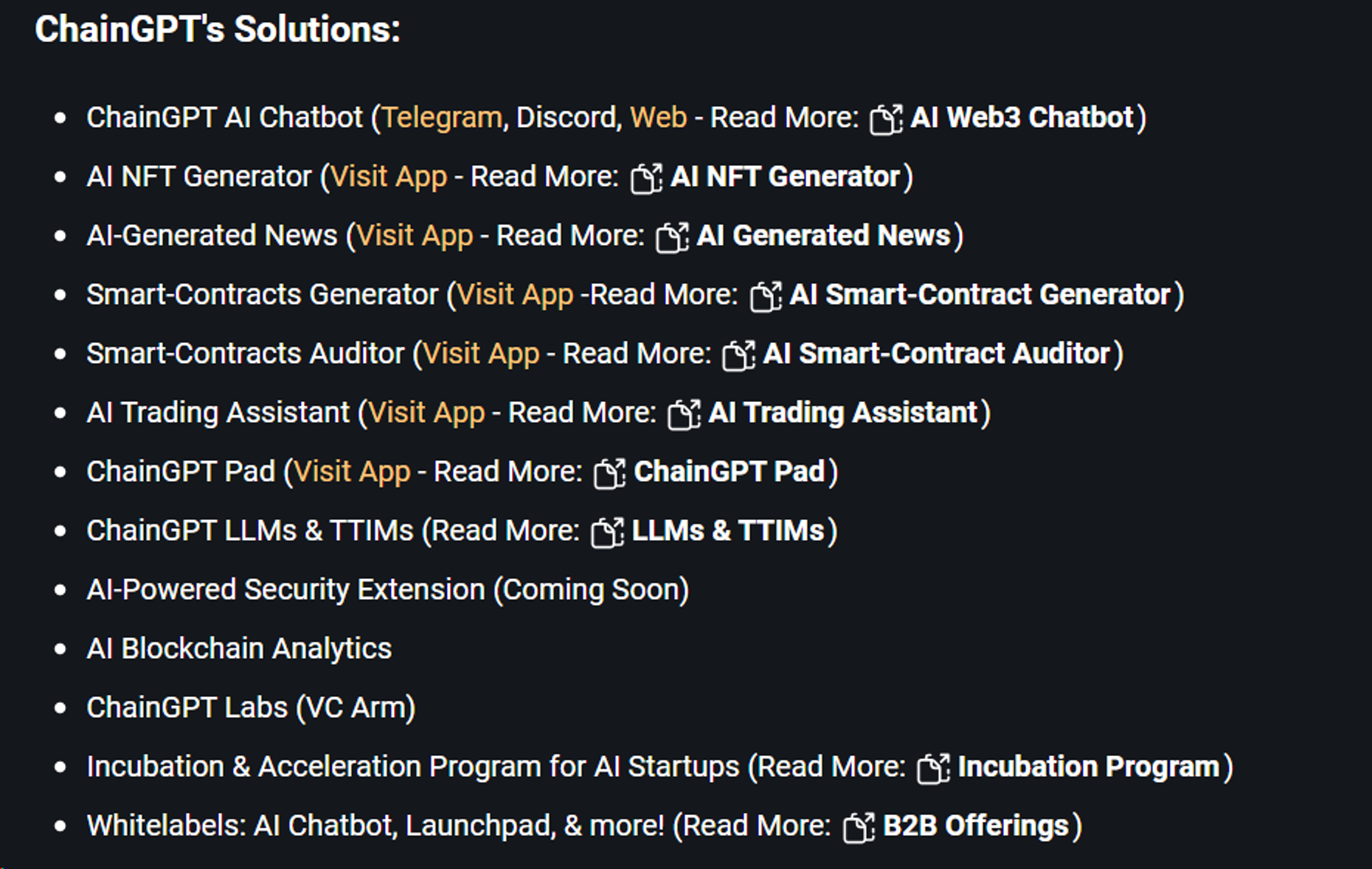

ChainGPT: ChainGPT develops a suite of AI-powered crypto tools, including chatbots, NFT generators, news aggregators, smart contract builders and auditors, trading assistants, prompt markets, and cross-chain swaps. However, ChainGPT’s current focus is on project incubation and launchpad services, having completed 24 IDOs and 4 free giveaways.

- Arkham: Ultra is Arkham's proprietary AI engine designed to match blockchain addresses with real-world entities, increasing transparency in crypto. Ultra aggregates on- and off-chain data, consolidates it into an expandable database, and presents insights visually. However, Arkham's documentation lacks detailed technical descriptions of Ultra. The project gained attention due to a personal investment by OpenAI co-founder Sam Altman, surging 5x in the past 30 days.

- GraphLinq: GraphLinq is an automation workflow solution enabling users to deploy and manage automations without coding, e.g., pushing Bitcoin prices from CoinGecko to a Telegram bot every five minutes. It visualizes workflows using graphs, allowing drag-and-drop node creation executed by the GraphLinq Engine. Despite no-code design, setting up graphs remains challenging for average users due to template selection and logic block configuration. GraphLinq is now integrating AI to let users define tasks via natural language conversations.

- 0x0.ai: 0x0 offers three AI-related services: AI smart contract auditing, AI anti-rug detection, and an AI developer center. Anti-rug detects suspicious behaviors like excessive taxes or liquidity draining. The developer center uses ML to generate smart contracts for no-code deployment. Only the audit feature is live; others remain under development.

-

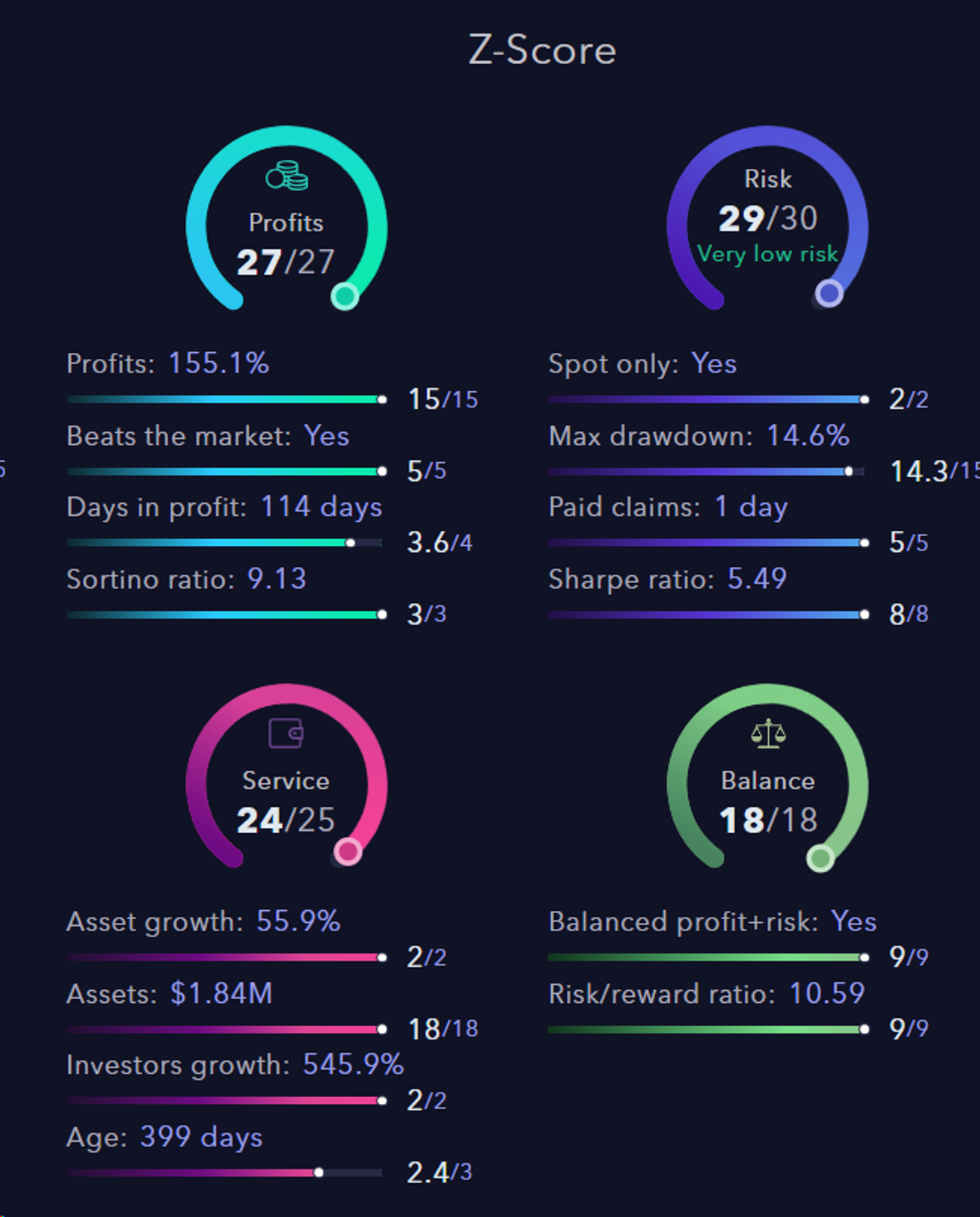

Zignaly: Founded in 2018, Zignaly allows individual investors to select fund managers for crypto asset management—similar to copy-trading. It uses machine learning and AI to build a systematic evaluation framework for fund managers. Its first product, Z-Score, remains relatively basic as an AI tool.

4 AI as the Rules of the Game

This is the most exciting frontier—enabling AI to replace humans in decision-making and actions. Your AI could directly control your wallet, executing trades and decisions autonomously. In this category, we identify three main layers: AI applications (especially those aiming for autonomous decisions like trading or DeFi yield bots), Autonomous Agent protocols, and zkML/opML.

AI applications are tools that make specific decisions in particular domains, leveraging specialized knowledge and data via custom AI models. Note that AI apps appear in both “interface” and “rules” categories. Ideally, they should evolve into independent agents—but current limitations in model effectiveness and integration security prevent even reliable interface functionality. Thus, AI applications remain in very early stages, with details covered earlier.

Vitalik mentioned Autonomous Agents under the first category (“AI as a player”), but we place them here based on long-term vision. Autonomous Agents simulate human thought and decision-making using vast data and algorithms, performing tasks and interactions. We focus on communication and networking infrastructure—protocols defining agent ownership, identity, communication standards, and interoperability, enabling coordinated decision-making across multiple agents.

zkML/opML ensures trustworthy output by cryptographically or economically verifying correct model inference. Security is critical when integrating AI into smart contracts. Since smart contracts automatically execute based on inputs, malicious or faulty AI inputs could introduce massive systemic risk. Hence, zkML/opML and related solutions form the foundation for AI autonomy.

In summary, these three components form the foundational layers for AI-as-rule: zkML/opML as the base layer ensuring protocol security; Agent protocols building an ecosystem for collaborative decision-making; and AI applications (specific agents) continuously improving domain expertise and executing real decisions.

4.1 Autonomous Agent

AI Agents fit naturally into the crypto world. From smart contracts to Telegram bots to full AI agents, crypto is moving toward higher automation and lower user friction. Smart contracts execute immutably but require external triggers and cannot run autonomously. Telegram bots simplify access—users interact via natural language instead of direct frontend engagement—but handle only simple, predefined tasks. AI Agents go further: they understand natural language, independently discover and compose other agents and on-chain tools, and achieve user-defined goals.

AI Agents aim to dramatically improve the usability of crypto products, while blockchain enhances agent operations by making them more decentralized, transparent, and secure. Specifically, blockchain helps by:

- Token incentives attracting more developers to build agents
- NFT-based ownership enabling agent monetization and trading
- On-chain agent identity and registration systems
- Immutable activity logs for traceability and accountability

Key projects in this space include:

- Autonolas: Supports on-chain asset ownership and composability of agents and components, enabling discovery and reuse of code, agents, and services. Developers register code on-chain and receive NFTs representing ownership. Service owners combine multiple agents into services, attract operators to run them, and users pay to access them.

- Fetch.ai: With strong AI expertise, Fetch.ai focuses on AI Agents. Its protocol comprises four layers: AI Agents, Agentverse, AI Engine, and Fetch Network. Agents are central; others support their development. Agentverse is a platform-as-a-service for creating and registering agents. AI Engine converts natural language inputs into executable tasks and selects optimal registered agents. Fetch Network is the blockchain layer; agents must register in the Almanac contract to collaborate. Notably, Autonolas focuses on crypto-world agents bringing off-chain operations on-chain, whereas Fetch.ai spans Web2 use cases like travel booking and weather forecasting.

- Delysium: Transitioned from gaming to an AI Agent protocol with two layers: communication and blockchain. The communication layer forms the backbone, enabling fast, secure, scalable inter-agent communication via standardized messaging and service discovery APIs. The blockchain layer handles agent authentication and immutable logging of key decisions via smart contracts. Specifically, it includes Agent ID (ensuring only legitimate agents access the network) and Chronicle (an immutable log of agent actions for verifiable audit trails).

- Altered State Machine: Defines NFT-based standards for agent ownership and trading (see Section 2.1). Though currently focused on gaming, its foundational standard holds potential for broader agent applications.

- Morpheus: Building an AI Agent ecosystem that connects Coders, Compute Providers, Community Builders, and Capital contributors, who respectively supply agents, compute resources, dev tools, and funding. MOR will have a fair launch, rewarding miners providing compute, stETH stakers, agent/smart contract developers, and community contributors.
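Two of the blockchain roles listed above, on-chain agent identity and immutable activity logs (the pairing described for Delysium's Agent ID and Chronicle), can be illustrated with a hash-chained log. This is a generic sketch and not Delysium's code: the `Chronicle` class here is a hypothetical in-memory stand-in for a smart contract, and the registry is just a set of agent IDs.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder previous-hash for the first entry


def _digest(body):
    # Deterministic hash of an entry body.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class Chronicle:
    """Append-only log in which each entry commits to the previous one."""

    def __init__(self, registered_agents):
        self.registered = set(registered_agents)  # stand-in for an Agent ID registry
        self.entries = []

    def append(self, agent_id, action):
        if agent_id not in self.registered:
            raise PermissionError(f"unregistered agent: {agent_id}")
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"agent": agent_id, "action": action, "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self):
        # Recompute the chain; editing any earlier entry breaks every later link.
        prev = GENESIS
        for e in self.entries:
            body = {"agent": e["agent"], "action": e["action"], "prev": e["prev"]}
            if e["prev"] != prev or e["hash"] != _digest(body):
                return False
            prev = e["hash"]
        return True


log = Chronicle({"agent-1"})
log.append("agent-1", "swap 1 ETH for USDC")
log.append("agent-1", "deposit USDC into vault")
print(log.verify())
```

On a real chain the immutability comes from consensus rather than from the data structure alone; the hash chain only makes tampering detectable to anyone who replays the log.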

4.2 zkML/opML

Zero-knowledge proofs (ZKPs) currently serve two primary purposes:

- Proving computation was correctly executed at low cost on-chain (used in ZK-Rollups and ZKP bridges);
- Privacy preservation: proving correctness without revealing computation details.

Similarly, ZKPs in machine learning fall into two categories:

- Inference verification: Using ZK-proofs to cheaply verify off-chain AI model inference was performed correctly.
- Privacy protection: Two subtypes: (1) data privacy: using private data with public models, protected via ZKML; (2) model privacy: hiding model weights while computing outputs from public inputs.

We believe inference verification is currently more critical for crypto. Let’s explore this scenario further. From AI as participant to AI as rule-maker, we want AI integrated into on-chain processes. But AI inference is too computationally expensive to run directly on-chain. Moving it off-chain introduces trust issues—the “black box” problem: Did the operator alter my input? Did they use the specified model? By converting ML models into ZK circuits, we can either: (1) store small zkML models directly in smart contracts, solving transparency; or (2) perform inference off-chain, generate a ZK proof, and verify it on-chain. The architecture involves two contracts: a main contract (using ML output) and a ZK-proof verification contract.

zkML remains in early stages, facing technical hurdles in translating ML models to ZK circuits and high computational and cryptographic overhead. Like rollups, opML offers an economic alternative, using Arbitrum’s AnyTrust assumption—requiring only one honest node among submitters or validators. However, opML only supports inference verification, not privacy.
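The "one honest node" assumption behind opML can be made concrete with a small sketch. It is heavily simplified: `model` is a hypothetical stand-in for an ML model, validators are plain functions, and the challenge window and interactive dispute process that a real optimistic system would need are left out.

```python
def model(x):
    # Hypothetical stand-in for an ML model: a fixed, deterministic function.
    return 3 * x + 1


def honest_validator(model_input, claimed_output):
    # Re-runs the model and challenges (returns True) on any mismatch.
    return model(model_input) != claimed_output


def colluding_validator(model_input, claimed_output):
    # Never challenges, whatever the submitter claims.
    return False


def optimistic_accept(model_input, claimed_output, validators):
    """Accept the claimed result unless at least one validator challenges it."""
    return not any(v(model_input, claimed_output) for v in validators)


# A correct claim passes; a false claim is caught by a single honest validator.
print(optimistic_accept(2, 7, [colluding_validator, honest_validator]))
print(optimistic_accept(2, 8, [colluding_validator, colluding_validator, honest_validator]))
# With no honest validator at all, the same false claim goes through.
print(optimistic_accept(2, 8, [colluding_validator]))
```

The third call shows why the guarantee is economic rather than cryptographic: correctness depends on at least one participant actually recomputing the result, whereas a ZK proof is checked without re-running the model.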

Current projects are building zkML infrastructure and exploring use cases. Demonstrating real utility is crucial, because only clear use cases can justify zkML's high cost. Some projects focus specifically on ML-related ZK technology (e.g., Modulus Labs), others on general-purpose ZK infrastructure:

- Modulus uses zkML for on-chain AI inference. On February 27, it launched Remainder, a zkML prover achieving 180x efficiency gains over traditional AI inference on equivalent hardware. Modulus partners with projects like Upshot to collect complex market data, assess NFT values with ZK-verified AI, and post prices on-chain; and with AI Arena to prove battling avatars match user-trained versions.
- Risc Zero runs ML models on-chain via its ZKVM, proving exact computations were correctly executed.
- Ingonyama develops hardware specialized for ZK technology, potentially lowering entry barriers. zkML may also apply to model training.

5 AI as the Objective

While the first three categories emphasize how AI empowers crypto, “AI as the objective” highlights how crypto can improve AI—using crypto to build better AI models and products, judged by criteria like efficiency, accuracy, and decentralization.

AI rests on three pillars: data, compute, and algorithms. Crypto is actively enhancing each dimension:

- Data: Training data is foundational. Decentralized data protocols incentivize individuals and organizations to share private data while preserving privacy via cryptography, preventing leaks of sensitive information.
- Compute: Decentralized compute is currently the hottest AI-crypto subsector. Protocols create matching markets between supply and demand, connecting long-tail compute resources with AI firms for training and inference.
- Algorithms: Crypto's role in algorithm advancement is central to decentralized AI and a major theme in Vitalik's "AI as objective." Creating decentralized, trustworthy black-box AI would resolve adversarial ML issues, but faces immense cryptographic overhead. Alternatively, "using crypto incentives to encourage better AI" achieves progress without diving fully into cryptographic complexity.

Big tech monopolies over data and compute collectively concentrate model training, turning closed-source models into profit engines. From an infrastructure standpoint, crypto incentivizes decentralized supply of data and compute via economic mechanisms, protects data privacy via cryptography, and enables decentralized model training—leading to more transparent, equitable AI.

5.1 Decentralized Data Protocols

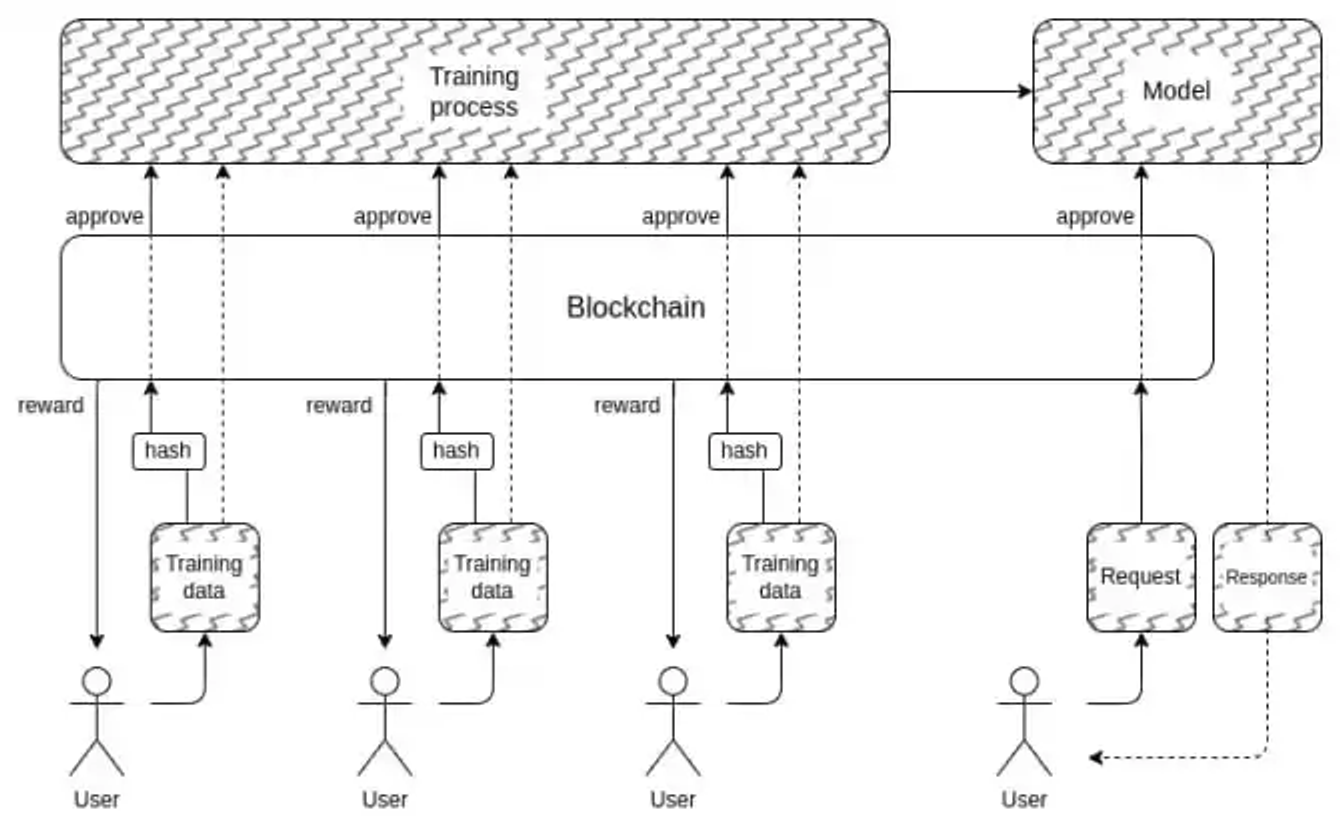

These protocols operate largely through data crowdsourcing, incentivizing users to contribute datasets or data services (e.g., labeling) for corporate model training. They establish data marketplaces to match supply and demand. Some explore DePIN incentive schemes to gather browsing data or use user devices/bandwidth for web scraping.

- Ocean Protocol: Tokenizes and establishes ownership of data. Users can create NFTs for data/algorithms via no-code tools and issue datatokens controlling access. Ocean's Compute-to-Data (C2D) ensures privacy: users get results without downloading raw data. Founded in 2017, Ocean naturally rode the AI wave during this cycle.

-

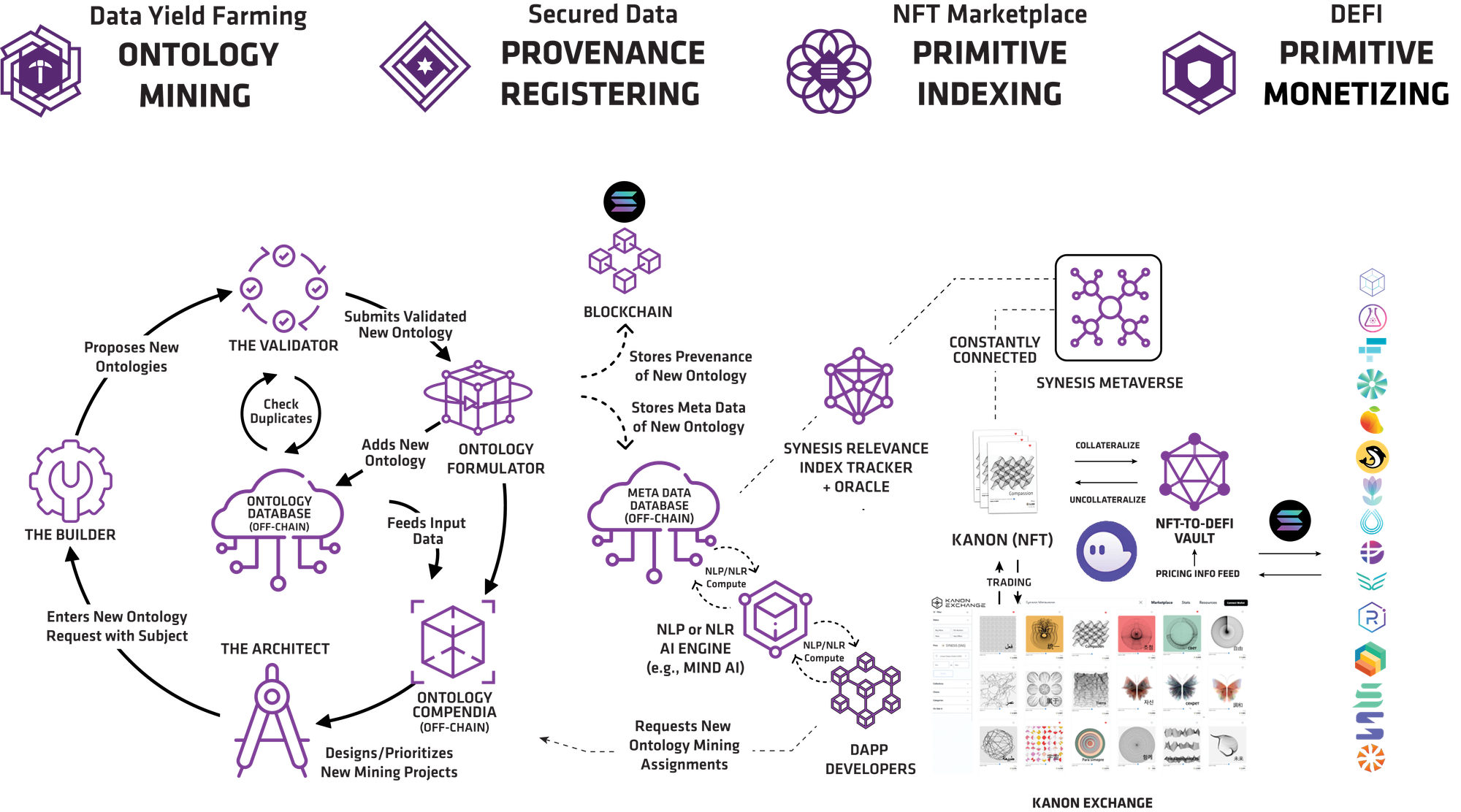

Synesis One: A Solana-based Train2Earn platform. Users earn $SNS by contributing natural language data and annotations. Miners fall into three roles: Architects (create tasks), Builders (submit content), Validators (verify submissions). Final datasets go to IPFS, with metadata stored on-chain and made available off-chain to AI companies (currently Mind AI).

-

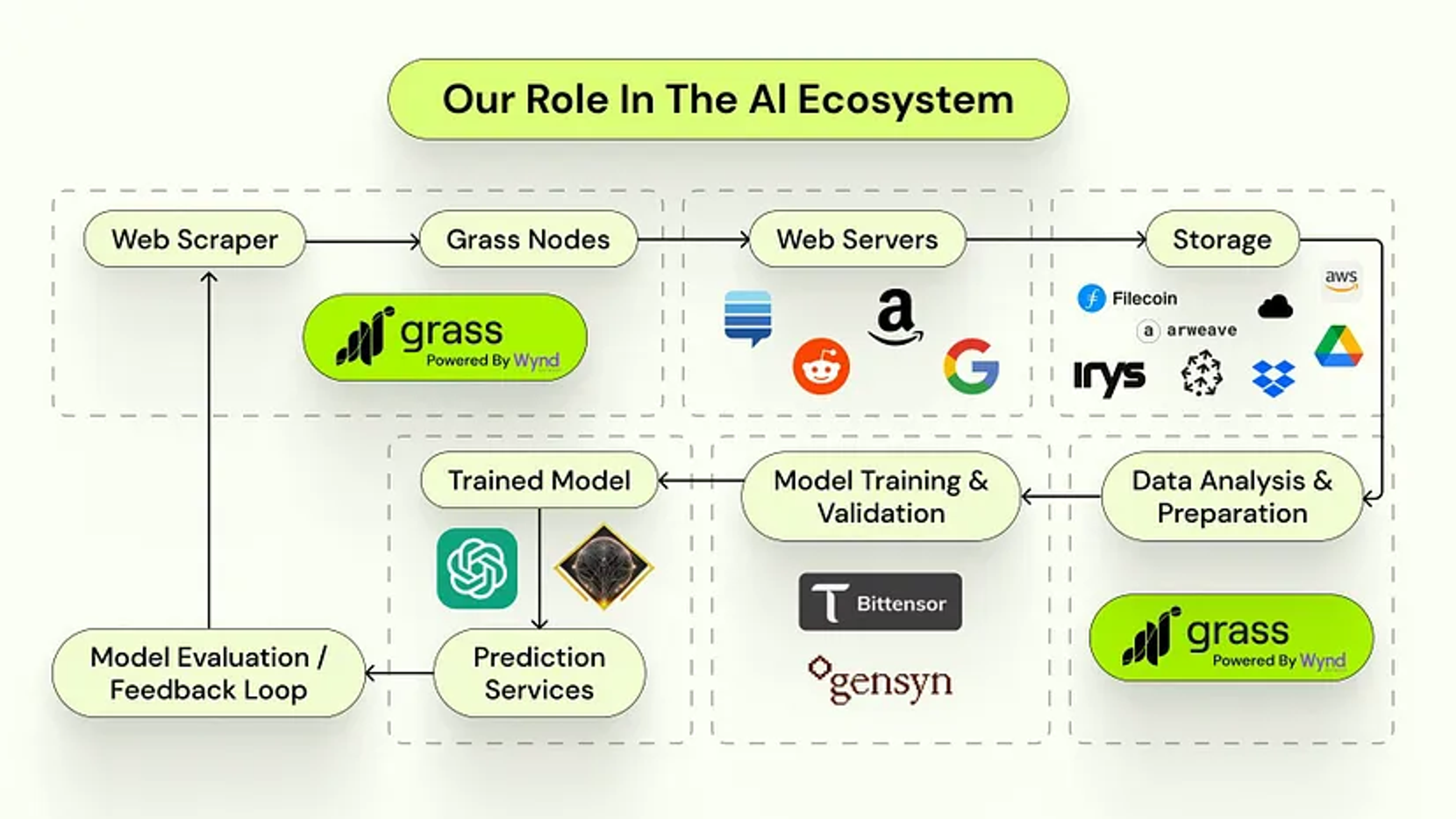

Grass: Marketed as AI’s decentralized data layer, essentially a decentralized web scraping marketplace sourcing data for AI training. Public websites (Twitter, Google, Reddit) are valuable AI data sources, but increasingly restrict scraping. Grass leverages unused personal bandwidth and rotating IPs to bypass blocks, scrape public sites, clean data, and supply it to AI firms. Currently in beta, users contribute bandwidth for points redeemable in potential airdrops.

-

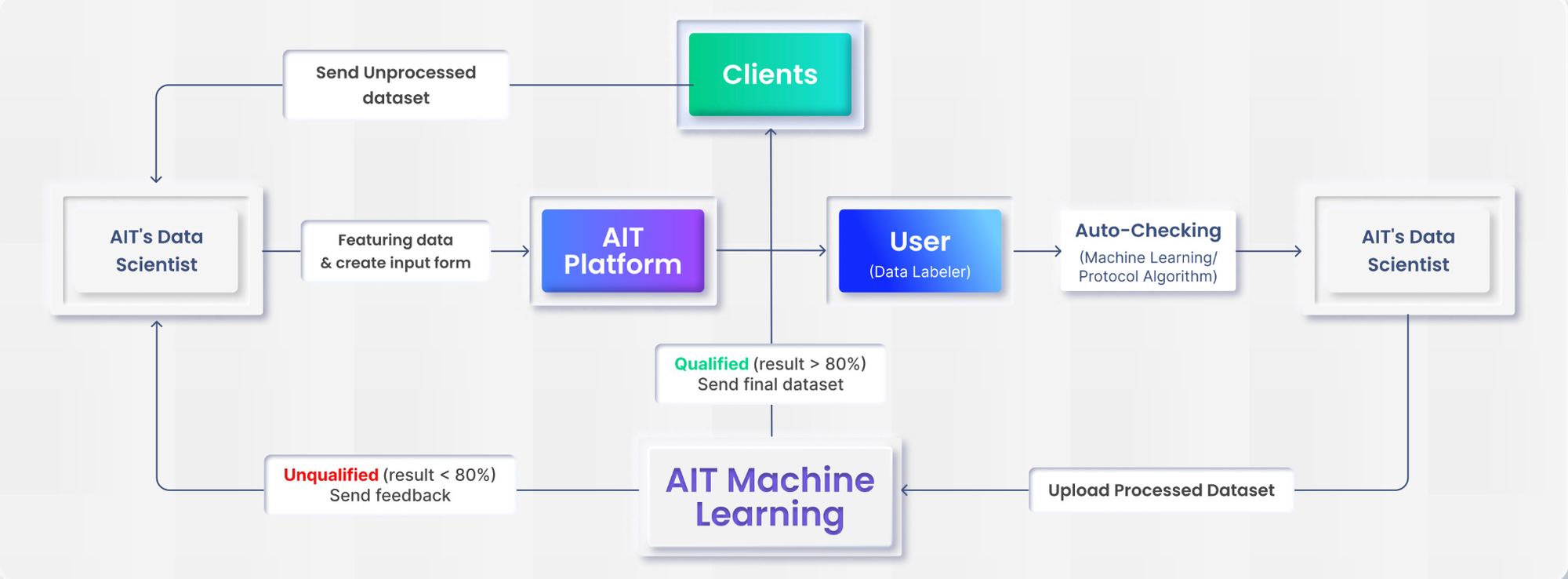

AIT Protocol: A decentralized data labeling protocol providing high-quality datasets for model training. Web3 enables global participation, with data scientists pre-labeling data, users refining it, and quality-checked outputs delivered to developers.

Beyond data provision and labeling, legacy decentralized storage infrastructures like Filecoin and Arweave also support distributed data supply.

5.2 Decentralized Compute

In the AI era, compute is paramount. Just as NVIDIA’s stock soars, in crypto, decentralized compute is arguably the most hyped AI subsector—among the top 200 crypto projects by market cap, 5 of the 11 AI projects focus on decentralized compute (Render, Akash, AIOZ Network, Golem, Nosana), all seeing massive rallies. Even smaller projects in this space have surged rapidly, especially near NVIDIA event cycles—any mention of GPUs sparks immediate price jumps.

Project logic in this sector is highly homogenized: use token incentives to mobilize idle compute resources (from data centers, former miners post-Ethereum PoS, consumer hardware, or partner projects), drastically reducing usage costs and building a compute marketplace. Despite similarities, this is a field where leaders build strong moats. Competitive advantages stem from resource scale, rental pricing, utilization rates, and technical differentiation. Leading projects include Akash, Render, io.net, and Gensyn.

Projects broadly split into two directions: AI model inference and training. Training demands far more compute and bandwidth than inference, making distributed training harder to implement. Meanwhile, the inference market is expanding rapidly, with predictable revenues likely exceeding training in the near term. Thus, most projects focus on inference (Akash, Render, io.net). Gensyn leads in training. Akash and Render predate the AI boom—Akash for general compute, Render for media rendering—while io.net was AI-native. All have pivoted aggressively toward AI given rising demand.

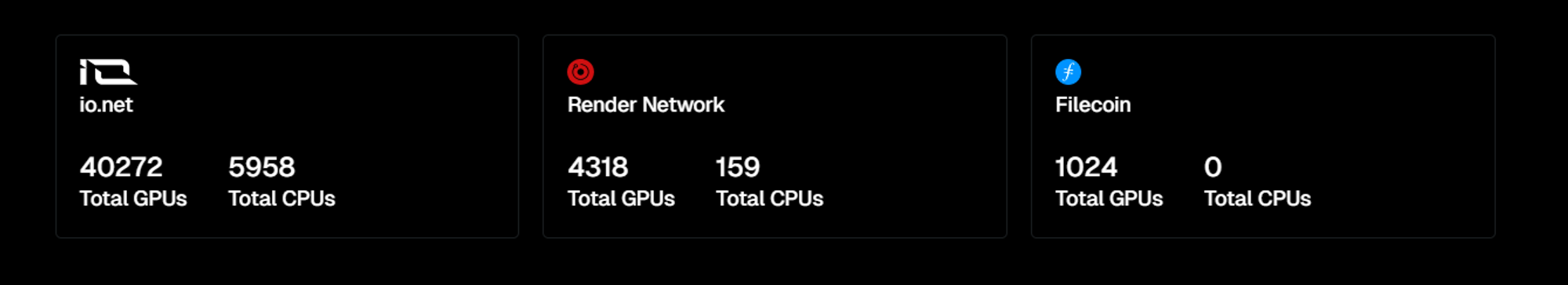

Two key metrics remain supply (compute resources) and demand (utilization). Akash reports 282 GPUs and over 20,000 CPUs, 160k leases completed, and 50–70% GPU utilization—a solid figure. io.net boasts 40,272 GPUs and 5,958 CPUs, plus access to Render’s 4,318 GPUs and 159 CPUs, Filecoin’s 1,024 GPUs—including ~200 H100s and over 1,000 A100s. It has completed 151,879 inferences. io.net uses high-profile airdrop expectations to attract resources, with GPU counts growing rapidly—its true capacity will be tested post-token launch. Render and Gensyn haven’t disclosed stats. Many projects boost competitiveness via partnerships: io.net taps Render and Filecoin; Render launched RNP-004, letting users access its compute indirectly via clients like io.net, Nosana, FedML, and Beam—accelerating transition from rendering to AI compute.

Yet, verification remains a challenge—how to ensure workers correctly execute tasks. Gensyn is developing a verification layer using probabilistic proof of learning, graph-based pinpointing, and incentives. Validators and challengers jointly verify computations. Beyond supplying training compute, Gensyn’s verification mechanism holds unique value. Fluence on Solana adds task verification—developers can check on-chain proofs to confirm app behavior and correct execution. Still, practicality often outweighs trust—platforms must first amass sufficient compute to compete. Exceptional verification protocols may later serve as layers atop other platforms.

5.3 Decentralized Models

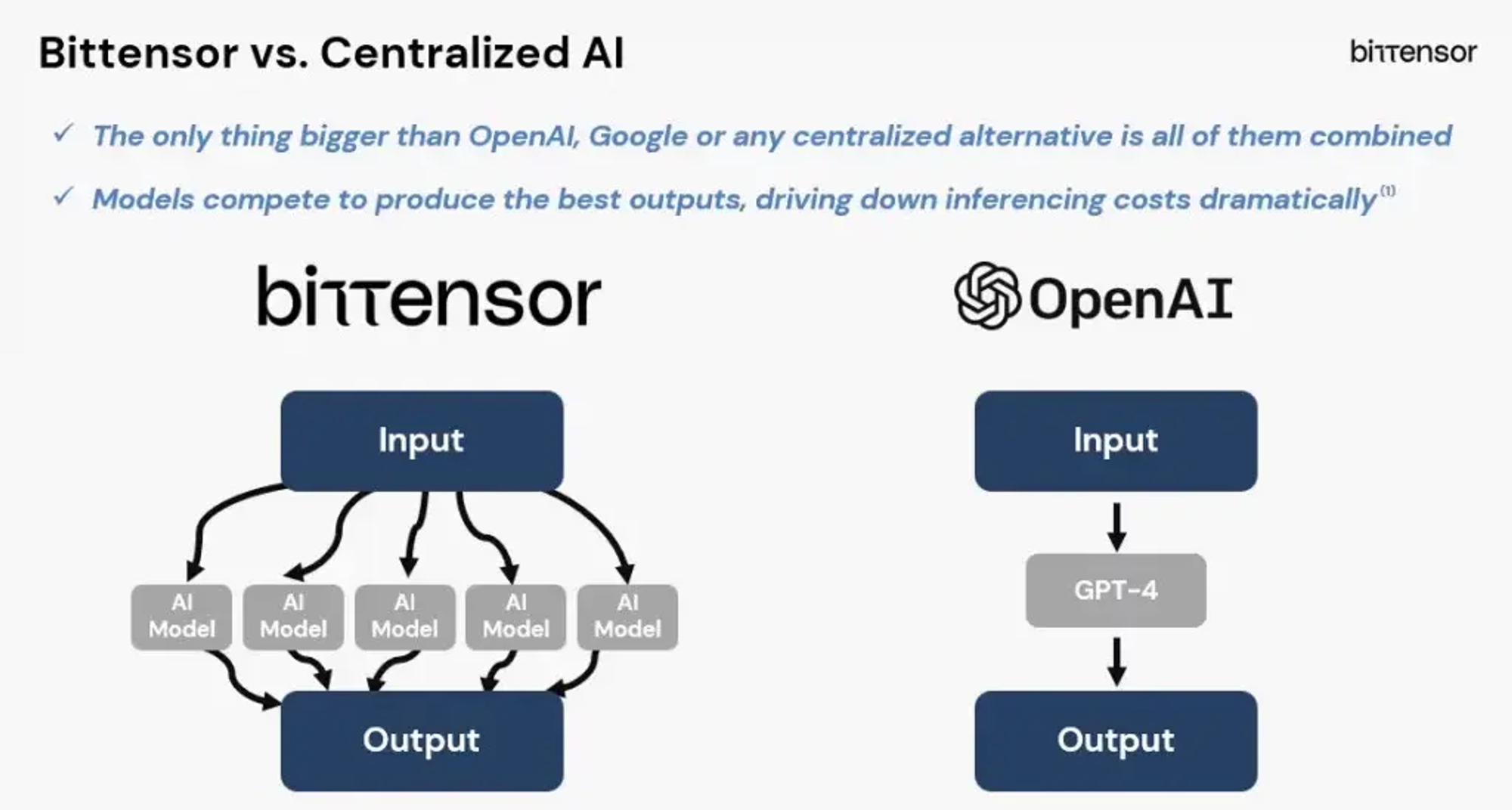

We remain far from Vitalik's ultimate vision (shown above): building a trusted black-box AI via blockchain and cryptography to solve adversarial ML. Encrypting the entire AI pipeline, from training to query to output, is prohibitively expensive. However, some projects are attempting to incentivize better AI models, breaking down silos between isolated models and fostering collaboration, competition, and mutual improvement. Bittensor is the most prominent example.

- Bittensor: Promotes synergy among different AI models. Notably, Bittensor does not train models itself but provides AI inference services. Its 32 subnets specialize in areas like web scraping, text generation, and text-to-image. During complex tasks, models from different subnets collaborate. Incentives drive competition within and across subnets. Rewards are issued at 1 TAO per block (~7,200 TAO daily), allocated across subnets by 64 validators in SN0 (root net) based on performance. Subnet validators then distribute rewards among miners based on work quality. Better performance yields higher rewards, improving overall system inference quality.
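The emission figures quoted for Bittensor can be checked with a short calculation, followed by a toy version of the root-network allocation. Two assumptions are made here: a block time of roughly 12 seconds (which is what 1 TAO per block and ~7,200 TAO per day imply), and a plain average of validator weights in place of Bittensor's actual consensus mechanism. The subnet names are hypothetical.

```python
BLOCK_TIME_SECONDS = 12  # assumption: implied by ~7,200 blocks per day
TAO_PER_BLOCK = 1


def daily_emission():
    blocks_per_day = 24 * 60 * 60 // BLOCK_TIME_SECONDS
    return blocks_per_day * TAO_PER_BLOCK


def allocate(emission, validator_weights):
    """Split emission across subnets.

    validator_weights: one dict {subnet: weight} per root validator.
    Simplification: each validator's weights are normalized to sum to 1,
    then averaged with equal say per validator.
    """
    subnets = sorted({s for w in validator_weights for s in w})
    n = len(validator_weights)
    shares = {
        s: sum(w.get(s, 0.0) / sum(w.values()) for w in validator_weights) / n
        for s in subnets
    }
    return {s: emission * share for s, share in shares.items()}


print(daily_emission())
print(allocate(daily_emission(), [{"text": 3, "image": 1}, {"text": 1, "image": 1}]))
```

The same proportional split would then repeat one level down, with each subnet's validators dividing that subnet's share among miners according to the quality of their work.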

6 Conclusion: Meme Hype or Technological Revolution?

From Sam Altman’s moves sending ARKM and WLD soaring, to NVIDIA events boosting affiliated projects, many are reassessing investment theses in the AI-crypto space. Is this meme speculation or genuine technological revolution?

Beyond a few celebrity-driven exceptions (like ARKM and WLD), the AI-crypto sector as a whole resembles a "technical narrative-driven meme."

On one hand, the overall hype in the crypto-AI space is tightly coupled with advancements in Web2 AI. External momentum led by OpenAI often acts as the catalyst. On the other hand, the storylines remain centered on “technical narratives”—note, “narratives” rather than actual “technology.” This underscores the importance of selecting the right subsector and scrutinizing project fundamentals. We must identify narratively compelling directions while spotting projects with sustainable competitive advantages and moats.

From Vitalik’s four integration models, we observe a balance between narrative appeal and feasibility. In the first two categories—AI as participant and interface—we see many GPT wrappers: quick to deploy but highly homogenized. First-mover advantage, ecosystem strength, user base, and revenue become the key differentiators in such saturated markets. The third and fourth categories represent grand visions—agent collaboration networks, zkML, decentralized AI reinvention—still in early stages. Projects with genuine innovation attract capital swiftly, even with minimal working demos.
