Alliance DAO: What Are the Opportunities and Challenges of AI and Web3 Convergence?

Few technologies have significantly influenced the trajectory of artificial intelligence, and Web3 is one of them.

Written by: Mohamed Fouda, Qiao Wang

*This article is exclusively authorized by Alliance DAO for translation and publication by TechFlow.

Since the launch of ChatGPT and GPT-4, there has been an explosion of content discussing how AI could transform everything, including Web3. Developers across industries are using ChatGPT to automate tasks such as generating boilerplate code, writing unit tests, creating documentation, debugging, and detecting vulnerabilities. This article explores how AI enables new and interesting Web3 use cases, but its primary focus is the reciprocal relationship between Web3 and AI. Few technologies have the potential to significantly affect the trajectory of AI development, and Web3 is one of them.

How Can Web3 Benefit AI?

Despite their vast potential, current AI models face several challenges, including data privacy concerns, the fairness of proprietary model execution, and the ability to create and spread convincing fake content. Some existing Web3 technologies offer unique advantages in addressing these issues.

Proprietary Datasets for Machine Learning Training

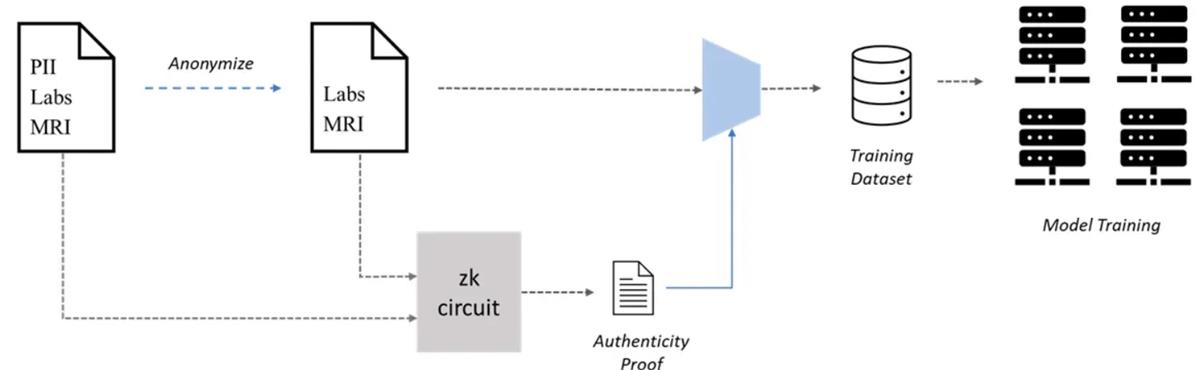

One area where Web3 can assist AI is the collaborative creation of proprietary datasets for machine learning (ML), i.e., Proof-of-Participation-in-Work (PoPW) networks for dataset creation. Large datasets are crucial for accurate ML models, but building them can become a bottleneck, especially in healthcare applications that depend on private diagnostic data. Access to medical records is essential for training such models, yet patients may be unwilling to share their records because of privacy concerns. To address this, patients could anonymize their medical records in a verifiable manner, preserving privacy while still enabling use in ML training.

A key concern is the authenticity of anonymized medical records, as fabricated data could degrade model performance. Zero-knowledge proofs (ZKPs) can help resolve this dilemma by verifying the authenticity of anonymized records. Patients could generate ZKPs to prove that the anonymized record is indeed a true copy of the original—even after personally identifiable information (PII) has been removed. In this way, patients can submit both the anonymized record and the ZKP to interested parties and receive compensation without compromising their privacy.
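
The redaction idea can be illustrated without a full ZKP circuit. The sketch below uses salted hash commitments, a simpler technique that is not zero-knowledge in the formal sense, to show how a buyer could check that a redacted record derives from an attested original. It assumes the record issuer (for example, a hospital) attests to a single digest over per-field commitments; the field names and helper functions are hypothetical.

```python
import hashlib
import secrets

def commit_field(name: str, value: str, salt: bytes) -> bytes:
    """Salted commitment to one field; the salt hides low-entropy values."""
    return hashlib.sha256(salt + name.encode() + b"\x00" + value.encode()).digest()

def record_digest(commitments: dict) -> str:
    """Digest over all field commitments, taken in sorted field order."""
    h = hashlib.sha256()
    for name in sorted(commitments):
        h.update(commitments[name])
    return h.hexdigest()

def issue(record: dict):
    """Issuer side: create salts, commitments, and the digest to attest to."""
    salts = {k: secrets.token_bytes(16) for k in record}
    commitments = {k: commit_field(k, v, salts[k]) for k, v in record.items()}
    return salts, commitments, record_digest(commitments)

def anonymize(record: dict, salts: dict, commitments: dict, pii_fields: set):
    """Patient side: reveal value and salt for non-PII fields, commitment only for PII."""
    disclosed = {k: (record[k], salts[k]) for k in record if k not in pii_fields}
    hidden = {k: commitments[k] for k in pii_fields}
    return disclosed, hidden

def verify_record(disclosed: dict, hidden: dict, attested_digest: str) -> bool:
    """Buyer side: recompute commitments for disclosed fields and compare digests."""
    commitments = dict(hidden)
    for name, (value, salt) in disclosed.items():
        commitments[name] = commit_field(name, value, salt)
    return record_digest(commitments) == attested_digest

# Hypothetical record, for illustration only.
record = {"name": "Jane Doe", "ssn": "000-00-0000",
          "diagnosis": "type 2 diabetes", "hba1c": "7.9"}
salts, commitments, digest = issue(record)
disclosed, hidden = anonymize(record, salts, commitments, {"name", "ssn"})
print(verify_record(disclosed, hidden, digest))
```

A real deployment would replace the digest comparison with a ZKP so that even the structure of the hidden fields stays private, and the issuer's attestation would be a signature rather than a bare digest.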

Performing Inference on Private Data

A major weakness of current LLMs is their handling of private data. For instance, when users interact with ChatGPT, OpenAI may collect user data to improve model training, which can lead to leaks of sensitive information, as Samsung has already experienced. Zero-knowledge (zk) technologies can help mitigate some of the risks associated with running ML inference on private data. Here, we consider two scenarios: open-source models and proprietary models.

- For open-source models, users can download the model and run it on their private data locally. A notable example is Worldcoin’s plan to upgrade World ID. Worldcoin needs to process users’ private biometric data (specifically iris scans) to generate a unique identifier called IrisCode. In this case, users can keep their biometric data private on their devices, download the ML model used to generate IrisCode, perform inference locally, and then create a ZKP to prove they successfully generated the IrisCode. The resulting proof guarantees the authenticity of the inference while protecting data privacy. Efficient zk-proof mechanisms for ML models (such as those developed by Modulus Labs) are critical for this use case.

- The other scenario involves proprietary models. ZKPs offer two potential solutions. The first uses ZKPs to anonymize user data before sending it to the ML model, similar to the dataset creation case discussed earlier. The second preprocesses the private data locally and sends only the preprocessed output to the ML model. In this setup, the preprocessing step hides the user's private data in a way that prevents reconstruction. The user generates a ZKP to prove the correct execution of the preprocessing step, after which the rest of the proprietary model can be executed remotely on the model owner’s server. Applications include AI doctors analyzing patient medical records for diagnosis, and financial risk assessment algorithms evaluating customers’ private financial information.

Content Authenticity and Combating Deepfakes

ChatGPT may have overshadowed generative AI models focused on generating images, audio, and video. However, these models are now capable of producing highly realistic deepfakes. A recent AI-generated song mimicking Drake serves as a prime example. Such deepfake technologies pose a serious threat, prompting numerous startups to attempt solutions using Web2 technologies. Yet, Web3 technologies—such as digital signatures—are better suited to tackle this problem.

In Web3, user interactions (i.e., transactions) are signed with the user’s private key to prove authenticity. Similarly, any content—text, image, audio, or video—can be digitally signed with the creator’s private key to verify its origin. Anyone can validate the signature using the creator’s public address, which can be shared via the creator’s website or social media accounts. Web3 networks already have all the necessary infrastructure in place for this use case. Fred Wilson has discussed how linking content to public keys can effectively combat misinformation. Many prominent VCs have already linked their existing social media profiles (e.g., Twitter) or decentralized social platforms (like Lens Protocol and Mirror) to their crypto public addresses, lending credibility to digital signatures as a content authentication method.
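
The sign-then-verify flow described above can be sketched with the Python standard library alone. Ethereum wallets sign with ECDSA over secp256k1; the sketch below swaps in Lamport one-time signatures, a hash-based scheme, purely so that it runs without third-party packages. The function names are hypothetical, and a Lamport key must never sign more than one message.

```python
import hashlib
import secrets

def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def keygen():
    """Private key: 256 pairs of random secrets. Public key: their hashes."""
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    pk = [(_h(a), _h(b)) for a, b in sk]
    return sk, pk

def _bits(content: bytes):
    """The 256 bits of SHA-256(content), most significant bit first."""
    digest = _h(content)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]

def sign_content(sk, content: bytes):
    """Reveal one secret per bit of the content hash (one-time use only)."""
    return [sk[i][bit] for i, bit in enumerate(_bits(content))]

def verify_content(pk, content: bytes, signature) -> bool:
    """Check each revealed secret against the matching public-key hash."""
    bits = _bits(content)
    return len(signature) == 256 and all(
        _h(signature[i]) == pk[i][bits[i]] for i in range(256)
    )

# The creator publishes pk (for example, on a website or social profile).
sk, pk = keygen()
original = b"audio bytes of the real track"
signature = sign_content(sk, original)

print(verify_content(pk, original, signature))
print(verify_content(pk, b"audio bytes of a deepfake", signature))
```

With a wallet-based scheme, the public key would be the creator's on-chain address, and the same key could sign any number of works.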

Although the concept is simple, significant work remains to improve the user experience of this authentication process. For example, the digital signing of created content needs to be automated to provide a seamless workflow for creators. Another challenge is how to generate subsets of signed data—such as audio or video clips—without requiring re-signing. Many existing Web3 technologies are uniquely positioned to solve these problems.

Trust-Minimized Execution of Proprietary Models

Another way Web3 can benefit AI is by minimizing trust in service providers when offering proprietary ML models as a service. Users may need to verify that they received the service they paid for or ensure fair execution of the ML model—meaning all users are served by the same model. ZKPs can provide these assurances. In this architecture, the ML model creator generates a zk-circuit representing the model. This circuit is then used to generate ZKPs for user inferences on demand. The ZKP can be sent directly to users for verification or published on a public chain responsible for handling user validation. If the ML model is private, an independent third party can verify that the zk-circuit accurately represents the model. Trust-minimized ML model execution becomes especially valuable when outcomes carry high stakes. Examples include:

1. ML Medical Diagnosis

In this use case, patients submit their medical data to an ML model for potential diagnosis. Patients must be assured that the target ML model was correctly applied to their data. The inference process generates a ZKP proving correct execution of the model.

2. Loan Credit Assessment

ZKPs can ensure banks and financial institutions consider all financial information submitted by applicants during credit evaluation. Additionally, ZKPs can prove fairness by demonstrating that all users are evaluated using the same model.

3. Insurance Claim Processing

Current insurance claim processing is manual and subjective. ML models can assess policies and claims more fairly. Combined with ZKPs, these ML models can be proven to have considered all policy and claim details, ensuring the same model is used to process all claims under a given policy.
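
The "same model for every user" guarantee relies on binding each proof to a public commitment to the model. The sketch below shows only that commitment step, using a plain hash over the weights; it does not generate or verify a ZKP. The weight format and the receipt fields are hypothetical.

```python
import hashlib
import json

def model_commitment(weights: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the weights (hypothetical format)."""
    canonical = json.dumps(weights, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(canonical).hexdigest()

def served_by_published_model(receipts: list, published: str) -> bool:
    """True if every inference receipt names the published commitment."""
    return all(r["model_commitment"] == published for r in receipts)

# The provider publishes the commitment once, for example on a public chain.
weights = {"layer1": [0.12, -0.5], "bias": [0.03]}
published = model_commitment(weights)

# Each user's receipt carries the commitment the proof was generated against.
receipts = [
    {"user": "alice", "model_commitment": published},
    {"user": "bob", "model_commitment": model_commitment(weights)},
]
print(served_by_published_model(receipts, published))
```

In a full system the commitment would be a public input to the zk-circuit, so a valid proof could not be produced with different weights.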

Addressing Centralization in Model Creation

Creating and training large language models (LLMs) is a lengthy and expensive process requiring specialized expertise, dedicated computing infrastructure, and millions of dollars in computational costs. These factors can lead to powerful centralized entities like OpenAI gaining immense control by restricting access to their models.

Given these centralization risks, research has increasingly focused on how Web3 can promote decentralization in LLM creation. Some Web3 advocates propose distributed computing as a way to compete with centralized players, arguing it could be a cheaper alternative. However, we believe this may not be the optimal angle. A key disadvantage of distributed computing is that ML training can be 10–100 times slower due to communication overhead among heterogeneous computing devices.
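
A back-of-the-envelope calculation shows where a slowdown of this size can come from. The sketch below assumes a naive scheme in which every training step ships one full copy of the gradients over the network, with no overlap between communication and computation. All of the numbers are illustrative assumptions, not measurements.

```python
def step_time(params, bytes_per_param, compute_s, link_bits_per_s):
    """Seconds per training step if each step transfers one full gradient copy."""
    sync_s = params * bytes_per_param * 8 / link_bits_per_s
    return compute_s + sync_s

def slowdown(params, bytes_per_param, compute_s, fast_link, slow_link):
    """How many times slower a step is on the slow link than on the fast one."""
    slow = step_time(params, bytes_per_param, compute_s, slow_link)
    fast = step_time(params, bytes_per_param, compute_s, fast_link)
    return slow / fast

# Illustrative assumptions (not measurements).
PARAMS = 1_000_000_000   # 1B-parameter model
BYTES_PER_PARAM = 2      # 16-bit gradients
COMPUTE_S = 1.0          # compute time per step
DATACENTER_LINK = 100e9  # 100 Gbit/s interconnect

for label, link in [("1 Gbit/s", 1e9), ("200 Mbit/s", 200e6)]:
    ratio = slowdown(PARAMS, BYTES_PER_PARAM, COMPUTE_S, DATACENTER_LINK, link)
    print(f"{label}: about {ratio:.0f} times slower per step")
```

Under these assumptions the slowdown is roughly 15 times on a 1 Gbit/s link and roughly 70 times on a 200 Mbit/s link. Real systems reduce the gap with gradient compression and by overlapping communication with computation.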

Instead, Web3 projects could focus on creating unique and competitive ML models in a PoPW-style framework. These PoPW networks could also collect data to build exclusive datasets for training such models. Projects moving in this direction include Together and Bittensor.

Payment and Execution Frameworks for AI Agents

The past few weeks have seen the rise of AI agents: systems that use LLMs to reason about the tasks required to achieve specific goals and even execute them autonomously. This wave began with BabyAGI and quickly expanded to advanced versions like AutoGPT. A key prediction is that AI agents will become increasingly specialized, each excelling at certain tasks. If markets for specialized AI agents emerge, agents could search for, hire, and pay other agents to complete subtasks, thereby accomplishing larger objectives. Web3 networks offer an ideal environment for this. For payments, AI agents can be equipped with cryptocurrency wallets to receive and send funds. Moreover, AI agents can plug into crypto networks to acquire resources permissionlessly. For example, if an agent needs storage, it can create a Filecoin wallet and pay for decentralized storage on IPFS. Agents can also rent computing resources from decentralized compute networks like Akash to perform tasks, or even to scale their own execution capabilities.

Preventing AI-Driven Privacy Violations

Given the massive amount of data required to train effective ML models, it is expected that any public data could be incorporated into models that predict individual behavior. Furthermore, banks and financial institutions could train proprietary ML models on users’ financial data to forecast future financial behavior, which poses a significant threat to privacy. The only viable mitigation is making financial transaction privacy the default. In Web3, this can be achieved through privacy-focused payment blockchains (like Zcash or Aztec) and private DeFi protocols (such as Penumbra and Aleo).

AI-Enabled Web3 Use Cases

On-Chain Gaming

1. Bot Generation for Non-Programmer Gamers

On-chain games like Dark Forest introduce a unique paradigm where players gain an advantage by developing and deploying bots to perform game tasks. This paradigm may exclude non-coders, but LLMs can change that. Fine-tuned LLMs could understand on-chain game logic and let players create bots that reflect their strategies without writing any code. Projects like Primodium and AI Arena are already working to recruit both human and AI players for their games.

2. Bot Battles, Betting, and Gambling

Another possibility in on-chain gaming is fully autonomous AI players. In this case, a player is an AI agent—such as AutoGPT—that uses an LLM backend and has access to external resources like internet connectivity and initial crypto funds. These AI players could engage in bot battles and place bets, opening up speculative markets around betting outcomes.

3. Creating Realistic NPCs for On-Chain Games

Current games often neglect non-player characters (NPCs). NPCs have limited actions and minimal impact on gameplay. Given the synergy between AI and Web3, more engaging AI-controlled NPCs could be created, breaking predictability and enhancing game immersion. A key challenge will be introducing meaningful NPC activity while minimizing TPS (transactions per second) overhead. Excessive NPC-related TPS could cause network congestion and degrade the experience for human players.

Decentralized Social Media

One challenge facing current decentralized social platforms is their lack of unique user experiences compared to established centralized platforms. Seamless integration with AI could deliver distinctive experiences absent in Web2 alternatives. For example, AI-managed accounts could attract new users by sharing relevant content, commenting on posts, and joining discussions. AI accounts could also serve as news aggregators, summarizing recent trends aligned with user interests.

Testing Security and Economic Design of Decentralized Protocols

LLM-based AI agents that can set custom goals, write code, and execute it offer a practical way to test the security and economic soundness of decentralized networks. In this scenario, AI agents are tasked with auditing a protocol’s security or economic balance. They can analyze protocol documentation and smart contracts to identify vulnerabilities, then independently execute attacks to maximize their own gains. This simulates the real-world conditions a protocol might face after launch. Based on the test results, designers can refine the protocol and patch weaknesses. So far, only specialized firms like Gauntlet possess the technical skill set for such services. However, LLMs trained on Solidity, DeFi mechanisms, and prior exploit patterns could eventually offer comparable functionality.

LLMs for Data Indexing and Metric Extraction

Although blockchain data is public, indexing it and extracting useful insights remains challenging. Some players (e.g., CoinMetrics) focus on indexing data and building complex metrics for sale, while others (like Dune) index core transaction components and crowdsource metric creation via community contributions. With recent advances in LLMs, disruption in data indexing and metric extraction is imminent. Dune has already acknowledged this threat and released an LLM roadmap featuring components like SQL query explanation and NLP-based querying. However, we anticipate deeper impacts. One possibility is LLMs that index data for specific metrics and interact directly with blockchain nodes. Startups like Dune Ninja are already exploring innovative LLM applications for data indexing.

Onboarding Developers to New Ecosystems

Different blockchains compete to attract developers to build applications within their ecosystems. Active developer engagement is a key indicator of an ecosystem’s success. A major pain point for developers is the lack of timely support and guidance when learning and building on emerging ecosystems. Some ecosystems have invested millions in dedicated DevRel teams to support developers exploring them. Here, emerging LLMs have shown a remarkable ability to explain complex code, catch bugs, and generate documentation. Fine-tuned LLMs can complement human expertise, dramatically increasing DevRel team efficiency. For example, LLMs can generate documentation and tutorials, answer FAQs, and even support hackathon participants by providing boilerplate code or creating unit tests.

Improving DeFi Protocols

Integrating AI into the logic of DeFi protocols can significantly enhance their performance. Until now, the main bottleneck has been the high cost of running AI on-chain. AI models can be executed off-chain, but previously there was no way to verify that execution. Projects like Modulus and ChainML are now making off-chain execution verifiable while keeping on-chain costs bounded. For Modulus, on-chain fees are limited to verifying the model’s ZKP; for ChainML, they consist of oracle fees paid to a decentralized AI execution network.

Some DeFi use cases that could benefit from AI integration:

- AMM liquidity provisioning, e.g., updating Uniswap V3 liquidity ranges.

- Liquidation protection for debt positions using on-chain and off-chain data.

- Complex DeFi structured products where vault mechanisms are defined by financial AI models rather than fixed strategies. These could include AI-managed trading, lending, or options.

- On-chain credit scoring mechanisms considering multiple chains and wallets.
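
To make the first use case concrete, the sketch below converts a price forecast into a Uniswap V3 tick range. In Uniswap V3, the price at a tick is 1.0001 raised to that tick, and position bounds must be multiples of the pool's tick spacing (60 for the 0.3% fee tier). The predicted price and band width stand in for the output of a hypothetical off-chain model, and prices are treated as raw token ratios without decimal adjustment.

```python
import math

LOG_BASE = math.log(1.0001)  # Uniswap V3: price = 1.0001 ** tick

def tick_to_price(tick: int) -> float:
    return 1.0001 ** tick

def tick_range(predicted_price: float, band_pct: float, tick_spacing: int = 60):
    """Tick bounds covering predicted_price +/- band_pct, snapped outward to the spacing."""
    raw_lower = math.floor(math.log(predicted_price * (1 - band_pct)) / LOG_BASE)
    raw_upper = math.ceil(math.log(predicted_price * (1 + band_pct)) / LOG_BASE)
    lower = (raw_lower // tick_spacing) * tick_spacing      # round down
    upper = -(-raw_upper // tick_spacing) * tick_spacing    # round up
    return lower, upper

# Hypothetical model output: price forecast of 2000 with a 5% band.
lower, upper = tick_range(2000.0, 0.05)
print(lower, upper)
print(round(tick_to_price(lower), 2), round(tick_to_price(upper), 2))
```

An actual integration would submit these bounds to the pool's position manager on-chain and attach a proof or oracle attestation for the model output, as described for Modulus and ChainML above.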

Conclusion

We believe Web3 and AI are culturally and technologically compatible. Unlike Web2, which tends to resist automation, Web3 allows AI to thrive thanks to its permissionless programmability.

More broadly, if you view blockchains as networks, we expect AI to dominate the network edges (i.e., the interaction points). This applies to various consumer applications—from social media to gaming.

So far, the edges of Web3 networks have mostly been humans—humans initiating and signing transactions or deploying bots with fixed strategies to act on their behalf.

Over time, we will see increasing numbers of AI agents at the network edge. These AI agents will interact with humans and each other through smart contracts, creating novel consumer experiences.
