Microsoft and OpenAI “Part Ways”: The Era of Model Exclusivity Has Ended

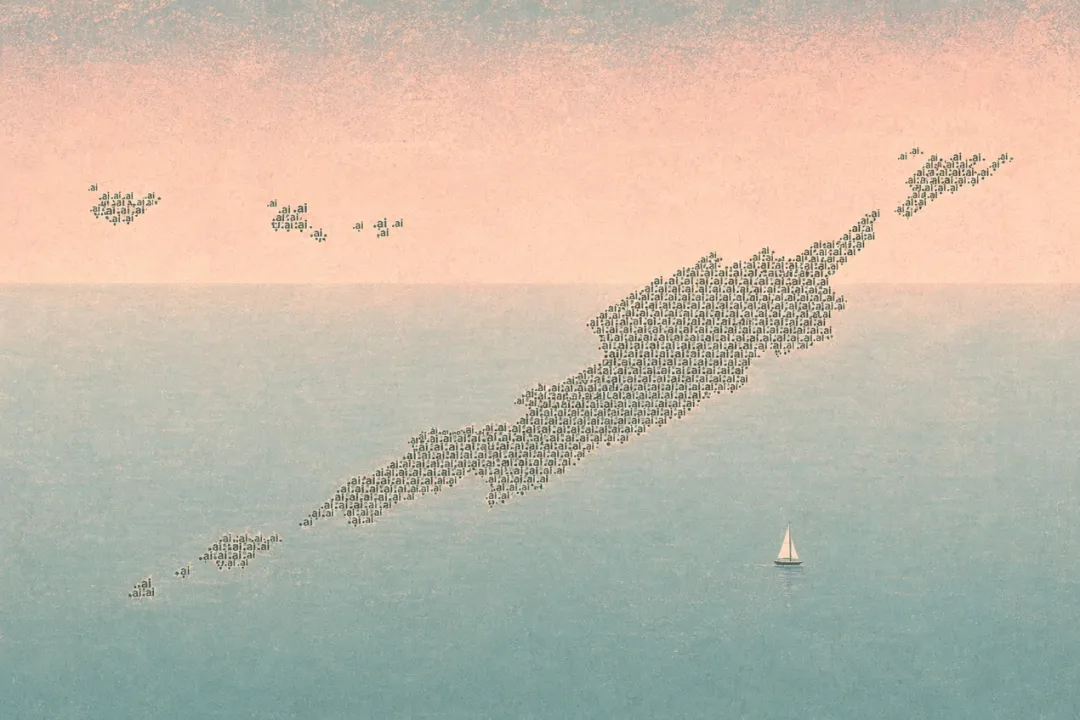

In this AI arms race, the ultimate winner may be neither the company with the best model nor the one with the most funding.

Author: Ada, TechFlow

Recently, Microsoft and OpenAI jointly announced a revision to their partnership agreement. The exclusive cloud restriction has been lifted; IP licensing has been downgraded to non-exclusive; and the AGI “escape clause” has been removed.

After the news broke, nearly every Chinese-language media outlet asked the same question: who won? But that isn’t the core issue.

What this “breakup” truly buries is an entire era of competitive logic in the AI industry—the idea that whoever secured the best model would win.

Under the new rules of the game, the stakes have shifted—from models to something else entirely.

Models Are No Longer Scarce

First, consider a few numbers.

OpenAI’s publicly disclosed infrastructure commitments total over $250 billion with Microsoft Azure, $300 billion with Oracle’s Stargate project, and $138 billion with Amazon AWS (including the original $38 billion plus an additional $100 billion over eight years).

That adds up to more than $680 billion—while OpenAI’s annualized revenue stands at roughly $25 billion.

A company generating $25 billion per year has signed compute bills exceeding $680 billion. OpenAI has effectively sold itself to compute providers—it is now an anchor customer for the three major cloud vendors.
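As a rough check on the figures above, the sketch below sums the three commitments as quoted and compares the total with annualized revenue. The dollar amounts come from the article; the variable names are illustrative, and because the Azure figure is described as “over $250 billion,” the total is best read as a floor.

```python
# Figures as quoted in the article, in billions of US dollars.
commitments_bn = {
    "Microsoft Azure": 250,      # quoted as "over $250 billion", so a lower bound
    "Oracle (Stargate)": 300,
    "Amazon AWS": 38 + 100,      # original deal plus the additional eight-year commitment
}
annualized_revenue_bn = 25       # "roughly $25 billion"

total_bn = sum(commitments_bn.values())
years_of_revenue = total_bn / annualized_revenue_bn

print(f"Total commitments: ${total_bn}B")
print(f"Equivalent to {years_of_revenue:.1f} years of current annualized revenue")
```

On these numbers the commitments come to $688 billion, or about 27.5 years of revenue at the current run rate.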

The same holds true for Anthropic. Last week, it finalized an expanded partnership with Amazon, committing to spend over $100 billion on AWS over the next decade in exchange for 5 gigawatts of compute capacity. Four days later, it signed a 3.5-gigawatt TPU capacity agreement with Google and Broadcom, expected to come online in 2027. Add to that Google’s recent announcement of up to $40 billion in investment—and Anthropic is now locked in by two cloud giants.

The two most advanced AI companies are both mortgaging their futures for compute.

What did Microsoft’s $1 billion investment in OpenAI back in 2019 actually buy?

Exclusive distribution rights for its models. Azure held sole access to the GPT series—if customers on other clouds wanted to use OpenAI’s models, they had to migrate to Azure.

That was the era of “model scarcity.” GPT was the only viable large language model on the market—owning it conferred pricing power.

But today, in 2026, models are no longer scarce.

Claude from Anthropic, Gemini from Google, and Meta’s open-source Llama—all run across multiple cloud platforms. Ramp’s enterprise spending data shows that 79% of enterprises paying for Anthropic also pay for OpenAI. Enterprise customers simply do not want to be locked into a single platform.

OpenAI sees this clearly too. Denise Dresser, its Chief Revenue Officer, wrote plainly in an internal memo in March: “Our partnership with Microsoft laid the foundation for our growth—but it also constrained our ability to meet real-world enterprise customer needs.”

In other words, exclusive binding used to be an advantage; now it’s a liability.

The model layer is rapidly commoditizing. When all mainstream models can run on all mainstream clouds, the value of exclusive model distribution rights approaches zero.

So what’s gaining value? Compute.

The data makes it obvious. Within two months, Amazon struck deals with OpenAI and Anthropic that each carry AWS spending commitments of more than $100 billion. Google announced up to $40 billion of investment in Anthropic while continuing to fund its own Gemini. Microsoft loosened its grip on OpenAI while simultaneously tasking Mustafa Suleyman with leading an independent superintelligence research initiative.

At the heart of every deal lie compute, chips, and data centers; models have become the freebie.

Electricity Is the New Oil

Returning to Microsoft and OpenAI’s revised agreement:

On the surface, OpenAI gains freedom to sell its models on AWS and Google Cloud. Microsoft loses exclusivity but retains a 27% equity stake and a non-exclusive IP license through 2032.

Switching from exclusive to non-exclusive sounds like a win for OpenAI. But the $250 billion Azure procurement commitment remains intact, and OpenAI products still launch first on Azure unless Microsoft chooses not to support them. This isn’t decoupling; it’s swapping a chain for a pipeline. Previously, contracts bound OpenAI; now, infrastructure does.

OpenAI’s current position: It has signed compute contracts worth $250 billion with Azure, $138 billion with AWS, and $300 billion with Oracle—each spanning multiple years and tied to specific chip architectures and deployment schemes. Technically, it has achieved “multi-cloud freedom”; financially, it is simultaneously locked in by three cloud vendors. It looks less like liberation and more like trading one landlord for three.

Zoom out further.

In 2023, ChatGPT burst onto the scene—and everyone declared: “Models are the new oil. Whoever controls the best model controls the future.”

Two and a half years later, oil has turned into tap water. Models remain important—but they’re no longer scarce. What’s truly scarce is the electricity, chips, and physical space required to run them.

This mirrors the early evolution of the internet. In the 1990s, everyone raced to control content and traffic gateways. Ultimately, the winners were the pipe-builders: Cisco, AT&T, AWS.

The AI industry is undergoing the same inflection point now. Model companies thought they were the protagonists—only to realize, after signing compute contracts, that they’ve become long-term customers of cloud vendors. Those hundred-billion-dollar contracts aren’t empowerment agreements—they’re lock-in agreements.

What was Microsoft’s cost for giving up OpenAI’s exclusive distribution rights? A $250 billion Azure revenue commitment.

From a business standpoint, did Microsoft lose?

According to CNBC, Barclays analysts view this as marginally positive for Microsoft—it will no longer bear the full capital burden of building OpenAI’s data centers, freeing up funds for Copilot and other cloud initiatives.

Microsoft traded “exclusivity” for “guaranteed revenue,” shifting from venture-capital logic to utility-company logic.

The entire AI industry is undergoing this shift. Frontier-model companies burn cash faster and faster; cloud vendors receive ever-thicker invoices. Model-company valuations swing wildly, while cloud vendors’ cash flows grow steadily.

Axios reported a telling detail last week: Just the week before, OpenAI had written to investors touting its compute scale as its key competitive advantage over Anthropic—and accusing Anthropic of making a “strategic mistake” by failing to secure sufficient compute.

Days later, Anthropic signed two new compute agreements totaling over 8 gigawatts.

This is the AI race in 2026: Not who builds the smartest model—but who secures the most electricity.

And amid this restructuring, there’s one rarely discussed beneficiary: Amazon.

Amazon now holds significant equity stakes in both Anthropic and OpenAI. The two most advanced AI labs have each pledged to spend over $100 billion on AWS.

Invest $50 billion in OpenAI—and earn back $138 billion in AWS revenue. Invest $33 billion in Anthropic—and earn back over $100 billion in AWS revenue.
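The ratio behind that comparison can be sketched as follows. The investment and commitment figures are the ones quoted above; the names are illustrative. Note the assumptions: the commitments are multi-year AWS revenue rather than profit, and Anthropic’s “over $100 billion” is treated as a floor.

```python
# Amazon's equity investments versus the AWS spending each lab has committed,
# in billions of US dollars, as quoted in the article.
deals = {
    "OpenAI": {"invested_bn": 50, "aws_commitment_bn": 138},
    "Anthropic": {"invested_bn": 33, "aws_commitment_bn": 100},  # "over $100 billion": lower bound
}


def commitment_multiple(deal: dict) -> float:
    """Committed AWS revenue per dollar Amazon invested (revenue, not profit)."""
    return deal["aws_commitment_bn"] / deal["invested_bn"]


multiples = {name: round(commitment_multiple(deal), 2) for name, deal in deals.items()}

for name, multiple in multiples.items():
    print(f"{name}: {multiple}x committed AWS revenue per dollar invested")
```

By this measure, each invested dollar corresponds to roughly $2.76 of committed AWS spending from OpenAI and at least $3.03 from Anthropic.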

Amazon doesn’t care who wins. It cares only that, no matter who wins, the electricity bill gets sent to its address.

The Truth Behind the Contracts

The day after Microsoft and OpenAI announced their “uncoupling,” the Wall Street Journal published a report stating that OpenAI missed its internal revenue targets for multiple consecutive months in Q1 2026—and user growth fell short of expectations.

CFO Sarah Friar internally warned that if revenue growth doesn’t accelerate, the company may struggle to fulfill its future compute obligations.

The reality is stark: Annualized revenue remains stuck at roughly $25 billion, yet compute contracts already exceed $680 billion.

The market’s reaction was more honest than any commentary. On the day of the WSJ report, Oracle’s stock dropped 7.7%, CoreWeave fell 7.4%, SoftBank plunged nearly 10% in Tokyo, and NVIDIA, AMD, and Broadcom declined 2%–6%. Investors weren’t selling OpenAI—they were selling every company banking on OpenAI to deliver on those compute bills.

John Belton, portfolio manager at Gabelli Funds, told CNBC that OpenAI’s growth visibly slowed from late 2025 into early 2026, with market share eroded by Anthropic and Gemini. With so many compute contracts on its books, OpenAI may simply be unable to pay its bills.

This is the real picture behind the “end of the exclusive era.”

OpenAI gained the freedom to sell its models across three clouds—but paid for it by being contractually bound to all three cloud vendors’ compute commitments. It has gone from Microsoft’s exclusive partner to a long-term paying customer of Azure, AWS, and Oracle—each contract multi-year, each tied to specific chip architectures and deployment plans, each predicated on sustained, rapid revenue growth.

OpenAI thought it had gained bargaining power—but in 2026’s supply-constrained compute market, bargaining power lies not with model companies. It lies with whoever controls electricity, chips, and physical space. The hundred-billion-dollar contracts signed by model companies aren’t procurement agreements—they’re indenture contracts. Once signed, relocation costs become prohibitive. When a model has trained for two years on Trainium chips, migrating to another chip architecture requires re-optimizing the entire training pipeline—it’s far more complex than switching cloud accounts.

OpenAI and Microsoft’s “breakup” may look like a declaration of independence for the AI industry. The fine print says otherwise: the $250 billion Azure commitment remains, the CFO is warning internally that the company may struggle to meet its compute obligations, revenue has repeatedly fallen short, competitors are seizing market share, and every proposed solution hinges on revenue growing elevenfold by 2030.
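To put “elevenfold by 2030” in annual terms, the sketch below computes the constant yearly growth rate that compounds to an 11x increase. The article does not state the base year, so the four- and five-year horizons are assumptions chosen for illustration, as is the function name.

```python
def implied_annual_growth(multiple: float, years: int) -> float:
    """Constant yearly growth rate that compounds to `multiple` over `years`."""
    return multiple ** (1 / years) - 1


# Horizons are assumptions: the article gives the end year (2030) but not the base year.
for years in (4, 5):
    rate = implied_annual_growth(11, years)
    print(f"{years} years: about {rate:.0%} per year")
```

Under either assumption, revenue would need to grow by well over half every year, for several years in a row, to reach that target.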

The pipe-builders never discuss ideals. They talk only about contract terms, delivery timelines, and penalty clauses.

The ultimate winner of this AI arms race may be neither the company with the best model nor the one with the most funding—but the infrastructure providers who collect deposits, sign long-term contracts, and collect rent regardless of who wins. Like gold rush stories that repeat endlessly, the ones who ultimately get rich are always the ones selling shovels.
