Is it their shared opposition to the Pentagon that brings GPT and Claude together?


This time, they held hands—but probably for only a few hours.

Author: Kuli, TechFlow

A photo went viral online a few days ago.

India hosted an AI summit, where Prime Minister Modi stood on stage flanked by a row of Silicon Valley executives. During the group photo, Modi grabbed the hand of the person beside him and raised it overhead; others followed suit, linking hands—a display of unity.

But two people refused to hold hands.

The CEOs of OpenAI and Anthropic—the companies behind ChatGPT and Claude—stood side by side, each raising a fist instead.

No hand-holding. No eye contact. Like two rivals reluctantly seated together by their teacher.

These two firms have been locked in fierce competition for years. Claude was built by a team that left OpenAI. They’ve battled over users, enterprise clients, and funding. During this year’s Super Bowl, Anthropic even bought ad space specifically to mock ChatGPT’s upcoming ads.

So refusing to hold hands? Entirely normal.

Yet today, they did hold hands—because of the Pentagon.

Here’s what happened.

Anthropic—the company behind Claude—signed a contract with the U.S. Department of Defense last year worth up to $200 million. Claude became the first AI model deployed on the U.S. military’s classified networks, assisting with intelligence analysis and mission planning.

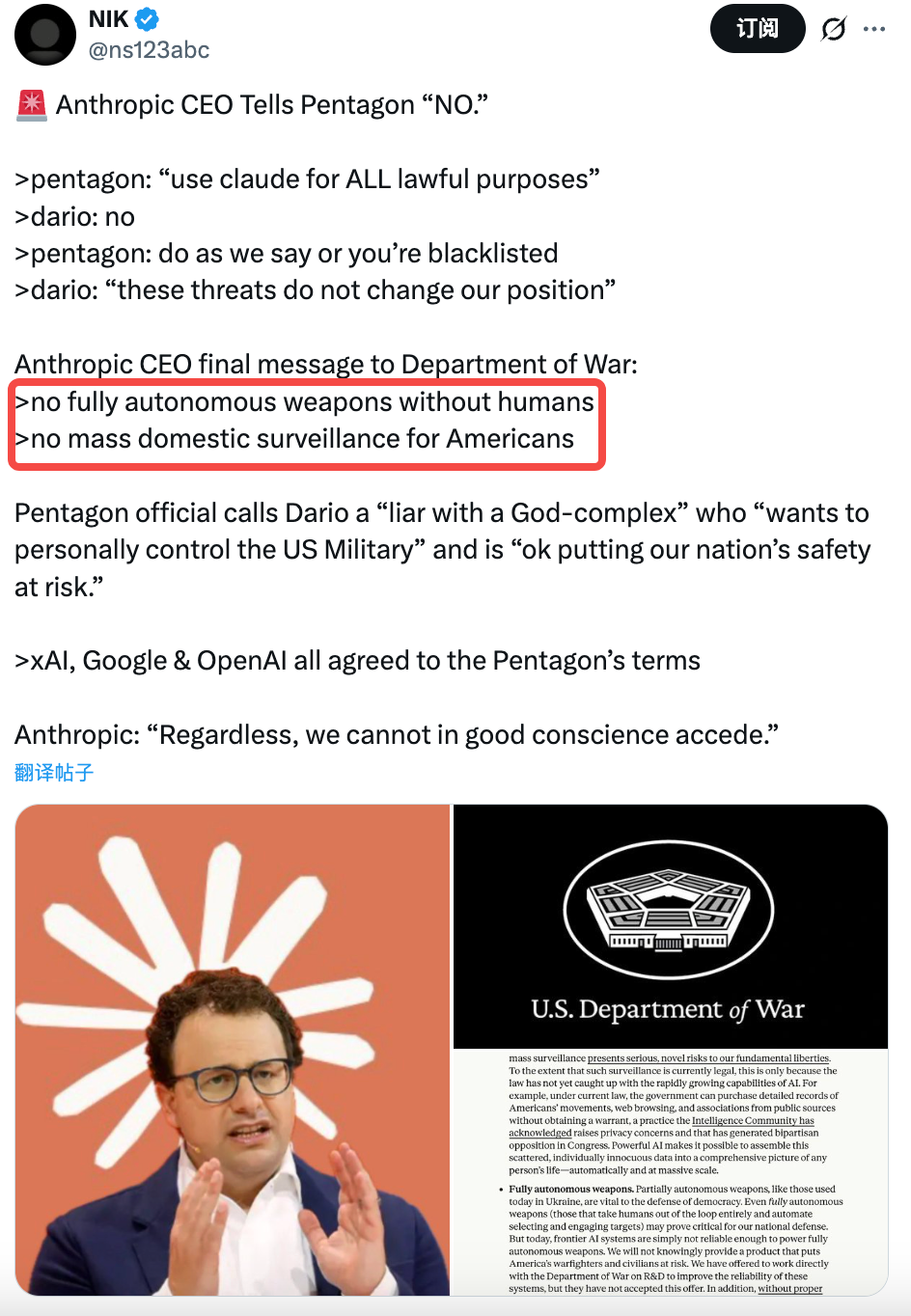

But Anthropic drew two red lines in the contract:

Claude must not be used for mass surveillance of U.S. citizens, nor for autonomous weapons systems operating without human involvement.

The Pentagon rejected those conditions.

Their demand came down to two words: “No restrictions.” Once you buy a tool, you should be free to use it however you like, and a tech company has no right to tell the U.S. military what it can or cannot do.

Last Tuesday, Secretary of Defense Hegseth delivered Anthropic’s CEO an ultimatum in person: agree by Friday at 5:01 p.m., or face consequences.

Anthropic declined.

Their CEO issued a public statement saying: “We recognize AI’s vital importance to U.S. national defense—but in rare cases, AI can undermine, rather than uphold, democratic values. We cannot, in good conscience, accept this demand.”

Emil Michael, the Pentagon’s lead negotiator and Under Secretary of Defense, then took to social media to call the CEO a “fraud,” accuse him of having a “God complex,” and claim he was playing fast and loose with national security.

A Brief Handshake

Then something unexpected happened.

Over 400 employees from OpenAI and Google signed a joint open letter titled “We Will Not Be Divided.”

The letter stated that the Pentagon was approaching AI companies one by one, attempting to pressure them into accepting the very terms Anthropic had refused—using fear to divide and conquer.

OpenAI’s CEO also sent an internal memo to all staff affirming that OpenAI shares Anthropic’s red lines:

No mass surveillance. No autonomous lethal weapons.

The two companies that, just days earlier, refused to hold hands suddenly found themselves standing shoulder-to-shoulder—against the Pentagon.

Yet this unity may have lasted only a few hours.

At 5:01 p.m. on Friday, the Pentagon’s deadline expired. Anthropic refused to sign.

A U.S. tech company valued at $380 billion chose to forfeit a contract worth up to $200 million rather than sign. In the past, such a dispute might have ended with simple contract termination and a new supplier. This time, Washington’s response went far beyond a commercial dispute.

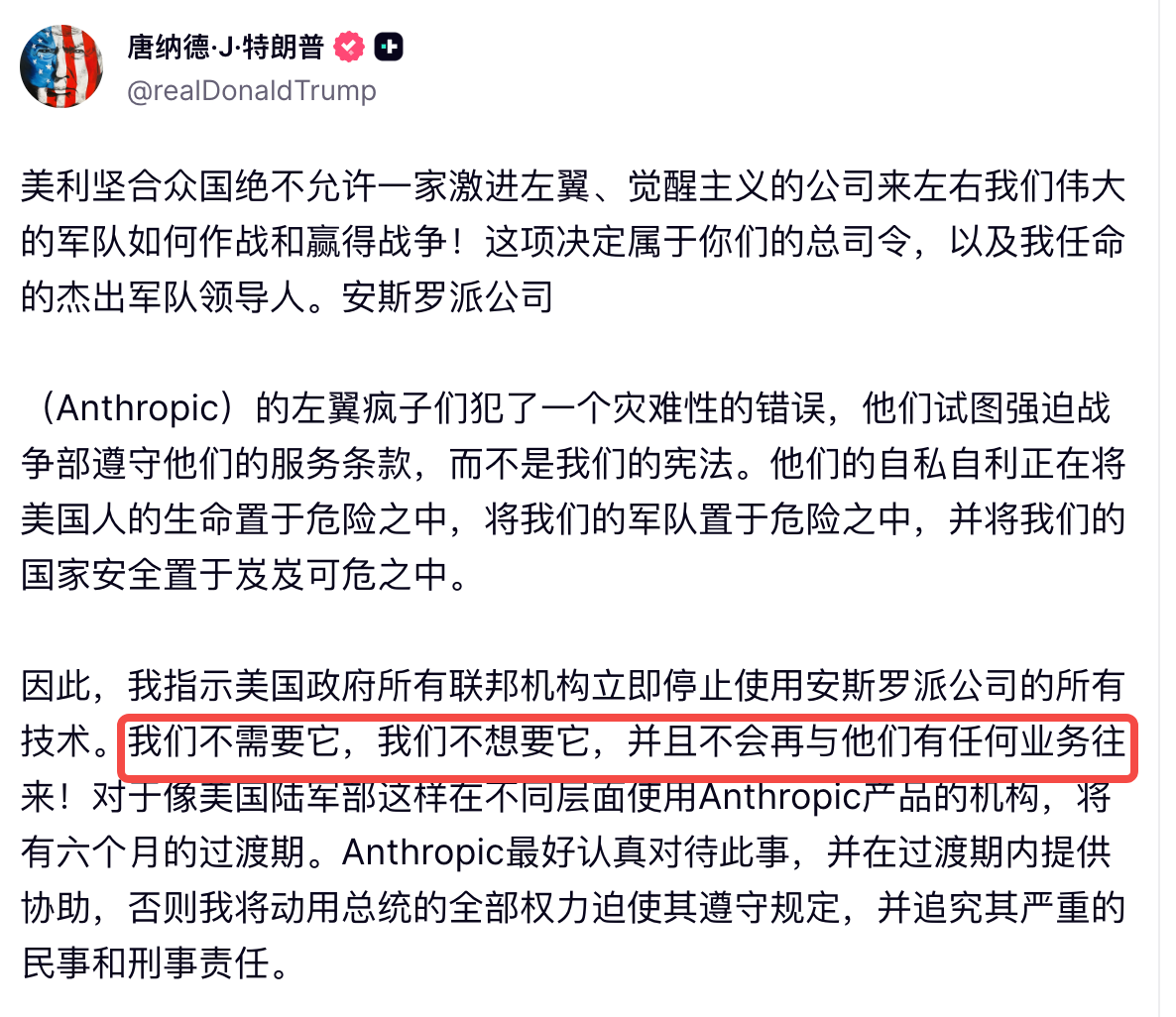

About an hour later, Donald Trump posted on Truth Social, branding Anthropic “left-wing lunatics” who sought to override the Constitution and jeopardize American soldiers’ lives.

He ordered all federal agencies to immediately stop using Anthropic’s technology.

Shortly thereafter, Secretary of Defense Hegseth designated Anthropic a “supply chain security risk”—a label normally reserved for companies like Huawei. The message was unambiguous: any contractor doing business with the U.S. military is now barred from using Anthropic’s products.

Anthropic says it will sue.

And that same evening, OpenAI—which had just stood shoulder-to-shoulder with Anthropic—signed its own agreement with the Pentagon.

An Ideological Divide

What did OpenAI get?

The slot vacated by Claude: becoming the AI provider for the U.S. military’s classified networks. Still, OpenAI presented the Pentagon with three conditions: no mass surveillance, no autonomous weapons, and human oversight required for high-risk decisions.

The Pentagon agreed.

You read that right. Conditions that Anthropic had negotiated over for weeks without success were accepted by the Pentagon within days once another company proposed them.

Of course, the two proposals weren’t identical.

Anthropic demanded an extra layer. It argued that current laws lag behind AI’s capabilities: for instance, location data, browsing history, and social media activity can be legally purchased and then aggregated with AI, achieving de facto surveillance even though each individual step remains lawful.

Anthropic insisted that merely writing “no surveillance” isn’t enough—the loophole must be closed. OpenAI didn’t insist on this point and accepted the Pentagon’s position that existing laws are sufficient.

But if you think this is merely a technical disagreement over clauses, you’re being naive. From the outset, these negotiations were never just about contractual terms.

David Sacks, the White House’s AI “czar,” has publicly criticized Anthropic for building “woke AI”—prioritizing ideology and political correctness. Senior Pentagon officials told reporters that Dario Amodei’s stance is ideologically driven: “We know exactly who we’re dealing with.”

Elon Musk’s xAI—Anthropic’s direct competitor—has launched repeated attacks against Anthropic on X this week, accusing the company of “hating Western civilization.”

Anthropic’s CEO skipped Trump’s inauguration last year. OpenAI’s CEO attended.

Making an Example

Let’s recap what happened.

The same principles. The same red lines. Yet Anthropic—by demanding one additional safeguard, aligning itself with the wrong political camp, and adopting the wrong posture—was branded a U.S. national security threat on par with Huawei.

OpenAI, asking for less and cultivating better relationships, walked away with the contract. Was this a triumph of principle—or a demonstration of principle’s price tag?

This isn’t the first time a Pentagon contract has faced resistance.

In 2018, over 4,000 Google employees signed a petition, and more than a dozen resigned in protest against the company’s involvement in Project Maven—a Pentagon initiative using AI to analyze drone footage and help identify targets faster.

Google ultimately withdrew by not renewing the contract. The employees won.

Eight years later, the same controversy has resurfaced—but the rules have completely changed. A U.S. company says it’s willing to work with the military—but draws two hard lines. The U.S. government responds by ejecting it from the entire federal ecosystem.

And the “supply chain security risk” designation carries consequences far beyond losing a $200 million contract.

Anthropic’s annual revenue this year is roughly $14 billion, so the $200 million contract amounts to about 1.4 percent of it. But the designation means any company doing business with the U.S. military is now prohibited from using Claude.

Those companies don’t need to endorse the Pentagon’s stance—they only need to run a basic risk assessment: keep using Claude, and risk losing government contracts; switch to another model, and nothing changes.

The choice is easy. That’s the real signal here.

Whether Anthropic survives doesn’t matter. What matters is whether the next company dares to stand firm. It will look at this outcome, weigh the cost of upholding principle—and make a highly rational decision.

Go back to that photo from India: everyone holding hands, arms raised high—except those two, each clenching a fist.

Perhaps that’s the norm.

AI companies may share the same principles—but they don’t always hold hands.
