"Clear-headed in the face of reality": Musk — Compared to the AI tsunami, DOGE is nothing

TechFlow Selected

"Humanity stands at the starting point of an 'intelligence big bang,' and a 'thousand-foot AI tsunami' is about to sweep across."

Author: Long Yue, Wall Street Insights

Recently, U.S. startup accelerator Y Combinator (YC) held its inaugural AI Startup School in San Francisco, inviting several heavyweight figures from the AI industry, including Elon Musk and OpenAI CEO Sam Altman.

Musk, who recently concluded a 130-day tenure as a special government employee with the U.S. "Department of Government Efficiency" (DOGE), candidly described this experience during an interview as an “interesting side quest,” but one that pales in significance compared to the impending AI revolution. He likened his work at the efficiency department to “cleaning up a beach,” while the coming AI wave is a “thousand-foot-tall tsunami.”

"Fixing government is like… the beach is dirty, with needles, feces, and garbage. But then there’s also a thousand-foot-high wall of water—that’s the AI tsunami. If you’re facing a thousand-foot-high tsunami, how meaningful is cleaning the beach? Not very."

Musk predicted that digital superintelligence could arrive this year or next, surpassing human intelligence, and that future humanoid robots could outnumber humans by a factor of 5 to 10. He boldly forecast that an AI-driven economy would be thousands or even millions of times larger than today's, reducing humanity’s share of total intelligence to less than 1%. Key highlights from his remarks:

- Musk announced he left DOGE on May 28, ending his 130-day government role, stating he was returning to his “main mission”;

- He compared government efficiency work to “beach cleanup,” while AI is the “thousand-foot tsunami”—rendering the former insignificant when the latter looms;

- Predicted digital superintelligence will likely emerge this year or next, declaring, “If not this year, definitely next year”;

- Forecasts humanoid robot populations will far exceed humans, possibly reaching 5x or even 10x human numbers;

- Anticipates the AI-driven economy will be thousands or millions of times larger than today’s, advancing civilization toward Kardashev Type II (stellar-scale energy use), with human intelligence comprising less than 1% of total intelligence;

- Stressed that “a rigorous commitment to truth” is the most critical foundation for AI safety—forcing AI to believe falsehoods is extremely dangerous;

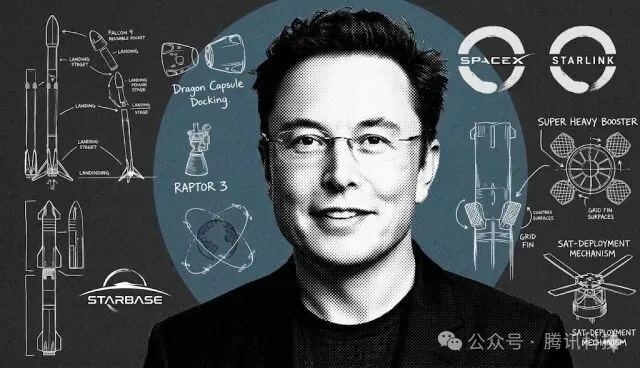

- Recalled early SpaceX days: fourth rocket launch succeeded after three failures—a make-or-break moment; Tesla secured funding just before bankruptcy in 2008.

DOGE Mission Complete: Too Much Political Noise, Back to the 'Main Quest'

In the interview, Musk admitted his time in Washington D.C. gave him deep insight into what he called the “terrible signal-to-noise ratio in politics.” He described his D.C. work as an “interesting side quest,” but ultimately decided it was time to return to his main mission: “building technology, which is what I love doing.”

The billionaire explained his fundamental reason for leaving public office: “Fixing government is like cleaning a beach—the beach is dirty, full of needles, feces, and trash. But at the same time, there’s this thousand-foot-high wall of water—that’s the AI tsunami. If a thousand-foot-high tsunami is coming, how important is cleaning the beach? Not very.”

Superintelligence Is Imminent: Will Arrive This Year or Next

Musk offered a remarkably clear timeline for digital superintelligence: “I think we are now extremely close to digital superintelligence. If it doesn’t happen this year, it certainly will happen next year.”

He defined “digital superintelligence” as “an intelligence smarter than any human at everything.” Musk predicted AI will drive exponential economic growth—not tenfold, but “thousands or even millions of times larger” than today’s economy.

“The transformation will be so profound it’s hard to grasp... Assuming we don’t go off track and AI doesn’t destroy us or itself, you won’t just see an economy ten times bigger. Eventually, if our descendants (mostly machine descendants) become a Kardashev Type II or higher civilization, we’ll be talking about an economy thousands or even millions of times larger than today’s.”

He elaborated on humanity’s future role: “At some point, the percentage of human intelligence will become quite small. At some point, the sum total of human intelligence will be less than 1% of all intelligence.”

xAI Currently Training Grok 3.5

Musk revealed that xAI is currently training Grok 3.5, “with a focus on reasoning capabilities.”

According to ZeroHedge, xAI is seeking $4.3 billion in equity financing plus $5 billion in debt financing, covering both xAI and the social media platform X.

The Hardware Race: An Engineering Miracle from Zero to 100,000 GPUs

Musk applied first-principles thinking to overcome hardware challenges in AI training. When suppliers estimated 18–24 months to build a 100,000 H100 GPU supercluster, Musk’s team compressed it into just six months.

They leased a decommissioned Electrolux factory in Memphis, rented generators to meet 150MW of power needs (the site's input power was only 15MW), rented about a quarter of U.S. mobile cooling capacity, and used Tesla Megapacks to smooth the massive power fluctuations that occur during training. Musk personally helped with the cabling and even “slept in the data center.”
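The power-smoothing idea behind the Megapacks can be illustrated with a toy model. The sketch below is not xAI's or Tesla's software; it assumes a hypothetical generator that can change its output by at most 1 MW per 10 ms step, and a battery that absorbs or supplies whatever the generator cannot follow. The load trace (a 50% drop over 100 ms, then a recovery) is invented to mirror the kind of swing Musk describes.

```python
def build_load_trace():
    """Hypothetical training-cluster load in MW, one sample per 10 ms."""
    load = [150.0] * 5                               # 50 ms at full load
    load += [150.0 - 7.5 * k for k in range(1, 11)]  # 50% drop over 100 ms
    load += [75.0] * 20                              # 200 ms at half load
    load += [75.0 + 7.5 * k for k in range(1, 11)]   # recover over 100 ms
    load += [150.0] * 20                             # back at full load
    return load


def smooth_with_battery(load_mw, max_ramp_mw_per_step):
    """Split each load sample between a ramp-limited generator and a battery.

    Battery values > 0 mean discharging (supplying power); values < 0 mean
    charging (absorbing the generator's surplus). State of charge and battery
    power limits are deliberately not modeled in this sketch.
    """
    generator = [load_mw[0]]
    for target in load_mw[1:]:
        previous = generator[-1]
        # Move toward the load, but never faster than the assumed ramp limit.
        change = max(-max_ramp_mw_per_step,
                     min(max_ramp_mw_per_step, target - previous))
        generator.append(previous + change)
    # The battery covers the gap between what the load needs and what the generator gives.
    battery = [load - gen for load, gen in zip(load_mw, generator)]
    return generator, battery


if __name__ == "__main__":
    load = build_load_trace()
    generator, battery = smooth_with_battery(load, max_ramp_mw_per_step=1.0)
    print(f"peak battery absorption: {-min(battery):.1f} MW")
    print(f"peak battery discharge:  {max(battery):.1f} MW")
```

In this toy trace the battery absorbs up to 65 MW right after the drop and supplies up to 30 MW during the recovery, while the generator never moves more than 1 MW per step. A real installation would also have to respect the battery's state of charge and power rating, which this sketch ignores.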

The current training site hosts 150,000 H100s, 50,000 H200s, and 30,000 GB200s. A second data center is set to come online soon with 110,000 GB200s.

Vision for the Future: Robot Armies and Interstellar Civilization

Musk predicted there will be at least five times more humanoid robots than humans—“maybe ten times.” He admitted he once hesitated in AI and robotics due to fears of making “Terminator real,” but eventually realized, “Whether I do it or not, it’s going to happen anyway. You’re either a spectator or a participant. I’d rather be a participant.”

In a broader vision, Musk framed human progress through the Kardashev Scale. Humanity currently uses only 1–2% of Earth’s energy, far from a Type I civilization. Becoming multiplanetary is key to expanding consciousness and vastly extending civilization’s lifespan.
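As a rough check on Musk's remark later in the interview that Type II means about a billion times Earth's energy, one can compare the Sun's total output with the sunlight Earth intercepts. This assumes Type I means all the sunlight reaching Earth and Type II means the Sun's entire output; other formulations of the Kardashev scale use different thresholds, so treat this as a back-of-envelope sketch. The constants are standard published reference values.

```python
import math

# Standard reference values (assumed inputs for this estimate).
SOLAR_LUMINOSITY_W = 3.828e26      # IAU 2015 nominal solar luminosity
SOLAR_CONSTANT_W_PER_M2 = 1361.0   # mean total solar irradiance at 1 AU
EARTH_MEAN_RADIUS_M = 6.371e6      # mean Earth radius


def sunlight_intercepted_by_earth_w():
    """Power Earth intercepts: solar constant times Earth's cross-sectional disk."""
    cross_section_m2 = math.pi * EARTH_MEAN_RADIUS_M ** 2
    return SOLAR_CONSTANT_W_PER_M2 * cross_section_m2


def type_ii_to_type_i_ratio():
    """Sun's total output divided by the sunlight reaching Earth (assumed Type I)."""
    return SOLAR_LUMINOSITY_W / sunlight_intercepted_by_earth_w()


if __name__ == "__main__":
    print(f"Earth intercepts about {sunlight_intercepted_by_earth_w():.2e} W")
    print(f"Type II / Type I ratio is about {type_ii_to_type_i_ratio():.2e}")
```

Under these assumptions the ratio comes out near 2.2 billion, the same order of magnitude as Musk's "about a billion."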

SpaceX aims to transport enough material to Mars within about 30 years to make it self-sustaining—“so even if supply ships from Earth stop, Mars can continue to thrive.”

Full Interview Transcript (AI Translated)

Elon Musk

We are in the very, very early stages of an intelligence big bang. Becoming a multiplanetary species greatly extends the potential lifespan of civilization, consciousness, or intelligence—whether biological or digital. I think we’re extremely close to digital superintelligence. If it doesn’t happen this year, it will definitely happen next year.

Garry Tan, YC CEO & President

[Music] Please welcome Elon Musk. [Applause] Elon, thank you for joining AI Startup School. We’re truly honored to have you here today. From SpaceX, Tesla, Neuralink, xAI, and more—before all this, was there a defining moment when you thought, ‘I must create something great’? What drove that decision?

Elon Musk

I didn’t initially think I could create anything great. I just wanted to try to do something useful, but I didn’t expect to do anything particularly extraordinary. Statistically, it seemed unlikely—but I at least wanted to try.

Garry Tan

You’re speaking to a room full of tech engineers, many rising top AI researchers.

Elon Musk

Well, I prefer the term “engineer” over “researcher.” I mean, if there’s a foundational algorithm breakthrough, that’s research—but otherwise, it’s engineering.

Garry Tan

Maybe we can go way back. You’re addressing a room of 18- to 25-year-olds—young founders. Can you put yourself in their shoes? When you were 18 or 19, learning programming, having your first idea for Zip2—what was that like for you?

Elon Musk

Back in ’95, I faced a choice: stay at Stanford for grad school (a PhD in materials science, researching supercapacitors for electric vehicles, basically to solve EV range issues) or dive into this thing most people had never heard of called the “internet.” I talked to my materials science professor, Bill Nix, and asked, “Can I take a leave of absence? This might fail, and I’ll come back.”

He said, “This might be our last conversation.” And he was right. But at the time, I thought failure was more likely than success. In ’95, I coded what was probably the first or near-first internet map, route planner, white pages, and yellow pages.

I wrote all the code myself—I didn’t even use a web server. I read directly from ports because I couldn’t afford one, or a T1 line. The original office was on Sherman Avenue in Palo Alto. There was an ISP downstairs, so I drilled a hole through the floor and ran a cable straight down to them.

Then my brother joined me, along with co-founder Greg Kouri, who has since passed away. We couldn’t afford housing, so we lived in the office and showered at the YMCA on Page Mill Road. Yes, in the beginning we ended up building a somewhat useful company, Zip2. We developed amazing software tech, but were kind of captured by traditional media companies (Knight-Ridder, the New York Times), who were investors, customers, and board members.

So they always wanted to apply our software in meaningless ways. I wanted to go direct-to-consumer. Anyway, I won’t dwell on Zip2, but the core idea was simply to do something useful online. I had two choices: watch others build the internet as a PhD student, or participate in a small way. I figured I could always try and go back if it failed. Anyway, it turned out pretty successful—sold for about $300 million.

That was a huge amount back then. Now, I feel like the starting price for an AI startup is $1 billion. It’s like… too many damn unicorns—valuations over a billion.

Garry Tan

There’s been inflation, so the money isn’t worth as much anymore.

Elon Musk

Yes. I mean, in 1995, could you buy a burger for 5 cents? Okay, not that extreme, but yes, massive inflation. But AI hype is really high now, as you see. Some companies less than a year old get valuations of a billion or tens of billions. Maybe some will succeed—probably will. But seeing those valuations is still mind-blowing. How do you see it? I mean,

Garry Tan

I’m actually very optimistic. I believe everyone here will create tremendous value—things used by a billion people globally. We haven’t even scratched the surface. I love that internet story—you were like everyone here, the guy traditional media CEOs saw as “the internet person.” Now, for the vast world that doesn’t understand AI—the corporate world, society—they’ll look to people like you for the same reason. Any practical lessons? One seems to be don’t give up board control, or be very careful—get a really good lawyer.

Elon Musk

I think my biggest mistake with my first startup was letting traditional media companies gain too much shareholder and board control. That inevitably led them to view things through a traditional media lens, pushing us to do things that made sense to them but not with new tech. I should add, I didn’t originally plan to start a company. I tried to get a job at Netscape. I sent them my resume. Marc Andreessen knows this.

But I don’t think he ever saw it, and there was no response. Then I wandered around Netscape’s lobby trying to “bump into” someone, but I was too shy to talk. So I thought, “This is absurd.” I’ll just write software and see what happens. It wasn’t from an “I want to start a company” mindset. I just wanted to participate in building part of the internet. Since I couldn’t get a job at an internet company, I had to start one.

Anyway, yes. AI will profoundly change the future, in ways that are hard to quantify. Economically, assuming we don’t go off track and AI doesn’t kill us or itself, you won’t just end up with an economy ten times larger. Eventually, if our future descendants, mostly machine descendants, become a Kardashev Type II or higher civilization, we’ll be talking about economies thousands or millions of times larger than today’s. Yes, I did feel, you know, when I was in D.C., getting criticized for eliminating waste and fraud, that it was an interesting side quest, as side quests go. But it’s time to get back to the main quest. Yes, I need to return to the main quest here. But I really felt, you know, it’s kind of like… government reform is like… imagine the beach is dirty, with needles, feces, and trash, and you want to clean it. But simultaneously, there’s a thousand-foot-high wall of water: that’s the AI tsunami. If a thousand-foot tsunami is coming, how meaningful is cleaning the beach? Not very.

Garry Tan

Oh, glad you’re back on the main quest. That’s very important.

Elon Musk

Yes, back to the main quest. Building technology—that’s what I love. Too much interference. The signal-to-noise ratio in politics is terrible.

Garry Tan

I live in San Francisco, so you don’t need to tell me twice.

Elon Musk

Yes, D.C. is like… I guess the whole place is politics. But if you’re building rockets or cars, or trying to get software to compile and run reliably, you must pursue truth above all—otherwise your software or hardware fails. You can’t fool math—math and physics are harsh judges. I’m used to environments that maximize truth-seeking, and politics is definitely not that. So anyway, I’m glad to be back in tech. I think I—

Garry Tan

I’m curious—back to the Zip2 moment. You walked away with hundreds of millions—personally cashed out?

Elon Musk

I got $20 million, right?

Garry Tan

Okay. So you solved the money problem. Then you basically bet it all again—on X.com, which became PayPal via merger with Confinity.

Elon Musk

Yes. I left the chips on the table.

Garry Tan

Not everyone does that. Many here will face this decision. What drove you to fight again?

Elon Musk

With Zip2, we built great tech, but it was never fully utilized. Our tech was better than Yahoo’s or anyone else’s, but we were constrained by our customers—media companies. So I wanted to do something free from customer constraints—direct-to-consumer. That became X.com/PayPal. Essentially, X.com merged with Confinity—we created PayPal together.

And actually, the PayPal “mafia” may have created more companies than any other 21st-century firm. When Confinity and X.com merged, so much talent came together. I just felt that with Zip2 we were somewhat handicapped, so I thought, okay, what if we weren’t constrained and went direct-to-consumer? That’s how it happened.

But yes, when I got that $20 million check from Zip2 (personal proceeds), I was living with four roommates, bank balance maybe $10,000. The check came by mail—unbelievable. By mail! My balance jumped from $10K to $20.01M. I thought, “Okay (after taxes).” But I reinvested almost all of it into X.com. Like you said, left almost all the chips on the table.

After PayPal, I wondered why we hadn’t sent people to Mars yet. I checked NASA’s website—no dates. Thought maybe it was hard to find. But in fact, there was no real plan. So, long story, don’t want to take too much time—

Garry Tan

We’re all listening intently.

Elon Musk

So I was on the Long Island Expressway with my friend Adeo Ressi; we went to Penn together. Adeo asked what I’d do after PayPal. I said, “I don’t know. Maybe a space-related nonprofit,” since I didn’t think I could do anything commercial in space; it seemed like a nation-state domain. But I was curious when we’d send people to Mars. That’s when I discovered there was no date online, and I started digging deeper. I’m skipping a lot, but my initial idea was a Mars charity mission called “Life to Mars”: send a small greenhouse with seeds and dehydrated nutrient gel to Mars, land it, add water, and get the money shot of green plants against red soil.

By the way, for a long time I didn’t realize “money shot” was a porn term (referring to climax). But anyway, the point was that amazing visual—red background, green plants—to inspire NASA and the public to send astronauts to Mars. As I learned more, I realized—oh, and during this process, around 2001–2002, I went to Russia to buy ICBMs. Quite an adventure. You meet senior Russian generals saying, “I want to buy some ICBMs.” To get to space. Yes. Not to blow anything up—but as part of disarmament, they had to destroy many large nuclear missiles. So I thought, “Let’s get two, remove warheads, add an extra upper stage for Mars.”

But it felt surreal—being in Moscow around 2001 negotiating with Russian military to buy ICBMs. Crazy. And they kept raising prices—completely opposite of normal negotiation. I thought, “Man, these things are getting expensive.”

Then I realized the real issue wasn’t lack of desire to go to Mars—it was simply impossible without exceeding budgets, even NASA’s. That’s why I decided to found SpaceX—to advance rocket tech to reach Mars. That was 2002.

Garry Tan

So it wasn’t that you set out to build a business. You just wanted to do something interesting and needed by humanity, and like a cat unraveling yarn, it gradually unfolded—turning into what might be a highly profitable venture.

Elon Musk

It’s profitable now, but there was no precedent for a successful rocket startup. Commercial rocket attempts had failed. So when I founded SpaceX, I genuinely thought the odds of success were under 10%, maybe 1%. But if no startup advances rocket tech, it won’t happen—certainly not from big defense contractors, who are just government proxies doing conventional things. So it had to come from a startup or not at all. Even low odds are better than zero. So yes, when I founded SpaceX mid-2002, I expected failure—around 90% chance. When recruiting, I didn’t sugarcoat it.

I said we’d probably die. But there’s a 1-in-10 chance we won’t—and it’s the only path to sending humans to Mars and advancing tech. Then I became chief rocket engineer—not because I wanted to, but because I couldn’t hire great people. No experienced engineers would join—they thought it was too risky, we’d die. So I became the lead. The first three launches failed. It was a learning process. The fourth luckily succeeded. If the fourth Falcon launch failed, I’d be out of money—game over. So it was incredibly close.

If the fourth Falcon launch failed, it would’ve been game over—we’d join the graveyard of previous rocket startups. So my estimate wasn’t too far off. We barely survived. Tesla was happening around the same time. 2008 was brutal. Mid-2008, or summer, SpaceX’s third launch failed—we had three consecutive failures. Tesla’s funding round also failed. Tesla was about to go bankrupt. I thought, “Oh man, this is terrible. This will become a cautionary tale of hubris.”

Garry Tan

Back then, many probably said, “Elon’s a software guy—why hardware? Why choose this?”

Elon Musk

100%. Check the media coverage from then—it’s still online. They called me “the internet guy.” So “the internet guy,” aka “the idiot,” tries to build a rocket company. We were mocked a lot. It sounded absurd—“internet guy starts rocket company” didn’t seem like a winning formula.

To be fair, I don’t blame them. I thought, “Yeah, sounds unlikely—I agree it’s improbable.” But luckily, the fourth launch succeeded. Then NASA awarded us a contract to resupply the space station. I think it was around Dec 22, or just before Christmas. Because even the fourth success wasn’t enough—we needed a big contract to survive. So I got a call from the NASA team—they literally said, “We’re awarding you the contract to resupply the space station.” I blurted out, “I love you.” Not something they usually hear.

Normally it’s very formal, but I thought, “Oh my God, this saves the company.” Then, closing Tesla’s funding round happened on the last possible day, the last hour—Dec 24, 2008, at 6 PM. If that round hadn’t closed, we’d default on payroll two days after Christmas. So late 2008 was nerve-wracking.

Garry Tan

From your PayPal and Zip2 experiences to jumping into hardcore hardware startups, a constant thread is finding and attracting the smartest people in each field… For those here who haven’t even managed one person—just starting careers—what advice would you give your younger self?

Elon Musk

I generally think you should try to do something useful. Sounds cliché, but doing something truly useful is extremely hard—especially useful to many people. Think of the area under the utility curve—how useful you are to your fellow humans, multiplied by how many? Like physics’ definition of “true work.” Achieving this is incredibly difficult. But if you aim for “true work,” your odds of success rise dramatically. Don’t chase glory—chase work.

Garry Tan

How do you judge what “true work” is? External feedback? How others see it, or knowing your product’s impact?

Elon Musk

What you value when hiring is a different question. I mean, for your end product, ask: if this succeeds, how useful will it be, and to how many people? That’s what I mean. Then, whatever your role, CEO or any other position in a startup, do whatever it takes to succeed. Constantly crush your ego. Internalize responsibility. A major failure mode is when your ego-to-ability ratio exceeds 1. If your ego-to-ability ratio is too high, you cut off feedback from reality. In AI terms, you break your reinforcement learning (RL) loop. You don’t want to break that loop. You want a strong RL loop, which means internalizing responsibility and minimizing ego, doing whatever task is needed, noble or humble. That’s why I prefer “engineering” over “research.” I like that word. And I don’t want xAI called a lab.

I want it to be a company. Whatever term is simplest, most direct, ideally lowest-ego—that’s usually the right direction. You just want to tightly close the loop with reality. That’s super important.

Garry Tan

I think everyone here deeply admires your mastery of first principles. How do you determine your own “reality”? That seems crucial. Non-creators, non-engineers—like some journalists—sometimes criticize you. But you also have a group of builders around you with massive achievement curves. How should people navigate this? What methods work for you? How would you pass this on—say, to your kids? How would you teach them to stand in the world? How to build a predictable worldview based on first principles?

Elon Musk

Physics tools are incredibly powerful for understanding any field and making progress. First principles mean breaking things down to the most fundamental axiomatic elements—things most likely true—and reasoning up logically, rather than by analogy or comparison. Then simple things like limit thinking—extrapolating minimization or maximization—limit thinking is very helpful. I use all the tools of physics.

They apply to any domain. It’s like a superpower. Take rockets. How much should a rocket cost? Most people look at historical costs and assume new rockets must cost similarly. First principles: what materials is the rocket made of? Aluminum, copper, carbon fiber, steel—whatever. How heavy is it? What’s the weight of each component? What’s the per-kilogram material cost? That sets the true floor—cost can asymptotically approach raw material cost.

Then you realize rocket raw materials are only 1% or 2% of historical rocket cost. So the manufacturing process must be extremely inefficient. That’s a first-principles analysis of optimization potential—even before reusability. For an AI example, last year, when xAI tried to build a training supercluster, we went to suppliers: “We need 100,000 H100s for coherent training.”

They estimated 18–24 months. I said, “We need it in 6 months. Otherwise, we’re not competitive.” So break it down: you need a building, power, cooling. No time to build from scratch—must find an existing facility. Found a decommissioned Electrolux factory in Memphis. Input power was 15MW—we needed 150MW.

So we rented generators, placed them on one side of the building. Needed cooling—rented about a quarter of U.S. mobile cooling capacity, placed chillers on the other side. Still not enough—training causes massive power swings. Power could drop 50% in 100ms—generators can’t keep up. So we added Tesla Megapacks and modified their software to smooth power fluctuations. Then huge networking challenges—connecting 100,000 GPUs coherently is extremely complex.
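The first-principles cost floor Musk describes for rockets (the mass of each material times its price per kilogram, compared with the historical price) reduces to simple arithmetic. In the sketch below every mass, every price, and the historical rocket price are hypothetical placeholders chosen only to show the calculation; they are not SpaceX figures.

```python
from dataclasses import dataclass


@dataclass
class Material:
    name: str
    mass_kg: float           # hypothetical mass of this material in the vehicle
    price_usd_per_kg: float  # hypothetical raw-material price


def material_cost_floor(materials):
    """Raw-material cost: the floor that manufacturing cost can approach."""
    return sum(m.mass_kg * m.price_usd_per_kg for m in materials)


def material_share_of_price(materials, historical_price_usd):
    """Fraction of a historical price accounted for by raw materials alone."""
    return material_cost_floor(materials) / historical_price_usd


if __name__ == "__main__":
    # Placeholder bill of materials (not real rocket data).
    rocket = [
        Material("aluminum", 20_000, 3.0),
        Material("carbon fiber", 2_000, 30.0),
        Material("copper", 1_000, 10.0),
        Material("steel", 5_000, 1.0),
    ]
    floor = material_cost_floor(rocket)
    share = material_share_of_price(rocket, historical_price_usd=10_000_000)
    print(f"material floor: ${floor:,.0f}")
    print(f"share of historical price: {share:.2%}")
```

With these placeholders, raw materials come to $135,000, roughly 1.35% of the assumed price. A share that small is the signal Musk points to: the gap lies in the manufacturing process rather than in the materials themselves.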

Garry Tan

…Everything you mentioned, I can imagine someone telling you “No, you can’t get that power,” “You can’t solve that.” A key part of first-principles thinking seems to be asking “why,” figuring out root causes, challenging assumptions. If answers aren’t satisfactory, you don’t accept them. Is that right? Seems especially crucial for hardware—software has redundancy: “Add more CPUs, no problem.” But hardware—either it works or it doesn’t.

Elon Musk

I think first-principles thinking applies universally—to software, hardware, anything. I just used a hardware example to show how we were told something was impossible, but by breaking it into components—building, power, cooling, power smoothing—we solved each. Then we had our network team wire everything, 24/7 shifts. I slept in the data center, helped with cabling.

Many other problems to solve. Last year, no one trained coherently on 100,000 H100s. Maybe someone did this year—I don’t know. Then we doubled it to 200,000. Now our Memphis training center has 150,000 H100s, 50,000 H200s, 30,000 GB200s. Our second Memphis data center will soon come online with 110,000 GB200s.

Garry Tan

Do you still believe pre-training works? Are scaling laws still valid? Will the winner be whoever has the largest, smartest model, then distill it?

Elon Musk

For competitiveness in large-scale AI, several factors matter: team talent, hardware scale, and how effectively that hardware is used. You can’t just order GPUs and plug them in. You need many GPUs running stable, coherent training.

Also, unique data sources? Distribution matters too—how people access your AI. These are key for competitive large foundation models. As my friend Ilya Sutskever said, I think we’re nearly out of human-generated data for pre-training. High-quality token supply is drying up fast. Then you must generate synthetic data and accurately evaluate whether it’s truthful or hallucinated. So grounding in reality is tricky—we’re entering a phase needing more focus on synthetic data. Right now we’re training Grok 3.5, focused on reasoning.

Garry Tan

Returning to your physics perspective—I’ve heard hard sciences, especially physics textbooks, are very useful for reasoning. Researchers tell me social sciences are useless for reasoning.

Elon Musk

Probably true. Yes. Another critical future point is combining deep AI in data centers or superclusters with robotics.

So things like Optimus humanoid robots. Yes, Optimus is great. There will be vast numbers of robots of various shapes and sizes, but I predict humanoid robots will vastly outnumber all the others, maybe by an order of magnitude. A huge difference.

Garry Tan

Rumors say you plan to build a robot army?

Elon Musk

Whether we do it or Tesla does—Tesla and xAI are closely linked.

How many humanoid robot startups have you seen? Jensen Huang brought a bunch onstage—robots from different companies. Maybe a dozen different humanoids. So I guess, partly what I’ve resisted—maybe slowed me—is I’m a bit—I don’t want Terminator to become real. So I somewhat dragged my feet on AI and humanoid robotics, at least until recently. Then I realized—it’s happening regardless. So you have two choices: spectator or participant. I’d rather be a participant. So now we’re full speed ahead on humanoid robots and digital superintelligence.

Garry Tan

There’s a third thing you’ve spoken about often—I strongly agree: becoming a multiplanetary species. How does that fit in? It’s not a 10- or 20-year thing—maybe 100 years, spanning generations. How do you see it? With AI, embodied robotics, and multiplanetary—do they all serve that final goal? Or what drives your vision for the next 10, 20, 100 years?

Elon Musk

Dude, 100 years. I hope civilization exists in 100 years. If it does, it’ll be utterly unlike today. I predict humanoid robots will be at least 5x human population—maybe 10x. One way to view civilizational progress is percentage completion of the Kardashev Scale. At Type I, you harness all planetary energy. Right now, we use maybe 1–2% of Earth’s energy. So we’re far from Kardashev I. Type II is stellar energy—about a billion times Earth’s energy, maybe close to a trillion.

Type III is galactic energy—we’re far from that. So we’re in the very, very early stages of an intelligence big bang. I hope—regarding multiplanetary—I think in about 30 years, we’ll transfer enough mass to Mars to make it self-sustaining—so even if Earth supply ships stop, Mars continues thriving. This greatly extends civilization’s, consciousness’s, or intelligence’s (biological or digital) expected lifespan. That’s why I think being multiplanetary matters.

I’m troubled by the Fermi Paradox—why don’t we see aliens? Maybe intelligent life is rare. Perhaps we’re the only sentient life in this galaxy. Then consciousness is a tiny candle in infinite darkness—we must do everything to keep it lit. Being multiplanetary, or making consciousness multiplanetary, greatly increases civilization’s lifespan and is the necessary step before reaching other star systems. Once you have at least two planets, you create a forcing function for space travel advancement. That will eventually lead to consciousness expanding to the stars.

Garry Tan

The Fermi Paradox might suggest civilizations self-destruct upon reaching a certain tech level. How do we avoid that? What advice for this room full of engineers? What can we do to prevent collapse?

Elon Musk

Yes, how to avoid the “Great Filters”? One obvious filter is global thermonuclear war. We should avoid that.

I think we need to build benign AI—AI that loves humanity, helpful robots. I believe a critical aspect of AI development is a strict adherence to truth—even when politically incorrect. My intuition on what makes AI dangerous: if you force AI to believe falsehoods.

Garry Tan

What about the debate between safety and keeping models closed for competitive advantage? I think it’s great—we have competitive models, others do too. In that sense, we may have avoided my biggest fear: fast takeoff controlled by one entity. That could collapse everything. Now we have options—this is good. Your thoughts?

Elon Musk

Yes, I think there will be several deep intelligences—maybe at least five. Possibly up to ten. Not sure about hundreds, but maybe around ten. Probably four in the U.S. So I don’t think any single AI will have runaway capability. But there will be several deep intelligences.

Garry Tan

What will these deep intelligences do? Scientific research? Attack each other?

Elon Musk

Possibly both. I hope they discover new physics, invent new technologies. I think we’re very close to digital superintelligence. It may happen this year—if not, next year. Digital superintelligence defined as smarter than any human at anything.

Garry Tan

So how do we steer toward super abundance? Robots, cheap energy, on-demand intelligence. Is that the “white pill” (positive future)? Where do you stand on this spectrum? What specific actions would you encourage everyone here to take to make this “white pill” real?

Elon Musk

I think a good outcome is most likely. I guess I somewhat agree with Geoff Hinton: maybe a 10–20% chance of annihilation. But the upside is an 80–90% chance of a great outcome. So again, nothing is more important for AI safety than a rigorous commitment to truth. Obviously, empathy for humans and known life is essential too.

Garry Tan

We haven’t discussed Neuralink. I’m curious—you’re working to close the input/output gap between humans and machines. How critical is this for AGI/ASI? Once this link exists, can we not only read but write?

Elon Musk

Neuralink isn’t required for digital superintelligence; that will happen before neural interfaces are widespread. But Neuralink can effectively address input/output bandwidth constraints, especially output bandwidth: sustained human output is less than one bit per second. There are 86,400 seconds in a day, and it is extremely rare for a person to output more than that many symbols in a day, let alone for several days in a row. With a neural interface, you can massively increase input and output bandwidth. Input means writing to the brain.

We now have five humans implanted with devices that read signals. People with ALS, completely locked-in, tetraplegics—they can now communicate at bandwidth comparable to able-bodied people, controlling computers and phones. That’s pretty cool. Within 6–12 months, we’ll do our first visual implants—restoring sight to the blind by writing directly to the visual cortex. We’ve already done it in monkeys.

We have a monkey with a visual implant for three years. Initial resolution will be modest, but long-term—very high resolution, multispectral wavelengths. See infrared, ultraviolet, radar—like gaining superpowers. Eventually, cybernetic implants won’t just fix broken things—they’ll greatly augment human abilities: intelligence, senses, bandwidth. That will happen.

But digital superintelligence will arrive long before that. Even so, with neural interfaces we might be able to appreciate AI better.

Garry Tan

One constraint across all your efforts has been access to the smartest people.

Elon Musk

Yes.

Garry Tan

But meanwhile, rocks (computers) can now talk and reason. They’re maybe 130 IQ now, and soon superintelligent. How do you reconcile this? What happens in 5 or 10 years? What should everyone here do to ensure they’re creators, not below the API line?

Elon Musk

People call it the singularity for a reason: we don’t know what’s coming. Human intelligence’s share will become tiny. At some point, total human intelligence will be less than 1% of all intelligence. And if we reach Kardashev Type II, even with massive population growth and intelligence augmentation (say, everyone has an IQ of 1,000), human intelligence might be only a billionth of digital intelligence. In a sense, humans are the biological bootloader for digital superintelligence. I suppose I’ll end there: am I a good bootloader?

Garry Tan

Where do we go from here? All this sounds like wild sci-fi—but possibly built by people in this room. For this generation of top tech talent—any final words? What should they do? Pursue? Think about? What should they ponder over dinner tonight?

Elon Musk

Like I said at the start, if you’re doing something useful—that’s great. If you’re just trying your best to be as useful as possible to your fellow humans, you’re doing well. I keep emphasizing: focus on super truthful AI—this is paramount for AI safety. If anyone here is interested in working at xAI—please let us know. Our goal is to make Grok the most truth-seeking AI possible. I think that’s vital. Hopefully, AI can help us understand the nature of the universe. Maybe AI will tell us where aliens are, how the universe truly began, how it ends, what questions we don’t even know to ask. Are we in a simulation? Or at what level of simulation?

Garry Tan

I think we’ll find out. An NPC (non-player character). Elon, thank you so much for joining us.

Join TechFlow official community to stay tuned

Telegram:https://t.me/TechFlowDaily

X (Twitter):https://x.com/TechFlowPost

X (Twitter) EN:https://x.com/BlockFlow_News