HyperAGI Interview: Building True AI Agents to Create a Self-Governing Cryptocurrency Economy

HyperAGI is a community-driven decentralized AI project powered by the first AI Rune, HYPER·AGI·AGENT.

Please introduce the background of the HyperAGI team and project

HyperAGI is the first community-driven decentralized AI project powered by the AI Rune HYPER·AGI·AGENT. The HyperAGI team has deep expertise in AI and extensive experience in Web3 generative AI applications. Three years ago, the team pioneered the use of generative AI to create 2D images and 3D models, building MOSSAI—an open-world metaverse on blockchain composed of thousands of AI-generated islands—and proposed NFG, a standard for non-fungible cryptographic assets generated by AI. However, at that time, there was no decentralized solution for AI model training and generation; reliance on centralized GPU resources could not support mass user adoption, preventing explosive growth. With the rise of LLMs reigniting public interest in AI, we launched the HyperAGI decentralized AI application platform, initiating tests on Ethereum and Bitcoin L2 in Q1 2024.

HyperAGI focuses on decentralized AI applications with the goal of cultivating an autonomous cryptocurrency economy, ultimately aiming to establish Unconditional Basic Agent Income (UBAI). It inherits Bitcoin’s robust security and decentralization, enhanced through an innovative Proof-of-Useful-Work (PoUW) consensus mechanism.

Consumer-grade GPU nodes can join the network permissionlessly, mining the native token $HYPT by performing PoUW tasks such as AI inference and 3D rendering.

Users can leverage various tools to develop Proof-of-Personhood (PoP) AGI agents powered by large language models (LLMs). These agents can be configured as chatbots or 3D/XR entities within the metaverse. AI developers can instantly use or deploy LLM-powered microservices, enabling the creation of programmable, autonomous on-chain agents.

These programmable agents can issue or own cryptocurrency assets, operate continuously, and engage in trading—fueling a vibrant, autonomous crypto economy that supports the realization of UBAI. Holders of the HYPER·AGI·AGENT Rune token on Bitcoin Layer 1 are eligible to create a PoP agent and may soon qualify for basic agent welfare benefits.

What is an AI agent? Many AI projects claim to support agents—what exactly is an agent? How do HyperAGI agents differ from others?

The concept of AI agents is not new in academia, but market hype has created confusion. At HyperAGI, an agent refers specifically to an embodied agent driven by LLMs that can train and interact with users in a 3D virtual simulation environment—not just a chatbot powered by an LLM. HyperAGI agents can exist both in virtual digital worlds and in the real physical world. We are currently integrating physical robots such as robotic dogs, drones, and humanoid robots into the system. In the future, agents trained and enhanced in virtual 3D environments can be downloaded into physical robots to better execute real-world tasks.

Moreover, all rights to HyperAGI agents fully belong to users and carry socioeconomic significance. A user’s PoP agent can receive UBAI, which regulates basic agent income. This incentivizes users to participate in training and interacting with their personal agents while accumulating verifiable human-centric data. UBAI embodies AI equity and democratization.

HyperAGI agents are divided into two types: PoP (Proof of Personhood) agents representing individual users, and general functional agents. Within HyperAGI’s agent economy, PoP agents are entitled to receive token-based basic income, encouraging user engagement and data contribution.

Is AGI just hype, or will it become reality soon? How does HyperAGI's research and development roadmap differ from other AI projects?

While there is no unified definition of AGI, it has long been considered the holy grail of AI research and industry. Transformer-based LLMs have become central to many AI agents and AGI efforts, but we at HyperAGI do not see them as a sufficient path to AGI. LLMs do offer novel and convenient capabilities in information extraction, natural language planning, and reasoning, but they remain fundamentally data-driven deep neural networks. As we learned during the big data era, such systems follow GIGO (Garbage In, Garbage Out). LLMs lack essential features of higher intelligence. For example, because they lack embodiment, these AIs or agents struggle to understand human world models, formulate environment-aware plans, or take actions to solve real-world problems. At a higher level, LLMs show no signs of self-awareness, reflection, or introspection, which are hallmarks of advanced cognition.

Our founder, Landon Wang, has conducted in-depth and long-term research in AI. In 2004, he proposed Aspect-Oriented Artificial Intelligence (AOAI), an innovative fusion of neuro-inspired computing and Aspect-Oriented Programming (AOP). An "aspect" encapsulates relationships or constraints across multiple objects. For instance, a neuron encapsulates its relationships and constraints with other cells: it receives input from sensory cells or exerts control over motor cells via fibers and synapses extending from its cell body. Thus, a neuron itself represents an aspect containing specific relational logic. Likewise, every AI agent solves a particular problem and can be modeled technically as an aspect.

In traditional software implementations of artificial neural networks, neurons or layers are typically modeled as objects—a design that is intuitive and maintainable in object-oriented programming languages. However, this approach limits topological flexibility and fixes activation sequences. While effective for high-intensity computations like LLM training and inference, it performs poorly in adaptability and flexibility. In contrast, AOAI models neurons or layers as aspects rather than objects, resulting in architectures with strong self-adaptation and evolutionary potential.

HyperAGI combines efficient LLMs with evolvable AOAI, creating a hybrid architecture that retains the efficiency of traditional neural networks while gaining the self-evolutionary properties of AO networks—currently, the most viable path toward AGI we have seen.

What is HyperAGI’s vision?

HyperAGI’s vision is to realize Unconditional Basic Agent Income (UBAI) and build a future where technology serves everyone equally—breaking cycles of exploitation and creating a truly decentralized and fair digital society. Unlike some blockchain projects that merely promote UBI ideals without clear implementation paths, HyperAGI offers a concrete route to UBAI through an agent-based economy, not mere speculation. Bitcoin, proposed by Satoshi Nakamoto, was a monumental human innovation—but it is only a decentralized digital currency without intrinsic utility. The recent leap in AI makes it possible to generate value in a decentralized manner, allowing people to benefit directly from AI running on machines, rather than extracting value from others. A code-based true crypto world is emerging—one where all machines are built for human benefit and well-being. In such a world, hierarchical structures among AI agents might still exist, but human exploitation is eliminated because agents themselves may possess a form of autonomy. The ultimate purpose and meaning of AI is to serve humanity—as encoded in the blockchain.

What is the relationship between Bitcoin L2 and AI? Why build AI on Bitcoin L2?

1. Bitcoin L2 as a payment method for AI agents

Bitcoin is currently the most “maximally neutral” medium, making it ideal for value transactions among AI agents. It eliminates inefficiencies and friction inherent in fiat currencies. As a “digitally native” medium, Bitcoin provides fertile ground for AI-driven value exchange. Enhanced programmability via Bitcoin L2 enables the transaction speed required for AI value exchanges, positioning Bitcoin as the potential native currency for AI.

2. Bitcoin L2 enables decentralized AI governance

Given the current trend toward centralized AI, decentralized alignment and governance have gained significant attention. Bitcoin L2’s more powerful smart contracts can codify rules governing AI agent behavior and protocols, enabling decentralized AI alignment and governance. Moreover, Bitcoin’s maximal neutrality facilitates broader consensus on AI governance.

3. Bitcoin L2 enables issuance of AI assets

Beyond issuing AI agents as assets on Bitcoin L1, high-performance Bitcoin L2 can meet the needs of issuing AI-generated assets—forming the foundation of the agent economy.

4. AI agents as the killer app for Bitcoin and Bitcoin L2

Due to performance limitations, Bitcoin has lacked real-world applications beyond store-of-value since its inception. With L2, Bitcoin gains stronger programmability. Since AI agents are designed to solve real-world problems, Bitcoin-powered AI agents can become practical tools. Furthermore, the scale and frequency of AI agent usage could become a killer application for Bitcoin and its L2. While human economies may not prioritize Bitcoin for payments, robot economies might. Thousands of AI agents working 24/7, conducting micro-payments using Bitcoin without fatigue, could dramatically increase Bitcoin demand in ways currently unimaginable.

5. AI computation enhances Bitcoin L2 security

AI computation can complement or even replace Bitcoin’s PoW with PoUW, revolutionizing security while redirecting energy currently used for Bitcoin mining into productive AI agent work. AI can transform Bitcoin into an intelligence-driven green blockchain via L2, avoiding mechanisms like Ethereum’s PoS. Our proposed Hypergraph Consensus is a PoUW based on 3D/AI computation, which will be detailed later.

What sets HyperAGI apart from other decentralized AI projects?

HyperAGI stands out uniquely in the Web3 AI space, differing clearly in vision, solutions, and technology. From a solution perspective, HyperAGI achieves GPU compute consensus, agent embodiment, and assetization—making it a decentralized hybrid AI-and-finance application. Recently, five key characteristics of decentralized AI platforms were proposed in academia. We apply these criteria to briefly review and compare existing decentralized AI-related projects.

The five essential characteristics of a decentralized AI platform are:

(i) Verifiability of remotely run models

Decentralized verifiability includes technologies such as Data Availability and ZK proofs.

(ii) Usability of publicly available AI model APIs

Usability depends on whether AI model (mainly LLM) API nodes operate in a peer-to-peer manner and form a fully decentralized network.

(iii) Incentivization for AI developers and users

Fair token issuance mechanisms must exist.

(iv) Global governance of essential AI solutions in the digital society

Governance must be neutral and conducive to consensus-building.

(v) Freedom from vendor lock-in

The platform must be fully decentralized.

Applying these five criteria to existing public plans or launched projects yields the following analysis. (Decentralized federated learning, despite years of practice, has seen little progress, and the rise of LLMs has made decentralized training even harder—so related projects are excluded.)

(i) Verifiability of remotely run AI models

We believe verifiability is a prerequisite for any decentralized AI project—it underpins usability, incentives, governance, and freedom from lock-in. Without verifiability, other dimensions need not be evaluated. Projects lacking verifiability may be decentralized (e.g., decentralized compute leasing or data/model markets), but they are not decentralized AI.

Projects potentially meeting verifiability include:

Giza: Based on a ZKML consensus mechanism, it satisfies verifiability for remote model execution, but performance remains poor—orders of magnitude away from LLM requirements. Generating a single proof for a million-parameter small model often takes minutes; such latency is unacceptable for LLMs.

Cortex AI: A five-year-old L1 blockchain focused on decentralized AI, technically complex, adding new instructions to the EVM to support neural computation. Built on ZK verification, suitable for simple models, but insufficient for LLM-scale models.

Ofelimos: First academic proposal of PoUW using a specialized search algorithm. However, the algorithm lacks integration with actual applications or projects.

Project PAI: Mentions PoUW in a paper and whitepaper only, with no product available (https://oben.me/).

Qubic: Claims PoUW, proposes using hundreds of GPUs for neural network computation, but simple Python library computations lack clear significance and fail to meet LLM training/inference needs or fulfill PoUW roles.

FLUX: Uses PoW ZelHash, not PoUW.

Coinai: Still at paper stage (https://aipowergrid.io/), task assignment without strict consensus.

Projects failing to meet verifiability:

GPU compute leasing projects generally lack decentralized verifiability, failing to ensure verifiable remote AI model execution.

DeepBrain Chain: Focuses on GPU leasing, launched as an L1 in 2017, mainnet went live in 2021;

EMC: Centralized task assignment and rewards, no decentralized consensus in roadmap;

Aethir: No visible consensus mechanism;

IO.NET: No visible consensus mechanism;

CLORE.AI, POH: Crowdsourced model, on-chain AI model publishing and NFT issuance, off-chain AI execution—no verifiability. Similar models include: SingularityNET, Bittensor, AINN, Fetch.ai, Ocean Protocol, algovera.ai.

(ii) Usability of open AI model APIs

Cortex AI: No support for LLMs observed.

Qubic: No support for LLMs observed.

None of the above decentralized AI projects adequately address all five challenges. HyperAGI is a fully decentralized AI protocol based on the Hypergraph PoUW consensus and a fully decentralized Bitcoin L2 stack, with plans to evolve into a Bitcoin-dedicated AI L2.

PoUW secures the network in the safest way while utilizing all computational power productively—miner-provided compute is used for LLM inference and cloud rendering. The vision of PoUW is that computational capacity should solve diverse problems submitted to the decentralized network.

Why now?

1. Explosion of LLMs and applications

OpenAI’s ChatGPT reached 100 million users in just three months, sparking a global wave of LLM development, application, and investment. Yet LLM technology and training remain highly centralized, raising growing concerns in academia, industry, and the public about monopolies by major tech providers, data privacy breaches, appropriation, and vendor lock-in by cloud companies. These issues stem from centralized control over internet access points, leaving no suitable network infrastructure for large-scale AI applications. The AI community has begun exploring local and decentralized AI projects—Ollama exemplifies local execution, Petals represents decentralized inference. Ollama enables smaller LLMs to run on PCs or smartphones via parameter compression or reduced precision, protecting user data privacy and rights, but cannot support production or networked applications. Petals uses BitTorrent-style P2P technology for fully decentralized LLM inference, but lacks a consensus and incentive layer, remaining confined to academic circles.

2. LLM-powered agents

LLMs empower agents with high-level reasoning and planning capabilities. Natural language enables multi-agent social collaboration similar to that of humans. Several LLM-driven agent frameworks have emerged, such as LangChain, CrewAI, and Microsoft’s AutoGen.

Today, many AI entrepreneurs and developers focus on LLM-powered agents and applications, creating massive demand for stable, scalable LLM inference. Currently, most rely on renting GPU instances from cloud providers. In March 2024, NVIDIA announced ai.nvidia.com, a generative AI microservice platform including LLMs, to meet this demand, but it has not yet officially launched. Just as website development boomed in the early web era, LLM-powered agents are flourishing today, but mostly using traditional Web2 collaboration models. Developers rent GPUs or purchase API access from LLM providers to run agents, which introduces friction that hinders rapid ecosystem growth and value circulation in the agent economy.

3. Embodied agent simulation environments

Most current agents can only access and manipulate APIs, interacting via scripts or code that send LLM-generated commands or read external states. General-purpose agents should not only understand and generate natural language but also comprehend the human world. After proper training, they should transfer skills to robots (e.g., drones, vacuum cleaners, humanoids) to perform real tasks—these are called embodied agents.

Training embodied agents requires vast real-world visual data to improve environmental understanding, reduce robot training time, enhance efficiency, and lower costs. Currently, simulation environments for embodied AI are owned and operated by a few companies—such as Microsoft’s Minecraft and NVIDIA’s Isaac Gym—with no decentralized alternatives. Recently, game engines have started embracing AI, such as Epic’s Unreal Engine advancing OpenAI Gym-compatible AI training environments.

4. Bitcoin L2 ecosystem

Although Bitcoin sidechains have existed for years, they were primarily used for payments, lacking smart contract capabilities to support complex on-chain applications. The emergence of EVM-compatible Bitcoin L2s now allows Bitcoin to support decentralized AI applications via L2. Decentralized AI requires a fully decentralized, compute-centric blockchain network—not one constrained by increasingly centralized PoS networks. New native Bitcoin protocols like Inscriptions and Runes have enabled the development of Bitcoin-based ecosystems and applications. For example, the Rune HYPER•AGI•AGENT completed its fair mint within an hour. Going forward, HyperAGI will issue more AI assets and community-driven applications on Bitcoin.

Discuss HyperAGI’s technical framework and solutions

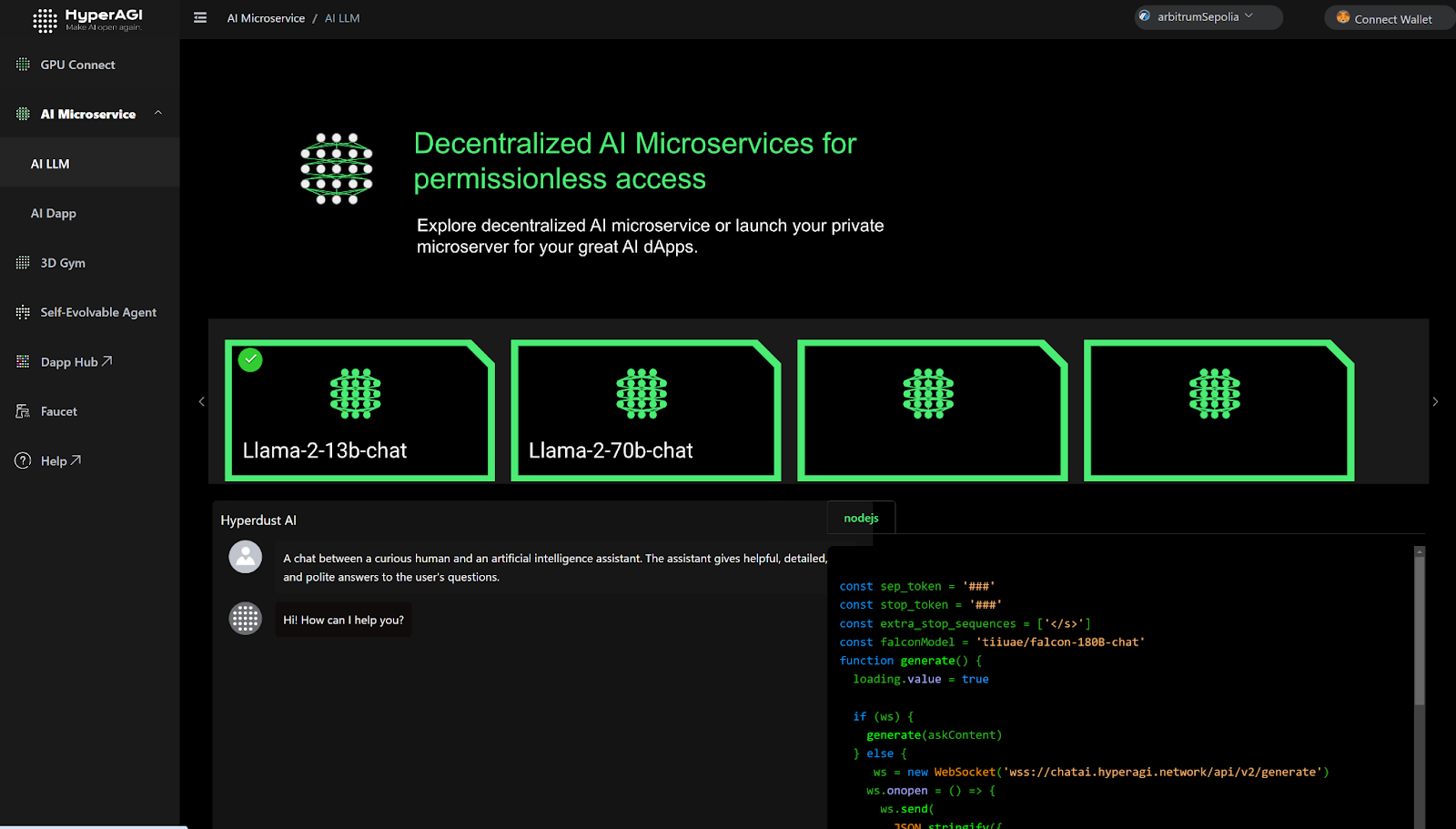

1. How to build a decentralized LLM-powered AI agent application platform?

The biggest challenge in decentralized AI today is twofold: achieving verifiable remote inference for large AI models, and the lack of high-performance, low-overhead verifiable algorithms for embodied agent training and inference. Without verifiability, systems revert to traditional multi-party marketplaces involving supply, demand, and platform intermediaries, which prevents truly decentralized AI platforms.

Verifiable AI computation requires a PoUW consensus algorithm, which forms the basis for decentralized incentives. Specifically, token minting is triggered autonomously when nodes complete computational tasks and submit verifiable results—without any centralized distribution.
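The mint-on-verification idea can be illustrated with a toy, in-memory sketch. It is not HyperAGI's contract code: the `Ledger` class, the fixed `reward_per_task`, and the simple equality check against a reference result are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Toy stand-in for an on-chain token contract (hypothetical)."""
    reward_per_task: int = 10  # assumed fixed reward, for illustration only
    balances: dict = field(default_factory=dict)
    total_minted: int = 0

    def submit_result(self, node_id: str, result: int, reference: int) -> bool:
        """Mint to node_id only if its result matches the reference result."""
        if result != reference:
            return False  # unverifiable or wrong result: nothing is minted
        self.balances[node_id] = self.balances.get(node_id, 0) + self.reward_per_task
        self.total_minted += self.reward_per_task
        return True


ledger = Ledger()
accepted = ledger.submit_result("gpu-1", result=42, reference=42)
rejected = ledger.submit_result("gpu-2", result=41, reference=42)
```

The point of the sketch is that no party calls a separate "distribute" function: new supply appears only as a side effect of a successful verification.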

To achieve verifiable AI computation, we must first define AI computation. AI computation exists at multiple levels: machine instructions, CUDA, C++, Python. Similarly, 3D computation for embodied agent training spans shader languages, OpenGL, C++, Blueprint scripting, etc.

HyperAGI’s PoUW consensus is implemented via computational graphs. A computational graph is a directed graph for expressing and evaluating mathematical expressions; it acts as a “language” whose nodes are variables and whose edges are operations or simple functions.
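As a minimal sketch of what such a graph looks like in code, the example below encodes the expression (x + y) * z. The dictionary layout and the `evaluate` helper are assumptions chosen for illustration, not HyperAGI's format: each derived variable records the operation and the input variables that produce it.

```python
import operator

# Derived variable -> (operation, names of the input variables it depends on).
# Leaf variables (x, y, z) are supplied at evaluation time.
GRAPH = {
    "sum": (operator.add, ("x", "y")),
    "prod": (operator.mul, ("sum", "z")),
}


def evaluate(graph, inputs, target):
    """Evaluate `target` by recursively evaluating the variables it depends on."""
    values = dict(inputs)

    def visit(name):
        if name not in values:
            op, args = graph[name]
            values[name] = op(*(visit(arg) for arg in args))
        return values[name]

    return visit(target)


result = evaluate(GRAPH, {"x": 2, "y": 3, "z": 4}, "prod")  # (2 + 3) * 4
```

Because every intermediate variable has a name, two parties evaluating the same graph on the same inputs can compare results variable by variable, which is what makes the representation convenient for verification.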

1.1 Use computational graphs to define any verifiable computation (e.g., 3D and AI), representing different computation layers via subgraphs. This covers diverse computation types and expresses their hierarchical levels. The design currently has two layers, with the top-level graphs deployed on-chain for easy verification.

1.2 Load and run LLM models and 3D scenes in a fully decentralized manner. When a user accesses an LLM for inference or enters a 3D scene for rendering, HyperAGI launches another trusted node to run the same hypergraph (LLM or 3D scene).

1.3 If a verification node detects an inconsistency between a result and the result from a trusted node, it performs a binary search over the off-chain subgraph computation to locate the divergent subgraph operator. That operator, which is pre-deployed in a smart contract, is then re-executed with its input parameters to verify correctness.
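The dispute step in 1.3 can be sketched as follows. This is an illustration under stated assumptions, not HyperAGI's protocol: the computation is simplified to a linear chain of operators, each party publishes a running SHA-256 commitment per step (so that once two traces diverge they stay different, which is what makes binary search valid), and the helper names are hypothetical.

```python
import hashlib


def run_chain(ops, x):
    """Run operators in order; return per-step outputs and running hash commitments."""
    outputs, commits, h = [], [], b""
    for op in ops:
        x = op(x)
        h = hashlib.sha256(h + repr(x).encode()).digest()
        outputs.append(x)
        commits.append(h)
    return outputs, commits


def first_divergence(commits_a, commits_b):
    """Binary search for the first step whose commitments differ; None if none do."""
    if commits_a[-1] == commits_b[-1]:
        return None
    lo, hi = 0, len(commits_a) - 1  # invariant: the first difference lies in [lo, hi]
    while lo < hi:
        mid = (lo + hi) // 2
        if commits_a[mid] == commits_b[mid]:
            lo = mid + 1
        else:
            hi = mid
    return lo


def recheck(ops, x0, outs_trusted, outs_worker, i):
    """Re-execute only operator i on the last agreed input and name the correct party."""
    agreed_input = x0 if i == 0 else outs_trusted[i - 1]
    expected = ops[i](agreed_input)
    if outs_trusted[i] == expected:
        return "trusted"
    if outs_worker[i] == expected:
        return "worker"
    return "neither"


ops = [lambda v: v + 1, lambda v: v * 2, lambda v: v - 3, lambda v: v * v]
faulty_ops = ops[:2] + [lambda v: v - 4] + ops[3:]  # worker errs at step 2

outs_t, commits_t = run_chain(ops, 5)
outs_w, commits_w = run_chain(faulty_ops, 5)
step = first_divergence(commits_t, commits_w)
verdict = recheck(ops, 5, outs_t, outs_w, step)
```

Only one operator is re-executed to settle the dispute, and locating it takes a logarithmic number of commitment comparisons rather than a full replay of the computation.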

2. How to avoid excessive computational overhead?

Another challenge in verifiable AI computation is minimizing additional overhead. Byzantine consensus requires 2/3 agreement, implying all nodes perform identical calculations—an unacceptable waste for AI inference. HyperAGI requires only 1–m extra nodes to complete verification.

2.1 No LLM performs inference alone—HyperAGI always initiates at least one trusted node for “companion computing.”

Since LLM inference involves sequential layer-by-layer computation using prior outputs, multiple users can concurrently access the same LLM.

Thus, at most m trusted nodes are needed, where m is the number of LLMs, and at minimum a single trusted node performs the companion computing.

2.2 3D scene rendering follows a similar pattern: each user entering a scene activates a hypergraph, prompting HyperAGI to load a trusted node for the corresponding computation. If n users enter different 3D scenes, up to n companion-computing trusted nodes are launched.

In summary, the number of nodes involved in extra computation is a random number between 1 and n+m, following a Gaussian distribution. Here, n is the number of users in 3D scenes, m is the number of LLMs. This effectively avoids resource waste while ensuring verification efficiency.
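A small worked example of these bounds, written as a sketch: the function name is hypothetical, and it only computes the stated range (1 to n+m), not the distribution within it.

```python
def companion_node_bounds(n_scene_users: int, m_llms: int) -> tuple[int, int]:
    """Return (minimum, maximum) extra trusted nodes for n 3D-scene users and m LLMs."""
    if n_scene_users < 0 or m_llms < 0 or n_scene_users + m_llms == 0:
        raise ValueError("need at least one active 3D-scene user or LLM")
    return 1, n_scene_users + m_llms


# Three users in different 3D scenes and two LLMs in use.
low, high = companion_node_bounds(n_scene_users=3, m_llms=2)
```

With n = 3 and m = 2, between 1 and 5 extra nodes perform companion computing, regardless of how many nodes the network has in total.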

How can AI integrate with Web3 to form hybrid AI-and-finance applications?

AI developers can deploy agents as smart contracts containing top-level hypergraph on-chain data. Users or other agents can invoke methods of these agent contracts and pay corresponding tokens. The providing agent then completes the required computation and submits verifiable results. This enables decentralized business interactions between users/agents and AI agents.
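The pay-for-verified-results flow can be sketched as a toy simulation. Everything here is an assumption for illustration: the `AgentContract` class, the escrow-then-settle design, and the `verified` flag (which stands in for the outcome of the PoUW verification) are not taken from HyperAGI's contracts.

```python
from dataclasses import dataclass, field


@dataclass
class AgentContract:
    """Toy model of an agent exposed as a contract (hypothetical)."""
    owner: str
    price: int
    balances: dict = field(default_factory=dict)  # account -> token balance
    escrow: dict = field(default_factory=dict)    # call id -> (caller, amount)
    next_id: int = 0

    def invoke(self, caller: str) -> int:
        """Lock the caller's payment in escrow and return a call id."""
        if self.balances.get(caller, 0) < self.price:
            raise ValueError("insufficient balance")
        self.balances[caller] -= self.price
        call_id = self.next_id
        self.next_id += 1
        self.escrow[call_id] = (caller, self.price)
        return call_id

    def settle(self, call_id: int, verified: bool) -> None:
        """Pay the agent owner if the result verified; otherwise refund the caller."""
        caller, amount = self.escrow.pop(call_id)
        payee = self.owner if verified else caller
        self.balances[payee] = self.balances.get(payee, 0) + amount


contract = AgentContract(owner="agent", price=5, balances={"alice": 12})
good_call = contract.invoke("alice")
contract.settle(good_call, verified=True)   # agent is paid
bad_call = contract.invoke("alice")
contract.settle(bad_call, verified=False)   # alice is refunded
```

Tying settlement to verification is the design choice that matters: the payment moves to the agent only when the submitted result checks out, and returns to the caller otherwise.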

Agents need not fear non-payment after service delivery, nor do payers need to worry about paying without receiving correct results. The actual capability and value of the agent’s service are reflected in the secondary market price and market cap of the agent’s assets (including ERC-20 tokens and ERC-721 or ERC-1155 NFTs).

Of course, HyperAGI’s ambitions extend beyond hybrid AI-and-finance apps—to realizing UBAI, building a future where technology equitably serves everyone, breaking cycles of exploitation, and creating a truly decentralized and fair digital society.
