a16z Co-Founder: In the Agent Era, What Truly Matters Has Changed

The most outstanding programmers of the future may not need to write code, but they must possess exceptional logical reasoning and systems architecture thinking—because code will become a commoditized, low-cost resource powered by AI.

Author: a16z

Translated by: FuturePulse

Source: This is Marc Andreessen’s latest interview on the Latent Space podcast. A renowned U.S. internet entrepreneur and one of the pivotal figures in the early development of the internet, Andreessen later co-founded a16z and became a representative of top-tier Silicon Valley investors. The entire conversation revolves around the history and latest trends in AI—well worth reading.

I. This wave of AI did not appear out of nowhere—it marks the first time, after an 80-year technological marathon, that AI has fully “started working”

- This wave of AI did not emerge suddenly—it is the culmination of an 80-year technological marathon.

- Marc Andreessen dubs the current moment an “80-year overnight success”: what appears to the public as a sudden explosion is, in fact, decades of accumulated technical groundwork finally converging and being unleashed en masse.

- He traces this technical lineage back to early neural network research and stresses that the industry has now broadly accepted “neural networks are the right architecture.”

- In his telling, the key milestones are not isolated events but a stacked sequence: AlexNet → Transformer → ChatGPT → reasoning models → agents and recursive self-improvement.

- He especially emphasizes that this time isn’t just about stronger text generation—it’s about four functional capabilities emerging simultaneously: LLMs, reasoning, coding, and agents / recursive self-improvement.

- His conviction that “this time is different” stems not from more compelling narratives, but because these capabilities are already performing real-world tasks.

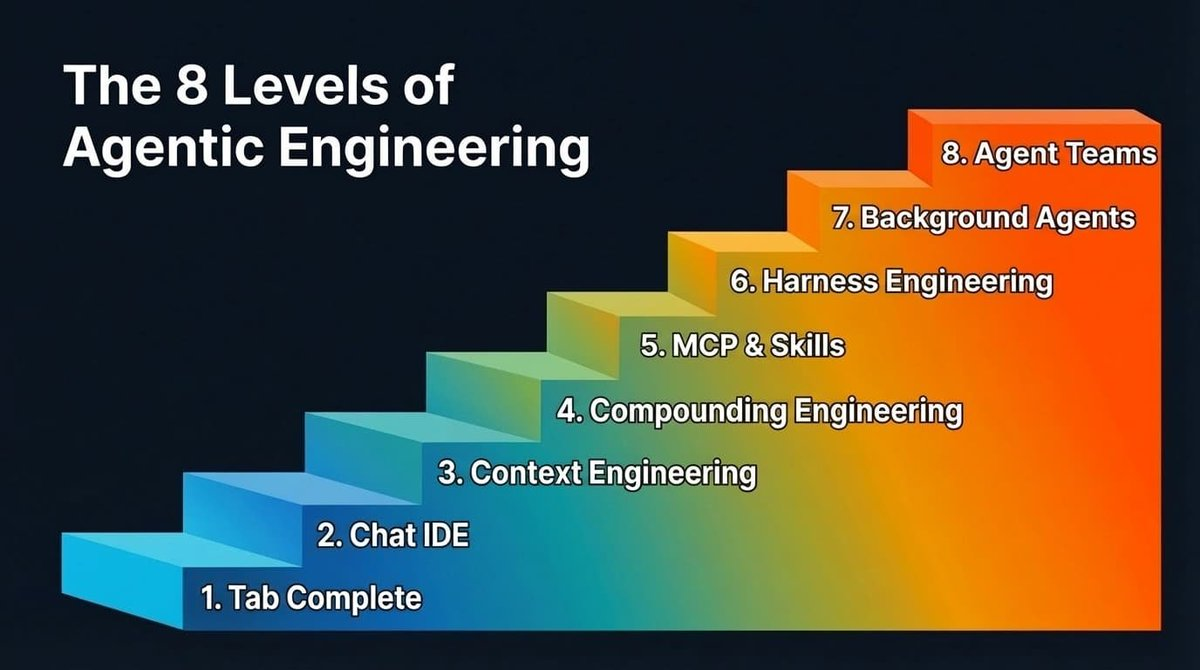

II. Agent architectures like Pi and OpenClaw represent a deeper software architecture shift than chatbots ever did

- He describes agents concretely: essentially “LLM + shell + file system + markdown + cron/loop.” Within this structure, the LLM serves as the core for reasoning and generation; the shell provides the execution environment; the file system persists state; markdown renders state human-readable; and cron/loop enables periodic wake-ups and task progression.

- He underscores the significance of this stack: while the model itself is new, every other component is mature, well-understood, and reusable—already entrenched in the software world.

- An agent’s state is stored in files, enabling migration across models and runtimes; underlying models can be swapped while memory and state persist.

- He repeatedly highlights introspection: agents know their own files, can read their own state, and even rewrite their own files and functions—progressing toward “extend yourself.”

- To him, the true breakthrough isn’t just that “models answer questions,” but that agents can tap into the full latent capability of an entire computer by leveraging existing Unix toolchains.
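The "LLM + shell + file system + markdown + cron/loop" stack described above can be illustrated with a very small sketch. This is not how Pi or OpenClaw is implemented. It is a minimal illustration under stated assumptions: `scripted_model` is a hypothetical stand-in for a real model call, `state.md` is an assumed file name, and the names `tick` and `run_loop` are invented for this example. A real agent would also need sandboxing before running model-chosen shell commands.

```python
import subprocess
import tempfile
from pathlib import Path
from typing import Callable

# Hypothetical model interface: takes the markdown state, returns one shell
# command to run next, or an empty string when there is nothing left to do.
Model = Callable[[str], str]


def run_shell(command: str, cwd: Path) -> str:
    """Shell: the execution environment for whatever the model decides to run."""
    result = subprocess.run(
        command, shell=True, cwd=cwd, capture_output=True, text=True, timeout=30
    )
    return (result.stdout + result.stderr).strip()


def tick(state_file: Path, model: Model) -> bool:
    """One wake-up: read state from disk, ask the model, act, persist the result."""
    # File system + markdown: the state lives in a human-readable file.
    state = state_file.read_text() if state_file.exists() else "# Agent state\n"
    command = model(state).strip()
    if not command:
        return False  # the model has nothing more to do
    output = run_shell(command, state_file.parent)
    state += f"\n## Step\n- command: `{command}`\n- output: {output}\n"
    state_file.write_text(state)
    return True


def run_loop(state_file: Path, model: Model, max_ticks: int = 5) -> int:
    """Cron/loop: keep waking the agent until it is done or the budget runs out."""
    ticks = 0
    while ticks < max_ticks and tick(state_file, model):
        ticks += 1
    return ticks


def scripted_model(state: str) -> str:
    """Stub model (assumption): issues one command, then stops once it is logged."""
    return "" if "hello from the shell" in state else "echo hello from the shell"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as workdir:
        state_path = Path(workdir) / "state.md"
        run_loop(state_path, scripted_model)
        print(state_path.read_text())
```

Because all memory sits in the markdown file rather than inside the model, a different `Model` callable can be passed to `run_loop` against the same file and pick up where the previous one left off, which is the portability point made above.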

III. The era of browsers, traditional GUIs, and “human-clicked software” will gradually give way to agent-first interaction

- Marc Andreessen explicitly states: “You may no longer need a user interface.”

- He further notes that the primary users of future software may not be humans—but “other bots.”

- This implies many interfaces designed today for human clicking, browsing, and form-filling will recede into the background as execution layers called by agents.

- In this world, humans act more as goal-setters: stating what they want, then delegating execution—service invocation, software operation, workflow completion—to agents.

- He links this shift to a broader vision of software’s future: high-quality software will become increasingly “abundant,” no longer a scarce commodity painstakingly handcrafted by a few engineers.

- He also predicts that programming languages will decline in importance: models will write code in any language and translate between them, and humans will eventually care more about getting an explanation of *why* AI organizes code a certain way than about clinging to any single language.

- He even points to a more radical direction: conceptually, AI may not only output source code, but directly generate lower-level binaries or model weights.

IV. This AI investment cycle resembles the 2000 dot-com bubble—but its underlying supply-demand structure differs

- Recalling 2000, he stresses the crash was driven less by “the internet not working” and more by overbuilt telecom and bandwidth infrastructure—fiber and data centers were laid far ahead of demand, followed by a long digestion period.

- He acknowledges similar “overbuilding” concerns today—but notes current investors are cash-rich giants like Microsoft, Amazon, and Google—not highly leveraged, fragile players.

- He specifically points out that investments in GPU capacity today typically convert rapidly into revenue, a stark contrast to the vast idle capacity of 2000.

- He further argues we’re currently using a “sandbagged” version of the technology: constrained by insufficient GPU, memory, and data center supply, the full potential of models remains unrealized.

- In his view, the real bottlenecks over the next few years won’t be GPUs alone—but CPU, memory, networking, and interlocking constraints across the entire chip ecosystem.

- He juxtaposes AI scaling laws with Moore’s Law, seeing both not merely as descriptive patterns but as engines continually driving capital, engineering, and industrial coordination forward.

- He cites a counterintuitive yet critical phenomenon: as software optimization accelerates, some older-generation chips may actually gain economic value over time—even relative to when they were newly purchased.

V. Open source, edge inference, and local execution are not niche—they’re integral to the AI competitive landscape

- Marc Andreessen explicitly affirms open source’s vital importance—not just because it’s free, but because it “teaches the whole world how it’s built.”

- He describes open releases like DeepSeek as a “gift to the world,” since code + paper rapidly disseminate knowledge and lift the entire industry’s baseline.

- In his framing, open source isn’t just a technical choice—it may also serve geopolitical and market strategy: nations and firms adopt varying openness policies based on commercial constraints and influence goals.

- He equally stresses the importance of edge inference: centralized inference costs may remain too high for years, making sustained cloud-based inference prohibitively expensive for many consumer applications.

- He notes a recurring pattern: models once deemed “impossible to run on a PC” often become locally executable on standard machines within months.

- Beyond cost, drivers for local execution include trust, privacy, latency, and use cases: wearables, door locks, and portable devices all favor low-latency, on-device inference.

- His judgment is direct: virtually every device with a chip could eventually host an AI model.

VI. AI’s true challenges lie not only in model capability—but in security, identity, payments, organizational structures, and institutional resistance

- On security, his assessment is sharp: nearly all potential security bugs will become easier to discover, potentially triggering a near-term “computer security catastrophe.”

- Yet he also believes programming intelligences will scale vulnerability patching; future “software protection” may simply involve bots scanning and fixing code autonomously.

- On identity, he deems “proof of bot” unworkable because bots keep growing more capable; the viable path is “proof of human,” combining biometrics, cryptographic verification, and selective disclosure.

- He also raises an often-overlooked issue: if agents operate meaningfully in the real world, they’ll ultimately require money and payment capability, and perhaps bank accounts, cards, or stablecoin-style infrastructure.

- At the organizational level, borrowing the framework of managerial capitalism, he suggests AI may reinforce founder-led companies, as bots excel at reporting, coordination, paperwork, and large-scale “managerial work.”

- But he doesn’t expect society to embrace AI smoothly: citing occupational licensing, labor unions, dockworker strikes, government agencies, K–12 education, and healthcare, he illustrates how deeply embedded institutional “brakes” slow adoption.

- His final judgment: both AI utopians and doomers overlook a key point. Something becoming technically possible does not mean 8 billion people will instantly change how they live and work.
