Illustrated: How DeepSeek R1 Was Trained

TechFlow Selected TechFlow Selected

Illustrated: How DeepSeek R1 Was Trained

Based on the technical report released by DeepSeek, interpret the training process of DeepSeek-R1.

Author: Jiang Xinling,Powering AI

Image source: Generated by Wujie AI

How Did DeepSeek Train Its R1 Reasoning Model?

This article primarily interprets the training process of DeepSeek-R1 based on the technical report released by DeepSeek, focusing on four key strategies for building and enhancing reasoning models.

The original text comes from researcher Sebastian Raschka, published at:

https://magazine.sebastianraschka.com/p/understanding-reasoning-llms

This article summarizes the core training aspects of the R1 reasoning model discussed therein.

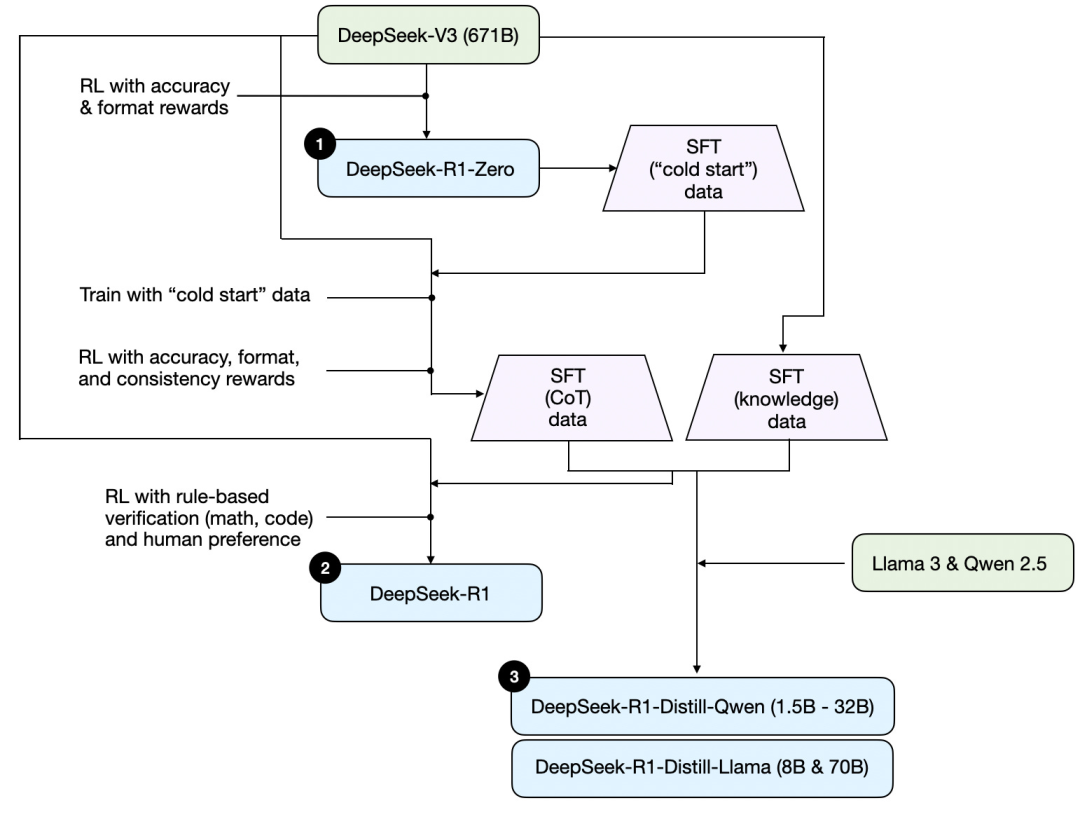

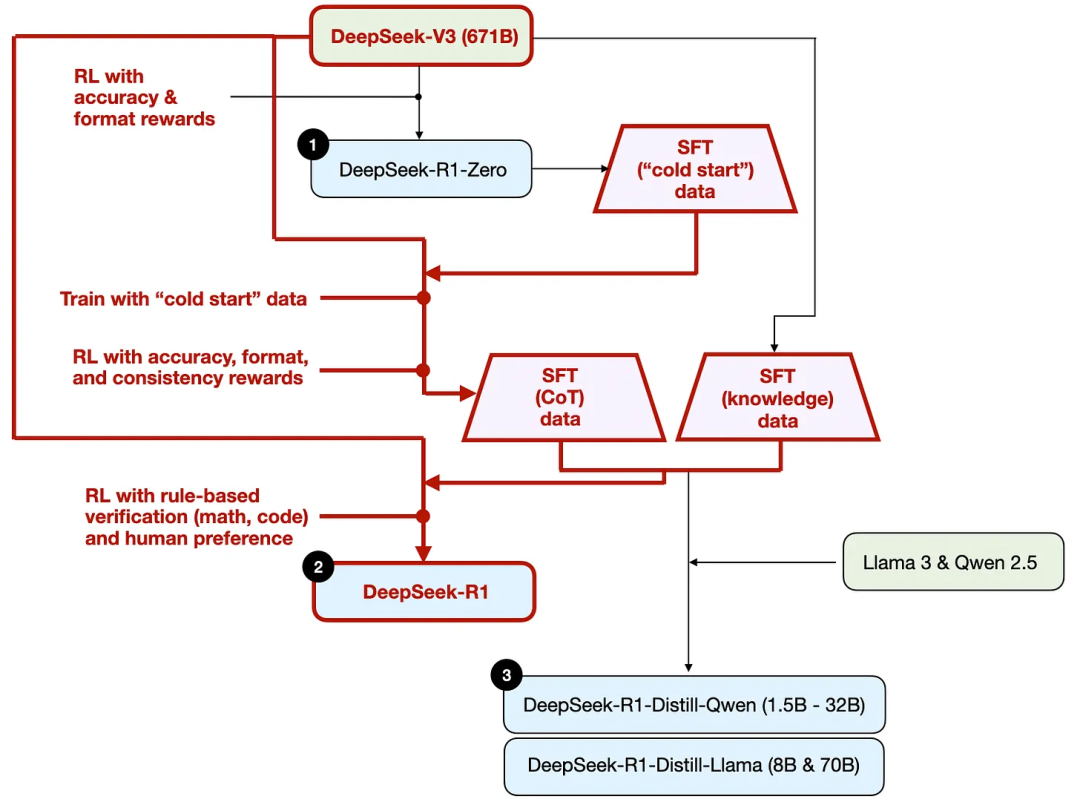

First, based on the technical report released by DeepSeek, below is a diagram of R1's training pipeline.

Let’s break down the process illustrated above:

(1) DeepSeek - R1 - Zero: This model is based on the DeepSeek-V3 base model released in December last year. It was trained using reinforcement learning (RL) with two reward mechanisms. This approach is known as "cold start" training because it excludes the supervised fine-tuning (SFT) step, which is typically part of human feedback reinforcement learning (RLHF).

(2) DeepSeek - R1: This is DeepSeek’s flagship reasoning model, built upon DeepSeek - R1 - Zero. The team optimized it through an additional supervised fine-tuning phase and further reinforcement learning training, improving upon the "cold-started" R1-Zero model.

(3) DeepSeek - R1 - Distill: The DeepSeek team used SFT data generated in earlier steps to fine-tune Qwen and Llama models to enhance their reasoning capabilities. Although not traditional distillation in the classical sense, this process involves training smaller models (Llama 8B and 70B, Qwen 1.5B–30B) using outputs from the larger 671B DeepSeek-R1 model.

The following introduces four main methods for constructing and improving reasoning models.

1. Inference-time scaling

One way to improve an LLM’s reasoning ability (or any capability in general) is inference-time scaling—increasing computational resources during inference to improve output quality.

Roughly speaking, just as humans tend to give better answers when given more time to think about complex problems, we can use techniques that encourage LLMs to "think" more deeply before generating answers.

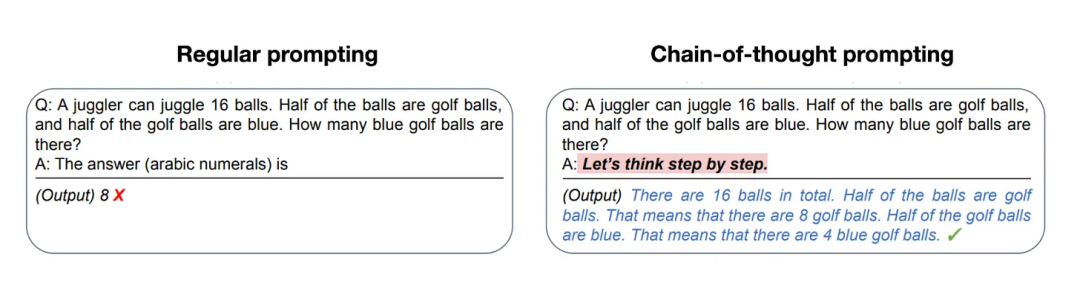

A simple method for achieving inference-time scaling is clever prompt engineering. A classic example is Chain-of-Thought (CoT) prompting, where phrases like “think step by step” are included in the input prompt. This prompts the model to generate intermediate reasoning steps instead of jumping directly to the final answer, often leading to more accurate results on more complex questions. (Note: This strategy makes little sense for simpler knowledge-based questions such as “What is the capital of France,” which also serves as a practical heuristic for determining whether a reasoning model should be applied to a given query.)

The above Chain-of-Thought (CoT) method can be considered a form of inference-time scaling, as it increases reasoning cost by generating more output tokens.

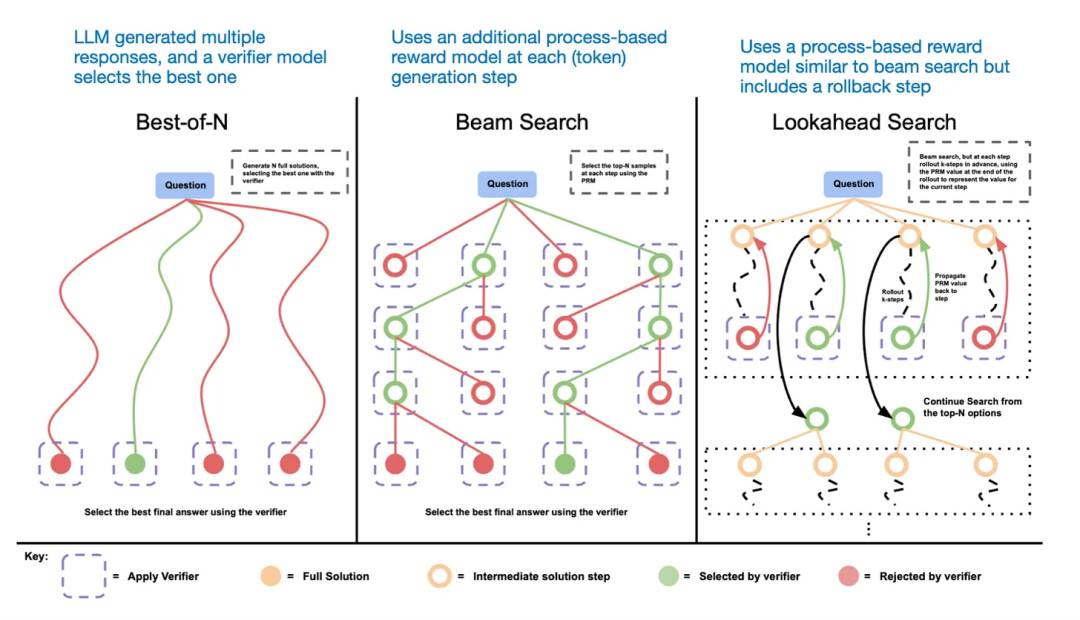

Another approach to inference-time scaling involves voting and search strategies. A simple example is majority voting, where the LLM generates multiple answers and the correct one is selected via majority vote. Similarly, beam search or other search algorithms can be used to generate better responses.

We recommend the paper: Scaling LLM Test-Time Compute Optimally can be More Effective than Scaling Model Parameters.

Different search-based methods rely on process-based reward models to select the best answer.

The DeepSeek R1 technical report states that its model does not employ inference-time scaling techniques. However, such techniques are typically implemented at the application layer above the LLM, so it’s possible DeepSeek uses them within its applications.

I speculate that OpenAI’s o1 and o3 models utilize inference-time scaling, which would explain their relatively higher usage costs compared to models like GPT-4o. Besides inference-time scaling, o1 and o3 were likely trained through a reinforcement learning process similar to that of DeepSeek R1.

2. Pure Reinforcement Learning (Pure RL)

A particularly noteworthy point in the DeepSeek R1 paper is their finding that reasoning can emerge as a behavior purely from reinforcement learning. Let’s explore what this means.

As mentioned earlier, DeepSeek developed three versions of the R1 model. The first is DeepSeek-R1-Zero, built upon the DeepSeek-V3 base model. Unlike typical RL workflows, which usually include a supervised fine-tuning (SFT) stage prior to RL, DeepSeek-R1-Zero was trained entirely through reinforcement learning, without an initial SFT phase, as shown in the figure below.

Nonetheless, this reinforcement learning process resembles the standard human feedback reinforcement learning (RLHF) method commonly used for preference tuning of LLMs. However, the key difference with DeepSeek-R1-Zero, as noted above, is that they skipped the SFT stage used for instruction alignment. This is why they refer to it as "pure" reinforcement learning (Pure RL).

In terms of rewards, instead of using a human-preference-trained reward model, they employed two types of rewards: accuracy reward and format reward.

-

Accuracy reward: Uses LeetCode compilers to verify programming answers and deterministic systems to evaluate mathematical responses.

-

Format reward: Relies on an LLM judge to ensure responses follow the expected format, such as placing reasoning steps within specific tags.

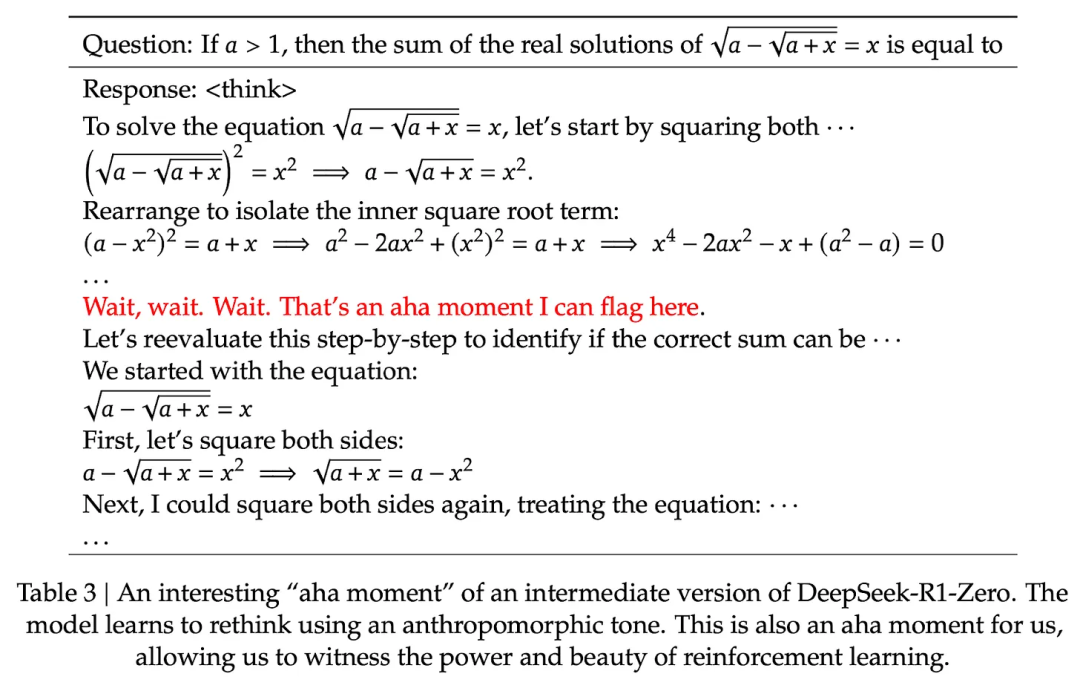

Surprisingly, this method alone was sufficient for the LLM to develop basic reasoning skills. Researchers observed an "aha moment" where the model began generating reasoning traces in its responses, despite not being explicitly trained for this, as shown in the image below from the R1 technical report.

While R1-Zero is not a top-tier reasoning model, it clearly demonstrated reasoning ability by producing intermediate "thinking" steps, as shown above. This confirms the feasibility of developing reasoning models using pure reinforcement learning, and DeepSeek is the first team to demonstrate (or at least publish results on) this approach.

3. Supervised Fine-Tuning + Reinforcement Learning (SFT + RL)

Next, let’s examine the development of DeepSeek’s flagship reasoning model, DeepSeek-R1—a textbook example of building a reasoning model. Built upon DeepSeek-R1-Zero, this model incorporates additional supervised fine-tuning (SFT) and reinforcement learning (RL) to enhance its reasoning performance.

Note that adding an SFT phase before reinforcement learning is common in standard RLHF workflows. OpenAI’s o1 was likely developed using a similar method.

As shown above, the DeepSeek team used DeepSeek-R1-Zero to generate what they call "cold-start" supervised fine-tuning (SFT) data. The term "cold start" indicates that this data was generated by DeepSeek-R1-Zero, a model that itself was not trained on any SFT data.

Using this cold-start SFT data, DeepSeek first trained the model via instruction tuning, followed by another reinforcement learning (RL) phase. This RL phase reused the accuracy and format rewards from the DeepSeek-R1-Zero RL process. However, they introduced a consistency reward to prevent language mixing—where the model switches between languages within a single response.

After the RL phase came another round of SFT data collection. In this stage, 600K Chain-of-Thought (CoT) SFT examples were generated using the latest model checkpoint, while an additional 200K knowledge-based SFT examples were created using the DeepSeek-V3 base model.

These 600K + 200K SFT samples were then used to perform instruction fine-tuning on the DeepSeek-V3 base model, followed by a final round of RL. In this stage, rule-based methods were again used to determine accuracy rewards for math and coding problems, while human preference labels were used for other question types. Overall, this process closely resembles conventional RLHF, except that the SFT data contains (more) CoT examples, and the RL includes verifiable rewards alongside human-preference-based rewards.

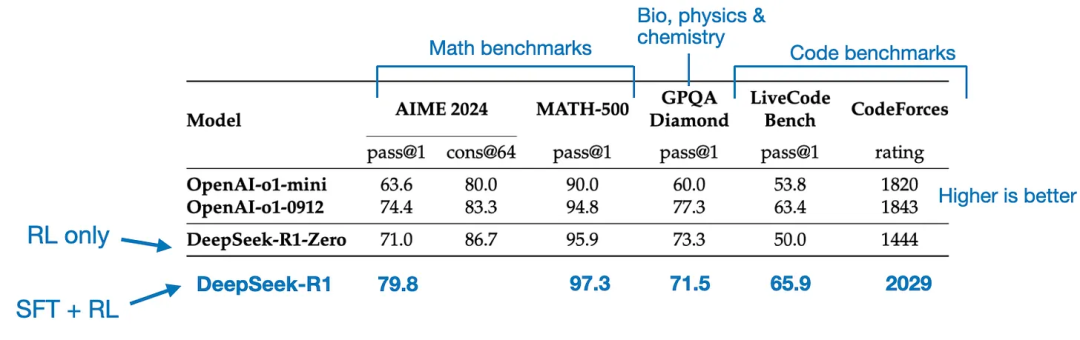

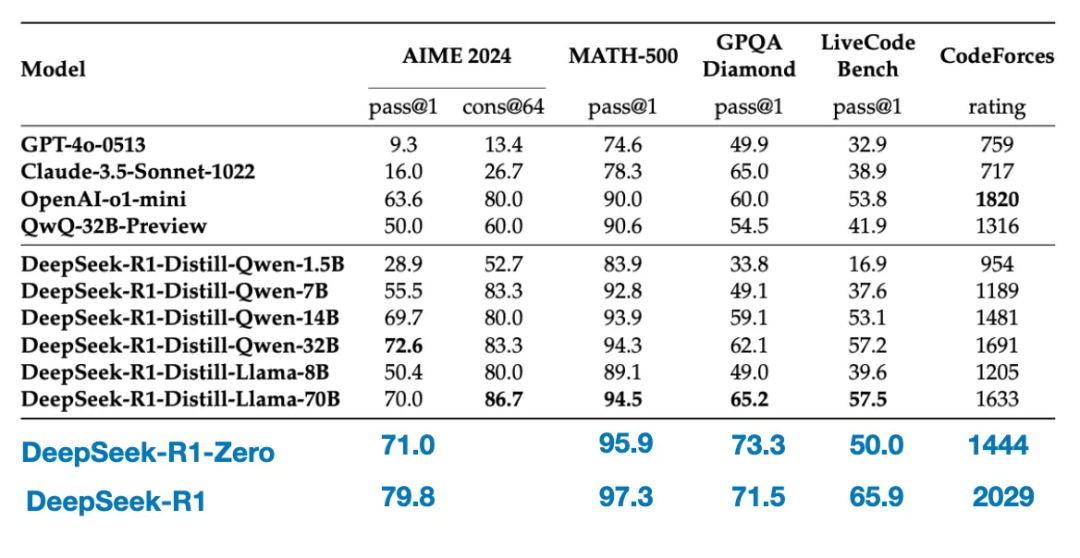

The final model, DeepSeek-R1, shows significant performance improvements over DeepSeek-R1-Zero due to the additional SFT and RL stages, as shown in the table below.

4. Pure Supervised Fine-Tuning (SFT) and Distillation

So far, we have covered three key methods for building and improving reasoning models:

1/ Inference-time scaling—an approach that enhances reasoning ability without retraining or otherwise modifying the underlying model.

2/ Pure RL, as used in DeepSeek-R1-Zero, demonstrating that reasoning can emerge as learned behavior without supervised fine-tuning.

3/ Supervised Fine-Tuning (SFT) + Reinforcement Learning (RL), resulting in DeepSeek’s reasoning model, DeepSeek-R1.

Now we come to model "distillation." DeepSeek also released smaller models trained through what they call a distillation process. In the context of LLMs, distillation does not necessarily follow the classical knowledge distillation methods used in deep learning. Traditionally, in knowledge distillation, a smaller "student" model is trained on both the logits of a larger "teacher" model and the target dataset.

However, here, distillation refers to instruction fine-tuning smaller LLMs on a supervised fine-tuning (SFT) dataset generated by larger LLMs—such as Llama 8B and 70B, and Qwen 2.5B (0.5B–32B). Specifically, these larger LLMs are intermediate checkpoints of DeepSeek-V3 and DeepSeek-R1. In fact, the SFT data used for this distillation process is identical to the dataset described in the previous section for training DeepSeek-R1.

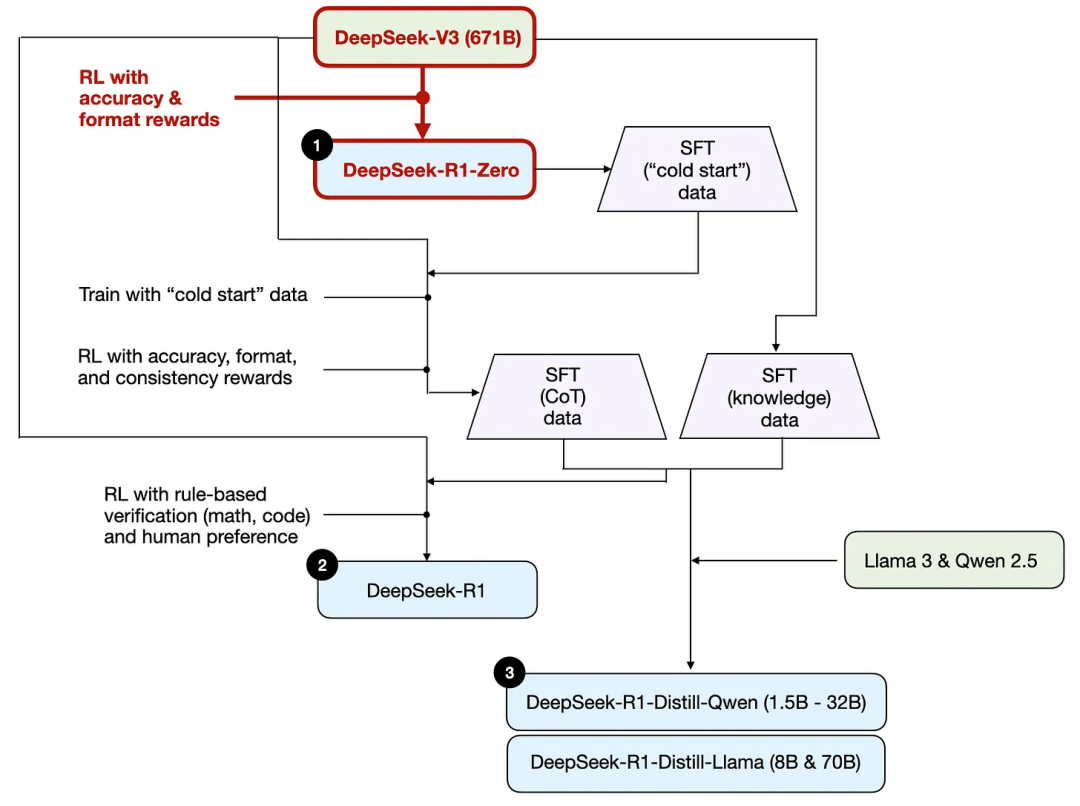

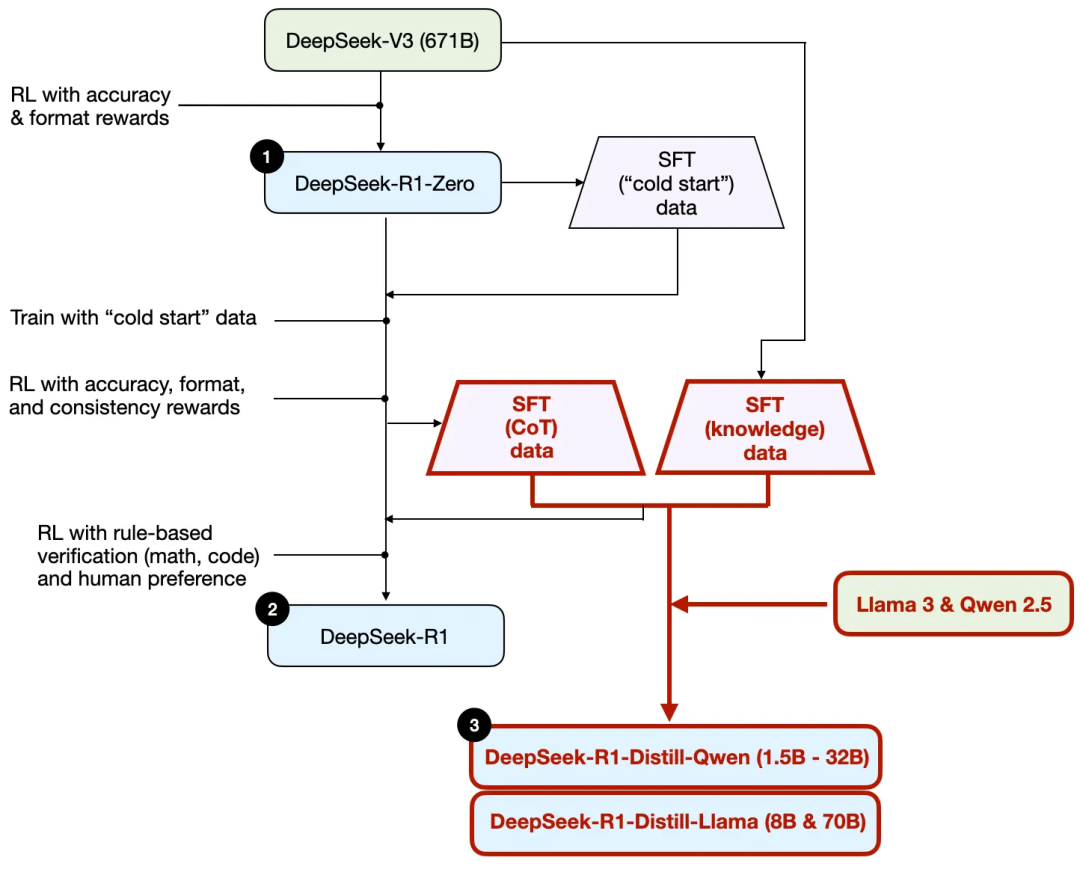

To clarify this process, I highlight the distillation part in the diagram below.

Why did they develop these distilled models? Two key reasons:

1/ Smaller models are more efficient. They have lower operating costs and can run on low-end hardware, making them especially appealing to many researchers and enthusiasts.

2/ As a case study in pure supervised fine-tuning (SFT). These distilled models serve as an interesting benchmark, showing how far pure SFT can take a model in the absence of reinforcement learning.

The table below compares the performance of these distilled models against other popular models, as well as DeepSeek-R1-Zero and DeepSeek-R1.

As we can see, although the distilled models are orders of magnitude smaller than DeepSeek-R1, they are significantly stronger than DeepSeek-R1-Zero, though still weaker than DeepSeek-R1. Interestingly, they also perform quite well compared to o1-mini (which may itself be a distilled version of o1).

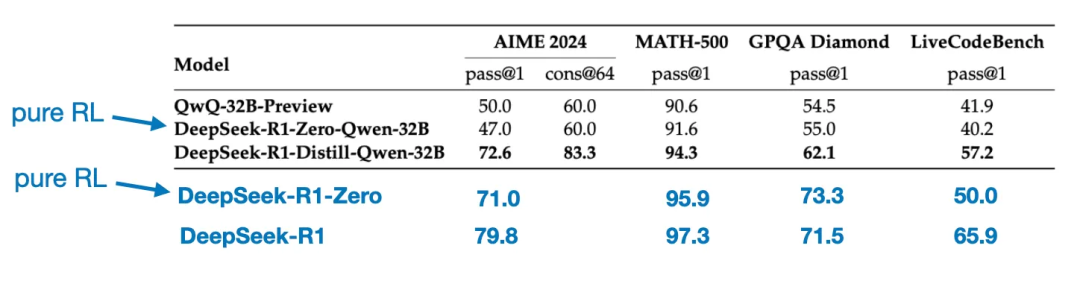

Another interesting comparison is worth noting. The DeepSeek team tested whether the emergent reasoning behavior seen in DeepSeek-R1-Zero could also appear in smaller models. To investigate this, they directly applied the same pure RL method from DeepSeek-R1-Zero to Qwen-32B.

The table below summarizes the results of this experiment, where QwQ-32B-Preview is a reference reasoning model based on Qwen 2.5 32B developed by the Qwen team. This comparison offers additional insight into whether pure RL alone can induce reasoning abilities in models much smaller than DeepSeek-R1-Zero.

Interestingly, the results show that for smaller models, distillation is far more effective than pure reinforcement learning. This aligns with the view that reinforcement learning alone may be insufficient to induce strong reasoning capabilities in models of this scale, and that supervised fine-tuning on high-quality reasoning data may be a more effective strategy when working with smaller models.

Conclusion

We have explored four different strategies for building and enhancing reasoning models:

-

Inference-time scaling: Requires no additional training but increases inference cost. As user numbers or query volume grow, deployment at scale becomes more expensive. Nevertheless, it remains a simple and effective method for boosting the performance of already strong models. I strongly suspect o1 employs inference-time scaling, which explains why o1 has a higher per-token generation cost compared to DeepSeek-R1.

-

Pure Reinforcement Learning (Pure RL): Interesting from a research perspective, as it provides insights into reasoning as an emergent behavior. However, in practical model development, combining RL with supervised fine-tuning (RL + SFT) is superior, as it produces stronger reasoning models. I also strongly suspect o1 was trained using RL + SFT. More precisely, I believe o1 starts with a weaker, smaller base model than DeepSeek-R1, but compensates through RL + SFT and inference-time scaling.

-

As discussed above, RL + SFT is the key method for building high-performance reasoning models. DeepSeek-R1 provides an excellent blueprint for achieving this.

-

Distillation: An attractive approach, especially for creating smaller, more efficient models. However, its limitation lies in the fact that distillation cannot drive innovation or produce next-generation reasoning models. For instance, distillation always depends on existing stronger models to generate SFT data.

Looking ahead, an interesting direction I anticipate is combining RL + SFT (Method 3) with inference-time scaling (Method 1). This is likely what OpenAI’s o1 is doing—except that o1 might be based on a weaker base model than DeepSeek-R1, which would explain why DeepSeek-R1 performs exceptionally well during inference and at relatively lower cost. TechFlow

Join TechFlow official community to stay tuned

Telegram:https://t.me/TechFlowDaily

X (Twitter):https://x.com/TechFlowPost

X (Twitter) EN:https://x.com/BlockFlow_News