OpenAI Leaker Joins Musk

TechFlow Selected


Besides Pavel Izmailov, Musk has recently recruited many other top researchers.

By: Bai Jiao, Heng Yu, reporting from Aofei Temple

Source: Quantum Bit

The researcher OpenAI just fired for leaking has swiftly joined Musk.

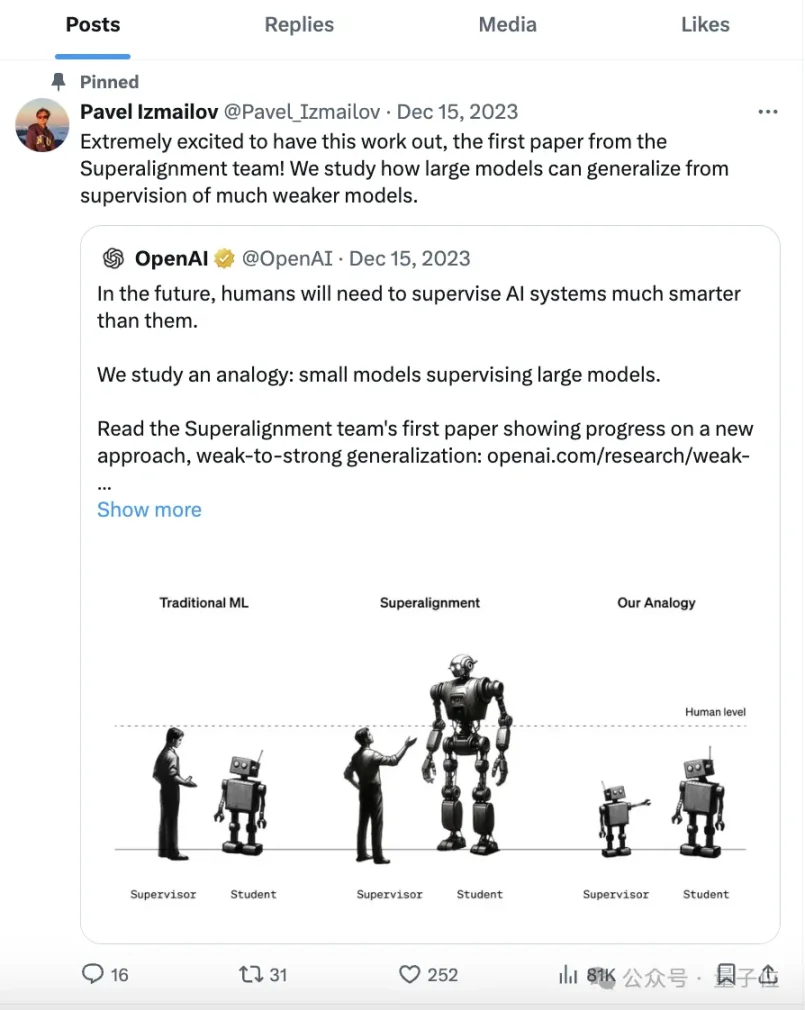

The individual in question, Pavel Izmailov (hereinafter “P”), is an ally of Ilya Sutskever and previously worked on Ilya’s Superalignment team at OpenAI.

Half a month ago, P was suspected of leaking confidential information related to Q* and was subsequently dismissed. While the specifics of what he leaked remain unclear, the incident caused quite a stir at the time.

And just like that, his Twitter bio now proudly declares:

Researcher @xai

Talk about fast hiring. Besides P, Musk has recently recruited many other top researchers.

Netizens are buzzing. Many praise Musk, calling the hire a brilliant move.

Others are disgusted, criticizing the decision to hire someone who leaked secrets and likening it to picking up trash.

Meanwhile, xAI’s recent output, including the release of Grok-1.5V, has made a strong impression and drawn widespread comment:

xAI is emerging as a major player, standing shoulder-to-shoulder with OpenAI and Anthropic.

Hiring a Leaker Fired by OpenAI

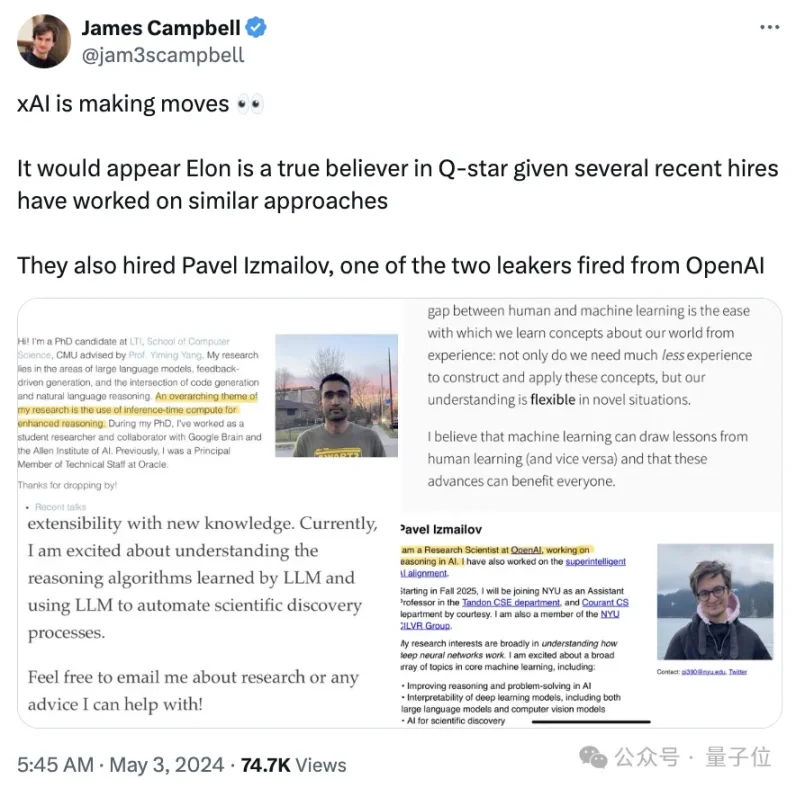

Here’s how it unfolded: a blogger who closely follows the latest developments in large models made a big discovery:

Musk’s xAI has hired quite a few new people lately???

Several of them have research backgrounds connected to OpenAI’s mysterious Q* algorithm. Perhaps Musk is the true believer in Q* after all.

So who exactly has recently joined xAI?

The most prominent is the aforementioned P.

He is also a member of NYU’s CILVR group and has stated he will join NYU Tandon CSE and Courant CS as an assistant professor in fall 2025.

Just half a month ago, his profile still read “working on reasoning at OpenAI.”

Now, things have changed completely.

Yet P still keeps the Superalignment team’s first paper, which he co-authored, pinned on his Twitter.

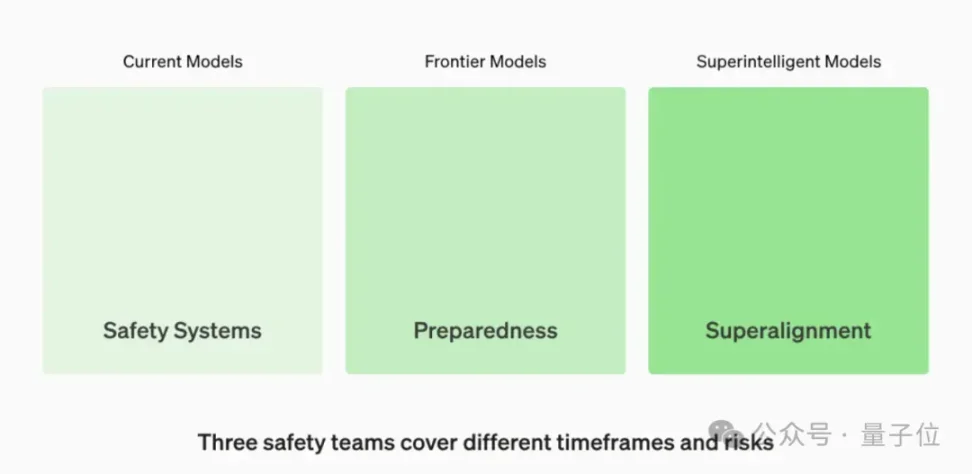

The Superalignment team was formed last July as one of OpenAI’s three safety teams, established to address potential AI safety issues across different time scales.

Responsible for the safety of superintelligent systems in the more distant future, the team was led by Ilya Sutskever and Jan Leike.

Although OpenAI appears to prioritize safety, internal disagreements over AI safety development are no secret.

These divisions were widely believed to be the primary cause behind the boardroom drama at OpenAI in November last year.

It’s rumored that Ilya Sutskever became a leader of the “coup” because something he saw deeply unsettled him.

Many members of Ilya’s Superalignment team stood with him and stayed silent during the later social-media campaign in support of Altman.

After the turmoil settled, however, Ilya seemed to vanish from OpenAI, sparking rampant speculation, yet he has not reappeared publicly or offered any clarification online.

Thus, the current status of the Superalignment team remains unknown.

Because P was a member of the Superalignment team and Ilya’s subordinate, his sudden departure from OpenAI half a month ago has led netizens to speculate that Altman was settling scores.

Talent Who Joined Musk Overnight

Though the full scope of Q* remains unknown, various clues suggest it aims to integrate large models with reinforcement learning and search algorithms to enhance AI reasoning capabilities.

Besides the highly controversial P, several other new xAI hires work in research areas related to this direction.

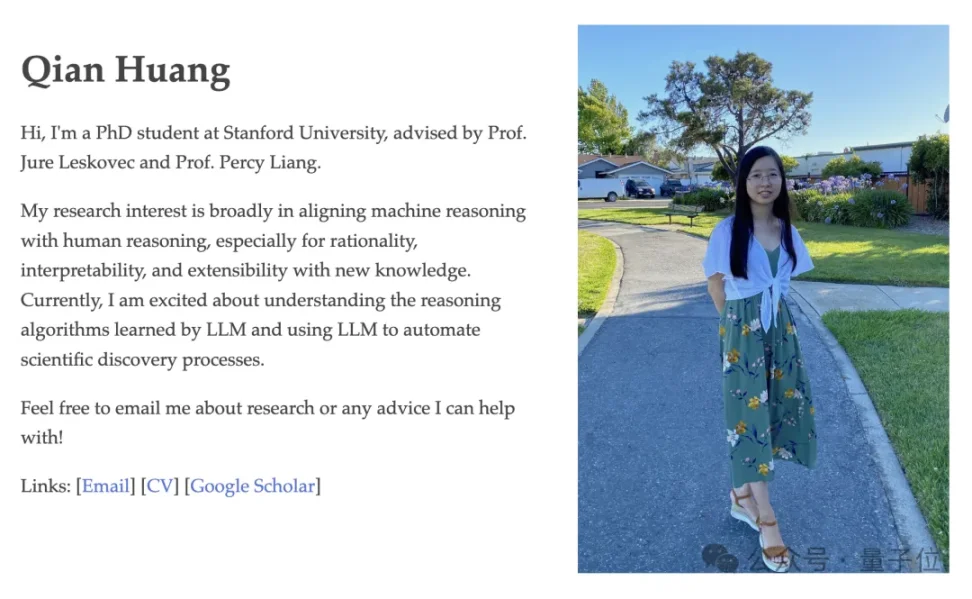

Qian Huang, currently a PhD student at Stanford University.

She began working at Google DeepMind last summer and now lists @xai in her Twitter bio, though her specific role is not yet known.

Her GitHub profile shows her research focuses on integrating machine reasoning with human reasoning, particularly around plausibility, interpretability, and scalability of new knowledge.

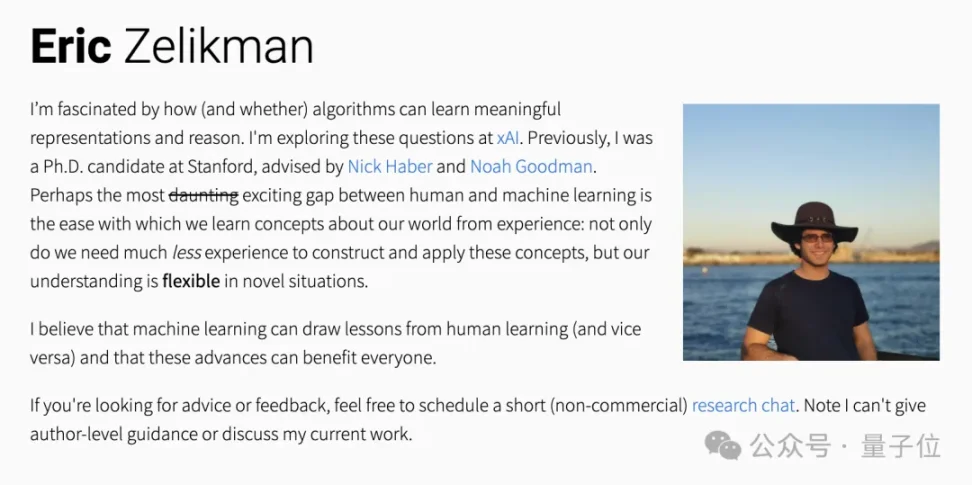

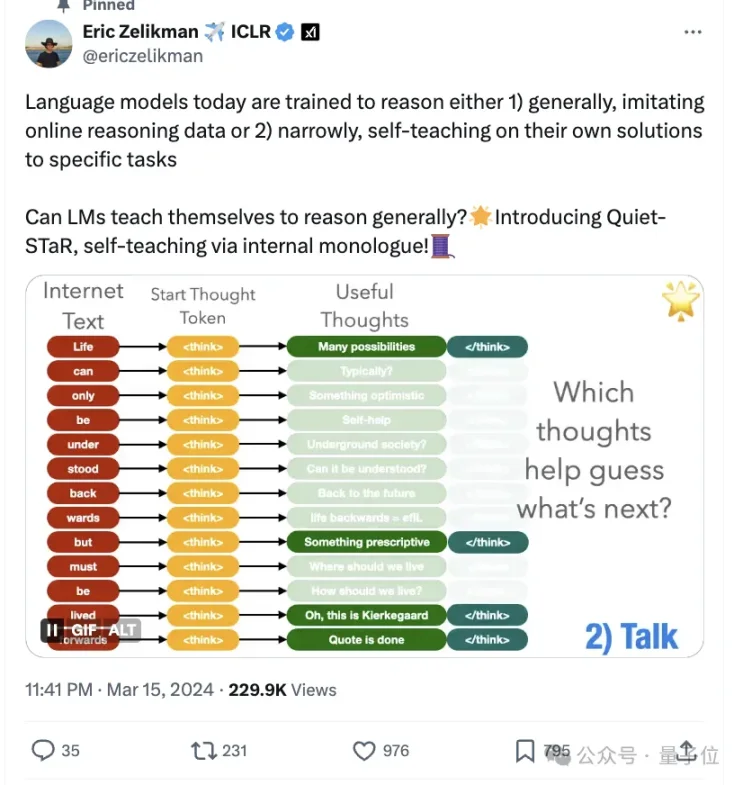

Eric Zelikman, a PhD candidate at Stanford, whose Twitter bio says “study why @xai.”

Previously, he spent time at Google Research and Microsoft Research.

On his personal website, he states: “I’m fascinated by how (and whether) algorithms can learn meaningful representations and reasoning—I’m studying this at xAI.”

In March this year, his team introduced Quiet-STaR (another “Q*,” at least in name), which trains language models to generate internal rationales, in effect letting them think before they speak.

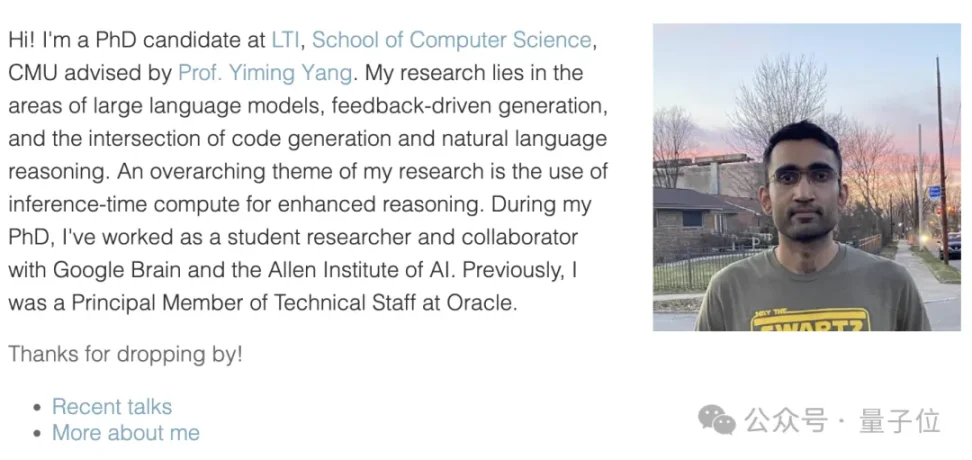

Aman Madaan, a PhD candidate at Carnegie Mellon University’s Language Technologies Institute.

His research spans large language models, feedback-driven generation, and the intersection of code generation and natural language reasoning. His core focus is enhancing reasoning through inference-time compute.

During his PhD, Aman served as a student researcher and collaborator at Google Brain and the Allen Institute for AI; earlier, he was a principal technologist at Oracle.

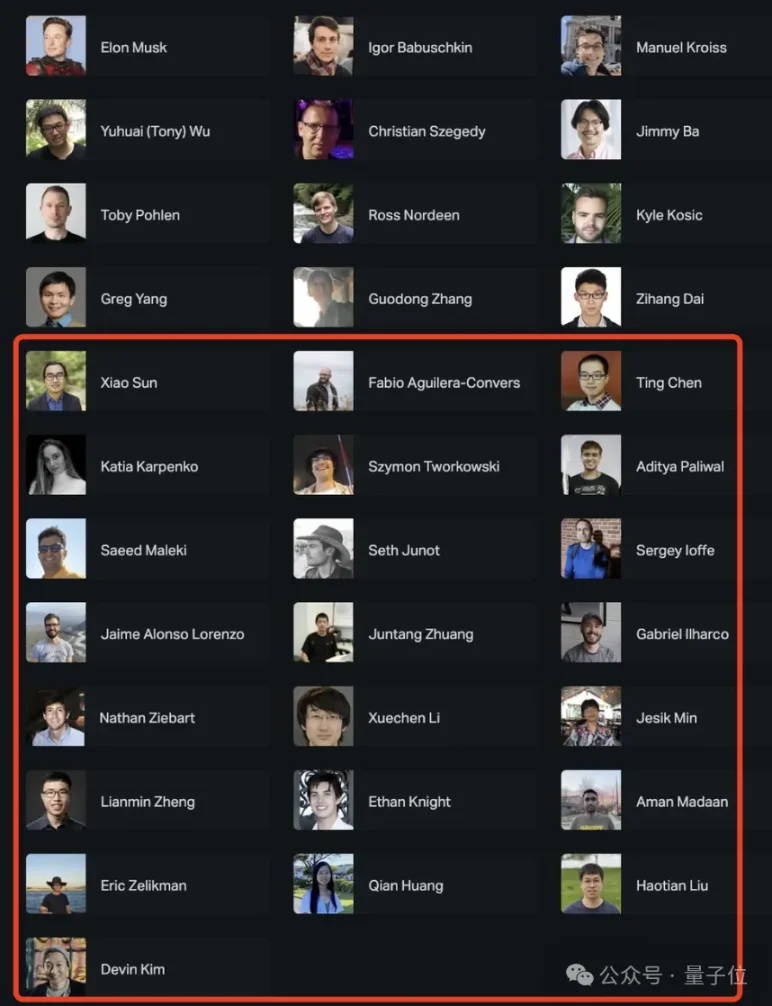

With the addition of Pavel Izmailov and others, Musk’s technical talent pool has now expanded to 34 members (excluding Musk himself), nearly triple the original 12-person founding team.

Among the new hires, there are seven individuals of Chinese descent. Combined with five from the founding team, the total now stands at 12.

-

Xiao Sun, formerly at Meta and IBM, PhD from Yale, alumnus of Peking University.

-

Ting Chen, formerly at Google DeepMind and Google Brain, undergraduate from BUPT.

-

Juntang Zhuang, formerly at OpenAI, core contributor to DALL-E 3 and GPT-4, undergrad from Tsinghua, PhD from Yale.

-

Xuechen Li, recently graduated with a PhD from Stanford, core contributor to the Alpaca series of large models.

-

Lianmin Zheng, PhD in Computer Science from UC Berkeley, creator of Vicuna and Chatbot Arena.

-

Qian Huang, PhD student at Stanford, graduated from Nankai High School in Tianjin.

-

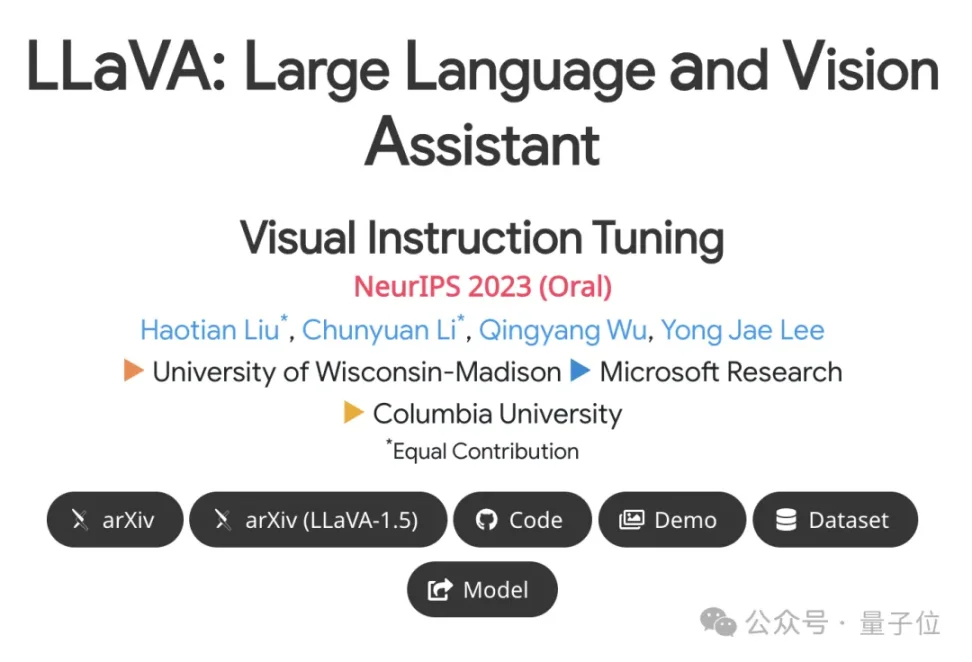

Haotian Liu, University of Wisconsin-Madison, undergrad from Zhejiang University, first author of LLaVA.

In terms of institutional background, most come from Google, Stanford, Meta, OpenAI, and Microsoft, and all have extensive experience training large models such as the GPT series, the Alpaca/Vicuna series, and Google’s and Meta’s own large models.

In terms of timing, most joined in February and March this year: 13 people in total, or roughly one new member every five days. By contrast, only five joined between August and October last year.
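As a quick check of the “one every five days” pace, the sketch below divides the length of the hiring window by the number of hires. It assumes the window runs from February 1 to March 31, 2024 inclusive (2024 is a leap year); the helper name `days_per_hire` is purely illustrative.

```python
from datetime import date


def days_per_hire(start: date, end: date, hires: int) -> float:
    """Average number of days per hire over an inclusive date window."""
    window_days = (end - start).days + 1  # +1 so both endpoint days are counted
    return window_days / hires


# Assumption: "February and March this year" means Feb 1 to Mar 31, 2024 (60 days).
pace = days_per_hire(date(2024, 2, 1), date(2024, 3, 31), hires=13)
print(f"{pace:.1f} days per hire")  # → 4.6 days per hire
```

Sixty days spread over 13 hires works out to about 4.6 days each, consistent with the rounded “every five days.”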

Lined up against Grok’s release timeline, these hires reveal a clear, phased recruitment strategy at xAI.

For example, on March 29, Musk unexpectedly launched Grok-1.5, raising the context length from 8,192 tokens to 128K, on par with GPT-4 Turbo.

One month earlier, in February, former OpenAI employee Juntang Zhuang joined xAI. At OpenAI, he developed the algorithm enabling GPT-4 Turbo’s 128k context capability.

Similarly, on April 15, the multimodal Grok-1.5V was released, capable of processing visual inputs like charts, screenshots, and photos in addition to text.

Back in March, Haotian Liu—the first author of LLaVA—had just joined. LLaVA is an end-to-end trained multimodal model demonstrating capabilities similar to GPT-4V. The updated LLaVA-1.5 achieved state-of-the-art results on 11 benchmarks.

So now, let’s boldly speculate: what upgrades might Grok receive with this new wave of talent?

One netizen’s response: Whatever. Where’s Grok-1.5? (It still hasn’t been open-sourced.)

But regardless, based on Musk’s stated hiring criteria, one netizen hit the nail on the head:

Everyone says Musk’s AI company is full of geniuses, but truth is, Musk doesn’t care about your talent. He said it himself: as long as you can work 80 hours a week without burning out, you’re in.

80 hours?!

Quantum Bit did the math: that’s about 11.4 hours a day (80 ÷ 7), every single day, with no rest…
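The daily figure depends on how many days the 80 hours are spread across. A minimal sketch, assuming the hours are distributed evenly; the function name `hours_per_day` is illustrative only.

```python
def hours_per_day(weekly_hours: float, working_days: int) -> float:
    """Average daily hours if weekly_hours is spread evenly over working_days."""
    return weekly_hours / working_days


WEEKLY_HOURS = 80  # the threshold quoted in the article

# Compare a seven-day week with six- and five-day schedules.
for days in (7, 6, 5):
    print(days, "days:", round(hours_per_day(WEEKLY_HOURS, days), 1), "h/day")
```

Even with no days off, the average is about 11.4 hours a day; a six-day week pushes it to about 13.3 hours, and a conventional five-day week to 16.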

Forget IQ: we couldn’t physically handle this kind of workload.

