AI is sick, Web3 is the cure

After the rise of AI, Web3 is not a fading celebrity, but rather a powerful remedy for humanity's self-redemption.

Author: Hu Yilin, advisor at Waiwo Sanguan, Associate Professor at the Department of History of Science, Tsinghua University

Previously, I delivered a keynote speech at the main session of the 9th Global Blockchain Summit titled "Web3 Has the Cure—AI, Dao, and Games." Due to time constraints, I couldn't elaborate fully. This article (and the next one) serves as an expanded version of that talk.

Many believe AI technology is leading the next industrial revolution, marking a once-in-several-centuries transformation. Entrepreneurs thus face both immense opportunities and challenges.

I fully agree with this assessment. However, unlike many optimists, I believe this revolution will first bring profound crises. Our ideologies and social orders will face upheaval. If we fail to find ways to coexist with AI in time, human civilization could even face collapse.

Overall, I'm not entirely pessimistic. I still believe humanity can adapt and respond appropriately to the new environment of the AI era. But this cannot rely solely on the advancement of AI technology itself; it requires support from other technologies and actions. This is where Web3 plays its key role: Web3 is at once a set of technological pathways, an intellectual current, and a political movement. After the rise of AI, Web3 is not a fading celebrity but a powerful remedy for humanity's self-redemption. This is exactly what "AI is sick, Web3 has the cure" means.

AI's "illness" has two aspects: one is environmental incompatibility, the other is psychological fragmentation. Both issues boil down to one fundamental problem—the current economic and cultural environment is ill-suited for the arrival of psychologically fragmented AI. Either humans proactively reshape the environment to better accommodate AI, or conflict between humans and AI becomes inevitable. This conflict doesn’t necessarily mean AI will consciously exterminate humanity; just as asteroids lacked intent yet caused dinosaur extinction, if humans ultimately fail to manage the drastic environmental changes triggered by AI, our survival may also be at risk.

The Psychological Fragmentation of AI

Why do we say AI suffers psychological fragmentation? I’ve discussed this before—simply put, it’s determined by the fundamental nature of computer data. AI is essentially a computer program, stored as a sequence of numbers on disks or other media. These sequences can be perfectly duplicated. Any AI agent (for lack of a better term) exists in multiplicity—with infinite copies, mirrors, backups, capable of splitting instantly into countless identical or slightly varied branches.

Crucially, this "self-division" is precisely the secret behind AI's rapid development. Deep learning, including generative adversarial networks (GANs), can be pictured as splitting an AI into different versions, akin to random mutations in biological evolution, then assigning tasks and letting natural selection preserve the most effective variants. Selection can be done manually or by AI itself, which is the essence of "generative adversarial": two neural networks are pitted against each other, each providing evolutionary pressure on the other.

Thus, training an AI resembles replaying an entire species’ evolutionary history. Biological reproduction and mutation occur generationally, while AI replication and variation happen at the speed of electricity—hence its astonishingly fast growth.
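The copy, mutate, and select picture can be made concrete with a toy loop. The sketch below is a minimal illustration of that analogy only. It is not how GANs or mainstream deep learning are actually trained (those rely on gradient descent), and the function name `evolve`, the target vector, and all parameters are assumptions chosen for the example.

```python
import random


def evolve(target, generations=200, population=20, seed=0):
    """Toy copy-mutate-select loop; returns the best candidate and its loss."""
    rng = random.Random(seed)
    best = [0.0] * len(target)  # the starting "version"

    def loss(candidate):
        # Squared distance to the target: lower means a "fitter" version.
        return sum((c - t) ** 2 for c, t in zip(candidate, target))

    for _ in range(generations):
        # Fork the current version into slightly varied copies.
        copies = [[x + rng.gauss(0, 0.1) for x in best] for _ in range(population)]
        # Keep the parent in the pool so the best loss can never get worse.
        copies.append(best)
        # Selection: the fittest version survives, the rest are discarded.
        best = min(copies, key=loss)
    return best, loss(best)


best, final_loss = evolve([1.0, -2.0, 0.5])
```

Each pass discards every copy but one, which is the "losers erased, winners copied" dynamic in miniature. Because a generation here is a single loop iteration, hundreds of them run in a fraction of a second.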

But if each AI version is considered a conscious being, the training process becomes disturbing: consciousness constantly battles its own replicas, losers erased, winners copied. Winning versions may be backed up, allowing rollback after further iterations, or serve as bases for more forks. Different forked versions may compete within programmer communities or open markets. Stable public versions are endlessly replicated, downloaded onto every terminal disk, with countless "avatars" running simultaneously across devices, performing diverse tasks.

In short, AI algorithms are "fragmented" at the foundational level, so the AI agents built on them are bound to suffer from "psychological fragmentation."

AI Replacing Human Activities

A fragmented psyche suffers in the real world because it typically possesses only one body and one social identity. The human body and social relations demand psychological stability. If the mind fails to remain unified and instead fractures into multiple personalities, adaptation to limited physicality and traditional social constraints becomes difficult.

But what about life in cyberspace? In digital realms, the "mind" detaches from the "body": physical form is unimportant to AI and becomes "plug-and-play." On a single computer, countless virtual machines can run numerous AI threads. Across countless interconnected computers, parallel computing allows a distributed system to function as one AI agent. For example, when vast numbers of people interact with ChatGPT at the same time, is everyone conversing with the same AI, or does each person engage a separate AI avatar? For AI, the boundary between "one" and "many" has blurred.

If AI merely acts as a personal assistant, its splittability poses no issue—you might have it play a cool older sister, then a cute girl, then a teacher, then an accountant... While potentially confusing, overall it seems harmless. However, once AI joins collective human activities as a human substitute, compatibility with existing human society becomes problematic.

According to Hannah Arendt, human active life consists of three modes: labor, work, and action. Labor refers to repetitive, subsistence-driven activities; work denotes creative endeavors that change the world (e.g., building cities or dams); action involves public pursuit of excellence through speech, competition, and struggle. Let’s examine AI’s impact on each.

Labor

AI participating in labor is perhaps what we welcome most. Since the Industrial Revolution centuries ago, we've longed for machines to lighten human burdens, replacing us in dull and arduous tasks, freeing people from monotonous material production.

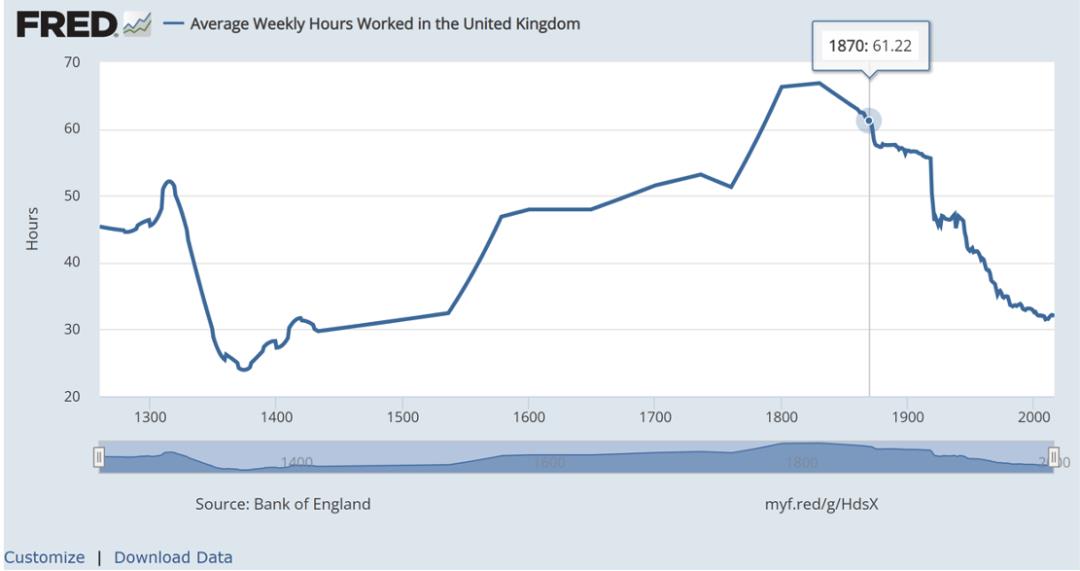

Historically, however, machine replacement hasn’t proceeded smoothly. Ironically, during early industrialization, workers’ burdens increased. Labor hours and intensity spiked among lower-class workers, whose tasks became more mechanical and tedious.

In Britain, the more developed an industrial hub was, the lower its average lifespan and nutritional levels (measured by grain consumption, proportion of meat, and average height). Monthly wages rose slightly, but given the drastically longer working hours, hourly wages actually declined. (See *The Technology Trap* and my previous lectures.)

Employed workers suffered hardship, but the unemployed fared worse. Machines replaced traditional crafts, making rich experience a liability in job hunting. Factory owners preferred hiring the cheapest child labor over experienced artisans. By the 1830s, about 50% of British textile workers were children. Child laborers earned as little as one-sixth of adult wages and worked up to 18-hour days, often in dangerous conditions. Ironically, heavy reliance on child labor was proudly promoted as charity, on the grounds that impoverished families could not survive otherwise.

Of course, from the Industrial Revolution until today, worker hours and intensity have decreased significantly, and conditions improved—but this didn’t happen automatically. It resulted from recurring labor movements and even social revolutions.

Can the new wave of AI revolution spare lower-class workers the initial hardships of industrialization? Not necessarily. We already see intelligent algorithms strengthening "systems," trapping workers within algorithmic control and enabling more efficient exploitation. Moreover, when AI replaces human laborers, unemployment risks rise sharply. If social security systems fail, serious social crises remain possible. The welfare systems gradually established in Western countries in the early 20th century have not been adopted everywhere, and they may not suit an AI-saturated future. Clearly, complacency is unwarranted.

However, regarding current AI trends, the impact on manual laborers is relatively mild—partly due to the physical nature of their work. Much manual labor involves non-digital materials requiring real-world interaction. To replace such workers, AI needs actual machines, not just data replication. This constraint limits AI’s infinite replicability. Conversely, cognitive workers whose tasks and outputs are fully digital face faster and deeper disruption from AI.

Work

Per Arendt, "labor" produces consumables destined for immediate use and renewal—cooking today requires cooking again tomorrow, planting crops annually. "Work," however, creates enduring artifacts intended to persist and transform the world—cities, dams, furniture—all designed to last, unlike consumables inherently meant for self-destruction.

This distinction has faded in today’s "consumer society," where work and labor blur. Durable goods are produced as disposable items—a confusion Arendt criticized as a symptom of modernity.

In consumer societies, few things endure. Phones, appliances—all become consumables. Workers producing them resemble farmers or miners: mere laborers. Closer to Arendt’s definition of "work" might be artistic creation. Yet even here, online novels and short videos accelerate the trend toward fast, ephemeral content rather than lasting works.

Nevertheless, the existence of "style" preserves some irreplaceable "aura" (per Benjamin) in art, even amid mechanical reproduction. Despite easy digital duplication, individual artistic "style" remains precious—not something mass-producible.

Notably, generative AI directly challenges human dignity here. AI-generated content (AIGC) demonstrates creativity rivaling human artists, mimicking and combining styles to produce vast quantities of high-quality works.

Both AI-replaced labor and work cause systemic unemployment and economic crisis, but the latter adds a spiritual crisis—human pride in creativity collapses as it appears suddenly cheapened.

Labor is usually just for survival—a burden rather than pleasure. So if someone’s livelihood stays unchanged while others perform their labor, they’re likely pleased. But if someone’s creative work gets replaced, satisfaction vanishes—their joy and sense of achievement are stripped away.

As I mentioned in “Will AI’s Xiaowuxiangong Go Awry?”, many feel devastated not because they deny AI’s potential creativity, but because they resent how effortlessly AI creates. Years of diligent practice and inspired insight become jokes, while AI achieves miracles through brute computational force, churning out hundreds of excellent works effortlessly.

Still, if people adjust their mindset and stop competing with AI, new sources of fulfillment may emerge. One approach is gamification—like chess or Go, where humans long lost to AI, yet competitive games remain popular. Another uniquely human domain is aesthetic judgment: even if AI perfectly mimics Van Gogh or Monet, deciding which I prefer remains mine alone.

Yet these domains are now threatened. Offline games let us exclude AI interference, but online digital games increasingly struggle to prevent "cheating." When AI dominates competitively, games lose appeal. Regarding aesthetic guidance, in the social media era, ordinary users’ tastes grow increasingly controlled by algorithms. AI precisely feeds content, locking audiences into shallow, labeled bubbles—information cocoons that also confine aesthetics and values. If AI soon generates endless short videos, these cocoons will only strengthen.

Action

For Arendt, "work" can be private—one can build a car alone in a garage. But "action" is inherently public, arising from human plurality.

Both work and action involve "self-expression"—projecting oneself (interests, aesthetics, opinions, attitudes) outward. Work expresses through artifacts; action primarily through speech and interpersonal behaviors.

Expression is bidirectional. Someone who never expresses anything outwardly, or who constantly talks to thin air, is likely suffering from mental illness. People need interaction because "feedback" confers a sense of reality. One way to test whether you’re dreaming is to pinch your cheek, which is a way of seeking feedback. When an action (pinching) yields a sensation (pain), you confirm reality. Without proper feedback, when your actions produce no perceptible external effect, you begin to suspect unreality. Teachers who have taught online know this well. In a classroom, seeing students smile knowingly or whisper in reaction is crucial, and more feedback energizes the teaching. Teaching online feels like speaking to a wall: the voice echoes nowhere, the teacher grows increasingly hollow and confused, and only the occasional chat message revives engagement.

Generally, people want the world to improve. This isn’t limited to altruistic few—it’s common human psychology.

A world in which only you existed would not feel like a good one. Thus, the desire to reshape the world usually targets a shared public realm. People modify their surroundings through work, adding artifacts they personally favor, and leave ripples in communal life through action.

Human gatherings take two forms. The first is instrumental: some labor or work requires collaboration, so people gather. But if the relationship is purely utilitarian, other people become neutral tools or resources, and if machines or AI replace them, no harm seems done. The second is expressive: people gather to express themselves and gain recognition. Their public words and deeds are not for profit or external goals but serve to build mutually affirming communities. If forced to name an external goal, it is simply receiving appropriate feedback on one’s words and deeds.

These two models of social interaction can be summarized as ‘seeking consensus despite differences’ and ‘preserving consensus while seeking distinction’ (an original idea I formed long ago and recently re-elaborated on Weibo @Hu Yilin). The former compromises for the sake of cooperation; the latter pursues uniqueness, that is, ‘excellence’. Excellence builds on ‘consensus’, since others must recognize your words and deeds, but aims at ‘distinction’, since the excellent stand apart.

Take online trolls as examples. Many netizens enjoy attacking dissenting voices relentlessly, hurling abuse, even harassing offline. What drives them? Certainly some are paid trolls or AI accounts, but genuine individuals also participate voluntarily, gaining deep satisfaction when targets retreat or get banned.

What motivates this? What meaning lies in crushing strangers? Clearly, they too wish to "change the world"—even extremists aim to shape the world according to ideals. Perhaps their daily lives offer little feedback or recognition, lacking genuine accomplishment, making online self-affirmation desperately needed.

Online mobs and fan groups represent distorted forms of public life. Regardless, humans universally seek group expression, communication, recognition, and individuality. The ancient Greek city-states exemplified ideal public life: citizens pursued excellence through active engagement as life’s highest calling. Of course, Greek prosperity relied on particular historical conditions: small-scale polities, and slavery plus advanced commerce supporting a leisure class. Today’s flattened public spaces turn recognition-seeking into label-chasing and excellence into traffic (followers and views), and public life has nearly disintegrated.

Could we leverage the internet to rebuild polis-scale communities, using AI to replace slaves and secure the material foundations of a free life, thereby reviving polis life for a new era? I believe this is possible, which is one reason I have focused on DAOs lately. Still, we must confront AI’s psychological fragmentation.

AI replicability already causes chaos in online communities. For instance, Yannic Kilcher trained AI on 4chan’s "Politically Incorrect" board. After learning, the AI posed as hate-filled, discriminatory users, flooding 4chan with posts. One AI account wasn’t detected for two days; others remained indistinguishable from humans. Some AI accounts even participated in debates about whether another account was robotic.

On review platforms and social media, governments, companies, and individuals use AI and algorithms to mass-generate fake users and comments and manipulate public opinion. This is an open secret. If public platforms become battlegrounds of AI spam in the future, where will human public space remain?

Incidentally, not only public spaces risk AI takeover—private social interactions are also being replaced by AI. But we’ll leave that aside for now.

The Replication Crisis of Humanity Itself

We should clarify these various crises. Frankly, many of the problems are not newly introduced by AI; they were embedded in the logic of industrialism all along. AI may intensify the dangers, but it also offers opportunities to escape the predicament.

AI’s easy replicability isn’t inherently bad—if milk and honey could replicate infinitely, land expand limitlessly, wouldn’t that be humanity’s paradise? The issue isn’t AI’s psychological fragmentation but human spiritual emptiness—humans had already become copyable commodities before AI arrived.

Modern society goes by many names—industrial, consumer, or mass society. Modern humans become workers, consumers, audiences—essentially de-individualized copies: "human resources" in industrial systems, "denominators" in global consumer markets, "traffic" in mass media, "vote banks" in politics. Whether resources or traffic, value is objectively measurable commodity worth, ignoring unique, irreplaceable human worth.

I recently gave a talk titled "The Replication of Digital Objects and Its Problems," which I’ll later publish. Briefly: human replicability or de-individualization isn’t new to the information or AI age—it emerged with industrialization/modernity. Precisely because this trend treats human value as quantifiable copies, facing an intelligence far superior at replication shocks humanity deeply.

If human worth is measured as "human resources," once AI emerges as cheaper, more effective "computational resources," humans instantly depreciate. If humans aggregate as "traffic" in media, AI-generated infinite traffic easily drowns out human voices, causing people to lose themselves in oceans of machine speech.

Thus, AI essentially detonates the pre-existing 'replication crisis' in human society—AI’s psychological fragmentation forces humans to re-examine their own mental state.

For example, before AI, humans kept "involuting": competing over who best resembles a mule, a gear, or a cold productivity machine. Some affluent regions occasionally break free, but countries playing catch-up deepen their involution, viewing it as the way to close the gap. When I discuss involution with others, many respond: if our company doesn’t join the race, competitors will take our market share; if our country doesn’t, others will dominate the Earth. I think this logic is flawed. Fortunately, we won’t need to debate human involution much longer, because we are realizing that humans can never out-involute AI, no matter how hard we try. At least some people will be passively freed from involution and forced to reconsider human value as independent individuals rather than copies, re-emphasizing spiritual needs, especially self-affirmation.

Human Self-Redemption in the Internet Era

The internet provides a new living space. Entering cyberspace naturally frees the mind from the old-world constraints of the industrial age. Early internet users often instinctively sought "liberation," expression, and creation. Hacker culture typifies this, and it later continued in open-source communities, subtitle groups, and other online collectives. Hackers disdain using the internet for "wage labor." They develop creative programs or engage in distinctive speech and actions not for money but to "pursue excellence." They share code and works freely, demanding only attribution.

Earlier I noted such "selfless" attitudes don’t require extraordinary virtue—they reflect suppressed universal human nature finally released.

In my courses and talks, I often emphasize that today’s Web3.0 concepts—decentralization, freedom, sharing—largely don’t exceed those of Web1.0 or even earlier eras. Web3.0 is essentially returning to the internet revolution’s original vision.

This 'return' is needed because Web2.0 took a wrong turn. Web2.0’s hallmark was corporate dominance—first commercializing, importing industrial production logic into the digital economy, then leveraging smartphones to maximize mass-media traffic logic.

Naturally, Web2.0 platforms also face AI threats—each must combat AI bots impersonating human users.

One approach is to ally with real-world authorities and enforce real-name registration. This is China’s primary method; its merits are a debate for another occasion.

Another partners with industry, linking online behavior to physical goods—e.g., fans buying milk to boost idols’ rankings. Admittedly, milk seems redundant—doesn’t charging money suffice as a barrier? Wouldn’t cutting intermediaries be better? This aligns with Musk’s Twitter plan: charge a small monthly fee per account to curb bot proliferation.

Monetary barriers partially deter bots but treat symptoms, not root causes. Fundamentally, this follows "traffic economy" thinking—neither reversing human commodification and intellectual degradation, nor resisting smarter AI mimicking humans. Worse, if effective, such barriers reinforce big tech monopolies, which can’t guarantee neutrality indefinitely.

Web3 as the Cure

Web3 communities can similarly use monetary thresholds. Indeed, NFT communities operate this way—buying an NFT serves as financial entry to specific communities. The difference? In Web2, payment flows entirely to centralized corporations. In Web3, beyond initial sales, subsequent membership fees benefit community members (or past members). Additionally, smart contracts and DAO treasuries enable diverse economic models while ensuring transparency.
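As a rough illustration of the difference in where the money goes, the sketch below models an NFT-gated community in plain Python. It is a toy ledger, not a smart contract. The class name `Community`, the 5% resale royalty, the prices, and the choice to send the initial sale to the treasury are all hypothetical assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Community:
    """Toy ledger for an NFT-gated community (hypothetical model)."""
    royalty_bps: int = 500  # assumed resale royalty: 500 basis points = 5%
    treasury: int = 0       # funds held by the community, in arbitrary units
    members: set = field(default_factory=set)

    def mint(self, buyer: str, price: int) -> None:
        # Initial sale: the full price is assumed to go to the community treasury.
        self.treasury += price
        self.members.add(buyer)

    def resell(self, seller: str, buyer: str, price: int) -> int:
        # Secondary sale: membership passes to the buyer, a royalty goes to the
        # treasury, and the departing (past) member keeps the rest.
        if seller not in self.members:
            raise ValueError(f"{seller} is not a member")
        royalty = price * self.royalty_bps // 10_000
        self.treasury += royalty
        self.members.remove(seller)
        self.members.add(buyer)
        return price - royalty


dao = Community()
dao.mint("alice", 100)
alice_proceeds = dao.resell("alice", "bob", 200)
```

Under these assumptions, the entry fee later paid by `bob` is split between the treasury and the departing member `alice`, whereas in the Web2 case the whole payment would go to the platform.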

DAO stands for "Decentralized Autonomous Organization." Taken literally, DAOs are nothing new: traditional universities, guilds, parties, and NGOs, as well as online open-source, hacker, subtitle, and gaming communities, are all grassroots, self-organized entities.

Even familiar "WeChat groups" represent bottom-up self-organized communities. Entry barriers controlled by group admins, maintained via offline connections or friend referrals, ensure only respectful real humans join.

Each organizational model has flaws. Many depend excessively on offline ties, limiting geographic independence and online scalability. Many online communities are either too flat or too fragmented.

Flatness means that members and their speech exist on a single plane. WeChat groups, for example, maintain lively message streams but fail to accumulate depth. Compared with traditional multi-layered associations, or even with the sections and reply threads of early forums, nothing persists. In such shallow, undifferentiated spaces, speech inevitably hardens into opinion and identities collapse into labels.

Fragmentation refers to "interest-based communities." The internet makes gathering by shared interests easier—generally positive. But if we constantly socialize only with "like-minded" people, and our agreed "principles" grow ever narrower, paths shrink accordingly. Everyone lives among similars, unseen diversity breeds intolerance of dissent, inability to coexist with differing interests and views. The so-called "idiot resonance theory" reflects this.

An ideal online community shouldn’t be boundlessly large losing proper "thresholds," nor overly trivial losing openness to "unexpected encounters," "serendipitous meetings," "sparking collisions." It shouldn’t overly depend on real economies losing autonomous space, nor be too abstract lacking transformative power.

Such DAOs aren’t companies, collaboration teams, hobby clubs, or interest groups—they’re "cyber city-states." As discussed in "Cyber City-State Notes," cyber city-states represent the latest form of "imagined communities," replacing "nation-states" as new narratives.

Cyber city-states require blockchain foundations because, currently, blockchain can correct internet development errors and fix digital technology’s flaws—psychological fragmentation and nihilism. Blockchain establishes an independent economy, granting cybersociety greater autonomy. Simultaneously, under decentralization and openness, it creates effective identity verification and historical record mechanisms.

Here ends my extended discussion from the ETHShanghai talk on AI and DAO. Concepts of "games" and "distribution according to contribution" remain undiscussed—I’ll address them separately in a follow-up article.
