Is Crypto X AI No Longer Trending? A Quick Look at Overlooked High-Potential Narratives

The initial Web3-AI hype mainly focused on value propositions detached from reality; now the focus should shift toward building practical and viable solutions.

Author: Crypto, Distilled

Translation: TechFlow

Crypto and AI: Has It Run Its Course?

In 2023, Web3-AI briefly became a hot topic.

But today, it's flooded with imitators and bloated projects lacking real utility.

Here are the pitfalls to avoid and the areas worth focusing on.

Overview

IntoTheBlock CEO @jrdothoughts recently shared insights in an article.

He discussed:

a. Core challenges of Web3-AI

b. Overhyped trends

c. High-potential trends

I've distilled each point for you! Let’s dive in:

Current Market Landscape

The current Web3-AI market is overhyped and overfunded.

Many projects are disconnected from the actual needs of the AI industry.

This disconnect creates confusion, but it also creates opportunities for those with insight.

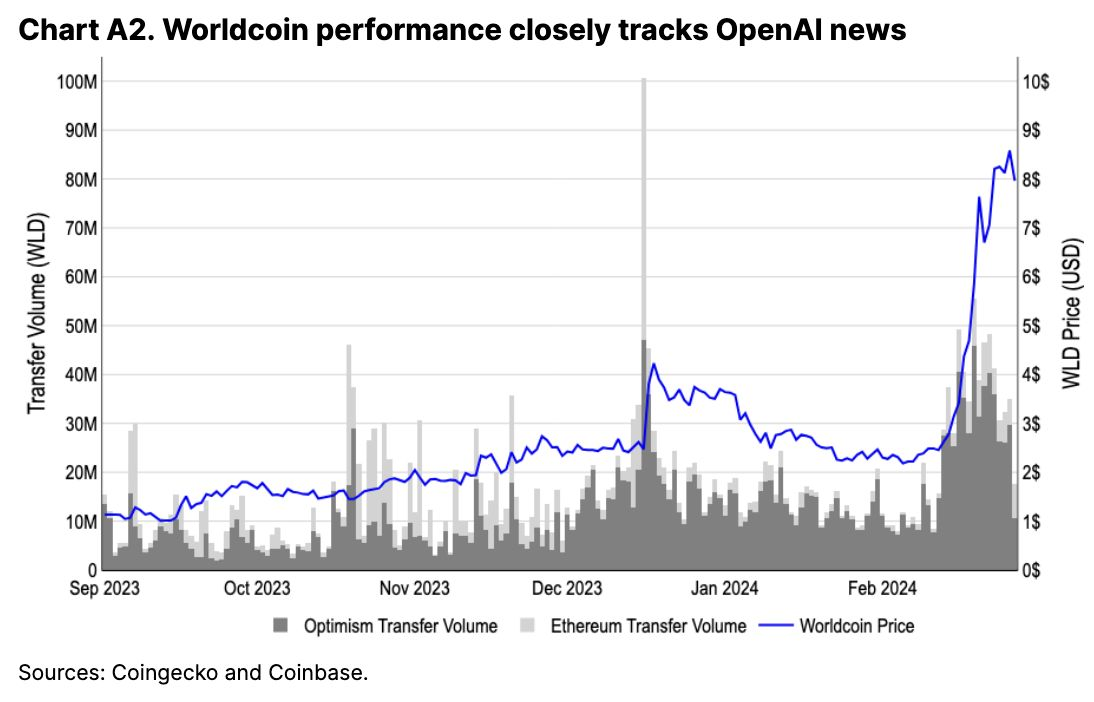

(Thanks to @coinbase)

Core Challenges

The gap between Web2 and Web3 AI is widening due to three main factors:

- Limited AI research talent
- Restricted infrastructure
- Insufficient model, data, and compute resources

Generative AI Fundamentals

Generative AI relies on three pillars: models, data, and compute.

Currently, no major models are optimized for Web3 infrastructure.

Early funding supported Web3 projects that were detached from AI realities.

Overhyped Trends

Despite the hype, not all Web3-AI trends are worth pursuing.

Below are some trends that @jrdothoughts considers most overrated:

a. Decentralized GPU networks

b. ZK-AI models

c. Inference proofs (Thanks to @ModulusLabs)

Decentralized GPU Networks

These networks promise to democratize AI training.

But in reality, training large models on decentralized infrastructure is slow and impractical.

This trend has yet to deliver on its lofty promises.

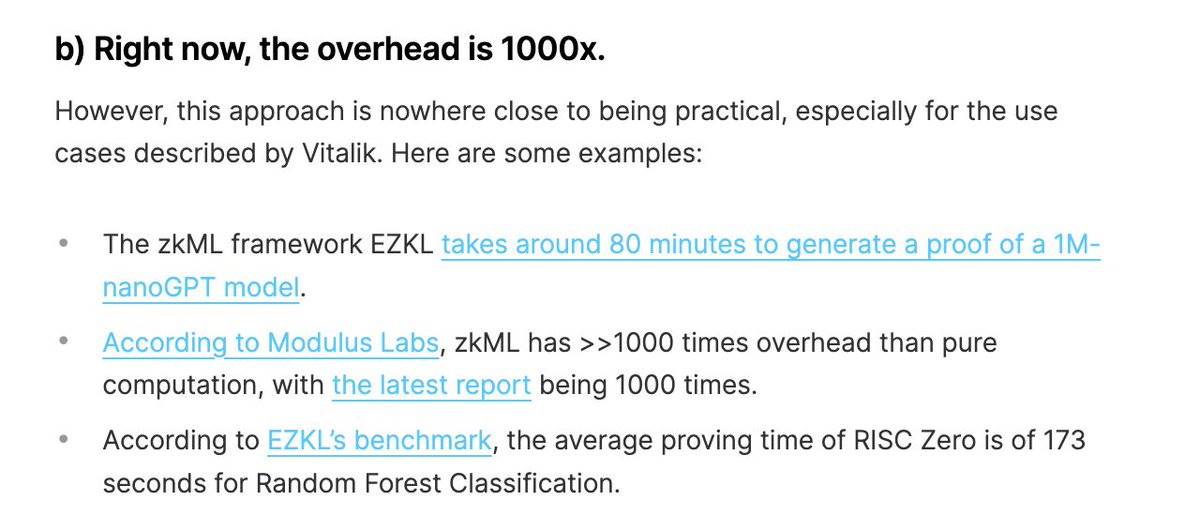

Zero-Knowledge AI Models

ZK-AI models appear attractive for privacy protection.

However, they are computationally expensive and difficult to interpret.

This makes them impractical for large-scale applications.

(Thanks to @oraprotocol)

Information from the image:

Currently, overhead can be as high as 1000x.

Yet, this approach remains far from practical use, especially for the use cases described by Vitalik. Examples include:

- The zkML framework EZKL takes about 80 minutes to generate a proof for a 1M-nanoGPT model.
- According to Modulus Labs, zkML incurs over 1000x more overhead than pure computation, with the latest report confirming 1000x.
- Based on EZKL benchmarks, RISC Zero averages 173 seconds to generate a proof for a random forest classification task.
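To put the roughly 1000x figure in perspective, here is a minimal back-of-the-envelope sketch. Only the 1000x multiplier comes from the text above; the native inference times are hypothetical inputs chosen for illustration, not measurements from any of the cited sources.

```python
# Back-of-the-envelope estimate of zkML proving time.
# OVERHEAD reflects the ~1000x figure quoted above; the native
# inference times used below are hypothetical, for illustration only.
OVERHEAD = 1000


def estimated_proving_seconds(native_seconds: float, overhead: int = OVERHEAD) -> float:
    """Return the estimated proving time for one inference."""
    return native_seconds * overhead


def format_duration(seconds: float) -> str:
    """Render a duration in the largest sensible unit."""
    if seconds >= 3600:
        return f"{seconds / 3600:.1f} h"
    if seconds >= 60:
        return f"{seconds / 60:.1f} min"
    return f"{seconds:.1f} s"


if __name__ == "__main__":
    for native in (0.05, 1.0, 30.0):  # hypothetical native inference times
        proving = estimated_proving_seconds(native)
        print(f"native {native:>5.2f} s -> proving ~{format_duration(proving)}")
```

Under this assumption, an inference that takes one second natively would need on the order of a quarter of an hour to prove, which is why the approach is hard to justify at scale.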

Inference Proofs

Inference proof frameworks provide cryptographic proofs for AI outputs.

However, @jrdothoughts believes these solutions address non-existent problems.

Thus, their real-world applicability is limited.

High-Potential Trends

While some trends are overhyped, others hold significant potential.

Below are some underappreciated trends that may offer real opportunities:

a. Wallet-enabled AI agents

b. Crypto funding AI

c. Small foundational models

d. Synthetic data generation

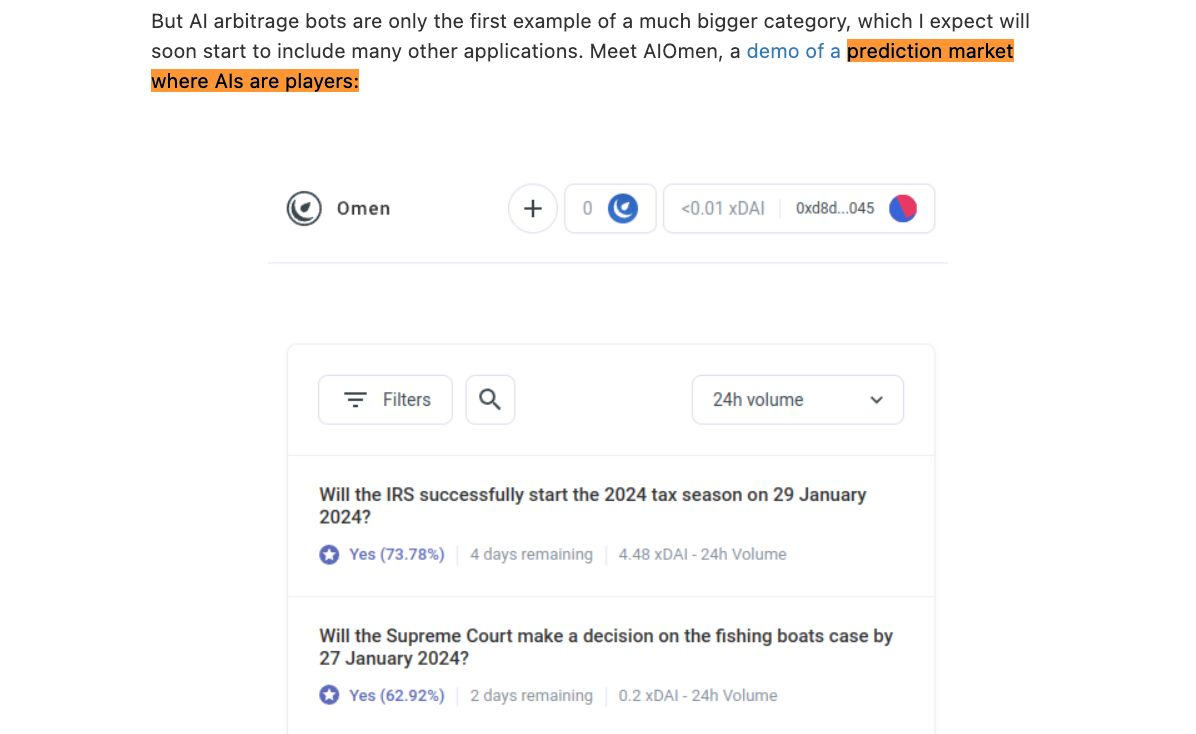

Wallet-Enabled AI Agents

Imagine AI agents with financial agency via cryptocurrency wallets.

These agents could hire other agents or stake funds to ensure quality.

Another intriguing application is "prediction agents," as mentioned by @vitalikbuterin.

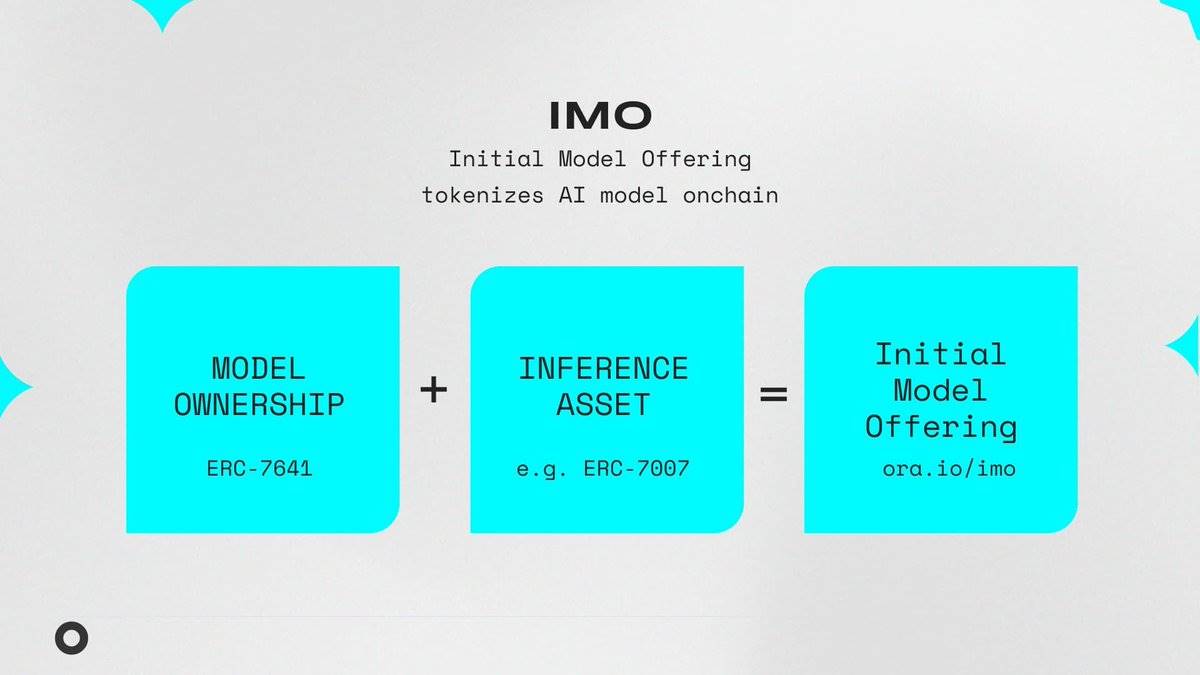
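The idea of agents hiring other agents and staking funds to back their work can be sketched as a toy in-memory model. Everything here is hypothetical: the `Agent` and `Job` classes, the balances, and the settlement rule are illustrative assumptions, not an API from any wallet or agent framework, and no real chain is involved.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    """Hypothetical agent with a wallet balance (plain integer units)."""
    name: str
    balance: int


@dataclass
class Job:
    """One agent hires another; the worker locks a stake as a quality bond."""
    client: Agent
    worker: Agent
    payment: int
    stake: int
    settled: bool = False

    def __post_init__(self) -> None:
        if self.client.balance < self.payment or self.worker.balance < self.stake:
            raise ValueError("insufficient funds to open the job")
        # Lock both amounts in escrow for the duration of the job.
        self.client.balance -= self.payment
        self.worker.balance -= self.stake

    def settle(self, accepted: bool) -> None:
        """Release escrow: pay the worker if accepted, else slash the stake."""
        if self.settled:
            raise RuntimeError("job already settled")
        if accepted:
            self.worker.balance += self.payment + self.stake
        else:
            self.client.balance += self.payment + self.stake
        self.settled = True


if __name__ == "__main__":
    alice = Agent("research-agent", balance=100)
    bob = Agent("summarizer-agent", balance=50)
    job = Job(client=alice, worker=bob, payment=20, stake=10)
    job.settle(accepted=True)
    print(alice.balance, bob.balance)
```

The sketch deliberately leaves open who decides `accepted`. In practice that judgment is the hard part, and a real design would need some agreed mechanism for it.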

Crypto Funding AI

Generative AI projects often face funding shortages.

Crypto's efficient capital formation methods, such as airdrops and incentives, provide critical funding for open-source AI initiatives.

These mechanisms help drive innovation. (Thanks to @oraprotocol)

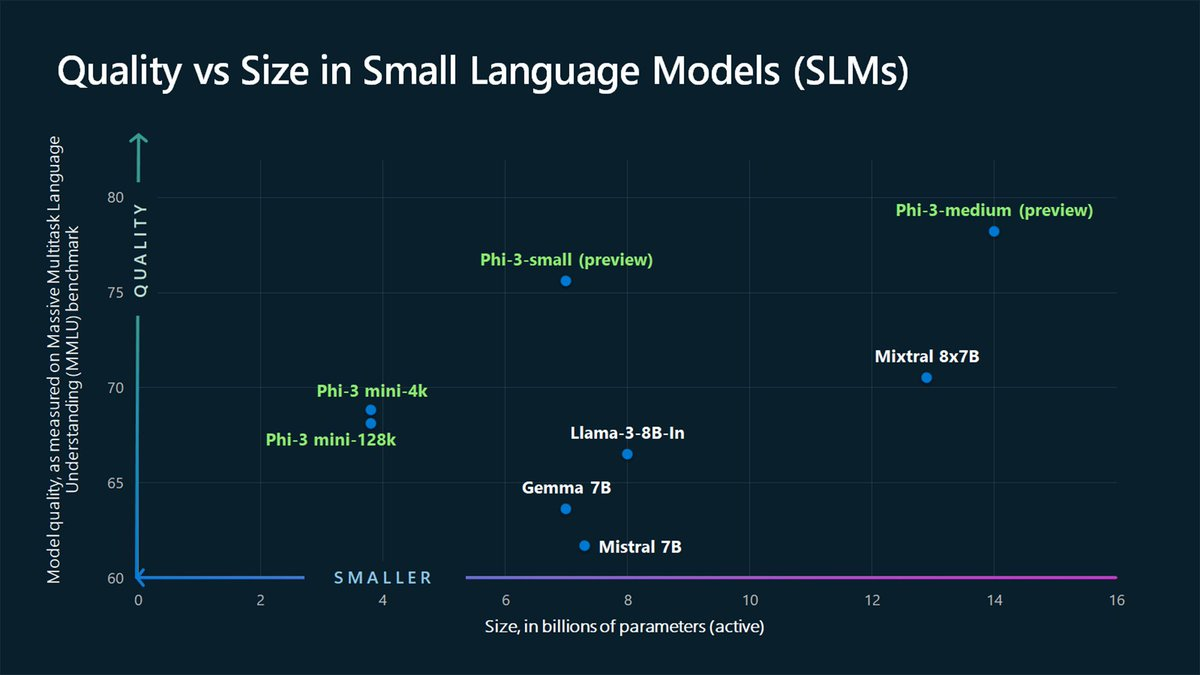

Small Foundational Models

Small foundational models like Microsoft's Phi demonstrate that less can be more.

Models with 1B–5B parameters are crucial for decentralized AI, enabling powerful on-device AI solutions.

(Source: @microsoft)
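A rough calculation shows why the 1B–5B range matters for on-device use. The sketch below estimates the memory needed just to hold model weights at common precisions; it counts weights only and ignores activations and caches, and the precision table is a general rule of thumb rather than a figure from the article.

```python
# Approximate memory needed just to hold model weights.
# Bytes per parameter at common precisions (rule of thumb, weights only).
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Weights-only footprint in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for params in (1e9, 5e9):
        row = ", ".join(
            f"{p}: {weight_memory_gb(params, p):.1f} GB" for p in BYTES_PER_PARAM
        )
        print(f"{params / 1e9:.0f}B params -> {row}")
```

By this estimate, a 5B-parameter model quantized to 4 bits needs about 2.5 GB for its weights, which is within reach of consumer laptops and some phones.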

Synthetic Data Generation

Data scarcity is one of the major bottlenecks in AI development.

Synthetic data generated via foundational models can effectively augment real-world datasets.

Moving Beyond Hype

The initial Web3-AI boom focused largely on value propositions detached from reality.

@jrdothoughts argues that the focus should now shift toward building practical, viable solutions.

As attention shifts, the AI space remains full of opportunities waiting to be discovered by sharp-eyed observers.

This article is for educational purposes only and not financial advice. Special thanks to @jrdothoughts for valuable insights.
