NVIDIA Omniverse: Leading the Future of AI and the Metaverse

NVIDIA said this year's theme is "bringing Omniverse applications to life."

By: MetaPost

At the recent CadenceLIVE Silicon Valley 2024 event, Jensen Huang said that AI will revolutionize three major fields: data centers, robotics and autonomous driving, and life sciences. Humanoid robots priced between $10,000 and $20,000 look set to become a major trend, and global tech companies are increasing their investment in this area, including NVIDIA, which has established its own robotics lab.

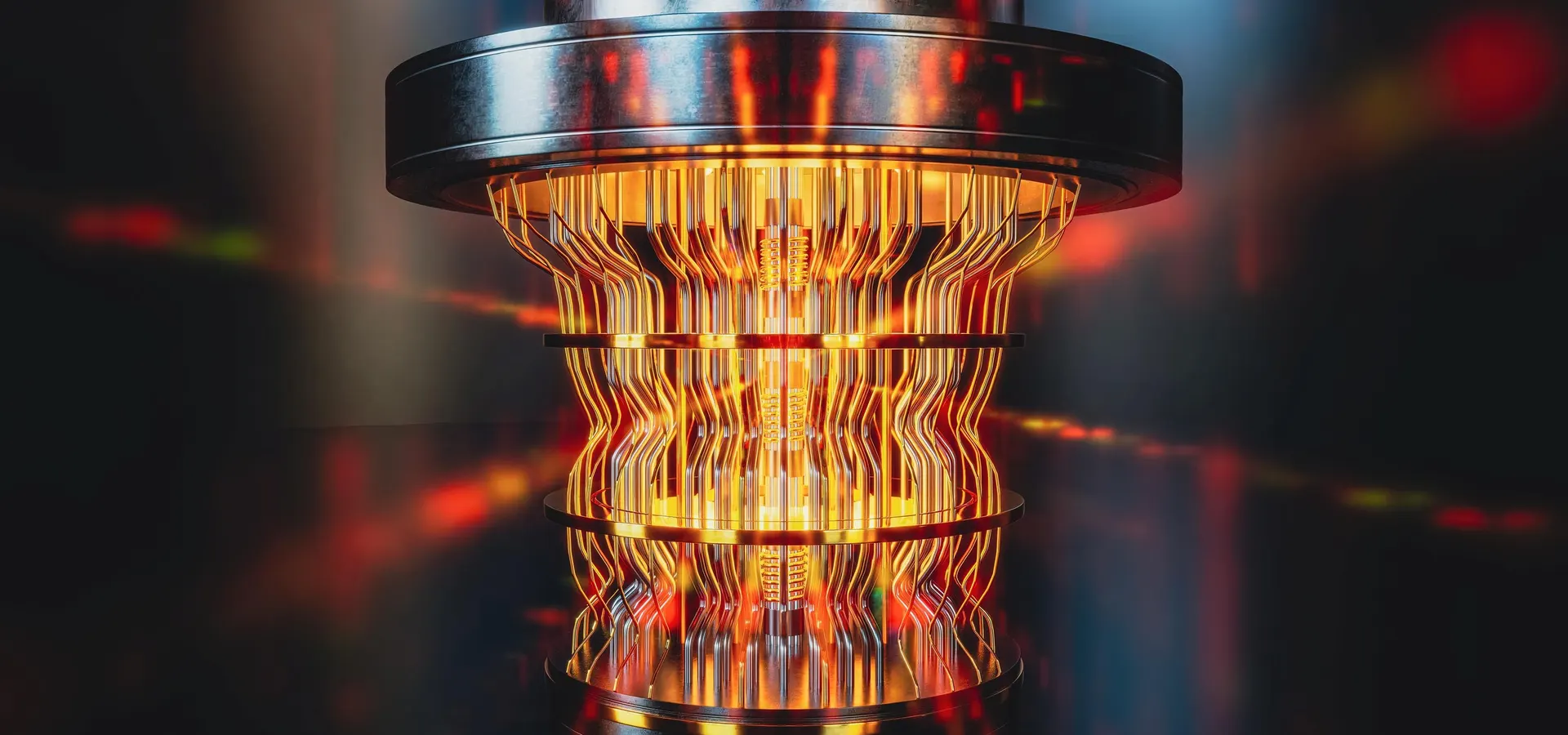

"We need a simulation engine to digitally represent the world for robots—a kind of 'gym' where robots can learn how to be robots. We call this virtual world Omniverse."

Who wouldn’t want a more affordable humanoid robot? Before owning a robot, perhaps we can first own its “gym.”

Omniverse Everywhere

Rather than saying the entire tech industry cannot do without NVIDIA, it's more accurate to say NVIDIA’s strategic vision is simply far ahead of its time.

Long before the metaverse concept entered mainstream awareness, NVIDIA had already begun developing and stockpiling related technologies. Having studied various industries’ demand for virtual worlds and digital twins, NVIDIA built and launched the Omniverse platform through targeted R&D. As NVIDIA has continued to iterate on the platform, numerous large enterprises have adopted Omniverse to build their own digital twins or industrial metaverses.

Omniverse is not just a tool but a technology platform: an industrial digitalization platform for developing and deploying physics-based simulations. Most users are already accustomed to tools from their preferred ISVs (independent software vendors) or to specific ecosystems. Rather than replacing those tools, Omniverse integrates and enhances them, connecting existing tools and digital assets into the largest ecosystem in the design and simulation industries worldwide.

As is widely known, NVIDIA invented the GPU and remains the industry leader in professional visualization. Its GPUs enable advanced pixel and vertex rendering, support 10K ray tracing, and perform physics-based simulation. By integrating digital asset connectivity and management with high-end graphics performance and AI technology, NVIDIA created the Omniverse platform.

Since its inception in 2019, Omniverse has evolved through multiple iterations over five years, introducing many new features across diverse application scenarios including automotive, manufacturing, media, film, architecture, energy, scientific computing, and simulation.

In NVIDIA’s own words: "Omniverse is everywhere!"

Why Can't AI Exist Without Omniverse?

The surge in generative AI deployment is accelerating digital transformation across industries, fundamentally reshaping workflows in autonomous vehicles, humanoid robots, smart warehouses, and large-scale smart cities. AI-driven training simulation in Omniverse, and the deployment that follows it, is set to accelerate at an unprecedented pace.

1. What Are the Requirements for Deploying AI Training Environments?

First and foremost is efficiency. Efficiency determines everything: slower training means slower product development. If your product is merely “usable” or “somewhat usable,” or runs slower than competitors’ products, rivals may dominate the market before it ever launches. Ultimately, training and deployment efficiency depend on computational power, which translates directly into GPU availability.

Second is fidelity. High fidelity ensures rendering accuracy, model precision, and training quality. Different digital assets require varying levels of precision. For example, consumer-facing applications like recommendation systems or feed algorithms don’t demand ultra-high rendering fidelity. Based on user needs, dual-precision rendering, training, and computation provide tailored solutions.

Third, results must render seamlessly on end-user display devices. Omniverse offers professional, visual, collaborative solutions, delivering high-quality visuals that adhere to physical laws with ray tracing—all ultimately reliant on GPU compute power.

2. Simulation Is Key to Enhancing Safety

Historically, developers trained, tested, and validated autonomous systems using real-world data. However, this approach breaks down for rare scenarios and for data that cannot practically be collected in the real world. Sensor simulation provides an ideal solution, enabling effective testing of countless “what-if” scenarios and environmental conditions. It simplifies development, boosts efficiency, and lowers barriers to building autonomous machines.
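
To make the “what-if” idea concrete, here is a minimal Python sketch of how a test matrix of simulated conditions might be enumerated. It does not use any Omniverse API. The `Scenario` class, the parameter lists, and the `is_rare` rule are all hypothetical names and values chosen purely for illustration.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Scenario:
    """One hypothetical simulated test condition (illustrative only)."""
    weather: str
    time_of_day: str
    pedestrian_count: int


# Assumed parameter ranges; a real project would choose its own.
WEATHER = ["clear", "rain", "fog"]
TIME_OF_DAY = ["day", "dusk", "night"]
PEDESTRIAN_COUNTS = [0, 5, 20]


def enumerate_scenarios():
    """Build every combination of the parameters above."""
    return [
        Scenario(w, t, p)
        for w, t, p in product(WEATHER, TIME_OF_DAY, PEDESTRIAN_COUNTS)
    ]


def is_rare(s):
    """Assumed rule: dense crowds in fog at night are hard to capture on real roads."""
    return s.weather == "fog" and s.time_of_day == "night" and s.pedestrian_count >= 20


scenarios = enumerate_scenarios()
rare = [s for s in scenarios if is_rare(s)]
print(f"{len(scenarios)} scenarios in total, {len(rare)} flagged as rare")
```

Even three values per parameter already yield 27 combinations, which hints at why covering such conditions with road data alone becomes impractical as more parameters are added.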

Simulation is critical for training, testing, and deploying autonomous systems. Achieving real-world-level simulation fidelity is extremely challenging, requiring precise modeling of both sensor physics and surrounding environments.

Omniverse enables full-stack training and testing via high-fidelity, physics-accurate sensor simulation, allowing developers to better understand and predict how autonomous systems perform in the real world—equipping self-driving cars and robots with a “super brain” for safer, superior real-world performance.

Additionally, Omniverse’s application in AI extends to its powerful data processing and analysis capabilities. By integrating AI algorithms, Omniverse performs deep learning on vast simulation datasets, optimizing product design, improving production efficiency, and predicting maintenance needs.

Omniverse Cloud API: A New Chapter for Digital Twins

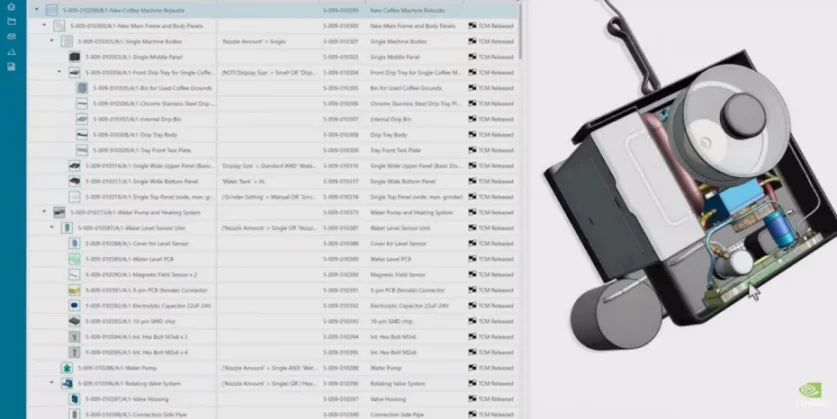

In 2022, Omniverse Cloud was launched. At GTC 2024, five new Omniverse Cloud APIs were introduced. With these APIs, developers can easily integrate Omniverse’s core technologies directly into existing digital twin design and automation software, as well as simulation workflows for testing and validating robots or autonomous vehicles.

Jensen Huang said: "Designers working remotely can collaborate as if they’re in the same studio; factory planners can design new production processes within a digital twin of a real factory; software engineers can test new autonomous vehicle software on digital twin models before rolling it out to fleets. A new wave of work—only possible in virtual worlds—is coming. Omniverse Cloud will connect tens of millions of designers and creators with billions of future AI and robotic systems."

How powerful are the Omniverse Cloud APIs? 3D modeling developers and programmers who may not understand large models or generative AI can now simply call pre-built APIs to execute tasks. Even better, given only a small amount of input, much as one would prompt a large model, Omniverse Cloud responds intelligently. No specialized knowledge or expert technicians are required, because the AI interprets and fulfills the request on its own. This dramatically lowers the barrier to enterprise development while boosting efficiency and cutting costs.

Omniverse Cloud API enables designing, simulating, building, and running physics-accurate digital twins, accelerating product development cycles while enhancing design precision and reliability. Global industrial software leaders such as Ansys, Cadence, Dassault Systèmes’ 3DEXCITE, Hexagon, Microsoft, Rockwell Automation, Siemens, and Trimble have all integrated Omniverse Cloud API into their software portfolios.

When NVIDIA Omniverse Meets Apple Vision Pro

One of the highlights at GTC was the collaboration between two tech giants, NVIDIA and Apple, which brings Omniverse to Apple Vision Pro. A physically accurate digital twin of a car is streamed in full fidelity to Apple Vision Pro’s high-resolution display, allowing designers to manipulate complex, high-fidelity 3D models in real time in immersive mixed reality (MR), which makes reviewing and optimizing product designs far easier.

Achieving this is technically challenging. Mobile devices have limited computing power, so rendering locally cannot deliver the desired high-fidelity results within the constraints of precision, latency, and adherence to physical laws. Combining remote and edge computing with streaming is therefore essential. NVIDIA Omniverse’s powerful spatial computing capabilities enable immersive experiences and seamless interactions among people, products, processes, and physical spaces. By combining Apple Vision Pro’s groundbreaking high-resolution displays with NVIDIA’s RTX cloud rendering technology, spatial computing becomes accessible with nothing more than a device and a network connection.

Why is high fidelity—high precision, low latency, and physics compliance—so important? When viewing content through MR devices, if head movement isn’t perfectly synchronized with visuals, it creates a mismatch similar to motion sickness in cars—where visual input conflicts with vestibular perception—causing dizziness. If the MR device computes too slowly or has high latency, users may experience nausea or even vomiting. Full fidelity must ensure not only sharp, realistic, high-resolution visuals but also accurate physics simulation of clouds, light, fire, particles, air, and dynamics—all rendered smoothly on MR devices. This is full fidelity—this is digital twinning.

Full-fidelity transmission on Apple Vision Pro effectively reduces discomfort like dizziness. Moreover, 3D workflows—especially industrial digital twin workflows—are becoming gamified, likely inspiring increasing numbers of young artists and designers.

Mike Rockwell, Apple’s Vice President of Vision Products, stated: "By combining Apple Vision Pro’s breakthrough ultra-high-resolution display with photorealistic rendering of OpenUSD content streamed from NVIDIA’s accelerated computing, we unlock tremendous potential for immersive experiences. Spatial computing will redefine how designers and developers create captivating digital content, ushering in a new era of creativity and interaction."

NVIDIA x Siemens: Building the Industrial Metaverse Together

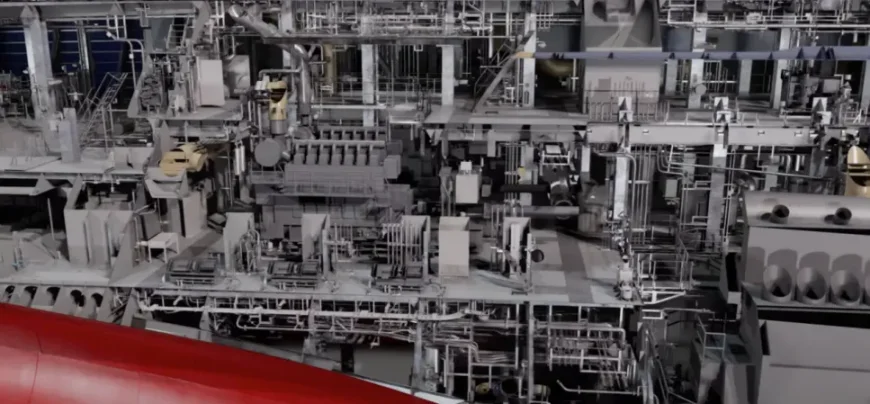

Another eye-catching demo at GTC was the collaboration between NVIDIA and Siemens. The demonstration evoked memories of the “Operation Guzheng” scene from *The Three-Body Problem*, where the massive ship “Judgment Day” is sliced apart. Of course, Omniverse has broader applications in filmmaking and media—virtual production has long been capable of photorealism. Now enhanced by AI, Omniverse delivers even more realistic rendering and richer details, offering audiences increasingly stunning cinematic experiences.

Jensen Huang stated: "Omniverse and generative AI are foundational technologies needed to digitize the heavy industry market worth $50 trillion." This massive $50 trillion market is already being redefined by software. Industry leaders are leveraging digital twins to design, simulate, build, operate, and optimize their assets and workflows.

Accelerated computing, generative AI, USD-based real-time collaboration across nodes and locations, and RTX-powered advanced rendering are all core NVIDIA strengths. Unified and accessed via cloud APIs, they make workflows faster and better.

Siemens, a leader in industrial automation software infrastructure, building technologies, and transportation, combines its expertise with NVIDIA’s achievements in accelerated graphics and AI to enhance efficiency, productivity, and processes throughout product and production lifecycles. Currently, Siemens uses Omniverse Cloud API within its Xcelerator platform, starting with Teamcenter X, its cloud-based product lifecycle management software, unifying and visualizing complex industrial datasets to bridge digital and physical worlds.

As early as 2022, NVIDIA announced an expanded partnership with Siemens, linking Siemens’ Xcelerator with NVIDIA’s Omniverse platform to jointly build the industrial metaverse.

Roland Busch, President and CEO of Siemens, said: "With NVIDIA Omniverse APIs, Siemens can empower customers with generative AI, making their physics-compliant digital twins more immersive. This allows everyone to virtually design, build, and test next-generation products, manufacturing processes, and factories before actual construction. By merging the real and digital worlds, Siemens’ digital twin technology helps global enterprises improve competitiveness, resilience, and sustainability."

Conclusion

NVIDIA states that this year’s focus is on "making Omniverse applications practical," with three primary global application directions: digital twin factories, product design, and configuration systems based on product design.

Moreover, NVIDIA is not only a developer but also a beneficiary of AI-accelerated computing and Omniverse-based physical simulation. Without Omniverse, without Omniverse APIs, and without partner technology support, NVIDIA could not have built large-scale data center systems so rapidly.

With the rapid advancement of large models, Omniverse empowered by AI is poised to bring more surprises to the world and continue leading the future of the metaverse and generative AI.
