From Cloud Computing to AI, Will Akash Be the Optimal Solution for the GPU Arms Race?

TechFlow Selected

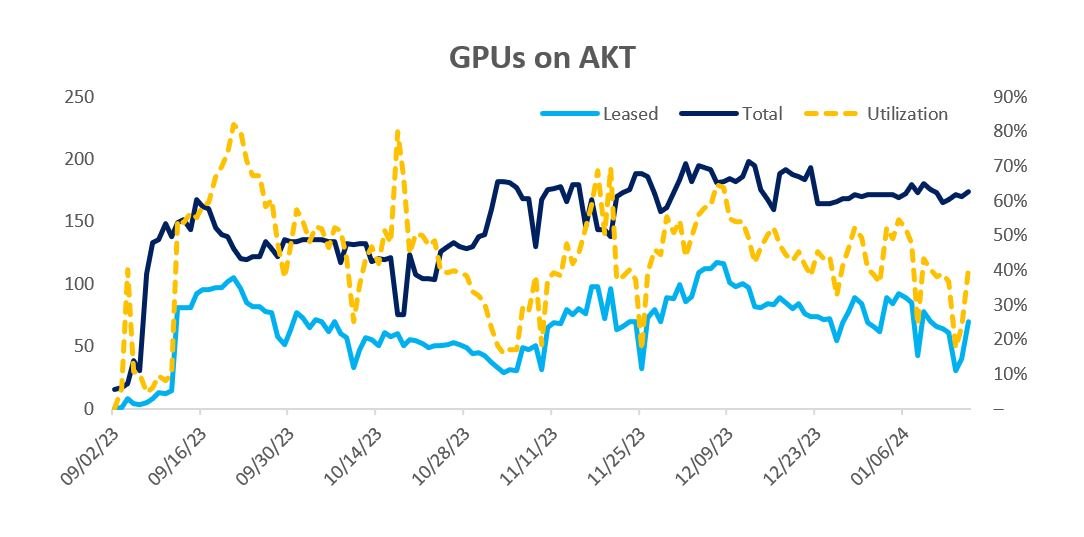

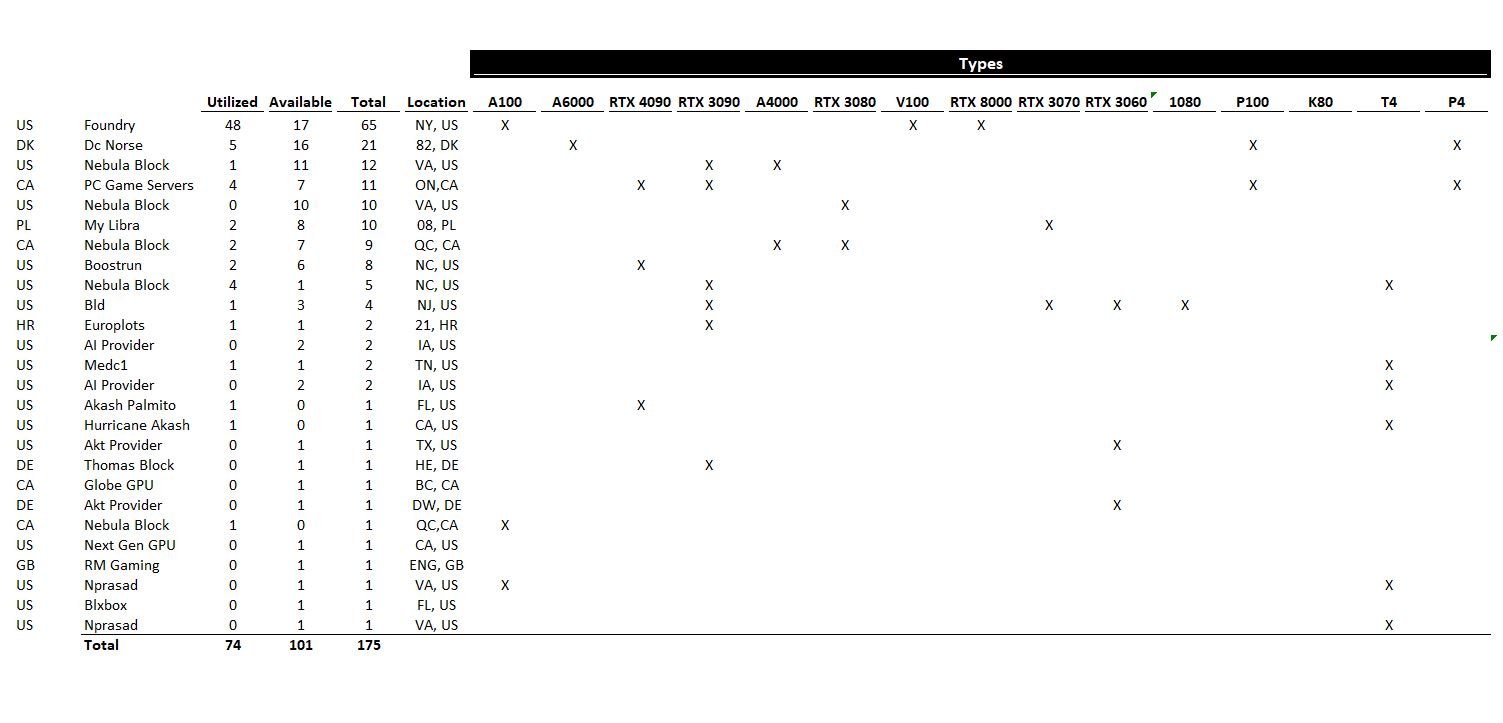

Akash's GPU network launched on mainnet in September 2023. Since then, Akash has scaled to 150–200 GPUs with utilization rates between 50% and 70%.

Author: Vincent Jow

Translation: 1912212.eth, Foresight News

Summary

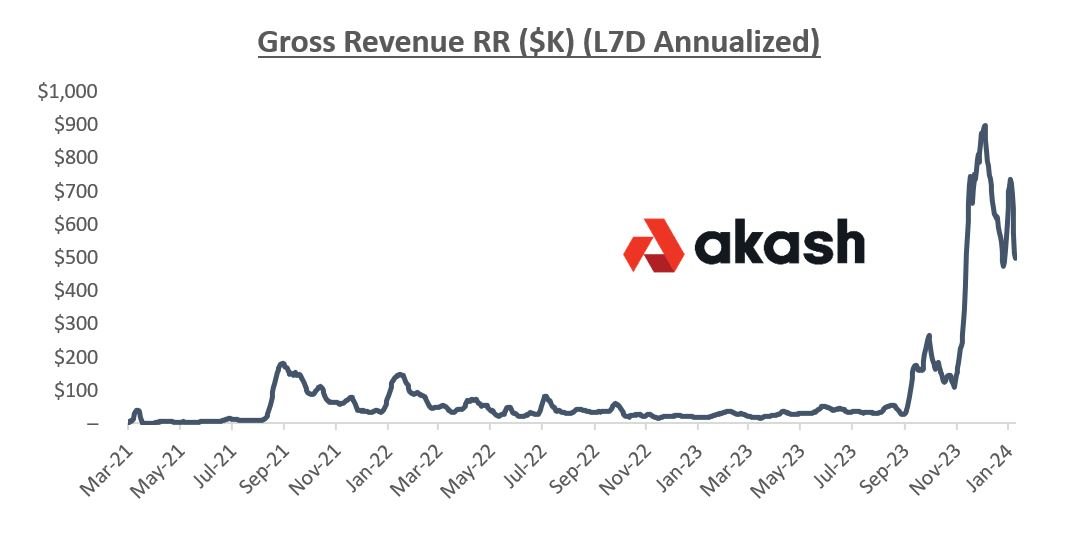

Akash is a decentralized computing platform that connects underutilized GPU supply with users who need GPU compute, aiming to become the "Airbnb" of GPU computing. Unlike its competitors, it focuses primarily on general-purpose, enterprise-grade GPU computing. Since launching its GPU mainnet in September 2023, Akash has hosted 150–200 GPUs on its network at 50–70% utilization, generating $500,000 to $1 million in annualized gross transaction value (GTV, used interchangeably with GMV below). In line with other internet marketplaces, Akash charges a 20% transaction fee on USDC payments.

We are at the beginning of a massive infrastructure transformation driven by GPU-powered parallel processing. Artificial intelligence is expected to add $7 trillion to global GDP while automating 300 million jobs. Nvidia, the leading GPU manufacturer, is projected to grow its revenue from $27 billion in 2022 to $60 billion in 2023, reaching approximately $100 billion by 2025. Cloud providers (AWS, GCP, Azure, etc.) have increased the share of their capital expenditure spent on Nvidia chips from low single-digit percentages to 25% today, and that share is expected to exceed 50% in the coming years. (Source: Koyfin)

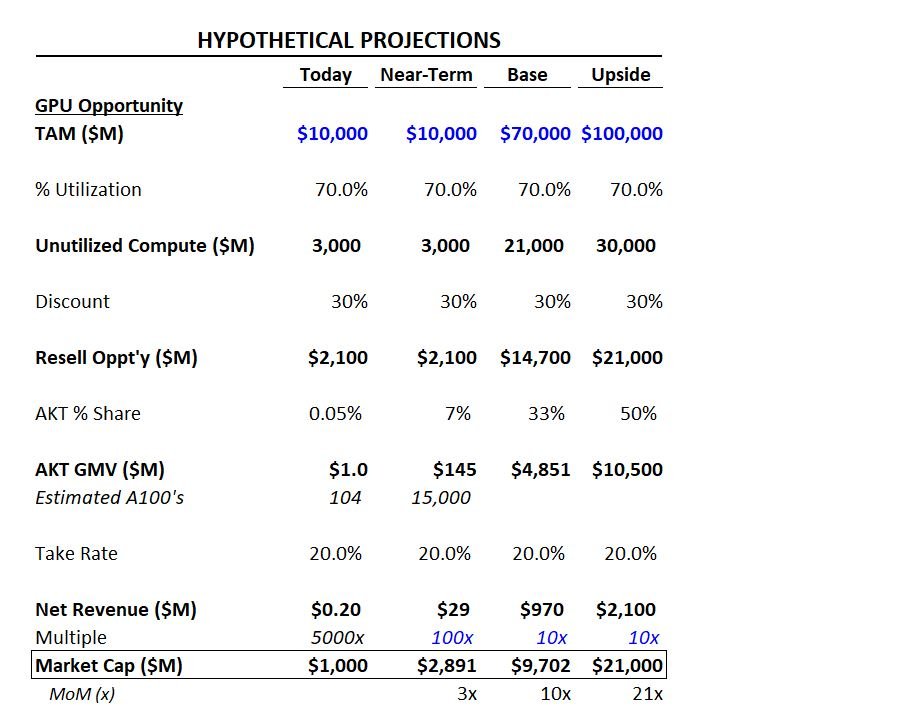

Morgan Stanley estimates that by 2025, the opportunity for hyperscale GPU Infrastructure-as-a-Service (IaaS) will reach $40–50 billion. As an illustration, if 30% of GPU computing capacity were resold through a secondary market at a 30% discount, this would represent a $10 billion revenue opportunity. Adding another $5 billion in revenue potential from non-hyperscale sources brings the total to $15 billion. Assuming Akash captures 33% of this opportunity ($5 billion GTV) with a 20% take rate, this translates into $1 billion in net revenue. Applying a 10x multiple results in a near $10 billion market cap valuation.
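
The chain of assumptions above can be written out as a short calculation. This is only an illustrative sketch: the function and parameter names are made up for this example, and the inputs are the figures quoted in the paragraph, taking the upper end ($50 billion) of the Morgan Stanley range.

```python
def resale_valuation(iaas_market_bn, resold_share, discount,
                     other_supply_bn, market_share, take_rate, multiple):
    """Walk the sizing funnel step by step. Monetary values are in $ billions."""
    # Hyperscaler capacity that is resold, priced after the secondary-market discount.
    hyperscale_resale_bn = iaas_market_bn * resold_share * (1 - discount)
    # Add the resale opportunity from non-hyperscale sources.
    total_resale_bn = hyperscale_resale_bn + other_supply_bn
    # Portion of that resale volume that flows through Akash.
    gtv_bn = total_resale_bn * market_share
    # Protocol revenue is the take rate applied to that volume.
    net_revenue_bn = gtv_bn * take_rate
    return {
        "hyperscale_resale_bn": hyperscale_resale_bn,
        "total_resale_bn": total_resale_bn,
        "gtv_bn": gtv_bn,
        "net_revenue_bn": net_revenue_bn,
        "valuation_bn": net_revenue_bn * multiple,
    }


summary_case = resale_valuation(
    iaas_market_bn=50,     # upper end of the $40-50 billion estimate
    resold_share=0.30,     # 30% of capacity resold
    discount=0.30,         # sold at a 30% discount
    other_supply_bn=5,     # non-hyperscale sources
    market_share=0.33,     # Akash's assumed share
    take_rate=0.20,        # fee on USDC payments
    multiple=10,           # revenue multiple
)
for name, value in summary_case.items():
    print(f"{name}: {value:.2f}")
```

Without intermediate rounding, the hyperscaler resale figure comes out at about $10.5 billion rather than $10 billion, so the resulting valuation lands slightly above $10 billion.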

Market Overview

In November 2022, OpenAI launched ChatGPT, which set the record for the fastest-growing user base, reaching 100 million users by January 2023 and 200 million by May. The impact has been immense: AI is estimated to increase global GDP by $7 trillion through productivity gains and to automate 300 million jobs.

Artificial intelligence has rapidly evolved from a niche R&D area into the top spending priority for companies. Training GPT-4 cost $100 million upfront, with an annual operating cost of $250 million. GPT-5 will require 25,000 A100s (equivalent to $225 million worth of Nvidia hardware), with total hardware investment possibly reaching $1 billion. This has triggered a corporate arms race to secure enough GPUs to support AI-driven workloads.

This AI revolution has sparked a fundamental shift in infrastructure, accelerating the transition from CPU-based serial processing to GPU-based parallel computing. Historically, GPUs were used for rendering and processing images at scale simultaneously, while CPUs were designed for sequential operations. Due to high memory bandwidth, GPUs have evolved to handle other computationally parallel tasks such as training, optimizing, and improving AI models.

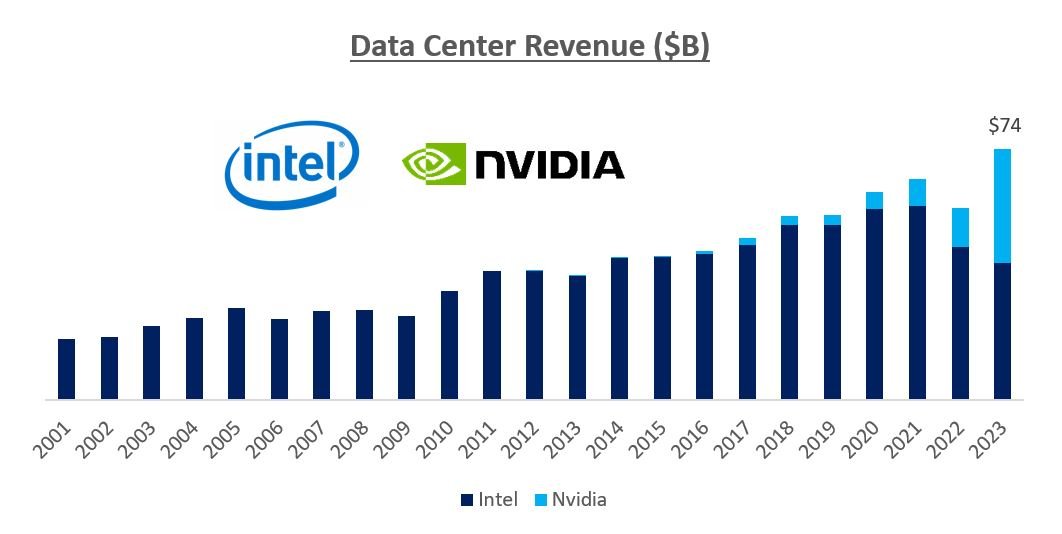

Nvidia, which pioneered GPU technology in the 1990s, combined best-in-class hardware with the CUDA software stack to build a multi-year lead over competitors (primarily AMD and Intel). Nvidia’s CUDA stack, developed in 2006, enables developers to optimize Nvidia GPUs for accelerated workloads and simplifies GPU programming. With 4 million CUDA users and over 50,000 developers building on CUDA, there is a robust ecosystem of programming languages, libraries, tools, applications, and frameworks. We expect Nvidia GPUs to surpass Intel and AMD CPUs in data centers over time.

Spending by hyperscale cloud providers and large tech firms on Nvidia GPUs has surged as a share of capex, from low single-digit percentages in the early 2010s, to mid-single digits between 2015 and 2022, to 25% in 2023. We believe Nvidia could account for over 50% of cloud provider capex within the next few years. This trend is expected to drive Nvidia's revenue from $27 billion in 2022 to $100 billion by 2025 (source: Koyfin).

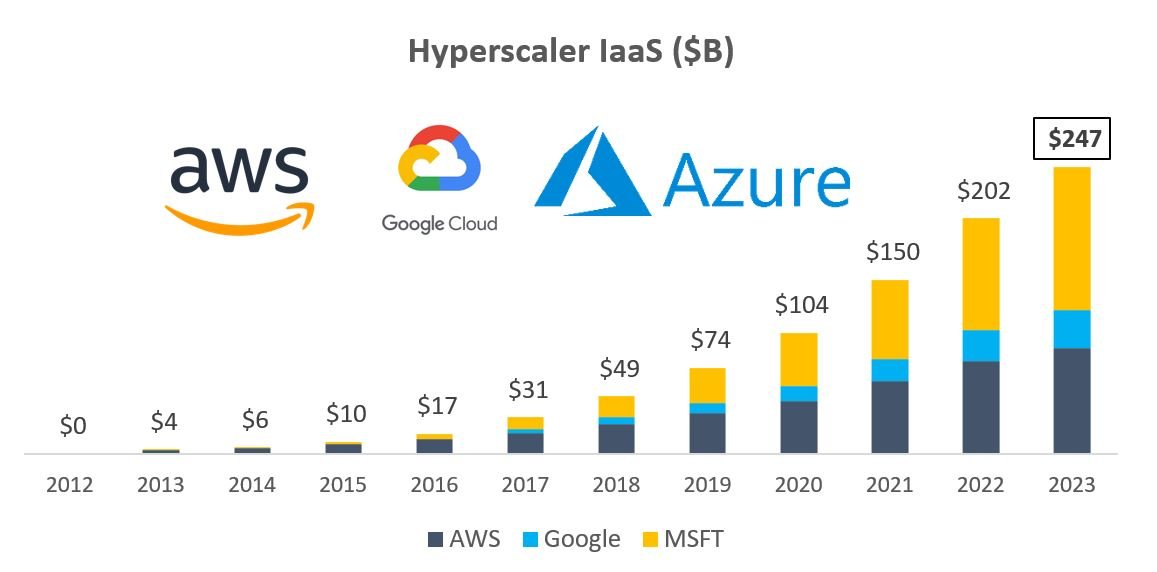

Morgan Stanley estimates that by 2025, the hyperscale cloud providers’ GPU IaaS market size will reach $40–50 billion. This remains only a small fraction of total hyperscaler revenues—today, the top three hyperscalers generate more than $250 billion in combined revenue.

Due to strong demand for GPUs, supply shortages have been widely reported by media outlets like The New York Times and The Wall Street Journal. AWS CEO said, “Demand exceeds supply—for everyone.” Elon Musk stated during Tesla’s Q2 2023 earnings call: “We’ll continue using—we’ll take Nvidia hardware as fast as we can get it.”

Index Ventures had to purchase chips directly for its portfolio companies. Outside major tech firms, acquiring chips directly from Nvidia is nearly impossible, and even accessing them via hyperscale cloud providers involves long wait times.

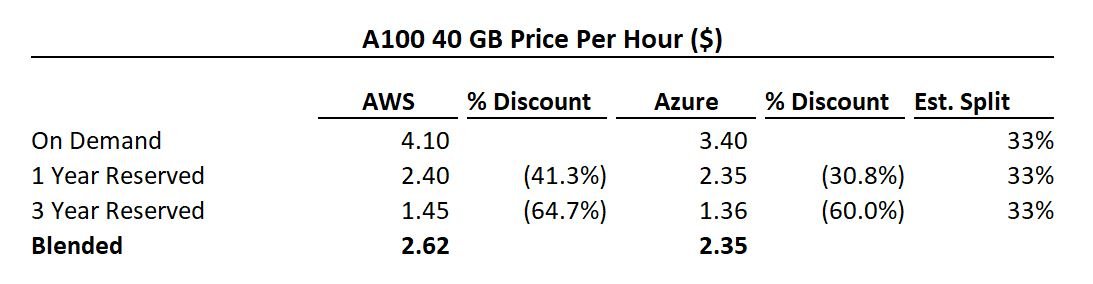

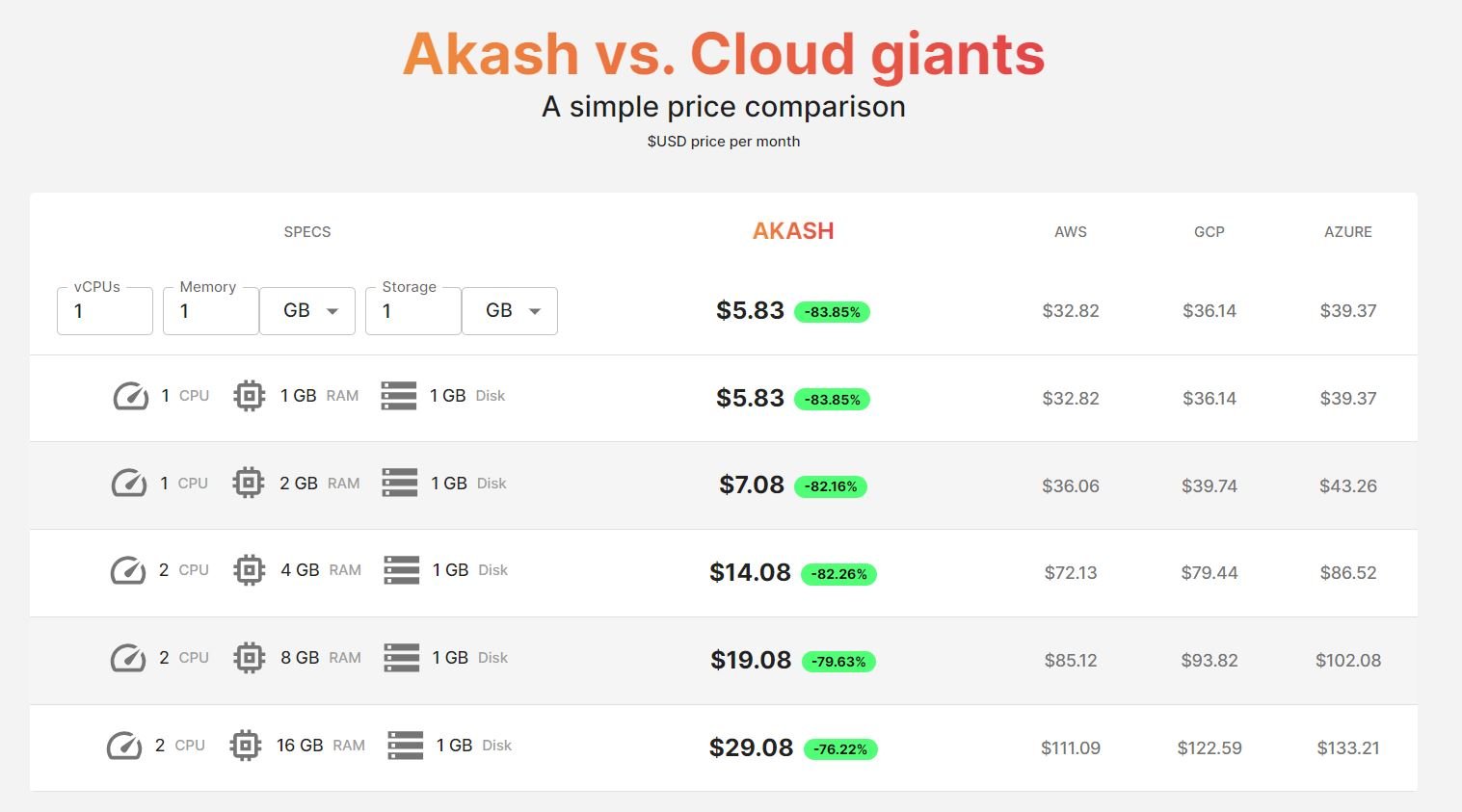

Below are GPU pricing examples from AWS and Azure. As shown, one- to three-year reservations offer 30–65% discounts. Because hyperscalers are investing billions to expand capacity, they seek investment opportunities with revenue visibility. Customers expecting utilization above 60% should opt for one-year reserved pricing; those expecting above 35% should choose three-year reservations. Any unused capacity can be resold, significantly lowering overall costs.
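
The utilization thresholds above follow from a simple break-even rule, sketched below under a simplifying assumption: a reservation is paid for in full whether or not it is used, while on-demand capacity is paid for only when used. Under that assumption, reserving is cheaper once expected utilization exceeds one minus the discount. The function names are hypothetical, and the 40% one-year discount is an assumed value chosen from within the quoted 30–65% range because it reproduces the 60% threshold.

```python
def break_even_utilization(discount):
    """Utilization above which a reservation beats on-demand pricing.

    On-demand cost scales with utilization (u * price), while a reservation
    costs (1 - discount) * price regardless of use, so they cross at u = 1 - discount.
    """
    if not 0 <= discount < 1:
        raise ValueError("discount must be in [0, 1)")
    return 1 - discount


def cheaper_option(expected_utilization, discount):
    """Return which pricing model costs less for the expected utilization."""
    if expected_utilization > break_even_utilization(discount):
        return "reserved"
    return "on-demand"


# A 65% three-year discount gives the 35% threshold quoted above.
print(f"{break_even_utilization(0.65):.2f}")
# Assumed 40% one-year discount, which gives the 60% threshold.
print(f"{break_even_utilization(0.40):.2f}")
print(cheaper_option(0.50, 0.65))
```

The sketch ignores resale of unused reserved capacity, which would lower the break-even point further.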

If hyperscalers build a $50 billion GPU compute leasing business, reselling unused compute becomes a major opportunity. Assuming 30% of compute is resold at a 30% discount, this represents a $10 billion market for reselling hyperscaler GPU compute capacity.

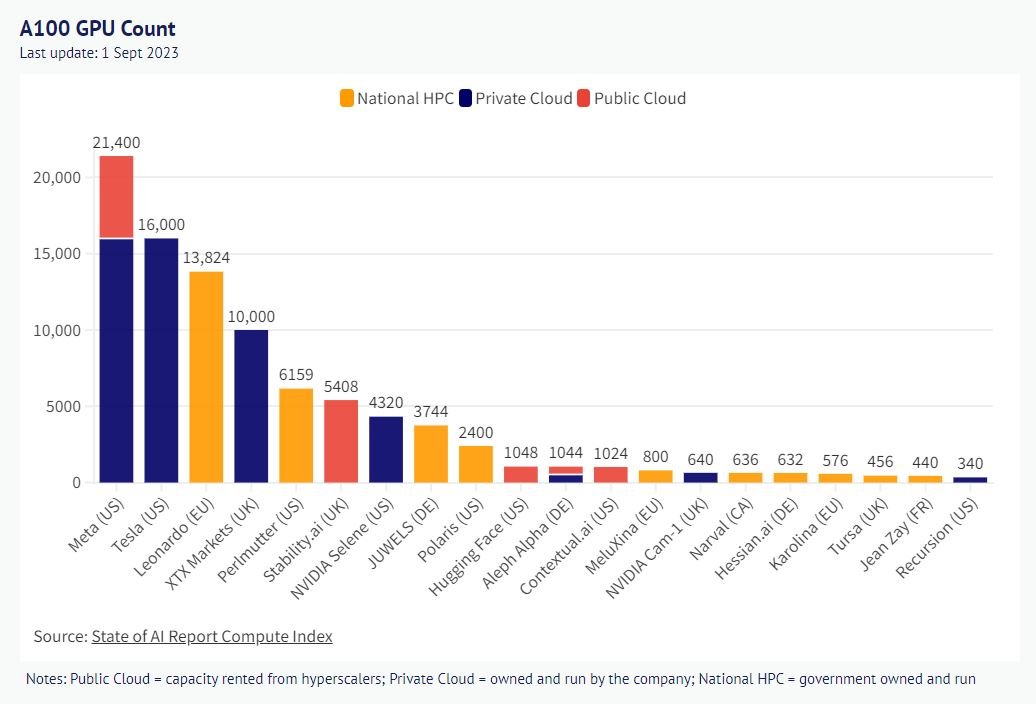

However, beyond hyperscalers, other supply sources exist—including large enterprises (e.g., Meta, Tesla), private competitors (CoreWeave, Lambda, etc.), and well-funded AI startups. From 2022 to 2025, Nvidia will generate around $300 billion in revenue. Assume $70 billion worth of chips reside outside hyperscalers—with 20% of compute resold at a 30% discount, this adds another $4.2 billion. Combined with prior estimates, total resalable capacity reaches ~$14.2 billion.

Akash Overview

Akash is a decentralized computing marketplace founded in 2015 and launched as a Cosmos appchain in September 2020. Its vision is to democratize cloud computing by offering underutilized compute resources at prices significantly below those of hyperscale cloud providers.

The blockchain handles coordination and settlement, recording requests, bids, leases, and settlements, while execution occurs off-chain. Akash hosts containers where users can run any cloud-native application. It includes a suite of cloud management services, including Kubernetes for orchestrating and managing these containers. Deployments occur over a private peer-to-peer network isolated from the blockchain.
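
To make the request, bid, lease, and settlement sequence concrete, here is a toy model of that flow. It is only a sketch: the class and function names are hypothetical and do not correspond to Akash's on-chain modules or APIs, and the rule that the cheapest eligible bid wins is an assumption made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    tenant: str
    gpus: int
    max_price_per_hour: float  # ceiling the tenant is willing to pay


@dataclass(frozen=True)
class Bid:
    provider: str
    price_per_hour: float


@dataclass(frozen=True)
class Lease:
    tenant: str
    provider: str
    price_per_hour: float


def open_lease(order, bids):
    """Pick the cheapest bid at or under the tenant's ceiling (assumed rule)."""
    eligible = [b for b in bids if b.price_per_hour <= order.max_price_per_hour]
    if not eligible:
        return None  # no provider met the tenant's price
    best = min(eligible, key=lambda b: b.price_per_hour)
    return Lease(order.tenant, best.provider, best.price_per_hour)


def settle(lease, hours):
    """Amount owed to the provider for the hours the lease was active."""
    return lease.price_per_hour * hours


order = Order(tenant="tenant-a", gpus=1, max_price_per_hour=2.0)
bids = [Bid("provider-1", 1.8), Bid("provider-2", 1.2), Bid("provider-3", 2.5)]
lease = open_lease(order, bids)
print(lease.provider, settle(lease, hours=10))
```

In this picture only the order, bids, lease, and settlement records would be written to the chain. The container itself runs on the winning provider's hardware.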

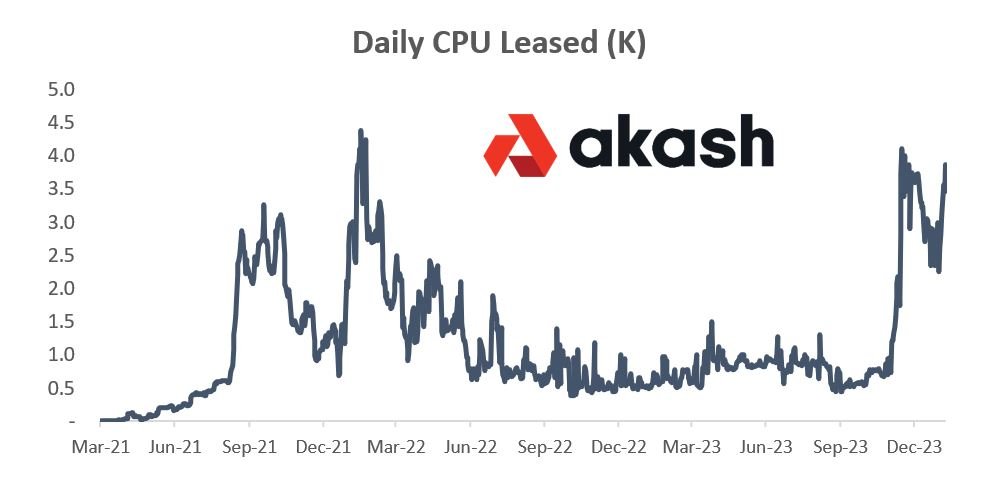

Akash’s first version focused on CPU computing. At its peak, this business achieved an annual GMV of about $200,000, leasing 4,000–5,000 CPUs. However, two key issues emerged: onboarding friction (users had to set up a Cosmos wallet and pay with AKT tokens) and customer churn (workloads stopped when a user's AKT balance ran out or the token price fluctuated, with no fallback provider).

Over the past year, Akash has shifted from CPU to GPU computing, leveraging this paradigm shift in compute infrastructure and supply constraints.

Akash GPU Supply

Akash’s GPU network went live on mainnet in September 2023. Since then, Akash has scaled to 150–200 GPUs with 50–70% utilization.

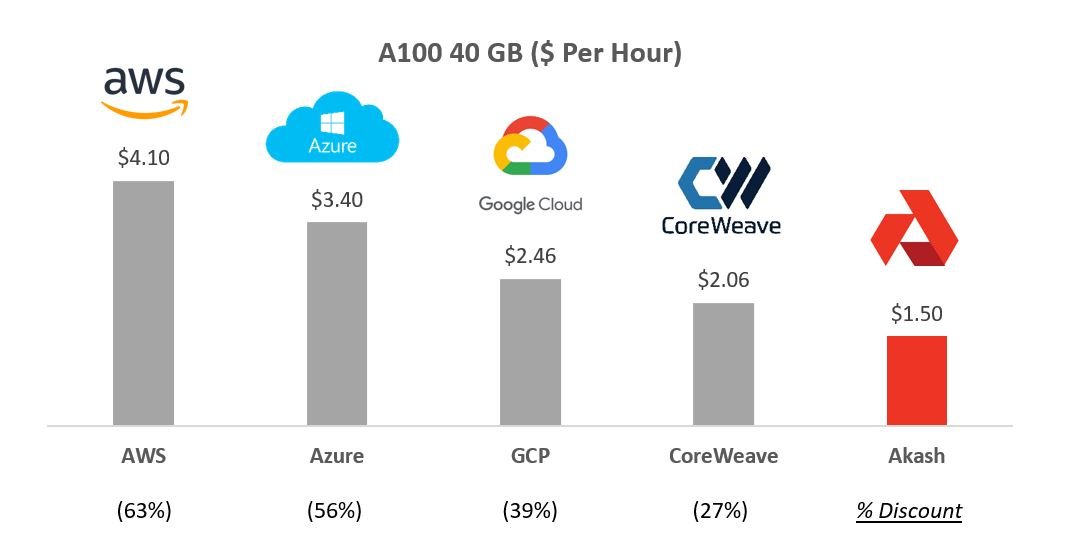

The chart below compares Nvidia A100 pricing across several providers—Akash offers prices 30–60% cheaper than competitors.

There are approximately 19 unique providers on the Akash network across seven countries, offering over 15 types of chips. The largest provider is Foundry, a DCG-backed firm also involved in crypto mining and staking.

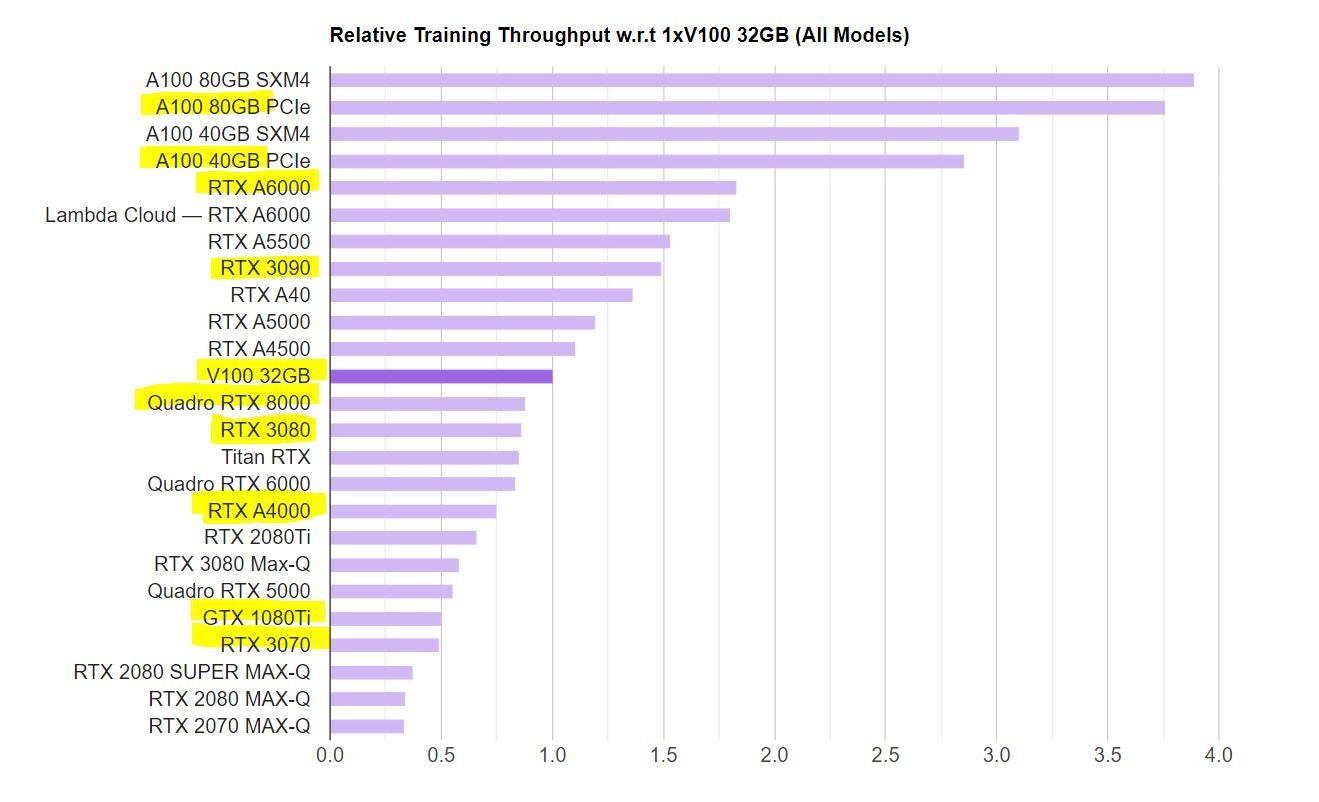

Akash primarily focuses on enterprise-grade chips (A100), traditionally used to support AI workloads. While consumer-grade GPUs are also available, they have historically faced challenges due to power consumption, software compatibility, and latency. Several companies like FedML, io.net, and Gensyn are attempting to build orchestration layers for AI edge computing.

As the market shifts increasingly toward inference rather than training, consumer-grade GPUs may become more viable—but currently, the focus remains on enterprise-grade chips for training.

On the supply side, Akash targets public hyperscalers, private GPU providers, crypto miners, and enterprises holding underutilized GPUs.

- Hyperscale Public Cloud Providers: The biggest opportunity lies in hyperscalers (Azure, AWS, GCP) allowing their customers to resell underutilized capacity on the Akash marketplace. This gives them revenue visibility on capital investments. Once one hyperscaler allows this, others may follow to remain competitive. As previously noted, hyperscalers could capture a $50 billion IaaS opportunity, creating a massive secondary market opportunity for Akash.

- Private Cloud Competitors: Beyond public hyperscalers, several private cloud firms (CoreWeave, Lambda Labs, etc.) offer GPU leasing. Because hyperscalers are developing their own ASICs as alternative hardware, Nvidia has allocated additional supply to some private firms. Private competitors typically offer lower pricing (e.g., up to 50% cheaper for A100). CoreWeave, one of the most prominent private players, was originally a crypto miner and pivoted in 2019 to build data centers and provide GPU infrastructure. It is fundraising at a $7 billion valuation with backing from Nvidia. CoreWeave is growing rapidly: it reached $500 million in revenue in 2023 and expects $1.5–2 billion in 2024. CoreWeave holds 45,000 Nvidia chips, and private competitors collectively may hold over 100,000 GPUs. Enabling secondary markets for their customers could help these private players gain share against public hyperscalers.

- Crypto Miners: Crypto miners have historically been major consumers of Nvidia GPUs. Due to computational complexity in proof-of-work systems, GPUs became dominant hardware for PoW networks. Ethereum’s transition from proof-of-work to proof-of-stake freed up substantial GPU capacity. An estimated 20% of these released chips can be repurposed for AI workloads. Additionally, Bitcoin miners seek diversified revenue streams. Over recent months, Hut 8, Applied Digital, Iris Energy, Hive, and other Bitcoin miners have announced AI/ML strategies. Foundry, the largest supplier on Akash, is one of the biggest Bitcoin miners.

- Enterprises: As previously shown, Meta holds significant GPU inventory (15,000 A100s) at just 5% utilization. Similarly, Tesla owns 15,000 A100s. Enterprise compute utilization typically stays below 50%. Given heavy VC investment in this space, many AI/ML startups have also pre-bought chips. Reselling unused capacity would reduce total cost of ownership for smaller firms. Interestingly, leasing older GPUs may offer potential tax advantages.

Akash GPU Demand Side

For most of 2022 and 2023, prior to the GPU network launch, CPU GMV was around $50,000 annually. Since the GPU network launch, GMV has reached an annualized level of $500,000 to $1 million, with 50–70% utilization on the GPU network.

Akash has been actively reducing user friction, improving UX, and expanding use cases.

- USDC Payments: Akash recently enabled payments in the USDC stablecoin, removing the need for customers to buy and hold volatile AKT tokens.

- MetaMask Wallet Support: Akash implemented a MetaMask Snap to simplify onboarding without requiring a Cosmos-specific wallet.

- Enterprise Support: Overclock Labs, creator of the Akash network, launched AkashML to onboard users onto Akash with enterprise-grade support.

-

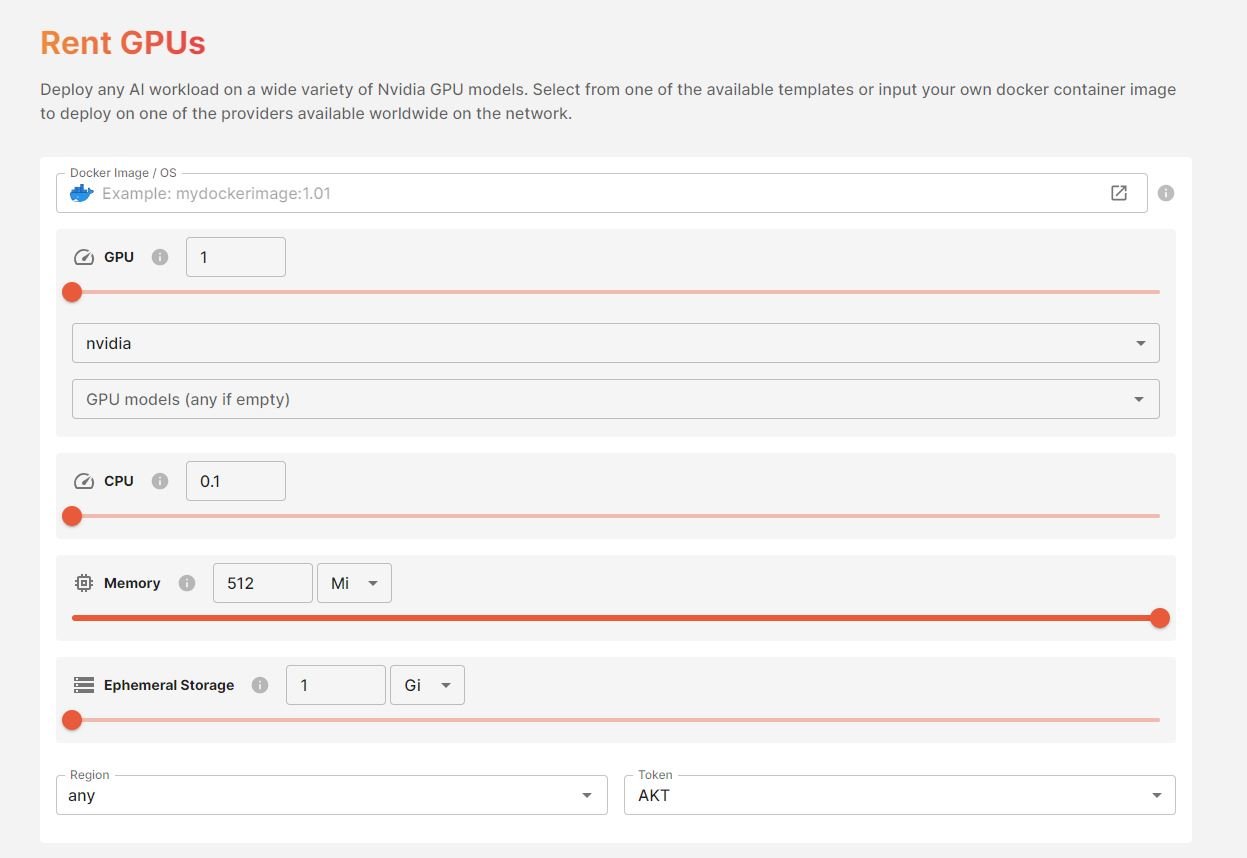

Self-Service: Cloudmos, recently acquired by Akash, launched an easy-to-use self-service interface for GPU deployment—previously done only via command-line code.

- Choice: While focusing mainly on Nvidia enterprise chips, Akash also offers consumer-grade GPUs and added support for AMD chips by the end of 2023.

Akash is also validating use cases across the network. During the GPU testnet phase, the community demonstrated the ability to deploy and run inference for many popular AI models. Applications like Akash Chat and Stable Diffusion XL showcased Akash’s inference capabilities. We believe the inference market will eventually dwarf the training market. Today, AI-powered search costs $0.02 per query (10x Google’s current cost). With 3 trillion searches annually, this implies $60 billion in yearly costs. For context, training OpenAI models costs around $100 million. While both costs may decline, this highlights a stark difference in long-term revenue potential.
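
The inference-versus-training comparison above comes down to a few multiplications, sketched here with the figures quoted in the paragraph. The variable and function names are illustrative only.

```python
def annual_inference_cost(queries_per_year, cost_per_query):
    """Total yearly spend if every query is served by an AI model."""
    return queries_per_year * cost_per_query


searches_per_year = 3e12       # 3 trillion searches annually
cost_per_ai_query = 0.02       # dollars per AI-powered query
training_cost = 100e6          # roughly $100 million to train a model

inference_cost = annual_inference_cost(searches_per_year, cost_per_ai_query)
ratio = inference_cost / training_cost

print(f"Annual inference cost: ${inference_cost / 1e9:.0f} billion")
print(f"Inference spend is about {ratio:.0f}x the training cost")
```

On these inputs, a single year of inference at search scale costs several hundred times as much as one training run, which is the gap the paragraph points to.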

Given that most current demand for high-end chips centers on training, Akash is currently working to demonstrate model training on its network and plans to release such a model in early 2024. After successfully using homogeneous chips from a single provider, the next step will involve heterogeneous chips from multiple suppliers.

Akash’s roadmap is ambitious. Ongoing product developments include privacy-managed support, on-demand/reserved instances, and improved discoverability.

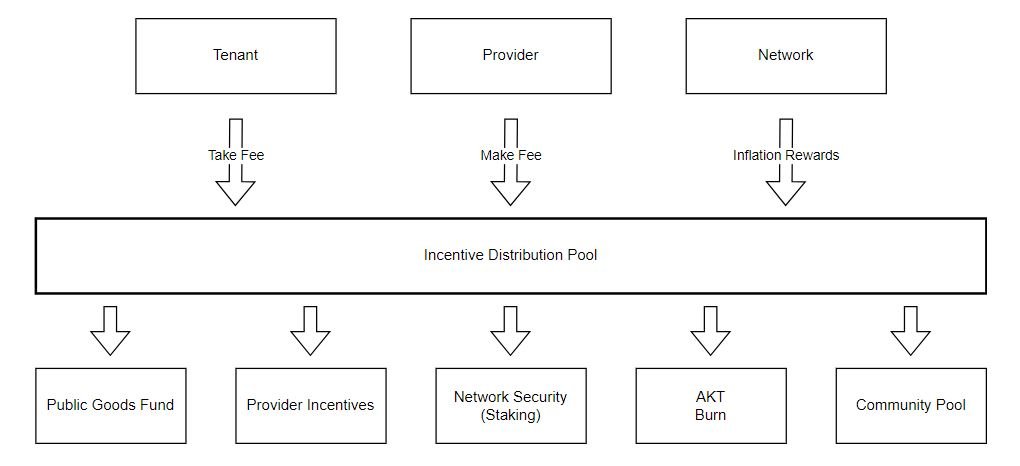

Token Model and Incentives

Akash charges a 4% fee on AKT payments and a 20% fee on USDC payments. This 20% fee aligns with typical internet marketplace rates (e.g., Uber takes 30%).
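
As a rough illustration of how the two fee tiers affect a payment, the sketch below splits a lease payment into the protocol fee and the provider's share. It assumes the fee is deducted from the amount the tenant pays, which the text does not specify, and the names used are hypothetical.

```python
# Take rates quoted above, keyed by payment currency.
TAKE_RATES = {"AKT": 0.04, "USDC": 0.20}


def split_payment(amount, currency):
    """Return (protocol_fee, provider_payout) for a lease payment.

    Assumes the fee is taken out of the tenant's payment rather than added on top.
    """
    if currency not in TAKE_RATES:
        raise ValueError(f"unsupported currency: {currency}")
    fee = amount * TAKE_RATES[currency]
    return fee, amount - fee


for currency in ("AKT", "USDC"):
    fee, payout = split_payment(100.0, currency)
    print(f"{currency}: fee {fee:.2f}, provider receives {payout:.2f}")
```

Under this assumption, a provider receiving payment in USDC keeps 80% of the payment, versus 96% for the same payment in AKT.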

Approximately 58% of AKT tokens are in circulation (225 million circulating out of 388 million max supply). Annual inflation has increased from 8% to 13%. Currently, 60% of circulating tokens are staked, with a 21-day lockup period.

40% of inflation (up from 25%) plus GMV fees go into a community pool, which currently holds $10 million in AKT.

The allocation of these funds is still being determined but will likely be distributed among public grants, provider incentives, staking rewards, potential token burns, and the community pool.

On January 19, Akash launched a $5 million pilot incentive program aimed at bringing 1,000 A100s onto the platform. Over time, the goal is to provide revenue visibility for participating providers (e.g., 95% effective utilization).

Valuation and Scenario Analysis

Below are illustrative scenarios and key assumptions for Akash’s drivers:

Short-Term Case: We estimate that if Akash reaches 15,000 A100s, it would generate nearly $150 million in GMV. With a 20% take rate, this yields $30 million in protocol revenue. Considering growth trajectory and AI valuations, applying a 100x multiple gives a $3 billion valuation.

Base Case: We assume the IaaS market opportunity aligns with Morgan Stanley’s estimate of $50 billion. Assuming 70% utilization, the unused 30% represents $15 billion in resalable capacity. Discounted at 30%, this yields $10.5 billion from hyperscalers. Adding $10 billion from non-hyperscale sources gives $20.5 billion total. Given strong network effects and moats in marketplaces, we assume Akash captures 33% share (comparable to Airbnb’s 20% in vacation rentals, Uber’s 75% in ride-sharing, DoorDash’s 65% in food delivery), or about $6.8 billion in GMV. At a 20% take rate, this generates roughly $1.35 billion in protocol revenue. Using a 10x multiple, Akash reaches a valuation of about $13.5 billion.

Upside Case: Our upside scenario uses the same framework. We assume greater penetration into unique GPU sources and higher market share, resulting in a $20 billion resale opportunity.

Contextually, Nvidia is a $1.2 trillion public company, OpenAI is valued at $80 billion privately, Anthropic at $20 billion, and CoreWeave at $7 billion. In crypto, Render and TAO are valued at over $2 billion and $5.5 billion respectively.

Risks and Mitigations:

Supply and Demand Concentration: Currently, much of GPU demand comes from large tech firms training massive, complex LLMs. Over time, we expect growing interest in training smaller AI models—cheaper and better suited for handling private data. Fine-tuning will grow in importance as models shift from general-purpose to vertical-specific. Finally, as usage and adoption accelerate, inference will become increasingly critical.

Competition: Numerous crypto and non-crypto companies aim to unlock underutilized GPUs. Notable crypto protocols include:

- Render and Nosana are unlocking consumer-grade GPUs for inference.

- Together is building open-source training models for developers to build upon.

- Ritual is building a network for hosting models.

Latency and Technical Challenges: Since AI training is extremely resource-intensive and typically requires all chips to be co-located in a single data center, it remains unclear whether models can be trained effectively on decentralized, non-co-located GPU stacks. OpenAI plans to build its next training facility in Arizona housing over 75,000 GPUs. These are precisely the challenges that orchestration layers like FedML, io.net, and Gensyn aim to solve.
