Vitalik: Thoughts on Worldcoin and Biometric Identity Verification


In principle, the concept of human identity verification seems highly valuable, although various implementations carry their own risks.

Author: Vitalik Buterin

Translation: HuoHuo, Baicai Blockchain

Today, WorldCoin, a Web3 crypto project co-founded by OpenAI CEO Sam Altman, officially launched. WorldCoin has developed a biometric identity verification system called World ID, which uses an orb-shaped device called "the Orb" to scan users' irises for identity verification.

On the same day, Ethereum founder Vitalik Buterin published an article titled “What do I think about biometric proof of personhood?”, sharing his views on biometric proof of personhood. Below is the full translation:

The Ethereum community has long been exploring decentralized solutions to the proof-of-personhood problem, which is challenging yet highly valuable. Proof of personhood is a limited form of real-world identity that ensures a given registered account is controlled by a real human being (and by a different human from every other registered account), ideally without revealing which specific individual it is.

There have already been many attempts to solve this problem: Proof of Humanity, BrightID, Idena, and Circles are representative examples. Some come with their own application ecosystems (often Universal Basic Income (UBI) tokens), while others are used in systems like Gitcoin Passport to verify which accounts qualify for quadratic voting. Zero-knowledge technologies such as Sismo add a privacy layer to many of these solutions.

More recently, we have seen the rise of a much larger and more ambitious proof-of-personhood project: Worldcoin.

Worldcoin was co-founded by Sam Altman, best known as the CEO of OpenAI. The core idea behind the project is simple: AI will generate vast wealth for humanity, but it may also eliminate many jobs and make it nearly impossible to distinguish humans from bots. To plug this gap, we need to:

(1) Create a robust human identity verification system so people can prove they are indeed human;

(2) Provide UBI to everyone.

Worldcoin's unique feature lies in its reliance on advanced biometrics, using a dedicated hardware device called "the Orb" to scan each user’s iris.

The goal is to mass-produce these orbs and widely distribute them globally, placing them in public spaces so anyone can easily obtain their own ID.

To its credit, Worldcoin is committed to decentralization, not only technologically (it is built as an L2 on Ethereum using the Optimism stack, and it uses ZK-SNARKs and other cryptographic tools to protect user privacy) but also in the governance of the system itself.

Worldcoin has faced criticism over privacy and security concerns related to the Orb, design flaws in its tokenomics, and ethical issues surrounding certain corporate decisions. Indeed, the project is still evolving. However, others raise more fundamental concerns: can biometric technology, whether Worldcoin’s eye-scanning approach or simpler methods like the video uploads and validation games used in Proof of Humanity and Idena, gain broad public acceptance?

Criticism is certainly not lacking. Risks include inevitable privacy leaks, further erosion of people's ability to browse the internet anonymously, coercion by authoritarian regimes, and challenges in ensuring security while maintaining decentralization.

This article will discuss these issues and offer arguments to help you decide whether scanning your eyes in front of this new spherical tool is a good idea, whether we should abandon developing proof of personhood altogether, and what alternative paths exist.

01 What is proof of personhood and why does it matter?

Proof of personhood is valuable because it addresses the concentration of power on today’s internet while avoiding dependence on centralized authorities and minimizing personal data exposure. If proof of personhood remains unsolved, decentralized governance (including micro-governance such as voting on social media posts) is built on sand.

Many major applications today handle this issue using government-backed identity systems (e.g., IDs and passports). This does solve the problem, but at an enormous and unacceptable cost to privacy.

The dual risks facing our current human verification systems

In many human verification projects—not just Worldcoin, but also “flagship applications” like Circles—the concept of “tokens per person” (also known as “UBI Tokens”) is hardcoded. Every user registered in the system receives a fixed amount of tokens daily (or hourly or weekly). There are numerous other use cases, including:

- Airdrop mechanisms for token distribution;

- Preferential conditions for less wealthy users in token or NFT sales;

- Voting in DAOs;

- Quadratic voting (for funding and attention allocation);

- Preventing bot and Sybil attacks on social media;

- Alternatives to CAPTCHA for preventing DoS attacks.

The shared goal remains creating open and democratic mechanisms that avoid centralized control by project operators and domination by wealthy users. The latter is especially critical in decentralized governance.
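To see why quadratic voting in particular depends on one account per person, consider a minimal sketch. It assumes the usual quadratic voting rule in which casting v votes costs v² credits; the function names and the 100-credit budget are illustrative and not taken from any specific deployment.

```python
import math

def votes_for_budget(credits: float) -> float:
    """Votes a single identity can buy when v votes cost v**2 credits."""
    return math.sqrt(credits)

def votes_with_sybils(credits: float, identities: int) -> float:
    """Total votes when the same budget is split evenly across several identities."""
    return identities * votes_for_budget(credits / identities)

budget = 100.0
honest = votes_for_budget(budget)       # one person, one identity
sybil = votes_with_sybils(budget, 25)   # the same person behind 25 fake identities
print(honest, sybil)                    # prints "10.0 50.0"
```

With the same budget, splitting it across 25 fake accounts yields five times as many votes, which is exactly the domination by wealthy users that the mechanism is meant to prevent.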

Currently, existing solutions rely on:

(1) Highly opaque AI algorithms

(2) Centralized IDs, aka “KYC”.

Therefore, an effective identity verification solution would be superior—it could achieve the necessary security properties for these applications without the flaws inherent in current centralized approaches.

02 What were early attempts at internet identity verification?

Human identity verification comes in two main forms: social graphs and biometrics.

Social-graph-based human verification relies on some form of endorsement: If Alice, Bob, Charlie, and David are all verified humans who vouch for Emily as a verified human, then Emily likely is one too.

Endorsements are typically reinforced with incentives: If Alice says Emily is human, but it turns out she isn’t, both Alice and Emily may face penalties. Biometric proof involves verifying physical or behavioral traits of Emily that distinguish humans from bots—and individuals from one another. Most projects combine both techniques.

The four systems I mentioned earlier work roughly as follows:

(1) Proof of Humanity: You upload a video of yourself and post a deposit. To get approved, existing users must endorse you, and others may challenge your submission after a delay. If challenged, the Kleros decentralized court verifies whether your video is authentic; if fraudulent, your deposit is forfeited and challengers rewarded.

(2) BrightID: You join a video call “verification party” with other users, where everyone verifies each other. Higher levels of verification are available through Bitu, a system in which you become verified if enough other Bitu-verified users vouch for you.

(3) Idena: At a specific point in time, you play a captcha-style game (the fixed time prevents people from participating multiple times); part of the game involves creating and validating captchas that are then used to verify others.

(4) Circles: Existing Circles users vouch for you. Circles’ uniqueness lies in not attempting to create a “globally verifiable ID”; instead, it builds a trust graph where someone’s credibility can only be assessed relative to your own position within that graph.
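The idea in (4), that credibility is assessed relative to your own position in the graph, can be illustrated with a small sketch. This is not Circles’ actual algorithm: the hop limit, the `trusted_from` function, and the example accounts are all assumptions made for illustration.

```python
from collections import deque

def trusted_from(viewer: str, trust: dict[str, set[str]], max_hops: int) -> set[str]:
    """Accounts reachable from `viewer` by following at most `max_hops` trust edges."""
    seen = {viewer}
    frontier = deque([(viewer, 0)])
    while frontier:
        account, hops = frontier.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in trust.get(account, set()):
            if neighbor not in seen:
                seen.add(neighbor)
                frontier.append((neighbor, hops + 1))
    return seen - {viewer}

# Hypothetical trust edges: "alice trusts bob", and so on.
trust = {
    "alice": {"bob"},
    "bob": {"carol"},
    "carol": {"dave"},
    "erin": {"dave"},
}
alice_view = trusted_from("alice", trust, max_hops=2)  # {"bob", "carol"}
erin_view = trusted_from("erin", trust, max_hops=2)    # {"dave"}
```

The same account (here `dave`) is credible from Erin’s position but not from Alice’s, so there is no single global answer to “is this ID verified?”.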

03 How does Worldcoin work?

Each Worldcoin user installs a mobile app that generates private and public keys, much like an Ethereum wallet. Then they visit “the Orb” in person. The user stares into the Orb’s camera while showing a QR code generated by their Worldcoin app, containing their public key. The Orb scans the user’s eye and uses sophisticated hardware and machine learning classifiers to verify:

(1) Whether the user is a real human;

(2) Whether the user’s iris differs from those of any previous users of the system.

If both checks pass, the Orb signs a message approving a specialized hash of the user’s iris scan. This hash is uploaded to a database, which is currently a centralized server but is intended to be replaced by a decentralized on-chain system once the hashing mechanism proves reliable. Full iris scans are not stored; only the hashes are kept, and they are used solely to check uniqueness. From then on, the user possesses a “World ID”.

A World ID holder can prove they are a unique human by generating a ZK-SNARK proving that they hold the private key corresponding to a public key in the database, without revealing which key it is. Thus, even if someone re-scans your iris, they cannot see any actions you have taken.

04 What are the main issues with Worldcoin’s design?

People mainly worry about four risks:

(1) Privacy

The registry of iris scans may leak information. At minimum, if someone else scans your iris, they could check against the database to determine whether you have a World ID. Iris scans might reveal even more.

(2) Accessibility

Unless there are enough Orbs readily accessible to anyone worldwide, World ID cannot be reliably accessed.

(3) Centralization

The Orb is a hardware device, and we have no way to verify that it was constructed correctly and has no backdoors. Thus, even if the software layer is perfect and fully decentralized, the Worldcoin Foundation could still insert a backdoor that lets it create arbitrarily many fake human identities.

(4) Security

Users’ phones may be hacked; users may be coerced into scanning their iris while presenting someone else’s public key; and 3D-printed “fake humans” might pass iris scans and obtain World IDs.

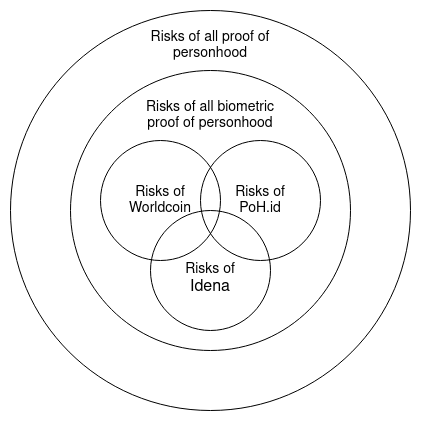

It’s important to distinguish between:

(1) Problems specific to Worldcoin’s design choices;

(2) Inevitable problems in any biometric proof-of-personhood system;

(3) Problems common to any general human identity verification system.

For example, signing up for Proof of Humanity means posting your face online. Joining a BrightID verification party doesn’t quite do this, but it still exposes your identity to many people. Joining Circles publicly reveals your social graph. Worldcoin offers far better privacy protection than any of these.

On the other hand, Worldcoin relies on specialized hardware, raising questions about whether we can fully trust the Orb manufacturer’s construction. This challenge has no equivalent in Proof of Humanity, BrightID, or Circles. Perhaps in the future, others outside Worldcoin will create different specialized hardware solutions with varying trade-offs.

05 How do biometric human verification schemes address privacy concerns?

The most obvious and significant potential privacy leak in any human verification system is linking every action a person takes to their real-world identity. This data leak is massive—unacceptably so. Fortunately, zero-knowledge proof technology makes it easy to solve.

Instead of directly signing with a private key whose corresponding public key is in the database, users can generate a ZK-SNARK proving they possess a private key whose public key exists somewhere in the database—without revealing which specific key. This can usually be done via tools like Sismo, and Worldcoin has its own built-in implementation. Here, “crypto-native” human verification matters: this basic step enables anonymization, something essentially absent from all centralized identity solutions.
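The statement being proven can be made concrete with a sketch. The code below is not zero-knowledge and is not Worldcoin’s or Sismo’s implementation: it only checks, in the clear, the claim “I know a secret whose public key is in the registry” using a Merkle tree. A real ZK-SNARK would prove the same check while keeping the secret and the path hidden and revealing only the root. The hash-based `toy_public_key` is a stand-in for real key derivation, and all names are hypothetical.

```python
import hashlib

def h(*parts: bytes) -> bytes:
    """SHA-256 over the concatenation of the given byte strings."""
    return hashlib.sha256(b"".join(parts)).digest()

def toy_public_key(secret: bytes) -> bytes:
    """Stand-in for key derivation: the 'public key' is a hash of the secret."""
    return h(b"pk", secret)

def merkle_root(leaves: list[bytes]) -> bytes:
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        level = [h(level[i], level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]

def merkle_path(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True when the sibling is on the left."""
    path = []
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        sibling = index ^ 1
        path.append((level[sibling], sibling < index))
        level = [h(level[i], level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return path

def statement_holds(root: bytes, secret: bytes, path: list[tuple[bytes, bool]]) -> bool:
    """The claim a ZK-SNARK would prove with `secret` and `path` kept private."""
    node = toy_public_key(secret)
    for sibling, sibling_is_left in path:
        node = h(sibling, node) if sibling_is_left else h(node, sibling)
    return node == root

registry = [toy_public_key(s) for s in (b"alice", b"bob", b"carol")]
root = merkle_root(registry)
carol_path = merkle_path(registry, 2)
carol_ok = statement_holds(root, b"carol", carol_path)      # True
mallory_ok = statement_holds(root, b"mallory", carol_path)  # False
```

In this plain version the verifier sees the path and therefore learns which registry entry is being used; hiding that is precisely what the zero-knowledge layer adds.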

The existence of a public registry of biometric scans represents a subtler privacy leak. In the case of Proof of Humanity, this aggregates large amounts of data: videos of every participant, making it very easy for anyone in the world to investigate all Proof of Humanity participants.

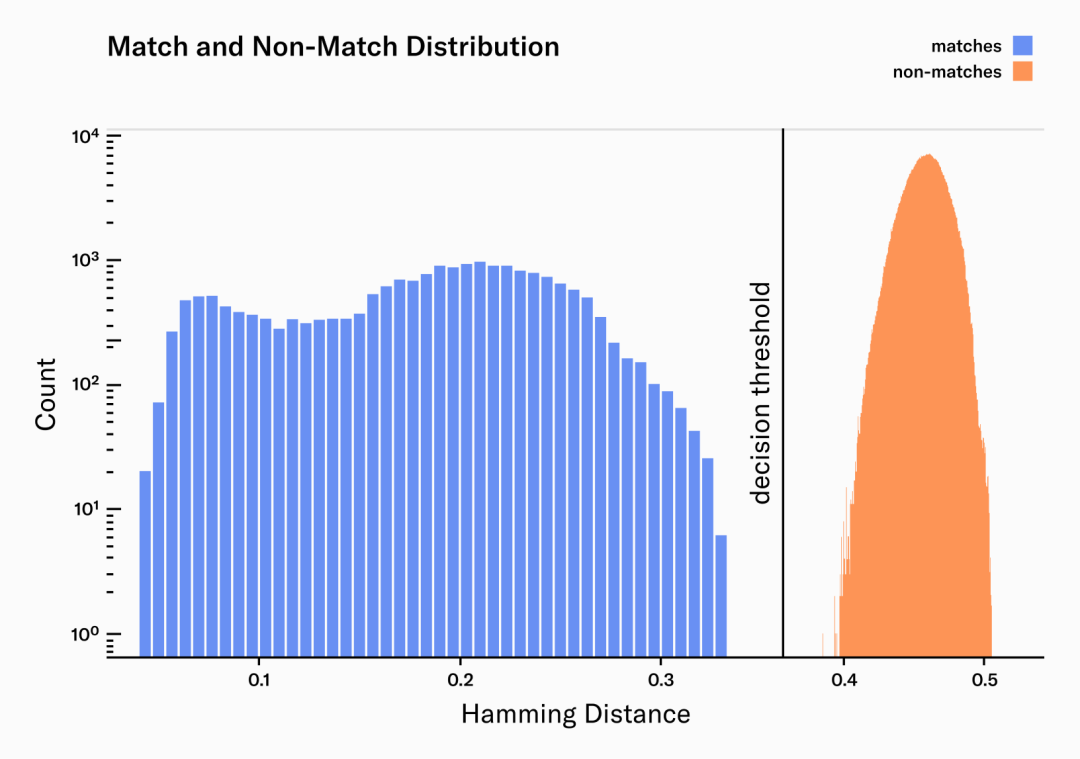

In Worldcoin’s case, the leakage is far more limited: the Orb computes locally and publishes only a “hash” of each person’s iris scan. This hash is not a conventional hash like SHA256; rather, it is produced by a specialized algorithm based on machine-learned Gabor filters, which tolerates the imprecision inherent in any biometric scan and ensures that successive hashes of the same person’s iris produce similar outputs.

Blue: percentage of differing bits between two iris scans of the same person

Orange: percentage of differing bits between two iris scans of two different people

These iris hashes leak only minimal data. If an adversary forcibly (or secretly) scans your iris, they can compute your iris hash and check it against the iris hash database to determine whether you participated in the system.
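The check described here can be sketched as a nearest-match lookup on the fraction of differing bits. This is only an illustration of the idea: the 16-bit codes, the 0.3 threshold, and the function names are made-up assumptions, and Worldcoin’s actual iris-hash algorithm and parameters are not shown in this article.

```python
def fractional_hamming(a: int, b: int, bits: int) -> float:
    """Fraction of bit positions in which two `bits`-wide codes differ."""
    mask = (1 << bits) - 1
    return bin((a ^ b) & mask).count("1") / bits

def is_registered(candidate: int, database: list[int], bits: int, threshold: float) -> bool:
    """True if any stored code is close enough to be the same iris."""
    return any(fractional_hamming(candidate, stored, bits) < threshold for stored in database)

BITS = 16          # toy width; real iris codes are far longer
THRESHOLD = 0.3    # assumed cut-off between "same person" and "different person"

stored = 0b1010110011110000
rescan = stored ^ 0b0000000000000101      # same iris, 2 noisy bits -> distance 0.125
stranger = stored ^ 0b1111000011110000    # different iris, 8 bits differ -> distance 0.5

same_person = is_registered(rescan, [stored], BITS, THRESHOLD)     # True
other_person = is_registered(stranger, [stored], BITS, THRESHOLD)  # False
```

The same lookup serves both purposes mentioned in the text: the system uses it to reject duplicate registrations, and an adversary with your iris scan could use it to learn whether you are enrolled.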

This ability to check registration status is necessary for the system to prevent duplicate registrations, but it could also be abused. Additionally, iris hashes might leak some medical data (gender, ethnicity, perhaps health conditions), though this leakage is far smaller than data captured by almost any other mass data collection system in use today (e.g., street cameras). Overall, to me, storing iris hashes seems sufficiently private.

06 What accessibility issues exist in biometric human verification systems?

Specialized hardware introduces accessibility issues because such hardware isn't universally accessible. Currently, 51% to 64% of people in sub-Saharan Africa own smartphones, expected to rise to 87% by 2030.

Yet, while billions own smartphones, only hundreds of Orbs exist. Even with large-scale distributed manufacturing, achieving a world where an Orb is within five kilometers of everyone is extremely difficult.

Credit to Worldcoin for trying hard!

It’s also worth noting that many other forms of human verification face even worse accessibility problems. Joining social-graph-based systems is very difficult unless you already know someone in the network. This makes such systems prone to being confined within single communities in single countries.

Even centralized identity systems have learned this lesson: India’s Aadhaar ID system is biometric-based because it was the only way to rapidly onboard its massive population while avoiding large-scale fraud from duplicate and fake accounts (thus saving huge costs). Of course, overall, Aadhaar is far weaker in privacy than any system widely proposed within the crypto community.

From an accessibility standpoint, the best-performing systems are those like Proof of Humanity, where you can register using just a smartphone. However, as we’ve seen and will see, such systems come with various other trade-offs.

07 What are the centralization issues in biometric human verification systems?

There are three main concerns:

(1) Risk of centralization at the top-level governance of the system;

(2) Risks specific to systems using specialized hardware;

(3) Risk of centralization if proprietary algorithms determine genuine participants.

Any human verification system must contend with (1). If the system uses incentives denominated in external assets (e.g., ETH, USDC, DAI), it cannot be entirely subjective, so governance risk is unavoidable.

Risk (2) is much greater for Worldcoin than for Proof of Humanity (or BrightID), because Worldcoin depends on specialized hardware while the others do not.

Risk (3) arises particularly in “logically centralized” systems where a single entity performs verification: unless all algorithms are open-source and we can be sure that the entity actually runs the code it claims to, centralization risk persists. It is not a risk for purely peer-validated systems.

08 How does Worldcoin address hardware centralization?

Currently, Tools for Humanity—a Worldcoin-affiliated entity—is the sole manufacturer of Orbs. However, Orb source code is mostly public: you can view hardware specifications in this GitHub repository, and other parts of the source code are expected to be released soon.

The license is one of those “shared source, technically not open-source” types similar to Uniswap’s BSL. In addition to preventing forking, it prohibits behavior the team deems unethical, specifically citing mass surveillance and three international human rights declarations.

The team’s stated goal is to allow and encourage other organizations to create Orbs, gradually transitioning from Orbs made by Tools for Humanity to a DAO-like system that approves and manages which organizations can manufacture officially recognized Orbs.

This design can fail in two ways.

First, it might fail to decentralize in practice. This could stem from a common flaw in consortium agreements: one manufacturer ends up dominating, and the system re-centralizes.

Second, guaranteeing security in such distributed manufacturing is difficult. I see two risks here:

(1) Emergence of bad Orb manufacturers: A malicious or compromised Orb maker could generate infinite fake iris scan hashes and grant them World IDs.

(2) Government restrictions on Orbs: Governments opposed to citizens joining the Worldcoin ecosystem could ban Orbs from entering their countries. They might even force citizens to scan their irises, giving the government access to their accounts—with no recourse for citizens.

To effectively identify and counter bad Orb manufacturers, the Worldcoin team proposes regular audits to verify correct construction, adherence to specs for key hardware components, and absence of post-deployment tampering. This is a challenging task—essentially akin to the IAEA’s nuclear inspection bureaucracy, but for Orbs. Hopefully, even an imperfect audit regime could significantly reduce the number of fake Orbs.

To limit damage from any bad Orb manufacturer, a second mitigation makes sense. World IDs registered at different Orb manufacturers—or better yet, using different Orbs—should be distinguishable. If this information is private and stored only on the World ID holder’s device, that’s fine—but it must be provable on demand. This allows the ecosystem to respond to (inevitable) attacks by removing individual Orb manufacturers—or even single Orbs—from whitelists. If we see the North Korean government forcing people to scan Orbs, any resulting accounts can be immediately and retroactively disabled.
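A minimal sketch of this mitigation follows. It assumes a verifier has already learned, for each World ID, which manufacturer and which Orb registered it (in the design described above, that information could stay on the holder’s device and be proven on demand). The `Registration` fields and all identifiers are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Registration:
    world_id: str
    manufacturer: str
    orb_id: str

def active_ids(
    registrations: list[Registration],
    revoked_manufacturers: set[str],
    revoked_orbs: set[str],
) -> set[str]:
    """World IDs that survive after removing bad manufacturers and single Orbs."""
    return {
        r.world_id
        for r in registrations
        if r.manufacturer not in revoked_manufacturers and r.orb_id not in revoked_orbs
    }

registrations = [
    Registration("id-1", "maker-a", "orb-1"),
    Registration("id-2", "maker-a", "orb-2"),
    Registration("id-3", "maker-b", "orb-3"),
]

# Revoking one Orb retroactively disables every ID it produced.
after_orb_revoked = active_ids(registrations, set(), {"orb-2"})
# Revoking a whole manufacturer disables all of its Orbs at once.
after_maker_revoked = active_ids(registrations, {"maker-a"}, set())
```

Because validity is recomputed from the revocation lists, accounts created by a compromised Orb are disabled retroactively rather than only blocked going forward.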

09 What are the security issues in human verification systems in general?

Beyond Worldcoin-specific issues, several problems affect human verification designs. The main ones I can think of are:

(1) 3D-printed fake humans: People could use AI to generate convincing photos or even 3D prints of fake humans sufficient to fool Orb software. If even one group does this, they could generate infinite identities.

(2) Possibility of selling identities: Someone could provide another person’s public key during registration instead of their own, letting that person control their verified ID in exchange for money. This seems to already be happening. Beyond sales, IDs could be rented for short-term use in an application.

(3) Phone hacking: If someone’s phone is hacked, the attacker could steal the key controlling their World ID.

(4) Government coercion to steal identities: Governments could force citizens to verify while presenting a QR code belonging to the government. This way, malicious governments could access millions of IDs. In biometric systems, this could even be done secretly: governments could use disguised Orbs to extract World IDs from everyone entering their country via passport checkpoints.

(1) is specific to biometric human verification systems. (2) and (3) are common to both biometric and non-biometric designs. (4) is shared by both, though the required technologies differ greatly; in this section, I’ll focus on issues in the biometric case.

These are very serious weaknesses. Some have already been addressed in existing protocols, some could be mitigated through future improvements, and others appear to be fundamental limitations awaiting solutions.

How do we deal with fake humans?

For Worldcoin, this risk is much lower than in systems like Proof of Humanity: in-person scanning checks many features of a person and is much harder to forge compared to merely deep-faking a video. Specialized hardware is inherently harder to deceive than commodity hardware, which in turn is harder to trick than digital algorithms verifying remotely sent images and videos.

Could someone 3D-print something capable of deceiving specialized hardware? Possibly. I expect increasing tension between keeping the mechanism open and keeping it secure: open-source AI algorithms are inherently more vulnerable to adversarial machine learning. Further ahead, even the best AI algorithms might eventually be fooled by the best 3D-printed fakes.

However, from my discussions with the Worldcoin and Proof of Humanity teams, neither protocol currently sees major deepfake attacks—simply because hiring real low-wage workers to register on your behalf is very cheap and easy.

Can we prevent ID selling?

Preventing such outsourcing is difficult in the short term, since most people worldwide don’t yet know about human verification protocols—and if told they can earn $30 by holding up a QR code and scanning their eyes, they will comply.

Once more people understand what human verification protocols are, a fairly simple mitigation becomes possible: allow holders of registered IDs to re-register and cancel previous IDs. This greatly reduces the credibility of “ID selling,” since the seller could re-register and invalidate the ID they just sold. But reaching this point requires the protocol to be very well-known and Orbs to be widely accessible, making on-demand re-registration practical.
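The re-registration idea can be sketched as a registry in which each person maps to at most one live key. This is an assumption-laden illustration: `person_hash` stands in for the fuzzy iris-hash match, exact string lookup replaces the real similarity check, and the class and method names are hypothetical.

```python
from typing import Optional

class Registry:
    """Toy registry: one live public key per person."""

    def __init__(self) -> None:
        self._key_by_person: dict[str, str] = {}

    def register(self, person_hash: str, public_key: str) -> Optional[str]:
        """Bind `public_key` to the person; return the key that was cancelled, if any."""
        previous = self._key_by_person.get(person_hash)
        self._key_by_person[person_hash] = public_key
        return previous

    def is_valid(self, public_key: str) -> bool:
        return public_key in self._key_by_person.values()

registry = Registry()
registry.register("iris-of-seller", "buyer-key")               # seller registers the buyer's key
sold_before = registry.is_valid("buyer-key")                    # True: the sold ID works
cancelled = registry.register("iris-of-seller", "seller-key")   # seller re-registers later
sold_after = registry.is_valid("buyer-key")                     # False: the sold ID is void
```

A buyer who knows the seller can do this at any time has little reason to pay much for the ID.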

This is one reason integrating UBI tokens into human verification systems is valuable: UBI coins give people a clear incentive to learn about the protocol and register, and to re-register promptly if they previously registered on someone else’s behalf.

Can we prevent coercion in biometric human verification systems?

This depends on what kind of coercion we’re discussing. Possible forms include:

- Governments scanning people’s eyes (or faces) at border controls and routine checkpoints to register their citizens;

- Governments banning Orbs domestically to prevent independent re-registration;

- Applications (possibly government-run) requiring users to “log in” by signing directly with the key they registered, which lets the application see the corresponding biometric scan and thus link a user’s current ID with any future ID obtained through re-registration.

A common concern is that this makes creating “permanent records” too easy—records that follow a person for life.

Especially for inexperienced users, it seems difficult to fully prevent these scenarios. Users could leave their country and re-register at an Orb in a safer country—but this is a difficult and costly process. In truly hostile legal environments, finding an independent Orb seems too difficult and risky.

Verification methods that require a person to speak a specific phrase during registration are a good example of a mitigation: this is enough to prevent hidden scans, and it raises the bar for coercion. The registration phrase could even include confirmation that the registrant knows they have the right to independently re-register and possibly receive UBI tokens or other rewards.

If coercion is detected, devices used for forced registration could have their access revoked. To prevent applications from linking a person’s current and past IDs and attempting to maintain “permanent records,” default human verification apps could lock users’ keys in trusted hardware, preventing any app from directly using the key without an intermediate anonymous ZK-SNARK layer. If governments or app developers want to circumvent this, they would need to mandate the use of their own custom apps.

Through a combination of these technical measures and active vigilance, containing truly hostile regimes while keeping neutral ones (like most of the world) honest seems possible. This could be managed by bureaucracies maintained by projects like Worldcoin or Proof of Humanity, or by revealing more information about how IDs were registered (e.g., which Orb in Worldcoin) and delegating classification tasks to the community.

Can we prevent ID renting (e.g., vote selling)?

Re-registration does not prevent renting out your ID. This might be acceptable in some applications: the price of renting out your right to claim the daily share of UBI coins would simply equal the value of that share. But in voting applications, vote selling is a major issue.

Systems like MACI can prevent vote selling by allowing users to cast a later vote that invalidates their earlier one, so no one can know whether they truly voted as claimed. However, this fails if the briber controls the key the user received upon registration.
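The property being relied on can be shown with a toy tally in which only a voter’s last message counts. This is not MACI itself: real MACI encrypts messages to a coordinator and proves the tally with zero-knowledge proofs, none of which is modeled here. The `tally` function and the message format are assumptions for illustration.

```python
from collections import Counter

def tally(messages: list[tuple[str, str]]) -> Counter:
    """Count one vote per voter key, letting later messages override earlier ones."""
    final_choice: dict[str, str] = {}
    for voter_key, choice in messages:
        final_choice[voter_key] = choice  # a later message replaces the earlier vote
    return Counter(final_choice.values())

messages = [
    ("voter-1", "A"),   # vote shown to the briber
    ("voter-2", "B"),
    ("voter-1", "B"),   # later override, which the briber cannot see in the real system
]
result = tally(messages)
```

Showing the briber the first message proves nothing about the final count, but the protection disappears if the briber holds `voter-1`’s key and can send the last message themselves.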

I see two solutions here:

(1) Run the entire application inside an MPC. This includes the re-registration process: when someone registers with the MPC, it assigns them an ID separate from and unlinkable to their proof-of-personhood ID, and when they re-register, only the MPC knows which account to deactivate. This prevents users from proving their actions, as every critical step occurs inside the MPC using private information known only to it.

(2) Decentralized registration ceremonies. Essentially, implement protocols similar to in-person key registration, requiring four randomly selected local participants to jointly register someone. This ensures registration is a “trusted” process immune to eavesdropping by attackers.

Social-graph-based systems might actually perform better here, as they can automatically create decentralized local registration processes as a side effect of their operation.

10 Biometric vs. Social-Graph-Based Human Verification

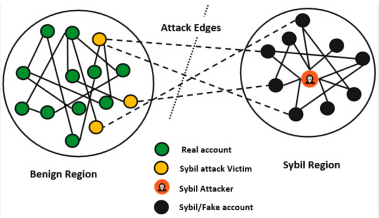

Besides biometrics, the other main contender for human verification so far is social-graph-based validation. These systems operate on the same principle: If a large number of existing verified identities attest to your validity, then you can achieve verified status.

If only a few real users (accidentally or maliciously) validate fake users, basic graph theory techniques can set upper bounds on the number of fake users the system validates.
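One simple way such a bound can arise is a counting argument over vouching edges. The sketch below is a simplified model, not the rule of any named system: it assumes each verified user may vouch for a limited number of accounts, each new account needs a fixed number of vouches, and accounts admitted this way receive no vouching budget of their own.

```python
def max_fake_accounts(bad_vouchers: int, vouches_per_user: int, vouches_required: int) -> int:
    """Upper bound on fake accounts when every admission consumes `vouches_required` vouches.

    Bad vouchers control at most bad_vouchers * vouches_per_user vouching edges,
    and each fake account uses up vouches_required of them.
    """
    return (bad_vouchers * vouches_per_user) // vouches_required

# 10 careless or malicious users, 5 vouches each, 4 vouches needed per new account.
bound = max_fake_accounts(bad_vouchers=10, vouches_per_user=5, vouches_required=4)  # 12
```

Under these assumptions, the damage scales with the number of compromised real users rather than with the attacker’s ability to mint accounts.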

Proponents of social-graph-based verification often describe it as a superior alternative to biometrics for several reasons:

- It doesn’t rely on specialized hardware, making deployment easier;

- It avoids the perpetual arms race between fake-human manufacturers and Orb updates needed to reject such fakes;

- It doesn’t require collecting biometric data, enhancing privacy;

- It may be more pseudonym-friendly: if someone chooses to split their online life into multiple separate identities, each of them could potentially be verified (though maintaining multiple genuine, independent identities sacrifices network effects and is costly, so attackers cannot easily do this).

Biometric methods give a binary “human/not human” score, which is absolute: someone accidentally rejected gets no UBI and may be excluded from online life. Social-graph-based methods can yield more nuanced numerical scores—perhaps slightly unfair to some participants, but unlikely to completely dehumanize anyone.

My view on these arguments is that I largely agree! These are genuine advantages of social-graph-based approaches and deserve serious consideration. However, it’s also worth examining the weaknesses of social-graph methods: regional limitations, privacy leaks, inequality, centralization risks, etc.

11 In the real world, is human verification compatible with pseudonyms?

In principle, human verification is compatible with various forms of pseudonymity. There is no ideal form of human verification. Instead, we have at least three distinct paradigmatic approaches, each with unique strengths and weaknesses. A comparison chart might look like this:

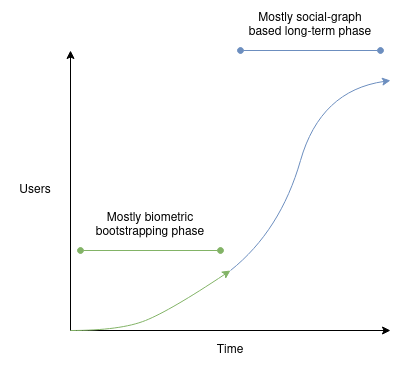

Ideally, we should treat these three technologies as complementary and integrate them. As India’s Aadhaar shows, specialized hardware biometrics offer advantages in large-scale security. They are very weak in decentralization, though this could be addressed by holding individual Orbs accountable.

Today, general-purpose biometrics are easy to adopt, but their security is rapidly declining and they may remain usable for only another one to two years. Social-graph-based systems bootstrapped from a few hundred people close to the founding team are likely to face constant trade-offs between missing most of the world entirely and being vulnerable to attacks from communities they cannot see. However, a social-graph-based system bootstrapped from tens of millions of biometric ID holders could actually work. Biometric bootstrapping may work better in the short term, while social-graph techniques could prove more robust in the long term, taking on greater responsibility as their algorithms improve.

Potential hybrid development path forward

Creating an effective and reliable human verification system—especially one usable by people far removed from existing crypto communities—seems quite challenging. I certainly don’t envy those attempting this task, and it may take years to find a working solution.

In principle, the concept of human verification seems highly valuable. While various implementations carry risks, the absence of any human verification also carries risks: a world without it seems more likely to be dominated by centralized identity solutions, money, small closed communities, or a mix of all three. I look forward to seeing more progress across all types of human verification and hope to see different approaches eventually converge into a coherent whole.
