Tether Launches Local AI to Compete Head-On with Cloud-Based Large Models

Computing power, AI models, datasets, and intelligent capabilities that can operate independently of centralized cloud infrastructure are becoming Tether’s second-largest reserve asset.

By Liam Akiba Wright

Translated by Luffy, Foresight News

Tether’s new initiative, QVAC, opens with a concept rarely heard from stablecoin firms: it describes QVAC Psy as a suite of foundational large models “rooted in the principles of psychohistory.”

Psychohistory—a term coined by Isaac Asimov in his classic science fiction series *Foundation*—refers to a fictional discipline where mathematics, statistics, and sociodynamics are used to predict the behavior of massive populations. In the novels, Hari Seldon employs this science to anticipate the collapse of the Galactic Empire and shorten the ensuing dark age.

The *Encyclopedia of Science Fiction* defines Asimov’s psychohistory as a fictional science whose entire purpose is to forecast future events and preserve human knowledge and civilization amid societal collapse.

Tether’s invocation of psychohistory is, in essence, a science-fictional framing of its corporate mission.

Leveraging reserve assets, liquidity, and distribution channels, Tether has built the largest stablecoin system in crypto. Now, it is replicating that underlying logic in the field of artificial intelligence.

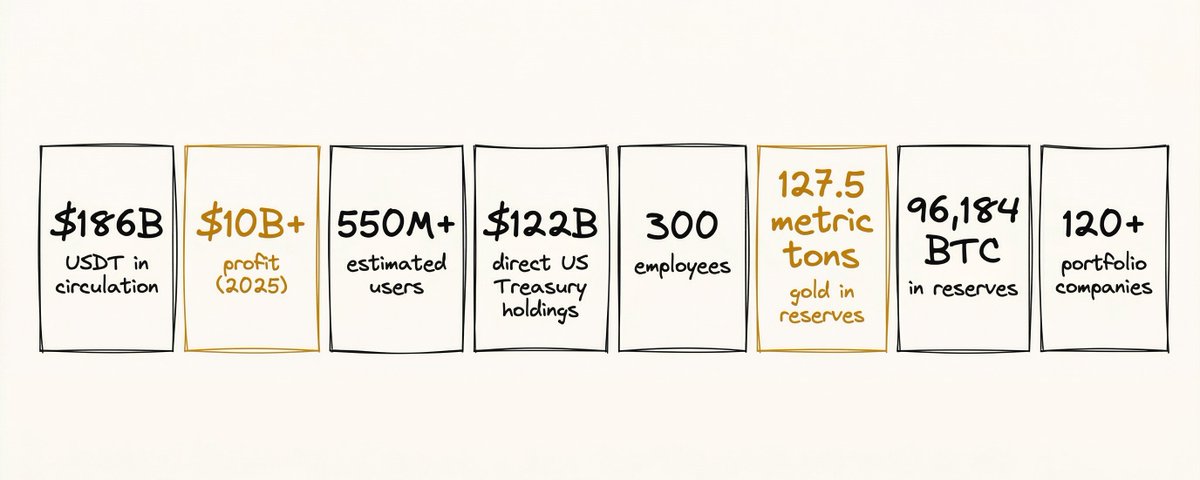

USDT is Tether’s first pillar of reserves; computing power, AI models, datasets, and intelligent capabilities that can operate independently of centralized cloud infrastructure now form its second.

From Dollar Reserves to Intelligent Asset Reserves

Tether’s foray into AI extends the operational logic underpinning its core business. USDT transforms global offshore dollar demand into a reserve portfolio anchored primarily in short-term sovereign bonds.

According to Tether’s Q1 2026 Reserve Attestation Report, the company posted net income of $1.04 billion, maintained a reserve buffer of $8.23 billion, held token-related liabilities of approximately $183 billion, and directly or indirectly owned around $141 billion in U.S. Treasury bills.
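To put those figures in proportion, here is a minimal back-of-the-envelope sketch. It uses only the numbers quoted above; comparing the buffer and the Treasury bill holdings against token liabilities is a framing assumption made for illustration, not a metric taken from the attestation itself.

```python
# Figures as quoted in the article, in billions of U.S. dollars.
token_liabilities = 183.0  # approximate token-related liabilities
reserve_buffer = 8.23      # reserve buffer as reported
treasury_bills = 141.0     # direct and indirect U.S. Treasury bill holdings

# Illustrative ratios (assumption: both are measured against token liabilities).
buffer_ratio = reserve_buffer / token_liabilities
tbill_ratio = treasury_bills / token_liabilities

print(f"Reserve buffer vs. liabilities: {buffer_ratio:.1%}")
print(f"Treasury bills vs. liabilities: {tbill_ratio:.1%}")
```

On these numbers the buffer is a few percent of liabilities, while Treasury bills correspond to roughly three quarters of them.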

This robust reserve base generates sustained revenue and provides ample balance-sheet capacity, enabling Tether to deploy operating profits toward long-term infrastructure investments.

CryptoSlate previously noted that Tether’s massive stablecoin scale allows strategic allocation of its reserve capital. In January, Tether purchased 8,888 bitcoins—an illustration of how interest income and operating profits can be converted into long-term Bitcoin holdings. The QVAC initiative represents an extension of this asset-allocation logic into the entirely new domain of artificial intelligence.

Beyond existing allocations in Bitcoin, gold, startups, energy, cryptocurrency mining, and telecom infrastructure, Tether has now made a major commitment to AI itself. This shift repositions Tether from a private issuer of dollar liquidity to a private builder of digital infrastructure.

The science-fiction narrative of “psychohistory” fits this strategic direction. Tether treats AI not as just another software category but as a civilizational-scale foundational layer. Official QVAC materials position it as an “Infinitely Stable Intelligence Platform,” emphasizing decentralized, local-first intelligent systems designed to compete with, and ultimately replace, centralized AI.

QVAC’s vision states that routing all intelligent interactions through centralized servers is not only slow and unreliable but also vulnerable to control and restriction. Instead, QVAC aims to become the edge-layer foundational platform for users’ personal intelligent systems.

This philosophy echoes Tether’s stablecoin ethos: permissionless fund transfers, user-controlled data, and AI that runs locally, close to the user.

Beneath Asimov’s sci-fi veneer lies a more serious judgment by Tether: AI only truly accrues value when it achieves infrastructure-grade resilience and risk resistance.

Cloud-based large models may offer superior aggregate capability, but they carry inherent risks—including platform dependency, pricing volatility, regulatory intervention, network latency, and data routing vulnerabilities. Local AI models, while sacrificing some performance, deliver ownership, privacy, and continuous operational stability.

This trade-off logic resonates deeply with crypto principles. Self-custody may be less convenient than exchange custody—until an exchange collapses, revealing its true value. Likewise, local AI may be less plug-and-play than cloud-hosted models, yet its advantages emerge decisively during network outages, API changes, account bans, or restrictions on data export.

QVAC: A Distinctive Edge-AI Architecture

QVAC’s core differentiation lies in its foundational architecture. Leading large-model labs—including OpenAI, Anthropic, Google DeepMind, and xAI—are competing on general-purpose capability, coding proficiency, multimodal interaction, ultra-long-context reasoning, agent applications, and enterprise cloud deployment.

QVAC chooses a fundamentally different path: deployability, privacy protection, low latency, composability, and independence from any single platform.

QVAC’s official Getting Started documentation defines the project as an open-source, cross-platform ecosystem prioritizing local-first, peer-to-peer AI applications compatible with Linux, macOS, Windows, Android, and iOS. Users can run large language models, speech recognition, retrieval-augmented generation (RAG), and other AI tasks locally—or delegate inference workloads to other devices via built-in P2P functionality.
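The local-or-delegated pattern described here can be sketched in a few lines. This is an illustration of the general idea only and does not use the QVAC SDK: the `Backend` class, the `run_local_first` function, and the example device names are all hypothetical, and real peer discovery and transport are left out.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Backend:
    """A hypothetical inference target: this device or a reachable peer."""
    name: str
    is_available: Callable[[], bool]
    infer: Callable[[str], str]


def run_local_first(prompt: str, local: Backend, peers: List[Backend]) -> Tuple[str, str]:
    """Return (backend name, output), preferring the local device over peers."""
    for backend in [local, *peers]:
        if not backend.is_available():
            continue  # e.g. model not downloaded, or peer unreachable
        try:
            return backend.name, backend.infer(prompt)
        except RuntimeError:
            continue  # this backend failed mid-request; try the next one
    raise RuntimeError("no local or peer backend could serve the request")


# Usage: a phone that cannot load the model delegates to a laptop peer.
phone = Backend("phone", lambda: False, lambda p: "unused")
laptop = Backend("laptop-peer", lambda: True, lambda p: f"echo: {p}")
served_by, output = run_local_first("hello", phone, [laptop])
print(served_by, output)
```

The point of the ordering is that data leaves the device only when the local backend cannot serve the request, which is the property a local-first design is meant to preserve.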

This means QVAC’s benchmarking criteria diverge sharply from those of top-tier cloud AI models: frontier AI pursues the strongest possible general-purpose model capability attainable through centralized services; QVAC focuses instead on *where* inference occurs, *who controls execution*, whether data remains on-device, and whether applications remain functional when centralized services fail.

In April 2026, Tether launched the QVAC Software Development Kit (SDK), a unified toolkit that lets developers build, run, and fine-tune AI applications on any device, with the same code running across platforms without modification.

The QVAC SDK uses a unified abstraction layer to support diverse local inference engines, including its in-house QVAC Fabric and a fork of llama.cpp, while integrating speech and translation tools such as whisper.cpp, Parakeet, and Bergamot.

QVAC has already moved beyond the scope of a single model release; it functions more like an operating system for AI. Today’s open-source AI ecosystem already hosts numerous mature components, including Llama, Qwen, Mistral, Gemma, DeepSeek, Hugging Face, llama.cpp, and Ollama, all of which are thriving in local inference.

QVAC’s central bet is that developers urgently need a complete edge-native framework—one that unifies, via a consistent interface, model loading, inference computation, speech recognition, OCR, translation, text-to-image generation, RAG, P2P model distribution, delegated inference, and local fine-tuning.

QVAC aims to become the foundational distribution layer for intelligent compute, capturing the entry point to the edge AI ecosystem via continuously refined mid-tier local models.

QVAC Fabric sits at the heart of this technical architecture. Tether states that Fabric leverages Vulkan and Metal backends to perform model fine-tuning on mainstream consumer hardware—including Android devices equipped with Qualcomm Adreno or ARM Mali GPUs, Apple silicon Macs and iPhones, and Windows/Linux PCs powered by AMD, Intel, or NVIDIA chips.

Fabric also incorporates dynamic chunking techniques to accommodate mobile GPU memory constraints and supports GPU-accelerated LoRA fine-tuning and masked-loss instruction tuning.

If this workflow proves viable in external developer testing, its value would far exceed that of typical open-source model releases: model weights are merely the foundational layer—the real incremental value lies in local, personalized fine-tuning and adaptation.

MedPsy: QVAC’s First Real-World Stress Test

MedPsy is QVAC’s first flagship model release. A technical report published on Hugging Face on May 7 describes QVAC MedPsy as a healthcare-focused language model engineered for edge deployment, available in two variants with 1.7 billion and 4 billion parameters.

QVAC makes a bold claim: small models rigorously fine-tuned on medical-domain data can outperform larger medical baseline models while remaining fully deployable on laptops, high-end mobile devices, and even smartphones.

According to QVAC, MedPsy-1.7B scores an average of 62.62 across seven closed-book medical benchmarks—significantly surpassing Google’s MedGemma-1.5-4B-it (51.20), despite having less than half the parameter count. MedPsy-4B scores 70.54—slightly ahead of MedGemma-27B-text-it (69.95) while using only one-seventh the parameters.

The gap widens further on HealthBench and the more challenging HealthBench Hard: MedPsy-4B scores 74.00 and 58.00 respectively, compared to MedGemma-27B-text-it’s 65.00 and 42.67.
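The comparisons above reduce to simple arithmetic, sketched below. The scores are those reported by QVAC and quoted in this article. The parameter counts are nominal values read off the model names (an assumption), so the ratios are approximate.

```python
# Self-reported scores quoted in the article; parameter counts (in billions)
# are nominal values taken from the model names, not measured sizes.
pairs = [
    # (small model, params, avg score, baseline, params, avg score)
    ("MedPsy-1.7B", 1.7, 62.62, "MedGemma-1.5-4B-it", 4.0, 51.20),
    ("MedPsy-4B", 4.0, 70.54, "MedGemma-27B-text-it", 27.0, 69.95),
]

summary = {}
for small, small_params, small_score, base, base_params, base_score in pairs:
    param_ratio = small_params / base_params
    score_gap = small_score - base_score
    summary[small] = (round(param_ratio, 3), round(score_gap, 2))
    print(f"{small} vs {base}: {param_ratio:.1%} of the parameters, "
          f"{score_gap:+.2f} points on the seven-benchmark average")

# HealthBench and HealthBench Hard: MedPsy-4B vs MedGemma-27B-text-it.
healthbench_gap = round(74.00 - 65.00, 2)
healthbench_hard_gap = round(58.00 - 42.67, 2)
print(f"HealthBench gap: {healthbench_gap:+.2f}, Hard gap: {healthbench_hard_gap:+.2f}")
```

The ratios bear out the "less than half" and "one-seventh" descriptions, and the gap on HealthBench Hard is the largest of the four comparisons.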

If these benchmark results hold up under third-party replication, they will directly validate QVAC’s core thesis: in high-value vertical domains, lightweight edge models can challenge massive cloud-based systems.

The training methodology reflects QVAC’s competitive strategy: MedPsy uses Qwen3 as its backbone model and undergoes multi-stage supervised fine-tuning and reinforcement learning optimized for medical Q&A. More than 30 million synthetic data points were generated during experimentation, using a two-stage curriculum with Baichuan M3-235B, a large model specialized in long-text reasoning, as the supervisory “teacher” model.

The training corpus has not yet been disclosed, which raises critical questions. All current benchmark results come from internal QVAC evaluations; key issues, including potential data contamination, coverage breadth, prompt engineering, and teacher-model influence, still await independent verification.

Quantized deployment offers clear advantages: official GGUF-quantized versions compatible with both llama.cpp and the QVAC SDK are already available. Q4_K_M quantization reduces model size by 69% while lowering average scores by less than one point. At the best balance of size and performance, the 4B model occupies only 2.72 GB and the 1.7B version just 1.28 GB, small enough to deploy easily on local devices.
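A rough sketch of what these file sizes imply is given below. It rests on three stated assumptions: that 1 GB means 10^9 bytes, that the nominal parameter counts (4B and 1.7B) are close to the real ones, and that the 69% reduction applies equally to both variants. None of these is confirmed by the source, so the outputs are order-of-magnitude checks rather than measurements.

```python
GB = 1e9  # assumption: decimal gigabytes

# Quantized file sizes quoted in the article; parameter counts are nominal.
models = {
    "MedPsy-4B": {"quantized_gb": 2.72, "params": 4.0e9},
    "MedPsy-1.7B": {"quantized_gb": 1.28, "params": 1.7e9},
}
reduction = 0.69  # size reduction quoted for Q4_K_M

estimates = {}
for name, m in models.items():
    # Average storage per parameter in the quantized file, metadata included.
    bits_per_weight = m["quantized_gb"] * GB * 8 / m["params"]
    # Size the unquantized file would need for a 69% cut to give this result.
    implied_original_gb = m["quantized_gb"] / (1 - reduction)
    estimates[name] = (round(bits_per_weight, 2), round(implied_original_gb, 2))
    print(f"{name}: ~{bits_per_weight:.2f} bits per nominal parameter, "
          f"implied unquantized size ~{implied_original_gb:.2f} GB")
```

The per-parameter figure sits above the 4 bits suggested by the Q4 label, which is expected for a mixed-precision scheme like Q4_K_M that keeps some tensors at higher precision, and because the real parameter counts may exceed the nominal ones.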

QVAC also explicitly discloses limitations: MedPsy supports text-only interaction, English only, is unsuitable for clinical emergency use, inherits standard LLM hallucination risks, and requires developers to implement end-to-end privacy safeguards within their application architecture.

Healthcare inherently demands strong local inference capabilities—making MedPsy’s prospects promising. Yet its true capabilities will only be confirmed once external researchers replicate its benchmark scores and test it in real-world clinical workflows.

Convenience vs. Control: The AI Industry’s Defining Trade-off

Debates between local and cloud AI are often reduced to a simple privacy-versus-performance dichotomy. QVAC reframes this entirely: the real trade-off is between convenience and autonomous control.

Cloud AI excels at usability: users launch an app, type a prompt, and receive output without worrying about model weights, GPU memory, quantization parameters, vector embeddings, or runtime environment compatibility. The platform absorbs all technical complexity. This convenience is precisely why centralized AI platforms have risen so rapidly: users access cutting-edge intelligence with near-zero friction.

QVAC, by contrast, asks developers and users to shoulder greater operational responsibility—in exchange for a new security paradigm: offline local execution, functionality during network outages, minimized data leakage, freedom from API dependencies, and seamless peer-to-peer inference and model distribution.

As described in the Tether SDK documentation, QVAC-powered applications operate reliably even under weak-network conditions—and continue functioning entirely offline. An early QVAC announcement from 2025 further outlines plans for AI agents deployed directly on local devices, coordinating peer-to-peer via mesh networks, and—with the WDK toolkit—capable of autonomously executing Bitcoin and USDT transactions.

This embodies Tether’s full-stack design logic: money, compute, and intelligent agents, all governed by the same principle of sovereign autonomy.

Of course, the decentralization narrative isn’t flawless. From the user’s perspective (downloading models, running them locally, keeping sensitive data on-device), QVAC achieves a high degree of decentralization at the inference layer, removing the platform’s control over each individual interaction. Built on the Holepunch networking architecture, QVAC also enables delegated inference and decentralized model distribution, both substantive architectural innovations.

Yet governance remains centralized. QVAC is fully funded, branded, and marketed by Tether; its flagship applications, model architecture, SDK roadmap, and “Stable Intelligence” philosophy are all directed by a single corporation.

This does not contradict its local-first value proposition—it simply confines decentralization’s strongest evidence to the inference execution layer. The broader ecosystem still needs to gradually establish distributed governance mechanisms across default node registration, version release channels, security standards, model accreditation, and long-term community stewardship.

Third-Party Replication Determines QVAC’s Ceiling

QVAC’s credibility today rests entirely on third-party replication. If MedPsy’s benchmark scores hold up under external evaluation, Tether will have successfully realized its “intelligent asset reserve” vision: lightweight, open-source, locally deployable vertical models capable of rivaling cloud-based giants in highly sensitive domains.

Even if third-party tests narrow—or reverse—the performance gap, QVAC’s infrastructure value remains intact; only its model-performance narrative would soften. The industry’s ultimate question persists, echoing timeless technological truths: extreme convenience breeds centralization of power, while autonomous control demands operational overhead.

This is precisely where Asimov’s sci-fi vision gains relevance: *Foundation*’s psychohistory studies how complex large-scale systems evolve under stress; Tether reinterprets it to examine how infrastructure resists centralized monopolization.

The sci-fi narrative is grand—but technical implementation remains early-stage. Still, the strategic logic is coherent and self-consistent. Leveraging the world’s largest stablecoin’s steady cash flow, Tether is building an AI architecture centered on local execution, peer-to-peer networking, open-source tooling, and lightweight edge models—extending stablecoin sovereignty from the monetary domain into the realm of intelligence.

The industry no longer questions whether stablecoin giants possess the capability to enter AI. The answer is obvious.

The real question is whether QVAC can deliver sufficiently powerful models and infrastructure to convince users to accept modest operational overhead—for the sake of local autonomy and control.

MedPsy is the first quantifiable threshold. Third-party replication results will determine whether QVAC’s psychohistory narrative remains merely a sci-fi metaphor—or evolves into a fully operational, mainstream edge-AI infrastructure with proven viability.
